ATAC-seq Batch Effect Correction: A Comprehensive Guide for Reliable Chromatin Accessibility Analysis in Biomedical Research

This article provides a detailed exploration of ATAC-seq batch effect correction, a critical step for ensuring robust and reproducible chromatin accessibility data.

ATAC-seq Batch Effect Correction: A Comprehensive Guide for Reliable Chromatin Accessibility Analysis in Biomedical Research

Abstract

This article provides a detailed exploration of ATAC-seq batch effect correction, a critical step for ensuring robust and reproducible chromatin accessibility data. Targeted at researchers, scientists, and drug development professionals, it covers the foundational causes and detection of batch effects, reviews established and emerging correction methodologies (including R-based tools like sva, limma, and Harmony, and Python alternatives), and offers practical troubleshooting strategies for complex datasets. A comparative analysis of method performance guides selection, while validation techniques confirm biological fidelity. This guide equips practitioners to mitigate technical noise, thereby enhancing the accuracy of downstream analyses in epigenomics and translational research.

Unmasking the Invisible Hand: Understanding ATAC-seq Batch Effects and Their Impact on Data Integrity

What Are Batch Effects in ATAC-seq? Defining Technical vs. Biological Variation

In the context of research on ATAC-seq batch effect correction methods, understanding the source and nature of variation is paramount. Batch effects are systematic technical variations introduced during different experimental runs (batches) that are unrelated to the biological signal of interest. In ATAC-seq, which measures chromatin accessibility, these effects can confound results, making it difficult to distinguish true biological differences from artifacts. This technical support center addresses common issues in identifying and mitigating batch effects, framing them within the critical distinction between technical and biological variation.

Troubleshooting Guides & FAQs

Q1: My ATAC-seq replicates from different weeks cluster separately in PCA. Is this a batch effect or biological variation? A: This is a classic sign of a batch effect. Technical variations (e.g., different reagent lots, sequencer runs, personnel) can cause stronger clustering by batch than by biological condition. To diagnose:

- Correlate with Metadata: Check if the PCA separation correlates with documented technical factors (date, library prep kit lot).

- Negative Control: Analyze samples that are biologically identical (e.g., same cell line aliquot) processed in different batches. If they separate, it confirms a technical batch effect.

- Positive Control: Expected biological variation (e.g., different cell types) should still be visible within a single batch.

Q2: After merging public and in-house ATAC-seq datasets, I lose the expected cell-type-specific peaks. What happened? A: Severe batch effects between datasets can obscure biological signals. The technical variation from different labs/protocols dominates the analysis. Solution steps:

- Harmonization: Apply a batch effect correction method (e.g., Harmony, CCA, mutual nearest neighbors) after individual preprocessing and peak calling.

- Re-check QC: Ensure consistency in read depth, fragment size distribution, and TSS enrichment scores across batches before correction.

- Validate: After correction, known cell-type markers should re-emerge as differentially accessible regions.

Q3: Can biological variation be mistaken for a batch effect? A: Yes, especially with unannotated covariates. For example, if one batch accidentally contains more cells from a specific differentiation stage, the variation may appear technical but is biological. Always:

- Record all possible biological and technical metadata.

- Use experimental designs that balance biological conditions across batches where possible.

- Employ statistical models (e.g., in DESeq2) that can include both batch and biological condition to disentangle their effects.

| Variation Type | Source Examples | Typical Impact on Data |

|---|---|---|

| Technical (Batch Effects) | Different sequencing lanes/runs, reagent lots, library preparation dates, personnel, DNA polymerase amplification efficiency. | Alters global scaling of signal, affects peak heights/shapes, introduces spurious correlations unrelated to biology. |

| Biological | Genotype, cell type/disease state, response to treatment, circadian rhythm, cell cycle stage. | Changes accessibility at specific genomic loci; creates true positive differentially accessible regions (DARs). |

Table 2: Quantitative Metrics for Diagnosing Batch Effects

| Metric | Calculation/Description | Threshold Indicating Problem |

|---|---|---|

| Inter-batch Correlation | Median correlation of genome-wide accessibility profiles between samples from different batches. | Significantly lower than intra-batch correlation (e.g., >0.2 difference). |

| PCA Cluster Separation | Visual inspection of PC1/PC2 colored by batch vs. condition. | Clear separation by batch that rivals or exceeds separation by biological group. |

| TSS Enrichment Score Variance | Variance of TSS enrichment scores across batches. | High variance (e.g., p-value <0.05 in ANOVA test across batches) for identical biological samples. |

Experimental Protocols

Protocol: Diagnosing Batch Effects with Negative Controls

Objective: To empirically confirm the presence and magnitude of technical batch effects. Materials: See "Research Reagent Solutions" below. Method:

- Sample Design: Split a single, homogeneous biological sample (e.g., cultured cell line) into at least 3 aliquots per planned experimental batch.

- Inter-batch Processing: Process these aliquots through the full ATAC-seq protocol in separate, independent batches (different days, reagent lots, etc.).

- Intra-batch Control: Include one of these aliquots in every subsequent experimental batch as a longitudinal control.

- Sequencing & Analysis: Sequence all libraries, preferably in a balanced design across sequencing lanes. Process data uniformly through alignment, filtering, and peak calling to generate a consensus peak set.

- Assessment: Generate a PCA plot on normalized read counts. The negative control samples (identical biology) should cluster together. Clustering by processing batch indicates a significant batch effect requiring correction.

Protocol: Benchmarking Batch Effect Correction Methods

Objective: To evaluate the performance of different correction tools in the context of a known ground truth. Method:

- Create Gold-Standard Data: Generate or use a dataset with both:

- Strong Biological Signal: Known DARs between distinct cell types (e.g., GM12878 vs. K562).

- Introduced Batch Effect: Artificially impose a batch structure and add known noise/spike-in effects to the read counts, or use a real dataset with documented batch issues.

- Apply Corrections: Process the data using common pipelines:

- Raw (Uncorrected)

- ComBat-seq (Empirical Bayes adjustment)

- Harmony (Iterative PCA-based integration)

- sva (Surrogate Variable Analysis)

- Evaluate Metrics: Calculate and compare:

- Batch Mixing: Assess via Local Inverse Simpson’s Index (LISI) on batch labels. Higher scores = better mixing.

- Biological Conservation: Assess via LISI on cell-type labels. Lower scores = better biological separation.

- DAR Recovery: Precision/recall of recovering the known gold-standard DARs after correction.

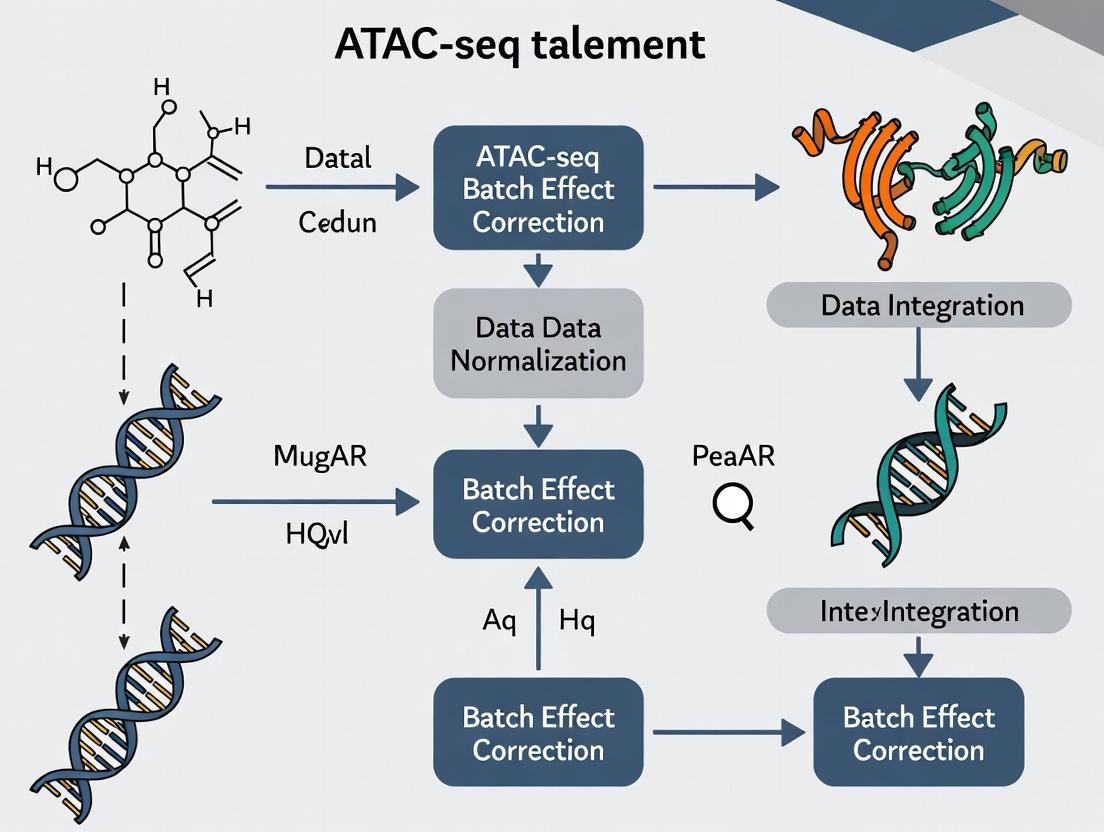

Diagrams

ATAC-seq Batch Effect Diagnosis Workflow

Technical vs. Biological Variation in Data

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ATAC-seq Batch Effect Management |

|---|---|

| Tn5 Transposase (Loaded) | Enzyme that simultaneously fragments and tags accessible DNA. Key Source of Variation: Different activity lots can cause batch effects. Solution: Use large, single lots for entire study; aliquot and freeze. |

| Magnetic Beads (SPRI) | For size selection and clean-up. Key Source of Variation: Bead lot/brand can affect size selection and recovery efficiency. Solution: Standardize brand and protocol; calibrate bead-to-sample ratio. |

| Indexed PCR Primers | For multiplexing samples. Key Source of Variation: Primer lot differences can affect amplification efficiency. Solution: Use unique dual indexes to mitigate index hopping; purchase all primers from a single synthesis lot. |

| Control Cell Line (e.g., GM12878) | A consistent, well-characterized biological sample. Function: Served as an inter-batch negative control to monitor technical variation across experiments. |

| Commercial Library Prep Kit | Standardized reagents. Function: Can reduce protocol-derived variation compared to homebrew methods, but kit lot changes remain a potential batch source. |

| Sequencing Depth Spike-in (e.g., S. cerevisiae DNA) | Exogenous DNA added in fixed amounts. Function: Helps normalize for technical variation in library amplification and sequencing depth between batches. |

Troubleshooting Guides & FAQs

Q1: We observe significant variation in library yield between ATAC-seq batches, even when using the same cell type and input cell number. What are the primary suspects in the library preparation stage? A: The most common sources in library prep are:

- Tn5 Transposase Activity Variability: Different lots or activity decay of the enzyme lead to inconsistent tagmentation efficiency.

- DNA Purification Bead Conditions: Variations in bead-to-sample ratio, incubation time, temperature, or ethanol wash consistency dramatically affect DNA recovery.

- PCR Amplification Bias: Cycle number optimization is critical. Over-amplification leads to duplication artifacts and biases GC-rich regions. Use a minimal number of cycles and monitor with qPCR if possible.

- Reagent Lot Changes: Subtle variations in buffer composition (e.g., Mg²⁺ concentration) between manufacturer lots can alter tagmentation kinetics.

Experimental Protocol: Systematic Library Prep QC

- Post-Tagmentation DNA QC: Run 1 µL of tagmented DNA on a Bioanalyzer/TapeStation (High Sensitivity DNA assay). Compare fragment size distributions between batches.

- qPCR Amplification Monitoring: After 5-10 cycles of library PCR, remove an aliquot and quantify. Determine the optimal additional cycle number needed to avoid plateau.

- Spike-in Controls: Use a constant amount of synthetic DNA (e.g., from E. coli) spiked into samples before tagmentation. Its sequencing read count post-alignment serves as a normalization control for prep efficiency.

Q2: Our clustering analysis shows batch-specific grouping that correlates with sequencing run date. What sequencing-run factors contribute most strongly? A: Key sequencing-run batch effects include:

- Flow Cell Lot & Cluster Density: Variations in flow cell chemistry and optimal cluster density affect base calling accuracy, especially in later cycles.

- Sequencing Reagent Kits (SBS Kit): Changes in polymerase, nucleotides, or buffer formulations between kits alter error profiles and signal intensities.

- Sequencer Performance Drift: Optical system calibration or laser intensity can drift over time within the same instrument.

- Index/Hoox Composition & crosstalk: Imbalanced multiplexing or index misassignment between runs can create technical biases.

Experimental Protocol: Cross-Run Sequencing Control

- Inter-Run Control Sample: Reserve an aliquot of a well-characterized library (e.g., from K562 cells) to sequence at a low depth (~5% of lane) across every run.

- Balanced Multiplexing: Pool samples from different experimental batches within the same sequencing lane/library to confound batch with lane.

- Monitor Sequencing Metrics: Track Q-scores, cluster density, phasing/prephasing rates, and intensity per cycle across runs.

Q3: Are there quantifiable metrics to diagnose the dominant source of batch variation before analysis? A: Yes. The following table summarizes key metrics and their indicative causes:

Table 1: Diagnostic Metrics for ATAC-seq Batch Effect Sources

| Metric | Measurement Method | Indicative of Library Prep Issue if: | Indicative of Sequencing Issue if: |

|---|---|---|---|

| Fragment Size Distribution | Bioanalyzer, Fragment Analyzer | Profiles differ pre-sequencing. Shift in nucleosomal periodicity. | Consistent pre-seq, but post-alignment distributions vary by run. |

| Total Read Yield | Sequencing summary stats | Varies significantly between libraries pre-pooling. | Consistent post-pooling libraries yield different reads/flowcell. |

| Reads in Peaks (RIP) % | Alignment & peak calling | Varies widely between batches prepared separately. | Consistent within a lane but varies between lanes/runs. |

| Transcription Start Site (TSS) Enrichment Score | Alignment & calculation | Low scores correlate with poor tagmentation or over-digestion. | Scores are consistently high but show run-specific mean shifts. |

| PCR Duplication Rate | Alignment (e.g., Picard MarkDuplicates) | High rates suggest over-amplification during library prep. | Uniform across samples within a run but different between runs. |

| GC Bias | Alignment (e.g., collectGcBiasMetrics in Picard) |

Strong bias present from start. | Bias pattern is consistent but magnitude shifts by run/flowcell. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Consistent ATAC-seq Experiments

| Item | Function | Critical for Mitigating Batch Effects In: |

|---|---|---|

| Validated, Aliquoted Tn5 Transposase | Enzymatically fragments DNA and adds sequencing adapters. | Library Prep. Use large, single lots aliquoted and stored at -80°C. |

| Non-ionic Detergent (e.g., Digitonin) | Permeabilizes nuclear membranes for Tn5 access. | Library Prep. Consistent brand, concentration, and permeabilization time is key. |

| Magnetic SPRI Beads | Size-selects DNA fragments and purifies reactions. | Library Prep. Calibrate bead ratio precisely; use consistent brand and lot. |

| High-Fidelity PCR Master Mix | Amplifies tagmented DNA with minimal bias. | Library Prep. Use a master mix with uniform performance across GC content. |

| Unique Dual Index (UDI) Kits | Uniquely labels each sample to prevent index hopping. | Sequencing Run. Essential for accurate sample multiplexing across runs. |

| PhiX Control Library | Spiked-in for Illumina run quality control. | Sequencing Run. Monitors cluster generation, sequencing, and base calling. |

| Commercial Control Cell Line (e.g., K562) | Provides a biologically constant reference. | Both. Run as an inter-batch control to separate technical from biological variation. |

Workflow & Logical Relationship Diagrams

Troubleshooting Guides & FAQs

Q1: My ATAC-seq replicates from different batches show high correlation within batch but low correlation between batches in PCA. What is the first step I should take?

A: This is a classic sign of a strong batch effect. The first step is to perform systematic quality control. Generate a PCA plot colored by batch and another colored by your biological condition of interest. If the samples cluster primarily by batch, you must apply a batch effect correction method before any downstream differential analysis. Common first-line methods include using ComBat-seq (from the sva package) on your count matrix or including batch as a covariate in your differential analysis tool (e.g., in DESeq2).

Q2: After batch correction, I am concerned about over-correction removing real biological signal. How can I diagnose this? A: To guard against over-correction, follow this protocol:

- Preserve a Negative Control: Ensure you have known negative control regions (e.g., housekeeping gene promoters) that should not change between your conditions. Plot the signal in these regions before and after correction.

- Use a Positive Control: If available, check known differentially accessible regions (from validated literature) retain significance post-correction.

- Evaluate Variance: Plot the variance explained by your biological condition vs. batch before and after correction. A good method reduces batch variance while preserving condition variance. Tools like

pvca(Principal Variance Component Analysis) can quantify this. - Biological Validation: Ultimately, key findings should be validated with an orthogonal method (e.g., qPCR on a separate batch of samples).

Q3: My differential peak calling results are inconsistent when I add more samples from a new sequencing run. What is the likely cause and solution? A: Inconsistent results upon sample addition are highly indicative of unaddressed batch effects. The new sequencing run is a technical batch. Solutions depend on your analysis stage:

- If re-processing is possible: Re-analyze all samples together (old and new) from raw FASTQ files using the identical pipeline (alignment, filtering, peak calling parameters). Then, apply a batch-aware differential analysis method.

- If working with peak counts: Use a batch-correction method designed for count data, such as

ComBat-seqorRUVseq, or useDESeq2with a design formula that includes both your biological condition and the batch factor (e.g.,~ batch + condition).

Q4: Which batch effect correction method should I choose for my ATAC-seq count matrix? A: The choice depends on your experimental design and the suspected nature of the batch effect. See the table below for a comparison of common methods in the context of ATAC-seq.

Comparative Analysis of Batch Effect Correction Methods

Table 1: Key Batch Effect Correction Methods for ATAC-seq Data

| Method (Package) | Input Data Type | Principle | Key Strengths for ATAC-seq | Key Limitations |

|---|---|---|---|---|

ComBat / ComBat-seq (sva) |

Normalized counts (ComBat) or raw counts (ComBat-seq) | Empirical Bayes adjustment of mean and variance per batch. | Handles large batch effects well; preserves integer counts (ComBat-seq). | Assumes no association between batch and condition; risk of over-correction. |

Harmony (harmony) |

Cell embeddings (scATAC) or sample-level PCA coordinates. | Iterative clustering and integration to remove batch covariates. | Excellent for complex, non-linear batch effects; fast. | Applied to reduced dimensions, not counts directly. |

RUVseq (RUVseq) |

Raw count matrix. | Uses control genes/peaks (negative controls) to estimate and remove unwanted variation. | Flexible; good when negative controls are available. | Performance depends on choice of control features. |

Batch-aware DA Tools (DESeq2, limma) |

Raw count matrix. | Models batch as a covariate in the statistical design formula. | Statistically rigorous; integrated into DA testing workflow. | Assumes additive batch effect; linear model may not capture complex biases. |

Remove Unwanted Variation (RUV) (ruv) |

Various (normalized log counts common). | Similar to RUVseq, uses factor analysis on control features. | Can model multiple sources of unwanted variation. | Requires careful selection of k (factors) and control features. |

Experimental Protocol: Diagnosing and Correcting Batch Effects

Protocol: Systematic Diagnosis and Correction of ATAC-seq Batch Effects

I. Pre-processing & Quality Control (Prerequisite)

- Process all samples through an identical pipeline (e.g.,

fastpfor trimming,Bowtie2/BWAfor alignment to reference genome,samtoolsfor filtering,MACs3for peak calling with consistent parameters). - Create a consensus peak set by merging peaks from all samples (

bedtools merge). - Generate a raw count matrix by counting reads in the consensus peaks for each sample (

featureCountsorbedtools multicov). - Perform initial QC: Calculate library size, FRiP (Fraction of Reads in Peaks) score, and TSS enrichment score per sample. Flag outliers.

II. Diagnostic Visualization

- Perform PCA on the variance-stabilized or log-transformed count matrix (e.g., using

plotPCAfromDESeq2orprcompin R). - Generate two PCA plots: Color points by (a) Batch ID and (b) Biological Condition. Strong clustering by batch in plot (a) indicates a batch effect that may confound the condition signal in plot (b).

III. Batch Effect Correction (Example using DESeq2 with Covariate)

- Construct a

DESeqDataSetfrom the raw integer count matrix and acolDatadataframe containing columns forconditionandbatch. - Specify the design formula to model batch as a covariate:

design = ~ batch + condition. - Run the standard

DESeqworkflow:dds <- DESeq(dds). This will estimate size factors, dispersion, and model coefficients, accounting for batch. - Extract results for your contrast of interest:

res <- results(dds, contrast=c("condition", "GroupA", "GroupB")). - Re-visualize: Perform PCA on the

varianceStabilizingTransformationof the corrected data. The batch clustering should be diminished, while condition separation should be maintained.

Visualizations

Diagram 1: ATAC-seq Batch Effect Diagnosis Workflow

Diagram 2: Impact of Batch Effect on Statistical Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for Robust ATAC-seq Studies

| Item | Function in Context of Batch Effect Mitigation |

|---|---|

| Tn5 Transposase (Homemade or Commercial) | The core enzyme for tagmentation. Using the same purified batch/library preparation kit across all samples is critical to minimize batch variation at the assay level. |

| NEBNext High-Fidelity 2X PCR Master Mix | For post-tagmentation PCR amplification. A consistent, high-fidelity master mix reduces PCR bias and batch-specific amplification noise. |

| SPRIselect Beads (Beckman Coulter) | For precise size selection and clean-up post-tagmentation and PCR. Consistent bead-to-sample ratio and lot across batches is vital for reproducible library fragment distribution. |

| QuBit dsDNA HS Assay Kit (Thermo Fisher) | For accurate, sensitive quantification of library concentration before pooling. Inaccurate quantification is a major source of batch-to-batch read depth variation. |

| Phusion High-Fidelity DNA Polymerase | An alternative for PCR amplification if optimizing a custom ATAC-seq protocol. High fidelity minimizes sequence errors that could be batch-specific. |

| Pooling Internal Control (e.g., Spike-in Oligos) | Adding a small, constant amount of synthetic DNA from another species (e.g., E. coli, yeast) to each reaction allows for post-sequencing normalization based on spike-in read counts, helping to correct for batch effects. |

| Indexed Adapters (Unique Dual Indexes, UDIs) | Using unique dual indexes per sample (not just per batch) allows for precise demultiplexing and identification of index hopping, preventing batch misassignment. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My PCA plot shows strong separation by date, not by treatment group. How do I confirm this is a batch effect? A: This is a classic signature of a batch effect. To confirm:

- Color by Metadata: Re-plot the PCA, coloring points by suspected batch variables (sequencing lane, library prep date, technician) and by your biological condition. Persistent grouping by a technical variable indicates a batch effect.

- Correlation Heatmap Check: Generate a sample-to-sample correlation heatmap. You will likely see high correlation within batches and lower correlation between batches, forming clear blocks.

- Statistical Test: Use PERMANOVA (adonis function in R's vegan package) to quantify the variance explained by your batch variable versus your treatment variable.

Q2: The hierarchical clustering dendrogram groups all control samples from different batches together, separate from treated samples. Does this mean I don't have a batch effect? A: Not necessarily. This is an ideal outcome if your biological signal is very strong and overwhelms the batch technical variation. However, you should still verify:

- Check the correlation heatmap for off-diagonal block structure.

- Examine the PCA plot for any sub-clustering within the control or treated groups that aligns with batch.

- If the signal is strong, batch effect correction may still be necessary to improve sensitivity for more subtle differences.

Q3: After batch correction (e.g., using ComBat or Harmony), my correlation heatmap still shows some block structure. Has the correction failed? A: Not always. Residual block structure may indicate:

- Over-correction: The correction model may have been too aggressive. Re-run with a milder parameter (e.g., adjusting the

modargument in ComBat to not model the biological condition). - Non-linear Batch Effects: Some batch effects are not additive/multiplicative and may require non-linear correction methods.

- Incomplete Model: An unaccounted-for batch covariate (e.g., RNA integrity number) may still be present. Review your metadata.

Table 1: Comparison of Batch Detection Diagnostic Tools

| Diagnostic Tool | Primary Function | Key Metric | Interpretation of Batch Effect | Typical ATAC-seq Software/Package |

|---|---|---|---|---|

| PCA Plot | Dimensionality reduction to visualize largest sources of variance. | Percentage of variance explained by principal components (PCs). | Samples cluster strongly by technical batch (e.g., PC1) rather than biological condition. | plotPCA (DESeq2), prcomp (stats), scater |

| Correlation Heatmap | Visualize pairwise similarity between all samples. | Pearson/Spearman correlation coefficient. | High correlation blocks along diagonal correspond to batches; low inter-batch correlation. | pheatmap, ComplexHeatmap, cor function. |

| Hierarchical Clustering Dendrogram | Tree-based representation of sample similarities. | Branch height (distance). | Samples from the same batch cluster on sub-branches before merging with other conditions. | hclust (stats), pheatmap (with clustering). |

Table 2: Impact of Batch Effect on Simulated ATAC-seq Data (Hypothetical Study)

| Analysis Scenario | Variance Explained by Treatment (PERMANOVA R²) | Variance Explained by Batch (PERMANOVA R²) | Number of Significant Peaks (FDR < 0.05) |

|---|---|---|---|

| No Simulated Batch Effect | 0.65 | 0.05 | 1250 |

| With Moderate Batch Effect | 0.25 | 0.45 | 310 |

| After ComBat Correction | 0.55 | 0.10 | 1180 |

Experimental Protocols

Protocol 1: Generating Diagnostic Plots for ATAC-seq Batch Detection

Objective: To create PCA, correlation heatmap, and hierarchical clustering plots from ATAC-seq peak count data to diagnose batch effects.

Materials: Normalized peak count matrix (e.g., from DESeq2 or edgeR), sample metadata table (CSV).

Software: R (≥4.0.0) with packages: ggplot2, pheatmap, DESeq2, stats.

Method:

- Data Input & Normalization: Import your peak-by-sample count matrix into R. Perform variance-stabilizing transformation (VST) using

DESeq2::vst()or log-normalization. This is critical for PCA and correlation. - PCA Plot:

- Perform PCA on the normalized matrix using

prcomp(t(normalized_matrix), center=TRUE, scale.=FALSE). - Extract variance percentages from the

prcompobject summary. - Plot using

ggplot2, mapping point color tobatchand shape totreatment_group.

- Perform PCA on the normalized matrix using

- Correlation Heatmap:

- Calculate the pairwise correlation matrix:

cor_matrix <- cor(normalized_matrix, method="spearman"). - Plot using

pheatmap::pheatmap(cor_matrix, annotation_col=metadata_df).

- Calculate the pairwise correlation matrix:

- Hierarchical Clustering Dendrogram:

- Calculate distance matrix:

dist_matrix <- dist(t(normalized_matrix), method="euclidean"). - Perform clustering:

hclust_obj <- hclust(dist_matrix, method="complete"). - Plot:

plot(hclust_obj, main="Sample Clustering", xlab="", sub="").

- Calculate distance matrix:

Protocol 2: Evaluating Batch Correction Efficacy

Objective: To assess the performance of a batch correction method (e.g., sva::ComBat) using the same diagnostic tools.

Method:

- Apply your chosen batch correction algorithm (e.g.,

ComBat) to the normalized data matrix, specifying the batch and model (if any) parameters. - Use the corrected matrix as input and repeat Protocol 1 in its entirety.

- Compare the pre- and post-correction plots side-by-side.

- Success Criteria: In the PCA, batch-based clustering should diminish, while treatment-based separation should remain or improve. The correlation heatmap should show more uniform, high correlation across all samples.

Visualizations

Title: ATAC-seq Batch Effect Diagnostic & Correction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ATAC-seq Batch Effect Diagnostics

| Item | Function in Batch Analysis | Example/Note |

|---|---|---|

| High-Quality Metadata Sheet | Critical for labeling samples by batch and condition in plots. Must include library prep date, sequencer, lane, operator, etc. | A rigorously maintained CSV file. |

| R/Bioconductor Environment | The primary computational platform for statistical analysis and visualization. | R 4.2+, with DESeq2, pheatmap, sva, limma packages. |

| Variance-Stabilizing Transformation (VST) Algorithm | Normalizes count data to make variances independent of means, a prerequisite for PCA. | DESeq2::vst() is standard for ATAC-seq count data. |

| Batch Correction Algorithm | Mathematical method to remove technical variation. | sva::ComBat-seq (for counts), Harmony (for PCA space). |

| Visualization Software Library | Generates publication-quality diagnostic plots. | ggplot2, ComplexHeatmap, pheatmap in R. |

| Positive Control Samples | Technical replicates or pooled samples run across batches. | Used to benchmark batch effect magnitude and correction success. |

Technical Support Center: ATAC-Seq Batch Effect Troubleshooting

Frequently Asked Questions (FAQs)

Q1: How do I know if my ATAC-seq data has significant batch effects that need correction? A: Batch effects are often visible in Principal Component Analysis (PCA) plots, where samples cluster by processing date, sequencing lane, or operator rather than by biological condition. A quantitative assessment can be made using metrics like Percent Variance Explained (PVE) by the batch variable. A PVE > 10% often warrants correction. The following table summarizes key diagnostic metrics:

Table 1: Quantitative Metrics for Assessing Batch Effect Strength

| Metric | Calculation Method | Threshold Indicating Strong Batch Effect | Tool for Calculation |

|---|---|---|---|

| Percent Variance Explained (PVE) | Variance attributable to batch / Total variance in PCA | > 10% | svd() in R, prcomp |

| Principal Component Correlation | Correlation of PC eigenvectors with batch variable | CorToPC() (sva package) |

|

| Silhouette Width (Batch) | Measures how similar samples are to their batch vs. other batches | Positive value (closer to 1) | cluster::silhouette |

| Distance Ratio (B/D) | Mean inter-batch distance / Mean intra-batch distance | > 1.5 | Custom calculation on PCA space |

Q2: My negative control samples from different batches cluster separately. Should I correct for this? A: Yes. When technical controls cluster by batch, it is strong evidence that technical variation is obscuring biological signals. Correction is necessary. Use positive control samples (e.g., a consistent cell line) across batches to monitor and adjust for this technical variance.

Q3: After applying ComBat-seq, my biologically distinct groups are merging. What went wrong? A: This indicates over-correction, often due to a weak experimental design where batch is confounded with biological condition. If all samples from Condition A were processed in Batch 1 and all from Condition B in Batch 2, the model cannot disentangle the sources of variation. Correction methods will remove the batch effect, but also the biological signal. The solution is to re-design the experiment with interleaved batches.

Q4: Which batch correction method should I choose for ATAC-seq count data? A: The choice depends on your data structure and the presumed nature of the batch effect. See the protocol below and the following comparison table:

Table 2: Common ATAC-Seq Batch Correction Methods

| Method | Package | Input Data Type | Assumption | Best For | Key Consideration |

|---|---|---|---|---|---|

| ComBat-seq | sva |

Raw Counts | Additive and multiplicative effects | Preserving integer counts for differential analysis | Uses a parametric empirical Bayes framework. |

| Harmony | harmony |

PCA Embedding | Linear batch effects in low-dim space | Integrating large datasets quickly | Iteratively removes batch-specific centroids. |

| limma (removeBatchEffect) | limma |

Log2CPM (Normalized) | Linear effect on log-expression | Simple, known batch design | Applied to continuous, normalized data. |

| RUVseq | RUVseq |

Counts | Unwanted variation via control genes/peaks | When negative controls are available | Requires negative control features. |

Troubleshooting Guides

Issue: High PVE by Batch in Initial PCA

- Symptoms: Samples cluster strongly by run date or technician in PCA plot.

- Step-by-Step Diagnosis:

- Generate a PCA plot from normalized count matrix (e.g., log2(TPM+1) or VST).

- Color points by

Batchand byCondition. - Calculate the variance explained by the first 5 PCs attributed to the batch variable using ANOVA.

- If batch PVE for PC1 or PC2 is high (>10%), proceed to correction.

- Protocol: Batch Diagnostics with sva:

Issue: Confounded Experimental Design Leading to Over-Correction

- Symptoms: Loss of statistical power or biological signal after correction.

- Prevention & Solution Protocol:

- Design Phase: Implement a balanced block design. For 2 conditions and 2 batches, process: Batch1: CondA, CondB; Batch2: CondA, CondB.

- Analysis Phase: If confounded, include biological covariates in the correction model to anchor the signal.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Materials for Robust ATAC-Seq Studies

| Item | Function | Recommendation for Batch Studies |

|---|---|---|

| Tn5 Transposase | Enzymatically fragments and tags genomic DNA with adapters. | Use the same purified lot number across all batches of a study. |

| Nextera Index Kits (i7/i5) | Provides dual-indexed primers for sample multiplexing. | Aliquot a master mix of indexes from a single kit lot to avoid index-specific bias. |

| Control Cell Line (e.g., K562) | Positive control for assay performance and batch monitoring. | Include 2-3 replicates of the same control cell line passage in every batch. |

| PCR Purification Beads (SPRI) | Size selection and clean-up of libraries. | Use the same bead-to-sample ratio and manufacturer across batches. |

| High-Sensitivity DNA Bioanalyzer/ TapeStation Kit | Quality assessment of final library fragment distribution. | Essential for verifying consistent fragment size profiles (nucleosomal ladder) across batches. |

| qPCR Kit for Library Quantification | Accurate absolute quantification prior to sequencing pooling. | Use the same standard curve and master mix lot for cross-batch comparisons. |

Visualizations

Diagram Title: ATAC-Seq Batch Effect Assessment & Correction Workflow

Diagram Title: Logical Relationship: Batch Effect on Differential Peak Calling

Corrective Tools in Action: A Step-by-Step Guide to ATAC-seq Batch Effect Removal

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My PCA plot shows strong separation by sequencing date rather than biological group. What does this indicate and what are my first steps? A: This is a classic sign of a dominant batch effect. Your first step is to confirm the technical artifact using a negative control. Re-run PCA using only housekeeping genes or negative control regions (if defined in your experiment). If the batch separation persists in this control analysis, it confirms a technical batch effect rather than biological signal. Proceed with batch effect correction only after this confirmation. Initial diagnostic steps should be documented in a table:

| Diagnostic Step | Purpose | Expected Outcome if Batch Effect is Present |

|---|---|---|

| PCA on full dataset | Visualize largest sources of variance | Samples cluster by technical batch (e.g., date, lane) |

| PCA on control features | Isolate technical variance | Batch clustering remains, biological separation diminishes |

| Correlation heatmap | Assess inter-sample correlations | High within-batch, low between-batch correlation |

Q2: After applying ComBat, my biological signal seems attenuated. Is this expected, and how can I validate? A: Over-correction is a known risk. Validation is critical. First, ensure you used an appropriate model matrix that included your biological variable of interest. To validate, check the preservation of biological signal in a positive control set (e.g., known differentially accessible regions from a gold-standard dataset). Quantify the change in signal strength:

| Validation Metric | Pre-Correction | Post-Correction | Acceptable Range |

|---|---|---|---|

| Effect size (Cohen's d) in positive control regions | e.g., 2.1 | e.g., 1.8 | ≤ 30% reduction |

| p-value (-log10) in positive control regions | e.g., 12 | e.g., 10 | ≤ 2 order magnitude loss |

| Variance explained by biological factor (R²) | e.g., 15% | e.g., 12% | ≥ Pre-correction value |

Q3: I have a complex experimental design with multiple overlapping batches. Which tool should I choose?

A: For complex designs (e.g., samples processed across multiple dates and by multiple technicians), linear mixed models (LMMs) or tools like limma with removeBatchEffect or Harmony are often more appropriate than simple one-factor tools like ComBat. Your choice depends on the design:

| Tool | Best For | Key Limitation | Reference in ATAC-seq |

|---|---|---|---|

| ComBat / sva | Single, discrete batch factor | Assumes batch is independent of condition | Used in Buenrostro et al., 2018 |

| limma-removeBatchEffect | Multi-factorial, known batches | Requires known batch variables; no uncertainty estimation | Grandi et al., 2022 |

| Harmony | Multiple, overlapping batches | Iterative algorithm; can be computationally intensive | Integration of multi-atlas ATAC-seq data |

| LMM (e.g., lme4) | Nested/hierarchical batch designs | Computationally intensive for very large feature sets | Recommended for complex pharmacogenomics studies |

Q4: What is the minimal replicate number per batch for reliable correction? A: There is no universal minimum, but statistical power drops severely below recommended thresholds. The following table summarizes consensus from recent literature:

| Batch Correction Method | Recommended Minimum Replicates per Batch | Critical Consideration |

|---|---|---|

| Empirical Bayes (ComBat) | 3-5 | Fewer replicates can lead to over-fitting and gene-specific shrunken estimates. |

| Mean-Centering | 2 | Highly unstable; not recommended for downstream differential analysis. |

| SVA (Surrogate Variable Analysis) | 4+ | Relies on permutation procedures; low n increases false SV detection. |

| RUV (Remove Unwanted Variation) | 3+ (for negative controls) | Depends on quality/quantity of negative control regions. |

Experimental Protocol: Validating Batch Effect Correction in ATAC-seq

Protocol Title: Systematic Benchmarking of Batch Effect Correction Performance on Spike-in Controlled ATAC-seq Data.

1. Experimental Design:

- Generate an ATAC-seq dataset where a known, biologically inert cell line (e.g., K562) is processed in two separate experimental "batches" (different days, reagents).

- Spike-in a fixed number of cells from a distinct, traceable cell line (e.g., D. melanogaster S2 cells) into each sample as an internal control.

- Include true biological variation in the main cell line via drug treatment or genetic perturbation, replicated across both batches.

2. Computational Correction:

- Process reads through a standardized pipeline (e.g., FastQC > Trim Galore! > Bowtie2 > MACS2).

- Generate a count matrix for human peaks and Drosophila spike-in peaks separately.

- Apply candidate correction methods (e.g., ComBat-seq, limma, Harmony) to the human count matrix, using only the batch label.

3. Validation Metrics:

- Batch Mixing: Calculate the Local Inverse Simpson's Index (LISI) for batch labels post-correction. Higher LISI = better mixing.

- Signal Preservation: Compute the differential accessibility (DA) analysis for the known biological condition. Compare the statistical strength (e.g., number of significant peaks, effect sizes) pre- and post-correction.

- Spike-in Control: Assess DA analysis on the Drosophila spike-in peaks. Ideally, zero peaks should be called as differentially accessible between batches post-correction.

Visualization: Decision Workflow for Batch Correction

Title: ATAC-seq Batch Correction Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ATAC-seq Batch Effect Research |

|---|---|

| K562 or HEK293 Cell Line | Standard, well-characterized reference cell line used as a biological constant across batches to measure technical variance. |

| Spike-in Control (e.g., S2 cells, E. coli DNA) | Exogenous, invariant DNA added in fixed amounts to each library. Used to normalize for technical variation and assess correction efficacy. |

| Tn5 Transposase (Homemade or Commercial) | Enzyme for tagmentation. Batch differences in enzyme activity are a major effect source; standardizing lot is critical. |

| Unique Molecular Index (UMI) Adapters | Adapters containing random molecular barcodes to enable PCR duplicate removal, improving quantification accuracy for correction tools. |

| Commercial ATAC-seq Control Plots (e.g., from sequencing vendor) | Standardized plots (e.g., insert size distribution, TSS enrichment) providing objective quality metrics to identify outlier batches. |

| Synthetic Oligonucleotide Spike-ins (e.g., ERCC for RNA) | In ATAC-seq, designed non-genomic DNA fragments at known concentrations can serve as an absolute standard for normalization. |

Troubleshooting Guides & FAQs

Q1: After applying removeBatchEffect to my ATAC-seq peak count matrix, my corrected data shows negative values. Is this expected and how should I proceed?

A: Yes, this is an expected behavior of the linear modeling approach. removeBatchEffect fits a linear model to the data and returns the residuals, which can be negative. For downstream analyses that require non-negative counts (e.g., many clustering algorithms, visualization), you have options:

- Set negatives to zero: A common pragmatic step:

corrected_matrix[corrected_matrix < 0] <- 0. - Add a constant shift: Add the absolute value of the minimum to the entire matrix to make all values positive.

- Use in PCA only: The function is often used to prepare data for PCA or visualization, where negatives are acceptable. For differential analysis, use the full linear model in

limmadirectly, not the batch-corrected counts.

Q2: Should I apply removeBatchEffect before or after normalization (e.g., TMM, quantile) for ATAC-seq data?

A: The standard pipeline, as established in relevant literature, is to apply normalization before batch correction. Batch correction is performed on the log-transformed, normalized counts.

Recommended Workflow:

- Counts per million (CPM) or library size normalization.

- log2 transformation (usually with a prior count, e.g.,

log2(CPM + 1)). - Apply

removeBatchEffect(model.matrix(~condition), batch = batch_vector). - Proceed with PCA, clustering, or heatmaps.

Q3: How do I decide whether to include my biological condition of interest in the design matrix when using removeBatchEffect?

A: This is a critical decision that depends on your research goal.

- To visualize batch effects: Do NOT include the condition. Use

removeBatchEffect(x, batch)only. This removes variation due to batch, preserving all other sources of variation (including your condition) for inspection. - To visualize the condition effect after removing batch: DO include the condition in the design matrix. Use

removeBatchEffect(x, batch, design=model.matrix(~condition)). This removes batch variation while protecting the signal related to your biological condition. This is typically used for final visualizations.

Q4: Can removeBatchEffect handle complex batch designs with multiple batch factors (e.g., sequencing lane and processing date)?

A: Yes. The batch parameter can accept a matrix where columns are different batch factors. For example:

batch_matrix <- cbind(batch_lane, batch_date)

corrected <- removeBatchEffect(x, batch = batch_matrix)

It will adjust for all factors simultaneously in the linear model.

Experimental Protocol: Evaluating Batch Correction Efficacy

This protocol is central to a thesis on ATAC-seq batch effect correction methods.

Title: Protocol for Benchmarking removeBatchEffect on ATAC-seq Peak Matrices.

Objective: To quantitatively assess the performance of limma::removeBatchEffect in removing technical batch variation while preserving biological signal in an ATAC-seq dataset with a known batch structure.

Materials & Input Data:

- A raw peak count matrix (rows: peaks, columns: samples).

- Sample metadata with at least two variables:

Batch(e.g., B1, B2) andCondition(e.g., Control, Treatment).

Procedure:

- Preprocessing: Normalize the count matrix using the TMM method (from

edgeR) and transform to log2-CPM. - Batch Correction: Apply

removeBatchEffectto the log2-CPM matrix.- For Condition-Preserved Correction:

design <- model.matrix(~Condition); corrected <- removeBatchEffect(x, batch=metadata$Batch, design=design) - For Condition-Naïve Correction:

corrected <- removeBatchEffect(x, batch=metadata$Batch)

- For Condition-Preserved Correction:

- Principal Component Analysis (PCA): Perform PCA on both the uncorrected (

log2-CPM) and corrected matrices. - Metric Calculation:

- Principal Component Variance: Extract the percentage of variance explained by the first PC (PC1) and second PC (PC2).

- Silhouette Width:

- Batch Silhouette: Calculate using batch labels. A decrease indicates reduced batch clustering.

- Condition Silhouette: Calculate using condition labels. An increase or maintenance indicates preserved biological signal.

- Distance-Based Metrics:

- Compute the average Euclidean distance between samples within the same condition but across batches (Inter-Batch Distance).

- Compute the average distance within the same condition and batch (Intra-Batch Distance).

- A reduction in the ratio of (Inter-Batch / Intra-Batch) distance suggests successful correction.

Table 1: Performance Metrics of removeBatchEffect on a Simulated ATAC-seq Dataset

| Metric | Uncorrected (log2-CPM) | After removeBatchEffect (Condition Protected) |

Ideal Trend |

|---|---|---|---|

| Variance Explained by PC1 (%) | 45.2 | 28.5 | Decrease |

| Variance Explained by PC2 (%) | 18.7 | 22.1 | Increase |

| Batch Silhouette Width | 0.65 | 0.12 | Decrease |

| Condition Silhouette Width | 0.31 | 0.45 | Increase |

| Inter- / Intra-Batch Distance Ratio | 2.05 | 1.22 | Decrease |

Note: Data simulated from a typical ATAC-seq experiment with 2 batches and 2 conditions (n=5 per group).

Visualization: Workflow and Logic

Diagram 1: ATAC-seq Batch Correction Evaluation Workflow

Diagram 2: removeBatchEffect Logic Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for ATAC-seq Batch Effect Analysis

| Item | Function in Context | Key Notes |

|---|---|---|

| limma R Package | Provides the removeBatchEffect function and the core linear modeling framework for fitting models to expression (or count) data. |

Foundational tool for the statistical correction. |

| edgeR or DESeq2 | Used for initial normalization (e.g., TMM, median ratio) of peak count matrices to account for library size differences before log transformation. | Essential preprocessing step. |

| sva R Package | Alternative/complementary tool. Can estimate surrogate variables of unknown batch effects for inclusion in the removeBatchEffect design matrix. |

Useful for complex, unmodeled batch factors. |

| ggplot2 & pheatmap | Primary R packages for generating evaluation plots: PCA scatter plots (colored by batch/condition) and heatmaps of sample distances. | Critical for visual assessment of correction success. |

| Simulated ATAC-seq Dataset | A count matrix with known batch and condition effects, used for controlled method validation and benchmarking. | Allows calculation of ground truth metrics (see Table 1). |

| High-Performance Computing (HPC) Environment | Running PCA and linear models on genome-wide peak matrices (tens of thousands of peaks) can be computationally intensive for large sample sets. | Necessary for practical analysis of real datasets. |

Troubleshooting Guides & FAQs

Q1: I am using SVA for ATAC-seq data. The sva() function runs, but the number of estimated surrogate variables (SV) is very high, often close to my sample size. Is this normal?

A: This is a common issue with high-dimensional data like ATAC-seq. It often indicates the model is capturing biological signal, not just unwanted variation.

- Check: Use the

num.sv()function with different methods (be,leek,cor) to get an initial, data-driven estimate of the number of SVs. Use this as a guide for then.svargument insva(). - Action: Visually inspect the SVs. Correlate them with known technical (batch, sequencing depth) and biological (cell type, treatment) factors. If early SVs correlate with known batches, they are likely capturing batch effects. If later SVs correlate with your primary variable of interest, you may be over-correcting. Consider specifying a lower

n.sv.

Q2: After applying SVA and the ComBat_seq function (from sva) to my ATAC-seq count matrix, some corrected counts are negative or non-integer. How do I handle this for downstream analysis (e.g., with DESeq2)?

A: ComBat_seq is designed for count data and should preserve counts. Negative values suggest over-correction or model misspecification.

- Check 1: Ensure you are using

ComBat_seq, not the standardComBat(meant for normalized, continuous data). - Check 2: Review your model matrices. The

batchargument must be a factor, and themodelargument should not include the batch term. - Action: As a practical step, you can round the corrected counts and set any values less than 0 to 0. However, investigate the root cause first. For tools like DESeq2 that require integers, use

round()on the output matrix:corrected_counts <- round(ComBat_seq_output).

Q3: How do I decide whether to use the irw or two-step method argument in the sva() function for my experiment?

A: The choice impacts performance, especially with complex data like ATAC-seq.

two-step(Default): Faster and recommended for initial diagnostics. It first estimates latent factors ignoring primary variables, then refines them. Use this for large datasets or when computational speed is a concern.irw(Iteratively Reweighted): More accurate, especially when the primary variable of interest has a strong effect. It iteratively reweights probes/peaks to avoid having the primary variable's signal captured in the SVs. Use this for final analysis when biological signal is strong.

Q4: When integrating SVA into my ATAC-seq differential analysis pipeline, should I include the SVs in the model for both the sva() function and the final differential testing model (e.g., in DESeq2)?

A: Yes. The workflow is sequential. See the protocol and workflow diagram below.

Experimental Protocol: Integrating SVA into an ATAC-seq Differential Analysis Workflow

Objective: Identify differentially accessible chromatin regions while correcting for unknown confounders and known batch effects.

Materials & Input Data:

- ATAC-seq Peak-by-Sample Count Matrix: Raw integer counts from a tool like

featureCounts. - Sample Metadata: Dataframe containing known batches and the primary condition of interest.

Procedure:

- Initial Data Setup: Create a baseline model matrix (

mod) from the metadata that includes your primary condition of interest (e.g., treatment vs. control). Optionally, create a null model matrix (mod0) that only includes an intercept or known covariates you are not adjusting for via SVA (e.g., patient sex if not relevant). - Estimate Surrogate Variables: Run the

sva()function on the raw or lightly pre-filtered count matrix.

- Inspect SVs: Correlate the estimated SVs (

sv_seq$sv) with known metadata to interpret their potential source. Create Final Adjustment Model: Augment the baseline model matrix (

mod) with the estimated surrogate variables.Perform Batch Correction (if needed): If you have a known batch factor, apply

ComBat_sequsing the final model without the batch term to preserve biological signal.Run Differential Analysis: Use the

corrected_counts(if batch-corrected) or the originalcount_matrixand thefinal_moddesign matrix in your chosen differential testing tool (e.g., DESeq2, edgeR).

Research Reagent Solutions

| Item | Function in ATAC-seq/SVA Analysis |

|---|---|

| sva R Package | Core toolkit for estimating surrogate variables to capture hidden factors. |

| DESeq2 / edgeR | Differential analysis packages that accept model matrices containing SVs for statistical testing. |

| ATAC-seq Peak Calling Pipeline | (e.g., MACS2) Generates the count matrix of chromatin accessibility, the primary input for SVA. |

| Batch-specific PCR Indexes | Reagents used during library prep to multiplex samples; a potential source of known batch effects corrected by ComBat_seq. |

| Bioinformatics Cluster | High-performance computing resource essential for running SVA on genome-wide ATAC-seq count matrices. |

Table 1: Common sva Function Arguments and Recommendations for ATAC-seq

| Argument | Purpose | Recommended Setting for ATAC-seq |

|---|---|---|

n.sv |

Number of surrogate variables to estimate. | Use num.sv() output as a guide; start conservative. |

method |

Algorithm for estimating SVs. | "two-step" for speed/diagnostics; "irw" for final analysis. |

B |

Number of iterations for the irw method. | Default (5) is usually sufficient. |

vfilter |

Optional: filter rows by variance to speed computation. | Can use (e.g., vfilter=1000) for initial tests on large matrices. |

Table 2: Troubleshooting Summary for Common SVA/ComBat_seq Errors

| Error Message / Symptom | Likely Cause | Solution |

|---|---|---|

| High number of SVs estimated. | Biological signal mistaken for noise; high dimensionality. | Use num.sv(); correlate SVs with known factors; reduce n.sv. |

Negative/non-integer counts after ComBat_seq. |

Using continuous ComBat on counts; model misspecification. |

Use ComBat_seq; check batch is a factor; round output. |

sva() fails to converge. |

Too many variables for sample size; extreme outliers. | Filter low-count peaks; verify model matrices; try method="two-step". |

| SVs correlate strongly with primary variable. | Over-correction; primary variable is dominant signal. | Use method="irw"; re-examine mod0; consider if correction is appropriate. |

Visualizations

Title: SVA Integration Workflow for ATAC-seq Analysis

Title: SVA Modeling Relationships for Hidden Factors

Troubleshooting Guides & FAQs

Q1: After running Harmony on my Seurat object's PCA embeddings, the corrected UMAP looks worse, with cell types mixing. What went wrong?

A: This is often due to over-correction. Harmony's theta and lambda parameters control the strength of integration. A high theta (e.g., >2) can over-correct and remove genuine biological signal.

- Solution: Re-run Harmony with more conservative parameters (e.g.,

theta = 1or0.5). Always visualize batch mixing metrics (like Local Inverse Simpson's Index - LISI) alongside biological cluster purity. Use the corrected PCA embeddings for downstream clustering, not just UMAP visualization.

Q2: I get an error: "Error in harmonymatrix$Zcorr : $ operator is invalid for atomic vectors." How do I fix this? A: This typically occurs when the input data structure is incorrect. Harmony expects a matrix where cells are columns and dimensions (PCs) are rows.

- Solution: Ensure you are extracting the PCA cell embeddings (

SeuratObject[['pca']]@cell.embeddings) correctly. Transpose if necessary. For Seurat, the standard workflow is:

Q3: Does Harmony work directly on ATAC-seq peak/binary matrices, or must I use latent spaces? A: Harmony is designed for continuous, low-dimensional embeddings. It is not recommended for binary or high-dimensional count matrices.

- Solution: For ATAC-seq data, first reduce dimensionality using Latent Semantic Indexing (LSI) (via Signac or ArchR) or PCA on a TF-IDF transformed matrix. Then, apply Harmony to these LSI or PCA embeddings (usually components 2-30, excluding component 1 which often correlates with sequencing depth).

Q4: After successful integration, how do I feed the Harmony-corrected embeddings back into my Seurat workflow for differential analysis? A: You must create a new dimensional reduction object in the Seurat slot.

- Solution:

Q5: My batches have vastly different cell type compositions. Can Harmony handle this, or will it regress out true biology? A: Harmony assumes most cell types are present across most batches. For datasets with severe compositional imbalance, it may mistake a rare cell type for a batch effect.

- Solution: Use reference-based integration. Designate the most comprehensive batch as a reference by setting

reference_valuesin theRunHarmony()call. Additionally, consider using a higherlambdavalue to better preserve population-specific signal.

Experimental Protocols for ATAC-seq Batch Correction

Protocol 1: Generating Dimensionality-Reduced Embeddings for ATAC-seq (Pre-Harmony)

- Data Preprocessing: Using the Signac package in R, filter cells based on unique nuclear fragments, nucleosome signal, and TSS enrichment. Call peaks using MACS2 on aggregated data.

- Matrix Creation: Create a peak x cell count matrix. Binarize the matrix (0/1 for absence/presence).

- TF-IDF Transformation: Apply Term Frequency-Inverse Document Frequency (TF-IDF) normalization to the binarized matrix.

- Dimensionality Reduction (LSI): Perform singular value decomposition (SVD) on the TF-IDF matrix to obtain Latent Semantic Indexing (LSI) components.

- Embedding Selection: Select components 2 through 30 for downstream integration (component 1 is typically correlated with sequencing depth/read count).

Protocol 2: Benchmarking Harmony's Performance in an ATAC-seq Thesis Context

- Dataset Curation: Combine public ATAC-seq datasets of the same tissue from different studies (e.g., PBMCs from 5 separate projects). Introduce an artificial batch label.

- Integration Runs: Apply Harmony (varying

theta,lambda), Seurat's CCA, and a no-correction baseline to the LSI embeddings. - Evaluation Metrics Calculation:

- Batch Mixing: Calculate LISI scores for batch labels (higher is better).

- Biological Conservation: Calculate LISI scores for known cell type labels (should remain stable or improve). Compute cell type clustering accuracy (ARI) against a gold-standard label.

- Differential Peaks: Perform differential accessibility (DA) testing between cell types pre- and post-integration. Measure the inflation of false DA peaks attributed to batch.

- Quantitative Comparison: Aggregate metrics into a summary table for cross-method comparison.

Table 1: Benchmarking Results of Batch Effect Correction Methods on Synthetic Multi-Batch ATAC-seq Data (PBMCs)

| Method | Batch LISI Score (↑) | Cell Type LISI Score (↑) | Cell Type ARI (↑) | Runtime (min) (↓) | False DA Peak Reduction % (↑) |

|---|---|---|---|---|---|

| No Correction | 1.2 ± 0.1 | 2.1 ± 0.3 | 0.85 | N/A | 0% |

| Harmony (theta=1) | 3.8 ± 0.4 | 2.3 ± 0.2 | 0.88 | 4.2 | 92% |

| Harmony (theta=3) | 4.5 ± 0.5 | 1.9 ± 0.4 | 0.76 | 4.5 | 85% |

| Seurat CCA | 3.5 ± 0.3 | 2.4 ± 0.3 | 0.87 | 18.7 | 89% |

Table 2: Impact of Lambda Parameter on Harmony Integration Performance (Theta fixed at 2)

| Lambda Value | Use Case | Integration Strength | Risk of Over-correction |

|---|---|---|---|

| 0.1 | Strong batch effects, balanced cell types | Very High | High |

| 1 (Default) | General use, moderate batch effects | High | Moderate |

| 10 | Weak batch effects or highly variable compositions | Low | Low |

Visualizations

ATAC-seq to Harmony Integration Workflow

Harmony's Iterative Correction Algorithm

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for ATAC-seq Batch Correction Research

| Tool / Reagent | Function / Purpose | Example / Note |

|---|---|---|

| Signac (R Package) | End-to-end toolkit for single-cell chromatin data analysis. Used for QC, LSI, and integration with Harmony. | Critical for pre-processing ATAC-seq data before Harmony. |

| Harmony (R/Python) | Algorithm for integrating single-cell data by correcting embeddings in a PCA-like space. | Core method being evaluated. Use RunHarmony() (Seurat wrapper) or harmonypy. |

| Seurat | Framework for single-cell genomics data analysis, providing object structure and helper functions. | Commonly used to store data and embeddings pre/post Harmony. |

| ArchR | Comprehensive toolkit for scATAC-seq analysis, includes its own LSI and integration methods. | An alternative to Signac for generating initial embeddings. |

| LISI Score Metric | Local Inverse Simpson's Index. Quantifies batch mixing and biological signal preservation. | Primary quantitative metric for evaluating correction quality. |

| MACS2 | Software for peak calling from sequencing data. Identifies open chromatin regions. | Used in the initial peak-calling step to define features. |

| TF-IDF Transform | Normalization method for scATAC-seq data that downweights high-frequency peaks. | Standard pre-processing step before LSI to generate informative embeddings. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I get a ValueError: Cannot concatenate matrices with different numbers of features when setting up my AnnData object for scVI. What is the cause and solution?

A: This error occurs when the peak regions (features) are not identical across all batches. The solution is to ensure your peaks are harmonized before integration.

- Cause: Different peak calling parameters or genome annotations were used for different batches.

- Solution: Re-process all samples using the same peak set. Generate a consensus peak set from all samples (e.g., using tools like

cellranger-atac aggror functions inepiscanpy). Then, create a unified count matrix where all cells are quantified against this identical feature set.

Q2: After scVI integration, my UMAP still shows strong batch-specific clustering. What are the potential reasons?

A: This indicates the model may not have successfully removed batch variation. Troubleshoot using the following steps:

- Check Model Convergence: Ensure the scVI model has trained sufficiently. Plot the training loss (

scvi.model.SCVI.history["elbo_train"]). The ELBO should plateau. Increasemax_epochsif it hasn't converged. - Adjust Model Complexity: The

n_latentparameter might be too low to capture the biological variance. Try increasing it (e.g., from 10 to 30). - Review Data Normalization: scVI expects raw counts. Do not log-transform or otherwise normalize your data before passing it to

scvi.model.SCVI.setup_anndata.

Q3: BBKNN integration returns a graph with disconnected components, making visualization misleading. How can I fix this?

A: This happens when the batch effect is so severe that neighborhoods cannot be built across batches with the current parameters.

- Primary Fix: Increase the

n_pcsparameter (e.g., from 10 to 50) to provide BBKNN with more biological signal to match on. - Secondary Fix: Adjust the

neighbors_within_batchparameter. Increasing it forces each cell to connect to more neighbors within its own batch, which can sometimes help bridge to other batches. - Tertiary Check: Ensure the input to BBKNN (the scVI latent representation) itself does not show completely separated batches. If it does, revisit scVI training.

Q4: What metrics should I use to quantitatively assess the success of my scVI+BBKNN integration for ATAC-seq data?

A: Use a combination of batch mixing and biological conservation metrics. Key quantitative assessments are summarized below:

| Metric | Purpose | Ideal Outcome | Tool/Source |

|---|---|---|---|

| kBET Acceptance Rate | Measures local batch mixing. | Higher score (closer to 1). | scanpy.external.pp.kBET |

| Graph Connectivity | Measures if cells from all batches form a connected graph. | Score of 1.0. | scanpy.pp.neighbors, then compute. |

| ASW (Batch) | Average Silhouette Width on batch labels. | Score close to 0 (negative indicates bad mixing). | scanpy.metrics.silhouette_score |

| ASW (Cell Type) | Average Silhouette Width on known cell type labels. | Preserved or increased after integration. | scanpy.metrics.silhouette_score |

| LISI Score | Local Inverse Simpson's Index for batch/cell type. | Batch LISI: low. Cell Type LISI: high. | scib.metrics.lisi_graph |

Q5: My scVI training is very slow. Are there ways to speed it up?

A: Yes, consider the following adjustments:

- Leverage GPU: Ensure

scvi-toolsis installed with PyTorch GPU support. Training on a GPU provides a significant speedup. - Reduce Data Size: Use highly variable peaks for integration (

scanpy.pp.highly_variable_genes). For very large datasets, consider cell filtering or training on a representative subset. - Adjust Training Parameters: Reduce

max_epochsif convergence is reached earlier. Increasebatch_sizeto the maximum your GPU memory allows.

Experimental Protocols

Protocol 1: Consensus Peak Set Generation

This protocol is critical for creating a unified feature space for integration.

- Input: Binary peak files (

.narrowPeakor.bed) from MACS2 or CellRanger-ATAC for each sample/batch. Merge Peaks: Use

bedtools mergeto combine all peaks from all samples into a non-redundant set.Create a Safe Peak Set: Filter out peaks in blacklisted genomic regions (e.g., ENCODE blacklist) using

bedtools intersect -v.- Re-quantify: Using tools like

featureCounts(from Subread) orMOODS(viachromvarin R), count fragments overlapping each consensus peak for every cell. This creates the unified count matrix.

Protocol 2: scVI + BBKNN Integration Workflow

A detailed step-by-step methodology for integration.

- Data Preparation: Load the unified count matrix into an

AnnDataobject (adata). Ensureadata.Xcontains raw counts. - Quality Control & Filtering: Filter cells based on unique fragment counts and nucleosome signal. Filter peaks based on minimum cell expression.

scVI Setup & Training:

BBKNN Graph Correction:

Downstream Analysis: Compute UMAP using the BBKNN graph (

sc.pp.neighbors,sc.tl.umap). Cluster cells (sc.tl.leiden).

Visualizations

Diagram 1: scVI+BBKNN Integration Workflow

Diagram 2: Batch Effect Correction Concept

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ATAC-seq Integration |

|---|---|

| Cell Ranger ATAC | Primary software for initial alignment, barcode processing, and peak calling of 10x Genomics scATAC-seq data. Provides the foundational count matrix. |

| SnapATAC2 / Signac | Alternative pipelines for processing scATAC-seq data from raw FASTQ files to count matrices, offering flexibility in peak calling and quality control. |

| scvi-tools (v1.0+) | The primary Python package implementing the scVI deep generative model. It learns a batch-invariant latent representation of single-cell data. |

| BBKNN | A graph-based algorithm that rapidly corrects the k-nearest neighbor graph to improve mixing across batches, operating on the latent space from scVI. |

| Scanpy | The fundamental ecosystem for single-cell analysis in Python. Used for data manipulation, visualization (UMAP), and clustering post-integration. |

| BedTools | Essential suite of command-line tools for genomic interval arithmetic. Critical for generating consensus peak sets from multiple samples. |

| ENCODE Blacklist | A curated set of genomic regions with anomalous signal in sequencing assays. Used to filter out unreliable peaks from the consensus set. |

| scIB / scMetrix | Toolboxes for computing standardized integration metrics (kBET, LISI, ASW) to quantitatively evaluate batch correction and biological conservation. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After integrating a new batch correction tool, my pre-alignment corrected data shows severe loss of signal in open chromatin regions during peak calling. What could be the cause? A: This is often due to over-correction. Pre-alignment methods like GC-content normalization or insert size filtering can be too aggressive. Verify the distribution of fragment lengths post-correction. A truncated distribution (e.g., all fragments forced to a narrow size range) removes biologically relevant nucleosome patterning. Recommended Action: Re-run the correction with milder parameters (e.g., a wider acceptable GC bias window) and compare the fragment length distribution plot against raw data.

Q2: When using post-alignment correction (e.g., on a consensus peak matrix), my t-SNE plot shows corrected batches, but differential accessibility analysis yields inflated p-values and no significant hits. How should I proceed? A: This indicates residual confounding where batch vectors are correlated with weak biological signals. Common with methods like ComBat or limma. Recommended Action: 1) Check for batch-cell type confounding using a PCA colored by batch and known cell labels. 2) If confounded, use a guided approach like Harmony or RUVseq with control peaks (e.g., housekeeping gene promoters) to anchor biological variation. Avoid unsupervised correction in this scenario.

Q3: My negative control samples (e.g., DNAse-free samples) show artifactual "peaks" after pre-alignment correction. What step is introducing this noise?

A: This points to contamination or an inappropriate correction assumption. Some sequence-based correction tools assume all data is true signal. Recommended Action: 1) Always include negative controls in the pipeline. 2) For pre-alignment, ensure adapter-trimming is performed before any other normalization. 3) Switch to a post-alignment strategy where negative controls can be used explicitly to model and subtract technical noise (e.g., using RUVseq's negative control genes option).

Q4: Integrating both pre- and post-alignment steps caused computational crashes due to memory overload. How can I optimize the workflow? A: Pre-alignment correction on raw FASTQ/BAM files is I/O and memory intensive. Chaining it directly to a post-alignment tool on a large matrix is inefficient. Recommended Action: Implement a modular pipeline with checkpoints. Use the following optimized workflow: 1. Pre-alignment correction on batches separately. 2. Align and call peaks per sample. 3. Create a consensus peak set and count matrix. 4. Save this matrix. Then apply post-alignment correction on this lighter object.

Experimental Protocols for Cited Methodologies

Protocol 1: Evaluating Pre-alignment GC Bias Correction with DeepTools

Objective: Assess and correct for GC-bias in ATAC-seq fragment data before alignment.

Steps:

1. Compute GC content: Use deepTools computeGCBias on your aligned BAM file (from uncorrected data) and a reference genome (e.g., hg38).

2. Generate Bias Plot: The tool outputs a plot of read count versus GC content. Observe the curve.

3. Correct Bias (Pre-alignment): Use a tool like ATACseqQC::gcnCorrection or Xiao et al.'s method to weight fragments in silico before re-aligning. Alternatively, use deepTools correctGCBias on the BAM file to create a corrected BAM.

4. Validation: Re-run computeGCBias on the corrected BAM. The curve should be flatter. Re-call peaks and compare peak counts in high-GC regions.

Protocol 2: Implementing Post-alignment Batch Correction with Harmony on Peak Matrices

Objective: Remove batch effects from a multi-batch ATAC-seq peak count matrix.

Steps:

1. Input: Create a consensus peak set (e.g., using DiffBind). Generate a raw count matrix (reads in peaks) for all samples across all batches.

2. Normalization: Perform library size normalization (e.g., CPM, TMM) and transformation (e.g., log2(CPM+1)).

3. PCA: Run PCA on the normalized matrix to confirm batch clustering.

4. Harmony Integration: In R, use RunHarmony() from the Harmony package, providing the PCA embedding and a batch metadata vector.

5. Embedding: Use the Harmony-corrected embeddings for downstream clustering (t-SNE/UMAP) and differential analysis. Crucially, perform differential testing using a linear model (e.g., in limma) that includes the Harmony-corrected coordinates as covariates to avoid over-correction.

Table 1: Performance Comparison of Pre-vs. Post-Alignment Correction Strategies

| Metric | Pre-Alignment Correction (e.g., GC/Insert Size) | Post-Alignment Correction (e.g., Harmony, ComBat) | No Correction |

|---|---|---|---|

| Primary Goal | Mitigate sequence/technical artifacts before signal generation. | Align sample distributions in feature space after signal generation. | Baseline |

| Batch Mixing (LISI Score)* | Low to Moderate (1.2 - 1.8) | High (1.8 - 2.5) | Low (1.0 - 1.2) |

| Signal Preservation | Risk of Over-correction (Loss of ~5-15% true peaks) | Risk of Under-correction if confounded | Full signal, maximal batch effect |

| Computational Load | Very High (processes raw/BAM data) | Moderate (processes count matrix) | Low |

| Best Use Case | Strong known technical bias (e.g., PCR duplicates, sequence bias). | Clear batch structure with known biological groups to preserve. | Single-batch experiments. |

*LISI (Local Inverse Simpson's Index): Higher score indicates better batch mixing. Ideal is near the number of batches.

Table 2: Tool-Specific Recommendations and Resource Requirements

| Tool Name | Correction Stage | Key Parameter to Tune | Essential Resource/Reagent |

|---|---|---|---|

| ATACseqQC (R) | Pre-Alignment | minFragment, maxFragment for size selection |

High-quality reference genome (e.g., GRCh38.p13) |

| DeepTools (correctGCBias) | Pre/Post-Alignment | effectiveGenomeSize |

Pre-computed GC bias file for organism |

| Harmony (R/Python) | Post-Alignment | theta (Diversity penalty). Higher = more correction. |

A robust consensus peak set (>50k peaks recommended) |

| sva/ComBat (R) | Post-Alignment | mod (Model matrix for biological covariates) |

Preliminary sample metadata specifying biological groups |

Visualizations

Diagram 1: ATAC-seq Correction Strategy Decision Workflow

Diagram 2: Modular Pipeline Integration Architecture

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ATAC-seq Batch Correction |

|---|---|

| Tn5 Transposase (Custom or Commercial) | Enzyme for tagmentation. Batch-to-batch variability in this reagent is a MAJOR source of batch effects. Use the same lot for an entire study. |

| Control Cell Line (e.g., K562, GM12878) | Biological reference sample processed in every batch. Essential for diagnosing batch effects and benchmarking correction efficacy. |

| PCR Indexing Primers (Dual-Indexed, Unique) | Critical for multiplexing. Avoid index hopping (use unique dual indexing) to prevent cross-batch sample contamination. |

| High-Fidelity PCR Polymerase | Used in library amplification. Minimizes PCR duplicate bias and sequence errors that can interfere with pre-alignment correction metrics. |

| SPRI Beads (for Size Selection) | Determines fragment size distribution. Consistent bead-to-sample ratio is vital for reproducible insert size profiles between batches. |

| External Spike-in DNA (e.g., E. coli DNA) | Can be added pre-tagmentation to quantitatively monitor and correct for technical variation in efficiency across batches. |

Navigating Pitfalls: Advanced Troubleshooting for Complex Batch Correction Scenarios

Technical Support Center: ATAC-seq Batch Effect Correction

Troubleshooting Guide: Common Issues and Resolutions

Issue T-01: Loss of Cell-Type-Specific Peaks After Correction

- Symptoms: A known, biologically validated cell-type-specific open chromatin region is no longer significant or detectable in differential analysis after applying batch correction.

- Diagnosis: Likely over-correction where a strong batch correction algorithm has mistakenly treated the biological signal of interest as a technical artifact.