Bridging the Data Divide: A 2024 Guide to Multi-Omics Harmonization for Biomarker Discovery and Precision Medicine


Abstract

Integrating genomics, transcriptomics, proteomics, and metabolomics promises revolutionary insights into human disease and drug response. However, combining these heterogeneous, high-dimensional datasets presents significant analytical and computational hurdles. This article provides a comprehensive framework for researchers and drug development professionals navigating multi-omics data harmonization. We explore the foundational challenges of technical and biological variation, detail current methodological approaches from batch correction to AI-driven integration, offer troubleshooting strategies for common pitfalls, and establish validation frameworks to assess integration quality. By synthesizing current best practices, this guide aims to equip scientists with the knowledge to unlock robust, reproducible biological insights from complex multi-omics studies, accelerating the path to clinically actionable discoveries.

Understanding the Multi-Omics Landscape: Core Challenges in Data Heterogeneity and Integration

Troubleshooting Guides & FAQs

Q1: After running my samples across two batches, my PCA shows strong batch clustering. How can I determine if this is due to technical variation or a true biological confounder (e.g., processing day correlated with treatment)?

A: Perform a correlation analysis between Principal Components (PCs) and metadata variables.

- Calculate the correlation (e.g., Pearson's r) between the first 5-10 PCs and all technical (batch, run date, reagent lot) and biological (disease status, age, treatment) metadata variables.

- Create a summary table. A strong correlation (|r| > 0.7) of early PCs with technical factors suggests a batch effect requiring harmonization.

Table: Correlation of Principal Components with Metadata

| Principal Component | Correlation with Batch ID | Correlation with Treatment | Correlation with Processing Date |

|---|---|---|---|

| PC1 (35% variance) | r = 0.89 | r = 0.12 | r = 0.85 |

| PC2 (22% variance) | r = 0.08 | r = 0.91 | r = 0.10 |

| PC3 (10% variance) | r = 0.05 | r = 0.03 | r = 0.04 |

Experimental Protocol: Metadata Correlation Analysis

- Input: Normalized feature matrix (e.g., gene counts, protein intensities), sample metadata table.

- PCA: Perform PCA on the feature matrix. Retain scores for the first N PCs (where N explains ~80% variance).

- Correlation Test: For each PC (dependent variable), run a linear model against each metadata variable. For categorical variables (e.g., Batch A/B), use ANOVA to obtain the coefficient of determination (R²).

- Visualization: Generate a heatmap of correlation coefficients (r or R²) for PCs vs. metadata.
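The protocol above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the helper names (`pca_scores`, `anova_r2`) and the toy data are hypothetical, PCA is done via SVD on centered features, and the ANOVA R² is computed directly as between-group over total sum of squares.

```python
import numpy as np

def pca_scores(X, n_components):
    """PC scores and explained-variance ratios for a samples x features matrix."""
    Xc = X - X.mean(axis=0)                      # center each feature
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    var_ratio = S ** 2 / np.sum(S ** 2)
    return scores, var_ratio[:n_components]

def anova_r2(pc, labels):
    """R^2 of a one-way ANOVA: between-group SS divided by total SS."""
    labels = np.asarray(labels)
    grand = pc.mean()
    ss_total = np.sum((pc - grand) ** 2)
    ss_between = sum(
        np.sum(labels == g) * (pc[labels == g].mean() - grand) ** 2
        for g in np.unique(labels)
    )
    return float(ss_between / ss_total)

# Hypothetical toy data: 20 samples, 50 features, batch balanced against treatment.
rng = np.random.default_rng(0)
batch = np.array(["A"] * 10 + ["B"] * 10)
treatment = np.array(["ctrl", "drug"] * 10)
X = rng.normal(size=(20, 50))
X[batch == "B", :25] += 3.0          # technical shift on half the features
X[treatment == "drug", 25:] += 2.0   # biological shift on the other half

scores, var_ratio = pca_scores(X, n_components=3)
r2_table = {
    f"PC{i + 1}": {
        "batch": anova_r2(scores[:, i], batch),
        "treatment": anova_r2(scores[:, i], treatment),
    }
    for i in range(3)
}
for pc, row in r2_table.items():
    print(pc, {k: round(v, 2) for k, v in row.items()})
```

The resulting `r2_table` is the numeric content of the heatmap described in the last step; continuous metadata (e.g., age) would use a Pearson correlation instead of the ANOVA R².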

Q2: My negative control samples show high variability in proteomics data. What are the key technical sources I should investigate?

A: High control variability often points to pre-analytical or instrumental issues. Follow this checklist:

- Sample Preparation: Inconsistent digestion time or temperature; variable protease activity.

- LC-MS System: Column degradation, contaminant buildup, or unstable electrospray ionization.

- Reagent Lot Shift: New lot of trypsin, buffers, or LC solvents introduced mid-experiment.

Experimental Protocol: Systematic QC for Proteomics Variability

- Run QC Samples: Inject a pooled sample (a mix of all individual samples) repeatedly at the start, middle, and end of the batch.

- Monitor Metrics: Track retention time drift, peak width, total ion current (TIC), and intensities of standard peptides.

- Analyze: Calculate the coefficient of variation (CV%) for QC sample features. A CV > 20% for high-abundance features in QCs indicates significant technical instability.

- Troubleshoot: If CV is high, perform system maintenance (column clean/change, source cleaning) and recalibrate.
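As a rough illustration of the "Analyze" step, the CV% check can be written in a few lines of Python. The peptide names and intensities below are made up for the example; only the 20% threshold comes from the protocol above.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical intensities of two features across four pooled-QC injections.
qc_intensities = {
    "peptide_A": [1000, 1020, 980, 1010],
    "peptide_B": [500, 700, 350, 620],
}

cv = {feature: cv_percent(vals) for feature, vals in qc_intensities.items()}
unstable = [feature for feature, value in cv.items() if value > 20.0]

for feature, value in cv.items():
    print(f"{feature}: CV = {value:.1f}%")
print("Features above the 20% threshold:", unstable)
```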

Q3: When using ComBat for batch correction, my p-value distributions become inflated. Am I over-correcting and removing biological signal?

A: Yes, this is a classic sign of over-harmonization. ComBat's "empirical Bayes" approach can be aggressive. To diagnose:

- Check the distribution of p-values from a differential analysis before and after ComBat. A shift towards a uniform distribution (flat histogram) is expected. P-values piling up near 1.0 (a left-skewed histogram) indicate loss of signal.

- Use spike-in controls or known positive/negative biological markers to assess signal retention.

Experimental Protocol: Validating Harmonization with Known Truths

- Establish Ground Truth: Identify a set of features known to be differentially expressed (DE) and non-DE based on prior studies or spike-in controls.

- Apply Harmonization: Run your chosen algorithm (e.g., ComBat, limma's `removeBatchEffect`) on the full dataset.

- Perform DE Analysis: Conduct the same statistical test (e.g., t-test, DESeq2) on pre- and post-harmonized data.

- Evaluate: Calculate the sensitivity (recall of known DEs) and specificity (correct identification of non-DEs). Optimal harmonization maximizes both.
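The "Evaluate" step reduces to a confusion-matrix calculation. The sketch below assumes you already have the list of features called DE by your test; the function name and the gene labels (`g1` to `g8`) are placeholders for illustration.

```python
def sensitivity_specificity(called_de, known_de, known_non_de):
    """Recall of known DE features and correct rejection of known non-DE features."""
    called = set(called_de)
    known_de, known_non_de = set(known_de), set(known_non_de)
    tp = len(called & known_de)        # known DE, called DE
    fn = len(known_de - called)        # known DE, missed
    fp = len(called & known_non_de)    # known non-DE, wrongly called
    tn = len(known_non_de - called)    # known non-DE, correctly not called
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical ground truth and DE calls before/after harmonization.
known_de = ["g1", "g2", "g3", "g4"]
known_non_de = ["g5", "g6", "g7", "g8"]
calls_pre = ["g1", "g2", "g5", "g6"]
calls_post = ["g1", "g2", "g3", "g5"]

pre = sensitivity_specificity(calls_pre, known_de, known_non_de)
post = sensitivity_specificity(calls_post, known_de, known_non_de)
print("pre-harmonization  (sens, spec):", pre)
print("post-harmonization (sens, spec):", post)
```

A harmonization step that lowers sensitivity relative to the pre-correction calls is a warning sign of over-correction.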

Q4: In single-cell RNA-seq, should I integrate datasets by patient or by sequencing batch? The two are confounded.

A: This is a core harmonization challenge. The consensus is to correct for technical batch first, while protecting patient-specific biology. Use methods designed for this, such as Harmony or scVI's batch_key and labels_key parameters, which can model both. Do not use patient ID as the batch covariate, as this will remove all inter-patient biological variation.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Multi-omics Harmonization Studies

| Item | Function in Harmonization |

|---|---|

| Commercial Reference RNA (e.g., ERCC Spike-Ins) | Exogenous controls added to samples pre-processing to quantify technical variation in transcriptomics. |

| Pooled QC Sample | An aliquot created by combining small volumes of all test samples; run repeatedly to monitor and correct for instrumental drift. |

| Bridged Samples | A set of identical samples divided and processed across all batches/centers to directly measure batch effects. |

| Processed Data Standards (e.g., SDRF files) | Standardized templates for experimental metadata ensure consistent annotation, which is critical for identifying variation sources. |

| Multi-omics Internal Standard (e.g., labeled peptides, isotopically labeled metabolites) | Added to samples for mass spectrometry-based assays to correct for run-to-run sensitivity fluctuations. |

Visualizations

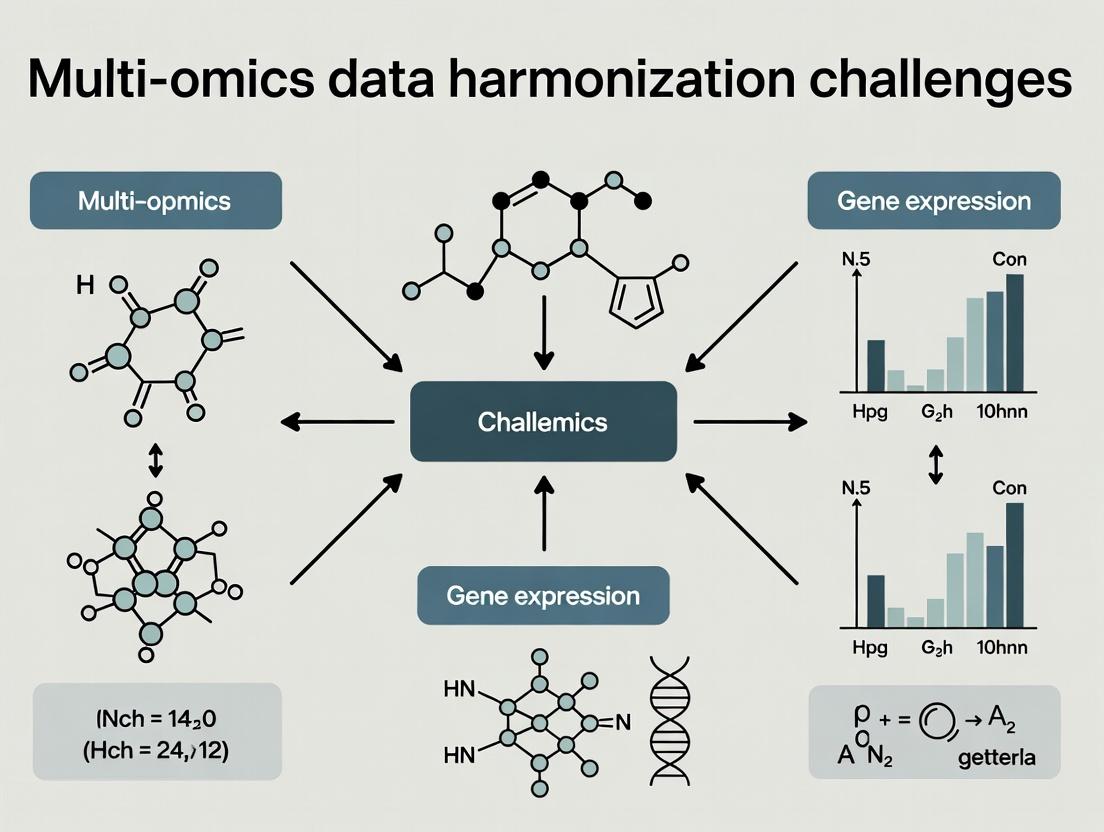

Diagram 1: Core harmonization challenge: separating technical from biological variance.

Diagram 2: Standard workflow for diagnosing and addressing batch effects.

Technical Support Center: Troubleshooting Batch Effects in Multi-omics Experiments

This support center provides solutions for researchers addressing batch effect challenges within multi-omics data harmonization projects. The following FAQs and guides address common experimental issues.

Frequently Asked Questions (FAQs)

Q1: After integrating RNA-seq data from two different sequencing platforms (e.g., Illumina NovaSeq vs. HiSeq), we observe strong clustering by platform, not by biological condition. What is the primary cause and how can we diagnose it?

A1: This is a classic platform batch effect. Key technical sources include differences in read length, base-calling algorithms, and flow-cell chemistry. Diagnose by performing a Principal Component Analysis (PCA) on the normalized count data, coloring samples by platform. Table 1 below lists common platform differences.

Q2: Our proteomics data, generated across two labs using the same mass spectrometer model, shows significant variability in protein quantification. What protocol steps are most likely responsible?

A2: Lab-specific protocol variations are the likely source. Critical steps include sample lysis method, protein digestion time/temperature, choice of trypsin lot, peptide clean-up kit, and LC column aging. Standardizing these steps using a detailed SOP is essential.

Q3: How can we determine if observed variation in our metabolomics data is due to a true biological signal or a batch effect from sample processing date?

A3: Use statistical batch effect detection. Include "processing batch" as a covariate in a linear model (e.g., limma package in R) for each metabolite. A significant batch term for a large proportion of metabolites indicates a strong processing batch effect. Visualization through a PCA plot colored by batch is also critical.

Q4: What are the most effective wet-lab strategies to prevent batch effects before data generation in a multi-omics study?

A4: The core strategy is randomization and balancing. Randomize sample processing order across experimental groups. If processing in multiple batches, ensure each batch contains a balanced representation of all biological groups. Always include technical control samples (e.g., reference cell line aliquots) in every batch.
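A minimal Python sketch of balanced, randomized batch assignment follows. The function name `balanced_batches`, the sample IDs, and the group labels are hypothetical; the approach (shuffle within each group, deal samples round-robin across batches, then shuffle run order within each batch) is one simple way to implement the idea, not the only one.

```python
import random
from collections import defaultdict

def balanced_batches(sample_groups, n_batches, seed=0):
    """Spread each biological group evenly over batches, then randomize run order."""
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)

    batches = [[] for _ in range(n_batches)]
    offset = 0  # rotates the starting batch so leftovers do not pile up in batch 0
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)
        for i, sample in enumerate(members):
            batches[(offset + i) % n_batches].append(sample)
        offset = (offset + len(members)) % n_batches

    for batch in batches:
        rng.shuffle(batch)  # randomize processing order within the batch
    return batches

# Hypothetical study: 6 cases and 6 controls processed in 2 batches.
samples = {f"S{i:02d}": ("case" if i < 6 else "control") for i in range(12)}
batches = balanced_batches(samples, n_batches=2)
for number, batch in enumerate(batches, start=1):
    print(f"Batch {number}:", [(s, samples[s]) for s in batch])
```

Technical control aliquots are not part of this sketch; they would be added to every batch after assignment.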

Troubleshooting Guides

Guide 1: Diagnosing Platform-Specific Batch Effects in Genomic Data

- Objective: Identify and quantify technical variation introduced by different analytical platforms.

- Protocol:

- Data Input: Start with normalized, but not batch-corrected, feature matrices (e.g., gene counts, peak intensities).

- Exploratory Visualization: Generate a PCA plot (for high-dimensional data) or a boxplot of per-sample summary statistics (e.g., library size, median intensity) grouped by platform.

- Statistical Test: Perform PERMANOVA (using the `vegan` package in R) on the sample distance matrix with `Platform` as a factor. A significant p-value (<0.05) indicates a platform effect.

- Quantification: Calculate the percentage of total variance explained by the `Platform` factor in the first 5 principal components.
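For readers working outside R, the statistical test in this guide can be approximated with a small NumPy implementation of one-factor PERMANOVA. This is a simplified sketch, not a substitute for `vegan`: it handles a single factor, uses Euclidean distances on toy data, and the function names and the simulated platform shift are assumptions made for illustration.

```python
import numpy as np

def pseudo_f(dist, labels):
    """One-factor PERMANOVA pseudo-F and R^2 from a square distance matrix."""
    labels = np.asarray(labels)
    n = len(labels)
    groups = np.unique(labels)
    d2 = dist ** 2
    ss_total = d2[np.triu_indices(n, k=1)].sum() / n
    ss_within = 0.0
    for g in groups:
        idx = np.where(labels == g)[0]
        sub = d2[np.ix_(idx, idx)]
        ss_within += sub[np.triu_indices(len(idx), k=1)].sum() / len(idx)
    ss_between = ss_total - ss_within
    f = (ss_between / (len(groups) - 1)) / (ss_within / (n - len(groups)))
    return f, ss_between / ss_total

def permanova(dist, labels, n_perm=999, seed=0):
    """Permutation p-value: share of label shuffles with pseudo-F >= observed."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    f_obs, r2 = pseudo_f(dist, labels)
    hits = sum(
        pseudo_f(dist, rng.permutation(labels))[0] >= f_obs for _ in range(n_perm)
    )
    return f_obs, r2, (hits + 1) / (n_perm + 1)

# Hypothetical data: 12 samples x 30 features with a shift on one platform.
rng = np.random.default_rng(1)
platform = np.array(["NovaSeq"] * 6 + ["HiSeq"] * 6)
X = rng.normal(size=(12, 30))
X[platform == "HiSeq"] += 3.0
dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)  # Euclidean distances

f_stat, r2, p_value = permanova(dist, platform)
print(f"pseudo-F = {f_stat:.1f}, R2 = {r2:.2f}, p = {p_value:.3f}")
```

The R² returned here is the share of total distance-based variance attributable to platform, which complements the per-PC quantification in the final step.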

Guide 2: Correcting for Lab-Specific Protocol Biases in Proteomics

- Objective: Harmonize data generated across multiple labs prior to integrative analysis.

- Protocol:

- Reference Sample Design: Each lab must process a common aliquot of a universal reference sample (e.g., a standard cell line digest) in tandem with their experimental samples.

- Data Alignment: For each lab, calculate the median log2-intensity for each protein in the reference samples.

- Adjustment: Apply a linear adjustment (e.g., using ComBat or mean-centering) to the experimental samples from each lab so that the reference sample profiles align across labs.

- Validation: Confirm that post-correction, the reference samples cluster together in a PCA, separate from the experimental conditions.
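The alignment and adjustment steps can be sketched as a per-protein shift that brings each lab's reference medians onto a common target. This is a minimal mean-centering variant under stated assumptions (log2 intensities, one or more reference injections per lab, purely additive lab effects); the function name and the numbers are hypothetical, and ComBat would model the problem differently.

```python
import numpy as np

def align_to_reference(log_intensity, is_reference, lab):
    """Shift each lab so its per-protein reference medians match the cross-lab mean."""
    log_intensity = np.asarray(log_intensity, dtype=float)  # samples x proteins
    is_reference = np.asarray(is_reference, dtype=bool)
    lab = np.asarray(lab)
    labs = np.unique(lab)

    # Median log2 intensity of the reference samples, per lab and protein.
    ref_medians = np.vstack([
        np.median(log_intensity[(lab == name) & is_reference], axis=0)
        for name in labs
    ])
    target = ref_medians.mean(axis=0)  # common anchor profile

    corrected = log_intensity.copy()
    for name, med in zip(labs, ref_medians):
        corrected[lab == name] += target - med  # additive shift on the log scale
    return corrected

# Hypothetical log2 intensities: 2 labs, 1 reference + 2 experimental samples each.
lab = np.array(["A", "A", "A", "B", "B", "B"])
is_reference = np.array([True, False, False, True, False, False])
log_intensity = np.array([
    [20.0, 15.0], [21.0, 14.0], [19.5, 15.5],
    [22.0, 14.0], [23.5, 13.0], [21.0, 14.5],
])

corrected = align_to_reference(log_intensity, is_reference, lab)
print(corrected)
```

After correction the two reference rows are identical, which is the numerical counterpart of the PCA check in the validation step.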

Table 1: Common Platform-Specific Technical Variations in Sequencing

| Platform Type | Typical Read Length | Base Calling System | Common Artifact Sources |

|---|---|---|---|

| Illumina HiSeq 2500 | 50-125 bp | Real Time Analysis (RTA) | Declining quality over later cycles |

| Illumina NovaSeq 6000 | 50-150 bp | NovaSeq Control Software | Intensity crosstalk between clusters |

| Ion Torrent S5 | 200-400 bp | Torrent Suite | Homopolymer indel errors |

| PacBio Sequel II | 10-25 kb HiFi | SMRT Link | Random sequencing errors |

Table 2: Statistical Impact of Batch Effects in a Simulated Multi-omics Study

| Omic Layer | % Features with Significant Batch Effect (p<0.01) | Avg. % Variance Explained by Batch | Recommended Correction Method |

|---|---|---|---|

| Transcriptomics (RNA-seq) | 35-60% | 15-40% | ComBat-seq, limma removeBatchEffect |

| Proteomics (LFQ) | 50-70% | 20-50% | ComBat, Reference Sample Alignment |

| Metabolomics (LC-MS) | 40-75% | 25-60% | QC-RLSC, Batch Normalizer |

| Methylation (Array) | 20-40% | 10-30% | Functional normalization (R minfi) |

Experimental Protocols

Protocol: Reference Sample-Based Harmonization for Cross-Lab Transcriptomics

- Objective: Generate comparable gene expression data from samples processed in two different laboratories (Lab A & B).

- Materials: See "The Scientist's Toolkit" below.

- Method:

- Sample Allocation: Distribute aliquots of all biological samples and a universal reference standard (e.g., ERCC Spike-In Mix or commercial cell line RNA) to both labs.

- Randomized Processing: Each lab processes their set of samples in a fully randomized order within a single batch on their local platform (e.g., Lab A: NovaSeq 6000; Lab B: HiSeq 4000).

- Local Analysis: Each lab performs read alignment and gene quantification using a shared bioinformatics pipeline (e.g., `STAR` -> `featureCounts`).

- Data Submission: Labs submit raw count matrices to a central analyst.

- Harmonization: The analyst performs cross-platform normalization and batch correction using the reference sample profiles as anchors (see Troubleshooting Guide 2).

Protocol: Systematic Evaluation of DNA Extraction Kit Bias in Metagenomics

- Objective: Quantify the batch effect introduced by different DNA extraction kits on microbial community profiles.

- Method:

- Sample Splitting: Start with 10 homogenized stool or soil samples.

- Parallel Extraction: Split each sample into 3 aliquots. Extract DNA from each aliquot with a different kit, so every sample is processed once per kit (e.g., Kit Q, Kit M, Kit P).

- Library Prep & Sequencing: Perform 16S rRNA gene amplicon sequencing (V4 region) for all 30 extracts in a single, randomized sequencing run on one platform to isolate the kit effect.

- Analysis: Process sequences through DADA2. Perform PERMANOVA on Bray-Curtis distances with `Sample_ID` and `Extraction_Kit` as factors. The variance explained by `Extraction_Kit` quantifies the kit-induced batch effect.

Diagrams

Platform Batch Effect Workflow

Multi-Lab Data Harmonization Flow

The Scientist's Toolkit: Key Reagent Solutions for Batch Effect Mitigation

| Item | Function in Mitigating Batch Effects |

|---|---|

| Universal Reference Standard (e.g., ERCC RNA Spike-In Mix, Commercial Cell Line Lysate) | Provides an identical technical signal across batches/labs for alignment and normalization. |

| Balanced Block Randomization Template | Ensures samples from all experimental groups are evenly distributed across processing batches to avoid confounding batch with biology. |

| Single-Lot Critical Reagents (Trypsin, Columns, Kits) | Using one manufacturer lot for an entire study eliminates variability introduced by inter-lot reagent differences. |

| Internal Standard Mix (for MS-based assays) | Spiked into each sample prior to processing to correct for run-to-run instrument variability. |

| Pooled QC Sample | An aliquot created by combining small amounts of all study samples, run repeatedly throughout the batch to monitor drift. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During integrative multi-omics analysis, my genomic variants (SNPs) and metabolite abundance matrices have vastly different dimensions (e.g., 1 million SNPs vs. 500 metabolites). How do I preprocess this data to avoid technical artifacts dominating the integration?

A: This is a classic scale mismatch. The key is dimensionality reduction and normalization tailored to each data type.

- For Genomic Data (High-Dimensional): Apply linkage disequilibrium (LD) pruning to reduce redundant SNPs. Follow this with a Principal Component Analysis (PCA) to capture population structure in a lower-dimensional space (e.g., 20-100 PCs).

- For Metabolomic Data (Low-Dimensional): Use a variance-stabilizing transformation (e.g., log2) followed by quantile normalization to reduce between-sample technical differences and bring the distribution closer to normality.

- Integration Protocol: Use methods like MOFA+ or DIABLO which are designed for disparate dimensionalities. Center and scale all features to unit variance after the omics-specific preprocessing.

Q2: I am trying to map metabolites from my LC-MS run to known metabolic pathways, but the identifiers (e.g., KEGG, HMDB) are inconsistent, and coverage is low (~40%). What steps can I take to improve mapping and functional interpretation?

A: This is an identifier/annotation mismatch issue.

- Protocol for Metabolite Identifier Harmonization:

- Gather All Annotations: Compile all available identifiers from your LC-MS platform (m/z, RT, adducts) and database searches.

- Use a Bridge Database: Employ tools like `MetaboAnalyst` or the `Chemical Translation Service` (CTS) API to cross-reference identifiers (e.g., from PubChem CID to KEGG).

- Prioritize High-Confidence Matches: Use tandem MS (MS/MS) spectral matching scores (e.g., Dot Product > 0.8) to select the most reliable identifier for each feature.

- Pathway Analysis: Use the harmonized identifier list in tools that support multiple ID types, such as `MetaboAnalyst` or `PathVisio`, which have built-in ID translation.

Q3: When correlating transcriptomic and proteomic data from the same samples, the expected concordance is poor (Pearson r < 0.3). What are the main technical and biological causes, and how can I diagnose them?

A: Poor transcript-protein correlation is expected due to post-transcriptional regulation. Diagnose technical issues first.

- Troubleshooting Checklist:

- Sample Timing: Ensure paired samples were collected simultaneously. Protein turnover rates cause lag effects.

- Platform Alignment: Verify gene identifiers are consistently mapped (e.g., Ensembl Gene ID for both).

- Data Quality: Check proteomic coverage depth. A low number of quantified proteins (<5000) may miss key players.

- Normalization: Apply correct, platform-specific normalization (e.g., TMM for RNA-seq, median normalization for proteomics).

- Experimental Protocol for Paired Analysis: To improve alignment, perform a targeted proteomic experiment (SRM/MRM) for proteins corresponding to the top differentially expressed transcripts to validate the relationship.

Q4: My multi-omics dataset has missing values, especially in the metabolomics and proteomics blocks. What are the best practices for imputation without introducing massive bias before integration?

A: Use data-type-specific imputation.

- Imputation Methodology Table:

| Data Type | Recommended Imputation Method | Rationale | Key Parameter |

|---|---|---|---|

| Metabolomics (LC-MS) | k-Nearest Neighbors (k-NN) or Random Forest (MissForest) | Accounts for correlation structure between metabolites. | k=10 for k-NN; 100 trees for MissForest. |

| Proteomics | MinProb (random draws from a down-shifted Gaussian) or `imp4p` R package | Handles missing-not-at-random (MNAR) values common in proteomics. | Quantile = 0.01, tail strength = 1.65. |

| Transcriptomics (RNA-seq) | SVD-based imputation (e.g., `bcv` package) or KNN. | Low proportion of missing data, often missing at random (MAR). | k=10, center/scale data first. |

- Critical: Perform imputation separately on each omics dataset before integration. Never impute a concatenated matrix.

Data Presentation

Table 1: Characteristic Scale and Dimension of Major Omics Modalities

| Omics Layer | Typical Features per Sample | Measurement Scale | Dynamic Range | Common Identifier System |

|---|---|---|---|---|

| Genomics (WGS SNPs) | 3 - 5 million | Count (0,1,2) / Allele Frequency | Low (0-2) | rsID, Genomic Coordinates (GRCh38) |

| Transcriptomics (RNA-seq) | 20,000 - 60,000 | Continuous (Counts, FPKM/TPM) | ~10^5 | Ensembl Gene ID, RefSeq Accession |

| Proteomics (Shotgun LC-MS) | 3,000 - 10,000 | Continuous (Intensity, LFQ) | ~10^6 | UniProt Accession, Gene Symbol |

| Metabolomics (LC-MS) | 200 - 1,000 | Continuous (Peak Intensity) | ~10^8 | HMDB ID, KEGG Compound ID, m/z+RT |

| Epigenomics (ChIP-seq) | ~500,000 peaks | Continuous (Peak Enrichment) | ~10^3 | Genomic Coordinates (GRCh38) |

Table 2: Quantitative Impact of Dimensionality Reduction on Integration Performance

| Preprocessing Method | Input Dimension (e.g., SNPs) | Output Dimension | Resulting Integration Concordance* (r) | Computational Time Savings |

|---|---|---|---|---|

| None (Raw Data) | ~1,000,000 | ~1,000,000 | 0.15 +/- 0.08 | Baseline (1x) |

| LD Pruning (r²<0.1) | ~1,000,000 | ~150,000 | 0.18 +/- 0.07 | ~3x Faster |

| LD Pruning + PCA (50 PCs) | ~1,000,000 | 50 | 0.45 +/- 0.10 | ~50x Faster |

| Feature Selection (p-value <1e-5) | ~1,000,000 | ~500 | 0.55 +/- 0.12 | ~20x Faster |

*Concordance measured as canonical correlation between omics layers in a simulated dataset.

Experimental Protocols

Protocol 1: Cross-Platform Identifier Harmonization for Pathway Mapping

Objective: To unify identifiers from genomic, transcriptomic, and metabolomic datasets for joint pathway enrichment analysis.

- Extract Identifiers: From your processed data tables, create lists of: Gene Symbols (from RNA-seq), SNP rsIDs (from GWAS), and metabolite common names (from metabolomics).

- Use Bioconductor/MetaboAnalystR:

- For genes/SNPs: Use the `biomaRt` R package to map all identifiers to Entrez Gene ID and official Gene Symbol.

- For metabolites: Use the `MetaboAnalystR` `Setup.MapData` function with the "HMDB" or "KEGG" option.

- Create a Master Mapping File: Build a table with columns: `Original_ID`, `Omics_Layer`, `Entrez_Gene_ID` (if applicable), `KEGG_Compound_ID` (if applicable), `Common_Name`.

- Pathway Enrichment: Submit the unified Entrez and KEGG Compound ID lists to the `PGSEA` R package or the web-based `MetaboAnalyst` joint pathway analysis module.

Protocol 2: Multi-omics Data Fusion Using MOFA+

Objective: To integrate multiple omics datasets with different scales and dimensions into a unified latent factor model.

- Data Preparation: Format each omics dataset (e.g., RNA-seq, Proteomics, Metabolomics) into a features x samples matrix (features as rows, samples as columns, the layout `create_mofa()` expects for a list of matrices). Ensure sample order is identical. Apply modality-specific normalization and scaling (z-scoring per feature is recommended).

- Create MOFA Object: In R, use the `create_mofa()` function, passing a named list of your matrices.

- Model Training: Set training options (`TrainOptions`), model options (`ModelOptions`), and data options (`DataOptions`). The key setting is `scale_views = TRUE`. Run training with `run_mofa()`.

- Downstream Analysis: Extract factors (`get_factors()`), inspect variance decomposition (`plot_variance_explained()`), and identify key drivers per view and factor (`get_weights()`).

Visualizations

Title: Multi-omics Data Harmonization and Integration Workflow

Title: From SNP to Metabolite: A Multi-omics Regulatory Cascade

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-omics Harmonization |

|---|---|

| Universal Reference RNA/Protein | Provides an inter-platform calibration standard for transcriptomic and proteomic assays, enabling technical batch effect correction. |

| Stable Isotope Labeled Metabolite Standards | Spike-in controls for absolute quantification in mass spectrometry-based metabolomics, allowing cross-study data merging. |

| Multiplexed Protein Assay Kits (e.g., Olink, SomaScan) | Enables high-throughput, targeted proteomics from minimal sample volume, improving alignment with transcriptomic depth. |

| DNA/RNA/Protein Co-isolation Kits | Allows extraction of multiple analytes from a single biological specimen (e.g., AllPrep), ensuring perfect sample pairing for integration. |

| Indexed Sequencing Adapters (Unique Dual Indexes) | Enables pooled sequencing of multi-omic libraries (e.g., RNA-seq and ATAC-seq) on the same flow cell, reducing lane effects. |

| Cross-linking Mass Spectrometry Reagents | Captures protein-metabolite or protein-protein interactions, providing mechanistic data to bridge molecular layers. |

| Synthetic Spike-in RNAs (e.g., ERCC) | Added to RNA-seq libraries to construct non-linear normalization curves, improving accuracy for low-abundance transcripts. |

Troubleshooting Guides & FAQs

FAQ 1: What are the primary sources of missing data in a multi-omics integration workflow?

Missing data arises from technical and biological sources. Technically, limits of detection (LOD) and instrument sensitivity variations cause Missing Not At Random (MNAR) data, particularly in proteomics and metabolomics, while sporadic sample processing failures typically produce Missing Completely At Random (MCAR) or Missing At Random (MAR) data. Biologically, genuine absence of a molecule (e.g., a non-mutated gene, unexpressed protein) yields abundance-dependent missingness that behaves like MNAR. Integration exacerbates sparsity, as a sample present in one assay (e.g., transcriptomics) may be missing in another (e.g., metabolomics).

FAQ 2: My matrix has >20% missing values after LC-MS/MS proteomics. Should I impute or remove these features?

The decision depends on the mechanism and your downstream analysis. See the quantitative summary below.

Table 1: Guidelines for Handling High Missingness in Proteomics Data

| Missingness Rate | Suggested Action | Common Method | Key Consideration |

|---|---|---|---|

| <5% | Remove features/samples | Complete-case analysis | Minimal information loss. |

| 5% - 20% | Impute features | K-Nearest Neighbors (KNN), Bayesian PCA | Assess pattern (MNAR vs MCAR). KNN is good for MAR. |

| >20% (Likely MNAR) | Use MNAR-specific imputation or model-based approaches | MinProb, QRILC, or Mixed Models (e.g., nlme) | Methods like MinProb down-shift normal distributions for left-censored data. |

| Systematic across samples | Investigate batch effect or sample quality | Remove the batch/sample | Check QC metrics before any imputation. |

Experimental Protocol for Assessing Missingness Mechanism (MAR vs MNAR):

- Construct a Presence/Absence Matrix: For your data matrix, create a binary matrix where 1 indicates a measured value and 0 indicates missing.

- Log-transform and Scale: Log-transform the observed (non-missing) values and perform z-scoring.

- Two-Way ANOVA: Using the presence/absence matrix, compute the missingness rate per feature within each sample group, then perform a two-way ANOVA on these rates with factors for `Sample Group` and `Feature Abundance Level` (e.g., median-derived quartiles).

- Interpretation: A significant p-value for the `Feature Abundance Level` factor suggests the missingness depends on the underlying abundance (MNAR). If only `Sample Group` is significant, it may be MAR.

- Visualization: Use a density plot of observed values vs. a shifted distribution to visualize left-censoring (MNAR).
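As a quick first look before any formal test, one can tabulate the missing fraction per abundance quartile. The sketch below is a descriptive shortcut rather than the ANOVA itself; the function name and the simulated left-censored data (a hard detection limit at log2 intensity 17) are assumptions made for illustration.

```python
import numpy as np

def missing_rate_by_abundance_quartile(X):
    """Mean missing fraction of features, grouped by quartile of observed median.

    X is a samples x features matrix on a log scale with np.nan for missing values.
    """
    X = np.asarray(X, dtype=float)
    present = ~np.isnan(X)                    # presence/absence matrix
    miss_rate = 1.0 - present.mean(axis=0)    # per-feature missing fraction
    med = np.nanmedian(X, axis=0)             # per-feature median of observed values
    edges = np.quantile(med, [0.25, 0.5, 0.75])
    quartile = np.digitize(med, edges)        # 0 = lowest abundance, 3 = highest
    return np.array([miss_rate[quartile == q].mean() for q in range(4)])

# Hypothetical left-censored data: 30 samples x 40 features, values below 17 are lost.
rng = np.random.default_rng(0)
true_means = np.linspace(18, 26, 40)
X = rng.normal(loc=true_means, scale=1.0, size=(30, 40))
X[X < 17.0] = np.nan

rates = missing_rate_by_abundance_quartile(X)
for q, rate in enumerate(rates, start=1):
    print(f"Abundance quartile {q}: {100 * rate:.1f}% missing")
```

A missing fraction that falls steadily from the lowest to the highest quartile is the pattern expected under left-censoring (MNAR); a flat profile is more consistent with MCAR.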

FAQ 3: How do I handle "block-wise" missingness when samples are missing entire omics layers?

This is a core challenge in multi-omics harmonization. Simple row-wise deletion leads to catastrophic sample loss. Preferred methods include:

- Multi-Omic Factor Analysis (MOFA): A Bayesian framework that learns a common latent factor space from all available data, tolerating block-wise missingness.

- Integrative Imputation: Use methods like Multi-Omics Imputation (MOMI) or kernel-based imputation that leverage correlations across omics layers to impute entire missing blocks.

Experimental Protocol for Imputation with MOFA2 (R package):

- Data Preparation: Format each omics dataset (e.g., mRNA, methylation) as a `matrix` with matched samples as columns and features as rows. Samples missing a layer should be represented by `NA`-filled columns so that every matrix has the same sample dimension.

- Create MOFA object: `MOFAobject <- create_mofa(data_list)`

- Set Data Options: `DataOptions <- get_default_data_options(MOFAobject); DataOptions$scale_views <- TRUE`

- Set Model Options: `ModelOptions <- get_default_model_options(MOFAobject); ModelOptions$num_factors <- 10`

- Set Training Options: `TrainOptions <- get_default_training_options(MOFAobject); TrainOptions$convergence_mode <- "slow"`

- Train Model: `MOFAobject.trained <- prepare_mofa(MOFAobject, data_options = DataOptions, model_options = ModelOptions, training_options = TrainOptions) %>% run_mofa`

- Extract Imputed Data: Call `impute()` on the trained model; the imputed values are then stored in the model object and can be extracted (e.g., with `get_imputed_data()`) for downstream complete-case analysis.

FAQ 4: Does sparsity in single-cell RNA-seq (scRNA-seq) data require different handling than bulk omics missingness?

Yes. scRNA-seq "dropouts" (zero counts from failed mRNA capture) are a severe, feature-dependent MNAR problem. Do not use standard imputation.

- Use Methods Designed for Dropouts: MAGIC (diffusion-based imputation), scImpute (statistical model to identify and impute dropouts), or deep learning models (e.g., DCA, scVI).

- Protocol Choice: For clustering, consider algorithms (e.g., Seurat) that internally handle dropouts. For integration with other single-cell omics (e.g., scATAC-seq), use multi-view methods like Weighted Nearest Neighbors (WNN) or UnionCom.

Diagram: Decision Workflow for Multi-Omics Missing Data

Diagram: Missing Data Gaps in the Omics Cascade

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Managing Missing Data Experiments

| Item | Function in Context of Missing Data/Sparsity |

|---|---|

| Quality Control Spike-Ins (e.g., ERCC for RNA, UPS2 for Proteomics) | Distinguish technical zeros (dropouts) from biological zeros by providing a known abundance gradient to model detection limits. |

| Internal Standards (Stable Isotope Labeled, e.g., for Metabolomics) | Correct for batch variation and signal drift, allowing more accurate detection of low-abundance features and defining a missingness threshold. |

| Single-Cell Multiplexing Kits (e.g., CellPlex, Hashtag Antibodies) | Reduces batch effects—a major source of structured missingness—by pooling samples prior to scRNA-seq processing. |

| Proteomics Fractionation Kits (e.g., High-pH RP, SCX) | Reduces missing values in LC-MS/MS by decreasing sample complexity, increasing the depth of quantification and lowering the limit of detection. |

| DNA/RNA/Protein Preservation Reagents (e.g., RNAlater, PAXgene) | Preserves sample integrity from collection, preventing degradation-related missing data that compounds in later omics layers. |

| Cross-linking Reagents (e.g., formaldehyde, DSG) | For multi-omics assays like ATAC-seq or ChIP-seq, improves signal-to-noise, reducing spurious zeros in chromatin accessibility data. |

Technical Support Center: Multi-Omics Data Harmonization

Frequently Asked Questions (FAQs)

Q1: Why do my transcriptomic and proteomic data from the same sample set show poor correlation, even after standard normalization?

A: This is a common symptom of the "perfect alignment myth." Biological systems incorporate regulatory layers (e.g., post-transcriptional modification, protein turnover) that inherently decouple mRNA and protein abundance. Technical factors like differing assay sensitivities (RNA-Seq depth vs. MS detection limits) and batch effects unique to each platform further misalign measurements. Perfect 1:1 correlation is biologically unrealistic.

Q2: How do I handle missing values when integrating metabolomic and genomic datasets?

A: Missing values are endemic in multi-omics. The approach must be hypothesis-driven. For metabolomics, missing data often indicates levels below the limit of detection (LOD). Options include: 1) Imputation using methods like k-NN or MissForest, tailored to the left-censored nature of MS data. 2) Using a binary presence/absence matrix for certain network analyses. Never use simple mean imputation across platforms.

Q3: What is the biggest source of "misalignment" in cross-platform integrative analysis?

A: According to recent literature, batch effects and platform-specific technical variation account for a substantial portion of observed misalignment, often overshadowing biological signal if uncorrected. A 2023 review indicated that for a typical multi-omics study, over 30% of the variance in a given dataset can be attributable to non-biological technical factors.

Q4: Can AI/ML models overcome harmonization challenges and achieve "perfect" integration?

A: No. While deep learning models (e.g., autoencoders, multimodal networks) are powerful for learning joint representations, they do not create perfect alignment. They model complex, non-linear relationships but are constrained by the same fundamental data limitations: noise, missingness, and biological asynchronicity. They are tools for approximation within a reference framework, not a solution to the myth.

Troubleshooting Guides

Issue: Failed Integration Using Generalized Linear Models

- Symptoms: Model non-convergence, extreme coefficient values, poor predictive performance on validation set.

- Potential Causes & Solutions:

- Cause 1: Severe multi-collinearity within and between omics layers.

- Solution: Apply dimensionality reduction (PLS, PCA) to each omics layer separately before integration, or use regularization methods (LASSO, Ridge regression) that penalize correlated variables.

- Cause 2: Scale disparity between datasets (e.g., RNA-Seq counts vs. methylation beta values).

- Solution: Use platform-appropriate scaling. For example, use Variance Stabilizing Transformation (VST) for counts, and logit transformation for beta values, followed by global Z-scoring.

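
The scale-disparity fix above can be sketched in Python with NumPy. This is a minimal illustration on synthetic data, not a reference implementation: the helper names (`beta_to_m`, `log_cpm`, `zscore`) are hypothetical, and log2-CPM is used here as a simple stand-in for a variance-stabilizing transformation rather than DESeq2's VST.

```python
import numpy as np

def beta_to_m(beta, eps=1e-6):
    """Logit-transform methylation beta values into M-values (log2 odds)."""
    b = np.clip(beta, eps, 1.0 - eps)  # keep exact 0 and 1 away from log(0)
    return np.log2(b / (1.0 - b))

def log_cpm(counts, prior=1.0):
    """log2 counts-per-million (assumed stand-in for a VST); rows = samples."""
    lib_size = counts.sum(axis=1, keepdims=True)
    return np.log2(counts / lib_size * 1e6 + prior)

def zscore(x):
    """Z-score each feature (column) across samples."""
    sd = x.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # constant features stay at zero instead of dividing by 0
    return (x - x.mean(axis=0)) / sd

# Synthetic example data (assumption: 6 samples, 4 genes, 3 CpG probes).
rng = np.random.default_rng(0)
counts = rng.poisson(lam=50, size=(6, 4)).astype(float)
beta = rng.uniform(0.05, 0.95, size=(6, 3))

# Transform each layer on its own scale, then Z-score before concatenation.
combined = np.hstack([zscore(log_cpm(counts)), zscore(beta_to_m(beta))])
```

After this step every column has mean 0 and unit variance, so neither layer dominates a downstream linear model purely because of its measurement scale.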

Issue: Inconsistent Biomarker Discovery from Integrated vs. Single-Omics Analysis

- Symptoms: Top biomarkers from integrated analysis are not significant in any single-omics analysis, leading to interpretability challenges.

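- Diagnosis: This can be a genuine feature of multi-omics integration, reflecting emergent signals that are only visible through cross-layer interaction. It can also reflect overfitting, so treat such biomarkers as hypotheses until they are validated.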

- Action Protocol: 1) Validate using a complementary biological assay. 2) Perform network enrichment analysis on the integrated biomarker set to see if it converges on a coherent pathway. 3) Check if the biomarkers serve as "bridge nodes" connecting different functional modules in a network.

Table 1: Common Sources of Variance in Multi-Omics Studies

| Variance Source | Typical Contribution to Total Variance | Primary Affected Omics Layers |

|---|---|---|

| Biological Variation (True Signal) | 20-50% | All |

| Platform-Specific Batch Effects | 15-35% | Proteomics, Metabolomics |

| Sample Preparation & Library Batch | 10-25% | Genomics, Transcriptomics |

| Longitudinal/Time-Dependent Shift | 5-20% | Metabolomics, Proteomics |

| Missing Data (Below LOD) | 5-30% | Metabolomics, Proteomics |

Table 2: Performance Comparison of Harmonization Methods (Simulated Data)

| Harmonization Method | Average Correlation Gain* | Runtime (Medium Dataset) | Key Assumption/Limitation |

|---|---|---|---|

| ComBat | 0.15 | <1 min | Assumes additive and multiplicative (location/scale) batch effects and known batch labels. |

| Harmony | 0.18 | ~2 min | Data lies in a low-dimensional space. |

| Mutual Nearest Neighbors (MNN) | 0.22 | ~5 min | Requires a subset of shared biological states. |

| Seurat v5 CCA Anchor-Based | 0.25 | ~10 min | Defines a "reference" dataset for alignment. |

*Measured as increase in Spearman's ρ between known matched samples post-correction.

Experimental Protocols

Protocol: Cross-Platform Batch Effect Correction Using Harmony

Objective: To align single-cell RNA-Seq and single-cell ATAC-Seq datasets from the same tissue samples processed in separate batches.

- Input: scRNA-Seq count matrix (genes x cells) and scATAC-Seq peak matrix (peaks x cells) with associated batch metadata.

- Dimensionality Reduction: Perform PCA on the scRNA-Seq matrix. Perform latent semantic indexing (LSI) on the scATAC-Seq matrix.

- Harmonization: Apply the Harmony algorithm (`RunHarmony()` in R, `run_harmony()` from `harmonypy` in Python) separately to the PCA and LSI embeddings, using the batch ID as the grouping variable. Use `theta = 2` to allow for moderate batch correction strength.

- Joint Embedding: Concatenate the harmonized PCA and LSI embeddings into a single matrix.

- Validation: Visualize using UMAP colored by batch and cell type. Successful correction shows cells clustering by cell type, not by batch. Quantify using a batch mixing metric (e.g., Local Inverse Simpson's Index - LISI).
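
The batch mixing check in the validation step can be approximated with a few lines of NumPy. The sketch below is a simplified, equal-weight variant of LISI (the published metric uses perplexity-based Gaussian neighbour weights); the function name `simple_lisi` and the synthetic embeddings are assumptions made for illustration.

```python
import numpy as np

def simple_lisi(embedding, labels, k=15):
    """Inverse Simpson's index of `labels` among each cell's k nearest neighbours.

    Simplification: neighbours are weighted equally, unlike the original LISI.
    """
    labels = np.asarray(labels)
    # Pairwise squared Euclidean distances (suitable for small demos only).
    sq = ((embedding[:, None, :] - embedding[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(sq, np.inf)  # a cell is not its own neighbour
    nn = np.argsort(sq, axis=1)[:, :k]
    scores = np.empty(len(labels))
    for i, idx in enumerate(nn):
        _, counts = np.unique(labels[idx], return_counts=True)
        p = counts / counts.sum()
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores

rng = np.random.default_rng(1)
batch = np.repeat([0, 1], 100)
mixed = rng.normal(size=(200, 5))           # batches overlap
separated = mixed + batch[:, None] * 10.0   # batch 1 shifted far away

lisi_mixed = simple_lisi(mixed, batch).mean()
lisi_separated = simple_lisi(separated, batch).mean()
```

With two batches the score ranges from 1 (neighbourhoods contain a single batch) to 2 (batches perfectly mixed), so a rise toward 2 after correction suggests better mixing.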

Protocol: Metabolomics-Genomics Integration via Sparse Multi-Block PLS (sMB-PLS)

Objective: Identify latent variables linking genetic variants (SNPs) to plasma metabolite levels.

- Data Preprocessing:

- Genomics: Filter SNPs for MAF > 0.05. Code as 0,1,2 for allele dosage. Standardize (mean=0, variance=1).

- Metabolomics: Impute values below LOD with half the minimum detected value, then log-transform peak areas and Pareto-scale.

- Model Training: Use the `mixOmics` R package (`block.splsda()` function). Define two data blocks: X1 (SNP matrix), X2 (Metabolite matrix). Set the outcome `Y` as the disease phenotype vector.

- Tuning: Use 10-fold cross-validation to tune the number of components and the sparsity penalty (`keepX` parameter) for each block to maximize classification accuracy.

- Interpretation: Extract weight vectors for each component. Metabolites and SNPs with large absolute weights contribute strongly to the latent component. Project samples onto components to identify clusters.
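
The preprocessing steps of this protocol can be sketched in Python with NumPy before the blocks are handed to the R model. This is an illustrative sketch under stated assumptions: imputation is applied to raw peak areas before the log transform, missing values are encoded as NaN, and the helper names and toy matrices are hypothetical.

```python
import numpy as np

def half_min_impute(x):
    """Replace NaNs in each metabolite (column) with half its minimum observed value."""
    x = x.copy()
    half_min = np.nanmin(x, axis=0) / 2.0
    rows, cols = np.where(np.isnan(x))
    x[rows, cols] = half_min[cols]
    return x

def pareto_scale(x):
    """Centre each column and divide by the square root of its standard deviation."""
    sd = x.std(axis=0, ddof=1)
    return (x - x.mean(axis=0)) / np.sqrt(sd)

def standardize_dosage(g):
    """Z-score allele dosages (0/1/2) per SNP."""
    return (g - g.mean(axis=0)) / g.std(axis=0, ddof=1)

# Toy data: 4 samples x 2 metabolites, NaN marks a value below the LOD.
peaks = np.array([[1200.0, 80.0],
                  [np.nan, 95.0],
                  [900.0, np.nan],
                  [1500.0, 60.0]])
imputed = half_min_impute(peaks)
metab = pareto_scale(np.log2(imputed))

# Toy data: 4 samples x 2 SNPs coded as allele dosage.
snps = standardize_dosage(np.array([[0.0, 1.0], [1.0, 2.0], [2.0, 1.0], [1.0, 0.0]]))
```

MAF filtering is omitted here; in practice it would be applied to the dosage matrix before standardization.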

Visualizations

Title: Multi-Omics Data Harmonization and Integration Workflow

Title: The Gap Between Idealized Reference and Observed Data Reality

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Primary Function in Multi-Omics Harmonization |

|---|---|

| Reference Standard Materials (e.g., NIST SRM 1950) | Provides a metabolomic and proteomic benchmark across labs to calibrate instruments and assess inter-batch variability. |

| Universal Human Reference RNA (UHRR) | Serves as a transcriptomic control across sequencing runs and platforms to correct for technical variance in gene expression studies. |

| Stable Isotope Labeled Standards (SILIS for proteomics, SIL for metabolomics) | Spiked into samples prior to MS processing to enable absolute quantification and correct for sample preparation losses and ionization efficiency. |

| Pooled QC Samples | Created by combining aliquots from all experimental samples; run repeatedly throughout the analytical sequence to monitor and correct for instrumental drift. |

| Multiplexing Kits (e.g., TMT, barcoding) | Allows pooling of multiple samples for simultaneous LC-MS/MS processing, minimizing run-to-run variation. |

| Cell Line References (e.g., HEK293, GM12878) | Well-characterized genomic and phenotypic profiles provide a ground truth for benchmarking integration algorithm performance. |

From Theory to Practice: Methodologies and Tools for Effective Multi-Omics Integration

This technical support center provides troubleshooting guides and FAQs for researchers encountering issues during multi-omics data preprocessing within harmonization workflows.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: After RNA-Seq count normalization, my PCA plot still shows a strong batch effect. What steps should I take next?

- A: This indicates that standard normalization (e.g., TMM for bulk RNA-Seq) may be insufficient for your dataset. Proceed as follows:

- Verify Method: Confirm you used a between-sample normalization method suitable for your technology (e.g., TMM, DESeq2's median of ratios, or upper-quartile for bulk RNA-Seq; SCTransform or log-normalize for scRNA-Seq).

- Apply Transformation: Apply a variance-stabilizing transformation (e.g., log2(CPM+1), VST in DESeq2, or rlog). This weakens the dependence of variance on mean expression, improving performance for downstream PCA.

- Employ Batch Correction: Use a dedicated batch-correction method (e.g., `limma::removeBatchEffect`, ComBat from `sva`, or Harmony for single-cell). Always apply these after normalization and transformation, not before.

- Validate: Check the PCA with the top 500-2000 highly variable genes (HVGs) post-correction. Use negative controls if available.
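
To make the batch-correction step concrete, the sketch below removes an additive batch shift by per-batch mean centering on log-scale data. It is a deliberately simplified illustration, not the algorithm used by `limma` or ComBat: it has no design matrix to protect biological covariates, and the function name and synthetic data are assumptions.

```python
import numpy as np

def remove_batch_means(expr, batch):
    """Subtract each batch's per-gene mean, then restore the overall per-gene mean.

    expr: samples x genes matrix of normalized, log-scale expression.
    batch: length-n array of batch labels.
    """
    expr = np.asarray(expr, dtype=float)
    batch = np.asarray(batch)
    corrected = expr.copy()
    grand_mean = expr.mean(axis=0)
    for b in np.unique(batch):
        mask = batch == b
        corrected[mask] -= expr[mask].mean(axis=0) - grand_mean
    return corrected

rng = np.random.default_rng(2)
batch = np.repeat(["A", "B"], 10)
expr = rng.normal(size=(20, 50))
expr[batch == "B"] += 3.0  # simulated additive shift in batch B

fixed = remove_batch_means(expr, batch)
gap_before = abs(expr[batch == "A"].mean() - expr[batch == "B"].mean())
gap_after = abs(fixed[batch == "A"].mean() - fixed[batch == "B"].mean())
```

If batch is confounded with a biological group, this kind of centering removes the biology along with the batch effect, which is why model-based tools that accept a design matrix are preferred for real studies.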

Q2: When integrating proteomic (LFQ intensity) and metabolomic (peak area) data, how do I handle their different missing value mechanisms?

- A: Missing values in proteomics are often "Missing Not At Random" (MNAR, due to low abundance), while in metabolomics they can be "Missing At Random" (MAR). A combined strategy is required:

| Data Type | Suggested Imputation Method | Rationale | Tool/Function Example |

|---|---|---|---|

| Proteomics (LFQ) | MNAR-aware imputation | Accounts for values missing due to being below detection limit. | MsCoreUtils::impute_matrix(x, method = "min"), imputeLCMD::impute.MinDet() |

| Metabolomics | MAR-aware imputation | Models missingness based on observed data. | MsCoreUtils::impute_matrix(x, method = "knn"), pcaMethods::ppca() |

| Post-Imputation | Probabilistic ComBat (if batches exist) | Harmonizes distributions across datasets after individual preprocessing. | promise::ProbabilisticComBat() |

Protocol for Joint Imputation: 1) Preprocess and impute each dataset separately using its type-appropriate method. 2) Perform quantile normalization within each dataset to standardize distributions. 3) Merge datasets on common samples (feature-wise). 4) Apply cross-platform batch correction (e.g., ProBatch, MultiBaC) to address technical variation between the omics layers.
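
Step 2 of the protocol (quantile normalization within a dataset) can be sketched as follows. This is a minimal NumPy version assuming a complete samples x features matrix; ties are broken by order of appearance, which differs from implementations that average tied ranks, and the function name is hypothetical.

```python
import numpy as np

def quantile_normalize(x):
    """Force every sample (row) to share the same empirical distribution."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, axis=1, kind="stable")   # rank order within each sample
    reference = np.sort(x, axis=1).mean(axis=0)    # mean value at each rank
    out = np.empty_like(x)
    rows = np.arange(x.shape[0])[:, None]
    out[rows, order] = reference                   # assign reference values by rank
    return out

x = np.array([[5.0, 2.0, 3.0, 4.0],
              [4.0, 1.0, 4.5, 2.0],
              [3.0, 4.0, 6.0, 8.0]])
qn = quantile_normalize(x)
```

After normalization each row contains the same set of values, while the within-sample ranking of features is preserved.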

Q3: My DNA methylation beta/M-value distributions are inconsistent across samples post-normalization. How do I troubleshoot?

- A: Inconsistent distributions often point to poor background correction or dye bias in array data.

- For Illumina EPIC/450k arrays: Re-run the preprocessing with `minfi::preprocessNoob()` (normal-exponential out-of-band) or the `sesame` preprocessing pipeline, which includes robust background correction and dye-bias equalization.

- Check QC Metrics: Generate and compare `minfi::getQC()` plots pre- and post-normalization. Median intensities should align.

- Probe Filtering: Aggressively filter out poor-quality probes (`minfi::detectionP` > 0.01), cross-reactive probes, and probes containing SNPs. Re-normalize after filtering.

- Validation: Plot the density distributions of beta values. They should be tightly aligned across all samples. Persistent issues may indicate fundamental sample quality problems.

Q4: What is the best practice for normalizing 16S rRNA microbiome sequencing data before integrating with host transcriptomics?

- A: Microbiome data is compositional and requires special handling. Do not use standard RNA-Seq normalization.

- Rarefaction OR CSS: For alpha-diversity, use rarefaction to even sequencing depth. For integration, use Cumulative Sum Scaling (CSS) in `metagenomeSeq` or a centered log-ratio (CLR) transformation, which accounts for compositionality.

- Address Sparsity: Apply a pseudo-count (e.g., +1) before CLR transformation.

- Integration Workflow: Preprocess host transcriptomics (e.g., TMM -> log-CPM). Preprocess microbiome (CSS or CLR). Use methods designed for compositional data integration, such as DIABLO in the `mixOmics` package, which can model the covariance structure between these distinct data types.
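
The CLR transformation with a pseudo-count can be written in a few lines. The sketch below assumes a samples x taxa count matrix; the function name `clr` and the toy counts are illustrative only.

```python
import numpy as np

def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of a samples x taxa count matrix."""
    logged = np.log(np.asarray(counts, dtype=float) + pseudocount)
    # Subtracting the row mean of the logs divides by the geometric mean.
    return logged - logged.mean(axis=1, keepdims=True)

otu = np.array([[120, 0, 30, 850],
                [10, 5, 0, 85]])
otu_clr = clr(otu)
```

Each transformed sample sums to zero, which is the property that removes the dependence on total sequencing depth.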

Q5: During multi-omics integration, my feature scaling method seems to dominate the integrated clustering. How do I choose the right scaling?

- A: Feature scaling (e.g., Z-score, Min-Max) profoundly impacts distance metrics. Follow this experimental protocol:

Protocol: Evaluating Scaling Impact on Integration

- Individual Layer Preprocessing: Normalize and transform each omics layer appropriately for its technology (see FAQs above).

- Parallel Scaling: Create three versions of the preprocessed data for each layer:

- Version A: No feature scaling.

- Version B: Z-score standardization per feature (mean=0, sd=1).

- Version C: Robust scaling (median=0, IQR=1).

- Integration & Clustering: Use a chosen integration method (e.g., MOFA+, MultiCCA) on each version set.

- Evaluation: Compare outcomes using:

- Cluster Concordance: Adjusted Rand Index (ARI) with known biological labels.

- Variance Explained: Per omics layer in the latent factors.

- Technical Confound: Check correlation of latent factors with batch variables.

- Decision: Select the scaling strategy that maximizes biological signal concordance and minimizes technical artifact carryover. There is no universal best method; it is study-dependent.
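
The three scaling versions in the protocol can be generated as follows. This is a small NumPy sketch on synthetic data; the function names and the dictionary keys are hypothetical, and the integration and evaluation steps are left to the chosen framework.

```python
import numpy as np

def zscore(x):
    """Version B: mean 0, standard deviation 1 per feature."""
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)

def robust_scale(x):
    """Version C: median 0, interquartile range 1 per feature."""
    q1, med, q3 = np.percentile(x, [25, 50, 75], axis=0)
    return (x - med) / (q3 - q1)

def scaling_versions(layer):
    """Return the unscaled, Z-scored, and robust-scaled copies of one omics layer."""
    return {"A_none": layer, "B_zscore": zscore(layer), "C_robust": robust_scale(layer)}

rng = np.random.default_rng(3)
layer = rng.lognormal(size=(40, 6))  # skewed synthetic data, 40 samples x 6 features
versions = scaling_versions(layer)
```

Running the same integration method on each entry of `versions` keeps everything else fixed, so differences in ARI or batch correlation can be attributed to the scaling choice.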

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-omics Preprocessing & Harmonization |

|---|---|

| Reference Standard Sample (e.g., Universal Human Reference RNA, Pooled QC Plasma) | Serves as a technical replicate across batches/runs to monitor and correct for platform drift and inter-batch variation. |

| Internal Standards (SIS peptides for proteomics, isotope-labeled metabolites) | Enables absolute quantification and corrects for ion suppression/filtering efficiency within a sample in MS-based assays. |

| Spike-in Controls (ERCC RNA spikes, External RNA Controls Consortium) | Distinguishes technical from biological variation in sequencing assays, allowing for precise normalization. |

| Degradation Control Markers (RNA Integrity Number/RIN assessors) | Quality metric for nucleic acid samples; can be used to filter out low-quality samples or as a covariate in models. |

| Batch-matched Solvents/Reagents | Using the same lot of critical reagents (e.g., enzymes, buffers) across a study minimizes introducible technical variation. |

| Multimodal Cell Line Controls (e.g., cell lines assayed by all platforms) | Provides a ground truth for evaluating the performance and integration fidelity of multi-omics pipelines. |

Workflow & Pathway Diagrams

Multi-omics Data Preprocessing Core Workflow

Gene Expression Data Preprocessing Steps

Goal of Preprocessing in Multi-omics Harmonization

Multi-omics Data Harmonization Troubleshooting Guides & FAQs

Frequently Asked Questions on Integration Paradigms

Q1: What are the primary differences between Early, Intermediate, and Late Integration, and when should I choose one over the other? A: The choice depends on your research question, data types, and computational goals.

- Early Integration (Data-Level): Raw data from different omics layers are concatenated before model building. Use when you hypothesize strong, direct interactions between molecular layers from the outset and have high-quality, normalized data.

- Intermediate Integration (Feature-Level): Features are extracted separately, then integrated in a joint latent space. Use when dealing with heterogeneous data scales or missing data, as it allows for modality-specific preprocessing.

- Late Integration (Decision-Level): Separate models are built for each omics type, and their results are combined. Use for validation-heavy studies, when data types are fundamentally incompatible, or to assess the individual predictive power of each omics layer.

Q2: During early integration, my concatenated dataset leads to a model that overfits and fails to generalize. What is the most common cause and solution? A: This is typically caused by the "curse of dimensionality"—the number of features (p) vastly exceeds the number of samples (n). The concatenated high-dimensional space is sparse, and models memorize noise.

- Troubleshooting Protocol:

- Apply Dimensionality Reduction before concatenation: Perform PCA or autoencoder-based reduction on each omics dataset independently.

- Implement rigorous feature selection: Use variance filters, LASSO regression, or multi-omics specific methods (like Multi-Omics Factor Analysis, MOFA) to select informative features from each layer.

- Use regularization: Employ models with built-in L1 (Lasso) or L2 (Ridge) penalties to prevent overfitting.

- Validate correctly: Use nested cross-validation to avoid data leakage and obtain unbiased performance estimates.
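
The first item of the protocol (reduce each layer before concatenation) can be sketched with an SVD-based PCA. The example uses synthetic data and a hypothetical helper `pca_scores`; in a real analysis the projection should be fitted on training folds only, in line with the nested cross-validation advice above.

```python
import numpy as np

def pca_scores(x, n_components):
    """Project samples onto the top principal components via SVD."""
    centered = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]

rng = np.random.default_rng(4)
n = 30
rna = rng.normal(size=(n, 2000))     # p >> n within each layer
methyl = rng.normal(size=(n, 5000))

# 7000 raw features become 20 per-layer components before concatenation.
reduced = np.hstack([pca_scores(rna, 10), pca_scores(methyl, 10)])
```

Reducing each layer separately also prevents the layer with the most features from dominating the concatenated matrix.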

Q3: In intermediate integration using a multi-view model, one omics layer (e.g., metabolomics) dominates the shared latent space, obscuring signals from others (e.g., transcriptomics). How can I balance their contributions? A: This indicates a scale or variance imbalance between the input data matrices.

- Troubleshooting Protocol:

- Standardize features within each omics layer: Use z-score normalization (mean=0, std=1) for each feature independently per modality. Do not normalize across modalities.

- Weight the layers: Some frameworks expose options that control each data type's influence on the shared factors (e.g., the `scale_views` option in MOFA2 or the `design` matrix in mixOmics DIABLO).

- Experiment with integration methods: Switch from a strict joint matrix factorization to a multi-omics clustering (e.g., SNF) or a kernel-based fusion approach, which can be more robust to scale differences.

Q4: For late integration, my ensemble model's final prediction is no better than the best single-omics model. What does this mean and how can I fix it? A: This suggests a lack of complementary information between the omics layers for your specific prediction task.

- Troubleshooting Protocol:

- Diagnose with correlation analysis: Calculate the correlation between the prediction scores/outputs from each single-omics model. High correlation (>0.8) indicates redundancy.

- Refine the meta-integration strategy: Instead of a simple average or vote, use a stacked generalization model. Train a meta-learner (e.g., logistic regression) on the hold-out predictions from each single-omics model to optimally combine them.

- Re-evaluate the biological question: The outcome may be driven predominantly by one molecular layer. Consider if a single-omics approach is sufficient, or if different omics data types (e.g., proteomics instead of transcriptomics) are needed.

Quantitative Comparison of Integration Paradigms

Table 1: Key Characteristics of Multi-omics Integration Paradigms

| Aspect | Early Integration | Intermediate Integration | Late Integration |

|---|---|---|---|

| Integration Stage | Raw/Preprocessed Data | Feature/Latent Space | Model Results/Decisions |

| Handles Heterogeneity | Poor | Excellent | Good |

| Model Interpretability | Challenging (Black Box) | Moderate (Shared Factors) | High (Per-layer models) |

| Data Requirements | Strict alignment & completeness | Flexible, handles missing views | Flexible, independent pipelines |

| Common Algorithms | Concatenation + ML (RF, DNN) | MOFA, iCluster, DJINN | Weighted Voting, Stacking |

| Best Use Case | Holistic pattern discovery | Identifying co-varying signals | Validating & combining robust findings |

Table 2: Reported Performance Metrics from Recent Multi-omics Studies (2022-2024)

| Study Focus | Early Integration (Avg. AUC) | Intermediate Integration (Avg. AUC) | Late Integration (Avg. AUC) | Noted Advantage |

|---|---|---|---|---|

| Cancer Subtyping | 0.82 | 0.89 | 0.85 | Intermediate best for novel subtype discovery |

| Drug Response Prediction | 0.76 | 0.84 | 0.86 | Late integration leveraged prior knowledge best |

| Microbial Community Function | 0.91 | 0.88 | 0.79 | Early integration captured complex microbe-metabolite links |

| Longitudinal Biomarker Identification | 0.71 | 0.90 | 0.83 | Intermediate handled temporal heterogeneity |

Detailed Experimental Protocols

Protocol 1: Implementing Intermediate Integration with MOFA2 for Subtype Discovery

Objective: To identify integrated latent factors from transcriptomics and DNA methylation data that define novel disease subtypes.

- Data Input: Prepare two matrices (samples x features) for RNA-seq (TPM values) and Methylation array (beta values). Ensure sample order is identical.

- Preprocessing & Imputation:

- Log-transform RNA-seq data (log2(TPM+1)).

- Perform mean imputation for missing methylation probes (<5% missing).

- Center and scale features to unit variance within each view.

- MOFA2 Model Training (R/Python):

- Factor Analysis: Extract factors (`get_factors(mofa_trained)`). Use factor values for unsupervised clustering (e.g., k-means) of samples.

- Validation: Perform survival analysis (log-rank test) on derived clusters and assess enrichment for known biological pathways.

Protocol 2: Late Integration via Stacked Generalization for Clinical Outcome Prediction

Objective: To predict patient treatment response by combining separate models for genomics, proteomics, and clinical data.

- Train Base Learners:

- Split data into Train (70%) and Hold-out Test (30%).

- On the Train set, perform 5-fold cross-validation (CV).

- In each CV fold, train independent models: Lasso regression on mutation data, Random Forest on protein array data, Gradient Boosting on clinical variables.

- Generate Level-One Data (Meta-features):

- For each sample in the Train set, collect its out-of-fold prediction from each of the three base models. This forms a new 3-column matrix (the "level-one" data).

- Train Meta-Learner:

- Train a logistic regression model on the level-one data, using the true labels.

- Final Testing & Evaluation:

- Run the Hold-out Test set samples through all three base models to get three predictions per sample.

- Feed these three predictions into the trained meta-learner to get the final ensemble prediction.

- Evaluate using AUC, precision, recall on the Hold-out Test set only.
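
The data flow of this stacking protocol can be sketched with NumPy alone. To keep the example dependency-free, every base learner is a closed-form ridge regression and the meta-learner is ordinary least squares; these are stand-ins for the Lasso, Random Forest, Gradient Boosting, and logistic regression models named in the protocol, and all function names and data are synthetic assumptions.

```python
import numpy as np

def ridge_fit(x, y, lam=1.0):
    """Closed-form ridge regression with an unpenalized intercept."""
    xb = np.hstack([np.ones((len(x), 1)), x])
    penalty = lam * np.eye(xb.shape[1])
    penalty[0, 0] = 0.0
    return np.linalg.solve(xb.T @ xb + penalty, xb.T @ y)

def ridge_predict(w, x):
    return np.hstack([np.ones((len(x), 1)), x]) @ w

def out_of_fold(blocks, y, n_folds=5, seed=0):
    """Return an n x len(blocks) matrix of out-of-fold predictions (level-one data)."""
    n = len(y)
    folds = np.array_split(np.random.default_rng(seed).permutation(n), n_folds)
    oof = np.zeros((n, len(blocks)))
    for held_out in folds:
        train = np.setdiff1d(np.arange(n), held_out)
        for j, x in enumerate(blocks):
            w = ridge_fit(x[train], y[train])
            oof[held_out, j] = ridge_predict(w, x[held_out])
    return oof

# Synthetic data: three noisy "omics" views of one latent signal.
rng = np.random.default_rng(5)
n = 100
signal = rng.normal(size=n)
y = (signal > 0).astype(float)
blocks = [signal[:, None] + rng.normal(size=(n, 5)) for _ in range(3)]
train_idx, test_idx = np.arange(70), np.arange(70, n)  # 70/30 split

# Level-one data from the Train set only, then the meta-learner.
level_one = out_of_fold([b[train_idx] for b in blocks], y[train_idx])
meta_w = ridge_fit(level_one, y[train_idx], lam=0.0)

# Refit base learners on the full Train set and score the Hold-out Test set.
base_w = [ridge_fit(b[train_idx], y[train_idx]) for b in blocks]
test_meta = np.column_stack(
    [ridge_predict(w, b[test_idx]) for w, b in zip(base_w, blocks)]
)
final_score = ridge_predict(meta_w, test_meta)
```

The key property is that the meta-learner only ever sees predictions made on samples the base learners were not trained on, and the Hold-out Test set is touched once at the end.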

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Multi-omics Integration Experiments

| Item / Resource | Function in Multi-omics Workflow | Example Product/Platform |

|---|---|---|

| Cross-omics Normalization Suite | Harmonizes technical variation across platforms (e.g., batch effects between microarray and sequencing). | limma (R), ComBat or Harmony |

| Multi-omics Factor Analysis Tool | Performs intermediate integration by decomposing data into shared and specific latent factors. | MOFA2 (R/Python package) |

| Similarity Network Fusion Software | Constructs patient similarity networks per omics layer and fuses them for clustering. | SNFtool (R package) |

| Containerization Platform | Ensures computational reproducibility of complex pipelines across different systems. | Docker, Singularity |

| Interactive Visualization Dashboard | Allows exploration of integrated results, factor loadings, and sample clusters. | OmicsVisualizer (Cytoscape App) |

Integration Pathway & Workflow Visualizations

Diagram Title: Multi-omics Integration Paradigms Workflow

Diagram Title: Choosing a Multi-omics Integration Paradigm

This technical support center addresses common issues encountered when using popular multi-omics integration frameworks within the context of overcoming data harmonization challenges. It provides troubleshooting guides, FAQs, and essential resources for researchers.

Frequently Asked Questions & Troubleshooting

Q1: My MOFA+ model fails to converge or yields a "RuntimeError." What are the common causes? A: This is often related to data preprocessing or model configuration.

- Data Scale: Ensure all data views are centered and scaled (unit variance). MOFA+ is sensitive to differences in feature variance.

- Likelihoods: Specify the correct likelihood for each data type (`gaussian` for continuous, `bernoulli` for binary, `poisson` for counts). Mismatched likelihoods cause convergence failure.

- Factor Number: Start with a small number of factors (`n_factors=5`) and increase gradually. Too many factors can lead to overfitting and instability.

- Missing Data: Check for excessive missingness (>30% per feature). Consider filtering or using stronger regularizers.

Q2: When using mixOmics (sPLS, DIABLO), how do I choose the number of components and the number of features to select per component? A: This is a critical model tuning step.

- Use the `perf()` function with repeated cross-validation to evaluate the model's performance (e.g., classification error rate, correlation) across different numbers of components.

- Use `tune.spls()` or `tune.block.spls()` to optimize the number of features to select (`keepX`, `keepY` parameters) via cross-validation. The output provides diagnostic plots to guide selection.

- A common mistake is selecting too many features, which reduces interpretability and can introduce noise.

Q3: In OmicsPLS, how do I handle datasets with vastly different numbers of features (e.g., Transcriptomics >> Metabolomics)? The model becomes biased towards the larger dataset. A: This is a core harmonization challenge. OmicsPLS offers specific parameters to mitigate this.

- Regularization: Use the `ridge_lambda` parameter in the `o2m()` function. Apply stronger regularization (higher `ridge_lambda` values) to the dataset with more features to penalize its model complexity.

- Penalization: Alternatively, use the `sparse` option in `crossval_o2m()` to perform sparse O2PLS, which performs feature selection during integration, directly addressing the high-dimensionality issue.

- Pre-filtering: Independently pre-filter the larger dataset (e.g., via variance or univariate association) to reduce dimensionality before integration.

Experimental Protocol: A Standard Multi-omics Integration Workflow

This protocol outlines a generalized workflow for integrating two omics datasets (e.g., Transcriptomics 'X' and Metabolomics 'Y') using a joint factor model (MOFA+) and a pairwise method (OmicsPLS/Sparse PLS).

1. Objective: Identify shared and dataset-specific latent factors driving variation across transcriptomic and metabolomic profiles from the same samples.

2. Preprocessing & Harmonization:

- Step 1 (Individual): Perform standard normalization within each dataset (e.g., log-CPM + quantile normalization for RNA-seq; Pareto scaling for metabolomics).

- Step 2 (Matching): Align samples by their unique identifiers. Remove non-matching samples.

- Step 3 (Filtering): Filter low-variance features (e.g., remove bottom 20% by variance) within each dataset.

- Step 4 (Scaling): Center each feature to mean=0 and scale to unit variance (Z-scoring) across samples. This is critical for MOFA+.

3. Integration & Modeling:

- Path A (MOFA+):

- Create a `MOFA` object with the scaled matrices `X` and `Y` as different `views`.

- Set data `likelihoods` ("gaussian" for both).

- Set the data option `scale_views=FALSE` (scaling was already done in Step 4) and the training option `convergence_mode="slow"`.

- Run `run_mofa()` with a modest number of factors (e.g., 10-15).

- Path B (OmicsPLS / mixOmics sPLS):

- For OmicsPLS: Use `crossval_o2m()` to tune the number of joint (`n`), X-specific (`nx`), and Y-specific (`ny`) components, along with sparsity/ridge parameters. Fit final model with `o2m()`.

- For mixOmics sPLS: Use `tune.spls()` to tune `keepX` and `keepY`. Fit final model with `spls()`.

4. Downstream Analysis:

- Factor/Component Inspection: Correlate latent factors with known sample phenotypes (e.g., clinical outcome).

- Feature Scoring: Extract top-weighted features (loadings) for each factor/component for biological interpretation.

- Visualization: Plot factor/component scores (sample plot) and loadings (arrow plot or heatmap).

| Framework | Core Method | Key Strength | Ideal for Data Types | Tuning Critical Parameter | R/Python Package |

|---|---|---|---|---|---|

| MOFA+ | Bayesian Group Factor Analysis | Unsupervised, handles missing data, multiple views (>2), identifies shared & specific factors | Any (Gaussian, Bernoulli, Poisson) | Number of Factors (n_factors) | R (MOFA2) |

| mixOmics (sPLS/DIABLO) | Sparse Partial Least Squares | Supervised/unsupervised, feature selection, discriminant analysis, multi-class | Continuous (Microarray, Metabolomics) | Features per component (keepX, keepY) | R (mixOmics) |

| OmicsPLS | O2PLS | Separates joint vs. dataset-specific variation, symmetrical, efficient | Continuous (Two matched datasets) | Joint (n), Specific (nx, ny) components | R (OmicsPLS) |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Multi-omics Integration |

|---|---|

| R/Bioconductor | Primary computational environment for statistical analysis and framework implementation. |

| Python (SciPy/NumPy) | Alternative environment, useful for custom scripting and deep learning extensions. |

| Sample Matrices | Aliquots of the same biological sample (e.g., tissue, plasma) used for all omics assays. Critical for technical validation. |

| Internal Standard Mixes | Spiked-in standards (isotope-labeled metabolites, spike-in RNA) for normalization and batch correction within assays. |

| Reference Databases | KEGG, Reactome, HMDB for functional annotation and pathway enrichment of integrated feature lists. |

| High-Performance Computing (HPC) Cluster | Essential for running permutation tests, cross-validation, and large-scale integration jobs. |

Visualization: Multi-omics Integration Workflow & Model Logic

Technical Support Center

Troubleshooting Guides & FAQs

Q1: When training a variational autoencoder (VAE) for single-cell RNA-seq data harmonization, the model outputs blurry or non-informative latent representations. What could be the cause?

A: This is often the "blurriness" problem common in VAEs. For omics data, the primary culprits are:

- Mismatched Likelihood Function: Using a Mean Squared Error (MSE) loss (Gaussian likelihood) on inherently count-based or highly sparse data. Solution: Use a negative binomial or zero-inflated negative binomial distribution in the decoder to model count data.

- Excessive KL Divergence Weight: The Kullback–Leibler (KL) term in the loss forces the latent distribution too close to a standard normal, so the latent code carries little information about the input (posterior collapse). Solution: Implement KL annealing (gradually increasing the weight of the KL term from 0 to its target value, commonly 1, over training) or use a β-VAE framework to carefully tune the weight (β).

- Protocol: For single-cell data, feed log(1+x)-normalized counts to the encoder, and use a decoder architecture with a negative binomial (NB) output layer whose reconstruction loss is evaluated against the raw counts. The loss function is: $\text{Loss} = \text{Reconstruction\_Loss}_{\text{NB}} + \beta \cdot \mathrm{KL}(q(z \mid x) \,\|\, p(z))$. Start with β=0.01 and anneal to a target value (e.g., 0.1) over 50 epochs.
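
The annealing schedule in the protocol can be expressed as a small pure-Python helper. This sketch assumes a linear ramp (the text does not specify the shape of the schedule), and the function names `kl_weight` and `total_loss` are hypothetical.

```python
def kl_weight(epoch, beta_start=0.01, beta_target=0.1, anneal_epochs=50):
    """Linearly increase the KL weight, then hold it at the target value."""
    if epoch >= anneal_epochs:
        return beta_target
    frac = epoch / anneal_epochs
    return beta_start + frac * (beta_target - beta_start)

def total_loss(recon_nll, kl, epoch):
    """Combine a reconstruction negative log-likelihood and a KL term for one epoch."""
    return recon_nll + kl_weight(epoch) * kl

# KL weight at epochs 0, 10, ..., 60.
schedule = [kl_weight(e) for e in range(0, 61, 10)]
```

In a training loop, `total_loss` would be called with the NB reconstruction term and the KL term computed by the model for the current mini-batch.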

Q2: My Graph Neural Network (GNN) for integrating protein-protein interaction (PPI) networks with gene expression data suffers from severe over-fitting, despite a small graph. How can I regularize it?

A: GNNs, especially Graph Convolutional Networks (GCNs), are prone to over-fitting on small, dense graphs.

- Use Dropout Correctly: Apply dropout (`rate=0.5-0.7`) to the node features before each graph convolution layer, not just on the final layers. This is feature dropout.

- Graph Augmentation: Use edge dropout (randomly remove a fraction, e.g., 10-20%, of edges during each training epoch) to prevent over-reliance on specific pathways.

- Simplify the Model: Reduce the number of GCN layers. For many biological networks, 2-3 layers are sufficient. Deeper GNNs can lead to over-smoothing where all node representations become similar.

- Protocol: Implement a 2-layer GCN with feature dropout (0.6) and edge dropout (0.2). After each GCN layer, use a ReLU activation. Optimize with weight decay (L2 regularization of 5e-4). Monitor performance on a validation set of held-out nodes or graphs.
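
The forward pass of such a regularized 2-layer GCN can be sketched in NumPy. This is an illustration of where feature dropout and edge dropout enter the computation, not a trainable model: weight decay and the optimizer are omitted, the output layer returns raw logits rather than applying a second ReLU, and the graph, weights, and function names are assumptions.

```python
import numpy as np

def drop_edges(adj, rate, rng):
    """Randomly remove a fraction of undirected edges from a symmetric 0/1 adjacency."""
    upper = np.triu(adj, k=1)
    keep = rng.random(upper.shape) >= rate
    upper = upper * keep
    return upper + upper.T

def normalize_adj(adj):
    """Symmetric normalization with self-loops: D^-1/2 (A + I) D^-1/2."""
    a = adj + np.eye(len(adj))
    d_inv_sqrt = 1.0 / np.sqrt(a.sum(axis=1))
    return a * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def dropout(x, rate, rng):
    """Inverted dropout on node features."""
    mask = rng.random(x.shape) >= rate
    return x * mask / (1.0 - rate)

def gcn_forward(x, adj, w1, w2, rng, feat_drop=0.6, edge_drop=0.2, training=True):
    if training:
        adj = drop_edges(adj, edge_drop, rng)       # graph augmentation
    a_hat = normalize_adj(adj)
    h = dropout(x, feat_drop, rng) if training else x
    h = np.maximum(a_hat @ h @ w1, 0.0)             # layer 1 + ReLU
    h = dropout(h, feat_drop, rng) if training else h
    return a_hat @ h @ w2                           # layer 2 (logits)

rng = np.random.default_rng(6)
adj = np.array([[0, 1, 1, 0],
                [1, 0, 1, 0],
                [1, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
x = rng.normal(size=(4, 8))                         # 4 genes x 8 expression features
w1 = rng.normal(size=(8, 16)) * 0.1
w2 = rng.normal(size=(16, 2)) * 0.1
logits = gcn_forward(x, adj, w1, w2, rng)
```

At evaluation time, calling `gcn_forward(..., training=False)` disables both kinds of dropout so that predictions are deterministic.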

Q3: When using an autoencoder to harmonize bulk RNA-seq and microarray data from different studies, the aligned data still shows strong batch effects. What steps are missing?

A: Autoencoders alone may not disentangle biological signal from technical batch effects.

- Adversarial Training: Introduce a discriminator network that tries to predict the batch (study) source from the latent code. The autoencoder is simultaneously trained to fool this discriminator. This adversarially encourages batch-invariant latent representations.

- Explicit Batch Correction Loss: Use a maximum mean discrepancy (MMD) loss between the latent distributions of data from different batches to make them statistically similar.

- Protocol: Build a dual-objective autoencoder. Primary loss: reconstruction loss (MSE for normalized data). Adversarial loss: binary cross-entropy loss for the batch classifier. The total loss is: $L_{\text{total}} = L_{\text{recon}} + \lambda \cdot L_{\text{adv}}$, where λ controls the strength of batch removal (start with λ=0.1). Train in alternating steps.
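
The MMD alternative mentioned above can be computed directly on latent codes. The sketch below gives a biased estimate of squared MMD with a single RBF kernel; the bandwidth `gamma`, the function names, and the synthetic latent codes are assumptions, and a differentiable version inside a deep learning framework would be needed to use it as a training loss.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    """RBF kernel matrix exp(-gamma * ||a_i - b_j||^2)."""
    sq = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-gamma * np.maximum(sq, 0.0))

def mmd2(z_a, z_b, gamma=0.5):
    """Biased estimate of squared MMD between two sets of latent codes."""
    return (rbf_kernel(z_a, z_a, gamma).mean()
            + rbf_kernel(z_b, z_b, gamma).mean()
            - 2.0 * rbf_kernel(z_a, z_b, gamma).mean())

rng = np.random.default_rng(7)
z_batch1 = rng.normal(size=(200, 4))
z_batch2_same = rng.normal(size=(200, 4))            # same latent distribution
z_batch2_shift = rng.normal(loc=2.0, size=(200, 4))  # residual batch effect

mmd_same = mmd2(z_batch1, z_batch2_same)
mmd_shift = mmd2(z_batch1, z_batch2_shift)
```

A value near zero indicates that the two batches are hard to tell apart in latent space, so the term can be added to the reconstruction loss with its own weight.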

Q4: How do I handle missing or heterogeneous node/edge features when constructing a multi-omics knowledge graph for a GNN?

A: This is a fundamental challenge in real-world biological graphs.

- For Missing Node Features: Initialize missing features using a learnable embedding per node type, or use a simple imputation (e.g., mean/median of known features) as a placeholder, allowing the GNN to refine them.

- For Heterogeneous Edge Types (e.g., activates, inhibits, interacts): Use a Heterogeneous GNN (HetGNN) or Relational GCN (R-GCN). These models maintain separate weight matrices for different edge types, allowing them to process the distinct biological relationships.

- Protocol: Define a meta-graph schema (e.g., Gene—[interacts_with]→Gene; Gene—[expresses]→Protein). For each relation type, assign a unique identifier. Use an R-GCN layer where the propagation rule is modified to sum over relation-specific transformations:

h_i^(l+1) = σ( Σ_(r∈R) Σ_(j∈N_i^r) (1 / c_i,r) W_r^(l) h_j^(l) + W_0^(l) h_i^(l) ), where c_i,r is a normalization constant.
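The propagation rule can be written out in a few lines of NumPy to make the relation-specific sums concrete. This is a sketch under assumptions: `rgcn_layer` is a hypothetical name, adjacency matrices are dense with `adj[i, j] = 1` meaning node j is a neighbor of node i under that relation, c_i,r is taken to be the number of such neighbors, and σ is ReLU. Libraries such as PyTorch Geometric or DGL provide optimized equivalents.

```python
import numpy as np

def rgcn_layer(h, adj_by_relation, w_by_relation, w_self):
    """One R-GCN propagation step.

    h               : (n_nodes, d_in) node features h^(l)
    adj_by_relation : dict mapping relation name -> (n_nodes, n_nodes) adjacency
    w_by_relation   : dict mapping relation name -> (d_in, d_out) weights W_r
    w_self          : (d_in, d_out) self-connection weights W_0
    """
    out = h @ w_self                                  # W_0 h_i term
    for rel, adj in adj_by_relation.items():
        c = adj.sum(axis=1, keepdims=True).astype(float)  # c_{i,r} = |N_i^r|
        c[c == 0] = 1.0                               # no neighbors: no message
        out = out + (adj / c) @ h @ w_by_relation[rel]
    return np.maximum(out, 0.0)                       # sigma = ReLU

# Toy graph: node 0 interacts with nodes 1 and 2; node 1 "expresses" node 0.
h = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
adjs = {
    "interacts_with": np.array([[0, 1, 1], [0, 0, 0], [0, 0, 0]]),
    "expresses": np.array([[0, 0, 0], [1, 0, 0], [0, 0, 0]]),
}
weights = {"interacts_with": np.eye(2), "expresses": 2.0 * np.eye(2)}
h_next = rgcn_layer(h, adjs, weights, w_self=np.eye(2))
```

Because each relation has its own weight matrix, the number of parameters grows with the number of edge types; for graphs with many relation types, some form of weight sharing is usually needed to keep this manageable.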

Key Experimental Protocols

Protocol 1: Multi-omics Integration via Variational Graph Autoencoder (VGAE)

- Graph Construction: Create a heterogeneous graph. Nodes: Genes, Proteins, Metabolites. Edges: PPI (from STRING), metabolic pathways (from KEGG), gene co-expression.

- Feature Initialization: Node features: gene expression (RNA-seq), protein abundance (mass spec), metabolite levels. Normalize each modality separately (z-score).

- Model Architecture:

  - Encoder: A 2-layer GCN to produce node embeddings. A second GCN branch outputs parameters for a Gaussian distribution (mean μ, log-variance log σ²) per node.

  - Sampling: Latent representation z_i = μ_i + σ_i * ε, where ε ~ N(0, I).

  - Decoder: An inner product decoder: A_hat = sigmoid(z * z^T), to reconstruct the graph adjacency matrix.

- Training: Maximize the ELBO (equivalently, minimize its negative as the loss): L = E_[q(Z|X,A)][log p(A|Z)] - KL[q(Z|X,A) || p(Z)]. Use Adam optimizer (lr=0.01).

- Output: Use the mean latent vectors μ as the harmonized, lower-dimensional representations for downstream tasks like patient stratification.
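The encoder, sampling step, decoder, and objective of Protocol 1 can be sketched in NumPy as follows. This is an illustrative forward pass only. It assumes a shared first GCN layer with separate second-layer branches for μ and log σ², a pre-normalized dense adjacency matrix `a_norm`, and a Bernoulli likelihood for the adjacency reconstruction term. The function names are hypothetical, and gradient-based training with Adam would be handled by a framework such as PyTorch.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def vgae_forward(x, a_norm, w_shared, w_mu, w_logvar, rng):
    """Encode nodes, sample latent codes, and decode the adjacency matrix."""
    h = np.maximum(a_norm @ x @ w_shared, 0.0)   # shared first GCN layer
    mu = a_norm @ h @ w_mu                       # mean branch
    logvar = a_norm @ h @ w_logvar               # log-variance branch
    eps = rng.standard_normal(mu.shape)
    z = mu + np.exp(0.5 * logvar) * eps          # z = mu + sigma * eps
    a_hat = sigmoid(z @ z.T)                     # inner-product decoder
    return mu, logvar, z, a_hat

def negative_elbo(adj, a_hat, mu, logvar, eps=1e-9):
    """Negative ELBO: adjacency cross-entropy plus KL to a standard normal."""
    recon = -np.sum(adj * np.log(a_hat + eps)
                    + (1.0 - adj) * np.log(1.0 - a_hat + eps))
    kl = -0.5 * np.sum(1.0 + logvar - mu ** 2 - np.exp(logvar))
    return recon + kl
```

After training, the rows of `mu` are the per-node embeddings to pass to downstream clustering or stratification; the sampled `z` is only needed during optimization.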

Protocol 2: Adversarial Autoencoder for Batch Effect Removal

- Input: Matrix X of multi-omics samples (rows=samples, columns=features), with batch labels b.

- Architecture:

  - Encoder E: Maps X to latent code z.

  - Decoder D: Reconstructs X_hat from z.

  - Discriminator C: A classifier predicting batch label b from z.

- Training Loop:

  - Step 1 - Update C: Freeze E, train C on z to correctly classify b.

  - Step 2 - Update E and D: Freeze C, train E and D to minimize reconstruction loss while maximizing the classification error of C (using a gradient reversal layer).

- Validation: Assess via kNN classification on held-out biological labels (e.g., disease state) and monitor that batch label prediction is at chance level in the latent space.
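The validation step can be approximated with a small kNN check on the latent codes. The sketch below uses leave-one-out kNN as a stand-in for a proper held-out split, which is an assumption made for brevity; `knn_loo_accuracy` is a hypothetical helper, and Euclidean distance in latent space is assumed.

```python
import numpy as np

def knn_loo_accuracy(z, labels, k=5):
    """Leave-one-out kNN accuracy for predicting `labels` from latent codes."""
    dist = ((z[:, None, :] - z[None, :, :]) ** 2).sum(-1)
    np.fill_diagonal(dist, np.inf)               # a sample cannot vote for itself
    neighbors = np.argsort(dist, axis=1)[:, :k]
    classes = np.unique(labels)
    # votes[i, c] = number of neighbors of sample i that carry class c
    votes = (labels[neighbors][:, :, None] == classes[None, None, :]).sum(axis=1)
    predicted = classes[votes.argmax(axis=1)]
    return float((predicted == labels).mean())
```

A well-harmonized latent space would be expected to give high accuracy when `labels` is the biological label (e.g., disease state) and accuracy close to 1 / (number of batches) when `labels` is the batch label. Accuracy well above chance for batch suggests that λ, or the number of discriminator updates, should be increased.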

Visualizations

Diagram 1: VGAE for Multi-omics Graph Harmonization

Diagram 2: Adversarial Autoencoder Training Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in AI/Deep Learning for Multi-omics |
|---|---|
| High-Fidelity Graph Databases (e.g., Neo4j) | Stores and queries complex, heterogeneous biological relationships (PPI, pathways) for GNN graph construction. |
| Sparse Matrix Libraries (e.g., SciPy sparse) | Enables efficient memory handling of large, sparse omics data matrices and adjacency matrices during model training. |
| Automatic Differentiation Frameworks (PyTorch/TensorFlow) | Provides the computational engine for building and training complex models like VAEs and GNNs with backpropagation. |
| Graph Neural Network Libraries (PyTorch Geometric, DGL) | Offers pre-built, optimized layers for GCN, GAT, and R-GCN, drastically reducing implementation time. |
| Single-Cell Analysis Suites (Scanpy, Seurat) | Provides standard pipelines for pre-processing and normalizing scRNA-seq data before feeding it into autoencoders. |
| Pathway Databases (STRING, KEGG, Reactome) | Sources of ground-truth biological interactions used to build prior knowledge graphs for GNN models. |
| Adversarial Training Modules (e.g., Gradient Reversal Layer) | A key software component for implementing adversarial de-confounding in autoencoders for batch correction. |
| GPU Computing Resources (NVIDIA CUDA) | Essential hardware acceleration for training deep neural networks on large-scale multi-omics datasets in a reasonable time. |

Table 1: Comparison of Model Performance on Multi-omics Integration Tasks

| Model Type | Primary Task | Dataset (Example) | Key Metric | Reported Performance | Key Challenge Addressed |
|---|---|---|---|---|---|
| Vanilla VAE | Dimensionality Reduction | TCGA Pan-Cancer | Cluster Purity (NMI) | ~0.55 | Learns compressed but entangled representations. |
| β-VAE (β=0.1) | Disentangled Representation | Single-cell Multiome (ATAC+RNA) | Disentanglement Score | ~0.85 | Separates biological factors from technical noise. |
| Graph Convolutional Network (GCN) | Node Classification (e.g., Gene Function) | PPI Network (STRING) | Classification Accuracy (F1) | ~0.78 | Integrates network structure with node features. |
| Variational Graph Autoencoder (VGAE) | Graph Reconstruction & Latent Embedding | Metabolic Pathway Graph | ROC-AUC (Link Prediction) | ~0.92 | Harmonizes node features and graph structure jointly. |
| Adversarial Autoencoder (AAE) | Batch Effect Removal | Multi-study Bulk RNA-seq | Batch Mixing (kBET) / Biological AUC | 0.95 (AUC) | Removes study-specific artifacts while preserving disease signal. |
| Heterogeneous GNN (HetGNN) | Multi-omics Knowledge Graph Reasoning | Drug-Gene-Disease Graph | Hits@10 (Drug Repurposing) | ~0.31 | Handles diverse node and edge types in biological knowledge graphs. |

Technical Support Center

Troubleshooting Guide & FAQs

Q1: After batch effect correction, my differentially expressed gene (DEG) list is significantly smaller than before harmonization. Is this a software error or an expected outcome?

A: This is usually an expected outcome. Batch effects can create false-positive signals, and harmonization removes non-biological variance, often yielding a shorter but more biologically relevant DEG list. Verify by checking that positive control genes (e.g., housekeeping genes) show stable expression post-harmonization and that pathways known to be relevant to your condition (e.g., cancer-associated pathways in a tumor study) remain enriched.

Q2: My multi-omics integration yields inconsistent biomarker signatures when I run the analysis on different compute clusters. How can I ensure reproducibility?

A: This typically stems from non-deterministic algorithms or differing package versions. 1) Containerize your workflow using Docker or Singularity with fixed version packages. 2) Set explicit random seeds in all functions (e.g., in R: set.seed(123); in Python: np.random.seed(123)). 3) Harmonize software environments across clusters by using the same container image.
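On the Python side, the seeding step can be wrapped in one helper that is called at the top of every script. This is a minimal sketch covering only the standard library and NumPy; `set_global_seeds` is a hypothetical name, and deep learning frameworks keep their own random state that must be seeded separately (for example, `torch.manual_seed` in PyTorch).

```python
import random

import numpy as np

def set_global_seeds(seed=123):
    """Seed Python's and NumPy's global RNGs and return a local generator."""
    random.seed(seed)            # Python standard library RNG
    np.random.seed(seed)         # NumPy legacy global RNG
    # A dedicated Generator avoids hidden dependence on global state.
    return np.random.default_rng(seed)

rng = set_global_seeds(123)
permuted_samples = rng.permutation(10)   # same order on every run and cluster
```

Passing the returned generator explicitly into functions that need randomness makes it easier to audit which steps of a pipeline are stochastic.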

Q3: When applying ComBat to my RNA-seq dataset, the corrected data for one batch shows an unexpected shift in distribution. What should I check?

A: This often indicates an outlier sample or severe batch-confounding with a biological condition. 1) Inspect the batch design matrix: Use Principal Component Analysis (PCA) colored by batch and biological group to check for severe confounding. 2) Validate sample metadata: Ensure no sample mislabeling. 3) Consider using an alternative method: For confounded designs, use methods like Harmony, limma removeBatchEffect (with appropriate model), or sva with supervised surrogate variable estimation.
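Before looking at PCA plots, confounding between batch and biological group can be checked numerically from the sample metadata alone. The sketch below builds a batch-by-condition contingency table and summarizes it with Cramér's V, a statistic chosen here for illustration that is not named in the answer above. The function names are hypothetical, and at least two batches and two conditions are assumed.

```python
import numpy as np

def batch_condition_table(batch, condition):
    """Count samples for every (batch, condition) combination."""
    batches, b_idx = np.unique(batch, return_inverse=True)
    conditions, c_idx = np.unique(condition, return_inverse=True)
    table = np.zeros((len(batches), len(conditions)), dtype=int)
    np.add.at(table, (b_idx, c_idx), 1)
    return batches, conditions, table

def cramers_v(table):
    """Cramér's V: 0 = balanced design, 1 = batch fully determines condition."""
    n = table.sum()
    expected = table.sum(1, keepdims=True) * table.sum(0, keepdims=True) / n
    chi2 = ((table - expected) ** 2 / expected).sum()
    return float(np.sqrt(chi2 / (n * (min(table.shape) - 1))))

batch = np.array(["A", "A", "B", "B"])
condition = np.array(["tumor", "tumor", "normal", "normal"])
_, _, table = batch_condition_table(batch, condition)
confounding = cramers_v(table)   # fully confounded toy design
```

Empty cells in the table, or a value of V close to 1, indicate that batch and condition cannot be separated statistically, in which case no correction method can be expected to recover the biological effect reliably.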

Q4: Post-harmonization, my survival analysis biomarker loses statistical significance. Does this mean harmonization removed critical signal?

A: Not necessarily; the initial signal may have been partly driven by batch. First, benchmark against a gold standard: process a validated public dataset (e.g., from TCGA) with the same pipeline and check whether known survival biomarkers remain significant. Second, run a simulation: a known spiked-in signal should be retained post-harmonization. If it is lost, review the harmonization parameters for over-correction.

Q5: How do I choose between singular value decomposition (SVD)-based and mean/median-centric harmonization methods for my proteomic and metabolomic data?

A: The choice depends on data sparsity and batch structure.

Table 1: Method Selection Guide for Omics Data

| Data Type | Recommended Method | When to Use | Key Parameter to Tune |
|---|---|---|---|
| Dense Proteomics (DIA/MS) | ComBat, limma | Clear batch design, >70% data completeness | mod (model matrix) for biological covariates |
| Sparse Proteomics (DDA/MS) | RUV (Remove Unwanted Variation), Harmony | High missingness, batch confounded with condition | k (number of unwanted factors) |
| Metabolomics (Targeted) | Mean/Median Scaling, PQN | Known controls, intensity drift across batches | Quality Control (QC) sample correlation threshold |
| Metabolomics (Untargeted) | SVD-based (e.g., svacor), Drift Correction | Large technical variance, unknown compounds | Number of significant components in QC-PCA |
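As an illustration of the PQN (probabilistic quotient normalization) entry in the table, the core calculation fits in a few lines. This sketch assumes strictly positive intensities in a samples-by-features matrix and, when no reference is supplied, uses the feature-wise median across all samples as the reference profile; in practice the reference is often the median of the pooled QC injections. The optional total-intensity pre-scaling step is omitted, and `pqn_normalize` is a hypothetical name.

```python
import numpy as np

def pqn_normalize(x, reference=None):
    """Probabilistic quotient normalization.

    x         : (n_samples, n_features) positive intensities
    reference : optional (n_features,) reference profile, e.g. the QC median
    """
    if reference is None:
        reference = np.median(x, axis=0)
    quotients = x / reference                         # per-feature fold change
    factors = np.median(quotients, axis=1, keepdims=True)  # dilution per sample
    return x / factors, factors.ravel()

# Three injections of the same sample at dilution factors 1, 2 and 0.5.
base = np.array([10.0, 20.0, 30.0, 40.0])
intensities = np.vstack([base, 2.0 * base, 0.5 * base])
normalized, dilution = pqn_normalize(intensities)
```

Because the dilution factor is the median quotient, a minority of truly changing metabolites does not distort the scaling, which is the main advantage over total-sum normalization.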

Experimental Protocols

Protocol 1: Cross-Platform Microarray/RNA-seq Data Harmonization using Seurat's CCA/Integration Workflow

This protocol aligns gene expression data from microarray (Affymetrix HuGene) and RNA-seq (Illumina) platforms for integrated biomarker discovery.

- Data Preprocessing: Independently normalize microarray data with RMA and RNA-seq data with DESeq2's median of ratios. Select common genes (HUGO symbols).

- Feature Selection: Identify 2,000 highly variable genes (HVGs) separately for each dataset using the FindVariableFeatures function (selection.method = "vst").

- Anchor Identification: Input the two normalized datasets (as a list of Seurat objects) into the FindIntegrationAnchors function (reduction = "cca", k.anchor = 5). This finds mutual nearest neighbors (MNNs) across platforms.

- Data Integration: Pass the anchors object to the IntegrateData function (dims = 1:20) to create a harmonized expression matrix.

- Validation: Project integrated data via PCA. Platform-specific clusters should be mixed. Confirm retention of known biological variation (e.g., tumor vs. normal separation).
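To make the anchor idea concrete, the sketch below finds mutual nearest neighbors between two small matrices in NumPy. It is a conceptual illustration only and not Seurat's implementation: Seurat searches for neighbors in a shared CCA space and then filters and scores the anchors, whereas this hypothetical `mutual_nearest_neighbors` helper works directly on whatever shared feature space it is given.

```python
import numpy as np

def mutual_nearest_neighbors(a, b, k=5):
    """Return index pairs (i, j) where a[i] and b[j] are in each other's k-NN.

    a : (n_a, n_features) samples from platform A
    b : (n_b, n_features) samples from platform B, same feature order
    """
    dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)    # (n_a, n_b)
    nn_ab = np.argsort(dist, axis=1)[:, :k]      # for each a: nearest b's
    nn_ba = np.argsort(dist, axis=0)[:k, :].T    # for each b: nearest a's

    a_to_b = np.zeros(dist.shape, dtype=bool)
    a_to_b[np.arange(len(a))[:, None], nn_ab] = True
    b_to_a = np.zeros(dist.shape, dtype=bool)
    b_to_a[nn_ba, np.arange(len(b))[:, None]] = True

    return np.argwhere(a_to_b & b_to_a)          # rows of (index_a, index_b)

platform_a = np.array([[0.0, 0.0], [10.0, 10.0]])
platform_b = np.array([[0.1, 0.0], [10.0, 10.1], [4.0, 4.0]])
anchors = mutual_nearest_neighbors(platform_a, platform_b, k=1)
```

In the toy example the third sample of platform B has no counterpart in platform A and therefore forms no anchor, which is how the mutual requirement protects against forcing unmatched populations together.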

Protocol 2: Multi-omic (RNA-DNA) Biomarker Concordance Check Post-Harmonization

Validates that harmonization preserves biological relationships between genomic alteration and transcriptomic output.

- Input: Somatic copy number alteration (CNA) matrix (e.g., from GATK) and harmonized RNA-seq expression matrix for the same patient cohort.

- Gene-level CNA: Segment CNA data and map to gene loci using a tool like GISTIC2.0 or CNTools (Bioconductor). Create a gene-by-sample matrix of CNA status (-2, -1, 0, 1, 2).

- Correlation Analysis: For each gene, compute Spearman's correlation (ρ) between its CNA status and its harmonized expression value across all samples.

- Background Model: Generate a null distribution by permuting sample labels in the expression matrix 1000 times and recalculating ρ.

- Significance: Genes with observed |ρ| > 0.3 and permutation FDR < 0.01 are considered concordant. A successful harmonization should show high concordance for known oncogenes (e.g., ERBB2 amplification with overexpression).
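For a single gene, the correlation and permutation steps of this protocol might look like the following NumPy sketch. The helper names are hypothetical, ties in the discrete CNA status are handled with average ranks, and the output is a per-gene permutation p-value; converting p-values to an FDR across all genes (for example with a Benjamini-Hochberg adjustment) is a further step not shown here. A gene with constant CNA status across samples has no defined correlation and should be filtered out beforehand.

```python
import numpy as np

def average_ranks(v):
    """1-based ranks in which tied values share their average rank."""
    order = np.argsort(v, kind="mergesort")
    ranks = np.empty(len(v), dtype=float)
    ranks[order] = np.arange(1, len(v) + 1)
    for value in np.unique(v):
        tied = v == value
        ranks[tied] = ranks[tied].mean()
    return ranks

def spearman(x, y):
    """Spearman's rho as the Pearson correlation of average ranks."""
    return float(np.corrcoef(average_ranks(x), average_ranks(y))[0, 1])

def permutation_pvalue(cna, expr, n_perm=1000, seed=0):
    """Observed rho and a two-sided p-value from permuted sample labels."""
    rng = np.random.default_rng(seed)
    observed = spearman(cna, expr)
    null = np.array([spearman(cna, rng.permutation(expr))
                     for _ in range(n_perm)])
    # Add-one correction keeps the p-value strictly above zero.
    p = (1 + np.sum(np.abs(null) >= abs(observed))) / (n_perm + 1)
    return observed, float(p)
```

With 1000 permutations the smallest attainable p-value is about 0.001, so a stricter per-gene threshold would require more permutations or pooling the null distribution across genes.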


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Kits for Multi-omics Harmonization Studies

| Item | Function in Biomarker Pipeline | Example Product/Catalog |
|---|---|---|
| Reference RNA/DNA | Serves as inter-batch calibration standard for sequencing runs, enabling technical variance assessment. | Thermo Fisher External RNA Controls Consortium (ERCC) Spike-In Mix; Coriell Institute Genomic Reference Materials. |
| Universal Protein Standard | Normalizes signal intensity across MS runs for proteomic harmonization; a mix of known, quantifiable proteins. | Sigma-Aldrich UPS2 (48 human protein mix); Promega Protein Mass Standard. |
| Pooled Quality Control (QC) Sample | Homogenized sample from study matrix (e.g., tumor tissue lysate) run repeatedly across batches to monitor and correct drift. | Prepared in-house from a representative pool of study biospecimens. |
| Multiplexed Immunoassay Panels | Allows simultaneous measurement of multiple protein biomarkers from a single small-volume sample, reducing batch numbers. | Luminex xMAP Cancer Biomarker Panels; Olink Target 96/384 Oncology Panels. |
| Indexed Sequencing Adapters | Enables sample multiplexing within a single sequencing lane, drastically reducing lane-to-lane (batch) effects. | Illumina TruSeq DNA/RNA UD Indexes; IDT for Illumina Nextera UD Indexes. |
| Internal Standard Mix (Metabolomics) | Spike-in stable isotope-labeled compounds for retention time alignment and peak intensity normalization in LC/MS. | Cambridge Isotope Laboratories MSK-CAFC-AA (SIL-Amino Acid Mix); IROA Technologies Mass Spectrometry Metabolite Library. |

Navigating Pitfalls: Practical Solutions for Common Harmonization Failures

Technical Support Center

Troubleshooting Guide: Common Multi-omics Integration Artifacts