Decoding Gene Ontology: A Complete Guide to GO Annotation Methods, Sources, and Best Practices for Researchers


Abstract

This comprehensive guide demystifies the Gene Ontology (GO) annotation process for researchers and bioinformaticians. We explore the foundational concepts of the GO's three structured vocabularies—Molecular Function, Biological Process, and Cellular Component. The article details the step-by-step methodology for assigning GO terms, from manual curation by experts to automated computational pipelines like InterProScan and Ensembl. We address common challenges in annotation consistency, evidence code reliability, and data integration, providing troubleshooting strategies for accurate functional analysis. Finally, we compare key data sources (UniProt, Ensembl, Model Organism Databases) and validation tools (GO Enrichment Analysis, REVIGO), equipping scientists with the knowledge to critically evaluate and leverage GO annotations to drive discoveries in genomics, systems biology, and drug development.

What is Gene Ontology? Core Concepts and the GO Annotation Landscape

The Gene Ontology (GO) constitutes a foundational computational resource for modern biology, providing a structured, controlled vocabulary for describing gene and gene product attributes across all species. Developed and maintained by the Gene Ontology Consortium (GOC), it is indispensable for the functional interpretation of high-throughput genomic, transcriptomic, and proteomic data, directly supporting research in biomedicine and drug development. This whitepaper, framed within the broader context of GO annotation processes and data sources, details the purpose, scope, and application of GO as a critical tool for biological knowledge representation and analysis.

The Purpose and Structure of the Gene Ontology

The primary purpose of GO is to address the need for consistent descriptions of gene functions, enabling data integration and comparative analysis. The ontology consists of three independent, non-overlapping domains (also called aspects):

- Molecular Function (MF): The biochemical activity of a gene product (e.g., "catalytic activity," "transporter activity").

- Biological Process (BP): The larger biological objective accomplished by one or more molecular functions (e.g., "signal transduction," "cell proliferation").

- Cellular Component (CC): The location in a cell where a gene product is active (e.g., "nucleus," "ribosome").

GO terms are organized in a directed acyclic graph (DAG) structure, where each term can have multiple parent (more general) and child (more specific) terms, allowing for rich representation of biological relationships.

Diagram Title: The Three Domains of the Gene Ontology

Scope and Coverage in Modern Biological Research

The scope of GO is species-agnostic, covering genes from all kingdoms of life. Its application spans diverse research areas:

- Functional Genomics: Interpreting gene lists from RNA-seq, CRISPR screens, or GWAS.

- Systems Biology: Modeling pathways and networks.

- Comparative Genomics: Inferring function across species.

- Biomarker & Drug Target Discovery: Identifying enriched biological themes in disease-associated genes.

Table 1: Current Quantitative Overview of the Gene Ontology (Source: Gene Ontology Consortium, 2024)

| Metric | Count | Description |
|---|---|---|
| Total GO Terms | ~48,000 | Active terms in the ontology. |
| Annotations (Total) | ~8.6 million | Links between a gene product and a GO term. |
| Species Covered | ~6,600 | Organisms with manual or computational annotations. |
| Manual Annotations | ~1.2 million | Curated by trained biologists from primary literature. |
| Computational Annotations | ~7.4 million | Inferred using standardized methods (e.g., ISS, IEA). |

Annotations are statements linking a specific gene product to a GO term, supported by an evidence code. The annotation process integrates data from multiple sources.

Table 2: Primary GO Evidence Codes and Their Meaning

| Evidence Code | Type | Description & Data Source |
|---|---|---|
| EXP, IDA, IPI, IMP, IGI, IEP | Experimental | Manually curated from primary literature (e.g., Nature, Science). |
| ISS, ISO, ISA, ISM, IGC, RCA | Computational/Sequence Analysis | Inferred from sequence/structural similarity or phylogenetic analysis. |
| IEA | Electronic | Automated assignment from external resources (e.g., InterPro, UniProtKB). |
| TAS, NAS | Author/Curator | Traceable Author Statement or Non-traceable Author Statement. |
| IC, ND | Curatorial | Inferred by Curator or No biological Data available. |

Experimental Protocol for Manual Curation (EXP/IDA Evidence)

Manual annotation remains the gold standard. A typical workflow for a curator is:

- Literature Selection: Identify peer-reviewed papers describing functional experiments for a specific gene/protein.

- Data Extraction: Read the paper to identify key experimental findings (e.g., "Knockdown of Gene X reduced cell proliferation in assay Y").

- Term Mapping: Map the described biological activity to the most specific applicable GO term(s).

- Evidence Tagging: Assign the correct experimental evidence code (e.g., IDA for a direct assay).

- Database Entry: Submit the annotation (Gene ID, GO Term, Evidence Code, Reference PMID) to the GO database (e.g., UniProt-GOA, PomBase).

Diagram Title: Workflow for Manual GO Annotation Curation

The Scientist's Toolkit: Key Research Reagent Solutions for GO-Based Research

Table 3: Essential Tools and Resources for Functional Analysis Using GO

| Resource/Reagent | Function & Application | Key Provider/Example |
|---|---|---|
| GO Database (AmiGO, QuickGO) | Browsers to query and download the ontology and annotations. | Gene Ontology Consortium, EBI |
| Functional Enrichment Software | Statistical tools to identify over-represented GO terms in gene lists. | g:Profiler, DAVID, clusterProfiler (R/Bioconductor) |
| Curation Platforms (Noctua, PAINT) | Web-based tools used by consortium members for manual annotation. | Gene Ontology Consortium |
| High-Quality Antibodies | Validate protein localization (CC) and interactions (BP) via IF/Co-IP. | CST, Abcam, Invitrogen |
| CRISPR Knockout/Knock-in Libraries | Perform genome-wide screens; resulting gene lists are analyzed for GO enrichment. | Horizon Discovery, Synthego |
| qRT-PCR Assays & RNA-seq Kits | Measure gene expression changes; input for differential expression & GO analysis. | Thermo Fisher, Illumina, Bio-Rad |
| Pathway Reporter Assays | Validate involvement in specific biological processes (e.g., apoptosis, signaling). | Promega, Qiagen |

Application in Drug Development: A Case Study

A common pipeline involves identifying disease-associated genes and using GO enrichment to pinpoint dysregulated biological processes as potential therapeutic targets.

Diagram Title: GO Analysis Pipeline in Target Discovery

Experimental Protocol for GO Enrichment Analysis (Using g:Profiler):

- Input Preparation: Generate a target gene list (e.g., 150 upregulated genes in disease tissue). Prepare a background list (e.g., all genes detected in the experiment, ~15,000).

- Tool Configuration: Access the g:Profiler web interface. Paste the target list. Select the correct organism. Set the background list. Choose "GO: Biological Process" as the data source. Set a significance threshold (e.g., Bonferroni-corrected p-value < 0.05).

- Execution & Analysis: Run the analysis. The tool performs hypergeometric testing to identify GO terms containing more genes from your target list than expected by chance.

- Output Interpretation: Review the resulting table of enriched GO terms, their p-values, and the list of intersecting genes. Terms like "positive regulation of cell migration" may implicate a pathway of therapeutic interest.

- Downstream Validation: Design functional experiments (e.g., using reagents from Table 3) to perturb genes from the enriched term and measure the resulting phenotypic impact relevant to the disease.
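The statistical core of the protocol above can be sketched in a few lines. This is a minimal illustration of a one-sided hypergeometric test with Bonferroni correction, not a reproduction of g:Profiler's internals: the function names, the toy gene names, and the `TERM_A`/`TERM_B` labels are all hypothetical, and the number of tests used for the correction is assumed to be the number of terms that overlap the target list.

```python
from math import comb


def hypergeom_tail(k, n, K, N):
    """P(X >= k): chance of seeing at least k annotated genes in a list of n
    genes drawn without replacement from N background genes, K of them annotated."""
    total = comb(N, n)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, min(n, K) + 1))
    return hits / total


def enrich(target, background, term_to_genes, alpha=0.05):
    """Return (term, raw_p, bonferroni_p) for terms passing the threshold."""
    background = set(background)
    target = set(target) & background           # ignore genes outside the background
    tested = []
    for term, genes in term_to_genes.items():
        annotated = set(genes) & background
        overlap = len(annotated & target)
        if overlap == 0:
            continue                             # nothing to test for this term
        p = hypergeom_tail(overlap, len(target), len(annotated), len(background))
        tested.append((term, p))
    m = len(tested)                              # assumed number of tests
    results = [(term, p, min(1.0, p * m)) for term, p in tested]
    return sorted((r for r in results if r[2] < alpha), key=lambda r: r[2])


# Toy data (hypothetical): 20 background genes, 5 of them in the target list.
toy_background = [f"g{i}" for i in range(20)]
toy_target = ["g0", "g1", "g2", "g3", "g4"]
toy_terms = {
    "TERM_A": ["g0", "g1", "g2", "g3"],                 # fully inside the target list
    "TERM_B": ["g4", "g10", "g11", "g12", "g13", "g14"],
}
significant = enrich(toy_target, toy_background, toy_terms)
```

With real data the background should be the set of genes actually detected in the experiment, as the Input Preparation step describes; using the whole genome instead tends to inflate significance.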

The Gene Ontology provides an essential, unifying framework for representing biological knowledge in a computationally tractable form. Its rigorous annotation process, integrating both high-quality manual curation and large-scale computational methods, ensures a continuously expanding resource that reflects current scientific understanding. For researchers and drug developers, GO is not merely a glossary but a critical analytical engine, enabling the translation of complex genomic data into testable biological hypotheses and actionable insights for therapeutic intervention. Its scope and utility will continue to grow in lockstep with advancements in omics technologies and systems biology.

Within the framework of Gene Ontology (GO) annotation and data integration, the three structured, controlled vocabularies (ontologies)—Molecular Function (MF), Biological Process (BP), and Cellular Component (CC)—provide the essential foundation for representing gene product attributes across all species. This technical guide deconstructs these ontologies, detailing their formal structure, interrelationships, and practical application in computational and experimental biology, with a focus on the annotation pipeline and data sourcing critical for researchers and drug development professionals.

Formal Definitions and Structural Distinctions

The three ontologies are designed to be complementary yet distinct.

Molecular Function (MF): Describes the elemental activities of a gene product at the molecular level. These activities, such as catalysis or binding, are described without specifying where, when, or in what broader context they occur. Examples include "catalytic activity" and "transporter activity."

Biological Process (BP): Represents a series of events accomplished by one or more ordered assemblies of molecular functions. A process is a recognized biological program or objective. Examples include "signal transduction" and "cell proliferation."

Cellular Component (CC): Refers to the locations, at the levels of cellular anatomy and macromolecular complexes, where a gene product operates. Examples include "mitochondrial matrix" and "proteasome complex."

Table 1: Core Attributes of the Three GO Ontologies

| Ontology | Scope | Granularity | Example Terms | Annotation Cardinality (Typical) |
|---|---|---|---|---|
| Molecular Function (MF) | Elemental activity | Fine | GO:0005524 ATP binding | A protein can have multiple MFs. |
| Biological Process (BP) | Biological program | Coarse to fine | GO:0007165 signal transduction | A protein can participate in multiple BPs. |
| Cellular Component (CC) | Location & complex | Spatial | GO:0005739 mitochondrion | A protein can localize to multiple CCs. |

Ontology Relationships and Annotation Propagation

The ontologies are structured as directed acyclic graphs (DAGs), where terms are nodes connected by defined relationships. The primary relationships are "is_a" and "part_of."

- "is_a": Denotes a subclass relationship. If term A is_a term B, then every instance of A is also an instance of B (e.g., "nuclear chromosome" is_a "chromosome").

- "part_of": Denotes a component relationship. If term A part_of term B, then every instance of A is a constituent of some instance of B, although B does not necessarily contain A (e.g., "nucleus" part_of "cell": every nucleus is part of a cell, but not every cell has a nucleus).

The true power of GO lies in the True Path Rule: annotations propagate upwards through these relationships. An annotation to a specific term implies annotation to all its parent (less specific) terms. This enables both specific querying and high-level functional analysis.
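Upward propagation amounts to collecting every ancestor of each directly annotated term. The sketch below assumes the graph is held as a plain dictionary from a term to its parents, with is_a and part_of edges treated alike; the four-term graph and the gene name are toy values for illustration, not an extract of the real ontology.

```python
def ancestors(term, parents):
    """All terms reachable by following parent links upward from `term`."""
    seen = set()
    stack = [term]
    while stack:
        current = stack.pop()
        for parent in parents.get(current, ()):
            if parent not in seen:               # a DAG can reach a term by several paths
                seen.add(parent)
                stack.append(parent)
    return seen


def propagate(direct_annotations, parents):
    """Map each gene to its direct terms plus every ancestor of those terms."""
    propagated = {}
    for gene, terms in direct_annotations.items():
        full = set(terms)
        for term in terms:
            full |= ancestors(term, parents)
        propagated[gene] = full
    return propagated


# Toy graph (hypothetical): child term -> list of parent terms.
toy_parents = {
    "nuclear chromosome": ["chromosome", "nucleus"],
    "nucleus": ["cell"],
}
toy_direct = {"geneX": ["nuclear chromosome"]}
toy_propagated = propagate(toy_direct, toy_parents)
```

Enrichment tools generally work on the propagated sets, which is why broad parent terms accumulate many genes and often appear alongside their more specific children in result tables.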

Diagram 1: GO Graph Structure & Annotation Propagation

GO annotations are created by multiple curation groups (e.g., UniProtKB, model organism databases). Each annotation links a gene product to a GO term with an evidence code denoting the supporting data; each code maps to a term in the Evidence and Conclusion Ontology (ECO).

Table 2: Primary GO Evidence Codes and Data Sources

| Evidence Code | Data Source Type | Experimental/Computational | Reliability Tier |
|---|---|---|---|
| EXP, IDA, IPI, IMP, IGI, IEP | Direct experimental assay results | Experimental | High |
| ISS, ISO, ISA, ISM, IGC, RCA | Sequence/structural similarity to annotated proteins | Computational | Curator-judged |
| IC | Inferred by curator from other annotations | Curatorial | Medium |
| IEA | Automated electronic annotation from algorithms | Computational | Lower (Requires filtering) |
| TAS, NAS | Traceable or non-traceable author statement in published literature | Literature-based | Medium-High |
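In practice, the grouping in the table above is often used to filter an annotation set before analysis, for example to drop IEA annotations when only curated evidence is wanted. The following is a minimal sketch of that idea; the group names, the dictionary-based annotation records, and the gene identifiers are assumptions made for illustration.

```python
# Evidence codes grouped as in the table above.
EVIDENCE_GROUPS = {
    "experimental": {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"},
    "computational": {"ISS", "ISO", "ISA", "ISM", "IGC", "RCA"},
    "curatorial": {"IC"},
    "electronic": {"IEA"},
    "author_statement": {"TAS", "NAS"},
}

# Reverse lookup: evidence code -> group name.
CODE_TO_GROUP = {
    code: group for group, codes in EVIDENCE_GROUPS.items() for code in codes
}


def filter_annotations(annotations, allowed_groups):
    """Keep annotations whose evidence code belongs to one of the allowed groups.

    Annotations with an unrecognized code are dropped rather than guessed at.
    """
    allowed = set(allowed_groups)
    return [a for a in annotations if CODE_TO_GROUP.get(a["evidence"]) in allowed]


# Toy records (hypothetical gene identifiers).
toy_annotations = [
    {"gene": "geneA", "go_id": "GO:0005524", "evidence": "IDA"},
    {"gene": "geneA", "go_id": "GO:0007165", "evidence": "IEA"},
    {"gene": "geneB", "go_id": "GO:0005739", "evidence": "ISS"},
]
curated_only = filter_annotations(toy_annotations, ["experimental", "computational"])
```

Whether to exclude electronic annotations is a judgment call: for poorly studied organisms they may be most of what is available, so removing them can leave too few annotated genes for a meaningful analysis.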

Protocol 1: Manual Curation via Literature Review (IDA/IMP Evidence)

- Literature Triaging: Curators use query systems (e.g., PubMed) with targeted keywords to identify papers for specific genes or pathways.

- Data Extraction: Relevant statements (e.g., "Protein X phosphorylates Y," "Knockout of gene A disrupts process B") are extracted.

- GO Term Mapping: The biological activity is mapped to the most specific applicable GO term(s) (MF, BP, CC).

- Evidence Assignment: An evidence code is assigned (e.g., In vitro kinase assay → IDA for "kinase activity"; Mutant phenotype → IMP for a BP).

- Annotation Record Creation: The gene ID, GO term, evidence code, and reference are formatted per GO Consortium standards and submitted to the annotation database.

Protocol 2: High-Throughput Automated Annotation (IEA Evidence)

- Input Data Preparation: Whole proteome sequences or domain architectures are prepared.

- Algorithmic Analysis: Tools like InterProScan identify protein domains, families, and motifs by scanning against member databases (Pfam, SMART, etc.).

- Rule-Based Mapping: Pre-established mapping files (e.g., InterPro2GO) associate specific domains/families with corresponding GO terms.

- Annotation Generation: For each protein, all matching domains generate corresponding GO term annotations.

- Quality Filtering: Annotations may be filtered based on parameters like InterPro entry quality or taxonomic scope to reduce noise.
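The rule-based mapping and annotation generation steps above can be sketched as a lookup from domain identifiers to GO terms. The parser assumes mapping lines of the form `InterPro:IPRxxxxxx name > GO:term name ; GO:xxxxxxx` with `!` marking comment lines, which is how the InterPro2GO file is laid out to the best of my knowledge; check the file header before relying on it. The sample lines, protein names, and function names are illustrative.

```python
def parse_interpro2go(lines):
    """Build {InterPro ID: set of GO IDs} from mapping lines (assumed layout)."""
    mapping = {}
    for line in lines:
        line = line.strip()
        if not line or line.startswith("!"):     # skip blanks and comment lines
            continue
        left, _, right = line.partition(" > ")
        if not right:
            continue                             # not a mapping line
        ipr_id = left.split()[0].replace("InterPro:", "")
        go_id = right.rsplit(";", 1)[-1].strip() # GO ID follows the last semicolon
        mapping.setdefault(ipr_id, set()).add(go_id)
    return mapping


def annotate(protein_domains, mapping):
    """Emit (protein, GO ID, 'IEA') for every GO term implied by a matched domain."""
    annotations = []
    for protein, domains in protein_domains.items():
        go_terms = set()
        for domain in domains:
            go_terms |= mapping.get(domain, set())
        for go_id in sorted(go_terms):           # sorted for reproducible output
            annotations.append((protein, go_id, "IEA"))
    return annotations


# Illustrative input: two mapping lines and two hypothetical proteins.
sample_lines = [
    "!comment line",
    "InterPro:IPR000719 Protein kinase domain > GO:protein kinase activity ; GO:0004672",
    "InterPro:IPR000719 Protein kinase domain > GO:ATP binding ; GO:0005524",
]
sample_mapping = parse_interpro2go(sample_lines)
sample_annotations = annotate(
    {"protA": ["IPR000719"], "protB": ["IPR999999"]}, sample_mapping
)
```

A protein whose domains have no mapping entry, like `protB` here, simply receives no annotation, which is one reason automated coverage varies between protein families.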

Experimental Methodologies for GO Annotation Support

Key wet-lab experiments generate data that underpins specific evidence codes.

Protocol 3: Co-Immunoprecipitation with Mass Spectrometry (Co-IP/MS) for CC & BP (Evidence: IPI)

- Purpose: To identify protein-protein interactions and infer complex membership (CC) and involvement in processes (BP).

- Procedure:

  - Cell Lysis: Lyse cells expressing a tagged version of the bait protein under native conditions.

  - Immunoprecipitation: Incubate lysate with antibody beads specific to the tag to capture the bait protein and its interactors.

  - Washing: Stringently wash beads to remove non-specifically bound proteins.

  - Elution: Elute the protein complex using tag peptide competition or low-pH buffer.

  - Mass Spectrometry: Subject eluate to tryptic digestion, LC-MS/MS analysis, and database searching to identify co-precipitated proteins.

  - Data Analysis: Use quantification and interaction-scoring tools (e.g., MaxQuant, SAINT) to distinguish specific interactors from background contaminants. Specific interactors support annotation to relevant protein complexes (CC) and associated BPs.

Protocol 4: Gene Knockout and Phenotypic Analysis for BP (Evidence: IMP)

- Purpose: To determine the biological process a gene is involved in by observing the consequences of its loss of function.

- Procedure:

  - Mutant Generation: Create a homozygous knockout organism (e.g., mouse, yeast, plant) using CRISPR/Cas9 or homologous recombination.

  - Phenotypic Screening: Subject mutant and wild-type controls to a battery of phenotypic assays (growth rate, morphology, response to stress, metabolic profiling, histology).

  - Phenotype Mapping: Map the observed specific defect(s) to established biological processes using phenotype ontologies (e.g., Mammalian Phenotype Ontology).

  - GO Annotation: Annotate the gene to the GO BP term(s) that best explain the observed mutant phenotype (e.g., embryonic lethality → GO:0009790 embryo development; UV sensitivity → GO:0006974 cellular response to DNA damage stimulus).

Diagram 2: Knockout to GO BP Annotation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Key GO-Relevant Experiments

| Item | Function in GO Context | Example Product/Catalog |
|---|---|---|
| Tagged Expression Vector | Enables expression of bait protein with an affinity tag (e.g., FLAG, HA) for Co-IP/MS experiments to identify interactions (CC). | pCMV-FLAG Vector (Sigma, E7908) |
| Anti-Tag Magnetic Beads | For immunoprecipitation of tagged protein complexes with high purity and low background. | Anti-FLAG M2 Magnetic Beads (Millipore, M8823) |
| CRISPR/Cas9 System | For generating knockout cell lines or organisms to study loss-of-function phenotypes (BP annotation). | LentiCRISPR v2 (Addgene, #52961) |
| Phenotypic Screening Kit | Pre-configured assays for specific processes (e.g., apoptosis, cell cycle) to quantify mutant phenotypes. | ApoTox-Glo Triplex Assay (Promega, G6320) |
| LC-MS/MS System | For identifying proteins in complexes (Co-IP) or profiling changes in protein expression/PTMs. | Orbitrap Eclipse Tribrid Mass Spectrometer (Thermo Fisher) |
| GO Enrichment Analysis Software | To statistically determine over-represented GO terms in a gene list derived from experiments. | PANTHER, g:Profiler, clusterProfiler |

What is a GO Annotation? Defining the Link Between Gene Product and GO Term

A Gene Ontology (GO) annotation is an assertion of a specific relationship between a gene product (or gene) and a GO term. It is the foundational unit of knowledge that populates the GO resource, creating a computable representation of biological system functions. Within the broader thesis on the GO annotation process and data sources, this guide details the technical definition, creation, provenance, and application of these annotations, serving as a critical reference for researchers and drug development professionals.

The Anatomy of a GO Annotation

A GO annotation is not a simple tag but a structured statement with multiple required components, each providing essential context and provenance.

Core Data Elements

Each annotation connects the following entities:

| Element | Description | Example |
|---|---|---|
| Gene Product Identifier | A unique database ID for the gene/gene product (e.g., from UniProtKB, Ensembl). | P12345 (UniProtKB) |
| GO Term ID | The identifier for the specific GO concept. | GO:0005634 (nucleus) |
| Evidence Code | A code indicating the type of evidence supporting the assertion. | IDA (Inferred from Direct Assay) |
| Reference | The source that supports the annotation (e.g., PubMed ID, DOI). | PMID:26767044 |
| Assigned By | The database or project that made the annotation. | UniProtKB, SGD |
| Annotation Extension | Optional additional contextual information (e.g., a process occurs in a specific cell type). | occurs_in(CL:0000540) (neuron) |
| Date | The date the annotation was made or last reviewed. | 2024-02-15 |
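These elements are commonly exchanged as tab-separated lines in the GO Annotation File (GAF) format. The sketch below pulls the elements from the table out of one such line, assuming the 17-column GAF 2.2 layout; the `Annotation` class, the function name, and the sample record (including its gene symbol and taxon) are made up for illustration and do not describe a real annotation.

```python
from dataclasses import dataclass

GAF_COLUMNS = 17  # column count assumed from the GAF 2.2 layout


@dataclass
class Annotation:
    db: str
    object_id: str
    go_id: str
    reference: str
    evidence: str
    date: str
    assigned_by: str
    extension: str


def parse_gaf_line(line):
    """Parse one GAF line; return None for header/comment lines starting with '!'."""
    if line.startswith("!"):
        return None
    cols = line.rstrip("\n").split("\t")
    if len(cols) < 15:
        raise ValueError(f"expected at least 15 tab-separated columns, got {len(cols)}")
    cols += [""] * (GAF_COLUMNS - len(cols))     # pad optional trailing columns
    return Annotation(
        db=cols[0],            # column 1: source database
        object_id=cols[1],     # column 2: gene product identifier
        go_id=cols[4],         # column 5: GO term ID
        reference=cols[5],     # column 6: supporting reference
        evidence=cols[6],      # column 7: evidence code
        date=cols[13],         # column 14: date as YYYYMMDD
        assigned_by=cols[14],  # column 15: annotating group
        extension=cols[15],    # column 16: optional annotation extension
    )


# Illustrative line assembled from the example values in the table above.
SAMPLE_LINE = "\t".join([
    "UniProtKB", "P12345", "GENE1", "located_in", "GO:0005634", "PMID:26767044",
    "IDA", "", "C", "", "", "protein", "taxon:9606", "20240215", "UniProtKB", "", "",
])
sample_annotation = parse_gaf_line(SAMPLE_LINE)
```

Keeping the evidence code, reference, and assigning group alongside the term is what lets downstream users filter or trace an annotation instead of treating it as a bare tag.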

Evidence Ontology: The Foundation of Credibility

The evidence code is critical for assessing an annotation's reliability. Codes fall into categories that range from direct experimental evidence to fully automated computational inference.

Quantitative Distribution of Evidence Types (GO Consortium, 2023 Data Release):

| Evidence Type | Percentage of Annotations | Example Codes |
|---|---|---|
| Experimental | ~22% | IDA (Direct Assay), IMP (Mutant Phenotype), IPI (Physical Interaction), IGI (Genetic Interaction), IEP (Expression Pattern) |
| Phylogenetic | ~14% | IBA (Biological aspect of Ancestor), IBD (Biological aspect of Descendant) |
| Computational | ~54% | ISS (Sequence/Structural Similarity), IEA (Electronic Annotation) |
| Author Statement | ~7% | TAS (Traceable Author Statement), NAS (Non-traceable Author Statement) |
| Curatorial | ~3% | IC (Inferred by Curator), ND (No biological Data available) |

The GO Annotation Process: Detailed Workflow

Creation of high-quality annotations follows a rigorous pipeline. The following diagram illustrates the standard workflow for manual curation.

Title: Standard Manual Curation Workflow for GO Annotation

Experimental Protocols for Key Evidence Types

Protocol 1: Generating IDA (Inferred from Direct Assay) Evidence for Cellular Component.

- Objective: To localize a protein of interest (POI) to a specific cellular compartment using fluorescence microscopy.

- Reagents & Materials:

  - Expression Construct: Plasmid encoding POI fused to a fluorescent tag (e.g., GFP).

  - Cell Line: Appropriate model system (e.g., HEK293, HeLa).

  - Transfection Reagent: (e.g., Lipofectamine 3000).

  - Fixative: 4% Paraformaldehyde (PFA).

  - Mounting Medium with DAPI: For nuclear counterstain.

  - Confocal Fluorescence Microscope: Equipped with appropriate lasers and filters.

- Methodology:

  - Transfect cells with the POI-GFP construct according to manufacturer protocol.

  - At 24-48 hours post-transfection, fix cells with 4% PFA for 15 minutes.

  - Permeabilize cells with 0.1% Triton X-100 (optional, for improved staining).

  - Mount coverslips using medium containing DAPI.

  - Image using confocal microscopy. Acquire Z-stacks to confirm co-localization.

  - Analyze images. Clear, specific co-localization of GFP signal with a known organelle marker (or morphological feature like the nucleus) supports an IDA annotation to the corresponding GO Cellular Component term.

Protocol 2: Generating IMP (Inferred from Mutant Phenotype) Evidence for Biological Process.

- Objective: To link a gene to a biological process via observation of a phenotypic change upon gene disruption.

- Reagents & Materials:

  - Mutant Model: Knockout/knockdown organism or cell line (e.g., CRISPR-Cas9 generated).

  - Wild-type Control: Isogenic control line.

  - Phenotypic Assay Reagents: Specific to the process (e.g., apoptosis assay kit, growth measurement reagents).

  - Statistical Analysis Software: (e.g., GraphPad Prism, R).

- Methodology:

  - Establish matched wild-type and mutant populations.

  - Subject both populations to an assay quantifying a specific biological process (e.g., cell proliferation assay, oxidative stress resistance assay).

  - Measure and quantify the assay output.

  - Perform rigorous statistical analysis (e.g., t-test, ANOVA) to confirm a significant difference in the mutant.

  - A statistically significant, specific defect in the process supports an IMP annotation to the relevant GO Biological Process term. The reference must describe both the mutation and the assayed phenotype.

The Scientist's Toolkit: Research Reagent Solutions

| Reagent/Material | Function in GO-Relevant Experiments | Example Vendor/Identifier |
|---|---|---|
| CRISPR-Cas9 Knockout Kit | Creates precise gene disruptions for IMP phenotype analysis. | ToolGen TrueGuide sgRNA + Cas9 protein |
| Tagged Protein Expression Vector | Creates fusion proteins for localization (IDA) or interaction (IPI) studies. | Addgene: pEGFP-N1 backbone |
| Co-Immunoprecipitation (Co-IP) Kit | Identifies protein-protein interactions for IPI evidence. | Thermo Fisher Scientific Pierce Co-IP Kit |
| RNA-Seq Library Prep Kit | Profiles gene expression changes for IEP evidence. | Illumina TruSeq Stranded mRNA Kit |
| Specific Chemical Inhibitor/Agonist | Modulates protein activity to observe process disruption (IMP). | e.g., Wortmannin (PI3K inhibitor) from Sigma-Aldrich |
| Validated Antibody for ChIP | Maps protein-DNA interactions for IDA/IPI evidence. | Cell Signaling Technology, Catalog #9991 |
| Phenotypic Microarray Plate | High-throughput profiling of mutant phenotypes for IMP. | Biolog Phenotype MicroArrays |

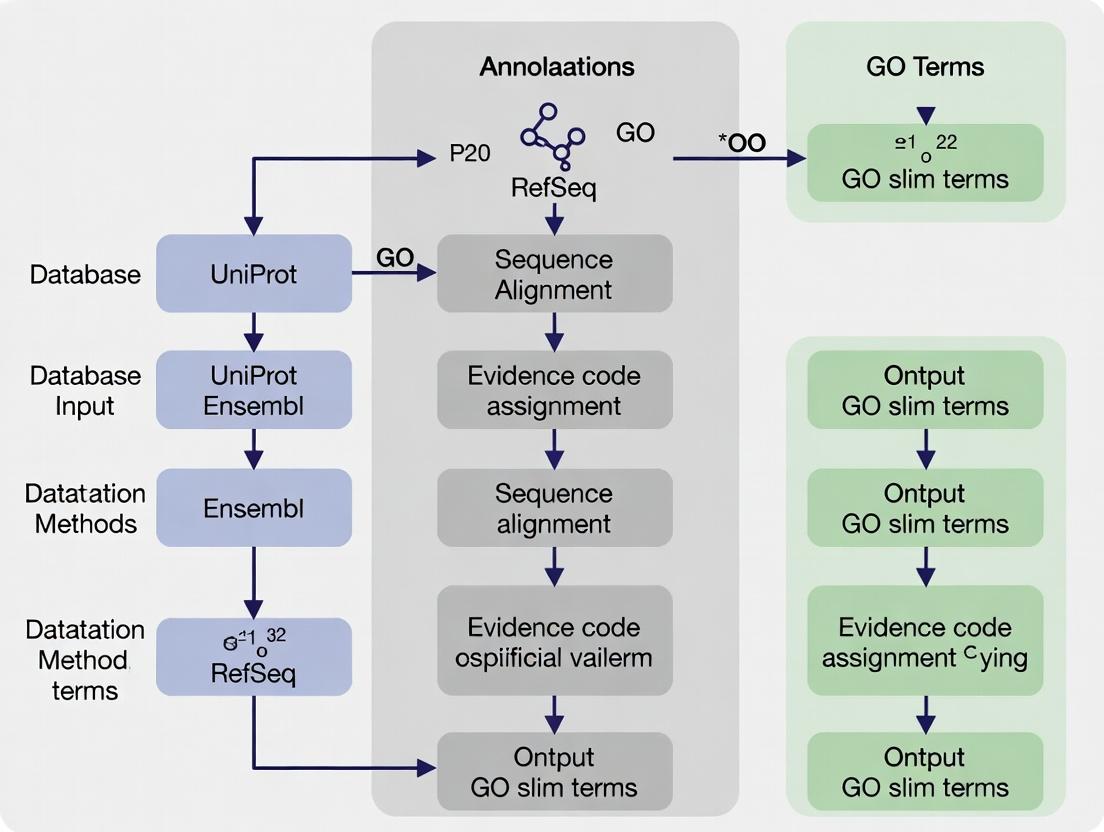

Annotations originate from diverse channels. The logical relationship between sources, methods, and the final annotation dataset is shown below.

Title: Data Flow from Annotation Sources to Final GAF

Application in Drug Development: A Pathway Example

GO annotations enable pathway and network analysis to identify novel drug targets. For instance, aggregating annotations can reveal a protein's role in a disease-relevant signaling cascade.

Title: GO-Annotated Signaling Pathway with Drug Target Points

A GO annotation is a precise, evidence-based statement that links gene products to functional concepts, forming the core data layer of the Gene Ontology. The rigor of its structure—encompassing evidence codes, provenance, and extensions—makes it an indispensable asset for computational biology, systems biology, and translational research, including target identification and validation in drug development. Understanding its creation and composition is fundamental to leveraging the power of functional genomics data.

Within the broader thesis on the Gene Ontology (GO) annotation process and data sources, understanding the dynamic interplay between its key stakeholders is paramount. The Gene Ontology Consortium (GOC) provides the foundational framework, curators from diverse model organism databases and research groups supply the expert-driven annotations, and the global user community of researchers and drug development professionals applies and critiques the data. This synergy drives the continuous evolution of GO, making it a critical, living resource for functional genomics.

The Gene Ontology Consortium: Governance and Infrastructure

The GOC is an international, collaborative initiative that develops, maintains, and disseminates the Gene Ontology. Its primary role is ontological engineering and ensuring computational integrity.

Core Functions:

- Ontology Development: Establishing and refining the structured vocabularies (biological process, molecular function, cellular component) and their logical relationships (is_a, part_of, regulates).

- Technical Infrastructure: Maintaining the GO website (geneontology.org), the AmiGO browser, and the GO database.

- Standard Setting: Defining annotation standards and evidence codes (e.g., Inferred from Experiment (EXP), Inferred from Electronic Annotation (IEA)).

- Coordination: Facilitating collaboration among member groups to prevent duplication and ensure consistency.

Quantitative Snapshot of GOC Resources (2024):

Table 1: Current Scale of the Gene Ontology (Live Data Summary)

| Metric | Count | Notes |
|---|---|---|
| GO Terms (Total) | ~45,000 | Active terms in the ontology graph. |
| Species with GO Data | > 5,000 | Spanning all domains of life. |
| Total GO Annotations | ~800 million | Includes all evidence types. |
| Manual Annotations (Curated) | ~1.4 million | High-quality, expert-reviewed annotations. |
| Participating Databases | ~30 | Includes UniProtKB, SGD, FlyBase, WormBase, etc. |

Curators: The Annotation Engineers

Curators, often based in model organism databases (MODs) or large-scale annotation projects, are the linchpin between biological knowledge and its computational representation. They execute the GO annotation process.

Detailed Annotation Protocol:

- Step 1: Literature Curation: Curators identify relevant publications through targeted PubMed searches (e.g., for a specific gene or pathway).

- Step 2: Data Extraction: From the experimental results in the paper, curators identify the gene product studied, the molecular function, biological process, or cellular location demonstrated, and the experimental method used.

- Step 3: GO Term Assignment: Using the GO browser, curators select the most specific, accurate term(s) to describe the finding.

- Step 4: Evidence Tagging: Each annotation is tagged with an Evidence Code (see Table 2).

- Step 5: Annotation Record Creation: The 4-part annotation (Gene Product, GO Term, Evidence Code, Reference) is formatted in GPAD/GAF format and submitted to the GO database.

Table 2: Key GO Evidence Codes for Experimental Data

| Evidence Code | Full Name | Description | Typical Experimental Method |
|---|---|---|---|
| EXP | Inferred from Experiment | Direct evidence from a reported experiment. | Co-immunoprecipitation, Enzyme assay, GFP localization. |
| IDA | Inferred from Direct Assay | A sub-category of EXP for direct physical or functional assays. | In vitro binding assay, Kinetic analysis in purified system. |
| IPI | Inferred from Physical Interaction | Evidence from interaction with another molecule. | Yeast two-hybrid, Affinity chromatography/MS. |
| IMP | Inferred from Mutant Phenotype | Evidence from a mutant, knockdown, or overexpression phenotype. | CRISPR knockout, RNAi, Transgenic rescue experiment. |
| IEP | Inferred from Expression Pattern | Evidence from changes in gene expression levels. | RNA-seq, qRT-PCR, Microarray under specific conditions. |

The Scientist's Toolkit: Essential Reagents for GO-Relevant Experiments

Table 3: Key Research Reagent Solutions for Generating GO-Annotatable Data

| Item | Function in Experiment | Example/Supplier |
|---|---|---|
| CRISPR-Cas9 Kit | Targeted gene knockout for IMP evidence. | Synthego, IDT Alt-R, ToolGen. |
| Tag-Specific Antibodies | Immunoprecipitation (IPI) or immunofluorescence (IDA, EXP). | Anti-FLAG (Sigma), Anti-GFP (Roche), Anti-HA (CST). |
| Protease Inhibitor Cocktail | Preserves protein complexes during co-IP (IPI). | Roche cOmplete, Thermo Fisher Pierce. |
| qRT-PCR Master Mix | Quantifies gene expression changes (IEP). | Bio-Rad iTaq, Applied Biosystems Power SYBR. |
| Fluorescent Protein Vectors | Subcellular localization studies (EXP for cellular component). | Addgene plasmids (EGFP, mCherry fusions). |
| Mass Spectrometry Grade Trypsin | Digests proteins for LC-MS/MS identification in interaction studies (IPI). | Promega Sequencing Grade, Thermo Fisher Trypsin Platinum. |

The User Community: Application and Feedback

Users, including academic researchers and drug development professionals, apply GO data to interpret omics studies, prioritize disease genes, and identify potential drug targets.

Primary Use Cases:

- Functional Enrichment Analysis: Interpreting lists of differentially expressed genes from RNA-seq by identifying overrepresented GO terms.

- Network and Pathway Analysis: Integrating GO annotations with protein-protein interaction networks to map functional modules.

- Target Validation: Assessing the functional landscape of a potential drug target across processes and pathways to understand therapeutic implications and side-effect potentials.

Feedback Loop: Users report ontological gaps, ambiguities, or annotation errors through GitHub issues or curator contact forms, directly influencing future curation cycles and ontology development.

Integrated Workflow and Stakeholder Interaction

The following diagram illustrates the cyclic workflow of GO development, annotation, and use, highlighting the roles of each stakeholder group.

Diagram 1: GO stakeholder ecosystem and data flow.

Pathway Annotation Example: Wnt Signaling

To illustrate the curator's role, consider annotating a gene involved in the canonical Wnt/β-catenin pathway. The following pathway diagram shows key steps where experimental evidence leads to specific GO annotations.

Diagram 2: Mapping experiments to GO terms via Wnt pathway.

Gene Ontology (GO) annotations are the critical linchpin connecting genomic data to biological understanding. Within the broader thesis of the GO annotation process and data sources, this guide details how these curated associations empower functional enrichment analysis and drive hypothesis generation in molecular biology and drug discovery. Annotations transform static gene lists into dynamic biological narratives by providing standardized descriptions of molecular functions (MF), biological processes (BP), and cellular components (CC).

GO annotations are derived from multiple channels, each with distinct methodologies and evidence codes. The following table summarizes the primary sources and their quantitative contributions as of recent data releases.

Table 1: Primary GO Annotation Data Sources and Current Metrics

| Data Source | Methodology | Evidence Codes | Annotations (Millions) | Species Covered | Key Characteristics |

|---|---|---|---|---|---|

| UniProtKB | Manual curation & computational | EXP, IDA, IPI, ISS, ISO, ISA, ISM, IGC, IBA, IBD, IKR, IRD, RCA, TAS, NAS, IC | ~1.2 | > 10,000 | High-quality manual annotations for key model organisms. |

| Ensembl | Automated pipelines (InterPro2GO, etc.) | IEA | ~150 | > 20,000 | Large-scale, computationally derived annotations. |

| Model Organism Databases (MGD, RGD, SGD, etc.) | Centralized manual curation | EXP, IDA, IPI, etc. | ~4.5 | 10-15 (deep curation) | Organism-specific, high-depth curation for model organisms. |

| GO-CAM (Causal Activity Models) | Pathway/mechanism-based curation | All, combined in models | ~0.05 (models) | Selected organisms | Represents causal, predictive biological network models. |

Source: Data compiled from Gene Ontology Consortium releases (2024), UniProt, and Ensembl.

Core Methodology: Functional Enrichment Analysis Workflow

Functional enrichment analysis identifies GO terms statistically over-represented in a gene set of interest (e.g., differentially expressed genes) compared to a background set. The protocol below details a standard computational workflow.

Experimental Protocol: Statistical Enrichment Analysis Using clusterProfiler

- Input Preparation: Prepare a target gene list (e.g., significant DEGs) and a background gene list (e.g., all genes assayed). Ensure gene identifiers are consistent (e.g., Ensembl IDs).

- Statistical Test Selection: Choose an appropriate test. The hypergeometric test (or Fisher's exact test) is most common for term-for-term analysis. For gene-set enrichment analysis (GSEA), a ranked list is required.

- Multiple Testing Correction: Apply a correction (e.g., Benjamini-Hochberg FDR) to the raw p-values; the resulting adjusted p-values control the rate of false discoveries.

- Tool Execution (R example):

- Interpretation: Analyze significantly enriched terms (FDR < 0.05). Consider term redundancy reduction using tools like simplify() or REVIGO.
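The statistical core of the protocol above (a hypergeometric over-representation test followed by Benjamini-Hochberg correction) can be sketched in plain Python. This is a minimal illustration rather than what clusterProfiler does internally; the function names and the `term2genes` mapping are assumptions made for the example.

```python
from math import comb

def hypergeom_sf(k, M, n, N):
    """P(X >= k): drawing N genes from M background genes, n of which carry the term."""
    total = comb(M, N)
    upper = min(n, N)
    return sum(comb(n, i) * comb(M - n, N - i) for i in range(k, upper + 1)) / total

def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

def enrich(target, background, term2genes):
    """Test each term with at least one target gene; return (term, overlap, p, q) rows."""
    background = set(background)
    target = set(target) & background
    rows = []
    for term, genes in term2genes.items():
        annotated = set(genes) & background
        overlap = len(annotated & target)
        if overlap == 0:
            continue  # assumption: terms with no overlap are not tested
        p = hypergeom_sf(overlap, len(background), len(annotated), len(target))
        rows.append((term, overlap, p))
    qvalues = benjamini_hochberg([r[2] for r in rows])
    return sorted(((t, k, p, q) for (t, k, p), q in zip(rows, qvalues)),
                  key=lambda r: r[2])
```

The choice of background matters as much as the test itself: restricting it to genes actually assayed, as the input-preparation step recommends, keeps the p-values from being inflated by genes that could never have appeared in the target list.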

Diagram 1: Functional Enrichment Analysis Core Workflow

Key Experimental Protocols for Validation

Following in silico enrichment, hypotheses require experimental validation. Key methodologies are listed below.

Table 2: Key Research Reagent Solutions for Experimental Validation

| Reagent/Material | Function in Validation | Example Vendor/Identifier |

|---|---|---|

| CRISPR-Cas9 Knockout Kit | Gene-specific knockout to perturb function linked to enriched GO term. | Synthego (sgRNA design/ synthesis) |

| Validated siRNA/shRNA Library | Transcript knockdown for functional screening of gene sets from enriched processes. | Horizon Discovery (siGENOME) |

| Pathway-Specific Reporter Assay (e.g., Luciferase) | Measures activity of a signaling pathway inferred from enriched terms (e.g., NF-κB). | Promega (pGL4 NF-κB RE) |

| Phospho-Specific Antibody Panel | Detects phosphorylation changes in signaling proteins from an enriched pathway. | Cell Signaling Technology |

| Organelle-Specific Dyes (e.g., MitoTracker) | Validates changes in cellular component localization (e.g., mitochondrial disruption). | Thermo Fisher Scientific |

| Metabolite Assay Kits | Quantifies metabolites to test hypotheses about enriched metabolic processes. | Abcam, Sigma-Aldrich |

Experimental Protocol: Validating a GO Biological Process via CRISPR Knockout and Phenotypic Assay

This protocol tests the hypothesis that genes enriched for "positive regulation of apoptotic process" (GO:0043065) are required for chemotherapy-induced cell death.

- Design and Delivery: Design sgRNAs targeting 3-5 top genes from the enriched list using a tool like CRISPick. Transfect target cells (e.g., HeLa) with ribonucleoprotein (RNP) complexes of Cas9 and sgRNA using a nucleofection system. Include a non-targeting control (NTC) sgRNA.

- Selection and Verification: RNP delivery needs no antibiotic selection; if a selectable vector was used instead, apply the matching selection (e.g., puromycin) 48h post-transfection. After 5-7 days, harvest genomic DNA from the pool. Verify editing efficiency (>70% recommended) via TIDE analysis or next-generation sequencing.

- Phenotypic Assay: Plate knockout and control cells in 96-well plates. At 70% confluence, treat with a titrated dose of chemotherapeutic agent (e.g., Doxorubicin, 0-1 µM). After 48h, measure cell viability using a resazurin-based assay (AlamarBlue). Read fluorescence (Ex560/Em590).

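- Data Analysis: Normalize fluorescence readings to untreated NTC cells. Plot dose-response curves and estimate IC50 values. Compare IC50 values between each gene knockout and the NTC across independent replicate experiments (e.g., with a t-test, paired when knockout and NTC are assayed side by side). A significant increase in IC50 (reduced drug sensitivity) in a knockout supports the gene's role in promoting the apoptotic response.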
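The normalization and IC50 estimation in the data-analysis step can be sketched as follows. This is a simplified illustration that interpolates on a log-dose scale between the two doses bracketing 50% viability; a four-parameter logistic fit is the more usual choice in practice. The function names are assumptions made for the example.

```python
import math

def normalize(readings, untreated_mean):
    """Express raw fluorescence as a fraction of the untreated control mean."""
    return [r / untreated_mean for r in readings]

def ic50_by_interpolation(doses, viability):
    """Estimate IC50 by log-linear interpolation.

    doses: ascending concentrations; the zero-dose point is skipped because
    it has no logarithm. viability: fractions of control, same order.
    Returns None when viability never crosses 0.5 in the tested range.
    """
    pairs = [(d, v) for d, v in zip(doses, viability) if d > 0]
    for (d0, v0), (d1, v1) in zip(pairs, pairs[1:]):
        if v0 >= 0.5 >= v1 and v0 != v1:
            frac = (v0 - 0.5) / (v0 - v1)
            log_ic50 = math.log10(d0) + frac * (math.log10(d1) - math.log10(d0))
            return 10 ** log_ic50
    return None
```

Running this per replicate for each knockout and for the NTC yields the paired IC50 values that the statistical comparison then operates on.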

From Enrichment to Hypothesis: A Pathway Case Study

Enrichment analysis of genes overexpressed in a cancer subtype may reveal "Wnt signaling pathway" (GO:0016055) and "cell migration" (GO:0016477). This leads to the testable hypothesis: "Dysregulated Wnt signaling drives increased migration in this cancer subtype." The following causal diagram, inspired by GO-CAM, models this hypothesis.

Diagram 2: Hypothesis Model: Wnt Pathway Driving Migration

GO annotations are not mere labels but foundational data that fuel functional enrichment analysis, converting high-throughput data into biological insight. Through rigorous statistical application and subsequent experimental validation, as detailed in this guide, these annotations enable researchers to generate and test precise mechanistic hypotheses, directly accelerating target discovery and mechanistic understanding in biomedicine.

How GO Annotations are Made: A Step-by-Step Guide to Manual and Automated Methods

Within the broader thesis on the Gene Ontology (GO) annotation process, this guide details the technical workflow for converting biological evidence from diverse data sources into standardized GO term assignments. This process is foundational for functional genomics, systems biology, and target validation in drug development.

GO annotations are derived from a variety of experimental and computational sources, each with associated evidence codes indicating the type of support.

Table 1: Primary Data Sources and Evidence Codes for GO Annotation

| Data Source Type | Specific Source | Typical Evidence Code(s) | Relative Contribution (Estimate) | Key Characteristics |

|---|---|---|---|---|

| Published Literature | Peer-reviewed research articles | EXP (Inferred from Experiment), IDA (Inferred from Direct Assay) | ~45% | High-curation burden, high specificity. |

| High-Throughput Experiments | Proteomics, Protein-protein interaction arrays, RNA-seq | HTP (Inferred from High Throughput Experiment), HDA (Inferred from High Throughput Direct Assay) | ~30% | Large-scale, requires rigorous filtering. |

| Computational Analyses | Sequence similarity, phylogenetic models | ISS (Inferred from Sequence or Structural Similarity), IBA (Inferred from Biological aspect of Ancestor) | ~20% | Scalable, requires manual review for precision. |

| Author Statements | Reviews, curated databases | TAS (Traceable Author Statement), IC (Inferred by Curator) | ~5% | Secondary, requires source verification. |

Data synthesized from current GO Consortium documentation and major model organism databases (2024).

Core Annotation Workflow Protocol

The following is a standardized protocol for manual annotation based on experimental literature.

Protocol: Manual Curation from Literature (EXP/IDA Evidence)

- Objective: To assign GO terms to a gene product based on findings in a primary research article.

Materials:

- Research article (PDF).

- Gene/Protein identifier for the studied entity.

- Ontology browser (e.g., AmiGO, QuickGO).

- Annotation tool (e.g., Protein2GO, Noctua curation platform).

- Reference management software.

Methodology:

- Article Triage: Identify articles with direct experimental evidence (e.g., knockout, assay, localization) for a gene's function, process, or location.

- Gene Identification: Map the gene/protein name in the paper to a standard identifier (e.g., UniProtKB ID, MGI ID).

- Evidence Extraction: Identify specific sentences/figures describing a conclusive experimental result.

- Example: "Knockdown of Gene X resulted in a significant arrest of the cell cycle at G1 phase (p<0.01)."

- GO Term Selection: Using an ontology browser, find the most specific GO term matching the described activity.

- For the example: GO:0044843 (cell cycle G1/S phase transition)

- Evidence Code Assignment: Assign the appropriate evidence code.

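- For the knockdown example: IMP (Inferred from Mutant Phenotype). For a direct functional assay: IDA. Use EXP only when no more specific experimental code applies.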

- Annotation Capture: In the curation tool, create the annotation quadruple: Gene ID | GO Term ID | Evidence Code | Reference (PMID).

- Quality Check: Verify the annotation is accurate, not over-interpreted, and uses the correct term from the correct ontology (BP, CC, MF).
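The annotation quadruple and the final quality check can be represented as a small record with a validation method. This is a sketch under stated assumptions: the class, its field names, and the placeholder identifiers are hypothetical and do not reflect how Protein2GO or Noctua store annotations.

```python
import re
from dataclasses import dataclass

# Experimental evidence codes accepted for literature-based curation in this sketch.
EXPERIMENTAL_CODES = {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"}

@dataclass(frozen=True)
class Annotation:
    gene_id: str    # e.g. a UniProtKB or MGI identifier
    go_id: str      # GO term identifier, "GO:" plus seven digits
    evidence: str   # GO evidence code
    reference: str  # supporting publication, "PMID:" plus digits

    def problems(self):
        """Return a list of format problems; an empty list means the record passes."""
        issues = []
        if not re.fullmatch(r"GO:\d{7}", self.go_id):
            issues.append("malformed GO ID: " + self.go_id)
        if self.evidence not in EXPERIMENTAL_CODES:
            issues.append("not an experimental evidence code: " + self.evidence)
        if not re.fullmatch(r"PMID:\d+", self.reference):
            issues.append("reference is not a PMID: " + self.reference)
        return issues

# Placeholder identifiers; the GO term is the one from the worked example above.
example = Annotation("UniProtKB:P00000", "GO:0044843", "IMP", "PMID:0000000")
```

Format checks like these catch clerical errors only; whether the term is the most specific one supported by the paper remains a curator judgment.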

Protocol: Computational Annotation Transfer (ISS/IBA Evidence)

- Objective: To assign GO terms based on sequence or phylogenetic similarity to a manually annotated ortholog.

Materials:

- Target protein sequence.

- Reference database (e.g., UniProtKB, orthology clusters from OrthoDB).

- Alignment tool (e.g., BLAST).

- Phylogenetic inference tool (e.g., PANTHER, PhylomeDB).

- Annotation propagation guidelines (GO Consortium standards).

Methodology:

- Sequence Alignment: Perform a BLASTp search of the target sequence against a database of annotated proteins.

- Orthology Assessment: Use a phylogenetic pipeline (e.g., PANTHER) to distinguish true orthologs from paralogs.

- Annotation Transfer: Transfer GO annotations from the source ortholog only if:

- Sequence identity is above the trusted threshold for the specific protein family.

- The orthology relationship is strongly supported (e.g., in a 1:1 orthology cluster).

- Evidence Code Assignment: Assign ISS (with identifier of the source ortholog) or IBA (for annotations within curated phylogenetic models like PANTHER).
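The transfer conditions and the evidence code assignment can be written as a single decision function. This is a minimal sketch: the function name, its parameters, and the `"1:1"` label are assumptions made for the example, and the family-specific identity threshold has to be supplied by the curator.

```python
def choose_transfer_code(identity, family_threshold, orthology, in_curated_tree=False):
    """Return "ISS", "IBA", or None when annotation transfer is not justified.

    identity: percent sequence identity between target and source ortholog.
    family_threshold: trusted identity cutoff for this protein family.
    orthology: relationship type; only "1:1" is treated as strongly supported.
    in_curated_tree: True when the inference comes from a curated phylogenetic model.
    """
    if orthology != "1:1" or identity <= family_threshold:
        return None
    return "IBA" if in_curated_tree else "ISS"
```

Returning None instead of a weaker code keeps unsupported transfers out of the annotation set entirely, which mirrors the "only if" wording of the protocol.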

Workflow Visualization

GO Annotation Workflow Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for GO-Annotated Experiments

| Item | Example Product/Resource | Primary Function in Generating Annotation Evidence |

|---|---|---|

| CRISPR-Cas9 Knockout Kit | Synthego gene knockout kits | Enables generation of loss-of-function mutants for in vivo functional assays (IMP evidence). |

| Antibody for Immunofluorescence | Cell Signaling Technology monoclonal antibodies | Detects protein subcellular localization (IDA evidence for Cellular Component). |

| Kinase Activity Assay Kit | Promega ADP-Glo Kinase Assay | Measures direct enzymatic activity of a protein (IDA evidence for Molecular Function). |

| Yeast Two-Hybrid System | Takara Matchmaker Gold System | Identifies direct protein-protein interactions (IPI evidence). |

| RNA-seq Library Prep Kit | Illumina Stranded mRNA Prep | Generates transcriptome data for inferring biological process involvement (HTP evidence). |

| Mass Spectrometry Standard | Thermo Scientific Pierce TMTpro 16plex | Enables quantitative proteomics for protein complex/process analysis (HDA evidence). |

| Curated Orthology Database | PANTHER Classification System | Provides phylogenetic trees for computational annotation transfer (IBA evidence). |

| Annotation Curation Platform | GO Consortium's Noctua/Protein2GO | The software interface for creating, managing, and submitting GO annotations. |

Within the complex landscape of functional genomics, the Gene Ontology (GO) provides the essential conceptual framework for characterizing gene products. The integrity of this resource hinges on the quality of its annotations. While computational methods scale rapidly, manual curation by domain experts, often organized within Model Organism Databases (MODs), remains the undisputed gold standard for accuracy, depth, and reliability.

The Role of Manual Curation in the GO Ecosystem

Manual curation is a rigorous, evidence-based process where expert biologists read published literature to extract precise functional data and map it to controlled GO terms and supporting evidence codes. This process is critical for generating the high-quality reference datasets that validate and train computational annotation pipelines.

Table 1: Comparison of GO Annotation Data Sources

| Aspect | Manual Curation by Experts/MODs | High-Throughput Computational Methods | Literature-Based Automated Extraction |

|---|---|---|---|

| Primary Evidence | Direct reading of full-text papers | Large-scale experimental data (e.g., proteomics, expression clusters) | Text mining of abstracts and full texts |

| Accuracy & Precision | Very High (Gold Standard) | Variable; requires manual validation | Moderate; prone to contextual misinterpretation |

| Annotation Depth | Deep (multi-term annotations, complex processes, isoforms) | Broad but often shallow (single term per gene) | Broad, limited by textual mention |

| Evidence Code Use | Precise (IDA, IMP, IPI, etc.) | Inferred from Electronic Annotation (IEA) or Inferred from Sequence or Structural Similarity (ISS) | Inferred from Electronic Annotation (IEA) |

| Throughput | Low (resource-intensive) | Very High | High |

| Exemplar Source | SGD (Yeast), FlyBase, WormBase, ZFIN, PomBase, TAIR | GOA, UniProt | Textpresso, Europe PMC |

Experimental Protocols Underpinning Manual Curation

The following methodologies represent common experiments whose results are captured during manual GO curation.

Protocol 1: Yeast Two-Hybrid (Y2H) Assay for Protein-Protein Interaction (GO:0005515)

- Objective: To detect direct physical interaction between two proteins in vivo.

- Materials:

- S. cerevisiae reporter strains (e.g., Y187, AH109).

- Bait Plasmid: pGBKT7 (DNA-BD fusion).

- Prey Plasmid: pGADT7 (AD fusion).

- Selection Media: SD/-Leu/-Trp (for co-transformation), SD/-Leu/-Trp/-His/-Ade + X-α-Gal (for interaction stringency).

- Positive & Negative Control Plasmids.

- Procedure:

- Clone genes of interest into bait (pGBKT7) and prey (pGADT7) vectors.

- Co-transform both plasmids into the yeast reporter strain.

- Plate transformations on SD/-Leu/-Trp medium to select for cells containing both plasmids. Incubate at 30°C for 3-5 days.

- Pick colonies and streak/re-streak on high-stringency medium (SD/-Leu/-Trp/-His/-Ade + X-α-Gal). True protein-protein interactions activate reporter genes (HIS3, ADE2, MEL1), enabling growth and producing blue colonies.

- Validate interactions with control experiments (swapping bait/prey, using non-interacting protein pairs).

Protocol 2: Gene Knockout & Phenotypic Analysis for Biological Process Annotation

- Objective: To determine the biological process a gene is involved in by observing the phenotypic consequence of its loss of function.

- Materials:

- Model organism (e.g., mouse, zebrafish, Drosophila, yeast).

- CRISPR/Cas9 components or homologous recombination vectors for gene targeting.

- Phenotyping platforms (microscopy, metabolic assays, behavioral assays).

- Procedure:

- Generate a null allele using targeted gene disruption (e.g., CRISPR/Cas9-induced frameshift, homologous recombination).

- Establish homozygous mutant lines.

- Conduct systematic phenotypic analysis comparing mutants to wild-type controls. Assays may include: viability, morphology, histology, physiological tests, and response to stressors.

- Map the observed mutant phenotype to specific GO biological processes (e.g., embryonic development, synaptic transmission, DNA repair) based on established phenotype-ontology cross-references.

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Validation & Curation |

|---|---|

| CRISPR/Cas9 System | Enables precise gene knockouts, knock-ins, and edits to establish gene function. |

| Tandem Affinity Purification (TAP) Tags | Allows purification of protein complexes under near-physiological conditions for interaction mapping. |

| β-Galactosidase (LacZ) Reporters | Visualizes gene expression patterns and regulatory element activity in model organisms. |

| GFP/YFP Fusion Proteins | Enables in vivo localization and dynamic tracking of proteins (GO Cellular Component). |

| Specific Chemical Inhibitors/Agonists | Tools to perturb specific pathways and infer gene function from rescue or enhancement experiments. |

| RNAi Libraries | Facilitates genome-wide or targeted loss-of-function screens for phenotype discovery. |

Visualizing the Manual Curation Workflow and Data Integration

Diagram 1: Manual Curation Workflow for GO Annotations

Diagram 2: GO Data Sources and Evidence Flow

Diagram 3: From Experiment to GO Annotation (Example: Kinase Pathway)

In conclusion, manual curation by domain experts at MODs is not merely a legacy practice but a critical, ongoing component of the GO ecosystem. It generates the foundational, high-fidelity data required for accurate systems biology, drug target validation, and the training of next-generation AI-based annotation tools. Its integration with computational methods creates a synergistic framework essential for modern biological and biomedical research.

This whitepaper explicates the computational methodologies underpinning the Gene Ontology (GO) annotation process, a cornerstone of modern functional genomics. Accurate GO annotation is essential for interpreting high-throughput biological data, informing hypothesis generation in basic research, and identifying novel therapeutic targets in drug development. While experimental evidence codes (e.g., IDA, IPI) provide the highest-confidence annotations, the vast majority of functional knowledge is propagated computationally. This document provides an in-depth technical guide to three pivotal computational evidence codes: Inferred from Sequence or Structural Similarity (ISS), Inferred from Biological aspect of Ancestor (IBA), and Inferred from Electronic Annotation (IEA). These methods form the scalable backbone of GO annotation, enabling the functional characterization of proteomes across the tree of life.

Methodological Foundations and Protocols

Homology-Based Annotation: Inferred from Sequence or Structural Similarity (ISS)

The ISS code is applied when a curator manually reviews and validates the results of a sequence or structural similarity search, asserting functional similarity between a characterized gene product and an uncharacterized one.

Experimental/Computational Protocol:

- Query Sequence Preparation: Obtain the protein sequence of the unannotated gene product (QuerySeq).

- Database Search: Execute a BLASTP search against a curated database of annotated protein sequences (e.g., UniProtKB/Swiss-Prot) using stringent parameters (E-value threshold ≤ 1e-30, query coverage ≥ 80%).

- Hit Analysis: Identify the top significant hit (HitSeq) with a known GO annotation based on direct experimental evidence (EXP, IDA, etc.).

- Manual Curation: The curator assesses:

- Sequence Identity: Typically requires >60% identity over the aligned region.

- Domain Architecture: Use tools like InterProScan to confirm conservation of functional domains.

- Absence of Conflicting Data: Ensure no experimental evidence contradicts the inferred function.

- Annotation Assertion: If criteria are met, the GO term from HitSeq is transferred to QuerySeq with the ISS evidence code.
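The thresholds in this protocol can be applied to BLAST tabular output before a curator looks at the alignments. The sketch below assumes the default 12-column `-outfmt 6` layout; the function name and the `hit_evidence` lookup (subject ID to set of evidence codes) are assumptions made for the example, and coverage is computed from a single alignment rather than from all HSPs.

```python
EXPERIMENTAL = {"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"}

def iss_candidates(blast_lines, query_len, hit_evidence,
                   max_evalue=1e-30, min_coverage=0.80, min_identity=60.0):
    """Return (subject, identity, coverage, evalue) tuples that pass every filter.

    Columns used from BLAST tabular output: sseqid (2), pident (3),
    qstart (7), qend (8), evalue (11).
    """
    candidates = []
    for line in blast_lines:
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.rstrip("\n").split("\t")
        sseqid, pident = fields[1], float(fields[2])
        qstart, qend = int(fields[6]), int(fields[7])
        evalue = float(fields[10])
        coverage = (qend - qstart + 1) / query_len
        has_experimental = bool(hit_evidence.get(sseqid, set()) & EXPERIMENTAL)
        if (evalue <= max_evalue and coverage >= min_coverage
                and pident > min_identity and has_experimental):
            candidates.append((sseqid, pident, coverage, evalue))
    # Best (lowest) E-value first, matching the "top significant hit" step.
    return sorted(candidates, key=lambda c: c[3])
```

Hits that survive this filter are still only candidates: the domain-architecture check and the search for conflicting experimental data remain manual steps.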

Phylogenetic Inference-Based Annotation: IBA and IEA

These codes automate annotation transfer within a phylogenetic framework, with IBA representing a higher-confidence, manually reviewed subset.

Phylogenetic Inference Protocol (IBA/IEA Pipeline):

- Orthology Group Construction: For a target species, identify putative orthologs using tools like OrthoFinder or PANTHER. The input is a whole-proteome FASTA file.

- Tree Building: Generate a gene tree for each ortholog group using maximum likelihood methods (e.g., RAxML, FastTree).

- Ancestral State Reconstruction: Use the Phylogenetic Annotation and Inference Tool (PAINT). Manually curated GO annotations from a "reference" genome (e.g., human, mouse, yeast) are overlaid onto the tree.

- Annotation Propagation (Rules):

- A GO term can be propagated from an annotated node to its direct descendant if the function is believed to be conserved in the common ancestor.

- Propagation stops at nodes where there is evidence of gene duplication, neofunctionalization, or loss of function.

- Evidence Code Assignment:

- IBA: Applied to annotations created by the PAINT tool where the phylogenetic inference has been manually reviewed by a curator.

- IEA: Applied to fully automated annotations generated by pipelines like Ensembl Compara or PANTHER that use similar phylogenetic principles but without manual review.
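The propagation rules can be illustrated with a small recursive walk over a gene tree. This is a toy model, not PAINT's implementation: the `Node` class and the `blocked` flag (standing in for a curator's judgment that duplication, neofunctionalization, or loss interrupts conservation) are assumptions made for the example.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    name: str
    children: list = field(default_factory=list)
    blocked: bool = False  # duplication, neofunctionalization, or loss flagged here

def propagate(node, term, inferred=None):
    """Propagate a GO term from an annotated ancestral node to descendant leaves.

    Propagation stops at any blocked node, so no leaf beneath it inherits the term.
    Returns a dict mapping leaf names to the set of inferred terms.
    """
    if inferred is None:
        inferred = {}
    if node.blocked:
        return inferred
    if not node.children:
        inferred.setdefault(node.name, set()).add(term)
    for child in node.children:
        propagate(child, term, inferred)
    return inferred
```

Whether the resulting leaf annotations carry IBA or IEA depends on how the tree was produced: the same walk over a curator-reviewed model would yield IBA, while an unreviewed automated run would yield IEA.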

Data and Comparative Analysis

Table 1: Quantitative Comparison of Computational GO Evidence Codes (Representative Data)

| Evidence Code | Methodological Basis | Typical Review Level | Relative Confidence | Approx. % of Total GO Annotations* | Primary Source/Resource |

|---|---|---|---|---|---|

| ISS | Pairwise sequence/structural alignment | Manual Curation | High | ~4% | Manual curation by GO Consortium members |

| IBA | Phylogenetic inference within curated tree | Manual Review of Model | High | ~1% | GO Phylogenetic Annotation (PAINT) pipeline |

| IEA | Automated orthology/domain-based transfer | Fully Automated | Lower | ~65% | InterPro2GO, UniRule, Ensembl Compara |

Note: Percentages are approximate and vary by organism and proteome release. IEA dominates in quantity but requires filtering for high-confidence analyses.

Table 2: Key Algorithmic Tools and Databases for Computational Annotation

| Tool/Database | Purpose in Annotation | Typical Input | Output for Annotation |

|---|---|---|---|

| BLAST Suite | ISS: Find sequence homologs | Protein/DNA sequence | List of homologous sequences with E-values |

| InterProScan | IEA/ISS: Identify protein domains/families | Protein sequence | Domain matches (Pfam, SMART, etc.) linked to GO terms |

| OrthoFinder | IBA/IEA: Determine ortholog groups | Multi-FASTA (proteomes) | Orthogroups and gene trees |

| PANTHER DB | IEA: Scalable phylogenetic classification | Protein sequence | GO inferences via family/subfamily HMMs |

| PAINT Tool | IBA: Phylogenetic annotation curation | Gene tree, curated annotations | Reviewed GO annotations for tree nodes |

Visualizing Workflows and Relationships

Title: Computational GO Annotation Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources for Annotation Validation

| Item/Resource | Function in Research | Example Vendor/Implementation |

|---|---|---|

| GO Consortium Annotation File | Primary source of all GO-term-to-gene-product associations. | Downloaded from http://geneontology.org |

| UniProtKB/Swiss-Prot Database | High-quality, manually annotated protein sequence database used as the reference for ISS. | EMBL-EBI / SIB |

| PANTHER Classification System | Library of protein family HMMs for large-scale, automated functional classification (IEA). | Paul D. Thomas Lab (USC) |

| Cytoscape with ClueGO | Visualization and network analysis of GO term enrichment results from experimental data. | Open Source (cytoscape.org) |

| GO Enrichment Analysis Tools | Determine statistically over-represented GO terms in a gene set (e.g., for target validation). | g:Profiler, DAVID, topGO (Bioconductor) |

| Custom Python/R Scripts (Biopython, biomaRt) | Automate retrieval, filtering (e.g., removing IEA), and analysis of GO annotations for specific projects. | Open Source Libraries |

Within the Gene Ontology (GO) annotation process, evidence codes are critical metadata that indicate the type of evidence supporting an association between a gene product and a GO term. They underpin the reliability and interpretability of GO data, which is foundational for biological research, target validation, and drug development. This guide provides a technical dissection of four pivotal evidence codes: three experimental codes (EXP, IDA, IMP) and one electronic code (IEA).

Evidence Code Classification and Hierarchy

GO evidence codes are grouped into categories according to the underlying evidence. The codes discussed here fall under two of them: Experimental (EXP, IDA, IMP) and Electronic Annotation (IEA).

GO Evidence Code Hierarchy
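The grouping of evidence codes can be captured as a lookup table. The sketch below follows the categories in the GO Consortium's evidence code documentation, in which IEA sits in its own electronic annotation group; the dictionary and function names are assumptions made for the example.

```python
EVIDENCE_CATEGORIES = {
    "Experimental": ["EXP", "IDA", "IPI", "IMP", "IGI", "IEP"],
    "High-throughput experimental": ["HTP", "HDA", "HMP", "HGI", "HEP"],
    "Phylogenetically inferred": ["IBA", "IBD", "IKR", "IRD"],
    "Computational analysis": ["ISS", "ISO", "ISA", "ISM", "IGC", "RCA"],
    "Author statement": ["TAS", "NAS"],
    "Curator statement": ["IC", "ND"],
    "Electronic annotation": ["IEA"],
}

# Invert the table so each code maps directly to its category.
CODE_TO_CATEGORY = {
    code: category
    for category, codes in EVIDENCE_CATEGORIES.items()
    for code in codes
}

def evidence_category(code):
    """Return the category for an evidence code, or "Unknown" if unrecognized."""
    return CODE_TO_CATEGORY.get(code.upper(), "Unknown")
```

A table like this makes it straightforward to filter or weight annotations by category rather than by individual code.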

Quantitative Comparison of Evidence Code Usage and Reliability

The following table summarizes key quantitative and qualitative metrics for each evidence code, based on recent GO data releases and curation guidelines.

| Evidence Code | Full Name | Curator Reviewed? | Typical Annotation Volume* (Approx. %) | Common Data Sources | Relative Reliability for Hypothesis |

|---|---|---|---|---|---|

| EXP | Inferred from Experiment | Yes | ~11% | Primary literature (wet-lab experiments) | High - Gold Standard |

| IDA | Inferred from Direct Assay | Yes | ~16% | Primary literature (specific functional assays) | High - Gold Standard |

| IMP | Inferred from Mutant Phenotype | Yes | ~12% | Primary literature (genetic interference studies) | High - Gold Standard |

| IEA | Inferred from Electronic Annotation | No | ~61% | Automatic pipelines (e.g., InterPro, UniProtKB) | Low - Requires Verification |

Note: Percentages are estimates based on total GO annotation counts and illustrate the prevalence of automated annotations.

Detailed Methodologies for Experimental Evidence Codes

EXP (Inferred from Experiment)

This is a broad code used when a physical, biochemical, or genetic interaction experiment directly supports the annotation, but a more specific code like IDA or IMP does not apply.

Core Protocol Example: Co-immunoprecipitation (Co-IP) for Protein Binding (GO:0005515)

- Cell Lysis: Lyse cells expressing tagged (e.g., FLAG, HA) and untagged proteins of interest in a non-denaturing buffer.

- Immunoprecipitation: Incubate lysate with antibody beads specific to the tag. Use control IgG beads for the negative control.

- Washing: Wash beads extensively to remove non-specifically bound proteins.

- Elution & Analysis: Elute bound proteins and analyze by SDS-PAGE and Western blotting.

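- Validation: Probe the blot for the putative interacting partner (untagged protein). Co-elution confirms a physical interaction and supports an experimental annotation; for physical interactions, curators normally record the more specific IPI (Inferred from Physical Interaction) code rather than EXP.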

IDA (Inferred from Direct Assay)

Used for annotations directly supported by a functional assay that measures an activity, not just an interaction.

Core Protocol Example: Enzyme Activity Assay (GO:0003824)

- Protein Purification: Purify the recombinant protein of interest.

- Reaction Setup: Incubate the purified enzyme with its specific substrate under optimized buffer conditions (pH, temperature, co-factors).

- Activity Measurement: Use a spectrophotometer or fluorometer to measure the conversion of substrate to product over time (e.g., NADH oxidation at 340 nm).

- Controls: Include negative controls (heat-inactivated enzyme, no substrate) and positive controls (known enzyme).

- Analysis: Calculate specific activity (µmoles product/min/mg protein). Direct measurement of catalytic activity warrants an IDA code.
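The specific-activity calculation in the final step follows from the Beer-Lambert law. The sketch below assumes an NADH-coupled assay read at 340 nm with the commonly used molar extinction coefficient of 6220 M^-1 cm^-1; the function and parameter names are assumptions made for the example.

```python
NADH_EXTINCTION = 6220.0  # M^-1 cm^-1 at 340 nm

def specific_activity(delta_a_per_min, volume_ml, protein_mg,
                      path_cm=1.0, epsilon=NADH_EXTINCTION):
    """Return specific activity in micromoles of product per minute per mg protein.

    delta_a_per_min: absolute absorbance change per minute in the linear range.
    volume_ml: reaction volume in the cuvette or well.
    protein_mg: amount of enzyme protein in the reaction.
    """
    molar_rate = delta_a_per_min / (epsilon * path_cm)        # mol / L / min
    umol_per_min = molar_rate * (volume_ml / 1000.0) * 1e6    # micromol / min
    return umol_per_min / protein_mg
```

For microplate readers the optical path is usually shorter than 1 cm and depends on fill volume, so `path_cm` should be set from the instrument's path-length correction rather than left at the default.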

IMP (Inferred from Mutant Phenotype)

Applied when a phenotype observed after genetic alteration (knockout, mutation, knockdown) supports the annotation.

Core Protocol Example: Gene Knockout via CRISPR-Cas9 (phenotype: response to salt stress, GO:0009651)

- gRNA Design: Design guide RNAs targeting an exon of the gene of interest.

- Transfection: Co-transfect cells or organisms with plasmids encoding Cas9 and the gRNA.

- Screening: Isolate clones and use PCR/genomic sequencing to identify frameshift mutations.

- Phenotypic Assay: Subject knockout and wild-type lines to high-salt conditions.

- Phenotype Measurement: Quantify a relevant metric (e.g., plant root growth, cell viability). Compare mutant vs. wild-type. Significant impairment in the knockout supports an IMP annotation for the gene's role in the salt stress response.

The Nature and Caveats of IEA (Inferred from Electronic Annotation)

IEA annotations are generated automatically without curator review, primarily via:

- Sequence Similarity: Using tools like BLAST to transfer annotations from characterized homologs.

- Pattern Matching: Using tools like InterProScan to identify protein domains and infer function.

- Phylogenetic Inheritance: Using structured models like the Ensembl Compara pipeline.

IEA Annotation Generation Workflow

Critical Limitation: IEA annotations are prone to error propagation and lack the nuanced context of manual curation. They should be considered preliminary and must be validated for high-confidence research.
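One practical consequence of this limitation is that analyses often exclude IEA annotations, at the cost of losing coverage for poorly studied genes. A minimal sketch of that trade-off, assuming annotations are held as (gene, GO ID, evidence code) tuples; the function name is an assumption made for the example.

```python
def drop_electronic(annotations, excluded=frozenset({"IEA"})):
    """Remove annotations with excluded evidence codes.

    Returns (kept_annotations, genes_left_without_any_annotation).
    """
    kept = [a for a in annotations if a[2] not in excluded]
    genes_before = {a[0] for a in annotations}
    genes_after = {a[0] for a in kept}
    return kept, sorted(genes_before - genes_after)
```

Reporting the genes that drop out entirely makes the cost of filtering visible, which helps when deciding whether a strict or a permissive annotation set suits the organism being studied.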

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Primary Function in Experimental Evidence Generation |

|---|---|

| Tag-Specific Antibody Beads (e.g., Anti-FLAG M2 Magnetic Beads) | For immunopurification of tagged proteins in EXP-level interaction studies (Co-IP). |

| Spectrophotometer / Microplate Reader | For quantifying enzyme activity (IDA) via absorbance or fluorescence changes in kinetic assays. |

| CRISPR-Cas9 Knockout Kit | For generating gene-specific knockout cell lines or organisms to study mutant phenotypes (IMP). |

| Validated Positive Control Protein/Enzyme | Essential control for IDA assays to validate experimental conditions and measurement accuracy. |

| High-Fidelity DNA Polymerase & Sequencing Primers | For amplifying and sequencing genomic DNA to confirm CRISPR-induced mutations in IMP protocols. |

| Computational Server (for IEA verification) | Running local BLAST or InterProScan to trace the source of an IEA annotation and assess its reliability. |

Within the broader thesis on the Gene Ontology (GO) annotation process and data sources, the practical retrieval of annotations is a critical step for researchers. GO annotations link gene products (proteins, non-coding RNAs) to controlled, hierarchical terms describing Molecular Function (MF), Biological Process (BP), and Cellular Component (CC). Accessing this data enables functional enrichment analysis, hypothesis generation, and validation in experimental biology and drug discovery.

The following are the authoritative, current sources for GO annotations. These repositories employ distinct annotation strategies, as summarized in Table 1.

Table 1: Primary GO Annotation Data Sources

| Source/Project | Scope & Strategy | Direct Download URL (as of 2024) | Update Frequency |

|---|---|---|---|

| UniProt-GOA (EBI) | Largest source, integrates annotations from multiple channels including manual curation and automated pipelines. | ftp.ebi.ac.uk/pub/databases/GO/goa/ | Daily |

| Gene Ontology Consortium (Annotations) | Central repository providing the GO resource and basic annotations. | http://current.geneontology.org/products/pages/downloads.html | Monthly |

| Model Organism Databases (e.g., SGD, MGI, FlyBase) | Organism-specific, high-quality manual curation. | Species-specific sites (e.g., yeastgenome.org) | Varies |

| PAINT (Phylogenetic Annotation and Inference Tool) | Phylogenetically-based inference for non-model organisms. | http://current.geneontology.org/products/pages/downloads.html (included) | With releases |

| Ensembl BioMart | Platform for complex querying and batch retrieval across species. | www.ensembl.org/biomart | Aligned with releases |

Detailed Methodologies for Access and Download

Protocol 3.1: Bulk Download via UniProt-GOA

This protocol is optimal for obtaining comprehensive annotations for entire proteomes (e.g., human, mouse) or specific organism groups.

- Navigate to the UniProt-GOA FTP directory: ftp.ebi.ac.uk/pub/databases/GO/goa/

- Identify the relevant file:

  - For human annotations: goa_human.gaf.gz

  - For annotations to all UniProtKB entries across species (reviewed and unreviewed): goa_uniprot_all.gaf.gz

  - Other formats are available (GPAD, with companion GPI files). GAF (GO Annotation File) is the standard format.

- Download the compressed file using a command-line tool (e.g., wget) or a web browser.

- Decompress using gunzip or equivalent software.

- Parse the file. Columns are tab-separated. Key columns include: DB Object ID (gene/protein identifier), GO ID, Evidence Code, and DB:Reference.
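The parsing step can be sketched with the Python standard library alone. This is a minimal illustration that assumes the 17-column GAF 2.x layout; the helper names (`open_gaf`, `read_gaf`) and the two sample rows are hypothetical and use placeholder accessions rather than real records.

```python
import gzip
import io

# Field names for the 17 tab-separated GAF 2.x columns, in file order.
GAF_COLUMNS = [
    "db", "db_object_id", "db_object_symbol", "qualifier", "go_id",
    "reference", "evidence_code", "with_from", "aspect", "db_object_name",
    "synonym", "db_object_type", "taxon", "date", "assigned_by",
    "annotation_extension", "gene_product_form_id",
]


def open_gaf(path):
    """Open a plain or gzip-compressed GAF file in text mode."""
    if path.endswith(".gz"):
        return gzip.open(path, "rt", encoding="utf-8")
    return open(path, "r", encoding="utf-8")


def read_gaf(handle):
    """Yield one dict per annotation row; lines starting with '!' are headers."""
    for line in handle:
        if line.startswith("!") or not line.strip():
            continue
        fields = line.rstrip("\n").split("\t")
        # Pad short rows so the optional trailing columns are always present.
        fields += [""] * (len(GAF_COLUMNS) - len(fields))
        yield dict(zip(GAF_COLUMNS, fields))


# Made-up rows for illustration only (placeholder accessions and references).
SAMPLE_GAF = (
    "!gaf-version: 2.2\n"
    "UniProtKB\tP00001\tGENE1\tenables\tGO:0004672\tPMID:0000000\tIDA\t\tF\t"
    "Example protein 1\t\tprotein\ttaxon:9606\t20240101\tUniProt\t\t\n"
    "UniProtKB\tP00002\tGENE2\tlocated_in\tGO:0005634\tGO_REF:0000002\tIEA\t"
    "InterPro:IPR000001\tC\tExample protein 2\t\tprotein\ttaxon:9606\t20240101\t"
    "InterPro\t\t\n"
)

for row in read_gaf(io.StringIO(SAMPLE_GAF)):
    print(row["db_object_id"], row["go_id"], row["evidence_code"], row["reference"])
```

For a downloaded file, `read_gaf(open_gaf("goa_human.gaf.gz"))` would stream rows without decompressing to disk first, which keeps memory use low for the larger multi-species files.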

Protocol 3.2: Programmatic Access Using APIs

For integrating annotation retrieval into analysis pipelines, APIs are essential.

Example using the GOATools Python library:
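A minimal sketch, assuming GOATOOLS is installed (`pip install goatools`) and that a GAF file such as goa_human.gaf has been downloaded and decompressed as in Protocol 3.1. The function name `load_go_ids_by_protein` is illustrative; the `GafReader` class and its `get_id2gos` method should be checked against the installed GOATOOLS version.

```python
def load_go_ids_by_protein(gaf_path, namespace="BP"):
    """Return {DB Object ID: set of GO IDs} for one GO aspect (BP, MF, or CC)."""
    # Imported lazily so this module still loads where goatools is absent.
    from goatools.anno.gaf_reader import GafReader

    reader = GafReader(gaf_path)
    return reader.get_id2gos(namespace=namespace)


# Usage (requires goatools and a local GAF file, so it is left commented out):
# id2gos = load_go_ids_by_protein("goa_human.gaf", namespace="BP")
# print(len(id2gos), "gene products with Biological Process annotations")
```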

Example using the NCBI EUtils API (for Gene2GO):

A direct E-Utilities query can fetch annotations for a list of Gene IDs (e.g., 1017, 1018):

https://eutils.ncbi.nlm.nih.gov/entrez/eutils/elink.fcgi?dbfrom=gene&db=gene&linkname=gene_go&id=1017,1018
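For more than a handful of genes, NCBI also distributes Gene2GO as a tab-separated bulk file (gene2go.gz, in the gene/DATA directory of the NCBI FTP site). The sketch below assumes that file's eight-column layout (tax_id, GeneID, GO_ID, Evidence, Qualifier, GO_term, PubMed, Category) and shows one way to pull annotations for the same two Gene IDs. The function name and the sample rows are illustrative, not real records.

```python
import io

GENE2GO_COLUMNS = [
    "tax_id", "gene_id", "go_id", "evidence",
    "qualifier", "go_term", "pubmed", "category",
]


def go_terms_for_genes(handle, gene_ids, tax_id="9606"):
    """Collect (GO ID, evidence, category) tuples for the requested Gene IDs."""
    wanted = {str(g) for g in gene_ids}
    result = {g: [] for g in wanted}
    for line in handle:
        if line.startswith("#") or not line.strip():
            continue  # header line begins with '#'
        row = dict(zip(GENE2GO_COLUMNS, line.rstrip("\n").split("\t")))
        if row.get("tax_id") == tax_id and row.get("gene_id") in wanted:
            result[row["gene_id"]].append(
                (row["go_id"], row["evidence"], row["category"])
            )
    return result


# Illustrative rows in gene2go layout (not copied from the NCBI file).
SAMPLE_GENE2GO = (
    "#tax_id\tGeneID\tGO_ID\tEvidence\tQualifier\tGO_term\tPubMed\tCategory\n"
    "9606\t1017\tGO:0004672\tIDA\tenables\tprotein kinase activity\t-\tFunction\n"
    "9606\t1018\tGO:0005634\tIEA\tlocated_in\tnucleus\t-\tComponent\n"
    "10090\t1017\tGO:0005634\tIEA\tlocated_in\tnucleus\t-\tComponent\n"
)

hits = go_terms_for_genes(io.StringIO(SAMPLE_GENE2GO), [1017, 1018])
for gene_id in sorted(hits):
    print(gene_id, hits[gene_id])
```

Filtering on tax_id matters because the file covers many species in one table.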

Protocol 3.3: Customized Retrieval via Ensembl BioMart

This method is ideal for filtering annotations for a specific gene set and adding orthogonal data.

- Access BioMart at https://www.ensembl.org/biomart.

- Choose Database: "Ensembl Genes" > Choose Dataset (e.g., "Human Genes").

- Configure Filters: On the "Filters" page, add a "Gene" filter to input your list of stable gene IDs (e.g., ENSG00000139618).

- Select Attributes: On the "Attributes" page:

- Under "GENE", select "Ensembl Gene ID".

- Under "EXTERNAL", select "EntrezGene ID" and "Gene Name".

- Under "GO", select "GO term accession", "GO term name", "GO namespace", and "GO evidence code".

- Export Results: Click "Results", choose "File" format (e.g., TSV), and export.
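The same selection can in principle be scripted against BioMart's XML query interface. The sketch below only builds the query and request URL; it does not contact the server. The dataset name (`hsapiens_gene_ensembl`) and the internal attribute names (`go_id`, `name_1006`, `namespace_1003`, `go_linkage_type`) are assumptions that should be checked against the attribute list of the Ensembl release in use, and the function names are hypothetical.

```python
import urllib.parse
import xml.etree.ElementTree as ET

BIOMART_URL = "https://www.ensembl.org/biomart/martservice"

# Assumed internal names for: gene ID, gene name, GO accession,
# GO term name, GO namespace, GO evidence code.
GO_ATTRIBUTES = (
    "ensembl_gene_id", "external_gene_name", "go_id",
    "name_1006", "namespace_1003", "go_linkage_type",
)


def build_biomart_query(gene_ids, dataset="hsapiens_gene_ensembl"):
    """Return a BioMart XML query string for GO annotations of the given genes."""
    query = ET.Element("Query", {
        "virtualSchemaName": "default",
        "formatter": "TSV",
        "header": "1",
        "uniqueRows": "1",
        "datasetConfigVersion": "0.6",
    })
    ds = ET.SubElement(query, "Dataset", {"name": dataset, "interface": "default"})
    ET.SubElement(ds, "Filter",
                  {"name": "ensembl_gene_id", "value": ",".join(gene_ids)})
    for attr in GO_ATTRIBUTES:
        ET.SubElement(ds, "Attribute", {"name": attr})
    header = '<?xml version="1.0" encoding="UTF-8"?><!DOCTYPE Query>'
    return header + ET.tostring(query, encoding="unicode")


def build_request_url(gene_ids):
    """URL-encode the XML query as the 'query' parameter of the mart service."""
    xml_query = build_biomart_query(gene_ids)
    return BIOMART_URL + "?" + urllib.parse.urlencode({"query": xml_query})


print(build_request_url(["ENSG00000139618"]))
```

Passing the printed URL to `urllib.request.urlopen` (or any HTTP client) would return the TSV export; for long gene lists a POST request is usually the safer choice because of URL length limits.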

Workflow Diagram: From Gene List to Functional Analysis

Title: GO Annotation Retrieval and Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for GO-Based Analysis

| Item/Reagent | Function/Application in GO Analysis |

|---|---|

| GOATools (Python library) | A suite of Python scripts for parsing GO files, performing enrichment analysis, and visualizing results. |

| clusterProfiler (R/Bioconductor) | A widely used R package for statistical analysis and visualization of functional profiles (GO, KEGG) for gene clusters. |

| Cytoscape with ClueGO/stringApp | Network visualization platform. ClueGO performs GO enrichment and creates interpretable networks; stringApp integrates protein-protein interaction data with GO terms. |

| PANTHER Classification System | Web-based tool for gene list functional classification, statistical enrichment testing, and pathway mapping. Provides curated GO-Slim datasets. |

| Revigo | Web tool for summarizing and visualizing long lists of GO terms by removing redundant terms, creating tractable treemaps or scatterplots. |

| Custom Scripts (Python/R) | Essential for preprocessing gene identifiers, parsing large GAF files, and automating repetitive retrieval and analysis tasks. |

Advanced Considerations and Data Integrity

Evidence Codes and Filtering Strategies

Annotations are accompanied by evidence codes (e.g., EXP: Inferred from Experiment, IEA: Inferred from Electronic Annotation). For high-confidence analyses, filter out electronically inferred annotations (IEA), which are not individually reviewed by a curator. Experimental evidence codes (EXP, IDA, IPI, IMP, IGI, IEP) provide the highest reliability.

Table 3: Common GO Evidence Code Categories

| Evidence Code | Category | Description | Typical Use in Analysis |

|---|---|---|---|

| EXP, IDA, IPI | Experimental | Direct experimental evidence | Core validation, high-confidence sets |

| IBA, IBD, IKR | Phylogenetic | Inferred from biological aspect of ancestor (IBA) or descendant (IBD), or from key residues (IKR) | Including evolutionary context |

| ISS, ISO, ISA | Computational | Inferred from sequence or structural similarity (ISS), sequence orthology (ISO), or sequence alignment (ISA) | Broad analysis, requires caution |

| IEA | Electronic | Inferred from electronic annotation | Often excluded in stringent analyses |

| TAS, NAS | Author statement | Traceable/Non-traceable author statement | Curator-assigned from published statements; treat NAS with more caution |
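The filtering strategy described above can be sketched in a few lines. This is a minimal illustration that assumes annotations have already been reduced to (gene, GO ID, evidence code) tuples; the function names and the toy records are hypothetical.

```python
# Experimental evidence codes listed in the text above.
EXPERIMENTAL_CODES = frozenset({"EXP", "IDA", "IPI", "IMP", "IGI", "IEP"})


def keep_codes(annotations, allowed_codes=EXPERIMENTAL_CODES):
    """Stringent filter: keep only annotations whose code is in allowed_codes."""
    return [a for a in annotations if a[2] in allowed_codes]


def drop_codes(annotations, excluded_codes=frozenset({"IEA"})):
    """Lenient filter: remove only the listed codes (IEA by default)."""
    return [a for a in annotations if a[2] not in excluded_codes]


# Toy records for illustration only.
annotations = [
    ("GENE_A", "GO:0043065", "IEA"),
    ("GENE_A", "GO:0043065", "IMP"),
    ("GENE_B", "GO:0005634", "ISS"),
    ("GENE_C", "GO:0004672", "IDA"),
]

print("experimental only:", keep_codes(annotations))
print("IEA removed:", drop_codes(annotations))
```

Running an enrichment analysis on both filtered sets and comparing the results is one way to see how much a conclusion depends on unreviewed predictions.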

Experimental Protocol for Validation: Wet-Lab Confirmation of a GO Annotation

If computational analysis highlights a key GO Biological Process term (e.g., "positive regulation of apoptotic process", GO:0043065) for a gene of unknown function, the following validation protocol can be applied:

- Gene Knockdown/Out: Use siRNA (for mammalian cells) or CRISPR-Cas9 to create a loss-of-function model for the gene of interest.

- Treatment: Apply a known apoptotic inducer (e.g., Staurosporine at 1 µM) to both control and knockout cell lines for 6 hours.

- Assay for Apoptosis:

- Annexin V / Propidium Iodide (PI) Flow Cytometry: Harvest cells, stain with Annexin V-FITC and PI according to manufacturer protocol. Analyze by flow cytometry. Quantify the percentage of cells in early (Annexin V+/PI-) and late (Annexin V+/PI+) apoptosis.

- Caspase-3/7 Activity Assay: Using a luminescent substrate, measure caspase activation in cell lysates as per kit instructions.

- Expected Validation: If the gene positively regulates apoptosis, its knockout should show a significant decrease in Annexin V-positive cells and reduced caspase activity upon inducer treatment compared to the control, thereby supporting the GO annotation.

Title: Wet-Lab Validation of a GO Annotation

Effectively accessing and downloading GO annotations is a foundational skill in modern bioinformatics-driven research. By selecting the appropriate data source (Table 1), applying a rigorous retrieval protocol (Section 3), and understanding the underlying evidence (Table 3), researchers can generate robust functional profiles for their gene sets. This process, integral to the broader thesis on GO data, directly fuels downstream experimental validation (Section 6) and hypothesis-driven discovery in biomedicine and drug development.

Common Pitfalls and Best Practices: Ensuring Accuracy in GO Annotation Analysis

The Gene Ontology (GO) provides a structured, controlled vocabulary to describe the functions of gene products across all species. The annotation process links GO terms to specific gene products, providing the foundational data for functional genomics. Each annotation is assigned an Evidence Code (EC) indicating the type of evidence supporting the association. This whitepaper focuses on the proper interpretation of the Inferred from Electronic Annotation (IEA) code within the context of a broader thesis on GO data integrity and reliability.

IEA annotations are derived computationally without manual curator review, making them prolific but prone to over-interpretation. They are essential for providing preliminary functional hypotheses but are not standalone proof of function.

The Hierarchy and Meaning of GO Evidence Codes

Evidence Codes are categorized by the type of evidence they represent. Understanding this hierarchy is critical for correct interpretation.

Table 1: Categories and Descriptions of Major GO Evidence Codes

| Evidence Code Category | Example Codes | Curation Method | Typical Reliability |

|---|---|---|---|

| Experimental | EXP, IDA, IPI, IMP, IGI, IEP | Manual | High (Direct empirical evidence) |

| Phylogenetic | IBA, IBD, IKR, IRD | Manual or Reviewed Computational | Medium-High (Evolutionary evidence) |

| Computational | ISS, ISO, ISA, ISM, IGC, RCA | Manual | Medium (Curator-evaluated analysis) |

| Author Statement | TAS, NAS | Manual | Medium (Based on published assertions) |

| Electronic | IEA | Fully Automated | Low (Unreviewed predictions) |

| Curator | IC, ND | Manual | Varies |

IEA stands apart as the only code assigned through entirely automated pipelines, such as those mapping InterPro domains to GO terms or applying annotation rules (e.g., via the Gene Ontology Annotation (GOA) project). IEA annotations comprise the vast majority of all GO annotations.

Table 2: Quantitative Snapshot of IEA Annotations (Based on Recent GO Release Data)

| Metric | Value | Implication |

|---|---|---|

| Percentage of all GO annotations that are IEA | ~70% | Dominant source of annotations. |

| Percentage of annotations for well-studied models (e.g., human, mouse) that are IEA | ~45-55% | Even curated genomes rely heavily on IEA. |

| Percentage of IEA annotations with no non-IEA support | ~40% | A large fraction are only computationally predicted. |

| Error rate estimate for IEA vs. Experimental codes | ~3-5% vs. <1% | Higher potential for inaccuracy. |
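Proportions like those in Table 2 shift between releases and between species, so it can be worth recomputing them for the annotation file actually in use. The sketch below assumes annotations reduced to (gene, GO ID, evidence code) tuples and counts "IEA-only" support by exact term match, without considering ancestor terms; the function name and toy data are hypothetical.

```python
def iea_summary(annotations):
    """Summarize how much of an annotation set rests on IEA evidence."""
    total = len(annotations)
    iea_rows = sum(1 for _, _, code in annotations if code == "IEA")

    # Collect every evidence code seen for each (gene, GO term) pair.
    codes_by_pair = {}
    for gene, go_id, code in annotations:
        codes_by_pair.setdefault((gene, go_id), set()).add(code)

    iea_pairs = [codes for codes in codes_by_pair.values() if "IEA" in codes]
    iea_only = sum(1 for codes in iea_pairs if codes == {"IEA"})

    return {
        "total_annotations": total,
        # Share of annotation rows carrying the IEA code.
        "iea_fraction": iea_rows / total if total else 0.0,
        # Share of IEA-annotated pairs with no other supporting code.
        "iea_only_fraction": iea_only / len(iea_pairs) if iea_pairs else 0.0,
    }


# Toy records for illustration only.
toy = [
    ("G1", "GO:0004672", "IEA"),
    ("G1", "GO:0004672", "IDA"),
    ("G2", "GO:0005634", "IEA"),
    ("G3", "GO:0006915", "IMP"),
    ("G4", "GO:0005524", "IEA"),
]

print(iea_summary(toy))
```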

Understanding the automated sources is key to gauging reliability.

InterPro2GO & Pfam2GO: The most common source.

- Protocol: A manually created mapping file links protein family, domain, or motif signatures (in InterPro/Pfam) to specific GO terms. An automated pipeline scans protein sequences against these signatures (e.g., using HMMER for Pfam) and assigns the corresponding GO terms with the IEA code.

- Limitation: Assumes all members of a protein family share identical molecular functions, which may not be true for diverse families.
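The mapping step can be sketched as follows. This assumes the plain-text interpro2go layout, in which each line has the form `InterPro:IPRnnnnnn name > GO:term name ; GO:nnnnnnn` and comment lines start with `!`. The sample lines illustrate the format only, and the function names and protein identifiers are hypothetical.

```python
import re

# One mapping per line: InterPro accession, free-text name, GO name, GO ID.
MAPPING_RE = re.compile(
    r"^InterPro:(IPR\d{6})\s.*>\s*GO:(.+?)\s*;\s*(GO:\d{7})\s*$"
)


def parse_interpro2go(lines):
    """Return {InterPro accession: [GO IDs]} from mapping-file lines."""
    mapping = {}
    for line in lines:
        match = MAPPING_RE.match(line)
        if match is None:  # '!' comment lines and blank lines do not match
            continue
        ipr, _term_name, go_id = match.groups()
        mapping.setdefault(ipr, []).append(go_id)
    return mapping


def assign_iea(protein_hits, mapping):
    """Give every protein the GO terms mapped to each signature it matches.

    Note that all proteins hitting a signature receive identical terms,
    which is exactly the family-wide assumption flagged as a limitation.
    """
    annotations = []
    for protein, ipr_ids in protein_hits.items():
        for ipr in ipr_ids:
            for go_id in mapping.get(ipr, []):
                annotations.append((protein, go_id, "IEA", "InterPro:" + ipr))
    return annotations


# Sample lines illustrating the file format.
SAMPLE_LINES = [
    "!illustrative excerpt",
    "InterPro:IPR000719 Protein kinase domain > GO:protein kinase activity ; GO:0004672",
    "InterPro:IPR000719 Protein kinase domain > GO:ATP binding ; GO:0005524",
]

mapping = parse_interpro2go(SAMPLE_LINES)
# Hypothetical signature hits, e.g. as produced by a domain scan.
hits = {"PROT_X": ["IPR000719"], "PROT_Y": ["IPR999999"]}
for annotation in assign_iea(hits, mapping):
    print(annotation)
```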

Ensembl Compara & Phylogenetic Trees:

- Protocol: Automated pipelines build gene trees. Starting from a known GO annotation on one member (the "anchor"), the annotation is propagated to orthologous tree members that meet strict sequence similarity thresholds.

- Limitation: Relies on the correctness of the anchor annotation and the orthology prediction.

UniRule (Formerly UniProtKB Automatic Annotation):

- Protocol: A system of rules (based on protein name keywords, taxonomy, existence of specific domains, etc.) automatically annotates entries in UniProtKB. These are then propagated to GO as IEA.

- Limitation: Rules are broad and can miss edge cases or biological nuance.

Diagram 1: Automated sources generating IEA evidence.

Risks of Over-Interpretation and Best Practices

Common Pitfalls

- Treating IEA as Confirmatory Evidence: Using IEA annotations to "confirm" results from a wet-lab experiment adds little independent support, and the reasoning becomes circular when the same prediction motivated the experiment.

- Ignoring the Evidence Code Stack: An annotation may be supported by multiple evidence codes. Relying solely on the IEA support when a stronger code (e.g., EXP) exists overstates uncertainty.

- Assuming High Specificity: IEA-derived terms are often correct at a broad functional level (e.g., "kinase activity") but may be wrong or over-specific at finer levels (e.g., "protein serine/threonine kinase activity").

Best Practice Protocol for Researchers

- Always Filter by Evidence: In any analysis, segregate IEA annotations from non-IEA annotations. Test if conclusions hold using only experimentally supported (EXP, IDA, etc.) annotations.

- Trace the Annotation Back: Use the GO annotation file's "Assigned By" and "With/From" fields to identify the source (e.g., InterPro:IPR000001). Assess the appropriateness of the source's mapping rule.

- Seek Corroboration: Treat IEA as a hypothesis. Use protein-protein interaction data, expression co-localization, or literature mining to seek independent support.

- Prioritize for Validation: In experimental design, genes with only IEA support for a function of interest are prime candidates for novel validation.
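The first two steps of this protocol can be sketched together: group annotations into an evidence stack per (gene product, GO term) pair, then report the source of every pair supported by IEA alone. The sketch assumes rows already parsed from a GAF file into dicts keyed by column name; the key names, function names, and toy rows are hypothetical.

```python
from collections import defaultdict


def evidence_stacks(rows):
    """Group annotation rows by (gene product, GO term)."""
    stacks = defaultdict(list)
    for row in rows:
        stacks[(row["db_object_id"], row["go_id"])].append(row)
    return stacks


def iea_only_sources(rows):
    """List (gene, GO ID, assigned_by, with_from) for pairs backed by IEA alone."""
    report = []
    for (gene, go_id), stack in sorted(evidence_stacks(rows).items()):
        codes = {r["evidence_code"] for r in stack}
        if codes == {"IEA"}:
            for r in stack:
                report.append((gene, go_id, r["assigned_by"], r["with_from"]))
    return report


# Toy rows for illustration only.
rows = [
    {"db_object_id": "GENE_A", "go_id": "GO:0004672", "evidence_code": "IEA",
     "assigned_by": "InterPro", "with_from": "InterPro:IPR000719"},
    {"db_object_id": "GENE_A", "go_id": "GO:0004672", "evidence_code": "IDA",
     "assigned_by": "UniProt", "with_from": ""},
    {"db_object_id": "GENE_B", "go_id": "GO:0004672", "evidence_code": "IEA",
     "assigned_by": "InterPro", "with_from": "InterPro:IPR000719"},
]

# GENE_A also has experimental support, so only GENE_B is reported.
for entry in iea_only_sources(rows):
    print(entry)
```

The reported With/From identifiers point to the mapping rule to inspect, and the genes listed are the candidates for the corroboration and validation steps that follow.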

Diagram 2: Decision tree for evaluating IEA annotations.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents & Resources for Validating IEA-Based Hypotheses

| Item / Resource | Function in Validation | Example Provider/Identifier |

|---|---|---|

| CRISPR-Cas9 Knockout Kits | To create loss-of-function mutants for in vivo functional assays. | Synthego, Horizon Discovery |

| Validated siRNA/shRNA Libraries | For transient or stable knockdown to observe phenotypic changes. | Dharmacon (Horizon), Sigma-Aldrich |

| Tagged ORF Clones (HA-FLAG-Myc) | For overexpression and protein localization/pull-down experiments. | GenScript, Addgene (CCSB collection) |

| Phospho-Specific Antibodies | If IEA suggests kinase activity, to assess phosphorylation status of substrates. | Cell Signaling Technology |

| Recombinant Purified Protein | For in vitro enzymatic assays (e.g., kinase, GTPase) predicted by IEA. | Origene, Abnova |

| Proximity Labeling Kits (BioID/APEX) | To identify potential interaction partners of the protein of interest. | Promega (BioID), IBA Lifesciences |

| GO Enrichment Analysis Tools | To contextualize experimental results within broader GO biological processes. | DAVID, g:Profiler, clusterProfiler |

| GO Evidence Code Filter | To programmatically separate IEA from other evidence in datasets. | GOATOOLS, R package topGO |

Addressing Annotation Inconsistencies and Propagation Errors in the GO Graph