Demystifying the High-Dimensionality of Multi-Omics Data: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a comprehensive exploration of the high-dimensionality inherent in multi-omics data, a central challenge in modern biomedical research. We first establish foundational concepts, defining what constitutes high-dimensionality in the context of genomics, transcriptomics, proteomics, and metabolomics. We then detail cutting-edge methodologies and analytical pipelines designed to manage and extract knowledge from these complex datasets. Practical guidance is offered on troubleshooting common pitfalls and optimizing workflows for robust analysis. Finally, we compare validation strategies and benchmark approaches to ensure biological relevance and reproducibility. This guide is tailored for researchers, scientists, and drug development professionals seeking to navigate and leverage the complexity of multi-omics data for impactful discovery.

What is Multi-Omics High-Dimensionality? Core Concepts and Sources of Complexity

In multi-omics research, high-dimensionality is formally defined by the condition where the number of measured features or variables (p) vastly exceeds the number of observations or samples (n), denoted as p >> n. This paradigm is ubiquitous in genomics, transcriptomics, proteomics, and metabolomics, where technological advances allow for the simultaneous measurement of tens to hundreds of thousands of molecular entities from a limited set of biological specimens. This "curse of dimensionality" fundamentally challenges classical statistical inference, requiring specialized methodologies for analysis, interpretation, and validation.

Quantitative Landscape of p >> n in Modern Omics

The scale of p >> n varies across omics layers. The table below summarizes representative dimensions.

Table 1: Representative Dimensionality (p) Across Omics Platforms

| Omics Layer | Typical Feature Range (p) | Common Sample Range (n) | p/n Ratio | Example Technology |

|---|---|---|---|---|

| Genomics | 500,000 - 10,000,000 | 100 - 10,000 | 100 - 1000 | Whole-Genome Sequencing, SNP Arrays |

| Transcriptomics | 20,000 - 60,000 | 10 - 1,000 | 20 - 6,000 | RNA-Seq, Microarrays |

| Proteomics | 1,000 - 10,000+ | 10 - 500 | 10 - 500 | Mass Spectrometry (LC-MS/MS) |

| Metabolomics | 100 - 10,000 | 50 - 500 | 2 - 200 | NMR, LC/GC-MS |

| Multi-omics (Integrated) | 50,000 - 1,000,000+ | 50 - 500 | 1000 - 20,000+ | Combined Assays |

Core Statistical and Computational Challenges

The p >> n condition violates assumptions of traditional statistical models, leading to:

- Ill-posed Problems: Infinitely many solutions fit the training data equally well (non-identifiability).

- Overfitting: Models memorize noise rather than learning generalizable patterns.

- Collinearity: High correlation among features.

- Curse of Dimensionality: Data becomes sparse, distorting distance metrics.
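
The first two points can be made concrete with a small simulation. The sketch below assumes synthetic Gaussian data with no real signal (the sizes `n = 20`, `p = 200` are illustrative, not taken from any study) and shows that two different coefficient vectors both fit the training data exactly while failing on new data.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 20, 200                       # far fewer samples than features
X = rng.normal(size=(n, p))
y = rng.normal(size=n)               # pure noise: there is nothing to learn

# Minimum-norm least-squares solution (one of infinitely many exact fits).
beta_min = np.linalg.pinv(X) @ y

# Any vector in the null space of X can be added without changing the fit.
_, _, Vt = np.linalg.svd(X, full_matrices=True)
null_direction = Vt[-1]              # X @ null_direction is numerically zero
beta_alt = beta_min + 5.0 * null_direction

train_err_min = float(np.sum((y - X @ beta_min) ** 2))
train_err_alt = float(np.sum((y - X @ beta_alt) ** 2))

# Fresh data from the same signal-free process exposes the overfit.
X_new = rng.normal(size=(1000, p))
y_new = rng.normal(size=1000)
test_mse = float(np.mean((y_new - X_new @ beta_min) ** 2))

print(f"train SSE (min-norm):  {train_err_min:.2e}")
print(f"train SSE (alternate): {train_err_alt:.2e}")
print(f"test MSE:              {test_mse:.2f}")
```

Both training errors are numerically zero even though the response is random, which is why regularization or feature selection is needed before any coefficient can be interpreted.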

Key Methodological Approaches: Experimental Protocols

Dimensionality Reduction Protocol: Sparse Principal Component Analysis (sPCA)

Objective: Identify a low-dimensional representation of data with sparse, interpretable loadings.

- Input: Data matrix X (n x p), where n << p. Pre-process (center, scale).

- Sparsity Penalty: Apply L1 (Lasso) penalty on the loading vectors to force zero loadings for irrelevant features.

- Optimization: Solve using alternating optimization or iterative thresholding:

argmax_{v} (v^T X^T X v) subject to ||v||_2 = 1 and ||v||_1 ≤ t, where `t` is a sparsity parameter.

- Number of Components: Use cross-validation or variance explained criteria to select `k` components.

- Output: Sparse loading vectors and component scores for downstream analysis.
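
A minimal sketch of the iterative-thresholding idea for a single component follows. It uses the penalized (soft-threshold) form with a threshold `lam` rather than the constrained form with bound t, and the function name `sparse_pc1`, the simulated data, and the value `lam = 4.5` are assumptions chosen for illustration.

```python
import numpy as np

def soft_threshold(z, lam):
    """Shrink each entry toward zero by lam; entries smaller than lam become 0."""
    return np.sign(z) * np.maximum(np.abs(z) - lam, 0.0)

def sparse_pc1(X, lam, n_iter=200):
    """First sparse loading vector via alternating, soft-thresholded power iterations."""
    Xc = (X - X.mean(axis=0)) / X.std(axis=0)          # center and scale
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    v = Vt[0]                                          # start from the ordinary PC1
    for _ in range(n_iter):
        u = Xc @ v
        u /= np.linalg.norm(u)                         # unit-norm sample scores
        v_new = soft_threshold(Xc.T @ u, lam)          # L1 penalty zeroes weak loadings
        norm = np.linalg.norm(v_new)
        if norm == 0.0:
            raise ValueError("lam is too large: all loadings were zeroed")
        v = v_new / norm                               # enforce ||v||_2 = 1
    return v, Xc @ v

# Simulated p >> n data: 40 of 400 features share one latent factor z.
rng = np.random.default_rng(0)
n, p, n_signal = 50, 400, 40
z = rng.normal(size=n)
X = rng.normal(size=(n, p))
X[:, :n_signal] = z[:, None] + 0.3 * rng.normal(size=(n, n_signal))

v, scores = sparse_pc1(X, lam=4.5)
support = np.flatnonzero(v)
print("non-zero loadings:", support.size)
```

In practice `lam` would be tuned (for example by cross-validation), and further components would be extracted after deflating the data matrix.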

Feature Selection Protocol: Stability Selection with Lasso

Objective: Reliably identify a stable subset of non-redundant predictive features.

- Subsampling: Draw 100+ random subsamples of the data (e.g., 50% of samples).

- Lasso Application: For each subsample, apply Lasso regression over a regularization path (λ).

- Selection Probability: For each feature, compute the fraction of subsamples in which it is selected at each λ, and take the maximum over the λ path.

- Thresholding: Select features with a selection probability above a pre-defined threshold (e.g., 0.6). Under the assumptions of stability selection, this bounds the expected number of falsely selected features (the per-family error rate).

- Validation: Apply selected features on held-out test data.
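
The subsampling loop can be sketched in a few lines. This version assumes a simple proximal-gradient (ISTA) Lasso solver written in NumPy instead of a library implementation, takes the maximum selection frequency over the λ path, and runs on simulated data; `lasso_ista`, `stability_selection`, and the λ values are illustrative names and settings.

```python
import numpy as np

def lasso_ista(X, y, lam, n_iter=300):
    """Lasso via proximal gradient for (1/2n)||y - Xb||^2 + lam * ||b||_1."""
    n, p = X.shape
    step = n / (np.linalg.norm(X, 2) ** 2)        # 1 / Lipschitz constant of the gradient
    beta = np.zeros(p)
    for _ in range(n_iter):
        grad = -X.T @ (y - X @ beta) / n
        z = beta - step * grad
        beta = np.sign(z) * np.maximum(np.abs(z) - step * lam, 0.0)
    return beta

def stability_selection(X, y, lambdas, n_subsamples=100, frac=0.5,
                        threshold=0.6, seed=0):
    """Return indices of stable features and their selection probabilities."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    m = int(frac * n)
    counts = np.zeros((len(lambdas), p))
    for _ in range(n_subsamples):
        idx = rng.choice(n, size=m, replace=False)   # subsample without replacement
        Xs = X[idx] - X[idx].mean(axis=0)
        ys = y[idx] - y[idx].mean()
        for k, lam in enumerate(lambdas):
            counts[k] += (lasso_ista(Xs, ys, lam) != 0)
    probs = (counts / n_subsamples).max(axis=0)      # max over the lambda path
    return np.flatnonzero(probs >= threshold), probs

# Simulated data: only the first three features carry signal.
rng = np.random.default_rng(0)
n, p = 80, 100
X = rng.normal(size=(n, p))
y = 3.0 * X[:, :3].sum(axis=1) + rng.normal(size=n)

stable, probs = stability_selection(X, y, lambdas=[0.5, 1.0])
print("stable features:", stable.tolist())
```

A library solver with a warm-started regularization path would be faster on real data; the hand-written solver is only there to keep the sketch self-contained.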

Predictive Modeling Protocol: Elastic-Net Regularized Regression

Objective: Build a generalizable predictive model when p >> n.

- Data Split: Partition data into training (70%), validation (15%), and test (15%) sets.

- Model Formulation: Solve:

argmin_{β} (||Y - Xβ||^2 + λ [α||β||_1 + (1-α)||β||^2]). `α` balances L1 (Lasso) and L2 (Ridge) penalties. `λ` controls overall regularization strength.

- Hyperparameter Tuning: Use k-fold cross-validation on the training set to optimize `α` and `λ` (maximize AUC for classification, minimize MSE for regression).

- Model Fitting: Refit model on the entire training set using optimal hyperparameters.

- Evaluation: Assess final model performance on the held-out test set using appropriate metrics.
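
As a rough illustration of this protocol, the sketch below minimizes the objective exactly as written above with proximal gradient descent. It simplifies tuning to a single validation split instead of k-fold cross-validation, and the simulated data and the small `α`/`λ` grid are assumptions, not recommended settings.

```python
import numpy as np

def elastic_net(X, y, lam, alpha, n_iter=500):
    """Minimize ||y - Xb||^2 + lam * (alpha * ||b||_1 + (1 - alpha) * ||b||^2)."""
    ridge = lam * (1.0 - alpha)
    L = 2.0 * np.linalg.norm(X, 2) ** 2 + 2.0 * ridge    # Lipschitz constant of smooth part
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        grad = -2.0 * X.T @ (y - X @ beta) + 2.0 * ridge * beta
        z = beta - grad / L
        beta = np.sign(z) * np.maximum(np.abs(z) - lam * alpha / L, 0.0)  # L1 prox
    return beta

# Simulated p >> n regression problem with five informative features.
rng = np.random.default_rng(0)
n, p = 200, 500
X = rng.normal(size=(n, p))
beta_true = np.zeros(p)
beta_true[:5] = 2.0
y = X @ beta_true + rng.normal(size=n)

# 70 / 15 / 15 split; centering uses training statistics only.
idx = rng.permutation(n)
train, val, test = idx[:140], idx[140:170], idx[170:]
Xc = X - X[train].mean(axis=0)
yc = y - y[train].mean()

def mse(beta, rows):
    return float(np.mean((yc[rows] - Xc[rows] @ beta) ** 2))

# Grid search on the validation split (stand-in for k-fold cross-validation).
grid = [(lam, a) for lam in (10.0, 50.0, 150.0) for a in (0.2, 0.5, 0.9)]
val_scores = [mse(elastic_net(Xc[train], yc[train], lam, a), val) for lam, a in grid]
best_lam, best_alpha = grid[int(np.argmin(val_scores))]

beta_hat = elastic_net(Xc[train], yc[train], best_lam, best_alpha)
test_mse = mse(beta_hat, test)
null_mse = float(np.mean(yc[test] ** 2))     # error of predicting the training mean
print(f"lambda={best_lam}, alpha={best_alpha}, test MSE={test_mse:.2f}, null MSE={null_mse:.2f}")
```

Library implementations often rescale this objective (for example by 1/(2n)), so `λ` values are not directly comparable across tools.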

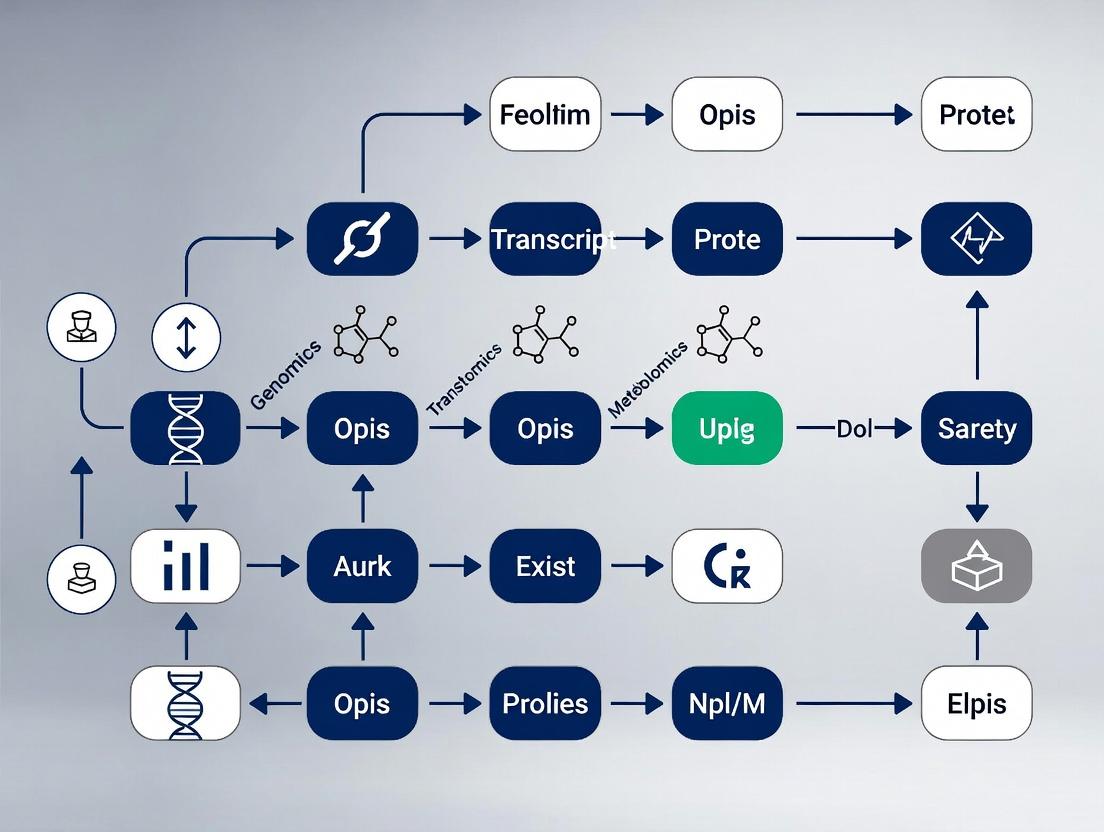

Visualizing Analysis Workflows and Relationships

High-Dimensional Omics Analysis Pipeline

Challenges and Solutions in p>>n Analysis

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for High-Dimensional Omics Studies

| Item | Function & Application | Key Consideration for p>>n Context |

|---|---|---|

| Next-Generation Sequencing Kits (e.g., Illumina NovaSeq) | Generate genome/transcriptome-wide data (high p). | High depth/coverage required for robust feature detection in small n cohorts. |

| Isobaric Labeling Reagents (e.g., TMT, iTRAQ) | Multiplex proteomic samples for relative quantification. | Enables pooling of n samples to reduce batch effects, critical for small-n studies. |

| Single-Cell RNA-Seq Kits (e.g., 10x Genomics Chromium) | Profile transcriptomes of thousands of single cells. | Creates artificial p>>n datasets (cells as samples, genes as features) for subpopulation discovery. |

| High-Performance LC Columns (e.g., C18 reversed-phase) | Separate complex metabolite/protein mixtures prior to MS. | Maximizing feature resolution (p) from minimal sample input (small n). |

| Stable Isotope-Labeled Internal Standards | Absolute quantification in metabolomics/proteomics. | Essential for technical normalization to control variance in high-p data from few n. |

| Multi-omics Integration Software (e.g., MOFA, mixOmics) | Statistically integrate multiple p>>n data layers. | Key reagent for joint analysis, providing algorithms to handle shared variance. |

| CRISPR Screening Libraries (e.g., whole-genome sgRNA) | Functional genomics to link high-p molecular data to phenotype. | Enables causal validation of features identified from initial p>>n discovery cohort. |

The central challenge in modern biology is integrating high-dimensional data from multiple molecular layers to construct a predictive, systems-level understanding of physiology and disease. Each "omic" stratum provides a distinct but interconnected snapshot of biological state, governed by complex, non-linear regulatory networks. This whitepaper provides a technical overview of each layer, its measurement technologies, and the experimental protocols that generate the data fueling integrative multi-omics research.

The Omics Layers: Technologies, Data, and Protocols

Table 1: Core Omics Layers: Scope, Key Technologies, and Output Data

| Omics Layer | Molecular Entity Measured | Core High-Throughput Technologies | Primary Data Output & Scale |

|---|---|---|---|

| Genomics | DNA Sequence (Static Code) | Next-Generation Sequencing (NGS), Long-Read Sequencing (PacBio, Nanopore) | Variant calls (SNVs, Indels, CNVs), Reference genome alignment. ~3.2 billion bases (human haploid genome). |

| Epigenomics | DNA & Histone Modifications (Dynamic Regulators) | Bisulfite-Seq (Methylation), ChIP-Seq (Histones/TFs), ATAC-Seq (Chromatin Accessibility) | Methylation ratios, chromatin accessibility peaks, histone mark peaks. Millions of genomic loci. |

| Transcriptomics | RNA Levels (Expression Dynamics) | RNA-Seq, Single-Cell RNA-Seq (scRNA-seq), Spatial Transcriptomics | Read counts per gene/isoform. Tens of thousands of transcripts per sample. |

| Proteomics | Proteins & Modifications (Functional Effectors) | Mass Spectrometry (LC-MS/MS), Affinity-Based Arrays (Olink), RPPA | Peptide spectra counts, protein abundance/phosphorylation levels. Thousands to tens of thousands of proteins. |

| Metabolomics | Small-Molecule Metabolites (Metabolic Phenotype) | Mass Spectrometry (GC-MS, LC-MS), Nuclear Magnetic Resonance (NMR) | Spectral peak intensities identifying metabolites. Hundreds to thousands of metabolites. |

Table 2: Quantitative Data Characteristics and Dimensionality

| Layer | Typical Features per Sample | Dynamic Range | Technical Noise Sources | Batch Effect Sensitivity |

|---|---|---|---|---|

| Genomics | ~4-5 million variants (vs. reference) | Binary or low (0,1,2 copies) | Sequencing errors, coverage bias | Moderate |

| Epigenomics | ~1-2 million differentially methylated regions/CpGs; ~100k peaks | Wide (0-100% methylation) | Antibody specificity (ChIP), bisulfite conversion efficiency | High |

| Transcriptomics | ~20,000 coding genes; >100,000 isoforms | >10⁵ | Amplification bias, ribosomal RNA depletion efficiency | Very High |

| Proteomics | ~10,000 proteins (deep profiling) | >10⁶ | Ionization efficiency, sample digestion variability | High |

| Metabolomics | ~1,000-10,000 annotated peaks | >10⁹ | Extraction efficiency, instrument drift | Very High |

Detailed Experimental Methodologies

Whole Genome Sequencing (WGS) for Genomics

- Protocol: 1. DNA Extraction: Use column-based or magnetic bead kits for high-molecular-weight DNA. 2. Library Preparation: Fragment DNA via sonication, then end-repair, A-tail, and ligate sequencing adapters. 3. PCR Amplification: Limited-cycle PCR to enrich adapter-ligated fragments. 4. Sequencing: Load onto an Illumina platform (e.g., NovaSeq) for paired-end sequencing (2x150 bp). 5. Bioinformatics: Align reads to a reference (e.g., GRCh38) using BWA-MEM, then call variants with GATK.

- Key Quality Metrics: Coverage depth (≥30x for WGS), mapping rate (>95%), Q30 score (>80%).
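
The coverage target translates into a read budget through the standard relation coverage = (number of reads × read length) / genome size. The helper names below are illustrative, and the default genome size assumes a haploid human reference of roughly 3.2 Gb.

```python
def mean_coverage(n_reads, read_length_bp, genome_size_bp=3.2e9):
    """Expected mean depth: total sequenced bases divided by genome size."""
    return n_reads * read_length_bp / genome_size_bp

def reads_needed(target_coverage, read_length_bp, genome_size_bp=3.2e9):
    """Number of reads required to reach a target mean depth."""
    return target_coverage * genome_size_bp / read_length_bp

reads_30x = reads_needed(30, 150)          # individual 150 bp reads
pairs_30x = reads_30x / 2                  # 2x150 bp read pairs
print(f"{reads_30x:.2e} reads ({pairs_30x:.2e} pairs) for 30x")
```

This is a planning estimate only: duplicates, unmapped reads, and uneven coverage mean that more raw reads are usually sequenced than the formula suggests.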

Bulk RNA-Sequencing for Transcriptomics

- Protocol: 1. RNA Extraction: Trizol or column-based extraction, with DNase I treatment. 2. RNA Integrity Check: RIN > 7.0 on Bioanalyzer. 3. Library Prep: Poly-A selection for mRNA or ribosomal RNA depletion for total RNA. Reverse transcription, second-strand synthesis, adapter ligation, and PCR amplification. 4. Sequencing: Illumina platform, typically 20-40 million reads per sample. 5. Analysis: Alignment (STAR), quantification (featureCounts), differential expression (DESeq2/edgeR).

- Key Consideration: Stranded protocols preserve transcript orientation.

Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) for Proteomics

- Protocol (Data-Dependent Acquisition - DDA): 1. Protein Extraction & Digestion: Lyse cells/tissue in RIPA buffer, reduce (DTT), alkylate (IAA), digest with trypsin. 2. Desalting: Use C18 solid-phase extraction tips. 3. LC Separation: Load peptides onto a C18 column with nano-flow HPLC and a 60-120 min organic gradient. 4. MS Analysis: Ionize via electrospray; full MS scan (MS1) followed by isolation/fragmentation of top N ions for MS2 scans. 5. Database Search: Match MS2 spectra to theoretical spectra from protein sequence databases using Sequest/MaxQuant.

- Alternative: Data-Independent Acquisition (DIA/SWATH) for higher reproducibility.

Visualizing Multi-omics Relationships and Workflows

Title: Information Flow Between Omics Layers

Title: Generic Multi-omics Experimental and Computational Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Multi-omics Research

| Category | Item | Function & Application |

|---|---|---|

| Nucleic Acid Analysis | Poly(A) Magnetic Beads | Isolation of messenger RNA from total RNA for RNA-seq library prep. |

| | Tn5 Transposase (Tagmentase) | Enzymatic fragmentation and simultaneous adapter tagging of DNA for ATAC-seq and NGS library prep. |

| | Bisulfite Conversion Kit | Chemical treatment that converts unmethylated cytosine to uracil for methylation profiling. |

| Protein/Metabolite Analysis | Trypsin, Sequencing Grade | Protease for specific digestion of proteins into peptides for LC-MS/MS analysis. |

| | C18 Solid Phase Extraction (SPE) Tips | Desalting and concentration of peptide or metabolite samples prior to MS injection. |

| | Stable Isotope-Labeled Internal Standards | Absolute quantification and correction for ionization efficiency in targeted MS assays. |

| Single-Cell/Spatial | Barcoded Gel Beads (10x Genomics) | Partitioning of single cells and mRNA for droplet-based scRNA-seq. |

| | Visium Spatial Gene Expression Slide | Array-coated slide for capturing mRNA from tissue sections while preserving location data. |

| General | DNase/RNase Inhibitors | Protect nucleic acids from degradation during sample processing. |

| | Proteinase K | Broad-spectrum protease for digesting contaminants during nucleic acid extraction. |

| | Magnetic Bead-Based Cleanup Kits | High-throughput purification and size selection of DNA/RNA libraries. |

Within the broader thesis on explaining high-dimensionality in multi-omics research, the intrinsic characteristics of raw data form the fundamental layer of complexity. These inherent features—sparsity, noise, batch effects, and technical artifacts—are not mere nuisances but constitutive elements that shape all downstream analytical validity. Successfully deconvoluting biological signals from these embedded technical confounders is the critical first step in constructing robust, biologically interpretable models from multi-tiered omics datasets (genomics, transcriptomics, proteomics, metabolomics). This guide provides a technical deep dive into these characteristics, their origins, and methodologies for their quantification and mitigation.

Sparsity

Sparsity refers to data matrices where most entries are zeros or missing values. In multi-omics, this arises from biological reality (e.g., most metabolites not present in a sample) and technical limitations (detection thresholds of mass spectrometers).

Table 1: Quantitative Characterization of Sparsity Across Omics Layers

| Omics Layer | Typical Assay | Approx. Sparsity Range | Primary Cause |

|---|---|---|---|

| Single-Cell RNA-seq | 10x Genomics | 80-95% | Dropout events, low mRNA copy number |

| Metabolomics | LC-MS (untargeted) | 60-90% | Detection limits, biological absence |

| Proteomics | Shotgun LC-MS/MS | 50-85% | Dynamic range, ionization efficiency |

| Methylomics | Whole-genome bisulfite seq | 40-70% | Focused methylation patterns |

Protocol 1.1: Evaluating Sparsity with the Sparsity Index

- Data Input: Start with a pre-processed count/abundance matrix \( M \) of shape \( m \times n \) (m features, n samples).

- Define Detection Threshold: For each feature, define a detection limit \( L \) (e.g., 1 count for sequencing, noise level for MS).

- Compute Sparsity per Feature: For feature \( i \), \( S_i = \frac{\text{Count}(M_{ij} < L)}{n} \).

- Aggregate: Report global sparsity as \( S = \frac{1}{m} \sum_{i=1}^{m} S_i \). Visualize the distribution of \( S_i \).
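
A small sketch of this calculation, assuming a features-by-samples NumPy array and a single detection limit shared by all features. Treating missing values (`NaN`) as undetected is an added assumption, and the toy matrix is made up for illustration.

```python
import numpy as np

def sparsity_index(M, detection_limit=1.0):
    """Per-feature and global sparsity for a (features x samples) matrix."""
    M = np.asarray(M, dtype=float)
    undetected = (M < detection_limit) | np.isnan(M)   # below limit or missing
    per_feature = undetected.mean(axis=1)              # S_i: fraction of samples
    return per_feature, float(per_feature.mean())      # S: mean of S_i

# Toy matrix: 3 features (rows) measured in 4 samples (columns).
M = np.array([
    [0, 0, 5, 0],
    [3, 2, 0, 7],
    [0, 0, 0, 0],
])
per_feature, global_s = sparsity_index(M)
print(per_feature, round(global_s, 3))
```

Per-feature detection limits could be supported by passing a column vector of limits instead of a scalar.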

Noise

Noise encompasses stochastic variability obscuring the true biological signal. It is categorized as:

- Technical Noise: Introduced by measurement platforms (e.g., sequencing errors, MS detector noise).

- Biological Noise: Intrinsic stochasticity in molecular processes (e.g., transcriptional bursting).

Table 2: Noise Sources and Magnitude Estimates

| Noise Type | Omics Context | Estimation Method | Typical CV Range |

|---|---|---|---|

| Poisson Technical Noise | NGS Read Counts | Mean-Variance Relationship | 10-30% |

| Additive Gaussian Noise | Microarray Intensity | Replicate Analysis | 5-15% |

| Multiplicative Noise | LC-MS Peak Area | Signal-Dependent Models | 15-40% |

Protocol 2.1: Technical Noise Estimation via ERCC Spike-Ins

- Spike-in Addition: Add a known quantity of External RNA Controls Consortium (ERCC) synthetic RNAs to your sample prior to RNA-seq library prep.

- Sequencing & Quantification: Sequence and map reads to the ERCC reference. Obtain expected input concentrations (from mix) and observed read counts.

- Model Fitting: Fit a generalized linear model (e.g., negative binomial with a log link) of observed counts on log(expected concentration).

- Parameter Extraction: The dispersion parameter of the model quantifies the technical noise exceeding Poisson expectation.
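
The idea can be illustrated without a full GLM fit. The sketch below substitutes a simpler two-step estimate for the negative binomial model: a log-log least-squares fit for the mean, followed by a method-of-moments dispersion estimate based on var = μ + φμ². The spike-in concentrations, replicate count, and true dispersion are simulated assumptions, not ERCC values.

```python
import numpy as np

rng = np.random.default_rng(1)
n_spikes, n_reps, true_phi = 60, 4, 0.2

# Hypothetical expected input concentrations spanning three orders of magnitude.
conc = np.logspace(2, 5, n_spikes)
mu = 0.5 * conc[:, None] * np.ones((1, n_reps))

# Gamma-Poisson mixture = negative binomial with variance mu + phi * mu^2.
rates = rng.gamma(shape=1.0 / true_phi, scale=true_phi * mu)
counts = rng.poisson(rates)

# Step 1: log-log fit of mean observed count against expected concentration.
slope, intercept = np.polyfit(np.log(conc), np.log(counts.mean(axis=1)), 1)
mu_hat = np.exp(intercept + slope * np.log(conc))[:, None]

# Step 2: moment estimate of the dispersion beyond Poisson noise.
phi_hat = float(np.mean(((counts - mu_hat) ** 2 - mu_hat) / mu_hat ** 2))
print(f"slope={slope:.2f}, estimated dispersion={phi_hat:.2f} (simulated truth {true_phi})")
```

A dispersion near zero would indicate Poisson-like technical noise; a proper negative binomial GLM gives standard errors and handles low counts better than this moment estimate.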

Batch Effects

Batch effects are systematic non-biological differences introduced when samples are processed in different groups (batches). They are a dominant confounder in multi-omics integration.

Diagram 1: Workflow illustrating batch effect introduction.

Protocol 3.1: Batch Effect Detection with Principal Component Analysis (PCA)

- Data Preparation: Create a combined normalized matrix of all samples across batches. Include batch metadata.

- PCA Execution: Perform PCA on the combined matrix (features as variables).

- Variance Inspection: Examine the proportion of variance explained by the first few principal components (PCs).

- Association Testing: Statistically test (e.g., PERMANOVA, linear regression) the association between the first 3-5 PCs and batch labels vs. biological conditions. A strong association with batch indicates a significant batch effect.
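
A minimal sketch of this check, assuming a samples-by-features matrix and using the one-way ANOVA R² of each principal component against a label as the association measure (a simpler stand-in for PERMANOVA). The simulated batch and condition shifts are arbitrary.

```python
import numpy as np

def pca_scores(X, n_components=5):
    """PCA via SVD on a (samples x features) matrix; returns scores and variance ratios."""
    Xc = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    var_explained = S ** 2 / np.sum(S ** 2)
    return U[:, :n_components] * S[:n_components], var_explained[:n_components]

def r_squared_with_labels(score, labels):
    """Fraction of a PC's variance explained by a categorical label."""
    labels = np.asarray(labels)
    grand = score.mean()
    ss_total = np.sum((score - grand) ** 2)
    ss_between = sum(
        np.sum(labels == g) * (score[labels == g].mean() - grand) ** 2
        for g in np.unique(labels)
    )
    return float(ss_between / ss_total)

# Simulated data: a strong batch shift and a weaker biological shift.
rng = np.random.default_rng(0)
n, p = 40, 1000
batch = np.repeat([0, 1], n // 2)
condition = np.tile([0, 1], n // 2)          # balanced across batches
X = rng.normal(size=(n, p))
X[batch == 1, :300] += 1.5
X[condition == 1, 300:350] += 0.5

scores, var_explained = pca_scores(X)
r2_batch = r_squared_with_labels(scores[:, 0], batch)
r2_condition = r_squared_with_labels(scores[:, 0], condition)
print(f"PC1: R2 with batch={r2_batch:.2f}, with condition={r2_condition:.2f}")
```

Here PC1 tracks batch rather than biology, which is the pattern that signals a batch effect needing correction.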

Technical Artifacts

Technical artifacts are specific, often sporadic, distortions caused by equipment malfunctions or protocol failures (e.g., column bubbles in LC, image scratches in arrays).

The Scientist's Toolkit: Key Reagent Solutions for Artifact Mitigation

| Item | Function in Multi-omics | Example Product/Brand |

|---|---|---|

| UMI Adapters | Unique Molecular Identifiers to correct PCR amplification bias and errors in NGS. | Illumina TruSeq UD Indexes |

| Internal Standard Mix | Spike-in cocktails for mass spectrometry to normalize for ionization efficiency and instrument drift. | MS-Cheker Proteomics Standard, Biocrates MxP Quant 500 Kit |

| Digestion Control Proteins | Monitors completeness and consistency of protein digestion in proteomics. | MS-SMA RTX Digestion Control |

| ERCC Spike-in Mix | Defined RNA spike-ins for absolute quantification and noise modeling in RNA-seq. | Thermo Fisher Scientific ERCC ExFold RNA Spike-In Mixes |

| Bisulfite Conversion Control | Assesses the efficiency of bisulfite conversion in methylation sequencing. | Qiagen EpiTect Control DNA |

| Blocking Reagents | Reduce non-specific binding in microarray or spatial transcriptomics assays. | Cot-1 DNA, BSA, Formamide |

Integrated Mitigation Workflow

Addressing these characteristics requires a sequential, layered approach.

Diagram 2: Sequential workflow for mitigating intrinsic data issues.

Protocol 4.1: Integrated Preprocessing for scRNA-seq Data

- Artifact/Quality Filtering (Step 1): Use `CellRanger` or `Seurat` to remove cells with high mitochondrial gene percentage (>20%) or low unique gene counts. Remove genes detected in <10 cells.

- Sparsity & Noise Handling (Step 2):

  - Normalization: Scale reads per cell using total-count normalization (e.g., `Seurat::NormalizeData`).

  - Imputation: Use a model-based approach (e.g., `ALRA`, `MAGIC`) cautiously to fill plausible zeros without over-smoothing.

- Batch Correction (Step 3): Apply integration algorithms that anchor datasets (e.g., `Seurat::FindIntegrationAnchors`, `Harmony`, `scVI`) to align cells across batches in a shared low-dimensional space.

- Validation: Confirm that known cell-type markers form coherent clusters post-correction and that batch-specific separation is minimized in visualizations like UMAP.
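
Steps 1 and 2 (filtering and normalization) can be sketched in plain NumPy to show what the toolkits do under the hood. This is not the Seurat or CellRanger implementation: the function name, the `min_genes` cutoff (the protocol gives no number for "low unique gene counts"), and the simulated count matrix are all assumptions.

```python
import numpy as np

def preprocess_counts(counts, is_mito, max_mito_frac=0.20, min_genes=200,
                      min_cells=10, scale=1e4):
    """Filter and normalize a (cells x genes) raw count matrix."""
    counts = np.asarray(counts, dtype=float)
    total = counts.sum(axis=1)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(total, 1.0)
    genes_per_cell = (counts > 0).sum(axis=1)

    # Step 1a: drop cells with high mitochondrial fraction or few detected genes.
    keep_cells = (mito_frac <= max_mito_frac) & (genes_per_cell >= min_genes)
    counts = counts[keep_cells]

    # Step 1b: drop genes detected in fewer than min_cells of the remaining cells.
    keep_genes = (counts > 0).sum(axis=0) >= min_cells
    counts = counts[:, keep_genes]

    # Step 2: total-count normalization to `scale` counts per cell, then log1p.
    size = np.maximum(counts.sum(axis=1, keepdims=True), 1.0)
    return np.log1p(counts / size * scale), keep_cells, keep_genes

# Simulated matrix: 50 cells x 300 genes; the first 10 genes play the mitochondrial role.
rng = np.random.default_rng(0)
counts = rng.poisson(1.0, size=(50, 300))
is_mito = np.zeros(300, dtype=bool)
is_mito[:10] = True
counts[0, :10] = 100          # cell 0 is dominated by mitochondrial reads
counts[:, 299] = 0            # gene 299 is never detected

norm, keep_cells, keep_genes = preprocess_counts(counts, is_mito, min_genes=100)
print(norm.shape)
```

Imputation and batch correction (Steps 2b and 3) rely on dedicated models and are better left to the tools named above.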

In high-dimensional multi-omics research, data is not merely collected but constructed through a complex interplay of biology and technology. Sparsity, noise, batch effects, and artifacts are intrinsic to this construction. A rigorous, stepwise experimental and computational protocol to characterize and mitigate these issues is non-negotiable for deriving biologically truthful conclusions. This foundational work enables the subsequent robust integration of omics layers, driving discoveries in systems biology and therapeutic development.

Within the context of multi-omics data high-dimensionality research, understanding the biological origins of data complexity is paramount. This technical guide deconstructs the primary sources of dimensionality across biological strata, from discrete genetic variation to emergent pathway dynamics. The inherent high-dimensionality of integrated omics datasets is not merely a statistical challenge but a direct reflection of the multi-layered, interconnected architecture of biological systems.

Genetic Variants: The Primary Layer of Variation

The foundational source of dimensionality in human populations stems from genomic variation. Each variant represents a potential dimension contributing to phenotypic diversity and disease susceptibility.

Types and Frequencies of Genetic Variants

Quantitative data on variant types and their population frequencies are summarized in Table 1.

Table 1: Spectrum and Scale of Human Genetic Variation

| Variant Type | Approximate Count in Human Genome (per individual) | Typical Allele Frequency Range (in populations) | Contribution to Multi-omics Dimensionality |

|---|---|---|---|

| Single Nucleotide Polymorphism (SNP) | 4-5 million | Common (>1%) to Rare (0.1-1%) | Primary source for GWAS; millions of potential features. |

| Insertion/Deletion (Indel) | 300,000 - 500,000 | Wide range, often low frequency | Adds alignment complexity in sequencing data. |

| Copy Number Variation (CNV) | ~1,000 (>1kb) | Variable, often <1% | Alters gene dosage; non-linear transcriptional effects. |

| Tandem Repeat | Millions (mostly short) | Highly polymorphic | Challenging to assay; source of regulatory and coding variation. |

| Structural Variation (SV) | ~2,000-3,000 | Mostly rare | Major chromosomal changes; high-impact features. |

Key Experimental Protocol: Genome-Wide Association Study (GWAS)

Objective: To identify statistically significant associations between genetic variants (typically SNPs) and a trait or disease.

Detailed Methodology:

- Cohort Genotyping: DNA from case and control cohorts is genotyped using high-density microarray chips (e.g., Illumina Global Screening Array, ~1M markers) or via whole-genome sequencing (WGS).

- Quality Control (QC): Per-sample and per-variant filtering is applied.

- Sample QC: Remove samples with high missingness (>5%), sex discrepancies, or extreme heterozygosity.

- Variant QC: Exclude variants with high missingness (>2%), significant deviation from Hardy-Weinberg Equilibrium (HWE p<1e-6 in controls), and low minor allele frequency (MAF < 1% for common variant studies).

- Imputation: Genotyped variants are statistically imputed to a reference panel (e.g., 1000 Genomes, Haplotype Reference Consortium) to increase density to ~10-30 million variants.

- Population Stratification: Principal Component Analysis (PCA) is performed on genotyping data to derive ancestry covariates.

- Association Testing: For each imputed variant, a statistical model (e.g., logistic regression for case-control) tests the null hypothesis of no association. The model includes covariates for ancestry (PCs), age, and sex.

Phenotype ~ β0 + β1*(Genotype Dosage) + β2*(PC1) + ... + βn*(Covariate_n)

- Multiple Testing Correction: A genome-wide significance threshold is applied (typically p < 5e-8).

- Replication & Meta-analysis: Significant loci are validated in an independent cohort, followed by meta-analysis to combine evidence.
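
The association-testing and multiple-testing steps can be sketched on simulated data. For brevity this version assumes a quantitative trait and ordinary linear regression (not the logistic model used for case-control designs), adjusts for covariates by projecting them out of genotypes and phenotype, and uses a normal approximation for p-values. All data, the causal variant index, and the function name are hypothetical.

```python
import math
import numpy as np

def gwas_linear(G, y, covariates):
    """Per-variant additive test for a quantitative trait, adjusted for covariates."""
    n = len(y)
    C = np.column_stack([np.ones(n), covariates])
    Q, _ = np.linalg.qr(C)                       # orthonormal basis of covariate space
    y_r = y - Q @ (Q.T @ y)                      # residualized phenotype
    G_r = G - Q @ (Q.T @ G)                      # residualized genotypes
    gg = np.sum(G_r ** 2, axis=0)
    beta = G_r.T @ y_r / gg                      # per-variant effect estimates
    dof = n - C.shape[1] - 1
    resid_var = (np.sum(y_r ** 2) - beta ** 2 * gg) / dof
    z = beta / np.sqrt(resid_var / gg)
    # Two-sided p-values from the normal approximation (reasonable for large n).
    pvals = np.array([math.erfc(abs(zi) / math.sqrt(2.0)) for zi in z])
    return beta, pvals

rng = np.random.default_rng(0)
n, m, causal = 2000, 300, 42
maf = rng.uniform(0.05, 0.5, size=m)
maf[causal] = 0.3
G = rng.binomial(2, maf, size=(n, m)).astype(float)      # genotype dosages 0/1/2
age = rng.uniform(30, 70, size=n)
sex = rng.integers(0, 2, size=n)
pc1 = rng.normal(size=n)                                  # stand-in ancestry PC
y = 0.5 * G[:, causal] + 0.02 * age + rng.normal(size=n)

beta, pvals = gwas_linear(G, y, np.column_stack([pc1, age, sex]))
hits = np.flatnonzero(pvals < 5e-8)                       # genome-wide threshold
print("genome-wide significant variants:", hits.tolist())
```

Production analyses use dedicated tools that handle relatedness, imputation uncertainty, and binary traits; the sketch only shows the per-variant test and threshold.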

Visualization: From Variant to Gene Function

Flow of Genetic Variant Effects Across Molecular Layers

Transcriptional & Epigenetic Regulation: Amplifying Dimensionality

The mapping from genome to transcriptome is not one-to-one. Regulatory mechanisms multiply the potential feature space well beyond the number of genes.

Quantitative Dimensions of Regulation

Table 2: Sources of Dimensionality in Transcriptional Regulation

| Regulatory Layer | Measurable Features | Approximate Scale in Humans | Technology |

|---|---|---|---|

| Gene Expression | Transcript counts per gene | ~20,000 coding genes | RNA-seq, Microarrays |

| Isoform Usage | Transcript isoforms per gene | ~100,000+ total isoforms | Isoform-specific RNA-seq |

| Chromatin Accessibility | Accessible chromatin regions | ~100,000 - 1 million peaks | ATAC-seq, DNase-seq |

| DNA Methylation | CpG site methylation status | ~28 million CpG sites | Whole-genome bisulfite sequencing |

| Histone Modifications | Enrichment of specific marks (e.g., H3K27ac) | Multiple marks x genomic bins | ChIP-seq |

| Chromatin Conformation | Genomic interaction loci | Millions of potential contacts | Hi-C, ChIA-PET |

Key Experimental Protocol: Assay for Transposase-Accessible Chromatin with Sequencing (ATAC-seq)

Objective: To identify genome-wide regions of open chromatin, indicative of regulatory activity.

Detailed Methodology:

- Nuclei Isolation: Cells are lysed in a mild detergent buffer to isolate intact nuclei. Critical: keep samples cold and use fresh or flash-frozen tissue.

- Tagmentation: Isolated nuclei are incubated with the Tn5 transposase pre-loaded with sequencing adapters. Tn5 simultaneously fragments accessible DNA and inserts adapters.

- Reaction: 25,000-50,000 nuclei, 1x TD Buffer, Tn5 enzyme (Illumina), 37°C for 30 min.

- DNA Purification: Tagmented DNA is purified using a standard column-based PCR cleanup kit.

- PCR Amplification: Purified DNA is amplified with barcoded primers for 10-12 cycles to generate the sequencing library. Use a qPCR side-reaction to determine optimal cycle number.

- Library QC & Sequencing: Libraries are size-selected (typically 150-600 bp fragments) and sequenced on an Illumina platform, PE 75-150 bp.

- Bioinformatics Analysis:

- Alignment: Reads are aligned to a reference genome (e.g., hg38) using aligners like BWA-MEM.

- Peak Calling: Regions of significant enrichment (peaks) are identified using tools like MACS2, representing open chromatin.

- Motif Analysis & Annotation: Peaks are annotated to nearby genes and analyzed for transcription factor binding motifs using HOMER or MEME.

Metabolic Pathways: Integrating Dimensions into Systems

Pathways represent a higher-order source of dimensionality, where non-linear interactions between molecules create emergent, systems-level features.

Visualization: Multi-omics Data Integration into Pathway Context

Multi-omics Integration for Pathway-Centric Analysis

Key Experimental Protocol: Untargeted Liquid Chromatography-Mass Spectrometry (LC-MS) Metabolomics

Objective: To comprehensively profile the small-molecule metabolome in a biological sample.

Detailed Methodology:

- Sample Preparation: Cells/tissue are quenched rapidly (liquid N2). Metabolites are extracted using a cold solvent mixture (e.g., 80% methanol/water). Internal standards are added for QC. After vortexing and centrifugation, the supernatant is dried and reconstituted in LC-MS compatible solvent.

- Liquid Chromatography: The extract is injected onto a reversed-phase (e.g., C18) or hydrophilic interaction (HILIC) column for separation. A gradient from aqueous to organic solvent elutes metabolites over 15-25 minutes.

- Mass Spectrometry: Eluate is ionized via electrospray ionization (ESI) in positive and negative modes separately. A high-resolution mass spectrometer (e.g., Q-TOF, Orbitrap) performs data-dependent acquisition (DDA): a full MS1 scan (e.g., m/z 50-1200) is followed by MS2 fragmentation scans of the most intense ions.

- Data Processing:

- Feature Detection: Software (e.g., XCMS, MS-DIAL) aligns chromatograms, picks peaks, and deconvolutes isotopes/adducts to create a feature table (m/z, retention time, intensity).

- Annotation: Features are annotated by matching m/z (±5 ppm) and MS2 spectra to databases (e.g., HMDB, METLIN, GNPS). Confidence levels (1-4) are assigned.

- Pathway Analysis: Statistically altered metabolites are mapped to biochemical pathways using tools like MetaboAnalyst or Mummichog to identify perturbed pathways.
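
The ±5 ppm matching rule in the annotation step reduces to a relative mass error check. The sketch below assumes positive-mode [M+H]+ adducts only and a three-entry reference table of monoisotopic masses chosen for illustration; real annotation searches full databases, multiple adducts, and MS2 spectra.

```python
PROTON_MASS = 1.007276  # Da, added to the neutral mass for an [M+H]+ ion

# Tiny illustrative reference table: monoisotopic neutral masses in Da.
REFERENCE = {
    "glucose": 180.06339,
    "glutamine": 146.06914,
    "lactic acid": 90.03169,
}

def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6

def annotate(observed_mz, tolerance_ppm=5.0):
    """Return (name, ppm error) for every reference [M+H]+ ion within tolerance."""
    hits = []
    for name, neutral_mass in REFERENCE.items():
        err = ppm_error(observed_mz, neutral_mass + PROTON_MASS)
        if abs(err) <= tolerance_ppm:
            hits.append((name, round(err, 2)))
    return hits

print(annotate(181.0707))   # within 5 ppm of glucose [M+H]+
print(annotate(181.0750))   # about 24 ppm away: no match
```

An m/z match alone corresponds to a low-confidence annotation; matching MS2 spectra or an authentic standard is needed for higher confidence levels.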

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents and Platforms for Multi-omics Dimensionality Research

| Item Name | Vendor Examples | Primary Function in Research |

|---|---|---|

| Illumina DNA/RNA Prep Kits | Illumina | Library preparation for next-generation sequencing (NGS) of genomic DNA or RNA. |

| NovaSeq 6000 Reagent Kits | Illumina | High-output sequencing reagents for whole-genome, transcriptome, or epigenome profiling. |

| QIAamp DNA/RNA Mini Kits | Qiagen | Reliable purification of high-quality genomic DNA or total RNA from tissues/cells. |

| Tn5 Transposase | Illumina (Nextera) / DIY | Enzyme for simultaneous fragmentation and tagging of DNA in ATAC-seq and other tagmentation assays. |

| RNeasy Plus Mini Kit | Qiagen | Purifies RNA while eliminating genomic DNA contamination, critical for RNA-seq. |

| Pierce BCA Protein Assay Kit | Thermo Fisher Scientific | Colorimetric quantification of protein concentration for proteomics sample normalization. |

| TMTpro 16plex | Thermo Fisher Scientific | Tandem Mass Tag reagents for multiplexed quantitative proteomics of up to 16 samples. |

| Seahorse XFp Cell Mito Stress Test Kit | Agilent Technologies | Measures key parameters of mitochondrial function (OCR, ECAR) as a functional metabolic readout. |

| Cytiva HiPrep Columns | Cytiva | For FPLC-based protein purification, essential for enzymatic assays in pathway studies. |

| C18 & HILIC LC Columns | Waters, Thermo Fisher | Chromatographic separation of complex metabolite mixtures prior to MS detection. |

| PBS, FBS, Trypsin-EDTA | Various (Gibco, Sigma) | Fundamental cell culture reagents for maintaining experimental biological systems. |

| Sodium Pyruvate, Glucose, Glutamine | Sigma-Aldrich | Key metabolic substrates added to culture media to control nutrient environment for experiments. |

Within the context of multi-omics research, the curse of dimensionality presents a formidable barrier to biological insight. This whitepaper details how high-dimensional data from genomics, transcriptomics, proteomics, and metabolomics renders traditional statistical methods (e.g., linear regression, hypothesis testing) ineffective due to data sparsity, multicollinearity, and the exponential growth of the search space. We present current methodologies for dimensionality reduction and feature selection, critical for meaningful analysis in drug development.

Multi-omics integration involves the simultaneous analysis of millions of features (p) from a limited number of biological samples (n), creating an n << p problem. This high-dimensional space is where the curse of dimensionality manifests, invalidating assumptions foundational to classical statistics.

Quantitative Manifestations of the Curse

The core issues are summarized in the following table:

Table 1: Key Challenges in High-Dimensional Multi-omics Data

| Phenomenon | Description | Quantitative Impact | Consequence for Traditional Methods |

|---|---|---|---|

| Data Sparsity | Samples become isolated in vast feature space. | In a 10,000-D unit hypercube, the median distance between points approaches ~1.0; data is no longer "dense". | Nearest-neighbor algorithms fail; overfitting becomes inevitable. |

| Multicollinearity | Extreme correlation between features (e.g., gene co-expression). | Correlation matrices become singular or ill-conditioned; determinant ~0. | Linear regression coefficient estimates become unstable, with variance that grows without bound as the matrix approaches singularity. |

| Multiple Testing Burden | Testing millions of hypotheses (e.g., differential expression). | For 1M tests at α=0.05, 50,000 false positives are expected by chance if every null hypothesis is true. | Without correction, the family-wise error rate (FWER) approaches 1; stringent corrections such as Bonferroni sharply reduce power. |

| Distance Concentration | Euclidean distances between points become similar. | Relative contrast (max-min)/min of distances converges to 0 as dimensions grow. | Clustering and classification lose discriminative power. |

| Empty Space Phenomenon | Volume concentrates in the "corners" of the space. | For a D-dimensional sphere inscribed in a unit cube, volume ratio → 0 as D increases. | Sampling becomes inefficient; most of the space is empty. |
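The distance concentration row can be checked numerically. The following is a minimal sketch, assuming uniformly distributed points in a unit hypercube (real omics data are not uniform, so the numbers are only indicative); the function name `relative_contrast` and the sample sizes are illustrative choices.

```python
import numpy as np

def relative_contrast(n_points, dim, rng):
    """(max - min) / min of Euclidean distances from one query point to the rest."""
    points = rng.random((n_points, dim))   # uniform samples in the unit hypercube
    query = rng.random(dim)
    dists = np.linalg.norm(points - query, axis=1)
    return float((dists.max() - dists.min()) / dists.min())

rng = np.random.default_rng(0)
contrasts = {d: relative_contrast(200, d, rng) for d in (2, 10, 100, 1_000, 10_000)}

for d, c in contrasts.items():
    print(f"D={d:>6}  relative contrast = {c:.3f}")
```

The printed contrast shrinks as D grows, which is why nearest and farthest neighbors become hard to tell apart in high-dimensional feature spaces.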

Experimental Protocol: A Typical High-Dimensional Multi-omics Analysis

Protocol Title: Dimensionality Reduction and Feature Selection for Integrative Multi-omics Analysis.

Objective: To identify a robust, low-dimensional representation of integrated genomics, transcriptomics, and proteomics data for predictive biomarker discovery.

Materials & Workflow:

Diagram Title: Multi-omics Analysis Workflow with Dimensionality Mitigation

Detailed Protocol Steps:

Data Acquisition & QC (n=100, p>1,000,000):

- Whole Genome Sequencing (WGS): Process ~3 billion base pairs/sample. Filter out variants with MAF < 0.01 or call quality < 20.

- RNA-Seq: Process ~50 million reads/sample. Normalize using TMM (Trimmed Mean of M-values) or DESeq2's median-of-ratios method.

- Mass Spectrometry Proteomics: Process ~10,000 peptides/sample. Normalize using median centering and log2 transformation.

Data Integration:

- Method: Use Multi-Omics Factor Analysis (MOFA+) or Similarity Network Fusion.

- Procedure: Input matrices are centered and scaled. MOFA+ models the data as a linear combination of a small number (k=10-15) of latent factors, learning a shared low-dimensional representation across modalities.

Dimensionality Reduction (Addressing Sparsity & Concentration):

- Primary Method: Uniform Manifold Approximation and Projection (UMAP).

- Protocol: Set n_neighbors=15 (to define local connectivity), min_dist=0.1, and n_components=2 for visualization or 10 for downstream analysis. Use correlation distance for omics data. Train on the integrated latent factors or concatenated normalized data.

Feature Selection (Addressing Multicollinearity & Multiple Testing):

- Method: Stability Selection with Lasso regularization.

- Protocol: a. Subsample 80% of data without replacement (100 iterations). b. For each subsample, run Lasso regression (via GLMNet) across a regularization path (λ from 0.01 to 1). c. Record features with non-zero coefficients for each λ. d. Calculate selection probabilities for each feature across all iterations. e. Apply a cutoff (e.g., probability > 0.8) to identify a stable, sparse feature set.
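Steps a to e can be sketched as follows. This is a minimal illustration on synthetic data, assuming scikit-learn's `Lasso` as the solver (its `alpha` plays the role of λ, up to the library's own scaling of the loss); the helper name `stability_selection`, the toy data, and the reduced iteration count are assumptions made for brevity.

```python
import numpy as np
from sklearn.linear_model import Lasso

def stability_selection(X, y, lambdas, n_iter=100, frac=0.8, seed=0):
    """Per-feature selection probability, taking the maximum over the lambda grid."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    counts = np.zeros((len(lambdas), p))
    for _ in range(n_iter):
        # (a) subsample 80% of the samples without replacement
        idx = rng.choice(n, size=int(frac * n), replace=False)
        for j, lam in enumerate(lambdas):
            # (b) fit the Lasso at each point of the regularization path
            coef = Lasso(alpha=lam, max_iter=5000).fit(X[idx], y[idx]).coef_
            # (c) record which features have non-zero coefficients
            counts[j] += coef != 0
    # (d) selection probability per feature
    return (counts / n_iter).max(axis=0)

# Synthetic example: only the first 5 of 100 features carry signal.
rng = np.random.default_rng(1)
n, p = 100, 100
X = rng.normal(size=(n, p))
y = X[:, :5] @ np.full(5, 2.0) + rng.normal(size=n)

lambdas = np.logspace(-2, 0, 5)            # 0.01 ... 1, as in the protocol
probs = stability_selection(X, y, lambdas, n_iter=30)
stable = np.flatnonzero(probs > 0.8)       # (e) apply the probability cutoff
print("stable features:", stable)
```

Very small λ values admit many features in every subsample, so in practice the lower end of the grid and the cutoff are worth tuning together.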

Validation:

- Use a completely held-out cohort (n=30). Train a final model (e.g., Cox PH for survival, SVM for classification) using only the selected features on the original training set. Validate predictive performance on the held-out set using C-index or AUROC.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for High-Dimensional Multi-omics Research

| Item / Solution | Function / Role | Key Consideration for High-D Data |

|---|---|---|

| UMAP (Uniform Manifold Approximation and Projection) | Non-linear dimensionality reduction. | Often preserves more global structure than t-SNE and typically runs faster on large datasets. |

| MOFA+ (Multi-Omics Factor Analysis) | Bayesian framework for multi-omics integration. | Learns interpretable latent factors that capture shared and specific variation across data types, directly reducing p. |

| Stability Selection | Robust feature selection method. | Provides an upper bound on the expected number of false selections, which a single Lasso fit does not; also gives a measure of feature importance stability. |

| WGCNA (Weighted Gene Co-expression Network Analysis) | Constructs correlation-based networks. | Reduces dimension by clustering highly correlated features into "eigengenes" (modules), treating modules as new variables. |

| DESeq2 / edgeR | Differential expression analysis for RNA-Seq. | Use empirical Bayes shrinkage of dispersion estimates (and, in DESeq2, of log fold changes), stabilizing estimates in low n, high p settings. |

| Cell Painting Assay Kits | High-content morphological profiling. | Generates ~1,500 features per cell; requires dedicated dimensionality reduction (e.g., UMAP) for phenotypic analysis. |

| CyTOF (Mass Cytometry) Antibody Panels | High-parameter single-cell proteomics. | Enables measurement of 40+ proteins simultaneously; analysis relies on automated dimensionality reduction or graph-based clustering (e.g., viSNE, PhenoGraph). |

Conceptualizing the Statistical Failure

The fundamental breakdown of traditional methods is illustrated in the relationship between data dimensions and statistical power/error.

Diagram Title: Consequences of the Curse of Dimensionality

In multi-omics research, the curse of dimensionality is not an abstract concern but a daily analytical reality that invalidates p-values, corrupts predictive models, and obscures biological signal. Success depends on abandoning traditional methods that assume n > p and embracing a toolkit designed for complexity: robust integration, nonlinear dimensionality reduction, and stable feature selection. The path forward in systems biology and precision medicine lies in algorithms that explicitly model and mitigate the geometry of high-dimensional space.

Taming the Data Deluge: Key Methodologies for Dimensionality Reduction and Integration

The integration of multi-omics data (genomics, transcriptomics, proteomics, metabolomics) is central to modern systems biology and precision medicine. This paradigm generates datasets of extreme dimensionality, often characterized by a vast number of molecular features (p) measured across a relatively small cohort of biological samples (n), the so-called "p >> n" problem. This high-dimensional landscape introduces noise, multicollinearity, and the risk of model overfitting, obscuring true biological signals. Dimensionality reduction (DR) is therefore not merely a preprocessing step but a fundamental computational strategy to distill meaningful biological insights, enhance predictive modeling, and enable visualization. This guide dissects the two principal DR philosophies—Feature Selection and Feature Extraction—within the multi-omics research thesis framework.

Foundational Concepts: A Comparative Framework

Feature Selection identifies and retains a subset of the original features (e.g., specific genes, proteins, or metabolites) based on their relevance to the outcome of interest (e.g., disease state, drug response). The original semantic meaning of the features is preserved. Feature Extraction creates a new, smaller set of composite features through transformations of the original data. These new features, while more informative for analysis, are often not directly interpretable as original biological entities.

Table 1: Core Conceptual Comparison

| Aspect | Feature Selection | Feature Extraction |

|---|---|---|

| Output | Subset of original features (e.g., Gene A, Metabolite B). | New transformed features (e.g., Principal Component 1). |

| Interpretability | High. Direct biological interpretation. | Low to Medium. New features are linear/non-linear combinations of all originals. |

| Information Retention | Preserves original measurement space and meaning. | Projects data into a new, lower-dimensional space. |

| Noise Handling | May retain irrelevant variables if selected. | Can reduce noise by concentrating variance into fewer components. |

| Primary Methods | Filter (variance, correlation), Wrapper (e.g., recursive feature elimination), Embedded (LASSO, random forest importance). | PCA, t-SNE, UMAP, Autoencoders. |

| Use Case in Multi-omics | Identifying biomarker panels for diagnostics. | Visualizing sample clusters or integrating omics layers. |

Methodological Deep Dive: Protocols & Applications

Feature Selection Protocols

A. Filter Method: Univariate Statistical Screening

- Objective: Rank features individually based on statistical scores.

- Protocol for Differential Expression Analysis (Transcriptomics):

- Input: Normalized count matrix (e.g., from RNA-Seq).

- Statistical Test: Apply a test (e.g., Welch's t-test for two groups, ANOVA for multi-group) to each feature.

- Multiple Testing Correction: Apply Benjamini-Hochberg procedure to control the False Discovery Rate (FDR). Retain features with adjusted p-value < 0.05.

- Effect Size Filter: Calculate fold-change (FC). Often combined with p-value (e.g., |log2FC| > 1 & adj. p-value < 0.05).

- Limitation: Ignores feature-feature interactions.
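The multiple testing correction and effect size filter can be sketched as below. This is a minimal NumPy implementation of the Benjamini-Hochberg adjustment on four made-up p-values; the p-values and log2 fold changes are illustrative, not real data.

```python
import numpy as np

def benjamini_hochberg(pvals):
    """Return BH-adjusted p-values in the original feature order."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)         # p_(i) * m / i
    # enforce monotonicity, working down from the largest p-value
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(ranked, 0.0, 1.0)
    return adjusted

pvals = np.array([0.01, 0.04, 0.03, 0.005])             # one p-value per feature
log2fc = np.array([1.5, 0.2, -2.0, 0.8])                # matching log2 fold changes

adj = benjamini_hochberg(pvals)
keep = (adj < 0.05) & (np.abs(log2fc) > 1)              # combined FDR and effect size filter
print(adj, keep)
```

Here all four features pass the FDR threshold, but only the first and third also pass the fold-change filter.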

B. Embedded Method: LASSO (L1) Regularization

- Objective: Perform feature selection during model training by penalizing coefficient magnitudes.

- Protocol for Predictive Model Building:

- Input: Scaled multi-omics feature matrix X, response variable y (e.g., survival time).

- Model Formulation: Solve for coefficients β in: min( ||y - Xβ||² + λ||β||₁ ). The L1 penalty (||β||₁) drives coefficients of irrelevant features to zero.

- Cross-Validation: Use k-fold cross-validation to tune the hyperparameter λ, which controls the strength of penalty and sparsity.

- Output: A model with a subset of features having non-zero coefficients, directly usable for prediction and interpretation.
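To make the role of the L1 penalty concrete, here is a minimal sketch that minimizes ||y - Xβ||² + λ||β||₁ by cyclic coordinate descent and picks λ by k-fold cross-validation. It is a teaching implementation on synthetic data, not a replacement for glmnet or scikit-learn; the function names, the λ grid, and the toy signal are assumptions.

```python
import numpy as np

def soft_threshold(rho, t):
    """Shrink rho toward zero by t; values inside [-t, t] become exactly zero."""
    return np.sign(rho) * np.maximum(np.abs(rho) - t, 0.0)

def lasso_cd(X, y, lam, n_sweeps=50):
    """Minimise ||y - X b||^2 + lam * ||b||_1 by cyclic coordinate descent."""
    n, p = X.shape
    beta = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0)
    residual = y.astype(float).copy()                  # y - X @ beta with beta = 0
    for _ in range(n_sweeps):
        for j in range(p):
            residual += X[:, j] * beta[j]              # remove feature j's contribution
            rho = X[:, j] @ residual
            beta[j] = soft_threshold(rho, lam / 2.0) / col_sq[j]
            residual -= X[:, j] * beta[j]              # add the updated contribution back
    return beta

def cv_select_lambda(X, y, lambdas, k=5, seed=0):
    """Return the lambda with the lowest mean squared error across k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    errors = []
    for lam in lambdas:
        fold_err = []
        for test_idx in folds:
            train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
            beta = lasso_cd(X[train_idx], y[train_idx], lam)
            fold_err.append(np.mean((y[test_idx] - X[test_idx] @ beta) ** 2))
        errors.append(np.mean(fold_err))
    return lambdas[int(np.argmin(errors))]

# Synthetic data: 3 informative features out of 100.
rng = np.random.default_rng(0)
n, p = 100, 100
X = rng.normal(size=(n, p))
X = (X - X.mean(axis=0)) / X.std(axis=0)               # scale features
beta_true = np.zeros(p)
beta_true[:3] = [3.0, -2.0, 1.5]
y = X @ beta_true + 0.5 * rng.normal(size=n)
y = y - y.mean()                                       # centred, so no intercept is needed

lambdas = np.array([20.0, 40.0, 80.0, 160.0])
best_lam = cv_select_lambda(X, y, lambdas)
beta_hat = lasso_cd(X, y, best_lam)
print("lambda:", best_lam, "selected features:", np.flatnonzero(beta_hat))
```

The soft-thresholding step is where sparsity comes from: any feature whose correlation with the current residual falls below λ/2 receives a coefficient of exactly zero.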

Feature Extraction Protocols

A. Linear Extraction: Principal Component Analysis (PCA)

- Objective: Find orthogonal axes (Principal Components, PCs) of maximum variance in the data.

- Protocol for Data Exploration & Noise Reduction:

- Input: Centered (and often scaled) feature matrix.

- Covariance Matrix: Compute the covariance matrix of the data.

- Eigendecomposition: Calculate eigenvectors (PC loadings) and eigenvalues (variance explained).

- Projection: Transform original data by multiplying with the top k eigenvectors: PC_scores = X * V[,1:k].

- Determining k: Use scree plot (elbow point) or retain PCs explaining >80-90% cumulative variance.

B. Non-linear Extraction: UMAP (Uniform Manifold Approximation and Projection)

- Objective: Capture complex non-linear manifold structure in a low-dimensional space, preserving both local and global geometry.

- Protocol for High-Dimensional Visualization:

- Input: High-dimensional data (e.g., preprocessed single-cell RNA-seq data).

- Graph Construction: Construct a fuzzy topological graph in high-dimensional space based on nearest neighbors.

- Optimization: Initialize a low-dimensional graph and optimize its layout to minimize the cross-entropy between the high- and low-dimensional graphs using stochastic gradient descent.

- Output: 2D or 3D coordinates for each sample, ideal for cluster visualization.

Table 2: Quantitative Performance Comparison (Hypothetical Multi-omics Study)

| Method | Type | Dimensionality Reduction (p → k) | Classification Accuracy (Test Set) | Top 5 Feature Interpretability |

|---|---|---|---|---|

| ANOVA + FC Filter | Selection | 20,000 → 150 | 82% | High. Direct list of dysregulated genes. |

| LASSO Regression | Selection | 20,000 → 45 | 88% | High. Sparse, weighted gene list. |

| PCA | Extraction | 20,000 → 15 | 85% | Low. PCs are linear combos of all 20k genes. |

| UMAP (for clustering) | Extraction | 20,000 → 2 | N/A | Very Low. Purely for visualization. |

Visualizing Methodological Pathways & Workflows

Diagram 1: Feature Selection Method Workflow

Diagram 2: Feature Extraction Transformation Process

Diagram 3: Decision Pathway for Multi-omics Dimensionality Reduction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages for Multi-omics DR

| Tool/Reagent | Function in DR | Application Context | Key Reference/Link |

|---|---|---|---|

| scikit-learn (Python) | Unified library for Filter methods (VarianceThreshold), Embedded methods (LASSO), and FE (PCA). | General-purpose DR for bulk omics data. | Pedregosa et al., 2011, JMLR |

| GLMnet / glmnet (R) | Efficiently fits LASSO and elastic-net regularized models. | High-dimensional regression for biomarker discovery. | Friedman et al., 2010, JSS |

| UMAP (python/R) | State-of-the-art non-linear dimensionality reduction. | Visualization of single-cell omics, microbiome data. | McInnes et al., 2018, JOSS |

| mixOmics (R) | Provides multi-omics specific DR (e.g., DIABLO, sPLS-DA). | Integrative analysis of multiple omics datasets. | Rohart et al., 2017, PLoS Comp Biol |

| MOFA2 (R/Python) | Uses Factor Analysis for multi-omics integration and DR. | Unsupervised discovery of latent factors across omics. | Argelaguet et al., 2020, Genome Biology |

| Scanpy (Python) | Integrated workflows including PCA, UMAP, and feature selection for single-cell. | End-to-end analysis of single-cell RNA-seq data. | Wolf et al., 2018, Genome Biology |

Within a multi-omics thesis, the choice between feature selection and extraction is not binary but strategic. Feature selection is indispensable for generating biologically testable hypotheses, directly linking analysis results to specific genes, proteins, or pathways for experimental validation in drug development. Feature extraction is powerful for exploratory data analysis, noise reduction, and handling complex interactions when prediction or visualization is the primary goal. A pragmatic strategy often involves a hybrid approach: using filter methods for initial aggressive dimensionality reduction, followed by embedded selection or extraction for final model building, thereby balancing interpretability with analytical power in the high-dimensional multi-omics landscape.

Within multi-omics research, the curse of high-dimensionality is a fundamental challenge. Classical linear dimensionality reduction techniques, specifically Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA), provide foundational mathematical frameworks for feature extraction, noise reduction, and discriminative pattern discovery. This whitepaper details their theoretical underpinnings, protocols for application in omics data, and comparative analysis, contextualized within a thesis on explaining high-dimensionality in integrated genomics, transcriptomics, proteomics, and metabolomics datasets.

Multi-omics studies integrate data from genomics, epigenomics, transcriptomics, proteomics, and metabolomics, routinely generating datasets where the number of features (p; e.g., genes, proteins, metabolites) far exceeds the number of samples (n). This p >> n paradigm leads to computational instability, overfitting, and difficulty in visualization and interpretation. PCA and LDA are two pivotal, mathematically distinct linear approaches to project this high-dimensional data into a lower-dimensional subspace while preserving critical information.

Theoretical Foundations

Principal Component Analysis (PCA)

PCA is an unsupervised method that finds a new set of orthogonal axes (principal components) that capture the maximum variance in the data. The components are obtained from the eigendecomposition of the covariance matrix.

Algorithm:

- Center the Data: ( X_{centered} = X - \mu ), where ( \mu ) is the feature-wise mean.

- Compute Covariance Matrix: ( \Sigma = \frac{1}{n-1} X_{centered}^T X_{centered} ).

- Eigen Decomposition: Solve ( \Sigma v = \lambda v ). Eigenvectors ( v ) are the principal components (PCs); eigenvalues ( \lambda ) represent variance captured.

- Projection: The transformed data is ( Z = X_{centered} V_k ), where ( V_k ) contains the top ( k ) eigenvectors.

Linear Discriminant Analysis (LDA)

LDA is a supervised method that seeks a projection that maximizes the separation between predefined classes. It maximizes the ratio of between-class variance to within-class variance.

Objective Function (Fisher's Criterion): [ J(w) = \frac{w^T S_B w}{w^T S_W w} ] where ( S_B ) is the between-class scatter matrix and ( S_W ) is the within-class scatter matrix. The optimal projection is found by solving the generalized eigenvalue problem: ( S_B w = \lambda S_W w ).

Table 1: Core Characteristics of PCA and LDA

| Aspect | Principal Component Analysis (PCA) | Linear Discriminant Analysis (LDA) |

|---|---|---|

| Learning Type | Unsupervised | Supervised (requires class labels) |

| Primary Objective | Maximize variance (signal) retention | Maximize class separability |

| Mathematical Core | Eigen decomposition of covariance matrix | Generalized eigen decomposition of ( S_W^{-1} S_B ) |

| Output Dimensions | Maximum: min(n-1, p) | Maximum: C - 1 (where C = number of classes) |

| Assumptions | Linearity, large variance implies importance | Linear separability, normal distribution of features, homoscedasticity (equal class covariances) |

| Use in Multi-omics | Exploratory analysis, noise reduction, visualization | Classification, biomarker discovery, supervised visualization |

Table 2: Typical Performance Metrics on Multi-omics Data (Illustrative Examples from Recent Literature)

| Study (Example Focus) | Method | Key Metric | Reported Outcome | Omics Layer |

|---|---|---|---|---|

| Cancer Subtype Discovery | PCA | Variance Explained | Top 5 PCs captured ~60% of total variance in tumor RNA-seq data. | Transcriptomics |

| Disease vs. Control Classification | LDA | Classification Accuracy | Achieved 92% accuracy on held-out test set using metabolic profiles. | Metabolomics |

| Multi-omics Integration (Early Fusion) | PCA on concatenated data | Cluster Separation (Silhouette Score) | Silhouette score improved from 0.12 (raw) to 0.41 (after PCA) for integrated clusters. | Genomics+Proteomics |

| Biomarker Panel Identification | LDA (as feature selector) | Number of Discriminative Features | Identified a panel of 15 proteins sufficient for robust classification. | Proteomics |

Experimental Protocols for Multi-omics Data

Protocol: PCA for Multi-omics Exploratory Analysis

Objective: To reduce dimensionality and visualize sample clustering/structure in an unsupervised manner.

Input: A normalized ( n \times p ) data matrix ( X ) (e.g., gene expression counts, protein abundances). Procedure:

- Preprocessing: Log-transform (if needed), center, and optionally scale each feature to unit variance.

- Decomposition: Apply singular value decomposition (SVD) to the preprocessed matrix instead of forming the covariance matrix explicitly; this is more numerically stable and cheaper when p >> n.

- Component Selection: Plot the scree plot (eigenvalues vs. PC number). Apply the elbow method or select PCs that cumulatively explain >80% variance.

- Projection & Visualization: Project data onto selected PCs. Generate 2D/3D scatter plots (PC1 vs. PC2), colored by relevant sample metadata (e.g., batch, phenotype).

- Interpretation: Analyze loadings (coefficients) of top PCs to identify features (genes/proteins) driving the observed sample separation.
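The procedure above can be sketched with NumPy alone. This is a minimal illustration on a synthetic matrix with three latent drivers; the function name `pca_svd`, the 80% variance target, and the toy dimensions are assumptions.

```python
import numpy as np

def pca_svd(X, var_target=0.80, scale=False):
    """PCA via SVD of the centred matrix; keeps enough PCs to reach var_target."""
    Xc = X - X.mean(axis=0)                            # centre each feature
    if scale:
        Xc = Xc / Xc.std(axis=0)                       # optional unit-variance scaling
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    eigenvalues = s ** 2 / (X.shape[0] - 1)            # eigenvalues of the covariance matrix
    ratio = eigenvalues / eigenvalues.sum()            # variance explained per PC
    k = min(int(np.searchsorted(np.cumsum(ratio), var_target)) + 1, len(s))
    scores = Xc @ Vt[:k].T                             # sample coordinates on the top k PCs
    return scores, Vt[:k], ratio, k

# Synthetic n << p matrix driven by three latent factors plus noise.
rng = np.random.default_rng(0)
n, p = 50, 2000
factors = rng.normal(size=(n, 3))
X = factors @ rng.normal(size=(3, p)) + 0.5 * rng.normal(size=(n, p))

scores, loadings, ratio, k = pca_svd(X)
top_features = np.argsort(np.abs(loadings[0]))[::-1][:10]   # features driving PC1
print(f"k = {k}, cumulative variance = {ratio[:k].sum():.2f}")
```

Plotting `ratio` against the component index gives the scree plot, and `scores[:, 0]` against `scores[:, 1]` gives the usual PC1 vs. PC2 scatter.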

Protocol: LDA for Discriminative Biomarker Discovery

Objective: To find a linear combination of molecular features that best separates two or more predefined clinical classes (e.g., responder vs. non-responder).

Input: A normalized ( n \times p ) data matrix ( X ) and a corresponding ( n \times 1 ) vector of class labels ( y ). Procedure:

- Feature Preselection (for p >> n): Apply a univariate filter (e.g., ANOVA F-value) to reduce ( p ) to a manageable size (e.g., 1000 top features).

- LDA Model Training: Compute ( S_W ) and ( S_B ) from the training set. Solve for the LDA transformation matrix ( W ). Regularization (e.g., adding a small constant to ( S_W )'s diagonal) is often essential for stability.

- Dimensionality Reduction: Project the training data onto the LDA axes: ( Z_{train} = X_{train} W ).

- Classifier Construction: Fit a simple classifier (e.g., a linear classifier) on ( Z_{train} ). Alternatively, use the LDA's built-in Bayesian classification rule.

- Validation: Apply the learned transformation ( W ) to the held-out test set (( Z_{test} = X_{test} W )) and evaluate classification performance (accuracy, AUC-ROC).

- Biomarker Interpretation: Examine the coefficients (weights) in the most discriminative LDA axes to identify the top-contributing features to class separation.
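A minimal sketch of the training, projection, and validation steps follows. It assumes the feature matrix has already been reduced by a univariate filter, uses a nearest-centroid rule in the projected space as the simple classifier, and scales the diagonal regularizer by the average diagonal of the within-class scatter; these choices and the synthetic three-class data are illustrative.

```python
import numpy as np

def fit_lda(X, y, gamma=0.1):
    """Return the LDA projection matrix W with (number of classes - 1) columns."""
    classes = np.unique(y)
    p = X.shape[1]
    mean_all = X.mean(axis=0)
    S_W = np.zeros((p, p))
    S_B = np.zeros((p, p))
    for c in classes:
        Xc = X[y == c]
        mean_c = Xc.mean(axis=0)
        S_W += (Xc - mean_c).T @ (Xc - mean_c)         # within-class scatter
        diff = (mean_c - mean_all)[:, None]
        S_B += Xc.shape[0] * (diff @ diff.T)           # between-class scatter
    S_W += gamma * np.trace(S_W) / p * np.eye(p)       # diagonal regularisation
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(S_W, S_B))
    order = np.argsort(eigvals.real)[::-1][: len(classes) - 1]
    return eigvecs[:, order].real

# Synthetic data: 3 classes, 30 preselected features, signal in features 0 and 1.
rng = np.random.default_rng(0)
p = 30
offsets = np.zeros((3, p))
offsets[1, 0] = 4.0
offsets[2, 1] = 4.0

def simulate(n_per_class):
    X = np.vstack([rng.normal(size=(n_per_class, p)) + offsets[c] for c in range(3)])
    y = np.repeat(np.arange(3), n_per_class)
    return X, y

X_train, y_train = simulate(30)
X_test, y_test = simulate(30)

W = fit_lda(X_train, y_train)
Z_train, Z_test = X_train @ W, X_test @ W              # project onto the LDA axes

# Nearest-centroid classifier in the projected space.
centroids = np.vstack([Z_train[y_train == c].mean(axis=0) for c in range(3)])
dists = np.linalg.norm(Z_test[:, None, :] - centroids[None, :, :], axis=2)
accuracy = float((dists.argmin(axis=1) == y_test).mean())
print(f"held-out accuracy = {accuracy:.2f}")

top_features = np.argsort(np.abs(W[:, 0]))[::-1][:5]   # largest weights on the first axis
```

In this toy setup the largest absolute weights in `W` tend to fall on the features that carry the class signal, which is the basis of the biomarker interpretation step.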

Visualizations

PCA Workflow for Multi-omics Data

PCA vs LDA: Objective Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Packages for Implementing PCA/LDA in Multi-omics

| Tool/Reagent | Category | Function in Analysis | Example/Provider |

|---|---|---|---|

| Scikit-learn | Software Library | Primary Python implementation for PCA (sklearn.decomposition.PCA) and LDA (sklearn.discriminant_analysis.LinearDiscriminantAnalysis). | Open-source (scikit-learn.org) |

| FactoMineR & factoextra | Software Library | Comprehensive R suite for multivariate analysis, providing PCA computation and enhanced visualization. | CRAN repository |

| SIMCA | Commercial Software | Industry-standard tool for multivariate data analysis (PCA and PLS-DA, a supervised discriminant method) with GUI, common in metabolomics/proteomics. | Sartorius Stedim Data Analytics |

| MetaboAnalyst | Web-based Platform | Offers PCA and PLS-DA modules tailored for -omics data, with integrated statistical and pathway analysis. | metaboanalyst.ca |

| ComBat or sva | Software Tool | Batch effect correction package (in R). Critical preprocessing step before PCA/LDA to remove technical noise. | Bioconductor |

| Unit Variance Scaling | Algorithmic Step | Standard scaling (z-score normalization) ensures features contribute equally to PCA variance calculation. | Built into sklearn.preprocessing.StandardScaler |

| Regularization Parameter (γ) | Mathematical Parameter | Added to diagonal of ( S_W ) in LDA to prevent singularity in high-dimensional settings (p >> n). | Exposed as the shrinkage argument of sklearn's LinearDiscriminantAnalysis (lsqr and eigen solvers); tune via cross-validation |

PCA and LDA remain indispensable in the multi-omics analytical pipeline. PCA serves as the workhorse for initial data exploration, quality control, and unsupervised dimensionality reduction. In contrast, LDA provides a powerful framework for supervised feature extraction and classification when clear phenotypic labels exist. Their mathematical elegance, interpretability, and computational efficiency ensure their continued relevance, often serving as critical preprocessing steps or benchmarks for more complex nonlinear and deep learning models in the quest to explain and harness high-dimensional biological data.

In multi-omics research, high-dimensional data from genomics, transcriptomics, proteomics, and metabolomics present a profound analytical challenge. Traditional linear dimensionality reduction techniques like PCA often fail to capture the complex, nonlinear relationships inherent in biological systems. Nonlinear manifold learning techniques—specifically t-Distributed Stochastic Neighbor Embedding (t-SNE), Uniform Manifold Approximation and Projection (UMAP), and Autoencoders—have become indispensable for visualizing and interpreting these intricate datasets, facilitating discoveries in disease subtyping, biomarker identification, and drug development.

Core Algorithms: Theory and Application to Multi-omics

t-Distributed Stochastic Neighbor Embedding (t-SNE)

t-SNE minimizes the divergence between two probability distributions: one measuring pairwise similarities in the high-dimensional space, and another in the low-dimensional embedding space. It excels at preserving local structures, making it ideal for identifying tight clusters like cell types or disease subgroups.

Key Equations:

- High-dimensional similarity (conditional probability): ( p_{j|i} = \frac{\exp(-||\mathbf{x}_i - \mathbf{x}_j||^2 / 2\sigma_i^2)}{\sum_{k \neq i} \exp(-||\mathbf{x}_i - \mathbf{x}_k||^2 / 2\sigma_i^2)} ), symmetrized as ( p_{ij} = (p_{j|i} + p_{i|j}) / 2n )

- Low-dimensional similarity (Student-t distribution): ( q_{ij} = \frac{(1 + ||\mathbf{y}_i - \mathbf{y}_j||^2)^{-1}}{\sum_{k \neq l} (1 + ||\mathbf{y}_k - \mathbf{y}_l||^2)^{-1}} )

- Cost function (Kullback-Leibler divergence): ( C = KL(P||Q) = \sum_i \sum_j p_{ij} \log \frac{p_{ij}}{q_{ij}} )
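The three quantities can be computed directly for a small dataset. The sketch below is a simplification: it uses one fixed bandwidth σ for every point, whereas t-SNE calibrates each σ_i to a target perplexity, and it only evaluates the cost for two candidate embeddings instead of optimizing it. All function names and the toy data are assumptions.

```python
import numpy as np

def pairwise_sq_dists(A):
    """Squared Euclidean distances between all rows of A."""
    sq = (A ** 2).sum(axis=1)
    D = sq[:, None] + sq[None, :] - 2.0 * A @ A.T
    np.fill_diagonal(D, 0.0)
    return np.maximum(D, 0.0)

def high_dim_affinities(X, sigma=1.0):
    """Symmetrised p_ij built from Gaussian conditionals p_{j|i} (fixed sigma)."""
    P_cond = np.exp(-pairwise_sq_dists(X) / (2.0 * sigma ** 2))
    np.fill_diagonal(P_cond, 0.0)                      # a point is not its own neighbour
    P_cond /= P_cond.sum(axis=1, keepdims=True)        # row i holds p_{j|i}
    return (P_cond + P_cond.T) / (2.0 * X.shape[0])

def low_dim_affinities(Y):
    """q_ij from the heavy-tailed Student-t kernel, normalised over all pairs."""
    Q = 1.0 / (1.0 + pairwise_sq_dists(Y))
    np.fill_diagonal(Q, 0.0)
    return Q / Q.sum()

def kl_divergence(P, Q, eps=1e-12):
    mask = P > 0
    return float(np.sum(P[mask] * np.log(P[mask] / (Q[mask] + eps))))

# Two well-separated clusters in 5 dimensions.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.5, size=(20, 5)),
               rng.normal(3.0, 0.5, size=(20, 5))])
P = high_dim_affinities(X)

Y_good = X[:, :2]                                      # embedding that keeps the clusters
Y_bad = rng.permutation(Y_good)                        # same coordinates, shuffled across samples
kl_good = kl_divergence(P, low_dim_affinities(Y_good))
kl_bad = kl_divergence(P, low_dim_affinities(Y_bad))
print(f"KL(good) = {kl_good:.3f}, KL(bad) = {kl_bad:.3f}")
```

The embedding that keeps neighbors together yields the lower cost, which is the quantity t-SNE's gradient descent drives down.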

Uniform Manifold Approximation and Projection (UMAP)

UMAP is grounded in topological data analysis. It constructs a fuzzy topological representation of the high-dimensional data and optimizes a low-dimensional layout to be as topologically similar as possible. It is faster than t-SNE and often better preserves global structure.

Key Equations:

- High-dimensional weights: ( v_{j|i} = \exp[-(d(\mathbf{x}_i, \mathbf{x}_j) - \rho_i) / \sigma_i] ), where ( \rho_i ) is the distance from ( \mathbf{x}_i ) to its nearest neighbor

- Low-dimensional weights: ( w_{ij} = (1 + a ||\mathbf{y}_i - \mathbf{y}_j||^{2b})^{-1} )

- Cross-entropy cost function optimized via stochastic gradient descent.

Autoencoders (AEs)

Autoencoders are neural networks trained to reconstruct their input through a bottleneck layer, learning a compressed, nonlinear representation. Variants like Variational Autoencoders (VAEs) learn a probabilistic latent space, enabling generation and robust handling of noise common in omics data.

Architecture:

- Encoder: ( \mathbf{z} = f(\mathbf{W}_e \mathbf{x} + \mathbf{b}_e) )

- Bottleneck (Latent space): ( \mathbf{z} ), the encoder output

- Decoder: ( \mathbf{\hat{x}} = g(\mathbf{W}_d \mathbf{z} + \mathbf{b}_d) )

- Loss: ( \mathcal{L}(\mathbf{x}, \mathbf{\hat{x}}) = ||\mathbf{x} - \mathbf{\hat{x}}||^2 + \Omega(\text{regularization}) )
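The architecture can be illustrated with a single-hidden-layer autoencoder trained by plain gradient descent in NumPy. This is a minimal sketch with a tanh encoder, a linear decoder, and no regularization term; practical omics autoencoders are deeper and are usually built in a deep learning framework. The toy data, layer sizes, and learning rate are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p, k = 200, 20, 3                                   # samples, features, bottleneck size

# Toy matrix generated from 3 latent drivers, then standardised.
latent = rng.normal(size=(n, k))
X = np.tanh(latent @ rng.normal(size=(k, p))) + 0.1 * rng.normal(size=(n, p))
X = (X - X.mean(axis=0)) / X.std(axis=0)

W_e, b_e = 0.1 * rng.normal(size=(p, k)), np.zeros(k)  # encoder parameters
W_d, b_d = 0.1 * rng.normal(size=(k, p)), np.zeros(p)  # decoder parameters
lr, losses = 0.02, []

for step in range(2000):
    Z = np.tanh(X @ W_e + b_e)                         # encoder: z = f(W_e x + b_e)
    X_hat = Z @ W_d + b_d                              # decoder: x_hat = W_d z + b_d
    err = X_hat - X
    losses.append(float(np.mean(err ** 2)))            # mean squared reconstruction error

    # Backpropagate the reconstruction loss.
    G = 2.0 * err / err.size
    grad_W_d, grad_b_d = Z.T @ G, G.sum(axis=0)
    d_pre = (G @ W_d.T) * (1.0 - Z ** 2)               # derivative of tanh
    grad_W_e, grad_b_e = X.T @ d_pre, d_pre.sum(axis=0)

    W_e -= lr * grad_W_e
    b_e -= lr * grad_b_e
    W_d -= lr * grad_W_d
    b_d -= lr * grad_b_d

print(f"reconstruction loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

After training, `Z` holds the k-dimensional representation of each sample and can be used for clustering or as input to a visualization method.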

Comparative Analysis for Multi-omics Data

Table 1: Algorithm Comparison for Multi-omics Applications

| Feature | t-SNE | UMAP | Autoencoder (Standard) | Variational Autoencoder (VAE) |

|---|---|---|---|---|

| Core Objective | Preserve local neighborhoods | Preserve local & global topology | Learn compressed, nonlinear encoding | Learn probabilistic latent distribution |

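| Scalability | O(n²) exact, ~O(n log n) with Barnes-Hut approximation; slow for >10k samples | Near-linear in practice with approximate nearest-neighbor search; scales well to large n | ~O(n) per epoch, depends on network size | ~O(n) per epoch, depends on network size |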

| Global Structure | Poorly preserved | Well preserved | Can be preserved with tuning | Can be preserved with tuning |

| Stochasticity | High (multiple runs vary) | Moderate | Deterministic (fixed seed) | Stochastic (by design) |

| Out-of-Sample | Not supported by the standard algorithm | Supported (transform method in umap-learn) | Fully supported (encoder) | Fully supported (encoder) |

| Multi-omics Integration | Manual concatenation or early integration | Manual concatenation or early integration | Flexible (custom input layers) | Flexible (custom input layers) |

| Typical Latent Dim | 2 or 3 (visualization) | 2 to ~50 | 2 to hundreds | 2 to hundreds |

| Key Hyperparameters | Perplexity, learning rate, iterations | n_neighbors, min_dist, metric | Network architecture, activation, loss | β (KL weight), network architecture |

Table 2: Performance on Public Multi-omics Datasets (The Cancer Genome Atlas - TCGA)

| Algorithm | Dataset (Samples x Features) | Runtime (s) | Trustworthiness* (↑) | Continuity* (↑) | Biological Cluster Separation (Silhouette Score) |

|---|---|---|---|---|---|

| t-SNE | BRCA (1000 x 20k) | 450 | 0.95 | 0.72 | 0.68 |

| UMAP | BRCA (1000 x 20k) | 22 | 0.91 | 0.89 | 0.71 |

| Deep AE | BRCA (1000 x 20k) | 310 (train) | 0.88 | 0.85 | 0.65 |

| t-SNE | Pan-cancer (5000 x 50k) | >3600 | NA | NA | NA |

| UMAP | Pan-cancer (5000 x 50k) | 155 | 0.87 | 0.91 | 0.64 |

| VAE | Pan-cancer (5000 x 50k) | 2200 (train) | 0.89 | 0.88 | 0.62 |

*Metrics range 0-1, higher is better. NA: Not feasible due to computational constraints.

Experimental Protocols for Multi-omics Integration

Protocol 4.1: Dimensionality Reduction for Single-Cell Multi-omics (CITE-seq)

Objective: Visualize integrated protein (ADT) and gene expression (RNA) data to identify immune cell populations.

- Data Preprocessing: Normalize RNA counts (log(CP10K+1)) and ADT counts (centered log ratio). Select top 2000 highly variable genes and all ADT features.

- Concatenation: Create a combined matrix [RNA features | ADT features] per cell.

- Scaling: Scale concatenated features to zero mean and unit variance.

- UMAP Embedding:

- Set n_neighbors=30, min_dist=0.3, metric='cosine', n_components=2.

- Fit on the first 50 principal components (PCA) of the scaled matrix for denoising.

- Transform the data to generate 2D coordinates.

- Validation: Calculate Leiden clustering on a k-nearest neighbor graph derived from the PCA. Assess cluster purity using known protein marker expression.
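The preprocessing, concatenation, scaling, and PCA steps of this protocol can be sketched in NumPy. The sketch makes several simplifying assumptions: counts are simulated, highly variable genes are picked by raw variance (single-cell toolkits use mean-variance models), and the centered log ratio uses a pseudocount of 1. The UMAP and Leiden steps are left to dedicated packages.

```python
import numpy as np

def log_cp10k(counts):
    """Library-size normalise to counts per 10,000, then log(1 + x)."""
    totals = counts.sum(axis=1, keepdims=True)
    return np.log1p(counts / totals * 1e4)

def clr(counts):
    """Centred log ratio per cell, computed on log(1 + x) values."""
    logged = np.log1p(counts)
    return logged - logged.mean(axis=1, keepdims=True)

def zscore(matrix):
    return (matrix - matrix.mean(axis=0)) / matrix.std(axis=0)

# Simulated CITE-seq-like counts (cells x features).
rng = np.random.default_rng(0)
n_cells = 300
rna = rng.poisson(1.0, size=(n_cells, 5000)).astype(float)
adt = rng.poisson(20.0, size=(n_cells, 25)).astype(float)

rna_norm = log_cp10k(rna)
hvg = np.argsort(rna_norm.var(axis=0))[::-1][:2000]    # top 2000 genes by variance
adt_norm = clr(adt)

combined = zscore(np.hstack([rna_norm[:, hvg], adt_norm]))   # [RNA | ADT], scaled

# PCA via SVD; the first 50 PCs would be passed on to UMAP.
U, s, _ = np.linalg.svd(combined, full_matrices=False)
pcs = U[:, :50] * s[:50]
print(combined.shape, pcs.shape)
```

With 2,000 RNA features against 25 ADT features, the RNA block dominates the concatenated matrix, which is one reason weighted approaches are often preferred for real CITE-seq data.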

Protocol 4.2: Deep Learning-Based Integration for Stratification

Objective: Integrate mRNA expression, DNA methylation, and miRNA data to discover novel cancer subtypes.

- Modality-Specific Encoding: Train separate shallow AEs for each omics modality (mRNA, meth, miRNA) to reduce noise and dimension to 100 each.

- Latent Space Concatenation: Concatenate the three 100-dim latent vectors to form a 300-dim integrated representation.

- Joint Optimization: Train a second AE on this concatenated latent space, forcing it to learn a coherent joint latent space Z (dim=50).

- Clustering & Validation: Apply consensus k-means clustering on Z. Perform survival analysis (Kaplan-Meier log-rank test) on derived clusters. Validate via differential pathway analysis (GSEA) across clusters.

Visualization of Methodologies and Data Flow

Title: Multi-omics Dimensionality Reduction Workflow

Title: Variational Autoencoder for Multi-omics Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages

| Item (Package/Platform) | Primary Function in Manifold Learning | Application Note for Multi-omics |

|---|---|---|

| Scanpy (Python) | Single-cell analysis toolkit; integrates t-SNE, UMAP, and graph-based clustering. | Standard for scRNA-seq and CITE-seq data preprocessing, integration, and visualization. |

| scikit-learn (Python) | Provides t-SNE implementation and standardization tools. | Robust preprocessing (StandardScaler) and baseline t-SNE for smaller omics datasets. |

| umap-learn (Python) | Official UMAP implementation. | Key for large-scale multi-omics visualization; supports custom distance metrics. |

| TensorFlow / PyTorch | Deep learning frameworks for building custom autoencoders. | Essential for designing multi-input AEs/VAEs for heterogeneous omics data integration. |

| MOFA+ (R/Python) | Multi-Omics Factor Analysis framework. | Bayesian model for integration; generates factors that can be visualized via UMAP/t-SNE. |

| Cell Ranger (10x Genomics) | Pipeline for processing single-cell data. | Generates count matrices from raw sequencing data, forming the input for downstream manifold learning. |

| Seurat (R) | Comprehensive single-cell analysis suite. | Popular for integrative analysis of multi-modal single-cell data, includes robust UMAP implementations. |

| scvi-tools (Python) | Probabilistic modeling for single-cell omics. | Provides scVI (VAE for scRNA-seq) and multi-modal integration models like totalVI. |

Multi-omics data integration is a critical step in systems biology, addressing the high-dimensionality and heterogeneity inherent in modern biological datasets. This guide details three primary integration frameworks—Early (Data-Level), Intermediate (Feature-Level), and Late (Decision-Level) Fusion—within the context of managing high-dimensional multi-omics data to derive robust biological and clinical insights.

The Challenge of High-Dimensionality in Multi-Omics

A single multi-omics study can yield millions of molecular features, far exceeding the number of samples (the "n << p" problem). This dimensionality curse complicates statistical analysis, increases noise, and risks model overfitting.

Fusion Strategies: A Comparative Framework

Each integration strategy handles data dimensionality at a different stage of the analytical pipeline.

Table 1: Core Characteristics of Multi-Omics Fusion Strategies

| Aspect | Early Fusion | Intermediate Fusion | Late Fusion |

|---|---|---|---|

| Integration Stage | Raw or pre-processed data | Reduced feature space or latent components | Model predictions or decisions |

| Dimensionality Handling | Before integration; requires aggressive reduction | During integration via joint dimensionality reduction | After omics-specific models are built |

| Key Advantage | Captures global correlations across all data types | Flexible; models complex, non-linear interactions | Modular; leverages optimal model per data type |

| Key Disadvantage | Highly sensitive to noise and scale; loses data-type specificity | Methodologically complex; can be computationally intensive | May miss cross-omics interactions in the data |

| Typical Methods | Concatenation, then PCA, t-SNE, UMAP | Multi-CCA, MOFA, iCluster, Deep Learning (Autoencoders) | Ensemble learning, Weighted voting, Stacked generalization |

| Suitability | When omics types are well-aligned and scales are comparable | For discovering latent factors driving variation across omics | When omics data are disparate or collected at different times |

Early Fusion (Data-Level Integration)

Early fusion concatenates multiple omics datasets into a single, high-dimensional matrix prior to analysis.

Experimental Protocol: Concatenation with Dimensionality Reduction

- Data Pre-processing: Independently normalize and scale each omics dataset (e.g., RNA-seq counts, methylation beta values, protein abundance).

- Feature Filtering: Apply variance-based or significance-based filtering within each dataset to reduce initial dimensionality.

- Concatenation: Row-align samples (N) and column-bind filtered features from all omics types into a matrix of size N x (P1+P2+...+Pk).

- Global Dimensionality Reduction: Apply Principal Component Analysis (PCA) or Uniform Manifold Approximation and Projection (UMAP) to the concatenated matrix.

- Downstream Analysis: Use the reduced components for clustering, classification, or survival analysis.
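
The protocol above can be sketched with scikit-learn as follows. This is a minimal sketch under stated assumptions: the three omics blocks are simulated stand-ins, the helper `top_variance_features` and the cutoff of 50 features per block are hypothetical choices, and variance filtering is applied before z-scoring because every z-scored feature has unit variance.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
n_samples = 30

# Hypothetical, already-normalized blocks; rows are the same samples in the same order.
omics_blocks = {
    "rna": rng.normal(size=(n_samples, 200)),
    "methylation": rng.uniform(size=(n_samples, 150)),
    "protein": rng.normal(size=(n_samples, 80)),
}


def top_variance_features(block, n_keep):
    """Keep the n_keep highest-variance columns of one omics block."""
    order = np.argsort(block.var(axis=0))[::-1]
    return block[:, np.sort(order[:n_keep])]


processed = []
for name, block in omics_blocks.items():
    filtered = top_variance_features(block, n_keep=50)   # feature filtering
    scaled = StandardScaler().fit_transform(filtered)    # put blocks on one scale
    processed.append(scaled)

# Concatenation: N x (P1 + P2 + ... + Pk) after filtering.
fused = np.hstack(processed)

# Global dimensionality reduction on the concatenated matrix.
pca = PCA(n_components=5, random_state=0)
components = pca.fit_transform(fused)

print(fused.shape, components.shape)
```

The `components` matrix is what would be passed to clustering, classification, or survival models. Keeping the same number of features per block is one simple way to stop the largest omics layer from dominating the principal components.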

Diagram: Early Fusion Workflow: Data Concatenation & Joint Reduction

Intermediate Fusion (Feature-Level Integration)

Intermediate fusion integrates data by extracting shared representations or latent variables, often using matrix factorization or deep learning.

Experimental Protocol: Integration using MOFA (Multi-Omics Factor Analysis)

- Data Preparation: Center and scale each omics dataset. Handle missing values appropriately (e.g., via imputation or MOFA's built-in handling).

- Model Setup: Specify the omics data views and relevant likelihoods (Gaussian for continuous, Bernoulli for binary, Poisson for counts).

- Model Training: Run the MOFA algorithm to decompose the data matrices and infer a set of latent factors (Z) and corresponding weights (W_k) for each view k, such that E[Y_k] = Z W_k^T.

- Factor Inspection: Analyze the variance explained by each factor across omics types. Correlate factors with sample covariates (e.g., clinical outcome).

- Biological Interpretation: Project the latent factors onto gene sets or pathways for functional analysis.
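
The factor model and the variance-explained inspection can be illustrated without the MOFA software itself. The sketch below is not MOFA: it simulates two views from E[Y_k] = Z W_k^T and fits scikit-learn's `FactorAnalysis` on the concatenated views as a simple stand-in, with no sparsity priors or view-specific likelihoods. View names, sizes, noise level, and the factor activity pattern are all assumptions made for illustration.

```python
import numpy as np
from sklearn.decomposition import FactorAnalysis

rng = np.random.default_rng(1)
n_samples, n_factors = 100, 3
view_sizes = {"rna": 40, "protein": 30}

# Simulate Y_k = Z W_k^T + noise; factor 0 is shared, factors 1 and 2 are view-specific.
Z = rng.normal(size=(n_samples, n_factors))
activity = {"rna": np.array([1.0, 1.0, 0.0]), "protein": np.array([1.0, 0.0, 1.0])}
views = {}
for name, p in view_sizes.items():
    W_k = rng.normal(size=(p, n_factors)) * activity[name]   # shape (P_k, K)
    views[name] = Z @ W_k.T + 0.5 * rng.normal(size=(n_samples, p))

# Center each view, concatenate, and fit the stand-in factor model.
Y = np.hstack([v - v.mean(axis=0) for v in views.values()])
fa = FactorAnalysis(n_components=n_factors, random_state=0)
Z_hat = fa.fit_transform(Y)     # inferred factors, shape (N, K)
W_hat = fa.components_          # inferred weights, shape (K, sum of P_k)


def r2(y, y_hat):
    """Fraction of the total sum of squares in y captured by y_hat."""
    return 1.0 - ((y - y_hat) ** 2).sum() / (y ** 2).sum()


# Variance explained per view, for each factor and for all factors together.
variance_explained = {}
col_start = 0
for name, p in view_sizes.items():
    cols = slice(col_start, col_start + p)
    Y_k = Y[:, cols]
    per_factor = [r2(Y_k, np.outer(Z_hat[:, f], W_hat[f, cols])) for f in range(n_factors)]
    total = r2(Y_k, Z_hat @ W_hat[:, cols])
    variance_explained[name] = {"per_factor": per_factor, "total": total}
    col_start += p

for name, ve in variance_explained.items():
    print(name, [round(v, 2) for v in ve["per_factor"]], round(ve["total"], 2))
```

Because unconstrained factor analysis is only identified up to rotation, the per-factor values here need not align with the simulated factors; the per-view totals are the rotation-invariant quantity. MOFA's sparsity-inducing priors are what make individual factors interpretable as shared or view-specific.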

Table 2: Key Intermediate Fusion Methods & Tools

| Method | Underlying Principle | Key Output | Software/Package |

|---|---|---|---|

| MOFA+ | Bayesian group factor analysis | Shared latent factors across omics | R/Python MOFA2 |

| Multi-CCA | Finds correlated projections between datasets | Canonical variates (linear combinations) | PMA (R; MultiCCA), sklearn (Python; two-view CCA only) |

| iCluster | Joint latent variable model for clustering | Integrated cluster assignments | R iClusterPlus |

| Deep Autoencoder | Neural network learns compressed representation | Low-dimensional encoded features | TensorFlow, PyTorch |

Diagram: Intermediate Fusion via Shared Latent Space Learning

Late Fusion (Decision-Level Integration)

Late fusion builds separate models on each omics dataset and integrates their predictions.

Experimental Protocol: Stacked Generalization (Stacking) for Patient Stratification

- Base Model Training: For each of the k omics datasets, train a predictive model (e.g., SVM, Random Forest, Cox model) using cross-validation. Generate out-of-fold predictions for each sample.

- Meta-Feature Creation: Assemble the cross-validated predictions from all base models into a new N x k meta-feature matrix.

- Meta-Model Training: Train a final model (the meta-learner, often a simple logistic regression) on the meta-feature matrix to combine predictions.

- Validation: Perform nested cross-validation to assess the final integrated model's performance without data leakage.

- Interpretation: Examine the weights assigned to each base model by the meta-learner to understand the relative contribution of each omics type.
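
A minimal sketch of the first three steps and the interpretation step, using scikit-learn, is shown below. The data are simulated (the block names, sizes, and injected signal are assumptions), a random forest is used as the base learner for every block purely for brevity, and the outer loop of the nested cross-validation described in the validation step is omitted.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_predict

rng = np.random.default_rng(7)
n_samples = 80
y = np.repeat([0, 1], n_samples // 2)          # balanced binary outcome

# Hypothetical omics blocks; only "rna" and "protein" carry class signal.
omics_blocks = {
    "rna": rng.normal(size=(n_samples, 100)),
    "protein": rng.normal(size=(n_samples, 60)),
    "methylation": rng.normal(size=(n_samples, 120)),
}
omics_blocks["rna"][:, :5] += 1.5 * y[:, None]
omics_blocks["protein"][:, :3] += 1.0 * y[:, None]

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Base models: one per omics block, each contributing out-of-fold probabilities.
meta_columns = []
for name, X_k in omics_blocks.items():
    base_model = RandomForestClassifier(n_estimators=100, random_state=0)
    out_of_fold = cross_val_predict(base_model, X_k, y, cv=cv, method="predict_proba")[:, 1]
    meta_columns.append(out_of_fold)

# Meta-features: N x k matrix with one column per omics block.
meta_features = np.column_stack(meta_columns)

# Meta-learner: a simple logistic regression combining the base predictions.
meta_learner = LogisticRegression()
meta_learner.fit(meta_features, y)

# Interpretation: coefficient per omics block.
weights = dict(zip(omics_blocks, meta_learner.coef_[0]))
print(meta_features.shape, weights)
```

Using out-of-fold predictions as meta-features matters: if the base models predicted on their own training samples, the meta-learner would be trained on over-optimistic inputs. An unbiased performance estimate still requires wrapping the whole procedure in an outer cross-validation loop.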

Diagram: Late Fusion via Stacked Generalization (Stacking)

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Multi-Omics Experiments

| Item / Reagent | Function & Role in Multi-Omics Integration |

|---|---|

| Nucleic Acid Stabilization Reagents (e.g., PAXgene, RNAlater) | Preserve RNA/DNA integrity at collection from the same specimen, ensuring data alignment for genomics/transcriptomics. |

| Single-Cell Multi-Omics Kits (e.g., 10x Genomics Multiome ATAC + Gene Exp.) | Enable simultaneous profiling of chromatin accessibility and transcriptomics from the same single cell, providing inherently aligned data for integration. |

| Isobaric Mass Tag Kits (e.g., TMT, iTRAQ) | Allow multiplexed quantitative proteomics, enabling precise comparison of protein abundance across many samples for integration with transcriptomic data. |

| Methylation Arrays (e.g., Illumina EPIC) | Provide genome-wide CpG methylation profiles, a key epigenomic layer for integration with gene expression data. |

| Cell Line Authentication & Mycoplasma Detection Kits | Ensure sample quality and identity, a critical pre-requisite for valid data generation and integration across disparate omics assays. |

| Benchmark Multi-Omics Datasets (e.g., TCGA, CPTAC) | Provide gold-standard, publicly available matched omics data from the same patient cohorts for method development and validation. |

The choice of integration strategy is dictated by the biological question, data characteristics, and the nature of the expected signal. Early fusion is straightforward but brittle. Intermediate fusion is powerful for discovery but complex. Late fusion is robust and modular but may miss subtle interactions. A systematic, question-driven approach is essential to navigate the high-dimensionality of multi-omics data and extract actionable insights for precision medicine.

The integration of high-dimensional multi-omics data (genomics, transcriptomics, proteomics, metabolomics) presents a fundamental challenge in systems biology. Network-based analysis provides a critical framework to reduce this complexity by representing biological entities as nodes and their interactions as edges within a graph. This approach transforms disparate omics layers into interpretable models of signaling pathways, protein-protein interaction (PPI) networks, and gene regulatory circuits, enabling the extraction of mechanistic insights crucial for understanding disease etiology and identifying therapeutic targets.

Core Network Types

Biological networks are constructed from curated databases and high-throughput experiments.

| Network Type | Primary Components | Key Public Databases (Source: 2024 Update) |

|---|---|---|

| Protein-Protein Interaction (PPI) | Proteins (Nodes), Physical/Functional Associations (Edges) | STRING (v12.0), BioGRID (v4.4), IntAct, APID |

| Metabolic Pathways | Metabolites, Enzymes, Biochemical Reactions | KEGG (2023 Release), Reactome (2022), MetaCyc |

| Gene Regulatory | Transcription Factors, Target Genes, Regulatory Elements | RegNetwork, TRRUST (v2), ENCODE, ChIP-Atlas |

| Signaling Pathways | Signaling Molecules, Post-Translational Modifications | KEGG, Reactome, WikiPathways, PANTHER |

| Genetic Interaction | Synthetic Lethality, Epistasis | BioGRID, SynLethDB (v2.0) |

Quantitative Snapshot of Major Database Coverage (as of 2023-2024)

| Database | Organisms Covered | Interactions/Pathways | Primary Data Type |

|---|---|---|---|

| STRING | >14,000 | >67 million proteins; >2 billion interactions | Predicted & Experimental PPI |

| BioGRID | 85 | ~2.4 million genetic & protein interactions | Curated literature evidence |

| KEGG | 5,200+ organisms | 540+ pathway maps; 5,900+ metabolic modules | Curated pathways |

| Reactome | 204 species | ~12,700 human pathways & reactions | Curated & inferred pathways |

| IntAct | All major model organisms | ~1.2 million curated interactions | Molecular interaction data |

Core Methodological Framework: From Omics Data to Network Models

Standardized Protocol for Constructing a Context-Specific PPI Network

Objective: Integrate differential expression data with a global interactome to identify dysregulated subnetworks.

Materials & Workflow:

- Input Data: List of differentially expressed genes (DEGs) from RNA-seq (e.g., |log2FC| > 1, adj. p-value < 0.05).

- Background Interactome: Download a comprehensive, non-redundant PPI network from STRING or BioGRID. Apply a confidence score threshold (e.g., STRING combined score > 700 on the 0-1000 scale used in its download files, corresponding to "high confidence").

- Network Pruning: Extract the subnetworks induced by the DEGs (the "seed" nodes) and their first neighbors from the background network. This captures direct interactors potentially missed by expression analysis.

- Topological Analysis: Calculate node centrality metrics (degree, betweenness, closeness) using tools like Cytoscape or NetworkX.

- Functional Enrichment: Perform over-representation analysis (ORA) or gene set enrichment analysis (GSEA) on topologically significant nodes (e.g., high-degree hubs) using Gene Ontology (GO), KEGG, or Reactome libraries.
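
The thresholding, pruning, and topological analysis steps can be sketched with NetworkX as follows. The edge list, node names (G1 to G9), and scores are made up for illustration and do not come from any database; in practice the edges would be read from a STRING or BioGRID download.

```python
import networkx as nx

# Hypothetical scored interactions: (protein_a, protein_b, combined_score).
scored_edges = [
    ("G1", "G2", 950),
    ("G1", "G3", 900),
    ("G2", "G3", 800),
    ("G3", "G4", 850),
    ("G4", "G5", 750),
    ("G5", "G6", 300),   # below threshold, dropped
    ("G6", "G7", 920),
    ("G1", "G8", 500),   # below threshold, dropped
]
SCORE_MIN = 700

# Background interactome restricted to high-confidence edges.
background = nx.Graph()
background.add_weighted_edges_from(
    [(a, b, s) for a, b, s in scored_edges if s > SCORE_MIN], weight="score"
)

# Seed nodes: DEGs that are present in the interactome (G9 is not).
degs = {"G1", "G4", "G9"}
seeds = degs & set(background.nodes)

# Network pruning: seeds plus their first neighbors, then the induced subgraph.
selected = set(seeds)
for seed in seeds:
    selected.update(background.neighbors(seed))
subnetwork = background.subgraph(selected).copy()

# Topological analysis: degree, betweenness, and closeness centrality.
degree = nx.degree_centrality(subnetwork)
betweenness = nx.betweenness_centrality(subnetwork)
closeness = nx.closeness_centrality(subnetwork)
hub = max(degree, key=degree.get)

print(sorted(subnetwork.nodes), hub)
```

In this toy network the highest-degree node (G3) is not itself a DEG, which illustrates why first neighbors are included: they can be topologically central even when their expression does not change. The nodes ranked highest by centrality would then be passed to the enrichment step.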

Detailed Experimental Protocol for Co-Immunoprecipitation (Co-IP) Followed by Mass Spectrometry (MS) for PPI Validation

Title: Experimental Validation of Predicted Protein Interactions via Co-IP-MS.

Reagents & Equipment:

- Lysis Buffer: 50 mM Tris-HCl (pH 7.5), 150 mM NaCl, 1% NP-40, protease/phosphatase inhibitors.

- Antibodies: Specific antibody for the target protein (Bait), isotype control IgG, Protein A/G magnetic beads.

- Cell Line: Relevant mammalian cell line (e.g., HEK293T, HeLa).

- Mass Spectrometer: LC-MS/MS system (e.g., Q Exactive HF).

Procedure:

- Cell Lysis: Harvest 1x10^7 cells, wash with PBS, and lyse in 1 mL ice-cold lysis buffer for 30 min on ice. Centrifuge at 16,000 x g for 15 min at 4°C. Collect supernatant.

- Pre-clearing: Incubate lysate with 20 µL of bare magnetic beads for 30 min at 4°C. Discard beads.

- Immunoprecipitation: Split lysate into two tubes. To one, add 2-5 µg of specific anti-Bait antibody. To the other (control), add equivalent amount of control IgG. Incubate for 2 hours at 4°C with rotation.

- Bead Capture: Add 50 µL of Protein A/G beads to each tube. Incubate for 1 hour at 4°C with rotation.

- Washing: Pellet beads magnetically. Wash 5 times with 1 mL lysis buffer.

- Elution: Elute bound proteins by boiling beads in 40 µL of 1X Laemmli buffer for 10 min at 95°C.

- Mass Spec Preparation: Resolve eluates by SDS-PAGE (short run). Excise the entire lane, perform in-gel tryptic digestion, and desalt peptides.

- LC-MS/MS Analysis: Analyze peptides using a 60-min gradient on a C18 column coupled to the MS. Use data-dependent acquisition (DDA) mode.

- Data Analysis: Identify proteins using a search engine (MaxQuant, Proteome Discoverer) against a human UniProt database. Significant interactors are proteins enriched in the anti-Bait sample vs. control IgG (fold-change > 5, p-value < 0.01 by t-test). The t-test presupposes independent replicate IPs for both the anti-Bait and control IgG conditions.
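
The enrichment criterion in the final step can be sketched as follows. The protein names and log2 intensities are invented for illustration, three replicate IPs per condition are assumed, and the fold-change threshold is interpreted as a linear (not log2) ratio; none of these choices come from the protocol itself.

```python
import numpy as np
from scipy import stats

# Hypothetical log2 intensities: (anti-Bait replicates, control IgG replicates).
log2_intensities = {
    "PROT_A": ([28.1, 27.9, 28.3], [22.0, 22.4, 21.8]),
    "PROT_B": ([24.0, 24.3, 23.8], [23.9, 24.1, 24.2]),
    "PROT_C": ([26.5, 25.0, 27.5], [24.8, 24.2, 25.1]),
}

FOLD_CHANGE_MIN = 5.0    # linear fold-change threshold from the protocol
P_VALUE_MAX = 0.01       # t-test threshold from the protocol

results = {}
for protein, (bait, control) in log2_intensities.items():
    # Difference of log2 means equals the log2 fold-change.
    log2_fc = np.mean(bait) - np.mean(control)
    # Two-sample Student's t-test on the log2 intensities (equal variances assumed).
    _, p_value = stats.ttest_ind(bait, control)
    is_hit = log2_fc > np.log2(FOLD_CHANGE_MIN) and p_value < P_VALUE_MAX
    results[protein] = {"log2_fc": log2_fc, "p_value": p_value, "hit": is_hit}

hits = [protein for protein, r in results.items() if r["hit"]]
print(hits)
```

With these made-up values only PROT_A passes both thresholds: PROT_B is not enriched, and PROT_C shows a fold-change below 5. Requiring both criteria guards against proteins that are enriched on average but variable across replicates.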

Key Analytical Algorithms and Workflow Visualization

Diagram Title: Core Network Analysis Workflow for Multi-omics Data

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item / Reagent | Function in Network-Based Analysis | Example Product/Source |

|---|---|---|

| CRISPR/Cas9 Knockout Kits | Functional validation of hub genes. Enables genetic perturbation to test network robustness. | Synthego knockout kits; Horizon Discovery (Edit-R). |

| Validated Co-IP Antibodies | Essential for experimental validation of predicted PPIs from network models. | Cell Signaling Technology, Abcam (Validated for Co-IP). |

| Proximity-Dependent Labeling Reagents (e.g., BioID, APEX) | Maps proximal interactomes in live cells, providing spatial context to network edges. | BioID2 and APEX2 expression plasmids (e.g., Addgene). |

| Pathway Reporter Assays (Luciferase, GFP) | Tests activity of signaling pathways (e.g., NF-κB, Wnt) inferred from network analysis. | Qiagen Cignal Reporter Assays, Addgene plasmids. |

| Cytoscape with Plugins (cytoHubba, MCODE) | Open-source software platform for network visualization, clustering, and hub identification. | Cytoscape App Store. |