DIABLO for Biomarker Discovery: A Comprehensive Guide for Research Scientists in Multi-Omics Integration

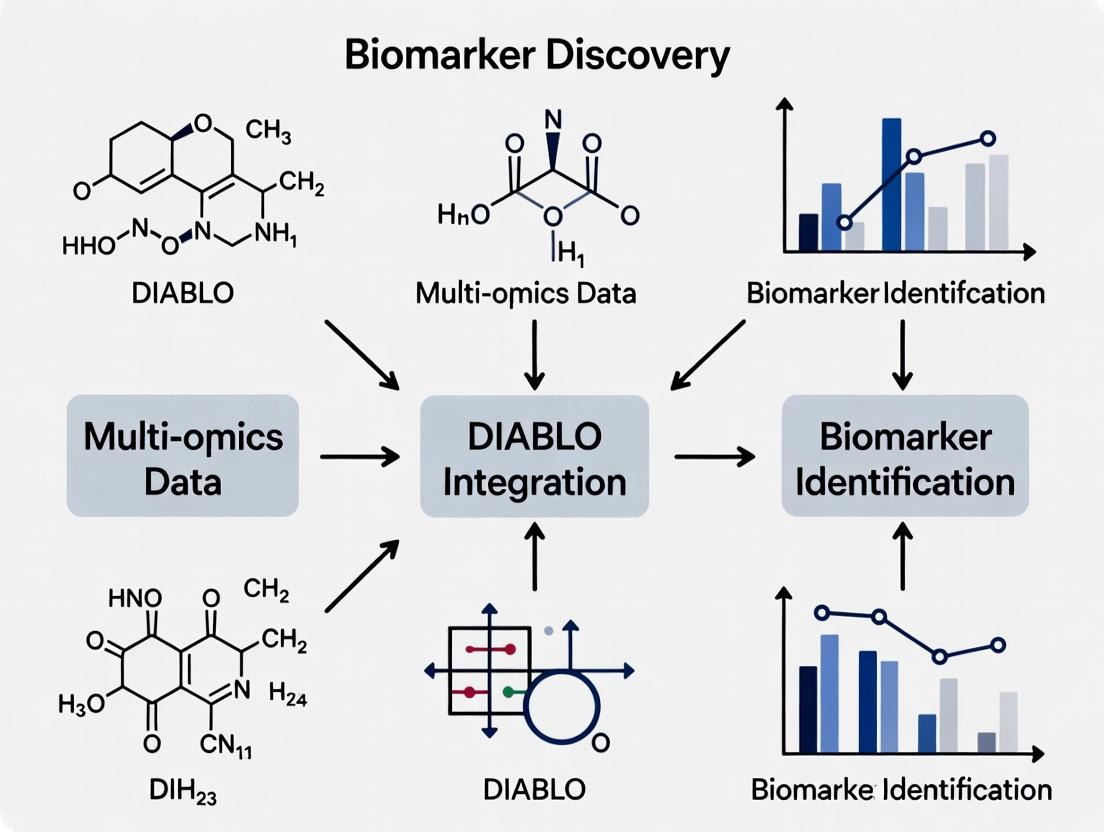


Abstract

This comprehensive guide explores the application of DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) for multi-omics biomarker discovery. Targeting researchers, scientists, and drug development professionals, the article provides a foundational overview of the method, detailed workflows for its application, practical troubleshooting advice, and frameworks for validating and comparing results. Readers will gain actionable insights for implementing DIABLO in their own studies, from experimental design to robust biomarker panel identification and biological interpretation, enhancing the translational potential of integrated omics data.

What is DIABLO? Unlocking Multi-Omics Integration for Translational Biomarker Research

1. Introduction & Core Principles

DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) is a multivariate method designed for the integrative analysis of multiple omics datasets (e.g., transcriptomics, proteomics, metabolomics) collected on the same set of biological samples. Its primary purpose in the systems biology toolkit is to identify robust multi-omics biomarker panels that maximally discriminate between experimental conditions, such as disease states.

Core Principles:

- Multi-Block Integration: DIABLO is a multi-block generalization of sparse Partial Least Squares Discriminant Analysis (sPLS-DA) for N-integration, that is, the integration of datasets measured on the same N samples. It identifies a set of "latent components" that summarize the variation within each dataset, maximize the covariance between datasets, and separate the classes.

- Sparse Modeling: DIABLO incorporates a penalty (like LASSO) to select a small subset of predictive and highly correlated features across blocks. This sparsity is crucial for identifying a concise, interpretable biomarker signature from high-dimensional data.

- Supervised Framework: The model is explicitly guided by the known sample class labels (e.g., healthy vs. disease), directing the integration towards components directly relevant to the biological outcome of interest.
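The three principles can be summarized in the optimization problem that DIABLO is usually described as solving: a sparse generalized canonical correlation analysis in which the class labels enter as an additional dummy-coded block. The notation is an assumption introduced here for illustration: $X_h^{(q)}$ is block $q$ (of $Q$ blocks) at component $h$, $a_h^{(q)}$ is its loading vector, $c_{ij}$ are the design matrix entries, and $\lambda^{(q)}$ controls the sparsity of block $q$.

```latex
\max_{a_h^{(1)},\dots,a_h^{(Q)}}
\sum_{\substack{i,j=1 \\ i \neq j}}^{Q}
c_{ij}\,\operatorname{cov}\!\left(X_h^{(i)} a_h^{(i)},\; X_h^{(j)} a_h^{(j)}\right)
\quad \text{subject to} \quad
\lVert a_h^{(q)} \rVert_2 = 1, \;\;
\lVert a_h^{(q)} \rVert_1 \le \lambda^{(q)}
\quad \text{for } q = 1,\dots,Q
```

The covariance terms correspond to multi-block integration, the $\ell_1$ constraint to sparse modeling, and the inclusion of the outcome among the blocks to the supervised framework.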

2. Quantitative Performance Metrics

The performance of a DIABLO model is typically evaluated through cross-validation. Key metrics include:

Table 1: Key Performance Metrics for DIABLO Model Evaluation

| Metric | Description | Typical Target |
|---|---|---|
| Balanced Error Rate | Average of the per-class misclassification rates, so that each class counts equally regardless of its size. | Minimize (closer to 0). |
| AUC (Area Under ROC Curve) | Measures the model's ability to discriminate between classes across all thresholds. | Maximize (closer to 1). |
| Component Correlation | The correlation between component scores from different omics blocks for the same latent variable. | High (e.g., >0.7) indicates strong multi-omics agreement. |
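To make the balanced error rate concrete, here is a minimal base-R sketch. The function name `balanced_error_rate` and the labels are hypothetical and chosen only for illustration; the example shows why the metric is preferred over the overall error rate when classes are unbalanced.

```r
# Balanced error rate (BER): the mean of the per-class error rates,
# so a large class cannot mask poor performance on a small one.
balanced_error_rate <- function(truth, predicted) {
  truth <- factor(truth)
  predicted <- factor(predicted, levels = levels(truth))
  confusion <- table(truth, predicted)            # rows = true class
  per_class_error <- 1 - diag(confusion) / rowSums(confusion)
  mean(per_class_error)
}

# Hypothetical labels: 8 controls, 2 cases, one case misclassified
truth     <- c(rep("control", 8), rep("case", 2))
predicted <- c(rep("control", 8), "case", "control")

ber           <- balanced_error_rate(truth, predicted)
overall_error <- mean(truth != predicted)
print(c(BER = ber, overall = overall_error))
```

Here one of the two cases is missed, so the per-class error is 0.5 for cases and 0 for controls; the BER averages these, while the overall error rate is diluted by the eight correctly classified controls.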

3. Application Note: A Standard DIABLO Workflow for Biomarker Discovery

Objective: Identify a coordinated mRNA-miRNA-protein biomarker signature distinguishing two phenotypes.

Protocol:

Step 1: Data Preprocessing & Input Formatting.

- Input: Three matched datasets (mRNA expression, miRNA expression, protein abundance) from the same n samples, with a class label vector of length n.

- Processing: Independently preprocess each dataset (normalization, log-transformation, missing value imputation). Features are centered and scaled by default.

- Format: Data must be structured as a named list of matrices, e.g. `list(mRNA = X_mrna, miRNA = X_mirna, Protein = X_prot)`. Rows are samples, columns are features.

Step 2: Design Matrix Tuning.

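- The design matrix defines the target correlation network between omics blocks. It is a square, symmetric matrix whose off-diagonal values (between 0 and 1) specify how strongly the model should favor correlation between each pair of blocks.

- Protocol: Start with a full design (value = 1 between all blocks). The design value is set by the analyst and is not tuned by `tune.block.splsda()`: lower values put more weight on class discrimination, higher values on between-block correlation. Use `tune.block.splsda()` with repeated cross-validation to optimize the number of features to select per block and per component.

- Output: The chosen design matrix and the number of features per block and component (the `keepX` list) that minimizes the balanced error rate.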

Step 3: Model Building.

- Execute the final `block.splsda()` function with the tuned parameters.

Step 4: Model Evaluation & Feature Selection.

- Assess performance using `perf()` with repeated cross-validation.

- Extract selected features: `selectVar(diablo_model, block = 'mRNA', comp = 1)$mRNA$name`

- Validate the signature on an independent test set if available.
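The model-building and feature-extraction steps above can be sketched as follows. This is a minimal illustration, not a reference analysis: the data are simulated, the helper `simulate_block` and all feature names are hypothetical, and `keepX` is fixed by hand rather than tuned. It assumes the `mixOmics` package is installed.

```r
library(mixOmics)

set.seed(42)
n <- 40
Y <- factor(rep(c("control", "disease"), each = n / 2))

# Simulated stand-ins for preprocessed omics matrices (assumption): the
# first five features of every block are shifted in the disease group.
simulate_block <- function(p, prefix) {
  M <- matrix(rnorm(n * p), nrow = n,
              dimnames = list(paste0("S", seq_len(n)),
                              paste0(prefix, seq_len(p))))
  M[Y == "disease", 1:5] <- M[Y == "disease", 1:5] + 2
  M
}
X <- list(mRNA    = simulate_block(50, "gene"),
          miRNA   = simulate_block(30, "mir"),
          Protein = simulate_block(20, "prot"))

# Full design: 1 between every pair of blocks, 0 on the diagonal
design <- matrix(1, nrow = length(X), ncol = length(X),
                 dimnames = list(names(X), names(X)))
diag(design) <- 0

# Fixed keepX for illustration; in practice taken from tune.block.splsda()
keepX <- list(mRNA = c(5, 5), miRNA = c(5, 5), Protein = c(5, 5))

diablo_model <- block.splsda(X, Y, ncomp = 2, keepX = keepX, design = design)

# Features selected on component 1 of the mRNA block
selected_mrna <- selectVar(diablo_model, block = "mRNA", comp = 1)$mRNA$name
print(selected_mrna)
```

With real data, `X` would hold the preprocessed blocks from Step 1 and `keepX` the values returned by the tuning step.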

4. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for a DIABLO-based Multi-Omics Study

| Item / Solution | Function in DIABLO Workflow |
|---|---|
| R Statistical Environment | Core platform for computational analysis. |
| mixOmics R Package | Implements the DIABLO algorithm and provides all essential functions for tuning, plotting, and evaluation. |
| Sample Multi-Omics Dataset (e.g., TCGA, PRIDE) | Publicly available or proprietary matched multi-omics data required for model training and validation. |
| High-Performance Computing (HPC) Access | Facilitates the computationally intensive cross-validation and tuning steps, especially for large datasets. |
| Benchmarking Datasets | Curated, publicly available multi-omics datasets with known outcomes used for method validation and comparison. |

5. Visualizing the DIABLO Framework and Workflow

(Diagram: DIABLO Integration Workflow)

(Diagram: DIABLO Core Integration Principle)

Within the broader thesis on biomarker discovery, DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) from the mixOmics R package represents a paradigm shift from single-omics investigations. It enables the integrative analysis of multiple, matched omics datasets (e.g., transcriptomics, proteomics, metabolomics) to identify a consensus multi-omics biomarker signature with superior robustness and biological interpretability compared to single-omics models.

Table 1: Key Comparative Metrics: DIABLO vs. Single-Omics Analysis

| Metric | Single-Omics Analysis (e.g., RNA-seq only) | DIABLO Multi-Omics Integration | Implication for Biomarker Discovery |
|---|---|---|---|
| Data Type | One block (e.g., gene expression) | ≥2 matched blocks (e.g., mRNA, miRNA, proteins) | Holistic view of molecular regulation. |
| Signature Concordance | Limited to one layer. | Cross-validated, correlated features across omics. | Higher mechanistic plausibility. |
| Prediction Performance | Varies; can be prone to overfitting. | Often improved and more stable (AUC increase 5-15% reported). | More reliable diagnostic/prognostic models. |
| Biological Interpretation | Isolated; hard to infer causality. | Reveals networks (e.g., mRNA-miRNA-protein). | Identifies functional drivers and pathways. |
| Handling Redundancy | Within-omics correlation only. | Models between-omics covariance explicitly. | Distills core, multi-omics regulatory modules. |

Core Application Notes

Primary Use Case: Identification of a composite biomarker panel for disease classification (e.g., Cancer vs. Normal) or subtyping.

Key Advantage: Building on sparse generalized canonical correlation analysis, DIABLO finds one linear combination of selected features per omics block such that these combinations covary strongly with each other and with the outcome.

Output: A set of latent components, each comprising a weighted list of selected features from all blocks, representing a multi-omics molecular signature.

Detailed Experimental Protocol for Biomarker Discovery

Protocol: DIABLO Framework for Multi-Omics Biomarker Discovery

A. Prerequisites & Experimental Design

- Sample Cohort: Collect matched multi-omics data from the same biological samples, with enough samples per class in every block to support cross-validation. Common blocks: mRNA, miRNA, DNA methylation, proteomics, metabolomics.

- Data Preprocessing: Perform platform-specific normalization, log-transformation, and missing value imputation individually per block.

- Outcome Variable: Define a categorical outcome (Y) (e.g., Disease Subtype A, B, C; Responder vs. Non-Responder).

B. DIABLO Analysis Workflow

- Setup & Parameter Tuning:

  - Input: Formatted data list `X` (blocks) and factor vector `Y`.

  - Design Matrix: Define the between-block correlation to target. A full design (value = 1 between all blocks) encourages strong correlation.

  - Number of Components (`ncomp`): Determine via cross-validation (usually 2-3).

  - Feature Selection: Use `tune.block.splsda()` to perform 10-fold CV to optimize the number of features to select per block and per component (`list.keepX`).

- Model Training:

  - Run the final model with `block.splsda()` using the tuned `list.keepX`.

  - Example call: `final.model <- block.splsda(X = list(transcriptome = mrna, proteome = protein), Y = outcome, ncomp = 3, design = 'full', keepX = tuned.keepX)`

- Model Evaluation:

  - Perform repeated k-fold CV (`perf()`) to estimate balanced error rate and AUC.

  - Draw the sample plot (`plotIndiv()`) to visualize sample clustering on the first two components.

- Biomarker Signature Extraction & Validation:

  - Extract selected features: `selectVar(final.model, comp = 1)$transcriptome$name`

  - Validate the signature on an independent test set using `predict()`.

  - Perform pathway enrichment analysis on the integrated feature list.
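The tuning and training steps of this workflow can be sketched as below. This is an illustrative example under stated assumptions: the data are simulated, `make_block` is a hypothetical helper, and the grid, fold count (5 instead of the 10 mentioned above), and single repeat are kept small so the example runs quickly. It assumes the `mixOmics` package is installed.

```r
library(mixOmics)

set.seed(1)
n <- 30
outcome <- factor(rep(c("A", "B"), each = n / 2))

# Simulated blocks (assumption): first five features separate the classes
make_block <- function(p, prefix) {
  M <- matrix(rnorm(n * p), nrow = n,
              dimnames = list(paste0("S", seq_len(n)),
                              paste0(prefix, seq_len(p))))
  M[outcome == "B", 1:5] <- M[outcome == "B", 1:5] + 2
  M
}
X <- list(transcriptome = make_block(40, "gene"),
          proteome      = make_block(25, "prot"))

# Full design between the two blocks, 0 on the diagonal
design <- matrix(1, 2, 2, dimnames = list(names(X), names(X)))
diag(design) <- 0

# Candidate numbers of features per block (tested on every component)
test.keepX <- list(transcriptome = c(5, 10), proteome = c(5, 10))

tuned <- tune.block.splsda(X, outcome, ncomp = 2,
                           test.keepX = test.keepX, design = design,
                           validation = "Mfold", folds = 5, nrepeat = 1,
                           dist = "centroids.dist", progressBar = FALSE)
tuned.keepX <- tuned$choice.keepX   # one vector of length ncomp per block

# Final model with the tuned sparsity
final.model <- block.splsda(X, outcome, ncomp = 2,
                            keepX = tuned.keepX, design = design)
print(tuned.keepX)
```

On real data, a larger grid and several repeats (`nrepeat`) give more stable choices at the cost of run time.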

C. Downstream Biological Validation

- Correlation Networks: Plot the contribution and correlations of selected features across blocks for a component using `network()`.

- Circos Plot: Visualize correlations between selected features across all blocks and components (`circosPlot()`).

Visualizations

(Diagram: Single-Omics vs DIABLO Workflow Comparison)

(Diagram: DIABLO Biomarker Discovery Protocol)

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for DIABLO-Driven Biomarker Studies

| Category / Item | Example Product / Technology | Function in DIABLO Workflow |
|---|---|---|
| Sample Preparation | PAXgene Blood RNA tubes (Qiagen), RIPA Lysis Buffer (Thermo) | Stabilize and extract high-quality nucleic acids/proteins from matched clinical samples. |
| Multi-Omics Profiling | NovaSeq 6000 (Illumina), TMTpro 16plex (Thermo), Vanquish UHPLC (Thermo) | Generate matched transcriptomic (RNA-seq), proteomic (MS), and metabolomic (LC-MS) data blocks. |
| Data QC & Normalization | FastQC, DESeq2 (R), Proteome Discoverer | Perform per-block quality control, normalization, and initial feature quantification. |
| Computational Analysis | RStudio with mixOmics, caret, igraph packages | Implement the DIABLO pipeline, perform CV, and visualize correlation networks. |
| Statistical Validation | pROC (R package), ClustVis (web tool) | Calculate AUC metrics and generate validation plots for the final biomarker signature. |
| Pathway Analysis | Ingenuity Pathway Analysis (IPA) (Qiagen), g:Profiler | Interpret the biological meaning of the discovered multi-omics feature set. |

Within the broader thesis on the multi-omics integrative analysis tool DIABLO (Data Integration Analysis for Biomarker discovery using Latent variable approaches for 'Omics studies), this document details its application in biomarker discovery research. DIABLO enables the joint analysis of multiple data types (e.g., transcriptomics, proteomics, metabolomics) to identify multi-omics biomarker signatures, uncover disease heterogeneity, and elucidate underlying biological mechanisms. The following application notes and protocols focus on three critical use cases.

Application Note 1: Disease Subtyping for Precision Medicine

Objective

To stratify a heterogeneous patient population into distinct molecular subtypes using multi-omics data, enabling tailored therapeutic strategies.

DIABLO-Driven Workflow

DIABLO integrates data from multiple 'omics blocks measured on the same samples. It identifies latent components that maximize the covariance between the data blocks while discriminating the known classes. Cluster analysis on the component scores can then reveal patient subgroups with distinct multi-omics profiles.

Key Data Outputs

Table 1: Example Output from a DIABLO-Driven Cancer Subtyping Study (n=150 patients)

| Identified Subtype | Number of Patients | Key Discriminatory Omics Features | Associated Clinical Trait |
|---|---|---|---|
| Subtype A (Metabolic) | 65 | High Glycolytic Proteins, Low Phospholipids | Worse Response to Standard Chemo |
| Subtype B (Inflammatory) | 52 | High Cytokine mRNA, Activated T-cell Proteome | Better Immunotherapy Outcome |
| Subtype C (Quiescent) | 33 | Low Proliferation Markers Across All Omics | Most Favorable Prognosis |

Protocol: Multi-Omics Patient Stratification Using DIABLO

Step 1: Data Preprocessing & Setup

- Gather matched multi-omics datasets (e.g., mRNA-seq, miRNA-seq, proteomics) from the same patient cohort.

- Perform platform-specific normalization, log-transformation, and missing value imputation.

- Format data into a list of matrices (`X`), where each matrix is an 'omics data type with matching rows (patients/samples).

- Define the outcome `Y` as a factor of known clinical classes (e.g., disease state). DIABLO is a supervised method and needs at least two classes in `Y`; when no class labels are available, the supervised model does not apply and an unsupervised multi-block method is needed instead.

Step 2: DIABLO Model Design & Tuning

- Use the `block.plsda()` or `block.splsda()` functions (from the `mixOmics` R package) for supervised analysis.

- Choose the number of components from the cross-validated error rates of an initial model (`perf()`), then perform cross-validation with `tune.block.splsda()` to determine the number of features to select per 'omics data type and per component.

Step 3: Model Fitting & Assessment

- Run the final DIABLO model with the tuned parameters.

- Evaluate the model's performance using `perf()` for cross-validated error rates and `auroc()` for AUC-ROC curves.

Step 4: Subtype Identification & Characterization

- Generate sample plots (`plotIndiv()`) to visualize patient clustering in the latent component space.

- Perform consensus clustering (e.g., using `ConsensusClusterPlus`) on the DIABLO component scores to define robust subtypes.

- Use the `plotDiablo()` and `circosPlot()` functions to examine correlations between selected features from different omics layers that define each subtype.
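The clustering step can be sketched in base R. Two simplifications are assumed here and should be read as such: plain k-means is swapped in for consensus clustering to keep the example short, and the component scores are simulated rather than taken from a fitted model (in a fitted `block.splsda` object they are stored per block in `$variates`, which may also contain an entry for the outcome that would be dropped first).

```r
set.seed(7)

# Hypothetical stand-in for DIABLO component scores: one
# (samples x components) matrix per omics block.
n_per_group <- 20
centers <- rbind(c(-3, 0), c(3, 0), c(0, 4))
make_scores <- function() {
  do.call(rbind, lapply(1:3, function(g) {
    cbind(comp1 = rnorm(n_per_group, centers[g, 1], 0.5),
          comp2 = rnorm(n_per_group, centers[g, 2], 0.5))
  }))
}
variates <- list(mRNA = make_scores(), protein = make_scores())

# Average the block-specific scores into one score matrix per sample
avg_scores <- Reduce(`+`, variates) / length(variates)

# k-means as a simple stand-in for consensus clustering
clusters <- kmeans(avg_scores, centers = 3, nstart = 25)$cluster
print(table(clusters))
```

Averaging across blocks is one simple way to obtain a single coordinate per patient; clustering each block's scores separately and comparing the partitions is an equally reasonable alternative.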

The Scientist's Toolkit

Table 2: Essential Reagents & Solutions for Multi-Omics Subtyping

| Item | Function in Workflow |
|---|---|
| PAXgene Blood RNA Tube | Stabilizes intracellular RNA for transcriptomic profiling from blood samples. |
| FFPE Tissue RNA Extraction Kit | Isolates high-quality RNA from archived formalin-fixed paraffin-embedded (FFPE) tissue blocks. |
| TMTpro 16plex Kit | Enables multiplexed quantitative proteomic analysis of up to 16 samples in a single LC-MS/MS run. |
| CIL/IL LC-MS Metabolite Standards | Isotope-labeled internal standards for accurate quantification of metabolites in mass spectrometry. |
| Cell-Free DNA Collection Tubes | Preserves circulating cell-free DNA for genomic and epigenomic analysis of liquid biopsies. |

(Diagram: Workflow for Disease Subtyping with DIABLO)

Application Note 2: Multi-Omics Prognostic Biomarker Panels

Objective

To discover and validate a robust panel of biomarkers from multiple 'omics layers that predicts clinical outcomes (e.g., survival, recurrence).

DIABLO-Driven Workflow

DIABLO identifies a small, correlated set of features across different data types that are jointly predictive of a categorical outcome; time-to-event outcomes are first converted into risk groups. The resulting multi-omics signature often has superior performance to single-omics markers.

Key Data Outputs

Table 3: Performance of a Hypothetical DIABLO-Derived Prognostic Panel vs. Single-Omics Signatures

| Biomarker Signature Source | Number of Features | Concordance Index (C-Index) | Integrated AUC (5-Year) |
|---|---|---|---|
| DIABLO Panel (Multi-Omics) | 12 (4 mRNA, 3 miRNA, 5 Proteins) | 0.82 | 0.89 |
| Transcriptomics Only | 8 mRNA | 0.71 | 0.75 |
| Proteomics Only | 6 Proteins | 0.76 | 0.80 |
| Clinical Factors Only | 3 (Age, Stage, Grade) | 0.65 | 0.68 |

Protocol: Building a Prognostic Panel with DIABLO

Step 1: Cohort Definition & Data Integration

- Assemble a discovery cohort with matched multi-omics data and long-term clinical follow-up (survival data).

- Preprocess data as in Application Note 1. Record the clinical outcome as survival data (time + event).

- Because `block.splsda` requires a categorical outcome, derive survival risk groups (e.g., high vs. low risk from a median survival split) and use them as `Y` in a supervised design for feature selection.

Step 2: Feature Selection & Panel Definition

- From the tuned DIABLO model, extract the selected variables across all components and omics datasets using the `selectVar()` function.

- Prioritize features that are consistently selected across cross-validation folds and have high component loading weights.

- Define the final panel as the union of top features from each omics type.
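Prioritizing consistently selected features amounts to tallying how often each feature is chosen across cross-validation folds. The sketch below uses made-up selections for one block and one component; the feature names and the 80% threshold are assumptions for illustration only.

```r
# Hypothetical selections from 5 cross-validation folds
fold_selections <- list(
  c("geneA", "geneB", "geneC"),
  c("geneA", "geneB", "geneD"),
  c("geneA", "geneC", "geneE"),
  c("geneA", "geneB", "geneC"),
  c("geneA", "geneB", "geneF")
)

# Number of folds in which each feature was selected
selection_counts <- sort(table(unlist(fold_selections)), decreasing = TRUE)
stability <- selection_counts / length(fold_selections)

# Keep features chosen in at least 4 of 5 folds (threshold is arbitrary)
stable_features <- names(selection_counts)[selection_counts >= 4]
print(stability)
print(stable_features)
```

The same tally can be repeated per block and per component before taking the union that defines the final panel.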

Step 3: Prognostic Model Building & Validation

- Using only the selected panel features, build a multivariate Cox proportional hazards model in the discovery cohort.

- Calculate a risk score for each patient (e.g., linear predictor from Cox model).

- Validate the risk score's prognostic performance in an independent validation cohort using the C-index and Kaplan-Meier log-rank analysis.
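The model-building and validation steps can be sketched with the `survival` package. Everything about the data is an assumption: the cohort is simulated, the three feature names are hypothetical, and a single simulated cohort is split into discovery and validation parts, whereas a real study would use a genuinely independent validation cohort.

```r
library(survival)

set.seed(11)
n <- 300

# Simulated panel features and survival times (assumption)
panel <- data.frame(mRNA_1 = rnorm(n), miRNA_1 = rnorm(n), protein_1 = rnorm(n))
true_risk   <- 0.8 * panel$mRNA_1 - 0.6 * panel$protein_1
event_time  <- rexp(n, rate = 0.1 * exp(true_risk))
censor_time <- rexp(n, rate = 0.05)
cohort <- cbind(panel,
                time  = pmin(event_time, censor_time),
                event = as.integer(event_time <= censor_time))
discovery  <- cohort[1:200, ]
validation <- cohort[201:300, ]

# Multivariate Cox model on the panel features (discovery cohort)
cox_fit <- coxph(Surv(time, event) ~ mRNA_1 + miRNA_1 + protein_1,
                 data = discovery)

# Risk score = linear predictor, computed for the validation cohort
validation$risk_score <- predict(cox_fit, newdata = validation, type = "lp")

# C-index in the validation cohort; reverse = TRUE because a higher
# risk score should go with shorter survival
c_index <- concordance(Surv(time, event) ~ risk_score,
                       data = validation, reverse = TRUE)$concordance

# Log-rank test between high- and low-risk groups (median split)
validation$risk_group <- ifelse(
  validation$risk_score > median(validation$risk_score), "high", "low")
logrank <- survdiff(Surv(time, event) ~ risk_group, data = validation)

print(c_index)
print(logrank)
```

Kaplan-Meier curves for the two risk groups can be drawn from `survfit(Surv(time, event) ~ risk_group, data = validation)`.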

Step 4: Biological Interpretation

- Perform pathway enrichment analysis on the selected features (e.g., using `geneSetTest` for genes, MetaboAnalyst for metabolites).

- Visualize the correlation network of the final panel features using `network()`.

(Diagram: Prognostic Panel Development Workflow)

Application Note 3: Mechanism-Driven Discovery

Objective

To uncover interconnected molecular mechanisms across omics layers that drive a phenotype, generating testable hypotheses for functional validation.

DIABLO-Driven Workflow

DIABLO identifies strongly correlated (and potentially causally linked) variables across data types. For example, a transcription factor (proteomics), its target genes (transcriptomics), and related metabolites (metabolomics) may be selected together in a component, suggesting a functional module.

Key Data Outputs

Table 4: Example Multi-Omics Module Discovered by DIABLO in Inflammatory Disease

| Omics Layer | Selected Feature | Known Function | Correlation to Latent Component 1 |
|---|---|---|---|
| Phosphoproteomics | p-STAT3 (Y705) | Inflammatory signaling hub | +0.92 |
| Transcriptomics | SOCS3 mRNA | STAT3-induced feedback inhibitor | +0.88 |
| Transcriptomics | IL6ST mRNA (gp130) | IL-6 receptor subunit | +0.85 |
| Metabolomics | Kynurenine | Tryptophan metabolite, immune suppressive | +0.90 |

Protocol: Mechanism Hypothesis Generation with DIABLO

Step 1: Experimental Design & Integration

- Design an experiment comparing two biologically distinct conditions (e.g., treated vs. control, diseased vs. healthy) with deep multi-omics profiling.

- Process data into the `X` list. The outcome `Y` is the class vector for the two conditions.

- Run a supervised DIABLO (`block.splsda`) to find features discriminating the conditions.

Step 2: Extraction of Correlated Multi-Omics Networks

- For each DIABLO component, extract the top features from each block and their loadings.

- Use the `network()` function to generate and visualize the correlation network for a specific component, highlighting inter-omics connections.

- Export the adjacency matrix for network analysis in tools like Cytoscape.
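The idea behind the adjacency export can be sketched in base R. Note the assumption: this uses plain Pearson correlations between simulated features from two blocks as a stand-in and is not the similarity measure computed internally by `network()`; the feature names, the cutoff of 0.7, and the output file name are hypothetical.

```r
set.seed(3)
n <- 50

# Simulated selected features: gene_1 and prot_1 share a latent signal
latent <- rnorm(n)
mrna <- cbind(gene_1 = latent + rnorm(n, sd = 0.3), gene_2 = rnorm(n))
prot <- cbind(prot_1 = latent + rnorm(n, sd = 0.3), prot_2 = rnorm(n))

# Cross-block correlations (rows = mRNA features, columns = proteins)
cross_cor <- cor(mrna, prot)

# Keep only strong associations as weighted edges
cutoff <- 0.7
adjacency <- ifelse(abs(cross_cor) >= cutoff, cross_cor, 0)

# Edge list that can be imported into Cytoscape
edges <- as.data.frame(as.table(adjacency), responseName = "correlation")
names(edges)[1:2] <- c("source", "target")
edges <- edges[edges$correlation != 0, ]

out_file <- file.path(tempdir(), "diablo_edges.csv")
write.csv(edges, out_file, row.names = FALSE)
print(edges)
```

Only the pair built from the shared latent signal survives the cutoff, which is the pattern one looks for when reading a component as a functional module.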

Step 3: Functional Annotation & Hypothesis Formation

- For each correlated multi-omics set, perform over-representation analysis of genes/proteins against pathway databases (KEGG, Reactome).

- Map metabolites to associated enzymatic pathways (via KEGG or HMDB).

- Formulate a mechanistic hypothesis: e.g., "Inhibition of X kinase (phosphoproteomics) leads to downregulation of Y pathway transcripts and accumulation of Z metabolite."

Step 4: Experimental Prioritization & Validation

- Prioritize central hub nodes in the network (high degree of inter-omics connections) as key candidate regulators.

- Design functional experiments (e.g., siRNA knockdown, small molecule inhibition, overexpression) targeting the hub and measure downstream multi-omics effects to validate the predicted connections.

(Diagram: Mechanism Discovery from Multi-Omics Correlation)

Within the broader thesis on utilizing DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) for multi-omics biomarker discovery in complex diseases, a fundamental prerequisite is a comprehensive understanding of the mixOmics R/Bioconductor framework and its specific input data requirements. This protocol outlines the core principles, data structures, and pre-processing steps necessary to effectively employ DIABLO for integrative analysis aimed at identifying robust, multi-modal biomarker panels in translational research and drug development.

The mixOmics Framework: Core Components

mixOmics is an R package providing a comprehensive suite of multivariate methods for the integration and exploration of large-scale biological datasets. For DIABLO-based biomarker discovery, the following components are critical:

- Data Integration Paradigm: Employs variants of Projection to Latent Structures (PLS) methods, extending to multi-block integration via DIABLO (multi-block sPLS-DA, implemented as `block.splsda()`).

- Statistical Engine: Uses sparse modeling to identify a small subset of discriminative variables (potential biomarkers) from each dataset.

- Visualization Toolkit: Offers functions for result interpretation, including sample plots, variable loadings plots, correlation circle plots, and network representations.

Input Data Requirements and Structures

Successful application requires data to be structured in a specific format. The following table summarizes the mandatory input specifications.

Table 1: Core Input Data Specifications for DIABLO Analysis

| Feature | Requirement | Description | Impact on Analysis |
|---|---|---|---|
| Data Types | Minimum: 2 blocks | Matrices of matching samples (rows) for features (columns). Common blocks: Transcriptomics, Proteomics, Metabolomics, Methylation. | Defines the integration scope. DIABLO is designed for 2+ blocks. |
| Sample Matching | Strict 1-to-1 correspondence | The same N biological samples must be present across all blocks (in the same row order). | Foundation for multi-omics integration. Mismatches cause erroneous correlations. |
| Data Format | Numeric matrix | Each block (X1, X2, ...) must be a numeric matrix or data frame. Categorical variables must be encoded. | Required for mathematical computation. Non-numeric data will throw an error. |
| Class Vector (Y) | Categorical factor | A single factor vector of length N defining the known sample classes (e.g., Disease vs. Control, multiple subtypes). | The supervised component drives the search for discriminative features. |
| Nomenclature | Consistent IDs | Row names (samples) must match across all matrices and the class vector Y. Column names (features) should be unique within each block. | Ensures correct alignment of samples and feature identification in results. |
| Missing Values | Pre-processed | The framework cannot handle NA values directly. Imputation or removal is a mandatory pre-processing step. | Missing data will cause function failure. Strategy must be documented. |
| Scale | Usually centered & scaled | For features measured on different scales (e.g., gene counts vs. metabolite intensities), scaling is typically applied internally or beforehand. | Prevents high-variance domains from dominating the component calculation. |

Experimental Protocol: Data Preparation for DIABLO

Protocol 4.1: Data Curation and Sample Alignment

Objective: To curate multi-omics datasets into the strictly aligned format required by mixOmics.

- Begin with raw or pre-processed data matrices from each omics platform.

- Extract and standardize sample identifiers across all datasets.

- Perform sample intersection: retain only samples present in all measured datasets.

- Order the samples identically in each data matrix and the class label vector.

- Verify alignment by checking that `all(rownames(X1) == rownames(X2))` and `all(rownames(X1) == names(Y))` both return `TRUE`, and likewise for every additional block.
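The curation steps above can be sketched in base R as follows. The two small blocks, the sample IDs, and the class labels are hypothetical; the point is the intersect, reorder, verify sequence.

```r
# Hypothetical blocks with different sample sets and row orders
X1 <- matrix(1:8, nrow = 4,
             dimnames = list(c("P1", "P2", "P3", "P4"), c("g1", "g2")))
X2 <- matrix(1:6, nrow = 3,
             dimnames = list(c("P3", "P1", "P4"), c("m1", "m2")))
Y <- factor(c("disease", "control", "disease", "control"))
names(Y) <- c("P4", "P1", "P3", "P2")

blocks <- list(mRNA = X1, miRNA = X2)

# Samples present in every block and in the class vector
shared <- Reduce(intersect, c(lapply(blocks, rownames), list(names(Y))))
shared <- sort(shared)

# Subset and reorder everything identically
blocks <- lapply(blocks, function(M) M[shared, , drop = FALSE])
Y <- Y[shared]

# Verify alignment: every block must have exactly the same row names as Y
aligned <- all(sapply(blocks, function(M) identical(rownames(M), names(Y))))
stopifnot(aligned)
print(shared)
```

Using `identical()` rather than `==` also catches blocks whose row names differ in length, which `==` would silently recycle.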

Protocol 4.2: Pre-processing and Normalization

Objective: To address technical variance and make datasets comparable.

- Perform platform-specific normalization (e.g., TPM for RNA-Seq, median normalization for proteomics, probabilistic quotient for metabolomics).

- Apply variance-stabilizing transformations if needed (e.g., log2 for sequencing count data).

- Handle missing values using an appropriate method (e.g., k-nearest neighbors imputation, minimum value imputation for proteomics). Document the method and proportion of imputed values.

- Filter non-informative features: Remove features with near-zero variance or excessive missingness prior to imputation.
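A minimal base-R sketch of the transform, filter, impute sequence is shown below. The raw matrix, the 50% missingness threshold, the variance threshold, and the minimum-value imputation are all assumptions chosen for illustration; platform-specific normalization is deliberately left out.

```r
set.seed(5)

# Hypothetical raw block: 6 samples x 5 features of positive intensities
raw <- matrix(rlnorm(30, meanlog = 5), nrow = 6,
              dimnames = list(paste0("S", 1:6), paste0("f", 1:5)))
raw[, "f2"] <- 100            # constant feature (zero variance)
raw[1:4, "f3"] <- NA          # excessive missingness
raw[2, "f4"] <- NA            # sporadic missing value

# 1. Log-transform
logged <- log2(raw + 1)

# 2. Filter before imputation: drop features that are mostly missing
#    or have (near-)zero variance
missing_frac <- colMeans(is.na(logged))
variances <- apply(logged, 2, var, na.rm = TRUE)
keep <- missing_frac <= 0.5 & variances > 1e-8
filtered <- logged[, keep, drop = FALSE]

# 3. Impute remaining gaps with the per-feature minimum
imputed <- apply(filtered, 2, function(v) {
  v[is.na(v)] <- min(v, na.rm = TRUE)
  v
})

# Document the proportion of imputed values
imputed_fraction <- mean(is.na(filtered))
print(colnames(imputed))
print(imputed_fraction)
```

Filtering before imputation, as the last list item recommends, avoids filling in features that would be discarded anyway.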

Protocol 4.3: Input Object Construction in R

Objective: To create the final input object for the block.splsda() function.
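A minimal sketch of the input object, assuming the blocks have already been aligned and pre-processed as in Protocols 4.1 and 4.2. The data are simulated and the helper `make_block`, the block names, and the sample IDs are hypothetical; the checks mirror the requirements in Table 1.

```r
set.seed(9)
sample_ids <- paste0("patient_", 1:12)

# Simulated stand-ins for pre-processed blocks (assumption)
make_block <- function(p, prefix) {
  matrix(rnorm(length(sample_ids) * p), nrow = length(sample_ids),
         dimnames = list(sample_ids, paste0(prefix, "_", seq_len(p))))
}

# Named list of numeric matrices: rows = samples, columns = features
X <- list(mRNA       = make_block(20, "gene"),
          protein    = make_block(10, "prot"),
          metabolite = make_block(8, "met"))

# Class vector as a factor, named with the same sample IDs
Y <- factor(rep(c("control", "disease"), each = 6))
names(Y) <- sample_ids

# Sanity checks before modelling
stopifnot(
  all(sapply(X, is.numeric)),                                # numeric blocks
  !any(sapply(X, anyNA)),                                    # no missing values
  all(sapply(X, function(M) identical(rownames(M), names(Y)))),  # aligned rows
  all(sapply(X, function(M) !anyDuplicated(colnames(M)))),   # unique feature IDs
  nlevels(Y) >= 2                                            # at least two classes
)

str(X, max.level = 1)
```

The resulting `X` and `Y` are in the shape expected by `block.splsda(X, Y, ...)`.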

Visualization of Framework and Workflow

(Diagram: DIABLO Multi-Omics Analysis Workflow from Data to Results)

(Diagram: DIABLO's Supervised Integration Model Logic)

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Multi-Omics Biomarker Discovery

| Category | Item / Solution | Function in Workflow | Example / Note |
|---|---|---|---|
| Sample Prep | PAXgene Blood RNA Tube | Stabilizes intracellular RNA in whole blood for transcriptomic studies from the same draw as plasma. | Enables matched transcriptomic & proteomic/metabolomic analysis. |
| Sample Prep | Protease & Phosphatase Inhibitor Cocktails | Preserves the proteome and phosphoproteome state during tissue homogenization or plasma preparation. | Critical for unbiased protein and phosphorylation site quantification. |
| Sample Prep | Stable Isotope-Labeled Internal Standards | Enables accurate absolute quantification of metabolites or proteins via mass spectrometry. | Corrects for ionization efficiency variations in LC-MS platforms. |
| Data Gen. | TruSeq Stranded mRNA Kit | Library preparation for RNA-Seq; generates strand-specific transcriptome data. | Common input for transcriptomic block. |
| Data Gen. | Olink Explore Proximity Extension Assay (PEA) Panels | High-throughput, high-sensitivity multiplex immunoassay for protein biomarker discovery. | Provides proteomic block data from minimal sample volume. |
| Data Gen. | Metabolon Discovery HD4 Platform | Global untargeted metabolomics profiling for broad metabolite identification. | Provides extensive metabolomic block data. |
| Bioinformatics | mixOmics R/Bioconductor Package | The primary software toolkit performing DIABLO and related integrative analyses. | Version >6.18.1 is recommended. |
| Bioinformatics | NIH Metabolomics Workbench / PRIDE / GEO | Public repositories to obtain complementary datasets for method comparison or validation. | Used for benchmarking or independent validation cohorts. |

In the application of multi-omics integration for biomarker discovery, frameworks like DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) are pivotal. This protocol clarifies the essential terminology—Components, Loadings, Design Matrix, and Explained Variance—within the experimental workflow of DIABLO. A precise understanding of these terms is critical for designing robust multi-omics studies, interpreting latent biological drivers, and validating candidate biomarkers across platforms (e.g., transcriptomics, proteomics, metabolomics).

Core Terminology: Definitions & Quantitative Benchmarks

Table 1: Core Terminology in DIABLO-Based Integration

| Term | Definition | Role in DIABLO | Typical Quantitative Range/Consideration |
|---|---|---|---|
| Component | A latent variable constructed as a weighted sum of variables from one or more omics datasets, capturing co-variation. | Represents a multi-omics biomarker signature or biological axis. DIABLO extracts components to maximize covariance between omics types. | Number of components (K) is user-defined; often selected via cross-validation (Typical K: 1-5). |
| Loadings | Weights assigned to each original variable (e.g., gene, protein) in the construction of a component. Indicates the variable's contribution. | Identifies which features drive the multi-omics correlation and are candidate biomarkers. Sparse loadings are often enforced for feature selection. | Absolute loading magnitude indicates importance. Loadings ~0 indicate irrelevant features. |
| Design Matrix | A symmetric matrix specifying the a priori connections between different omics datasets that the model should strengthen. | Guides DIABLO to focus integration on linked datasets. A higher design value (e.g., 0.5-1) forces stronger integration between two blocks. | Values range [0,1]. 0 = no connection forced; 1 = full connection (maximal integration). |
| Explained Variance | The proportion of variance in an individual omics dataset accounted for by the extracted components. | Assesses how well the multi-omics components explain each data block. High variance suggests the captured signal is strong in that omics layer. | Reported per dataset, per component. In biomarker studies, a balance across omics types is often sought. |
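The relationship between loadings, components, and explained variance in Table 1 can be illustrated with a few lines of base R. The block and the sparse loading vector are made up, and the variance ratio at the end is a simple projection-based definition assumed for this sketch; it is not necessarily the exact computation used inside mixOmics.

```r
set.seed(2)
n <- 10
p <- 6

# Hypothetical autoscaled block: n samples x p features
X_block <- scale(matrix(rnorm(n * p), nrow = n,
                        dimnames = list(NULL, paste0("feat", 1:p))))

# Hypothetical sparse, unit-norm loading vector: only feat1 and feat4
# have non-zero weights, so only they are "selected"
loadings <- c(feat1 = 0.8, feat2 = 0, feat3 = 0,
              feat4 = -0.6, feat5 = 0, feat6 = 0)

# Component score of each sample = weighted sum of its features
component <- as.vector(X_block %*% loadings)

# Selected features are those with non-zero loadings
selected <- names(loadings)[loadings != 0]

# Share of the block's total variance captured by this component
explained <- var(component) / sum(apply(X_block, 2, var))

print(selected)
print(round(explained, 3))
```

Because the zero loadings remove four of the six features from the weighted sum, the component depends on `feat1` and `feat4` alone, which is how sparsity turns a latent variable into a short candidate biomarker list.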

Experimental Protocol: Implementing DIABLO for Biomarker Discovery

Protocol 3.1: Pre-Analysis Data Preparation

- Data Collection: Assemble matched multi-omics data (e.g., mRNA-seq, LC-MS proteomics, NMR metabolomics) from the same biological samples (n). Ensure consistent sample IDs.

- Pre-processing & Scaling: Perform platform-specific normalization, log-transformation where appropriate, and handle missing values. Autoscale each variable (mean-center, divide by SD) to give equal weight.

- Define Data Blocks (X): Format data into a list of matrices, `X = {X_mRNA, X_protein, X_metab}`, where each matrix has dimensions (n x p_k), with p_k the number of features in block k.

- Define Outcome (Y): Specify the categorical outcome vector (e.g., Disease vs. Control) of length n.

Protocol 3.2: Configuration & Model Tuning

- Set Design Matrix (C): Based on biological hypothesis. For full integration of all omics: C_{ij} = 1 for all i ≠ j. To model known specific relationships, adjust values accordingly (e.g., mRNA-Protein link = 0.9, others = 0.5).

- Tune Parameters: Perform repeated k-fold cross-validation (e.g., 5-fold, 10 repeats) to determine:

  - Number of Components (ncomp): Evaluate model performance (balanced error rate) across potential components.

  - Number of Features to Select (keepX): Use `tune.block.splsda` to test different sparsity levels per component and data block, optimizing classification accuracy.
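The configuration described in this protocol can be written down in base R before any model is run. The block names and candidate values below are assumptions for illustration; the zero diagonal follows the convention used in mixOmics examples.

```r
blocks <- c("mRNA", "protein", "metabolite")

# Full design: every pair of blocks connected with value 1
design_full <- matrix(1, nrow = 3, ncol = 3, dimnames = list(blocks, blocks))
diag(design_full) <- 0

# Hypothesis-driven design: strong mRNA-protein link, weaker other links
design_custom <- matrix(0.5, nrow = 3, ncol = 3,
                        dimnames = list(blocks, blocks))
design_custom["mRNA", "protein"] <- 0.9
design_custom["protein", "mRNA"] <- 0.9
diag(design_custom) <- 0

# A design matrix must be symmetric
stopifnot(isSymmetric(design_custom))

# Candidate sparsity levels per block for the tuning grid
test.keepX <- list(mRNA       = c(5, 10, 20),
                   protein    = c(5, 10, 20),
                   metabolite = c(5, 10))

# Number of keepX combinations evaluated per component
n_combinations <- prod(lengths(test.keepX))

print(design_custom)
print(n_combinations)
```

The grid size multiplies across blocks, so with repeated cross-validation the run time grows quickly; this is where the HPC resources listed in the toolkit become relevant.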

Protocol 3.3: Model Fitting & Evaluation

- Run DIABLO: Fit the final `block.splsda` model using the tuned `ncomp` and `keepX` parameters and the specified design matrix.

- Assess Model Performance: Generate a confusion matrix and calculate the balanced error rate. Plot the sample clusters on the first two components.

- Extract Key Results:

  - Components: Retrieve the latent variable scores for each sample (`$variates`).

  - Loadings: Examine `$loadings` to identify the selected features (non-zero weights) driving each component.

  - Explained Variance: Calculate using the `explained_variance` function on the fitted model for each data block and component.

Visualization of DIABLO Workflow & Terminology Relationships

(Diagram: DIABLO Workflow from Input to Biomarkers)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for DIABLO-Based Biomarker Discovery

| Item | Function/Description | Example/Provider |

|---|---|---|

| R Statistical Environment | Open-source platform for statistical computing and graphics. Essential for running DIABLO. | R Project (www.r-project.org) |

| mixOmics R Package | Comprehensive R toolkit containing the DIABLO (block.splsda) function and all necessary plotting and tuning utilities. | CRAN/Bioconductor |

| Multi-omics Data | Matched, pre-processed datasets from at least two omics technologies. The fundamental input. | In-house or public repositories (GEO, PRIDE, Metabolights). |

| High-Performance Computing (HPC) Resources | For computationally intensive cross-validation tuning steps, especially with large feature sets. | Local clusters or cloud services (AWS, GCP). |

| Sample Metadata Manager | Software/tool to meticulously maintain and curate sample matching across omics assays. | REDCap, OpenSpecimen, or custom SQL databases. |

| Visualization Toolkit | Libraries for creating publication-quality plots from DIABLO results (e.g., correlation circle, sample plots). | ggplot2, plotly in R. |

Step-by-Step DIABLO Pipeline: From Raw Omics Data to Actionable Biomarker Panels

This protocol details the essential steps for preparing multi-omics datasets for analysis with the DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) framework. Proper experimental design and preprocessing are critical for the success of integrative biomarker discovery studies, forming the foundation for robust, biologically interpretable results within a broader thesis on DIABLO's application in translational research.

Foundational Experimental Design Principles

The experimental design must precede data collection to ensure compatibility with DIABLO's requirement for matched samples across omics layers.

Key Design Considerations

- Sample Matching: DIABLO requires a one-to-one correspondence of samples (e.g., the same patient's tissue) across all measured omics datasets (e.g., transcriptomics, proteomics, metabolomics).

- Cohort Sizing: Adequate sample size is paramount for generalizability. Power calculations should be performed for the primary outcome.

- Batch Effects: The design must systematically account for and randomize technical factors (processing date, reagent lot, operator) to prevent confounding.

Table 1: Recommended Minimum Sample Sizes for DIABLO Studies

| Study Type | Discovery Cohort (Min) | Validation Cohort (Min) | Rationale |

|---|---|---|---|

| Pilot/Exploratory | 20-30 per group | N/A | Initial feature selection and model tuning. |

| Biomarker Discovery | 50-100 per group | 30-50 per group | Robust model training and independent testing. |

| Clinical Validation | 100+ per group | 100+ per group | High-confidence biomarker signature for deployment. |

Sample Collection & Storage Protocol

Aim: To collect and preserve biospecimens for multi-omics analysis while maintaining molecular integrity.

Protocol:

- Consent & Annotate: Obtain informed consent. Record detailed clinical phenotype and sample metadata.

- Collect Matched Samples: For a given subject, collect biospecimens (e.g., blood, tissue) destined for each omics platform simultaneously.

- Immediate Processing: Process samples according to platform-specific SOPs (e.g., PAXgene tubes for RNA, serum separation for metabolomics) within a strict, pre-defined window (e.g., ≤30 minutes for plasma).

- Consistent Storage: Aliquot and flash-freeze samples in liquid nitrogen. Store long-term at -80°C or in liquid nitrogen vapor phase. Avoid freeze-thaw cycles.

- Randomized Batch Processing: Assign samples from different experimental groups across different processing batches to minimize technical bias.

Data Preprocessing Pipeline

Raw data from each omics platform must be independently processed and normalized before integration.

Table 2: Standard Preprocessing Steps by Omics Type

| Omics Layer | Raw Data | Key Preprocessing Steps | Common Normalization | Output for DIABLO |

|---|---|---|---|---|

| Transcriptomics (RNA-seq) | FASTQ files | QC (FastQC), Trimming, Alignment (STAR), Quantification (featureCounts). | TMM (edgeR) or Variance Stabilizing Transform (DESeq2). | Log2(CPM or normalized counts) matrix. |

| Proteomics (LC-MS/MS) | .raw spectra files | Database search (MaxQuant, Proteome Discoverer), Peptide/Protein inference. | Median centering, log2 transformation, quantile normalization or vsn. | Log2(intensity) matrix. |

| Metabolomics (LC-MS) | .raw spectra files | Peak picking, alignment, annotation (XCMS, Progenesis QI). | Sample-specific normalization (e.g., PQN), log2 transformation, pareto scaling. | Log2(intensity) matrix. |

| Microbiome (16S rRNA) | FASTQ files | Denoising (DADA2), Chimera removal, Taxonomic assignment (SILVA). | Rarefaction or CSS normalization (metagenomeSeq). | Relative abundance or CSS-normalized matrix. |

Protocol: Creating a DIABLO-Ready Data List

Aim: To structure normalized, matched omics datasets into the list object required by the mixOmics R package.

Protocol:

- Feature Filtering: Remove low-abundance features. Retain features present in >X% of samples (e.g., >50%) or with sufficient variance (top Y% by variance).

- Missing Value Imputation: Use platform-appropriate methods (e.g., k-NN for metabolomics, minimal imputation for proteomics).

- Data Scaling: Center each feature (column) to mean = 0. Scale features to unit variance if comparable magnitude across features is desired.

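- Format Matrices: Ensure each omics data matrix is oriented as samples x features (samples in rows) and that all matrices share an identical sample order. Sample IDs must match exactly across matrices.

- Construct List: Combine the matrices into a single named list, one element per omics block, and keep the outcome factor in the same sample order.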
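The list construction can be sketched in base R as follows. The matrices here are synthetic stand-ins for normalized data, and the block names, sample IDs, and `pheno` table are illustrative assumptions.

```r
set.seed(1)
n   <- 12
ids <- sprintf("S%02d", 1:n)

# Synthetic stand-ins for normalized matrices (samples x features).
# The protein block is deliberately stored in a different row order.
mrna  <- matrix(rnorm(n * 50), nrow = n,
                dimnames = list(ids, paste0("gene", 1:50)))
prot  <- matrix(rnorm(n * 20), nrow = n,
                dimnames = list(sample(ids), paste0("prot", 1:20)))
metab <- matrix(rnorm(n * 15), nrow = n,
                dimnames = list(ids, paste0("met", 1:15)))

# Keep only samples present in every block, in one common order
common <- Reduce(intersect,
                 list(rownames(mrna), rownames(prot), rownames(metab)))

X <- lapply(list(mRNA = mrna, protein = prot, metab = metab),
            function(m) m[common, , drop = FALSE])

# Outcome factor aligned to the same sample order
pheno <- data.frame(id = ids,
                    group = rep(c("Control", "Disease"), each = n / 2),
                    stringsAsFactors = FALSE)
Y <- factor(pheno$group[match(common, pheno$id)])

# Fail early if any block is out of register with the others
stopifnot(all(sapply(X, function(m) identical(rownames(m), common))),
          length(Y) == length(common))
```

Indexing every block by the shared ID vector, rather than assuming the files arrive in the same order, guards against silent sample mismatches.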

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in DIABLO-Ready Study |

|---|---|

| PAXgene Blood RNA Tubes | Stabilizes intracellular RNA in whole blood at collection, preserving transcriptomic profiles. |

| Streck Cell-Free DNA BCT Tubes | Preserves blood samples for cell-free DNA/RNA analysis, preventing genomic DNA contamination. |

| Mass Spectrometry Grade Solvents (Acetonitrile, Methanol) | Essential for reproducible protein/metabolite extraction and LC-MS/MS analysis. |

| Stable Isotope Labeled Internal Standards | Enables accurate quantification in mass spectrometry-based proteomics and metabolomics. |

| Nucleic Acid Stabilization Reagents (e.g., RNAlater) | Preserves RNA/DNA integrity in solid tissues during collection and transport. |

| Multiplex Assay Kits (Luminex, Olink, SomaScan) | Allows high-throughput, simultaneous quantification of dozens to thousands of proteins from minimal sample volume. |

| DNA/RNA/Protein Normalization Assay Kits (e.g., Qubit) | Provides accurate concentration measurements for downstream library preparation or analysis. |

Workflow & Pathway Visualizations

Title: DIABLO-Ready Dataset Preparation Workflow

Title: DIABLO Integrates Omics Layers for Biomarker Discovery

Introduction

This Application Note details a critical phase in the DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) framework for multi-omics biomarker discovery. The performance and biological interpretability of a DIABLO model are heavily dependent on two hyperparameters: the number of components (ncomp) and the design matrix, which specifies the inter-omics connections. This protocol provides a systematic, data-driven approach for tuning these parameters within a thesis focused on identifying robust multi-omics biomarker panels for complex diseases.

1. The Hyperparameter Tuning Workflow

A principled, iterative approach is essential for robust model building.

Table 1: Key Hyperparameters and Their Role

| Hyperparameter | Definition | Purpose in DIABLO |
|---|---|---|
| Number of Components (ncomp) | The number of latent vectors to extract from each dataset. | Captures successive, orthogonal levels of covariation across omics datasets. |
| Design Matrix (design) | A symmetric matrix (values 0-1) defining the assumed network between omics datasets. | Controls the strength of integration; higher values enforce tighter integration between specific omics. |
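To make the design matrix concrete, here is a small base R sketch. The helper `make_design` and the block names are hypothetical; the zero diagonal reflects the assumption that a block is never linked to itself.

```r
# Hypothetical helper: symmetric between-block design matrix
make_design <- function(blocks, off_diag = 1) {
  C <- matrix(off_diag, nrow = length(blocks), ncol = length(blocks),
              dimnames = list(blocks, blocks))
  diag(C) <- 0  # no self-links
  C
}

blocks <- c("mRNA", "protein", "metab")  # illustrative block names

# Full integration: every pair of blocks linked with weight 1
design_full <- make_design(blocks, off_diag = 1)

# Hypothesis-driven: strong mRNA-protein link, moderate links elsewhere
design_custom <- make_design(blocks, off_diag = 0.5)
design_custom["mRNA", "protein"] <- 0.9
design_custom["protein", "mRNA"] <- 0.9  # keep the matrix symmetric

print(design_custom)
```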

Diagram 1: DIABLO hyperparameter tuning workflow.

2. Protocol: Tuning the Design Matrix

The design matrix is tuned first, as it defines the integration topology.

2.1. Experimental Setup

- Objective: Identify the design value that maximizes discrimination while avoiding overfitting.

- Method: Repeated k-fold Cross-Validation (e.g., 5-fold x 50 repeats).

- Metric: Balanced Error Rate (BER). Lower BER indicates better classification performance.

2.2. Procedure

- Define a grid of design values to test (e.g., `c(0.1, 0.2, ..., 0.9)`).

- For each design value in the grid:

  a. Run repeated k-fold CV (e.g., `perf` on a model fitted with that design, or `tune.block.splsda` if keepX is tuned in the same run).

  b. Use a temporarily fixed, generous `ncomp` (e.g., 3-5).

  c. Record the mean BER across all repeats for each design value.

- Plot mean BER against design values.

- Selection Criterion: Choose the design value with the lowest mean BER, often found between 0.5 and 0.9. A value too low (e.g., 0.1) under-integrates; a value of 1 gives every between-block link maximal weight, which can trade discrimination for correlation and may be biologically unrealistic.
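The grid search above might look like the following sketch, which uses `perf` on the `breast.TCGA` example data bundled with mixOmics. The coarse three-value grid, the fixed `keepX` of 10 features, the seed, and the reduced number of repeats are assumptions chosen only to keep the run short.

```r
library(mixOmics)

data(breast.TCGA)
X <- list(mRNA    = breast.TCGA$data.train$mrna,
          miRNA   = breast.TCGA$data.train$mirna,
          protein = breast.TCGA$data.train$protein)
Y <- breast.TCGA$data.train$subtype

design_grid <- c(0.1, 0.5, 0.9)  # coarse illustrative grid
ncomp_fixed <- 2                 # temporarily fixed number of components
keepX_fixed <- list(mRNA    = rep(10, ncomp_fixed),
                    miRNA   = rep(10, ncomp_fixed),
                    protein = rep(10, ncomp_fixed))

set.seed(7)
ber_by_design <- sapply(design_grid, function(d) {
  # Same off-diagonal value for every block pair, zero diagonal
  design <- matrix(d, nrow = length(X), ncol = length(X),
                   dimnames = list(names(X), names(X)))
  diag(design) <- 0

  fit <- block.splsda(X, Y, ncomp = ncomp_fixed,
                      keepX = keepX_fixed, design = design)
  cv  <- perf(fit, validation = "Mfold", folds = 5, nrepeat = 5,
              dist = "centroids.dist")

  # Mean BER (weighted vote across blocks) using all fixed components
  cv$WeightedVote.error.rate$centroids.dist["Overall.BER", ncomp_fixed]
})
names(ber_by_design) <- design_grid

ber_by_design
best_design <- design_grid[which.min(ber_by_design)]
```

Differences in BER between neighbouring design values are often within cross-validation noise, so more repeats are advisable before committing to a value.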

Table 2: Example Cross-Validation Results for Design Tuning

| Design Value | Mean BER (SE) | Feature Correlation* |

|---|---|---|

| 0.1 | 0.35 (0.04) | Very Weak |

| 0.3 | 0.28 (0.03) | Weak |

| 0.5 | 0.22 (0.02) | Moderate |

| 0.7 | 0.20 (0.02) | Strong |

| 0.9 | 0.21 (0.03) | Very Strong |

| 1.0 | 0.23 (0.03) | Complete |

*Estimated strength of correlation enforced between selected features across omics.

3. Protocol: Selecting the Optimal Number of Components (ncomp)

With the tuned design matrix fixed, determine the optimal ncomp.

3.1. Performance-Based Selection

- Run a final CV on the model with the tuned design, now varying `ncomp`.

- Extract the BER trend across components.

- Selection Criterion: Choose the `ncomp` where the BER curve reaches a plateau or its minimum. Adding more components beyond this point yields negligible performance gain.

3.2. Correlation-Based Validation

- For each component, examine the correlation between the latent variables (`variates`) of each omics pair.

- Plot these correlations per component.

- Selection Criterion: The chosen `ncomp` should have consistently high correlations (e.g., >0.7) across most dataset pairs for all retained components, confirming successful integration.
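The correlation check can be sketched in base R. The helper `variate_cor` is hypothetical, and the scores below are simulated so that only the first component carries a shared signal; with a fitted model, the block elements of `$variates` would be passed in instead.

```r
# Hypothetical helper: correlation of block scores for every block pair,
# computed separately for each component
variate_cor <- function(variates) {
  pairs <- combn(names(variates), 2)
  ncomp <- ncol(variates[[1]])
  out <- sapply(seq_len(ncol(pairs)), function(k) {
    a <- variates[[pairs[1, k]]]
    b <- variates[[pairs[2, k]]]
    sapply(seq_len(ncomp), function(h) cor(a[, h], b[, h]))
  })
  matrix(out, nrow = ncomp,
         dimnames = list(paste0("comp", seq_len(ncomp)),
                         paste(pairs[1, ], pairs[2, ], sep = "~")))
}

# Simulated scores: component 1 shares a latent signal, component 2 is noise
set.seed(3)
n  <- 40
z1 <- rnorm(n)
variates <- list(
  mRNA    = cbind(z1 + rnorm(n, sd = 0.3), rnorm(n)),
  protein = cbind(z1 + rnorm(n, sd = 0.3), rnorm(n)),
  metab   = cbind(z1 + rnorm(n, sd = 0.3), rnorm(n))
)

cors <- variate_cor(variates)
round(cors, 2)

# Components whose scores correlate above 0.7 for every block pair
well_integrated <- apply(abs(cors) > 0.7, 1, all)
well_integrated
```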

Diagram 2: Criteria for selecting the final ncomp value.

4. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools

| Item | Function in DIABLO Hyperparameter Tuning |

|---|---|

| R Statistical Environment (v4.3+) | The core software platform for all analyses. |

| mixOmics Package (v6.24+) | Provides the block.splsda, tune.block.splsda, and all DIABLO functions. |

| High-Performance Computing (HPC) Cluster | Essential for running extensive repeated cross-validations in a feasible time. |

| Pre-processed Multi-omics Datasets | Normalized, scaled, and filtered data matrices (e.g., transcriptomics, proteomics, metabolomics) with matched samples. |

| Phenotypic Classification Vector | A factor vector defining the sample groups (e.g., Disease vs. Control). |

| Integrated Development Environment (RStudio/Posit) | Facilitates code development, visualization, and documentation. |

| Visualization Libraries (ggplot2, pheatmap) | For generating BER plots, correlation heatmaps, and component plots. |

This protocol details the implementation of the block.splsda function, a core component of the Data Integration Analysis for Biomarker discovery using Latent cOmponents (DIABLO) framework. Within the broader thesis on DIABLO for multi-omics biomarker discovery, block.splsda is the engine for supervised classification and feature selection across multiple, heterogeneous data blocks (e.g., transcriptomics, proteomics, metabolomics). It extends the sPLS-DA (sparse Partial Least Squares Discriminant Analysis) method to an integrative setting, identifying a multi-omics signature that maximally discriminates between sample classes while capturing the covariation between different data types.

Core Quantitative Parameters & Tuning

The performance of block.splsda is contingent on the selection of key tuning parameters. These parameters are typically optimized via repeated cross-validation.

Table 1: Core Tuning Parameters for block.splsda

| Parameter | Description | Typical Range / Options | Impact on Model |
|---|---|---|---|
| ncomp | Number of components (latent vectors) to extract. | 1-10 | Defines model complexity; more components may capture more variance but risk overfitting. |
| keepX (per block) | Number of features to select per component per data block. | List of vectors (e.g., list(omics1=c(50,25), omics2=c(30,15))) | Controls sparsity and the size of the multi-omics signature. Critical for feature selection. |
| design | Between-block connection matrix. | Symmetric matrix with off-diagonal values between 0 and 1 (full: 1, null: 0) and a zero diagonal; user-defined, e.g., informed by observed between-block correlations. | Governs the strength of integration. Higher values force the model to seek stronger block correlations, potentially at the cost of discrimination. |
| scheme | Function to combine block variates. | "horst", "centroid", "factorial" | Sets the function applied to the between-block covariances in the maximization criterion ("horst" uses the identity, i.e., a plain sum). |

Table 2: Key Performance Metrics from Cross-Validation

| Metric | Formula / Description | Interpretation for Biomarker Discovery |

|---|---|---|

| Balanced Error Rate (BER) | Unweighted mean of the per-class misclassification rates, so each class counts equally regardless of its size. | Primary metric for imbalanced class sizes. Lower BER indicates better predictive accuracy. |

| Overall Error Rate | Total misclassifications / total samples. | General measure of classifier performance. |

| Stability of Selected Features | Frequency of feature selection across CV folds. | High stability indicates a robust biomarker candidate. |
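The difference between the two error metrics is easiest to see on a small imbalanced example. The base R helper `error_rates` below is a hypothetical illustration, with BER computed as the mean of the per-class error rates.

```r
# Hypothetical helper: overall error rate and balanced error rate (BER)
error_rates <- function(truth, predicted) {
  truth     <- factor(truth)
  predicted <- factor(predicted, levels = levels(truth))
  cm <- table(truth = truth, predicted = predicted)

  per_class_error <- 1 - diag(cm) / rowSums(cm)
  list(confusion = cm,
       overall   = 1 - sum(diag(cm)) / sum(cm),
       ber       = mean(per_class_error))
}

# Imbalanced toy example: 8 controls, 2 cases, one case misclassified
truth     <- c(rep("Control", 8), rep("Disease", 2))
predicted <- c(rep("Control", 8), "Control", "Disease")

res <- error_rates(truth, predicted)
res$confusion
res$overall  # 1 of 10 samples wrong: 0.1
res$ber      # mean of 0 (Control) and 0.5 (Disease): 0.25
```

The overall error rate looks reassuring here because the majority class dominates, while the BER exposes that half of the minority class is misclassified.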

Detailed Experimental Protocol

Protocol: Supervised Multi-Omics Classification with block.splsda

I. Preprocessing & Data Preparation

- Data Input: Organize each omics dataset into a matrix (`X1`, `X2`, ...) with matching rows (samples, N) and variable columns (features, P_omic).

- Outcome Variable: Create a categorical factor vector `Y` (length N) indicating the class membership (e.g., Disease vs. Control).

- Normalization & Scaling: Perform omics-specific normalization (e.g., TPM for RNA-Seq, sum-normalization for metabolomics). Scale each feature to zero mean and unit variance within each block (`scale = TRUE`).

- Train-Test Split: Split data into independent training (e.g., 70%) and hold-out test (e.g., 30%) sets, preserving class proportions (stratification).
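A stratified split can be sketched in base R as below. The helper `stratified_split`, the class sizes, and the seed are illustrative assumptions; the same row indices must be applied to every omics block so that samples stay matched.

```r
# Hypothetical helper: stratified train/test split on an outcome factor
stratified_split <- function(Y, train_frac = 0.7) {
  idx <- seq_along(Y)
  train <- unlist(lapply(split(idx, Y), function(i) {
    # Draw the training share within each class separately
    i[sample.int(length(i), size = round(train_frac * length(i)))]
  }))
  train <- sort(unname(train))
  list(train = train, test = setdiff(idx, train))
}

set.seed(10)
Y <- factor(rep(c("Control", "Disease"), times = c(30, 20)))

parts <- stratified_split(Y, train_frac = 0.7)

table(Y[parts$train])  # 21 Control, 14 Disease
table(Y[parts$test])   # 9 Control, 6 Disease

# With a list of blocks X, the same indices subset every block, e.g.:
# X_train <- lapply(X, function(m) m[parts$train, , drop = FALSE])
# X_test  <- lapply(X, function(m) m[parts$test,  , drop = FALSE])
```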

II. Model Tuning via Repeated k-fold Cross-Validation

- Define Parameter Grid: Create a grid of candidate `keepX` values for the first component (e.g., `list(omics1=seq(10,100,10), omics2=seq(5,50,5))`). Start with `ncomp=1` and `design=0`.

- Run `tune.block.splsda`: Use the training set only.

- Identify Optimal `keepX`: Extract the parameters yielding the minimum BER (`cv.tune$choice.keepX`).

- Iterate for Higher Components: Keeping the optimal `keepX` for comp1 fixed (the `already.tested.X` argument exists for this purpose), repeat the tuning run to tune `keepX` for comp2. Continue until adding a component no longer improves performance.
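As a sketch of the tuning run, the following uses the `breast.TCGA` example data bundled with mixOmics. The small `test.keepX` grid, the design value of 0.1, and the single CV repeat are assumptions made to keep the example quick; a real study would use a wider grid and more repeats.

```r
library(mixOmics)

data(breast.TCGA)
X <- list(mRNA    = breast.TCGA$data.train$mrna,
          miRNA   = breast.TCGA$data.train$mirna,
          protein = breast.TCGA$data.train$protein)
Y <- breast.TCGA$data.train$subtype

design <- matrix(0.1, nrow = length(X), ncol = length(X),
                 dimnames = list(names(X), names(X)))
diag(design) <- 0

# Candidate numbers of features per block (every combination is tested)
test.keepX <- list(mRNA    = c(5, 10, 20),
                   miRNA   = c(5, 10, 20),
                   protein = c(5, 10, 20))

set.seed(99)
cv.tune <- tune.block.splsda(X, Y, ncomp = 2,
                             test.keepX = test.keepX,
                             design = design,
                             validation = "Mfold", folds = 5, nrepeat = 1,
                             dist = "centroids.dist")

# Selected number of features per block, one value per component
cv.tune$choice.keepX
```

With `ncomp = 2`, a single call tunes the components one after the other, which is an alternative to iterating by hand. The grid grows multiplicatively with the number of blocks, which is where the compute cost comes from.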

III. Final Model Training

- Train Model: Using all optimal parameters from tuning, train the final `block.splsda` model on the entire training set.

IV. Evaluation & Discovery

- Prediction on Test Set: Apply `predict()` to the final model, passing the held-out test blocks as `newdata`, to obtain a predicted class for each test sample.

- Calculate Test Set Error: Construct a confusion matrix to calculate BER and overall error rate.

- Biomarker Discovery: Extract the selected features from each block and component (e.g., `selectVar(final.model, block = 'omics1', comp = 1)$omics1$name`). These form the multi-omics biomarker signature.

- Pathway Enrichment: Input selected gene/protein IDs into tools like g:Profiler or MetaboAnalyst for functional interpretation.
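The evaluation steps can be sketched with the train/test split that ships with the `breast.TCGA` example data in mixOmics. Only the two blocks available in both sets are used; the `keepX` values, `ncomp`, and design value are placeholders rather than tuned settings.

```r
library(mixOmics)

data(breast.TCGA)

# Blocks measured in both the training and the test set
X_train <- list(mRNA  = breast.TCGA$data.train$mrna,
                miRNA = breast.TCGA$data.train$mirna)
Y_train <- breast.TCGA$data.train$subtype
X_test  <- list(mRNA  = breast.TCGA$data.test$mrna,
                miRNA = breast.TCGA$data.test$mirna)
Y_test  <- breast.TCGA$data.test$subtype

design <- matrix(0.1, nrow = 2, ncol = 2,
                 dimnames = list(names(X_train), names(X_train)))
diag(design) <- 0

# Placeholder parameters standing in for tuned values
final.model <- block.splsda(X_train, Y_train, ncomp = 2,
                            keepX = list(mRNA = c(10, 10), miRNA = c(10, 10)),
                            design = design)

# Predict the held-out samples; weighted vote across blocks, 2 components
pred       <- predict(final.model, newdata = X_test)
pred_class <- pred$WeightedVote$centroids.dist[, 2]

confusion <- get.confusion_matrix(truth = Y_test, predicted = pred_class)
confusion
test_ber <- get.BER(confusion)
test_ber

# Selected mRNA features on component 1
signature_mrna <- selectVar(final.model, block = "mRNA", comp = 1)$mRNA$name
signature_mrna
```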

Visual Workflows and Pathways

Diagram Title: DIABLO Workflow: From Data to Biomarkers

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item | Function in block.splsda Protocol | Example/Note |
|---|---|---|
| R Statistical Environment | Core platform for analysis. | Version ≥ 4.1.0. |
| mixOmics R Package | Contains the block.splsda() and tune.block.splsda() functions. | Core dependency (≥ 6.20.0). |

| High-Performance Computing (HPC) Cluster | For computationally intensive repeated CV tuning with large omics datasets. | Essential for large-scale studies. |

| Normalization Software | For block-specific data preprocessing (e.g., DESeq2 for RNA-seq, MSnbase for proteomics). | Critical for data quality. |

| Functional Enrichment Tools | For interpreting selected biomarker signatures (e.g., g:Profiler, MetaboAnalyst, Enrichr). | Links results to biology. |

| Sample Metadata Database | Curated table linking sample IDs to class labels (Y) and covariates. | Ensures correct supervised analysis. |

| Version Control System (Git) | To track all analysis code and parameter changes. | Ensures reproducibility. |

| Containerization (Docker/Singularity) | Packages the exact software environment for portability and reproducibility. | Mitigates "works on my machine" issues. |

Within a thesis on Data Integration Analysis for Biomarker discovery using Latent cOmponents (DIABLO), the interpretation of key graphical outputs is paramount. DIABLO is a multi-omics integration method designed to identify correlated biomarker signatures across multiple data types. This protocol details the systematic analysis of three core plot types (sample plots, variable loadings plots, and correlation circos plots) that are essential for validating and extracting biological insights from a DIABLO model.

Sample Plots: Assessing Clustering and Discrimination

Sample plots (e.g., scatter plots of sample scores on latent components) visualize the model's ability to discriminate between predefined groups (e.g., disease vs. control).

Table 1: Key Metrics for Interpreting Sample Plots

| Metric | Description | Ideal Outcome | Typical Threshold |

|---|---|---|---|

| Between-Group Variance | Proportion of variance explained by group separation on Component 1. | High value, indicating clear discrimination. | > 50% |

| Within-Group Variance | Variance of samples within the same group. | Low value, indicating tight clustering. | Minimized |

| Centroid Distance | Euclidean distance between group centroids on the plot. | Larger distance indicates stronger separation. | Context-dependent |

| Overlap Area | Geometrical overlap of confidence ellipses (e.g., 95% CI). | Minimal to no overlap. | None |

Protocol 2.1: Visual and Statistical Assessment of Sample Plots

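- Plot Generation: Using the `plotIndiv` function in the `mixOmics` R package, generate a sample plot for the first two components. (`plotDiablo` serves a different purpose: it shows how strongly the components from the different blocks correlate with one another.)

- Overlay Confidence Ellipses: Set `ellipse = TRUE` to add 95% confidence ellipses for each group.

- Calculate Explained Variance: Extract the proportion of variance explained by each component for each dataset from the model output (`$prop_expl_var`).

- Quantify Separation: Compute the Mahalanobis distance between group centroids using the `mahalanobis` function in R on the component scores. Note that this function returns the squared distance.

- Cross-validate: Confirm that the visual separation holds under cross-validation (e.g., `perf(model, validation = 'Mfold', folds = 5, nrepeat = 10)`), and redraw the plot with distinct symbols per group (e.g., `plotIndiv(..., legend = TRUE, pch = c(16, 17), style = 'graphics')`) to inspect borderline samples.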
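The separation measure can be sketched in base R for the two-group case. The helper `centroid_separation` is hypothetical, the scores are simulated rather than taken from a fitted model, and the pooled within-group covariance is one reasonable choice among several.

```r
# Hypothetical helper: Mahalanobis distance between two group centroids,
# using the pooled within-group covariance of the component scores
centroid_separation <- function(scores, groups) {
  groups <- factor(groups)
  stopifnot(nlevels(groups) == 2)

  g1 <- scores[groups == levels(groups)[1], , drop = FALSE]
  g2 <- scores[groups == levels(groups)[2], , drop = FALSE]

  pooled <- ((nrow(g1) - 1) * cov(g1) + (nrow(g2) - 1) * cov(g2)) /
    (nrow(g1) + nrow(g2) - 2)

  # mahalanobis() returns the squared distance, hence the square root
  sqrt(mahalanobis(colMeans(g1), center = colMeans(g2), cov = pooled))
}

# Simulated scores: groups differ by about 3 units on component 1 only
set.seed(5)
groups <- rep(c("Control", "Disease"), each = 25)
scores <- cbind(comp1 = rnorm(50) + ifelse(groups == "Disease", 3, 0),
                comp2 = rnorm(50))

d <- centroid_separation(scores, groups)
d
```

With a fitted model, `scores` could be one block's score matrix from `$variates`; the distance is expressed in units of within-group standard deviation, which makes it comparable across blocks.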

Variable Loadings Plots: Identifying Key Biomarkers

Loadings plots display the contribution (weight) of each variable (e.g., gene, protein) from each omics block to the latent component. Higher absolute loading indicates greater importance.

Table 2: Interpretation Guide for Variable Loadings

| Loading Value Range (absolute value) | Interpretation | Action for Biomarker Discovery |
|---|---|---|
| > 0.7 | Very high contribution. | Prioritize for downstream validation. |
| 0.5 to 0.7 | Strong contribution. | Include in candidate shortlist. |
| 0.3 to 0.5 | Moderate contribution. | Consider in context of correlation. |
| < 0.3 | Weak contribution. | Typically deprioritized. |

Protocol 2.2: Extracting and Ranking Biomarker Candidates from Loadings

- Extract Loadings: Use `plotLoadings(model, comp = 1, contrib = 'max')` to extract and visualize top contributors for a given component.

- Set Contribution Threshold: Filter variables with absolute loading values above a threshold (e.g., 0.5). The threshold should be justified based on a simulated null distribution or permutation testing.

- Assess Consistency: Check the stability of the selected variables across multiple components and via cross-validation (`perf(model, validation='Mfold', folds=5, nrepeat=10)`).

- Generate Ranked List: Create a consolidated table across all integrated omics datasets.

Table 3: Example Top Loadings from a Two-Omics DIABLO Model (Component 1)

| Dataset | Variable ID | Loading Value | Assigned Group | Correlation with Component |

|---|---|---|---|---|

| Transcriptomics | Gene_ABC | 0.89 | Disease | 0.95 |

| Transcriptomics | Gene_XYZ | -0.82 | Control | -0.91 |

| Proteomics | Protein_123 | 0.78 | Disease | 0.87 |

| Metabolomics | Meta_456 | -0.71 | Control | -0.82 |

Correlation Circos Plots: Visualizing Multi-Omic Networks

The circos plot provides a holistic view of the selected multi-omics biomarker signature, showing correlations between variables across different datasets.

Table 4: Elements of a DIABLO Correlation Circos Plot

| Plot Element | Represents | Interpretation |

|---|---|---|

| Outer Track Segments | Different omics data blocks (e.g., Transcriptome, Proteome). | The source of variables. |

| Inner Points/Bars on Track | Individual selected variables (biomarker candidates). | Their position is often ordered by loading value. |

| Ribbons/Chords Connecting Points | Correlation between variables from different omics blocks. | Thickness and color often encode strength and sign of correlation. |

| Block Color | Distinct color per omics block. | For easy visual differentiation. |

Protocol 2.3: Generating and Interpreting the Correlation Circos Plot

- Plot Creation: Use `circosPlot(model, cutoff = 0.7, line = TRUE, color.blocks = c('#EA4335', '#4285F4'), color.cor = c('#FBBC05', '#34A853'))`. The `cutoff` filters connections based on a correlation threshold.

- Identify High-Correlation Hubs: Look for variables with many thick connecting ribbons; these are potential key regulators in the multi-omics network.

- Validate Biological Plausibility: Export the adjacency list of correlations from the plot object and input these variable pairs into pathway analysis tools (e.g., STRING, MetaboAnalyst).

- Cross-Reference with Loadings: Ensure variables with high loadings are central in the circos network, reinforcing their importance.

Experimental Workflow for DIABLO Output Analysis

Diagram Title: DIABLO Output Analysis Workflow for Biomarkers

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Reagents and Tools for DIABLO-Based Biomarker Validation

| Item | Function/Description | Example Vendor/Catalog |

|---|---|---|

| R Statistical Software | Primary platform for running the mixOmics package and generating all plots. | The R Project |

| mixOmics R Package | Contains the DIABLO function and all plotting routines (plotIndiv, plotLoadings, circosPlot). | Bioconductor |

| High-Performance Computing (HPC) Access | Essential for running permutation tests, cross-validation, and large-scale integration. | Local institutional cluster or cloud services (AWS, GCP). |

| RT-qPCR Assays | For technical validation of top-ranked transcriptomic biomarker candidates. | Thermo Fisher Scientific (TaqMan), Bio-Rad. |

| ELISA Kits | For orthogonal validation of proteomic biomarker candidates from the loadings plot. | R&D Systems, Abcam. |

| Pathway Analysis Software | To interpret the circos plot network in a biological context (e.g., Ingenuity Pathway Analysis, MetaboAnalyst). | QIAGEN, open-source platforms. |

| Sample Cohort with Matched Multi-omics Data | Essential input. Requires high-quality, clinically annotated samples (tissue, blood) processed for multiple omics assays. | Internal biobank or public repositories (TCGA, GEO). |

Advanced Protocol: Integrated Output Interpretation Session

- Synchronized Viewing: Open the sample plot, loadings table, and circos plot simultaneously.

- Triangulate Findings: Identify a sample that is misclassified on the sample plot. In the circos plot, check if the biomarker connections for that sample's predicted group are weak or atypical.

- Check Loadings Consistency: For a variable with a high loading but few connections in the circos plot, investigate its within-omics variance or potential as a unique predictor.

- Generate a Final Report Table: Synthesize findings into a master table listing biomarker ID, omics type, loading value, key cross-omic correlations (from circos), and proposed biological role.

Within the broader thesis on the DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) framework, this protocol details the critical downstream steps following initial multi-omics integration. DIABLO identifies correlated multi-omics components, but extracting biologically interpretable, prioritized biomarker signatures requires rigorous downstream analysis. This document provides application notes and protocols for this essential phase.

Table 1: Common Prioritization Metrics for Multi-Omics Biomarkers

| Metric | Description | Typical Threshold | Application in DIABLO |
|---|---|---|---|
| Variable Importance in Projection (VIP) | Measures a feature's contribution to the DIABLO model. | >1.0 indicates high importance. | Rank features from each omics block. |
| Loading Value | Weight of each feature in the latent component. | Absolute value >0.5 often considered strong. | Identify drivers of component correlation. |
| Correlation to Component | Correlation between original feature and latent component. | High, absolute r >0.7. | Assess representativeness. |
| Between-Omics Correlation | Pairwise correlation of selected features across omics types. | High, absolute r >0.6. | Validate multi-omics signature coherence. |
| AUC (ROC Analysis) | Predictive performance in classification tasks. | >0.7 acceptable, >0.9 excellent. | Evaluate signature's diagnostic power. |
| p-value (Statistical Test) | Significance from univariate/bivariate analysis. | <0.05 after correction. | Filter for statistically significant features. |

Table 2: Suggested Workflow Parameters and Outputs

| Step | Parameter | Recommended Setting | Output Example |

|---|---|---|---|

| Signature Extraction | VIP Cutoff | 1.2 - 1.5 | Shortlist of 50-200 total features. |

| Functional Enrichment | FDR Correction (Gene Ontology) | Benjamini-Hochberg <0.05 | Top 10 enriched pathways per omics layer. |

| Network Integration | Confidence Score (STRING DB) | >0.7 (high confidence) | Integrated network with 30 nodes, 50 edges. |

| Prioritization Scoring | Weights: VIP, Correlation, Enrichment | 0.4, 0.3, 0.3 | Final ranked list of top 20 biomarker candidates. |

Experimental Protocols

Protocol 3.1: Extracting a Robust Signature from DIABLO Output

Objective: To filter and select a concise list of multi-omics features from the full DIABLO model.

Materials: R environment, mixOmics package, DIABLO model object (block.splsda).

Procedure:

- Determine Optimal Component Number: Use the `perf()` function with 5-fold cross-validation repeated 10 times to select the number of DIABLO components that minimizes overall classification error.

- Calculate VIP: Extract VIP scores for each feature on the first n components using `vip()`. mixOmics documents `vip()` for single-block (s)PLS models, so confirm that it accepts your model class; otherwise, compute VIP from a single-block sPLS-DA fit per omics layer.

- Apply Composite Threshold: Retain features meeting either criterion:

  - VIP >= 1.3 on any of the first n components, OR

  - Absolute loading value >= 0.6 on any of the first n components.

- Cross-Omics Correlation Filter: For each retained feature, check its correlation (Pearson |r| > 0.65) with at least one feature from another omics block that also passed the composite threshold. Discard uncorrelated features.

- Output: A data frame of selected features with their omics block, VIP, loadings, and cross-omics correlations.
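The two filters can be sketched in base R on simulated data. The feature names and the VIP and loading values in `metrics` are invented for illustration; in a real analysis they would be taken from the fitted model.

```r
set.seed(11)
n <- 30
shared <- rnorm(n)  # latent signal shared by one gene and one protein

# Simulated, already-normalized blocks (samples x features)
mrna <- cbind(g1 = shared + rnorm(n, sd = 0.2), g2 = rnorm(n), g3 = rnorm(n))
prot <- cbind(p1 = shared + rnorm(n, sd = 0.2), p2 = rnorm(n))

# Invented per-feature metrics standing in for model output
metrics <- data.frame(
  feature = c("g1", "g2", "g3", "p1", "p2"),
  block   = c("mRNA", "mRNA", "mRNA", "protein", "protein"),
  vip     = c(1.8, 1.4, 0.6, 1.1, 0.9),
  loading = c(0.72, 0.20, 0.10, -0.65, 0.30),
  stringsAsFactors = FALSE
)

# Composite threshold: keep a feature if EITHER criterion is met
kept <- metrics[metrics$vip >= 1.3 | abs(metrics$loading) >= 0.6, ]

# Cross-omics filter: strongest |r| with a kept feature from another block
data_all    <- cbind(mrna, prot)
r           <- abs(cor(data_all[, kept$feature]))
other_block <- outer(kept$block, kept$block, "!=")  # TRUE for cross-block pairs
kept$max_cross_cor <- apply(r * other_block, 1, max)

signature <- kept[kept$max_cross_cor > 0.65, ]
signature
```

In this toy example g2 passes the VIP criterion but is dropped by the correlation filter, since it is unrelated to any retained protein.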

Protocol 3.2: Biological Prioritization via Enriched Pathway Mapping

Objective: To prioritize signature elements based on their collective biological function.

Materials: List of selected genes/proteins/metabolites; enrichment tools (e.g., clusterProfiler for genes, MetaboAnalystR for metabolites).

Procedure:

- Separate by Omics Type: Split the signature into gene/protein and metabolite lists.

- Conduct Enrichment Analysis:

  - Genes/Proteins: Use `enrichGO()` for Gene Ontology (Biological Process) and `enrichKEGG()` for pathways. Apply FDR correction (q-value < 0.05).

  - Metabolites: Use the `mummichog` or `GSEA` algorithm in MetaboAnalyst to predict enriched metabolic pathways (p-value < 0.01).

- Integrate Pathway Results: Manually map gene-centric and metabolite-centric pathways to unified biological themes (e.g., "Inflammatory Response").

- Score Features by Enrichment: Assign each signature feature a score based on the number and significance of pathways it belongs to. Features in top enriched pathways receive higher priority.

Protocol 3.3: Experimental Validation Prioritization Score

Objective: To compute a unified score to rank biomarker candidates for downstream validation.

Materials: Outputs from Protocol 3.1 and 3.2.

Procedure:

- Normalize Metrics: For each retained feature, normalize key metrics to a 0-1 scale:

  - Norm_VIP = (VIP - min(VIP)) / (max(VIP) - min(VIP))

  - Norm_Corr = (|Max_Cross_Omics_Correlation| - 0.65) / (1 - 0.65), clamped to the range 0 to 1

  - Norm_Enrich = Number_of_Enriched_Pathways / max(Number_of_Enriched_Pathways)

- Calculate Composite Score: Apply a weighted sum. Example weights: VIP (0.5), Correlation (0.3), Enrichment (0.2); the workflow parameter table above lists 0.4, 0.3, 0.3 as an alternative split.

Composite_Score = (0.5 * Norm_VIP) + (0.3 * Norm_Corr) + (0.2 * Norm_Enrich)

- Rank and Categorize: Rank features by Composite_Score. Classify top 10% as "Tier 1 - High Priority", next 20% as "Tier 2 - Medium Priority".
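The scoring and tiering steps can be sketched in base R. The candidate table is invented for illustration, and rounding the tier sizes up with `ceiling()` is an assumption made so that a short list still yields at least one feature per tier.

```r
minmax  <- function(x) (x - min(x)) / (max(x) - min(x))  # undefined if all equal
clamp01 <- function(x) pmin(pmax(x, 0), 1)

# Invented candidates standing in for the outputs of Protocols 3.1 and 3.2
cand <- data.frame(
  feature       = c("Gene_A", "Gene_B", "Prot_C", "Meta_D", "Meta_E"),
  vip           = c(2.0, 1.5, 1.8, 1.3, 1.4),
  max_cross_cor = c(0.90, 0.70, -0.86, 0.65, 0.75),
  n_pathways    = c(4, 1, 2, 0, 3),
  stringsAsFactors = FALSE
)

# Normalize each metric to the 0-1 range
cand$norm_vip    <- minmax(cand$vip)
cand$norm_corr   <- clamp01((abs(cand$max_cross_cor) - 0.65) / (1 - 0.65))
cand$norm_enrich <- cand$n_pathways / max(cand$n_pathways)

# Weighted sum with the example weights 0.5 / 0.3 / 0.2
cand$score <- 0.5 * cand$norm_vip + 0.3 * cand$norm_corr +
  0.2 * cand$norm_enrich

# Rank, then assign tiers: top 10%, next 20% (sizes rounded up)
cand <- cand[order(-cand$score), ]
n    <- nrow(cand)
n1   <- ceiling(0.10 * n)
n2   <- ceiling(0.20 * n)
cand$tier <- c(rep("Tier 1 - High Priority", n1),
               rep("Tier 2 - Medium Priority", n2),
               rep("Unassigned", n - n1 - n2))

cand[, c("feature", "score", "tier")]
```

Here Gene_A ranks first because it leads on all three metrics, while Meta_D scores 0 as the minimum on each. The weights are a judgment call, so reporting the ranking under an alternative weighting is a useful sensitivity check.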

Visualizations

Diagram 1: Downstream Analysis Workflow after DIABLO

Diagram 2: Composite Scoring Logic for Prioritization

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Downstream Analysis

| Item | Function in Downstream Analysis | Example/Supplier |

|---|---|---|

| R mixOmics Package | Core functions for running DIABLO, extracting VIP scores, loadings, and performing cross-validation. | CRAN: mixOmics |

| Enrichment Analysis Suites | Tools for functional interpretation of gene/protein (e.g., GO, KEGG) and metabolite lists. | clusterProfiler (R), MetaboAnalyst (web/R), g:Profiler (web) |

| Protein-Protein Interaction Databases | Provide context for network-based prioritization of proteomic/genomic hits. | STRING Database, BioGRID |

| Metabolic Pathway Databases | Essential for mapping metabolites from signature to biological processes. | HMDB, KEGG, Reactome |

| Commercial Biomarker Validation Kits | For transitioning computational hits to wet-lab validation (ELISA, qPCR, MS-based). | R&D Systems, Abcam, Qiagen, MyBioSource |

| Integrated Network Visualization Software | Aids in building and interpreting multi-omics interaction networks. | Cytoscape (+ Omics visualizer apps) |

| High-Performance Computing (HPC) Resources | Necessary for permutation testing, bootstrapping confidence intervals, and large-scale network analyses. | Local clusters or cloud solutions (AWS, Google Cloud) |

Optimizing DIABLO Performance: Solving Common Pitfalls and Enhancing Robustness

Within biomarker discovery research, particularly in high-throughput omics studies, the "small n, large p" problem is pervasive. This section addresses overfitting within the framework of a broader thesis on Data Integration Analysis for Biomarker discovery using Latent cOmponents (DIABLO). DIABLO, a multivariate method, is designed for integrative analysis of multiple omics datasets to identify multi-omics biomarker panels. However, its application is critically challenged when features (p) vastly outnumber samples (n), leading to models that fit noise rather than biological signal. These application notes detail strategies and protocols to mitigate this risk.

Core Strategies & Quantitative Comparison

The primary defense against overfitting in the n << p regime combines data reduction, regularization, and rigorous validation.

Table 1: Quantitative Comparison of Overfitting Mitigation Strategies

| Strategy | Typical Implementation | Key Hyperparameter(s) | Impact on DIABLO Framework | Risk if Misapplied |

|---|---|---|---|---|

| Dimensionality Reduction | Univariate pre-filtering (e.g., ANOVA), PCA | FDR threshold, # of components | Reduces p before DIABLO, simplifying latent structure. | Loss of synergistic multi-omics signals. |

| Regularization | Sparse PLS/CCA (as in DIABLO), Elastic Net | keepX (features retained per component), penalty λ | Embeds feature selection directly in model training. | Over-sparsity discards weak but contributory biomarkers. |

| Resampling Validation | Repeated k-fold Cross-Validation (CV), Bootstrap | # folds, # repeats, # iterations | Provides unbiased performance estimate (e.g., BER, AUC). | High variance in performance metrics with very small n. |

| Ensemble Methods | Bootstrap aggregating (Bagging) of models | # bootstrap samples | Stabilizes feature selection and performance. | Computationally intensive; risk of correlated predictors. |

| Data Augmentation | Synthetic Minority Over-sampling (SMOTE) | # synthetic samples, k-neighbors | Artificially increases n for training, improves balance. | Introduction of biologically implausible data points. |

Experimental Protocols

Protocol 2.1: Pre-Processing & Dimensionality Reduction for DIABLO

Objective: Reduce feature space (p) while preserving biologically relevant multi-omics variance.

- Per-omics Univariate Filtering: For each omics dataset (e.g., transcriptomics, metabolomics), perform a univariate statistical test (e.g., ANOVA for class separation, Cox regression for survival). Retain features with False Discovery Rate (FDR) adjusted p-value < 0.05.

- Variance Filtering: Within each filtered dataset, remove features with near-zero variance (coefficient of variation < 10%).

- Protocol Note: This step must be performed strictly within the training set during cross-validation to avoid data leakage.
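The leakage point in the Protocol Note can be illustrated with a minimal sketch in plain Python (standard library only, so it runs without mixOmics). It covers only the variance-filtering step and assumes strictly positive values on the original scale, since the coefficient of variation is not meaningful for centered data. The helper names `fit_cv_filter` and `apply_filter` are hypothetical and are not part of any package.

```python
from statistics import mean, pstdev

def fit_cv_filter(train_rows, min_cv=0.10):
    """Return indices of features whose coefficient of variation (CV),
    computed on the training rows only, is at least min_cv."""
    keep = []
    for j in range(len(train_rows[0])):
        column = [row[j] for row in train_rows]
        mu = mean(column)
        if mu == 0:
            continue  # CV undefined; this sketch simply drops the feature
        if pstdev(column) / abs(mu) >= min_cv:
            keep.append(j)
    return keep

def apply_filter(rows, keep):
    """Subset any sample-by-feature matrix to the retained feature indices."""
    return [[row[j] for j in keep] for row in rows]

# Toy data: 4 training samples, 2 held-out samples, 3 features.
train = [[10.0, 5.0, 1.0],
         [10.1, 9.0, 1.0],
         [9.9, 2.0, 1.0],
         [10.0, 7.0, 1.0]]
test = [[10.0, 6.0, 5.0],
        [10.2, 3.0, 0.2]]

keep = fit_cv_filter(train)        # decided on the training fold only
train_f = apply_filter(train, keep)
test_f = apply_filter(test, keep)  # the test fold never influences `keep`
print(keep, test_f)
```

The third feature varies in the held-out samples but is constant in the training fold, so it is dropped: the filter is learned from training data and then applied unchanged to the test fold.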

Protocol 2.2: Nested Cross-Validation for DIABLO Model Tuning & Assessment

Objective: Obtain an unbiased estimate of model performance and optimal sparsity parameters.

- Define Outer Loop (Performance Estimation): Split data into k folds (e.g., k=5, stratified by outcome). Use k-1 folds for training and 1 fold for testing.

- Define Inner Loop (Parameter Tuning): On the training set from Step 1, perform another k-fold CV.

    - For each candidate `keepX` value (defining features per component), run DIABLO on the inner training folds.

    - Evaluate the model's predictive accuracy (e.g., Balanced Error Rate, BER) on the held-out inner test fold.

- Model Selection & Assessment: Select the `keepX` parameters yielding the lowest average BER in the inner loop. Train a final model on the entire outer-loop training set using these parameters. Evaluate this final model on the held-out outer-loop test fold.

- Iteration: Repeat Steps 1-3 for all outer-loop splits. The final performance is the average across all outer test folds.
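The control flow of Protocol 2.2 can be sketched in plain Python as follows. This is a sketch under stated assumptions, not a DIABLO implementation: `fit` is a hypothetical two-class stand-in (it ranks features by class-mean difference and classifies by nearest centroid), and `keep_x` plays the role that `keepX` plays in mixOmics. Only the nesting of the loops and the BER calculation are the point.

```python
from statistics import mean
import random

def ber(y_true, y_pred):
    """Balanced error rate: the mean of the per-class error rates."""
    rates = []
    for c in sorted(set(y_true)):
        preds = [p for t, p in zip(y_true, y_pred) if t == c]
        rates.append(sum(p != c for p in preds) / len(preds))
    return mean(rates)

def stratified_folds(y, idx, k, rng):
    """Deal the indices in idx into k folds, class by class."""
    folds = [[] for _ in range(k)]
    for c in sorted(set(y[i] for i in idx)):
        members = [i for i in idx if y[i] == c]
        rng.shuffle(members)
        for pos, i in enumerate(members):
            folds[pos % k].append(i)
    return folds

def fit(X, y, idx, keep_x):
    """Hypothetical stand-in for a sparse model fit (two classes only)."""
    a, b = sorted(set(y[i] for i in idx))
    cent = {c: [mean(X[i][j] for i in idx if y[i] == c)
                for j in range(len(X[0]))] for c in (a, b)}
    ranked = sorted(range(len(X[0])),
                    key=lambda j: abs(cent[a][j] - cent[b][j]), reverse=True)
    return ranked[:keep_x], cent

def predict(model, X, idx):
    feats, cent = model
    preds = []
    for i in idx:
        d = {c: sum((X[i][j] - cent[c][j]) ** 2 for j in feats) for c in cent}
        preds.append(min(d, key=d.get))
    return preds

def score(X, y, train, test, keep_x):
    model = fit(X, y, train, keep_x)
    return ber([y[i] for i in test], predict(model, X, test))

def nested_cv(X, y, candidates, k_outer=5, k_inner=4, seed=0):
    rng = random.Random(seed)
    everyone = list(range(len(y)))
    outer_ber, chosen = [], []
    for test in stratified_folds(y, everyone, k_outer, rng):
        train = [i for i in everyone if i not in test]
        # Inner loop: tune keep_x using the outer training set only.
        inner = stratified_folds(y, train, k_inner, rng)
        def inner_ber(keep_x):
            return mean(score(X, y, [i for i in train if i not in fold],
                              fold, keep_x) for fold in inner)
        best = min(candidates, key=inner_ber)
        chosen.append(best)
        # Refit on the whole outer training set, assess on the outer test fold.
        outer_ber.append(score(X, y, train, test, best))
    return mean(outer_ber), chosen

# Toy data: feature 0 separates the classes, features 1-2 are uninformative.
y = ["A"] * 10 + ["B"] * 10
X = [[(0.0 if y[i] == "A" else 5.0) + 0.1 * (i % 3),
      float(i * 7 % 5), float(i * 3 % 4)] for i in range(20)]
estimate, chosen = nested_cv(X, y, candidates=[1, 2, 3])
print(estimate, chosen)
```

The same inner folds are reused for every candidate so that candidates are compared on identical splits, and the outer test fold is touched only once per iteration, after tuning is finished.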

Protocol 2.3: Bootstrap-Enhanced Feature Selection Stability Analysis

Objective: Identify a robust core multi-omics biomarker panel.

- Bootstrap Sampling: Generate B (e.g., B=100) bootstrap samples by randomly drawing n samples from the full dataset with replacement.

- Run DIABLO: For each bootstrap sample, run DIABLO with a fixed, moderately sparse `keepX` setting.

- Calculate Frequency: Record the selection frequency for each feature across all B models.

- Define Stable Signature: Define the final biomarker panel as features selected in >80% of bootstrap models.
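The frequency bookkeeping in Protocol 2.3 can be sketched as below, again in plain Python. The selector `select_top` is a hypothetical two-class stand-in for the features a sparse DIABLO fit would retain; in practice the selected features would come from the fitted model on each bootstrap sample. The resampling, counting, and 80% threshold follow the protocol.

```python
import random
from collections import Counter
from statistics import mean

def select_top(X, y, idx, keep_x):
    """Toy selector: rank features by absolute class-mean difference
    within the bootstrap sample and keep the top keep_x."""
    a, b = sorted(set(y[i] for i in idx))
    def gap(j):
        mean_a = mean(X[i][j] for i in idx if y[i] == a)
        mean_b = mean(X[i][j] for i in idx if y[i] == b)
        return abs(mean_a - mean_b)
    ranked = sorted(range(len(X[0])), key=gap, reverse=True)
    return ranked[:keep_x]

def stability(X, y, keep_x, B=100, threshold=0.8, seed=0):
    """Selection frequency per feature over B bootstrap samples, plus the
    features selected in more than `threshold` of them."""
    rng = random.Random(seed)
    n = len(y)
    counts = Counter()
    done = 0
    while done < B:
        idx = [rng.randrange(n) for _ in range(n)]  # draw n with replacement
        if len(set(y[i] for i in idx)) < 2:
            continue  # redraw if a class is absent from the sample
        counts.update(select_top(X, y, idx, keep_x))
        done += 1
    freq = {j: counts[j] / B for j in range(len(X[0]))}
    stable = [j for j in sorted(freq) if freq[j] > threshold]
    return freq, stable

# Toy data: features 0 and 1 carry the class signal, 2 and 3 do not.
y = ["A"] * 10 + ["B"] * 10
X = [[(0.0 if y[i] == "A" else 5.0) + 0.1 * (i % 3),
      (0.0 if y[i] == "A" else 5.0) + 0.1 * (i % 2),
      float(i * 7 % 5), float(i * 3 % 4)] for i in range(20)]
freq, stable = stability(X, y, keep_x=2)
print(stable, freq)
```

For a multi-block model the same counting would be done per block, giving one frequency table for each omics layer.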

Visualizations

Diagram 1: Nested CV Workflow for DIABLO

Diagram 2: Overfitting Mitigation Strategy Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for DIABLO-based Biomarker Discovery

| Item / Solution | Function / Role | Example / Note |

|---|---|---|

| mixOmics R Package | Core software implementing the DIABLO framework for multi-omics integration. | Provides block.plsda and block.splsda functions. Essential for protocol execution. |

| caret or mlr R Package | Provides unified interface for implementing nested cross-validation and hyperparameter tuning. | Streamlines Protocol 2.2, ensuring reproducible train/test splits. |

| Stability Selection Algorithm | Extends Protocol 2.3, formalizing feature frequency analysis. | Can be implemented via the mixOmics selectVar function output across bootstraps. |

| SMOTE Algorithm | Synthetic data augmentation for small and/or imbalanced sample classes. | Available via the DMwR or themis R packages. Use cautiously within CV loops. |

| High-Performance Computing (HPC) Cluster | Computational resource for running intensive resampling (nested CV, bootstrap). | Necessary for realistic application with large p and multiple omics blocks. |

| Benchmark Public Datasets | Gold-standard multi-omics datasets for method validation and comparison. | E.g., TCGA (cancer) or multi-omics microbiome datasets. Used as a positive control. |

Handling Missing Data and Batch Effects Within and Across Omics Blocks

Within the framework of a thesis on DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) for multi-omics biomarker discovery, managing data quality is paramount. Two pervasive challenges are missing data and batch effects, which can severely compromise integration and model generalizability. This document provides application notes and protocols to address these issues systematically.

Table 1: Common Sources and Impact of Missing Data Across Omics

| Omics Block | Typical Missingness Rate | Primary Causes | Potential Impact on DIABLO |

|---|---|---|---|

| Proteomics | 10-40% | Low-abundance proteins, detection limits | Biased component loading, reduced power |

| Metabolomics | 5-30% | Spectral noise, compound ID uncertainty | Distorted covariance structures |

| Transcriptomics | <5% | Low expression, technical artifacts | Minor, but can affect network inference |

| Epigenomics | 5-20% | Coverage depth, probe design | Misleading correlation patterns |

Table 2: Batch Effect Correction Method Comparison

| Method | Algorithm Type | Suitability for DIABLO | Key Limitation |

|---|---|---|---|

| ComBat | Empirical Bayes | High (pre-processing) | Requires batch annotation |

| sva (Surrogate Variable Analysis) | Latent factor | Moderate | Can remove biological signal |

| ARSyN (ANOVA Removal of Systematic Noise) | ANOVA-based | High for multi-factorial designs | Complex experimental designs needed |

| RUV (Remove Unwanted Variation) | Factor analysis | Moderate | Control genes/features required |

Application Notes for DIABLO Workflows

Pre-Processing Philosophy

In DIABLO, data integration occurs via a multivariate framework that seeks correlated patterns across blocks. Missing data and batch effects must be addressed prior to integration to ensure these patterns are biologically driven.

Missing Data Protocol

Protocol: k-Nearest Neighbors (kNN) Imputation for Multi-Omics Data Prior to DIABLO

Objective: Impute missing values in each omics block separately while preserving block-specific variance structure.

Reagents & Materials:

- `impute.knn` function from the `impute` R package (or scikit-learn `KNNImputer` in Python).

- Normalized, but not scaled, omics matrices.

Procedure:

- Segmentation: Handle each omics data matrix (e.g., `X_mRNA`, `X_protein`, `X_metabolite`) independently.

- Parameter Selection: Set `k = 10` as a starting point. This should be tuned based on dataset size (smaller `k` for smaller N).

- Execution: For each block:

    a. Transpose the matrix to have features as columns.

    b. Apply the kNN imputation algorithm, which uses the Euclidean distance between samples to identify neighbors.

    c. Replace missing values with the weighted average of the non-missing values from the `k` nearest neighbors.

- Validation: After imputation, check that the variance distribution of each feature has not been artificially compressed. Compare density plots pre- and post-imputation.

- DIABLO Readiness: Scale each completed block (mean-centered, unit variance) as required by the DIABLO framework.

Note: For missingness >30% in a specific feature, consider removing the feature entirely, as imputation may be unreliable.
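To make the Execution step concrete, here is a minimal plain-Python sketch of sample-wise kNN imputation on one block. It is not the `impute.knn` or `KNNImputer` implementation. The distance rescaling over co-observed features and the inverse-distance weighting are assumptions chosen to match the description above, and `knn_impute` is a hypothetical name.

```python
import math

def knn_impute(X, k=10):
    """Impute NaNs in a samples-by-features matrix from the k nearest
    samples, using Euclidean distance over features observed in both."""
    n, p = len(X), len(X[0])

    def dist(a, b):
        shared = [(x - y) ** 2 for x, y in zip(a, b)
                  if not (math.isnan(x) or math.isnan(y))]
        if not shared:
            return math.inf
        # Rescale so pairs with few shared features remain comparable.
        return math.sqrt(sum(shared) * p / len(shared))

    out = [row[:] for row in X]  # leave the input untouched
    for i in range(n):
        for j in range(p):
            if not math.isnan(X[i][j]):
                continue
            # Donors: other samples in which feature j was observed.
            donors = sorted((dist(X[i], X[m]), X[m][j]) for m in range(n)
                            if m != i and not math.isnan(X[m][j]))[:k]
            donors = [(d, v) for d, v in donors if d < math.inf]
            if not donors:
                continue  # nothing to borrow from; value stays missing
            weights = [1.0 / (d + 1e-9) for d, _ in donors]
            out[i][j] = (sum(w * v for w, (_, v) in zip(weights, donors))
                         / sum(weights))
    return out

nan = float("nan")
toy = [[1.0, 2.0, nan],
       [1.0, 2.0, 3.0],
       [10.0, 20.0, 30.0]]
imputed = knn_impute(toy, k=1)
print(imputed[0])
```

Each block is passed through the function separately, so neighbors are never defined using features from another omics layer.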

Batch Effect Correction Protocol

Protocol: Sequential Batch Correction Using ComBat Prior to DIABLO Integration

Objective: Remove technical batch variation within each omics block while retaining biological variation for cross-block correlation.

Reagents & Materials:

- `sva` R package (for `ComBat`).

- A data frame (`batch_df`) detailing sample batch (e.g., sequencing run, processing date) and relevant biological covariates (e.g., disease status, gender).

Procedure:

- Model Setup: Define a model matrix for the biological covariates of interest (e.g., `~ disease_status`). This ensures ComBat preserves this signal.

- Block-wise Correction: Apply ComBat sequentially to each omics data matrix. `par.prior=TRUE` fits a parametric empirical Bayes model, preferred for small sample sizes.

- Diagnostic Check: For each block, perform Principal Component Analysis (PCA) on the corrected data. Color samples by batch ID. Successful correction is indicated by the lack of clustering by batch in the first 2-3 PCs.

- DIABLO Integration: Use the batch-corrected blocks as input for the `mixOmics::block.plsda` or `mixOmics::block.splsda` function to construct the DIABLO model.
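As a numeric complement to the visual PCA check in the Diagnostic Check step, one can compute, per feature, the fraction of variance explained by batch (a one-way ANOVA eta-squared) before and after correction. The sketch below is plain Python and makes two assumptions that should be read carefully: `batch_eta_squared` is a hypothetical helper, and the "corrected" values are produced by simple per-batch mean-centering, which is only a stand-in for ComBat output and would remove biology if batch and condition were confounded.

```python
from statistics import mean

def batch_eta_squared(values, batches):
    """Fraction of one feature's variance explained by batch (0 to 1)."""
    grand = mean(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    if ss_total == 0:
        return 0.0
    ss_between = 0.0
    for b in set(batches):
        group = [v for v, g in zip(values, batches) if g == b]
        ss_between += len(group) * (mean(group) - grand) ** 2
    return ss_between / ss_total

def center_by_batch(values, batches):
    """Stand-in correction (NOT ComBat): subtract each batch's mean."""
    means = {b: mean(v for v, g in zip(values, batches) if g == b)
             for b in set(batches)}
    return [v - means[g] for v, g in zip(values, batches)]

# Toy feature measured in two batches with a clear offset between them.
batches = [1, 1, 1, 2, 2, 2]
raw = [1.0, 1.2, 0.8, 3.0, 3.2, 2.8]
corrected = center_by_batch(raw, batches)

eta_before = batch_eta_squared(raw, batches)
eta_after = batch_eta_squared(corrected, batches)
print(round(eta_before, 3), round(eta_after, 3))
```

Summarizing this statistic across all features of a block (for example as a median) gives a single number to track alongside the PCA plots.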

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multi-Omics QA/QC

| Item/Category | Example Product/Software | Primary Function in Protocol |

|---|---|---|

| Imputation | impute R package, fancyimpute Python package | Implements kNN, MissForest, and matrix completion algorithms for missing data. |

| Batch Correction | sva R package | Contains ComBat and sva functions for empirical batch effect removal. |

| Quality Metrics | pvca R package, PCA functions (stats, mixOmics) | Assesses batch effect magnitude pre/post correction via Principal Variance Component Analysis. |

| Normalization | edgeR (TMM), DESeq2 (median ratio), MetaboAnalystR | Block-specific normalization to correct for technical variation before imputation/batch correction. |

| DIABLO Framework | mixOmics R package (v6.24.0+) | Core software for multi-omics integration after data cleaning. |

| Visualization | ggplot2, pheatmap | Creates diagnostic plots (density, PCA, heatmaps) to evaluate protocol success. |

Visualization of Integrated Workflow

Title: Workflow for Multi-Omics Curation Before DIABLO

Title: Problem-Solution Logic for Reliable Integration

In DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents), a central challenge is optimizing the design weight parameter. This parameter controls the trade-off between maximizing integration strength (signal shared across multi-omic blocks) and preserving block-specific signals that may be biologically relevant but not fully cross-correlated. This document provides application notes and protocols to systematically fine-tune this balance.
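In mixOmics the design is supplied as a square block-by-block matrix with values between 0 and 1 and zeros on the diagonal. The plain-Python sketch below only shows the shape of that object for a single shared weight. The helper name `design_matrix` and the block names are illustrative assumptions.

```python
def design_matrix(block_names, weight):
    """Square block-by-block matrix: `weight` off the diagonal, 0 on it."""
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must lie in [0, 1]")
    size = len(block_names)
    return [[0.0 if r == c else weight for c in range(size)]
            for r in range(size)]

blocks = ["mRNA", "protein", "metabolite"]  # illustrative block names
low = design_matrix(blocks, 0.1)   # favors discrimination, block-specific signal
full = design_matrix(blocks, 1.0)  # favors correlation between blocks

for name, row in zip(blocks, low):
    print(name, row)
```

Weights near 0 let each block contribute its own discriminative features, while weights near 1 push the components toward features that are correlated across blocks. Intermediate values, or different weights for different block pairs, express a compromise.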

Recent DIABLO applications (2022-2024) report several quantitative metrics for evaluating this balance. The following tables summarize common findings and target ranges.

Table 1: Performance Metrics for Evaluating Integration-Specificity Trade-off

| Metric | Description | Target Range (Strong Integration) | Target Range (Block-Specific Emphasis) | Optimal Balance Indicator |

|---|---|---|---|---|