Mastering Multi-omics Data Imputation: Strategies, Tools, and Best Practices for Researchers

This comprehensive guide explores the critical role of multi-omics data imputation in modern biomedical research.

Mastering Multi-omics Data Imputation: Strategies, Tools, and Best Practices for Researchers

Abstract

This comprehensive guide explores the critical role of multi-omics data imputation in modern biomedical research. We provide a foundational understanding of missing data mechanisms in genomics, transcriptomics, proteomics, and metabolomics. The article details cutting-edge methodological approaches, from matrix factorization to deep learning, and their practical applications in drug discovery and disease modeling. We address common challenges in implementation, optimization strategies for different data types, and robust validation frameworks. Finally, we present comparative analyses of leading tools and platforms, empowering researchers to select and implement the most effective imputation strategies for their specific projects, thereby enhancing data integrity and unlocking deeper biological insights.

Why Multi-omics Data is Incomplete: Understanding the Sources and Impact of Missing Values

The Ubiquity of Missing Data in Genomics, Transcriptomics, Proteomics, and Metabolomics

Missing data is a pervasive, systematic challenge across all omics layers, arising from technological limitations, biological factors, and computational preprocessing. The prevalence and mechanisms differ by platform.

Table 1: Prevalence and Primary Causes of Missing Data by Omics Layer

| Omics Layer | Typical Missing Rate | Primary Technical Causes | Primary Biological Causes |

|---|---|---|---|

| Genomics (SNP Array) | 0.1% - 5% | Poor probe hybridization, low signal intensity, genotyping algorithm ambiguity. | Low sample quality, copy number variations, rare alleles. |

| Transcriptomics (RNA-seq) | 5% - 30% (for lowly expressed genes) | Low read count, detection limit of sequencing depth, alignment errors. | Biological absence of expression, dynamic range of expression. |

| Proteomics (LC-MS/MS) | 15% - 50% (DDA) 5% - 20% (DIA) | Stochastic data-dependent acquisition (DDA), limit of detection, ion suppression, dynamic range. | Low-abundance proteins, incomplete digestion, PTM heterogeneity. |

| Metabolomics (LC-MS) | 10% - 40% | Ionization efficiency variability, signal below limit of detection, co-elution. | Metabolite concentration below detection, rapid turnover, matrix effects. |

Table 2: Characterization of Missing Data Mechanisms

| Mechanism | Definition | Omics Examples | Implication for Analysis |

|---|---|---|---|

| Missing Completely At Random (MCAR) | Missingness is unrelated to observed or unobserved data. | Sample handling errors, random technical glitches. | Least problematic; simple imputation may work. |

| Missing At Random (MAR) | Missingness depends on observed data but not on unobserved data. | Low-intensity peptides missing because total protein signal is low (observed). | Can be addressed using observed variables. |

| Missing Not At Random (MNAR) | Missingness depends on the unobserved value itself. | A metabolite is missing because its true concentration is below the instrument's detection limit. | Most challenging; requires specialized models. |

Application Notes & Protocols for Handling Missing Data

Protocol: Systematic Assessment of Missing Data Patterns in a Multi-omics Cohort

Objective: To characterize the extent, mechanism, and pattern of missing data across genomics, transcriptomics, proteomics, and metabolomics datasets prior to integration or imputation.

Materials:

- Multi-omics data matrices (e.g., SNP calls, gene expression counts, protein/peptide abundances, metabolite intensities).

- Sample metadata (e.g., batch, clinical group, sample quality metrics).

Procedure:

- Data Preparation: Standardize identifiers. Convert all data matrices into a sample x feature format. Log-transform (typically log2) intensity-based data (proteomics, metabolomics) after adding a minimal offset if needed.

- Missingness Heatmap: For each omics layer, generate a binary matrix (1=observed, 0=missing). Create a clustered heatmap to visualize if missingness clusters by sample batch or by feature group.

- Quantification: Calculate the overall missing rate per dataset. Calculate the missing rate per sample and per feature. Plot distributions. Flag samples/features with missingness >30% for potential removal.

- Mechanism Investigation (MAR vs. MNAR):

- For intensity-based data (proteomics/metabolomics), create a "type 2" missing value plot. For each feature, plot the proportion of missing values against the mean observed intensity (log-scale). A strong negative correlation suggests MNAR (detection limit-driven).

- Perform a two-sample t-test comparing the mean observed intensity of samples where a target feature is missing vs. observed for a related, fully-observed "guide" feature. A significant difference suggests MAR.

Expected Output: A comprehensive report detailing missingness per layer, identification of problematic samples/features, and a preliminary classification of missing data mechanisms to guide imputation method selection.

Protocol: K-Nearest Neighbors (KNN) Imputation for Transcriptomics and Proteomics Data

Objective: To impute missing values in a gene expression or protein abundance matrix under the MAR assumption, leveraging similarity between samples.

Materials: Normalized expression/abundance matrix with missing values (NaNs).

Procedure:

- Normalization: Ensure data is properly normalized (e.g., quantile normalization for transcriptomics, median normalization for proteomics) across samples before imputation.

- Distance Calculation: For the sample-wise KNN variant, compute the Euclidean distance (or Pearson correlation distance) between all pairs of samples using only the features that are observed in both samples being compared.

- Neighbor Selection: For each sample

icontaining missing values, identify theknearest neighbor samples (kis tunable, often start withk=10). - Imputation: For a missing value in sample

ifor featurej, calculate the weighted average of featurej's values in theknearest neighbors. Weights are inversely proportional to the distance to samplei. - Iteration: Perform steps 2-4 iteratively until the imputed matrix converges (change between iterations falls below a threshold) or for a fixed number of iterations.

Note: A feature-wise KNN variant can also be used, finding neighbors among features based on sample correlation. Choose based on whether sample or feature correlation is more biologically meaningful.

Protocol: MissForest Imputation for Mixed Multi-omics Data

Objective: To impute missing values in a complex, integrated multi-omics dataset that may contain mixed data types (continuous, categorical) and non-linear relationships.

Materials: Integrated multi-omics feature matrix (samples x features from multiple layers), possibly with mixed data types.

Procedure:

- Data Integration & Type Assignment: Merge features from different omics layers into a single matrix. Annotate each column/variable as either

continuous(e.g., expression, abundance) orcategorical(e.g., genotype, mutation status). - Initialization: Perform a simple imputation (e.g., mean/mode) to create a complete initial matrix.

- Random Forest Iteration: For each variable

jwith missing values: a. Split the data into observed (y_obs) and missing (y_miss) parts for variablej. b. Train a Random Forest model using all other variables as predictors on the subset of samples wherejis observed (y_obs). c. Predict the missing values forjusing the trained model and the predictor values from samples wherejis missing. d. Update the matrix with the newly imputed values forj. - Cycling: Repeat Step 3 for all variables with missing data. This constitutes one cycle.

- Stopping Criterion: Repeat cycles until the difference between the newly imputed matrix and the previous one increases for the first time (indicating potential overfitting) or a pre-set maximum number of cycles is reached.

Advantage: MissForest makes no assumptions about data distribution or missingness mechanism and handles complex interactions.

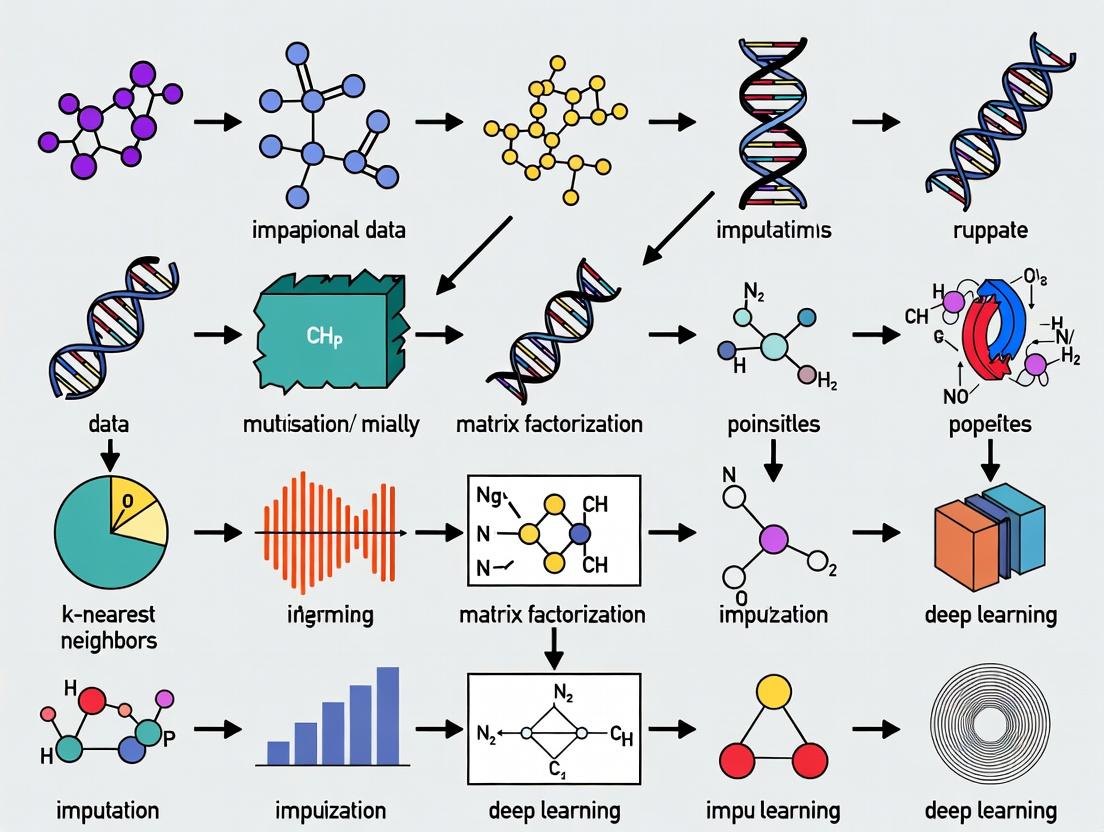

Visualizations

Title: Missing Data Assessment Workflow

Title: K-Nearest Neighbors Imputation Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Reagents for Multi-omics Missing Data Research

| Item / Solution | Supplier Examples | Function in Context | Key Application Note |

|---|---|---|---|

Bioconductor (missForest, impute, pcaMethods) |

R/Bioconductor Project | Provides peer-reviewed, standardized R packages for implementing KNN, Random Forest, SVD, and MNAR-specific imputation methods. | Essential for reproducible protocol execution. Packages like MissMethyl handle platform-specific (e.g., methylation array) missingness. |

| Python SciKit-learn & SciPy Ecosystem | Python Community | Libraries like sklearn.impute.IterativeImputer (for MICE), sklearn.ensemble.RandomForestRegressor (for custom MissForest), and scipy for distance calculations. |

Offers flexibility for building custom pipelines and integrating imputation into machine learning workflows. |

| Proteomics/Metabolomics QC Standards | Agilent, Waters, SCIEX | Labeled internal standards, pooled QC samples, and blank runs. | Critical for distinguishing technical MNAR (detection limit) from biological absence. Used to monitor and correct for batch effects that induce MAR. |

| Sequest/Proteome Discoverer, MaxQuant, OpenMS | Thermo, Open Source | Proteomics data processing suites with built-in handling of missing LC-MS peaks (e.g., matching between runs). | These tools perform the first line of "imputation" by cross-referencing peaks across runs, reducing missingness before downstream statistical imputation. |

| Multi-omics Integration Suites (e.g., MOFA2) | Bioconductor, GitHub | Bayesian framework that inherently handles missing data as part of its factor analysis model. | A powerful alternative to separate imputation: models all omics simultaneously, learning latent factors from observed data to account for missing entries. |

| High-Performance Computing (HPC) Cluster | Institutional, Cloud (AWS, GCP) | Imputation methods like MissForest or deep learning models are computationally intensive, especially for large feature sets. | Necessary for applying advanced methods to cohort-scale (n>1000) multi-omics data within a reasonable timeframe. |

In multi-omics research, missing data is a pervasive challenge that can bias biological interpretation and hinder biomarker discovery. The mechanisms underlying missing data—Missing Completely At Random (MCAR), Missing at Random (MAR), and Missing Not at Random (MNAR)—determine the appropriate statistical handling and imputation strategy. This document details the characterization and experimental protocols for identifying these mechanisms within the context of multi-omics data imputation method development.

Mechanisms of Missingness: Definitions & Biological Examples

Table 1: Mechanisms of Missingness in Biological Data

| Mechanism | Acronym | Formal Definition | Biological Example in Proteomics |

|---|---|---|---|

| Missing Completely at Random | MCAR | The probability of missingness is independent of both observed and unobserved data. | Sample degradation due to a random tube failure during storage. |

| Missing at Random | MAR | The probability of missingness depends only on observed data. | Low-abundance proteins are less likely to be detected (missing) in samples with low total protein concentration (observed). |

| Missing Not at Random | MNAR | The probability of missingness depends on the unobserved value itself. | A cytokine is not detected because its true concentration is below the assay's limit of detection (LOD). |

Diagnostic Protocols for Identifying Missingness Mechanisms

Protocol 3.1: Statistical Testing for MCAR

Aim: To test if missingness is independent of any observed variable. Method: Little’s MCAR Test.

- Input a dataset

Dwithnsamples andpomics features (e.g., protein abundances). - Create a binary indicator matrix

MwhereM_ij = 1if value for featurejin sampleiis missing, else0. - Group samples based on patterns in

M. - For each group, calculate the mean vector of observed values across all features.

- Perform a likelihood-ratio test comparing the observed group means. A non-significant p-value (>0.05) suggests data may be MCAR.

Materials: R Statistical Software,

naniarorBaylorEdPsychpackage.

Protocol 3.2: Pattern Analysis & Logistic Regression for MAR/MNAR

Aim: To assess if missingness in a target variable Y is associated with other observed variables (MAR) or its own latent value (MNAR).

Method:

- Create Indicator Variable: For a target feature

Ywith missing values, createR_Y(1=missing, 0=observed). - Fit MAR Model: Perform logistic regression:

R_Y ~ X1 + X2 + ... + Xk, whereXs are other fully observed omics features or metadata (e.g., sample batch, patient age). - Analyze Coefficients: Statistically significant predictors indicate a potential MAR mechanism for

Ywith respect to thoseXs. - MNAR Investigation: This is inherently untestable from data alone. Strong prior biological knowledge is required. For example, if

Yis a known low-abundance metabolite and missing values align with measurements near the platform's technical LOD, MNAR is plausible.

Visualization of Diagnostic Workflows

Title: Statistical Workflow for MCAR Testing

Title: Decision Pathway for MAR vs. MNAR Assessment

Implications for Multi-omics Imputation Method Selection

Table 2: Implication of Missingness Mechanism on Imputation Choice

| Mechanism | Implication for Bias | Recommended Imputation Approach | Biological Example Protocol |

|---|---|---|---|

| MCAR | No bias introduced by missingness. | Any imputation method (Mean, KNN, MICE) may be suitable. Simple methods can increase power. | Impute missing protein levels from random storage failure using sample-wise median. |

| MAR | Bias can be corrected using observed data. | Model-based methods (MICE, MissForest) that leverage correlations with other observed variables. | Impute missing lipid species values using observed correlated lipid concentrations and clinical covariates. |

| MNAR | High risk of bias; imputation is challenging. | Methods incorporating missingness model or LOD-based approaches (e.g., left-censored imputation, QRILC). | For metabolites below LOD, use quantile regression imputation (QRILC) to draw values from a truncated distribution. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Missing Data Analysis in Omics

| Item Name | Function/Brief Explanation | Example Vendor/Catalog |

|---|---|---|

| Standard Reference Materials (SRMs) | Complex, well-characterized biological samples (e.g., NIST SRM 1950 - Plasma) used to benchmark platform performance and missing data patterns. | National Institute of Standards and Technology (NIST) |

| Processed Data with Spiked-in Controls | Datasets from experiments with known concentrations of exogenous proteins/transcripts (e.g., S. cerevisiae spike-ins in human background) to quantify detection limits. | Spike-In SILAC Proteomics Standard Kit (Thermo Fisher) |

| Quality Control (QC) Pool Samples | A homogeneous sample injected repeatedly throughout an LC-MS/MS run to monitor instrumental drift, which can cause MAR (missingness depends on run order). | Prepared in-house from a pooled aliquot of all study samples. |

| Limit of Detection (LOD) Calibration Standards | Serial dilutions of analytes of known concentration to empirically determine platform-specific LODs, critical for MNAR diagnosis. | Custom synthetic peptide mixes (e.g., JPT Peptide Technologies) |

| Data Analysis Software Suite | Integrated environment for statistical testing, imputation, and visualization (e.g., R with mice, imputeLCMD, ggplot2 packages). |

The R Project for Statistical Computing |

Application Notes: The Multi-Omics Data Imputation Imperative

Within multi-omics integration studies, missing values (MVs) are ubiquitous due to technical limitations (e.g., detection thresholds in mass spectrometry) and biological factors (e.g., low analyte abundance). These gaps are rarely Missing Completely At Random (MCAR); they are more often Missing Not At Random (MNAR), introducing systematic bias. Unaddressed, MVs corrupt downstream statistical inference, leading to false discoveries in differential expression, incorrect patient stratification, and flawed biomarker identification.

Quantitative Impact of Missing Data on Analysis

Table 1: Documented Consequences of Unimputed Missing Values in Omics Studies

| Analysis Type | Effect of Non-Imputed MVs | Typical Error Rate Increase | Primary Cause |

|---|---|---|---|

| Differential Expression | Reduced statistical power, inflated false positives | Power loss: 15-40% (RNA-seq) | Exclusion of incomplete cases reduces sample size |

| Clustering / Stratification | Distorted distance metrics, spurious subgroups | Cluster accuracy drop: 20-35% | Non-random missingness mimics biological patterns |

| Correlation & Network Analysis | Attenuated correlation coefficients, sparse networks | Correlation bias: Up to 50% underestimation | Pairwise deletion ignores joint distributions |

| Pathway Enrichment | Biased gene set statistics, irrelevant pathway selection | Top pathway misidentification: ~30% of studies | Under-representation of genes with frequent MVs (e.g., lowly expressed) |

| Machine Learning Prediction | Poor model generalizability, feature selection bias | AUC decrease: 0.05-0.15 | Training on incomplete features misrepresents underlying biology |

Protocols for Evaluating & Addressing Missingness

Protocol 1: Diagnostic Workflow for Missing Value Pattern Analysis

Objective: To characterize the mechanism and pattern of missingness prior to imputation method selection.

Materials:

- Complete (pre-dropout) dataset for simulation (if available).

- Software: R (packages:

mice,VIM,ggplot2,MissMech) or Python (libraries:scikit-learn,missingno,scipy).

Procedure:

- Quantification: Generate a missingness map. Calculate the percentage of MVs per sample and per feature (e.g., gene, protein).

- Pattern Testing:

- Perform Little's MCAR test (

MissMechpackage in R). A significant p-value (<0.05) rejects MCAR, suggesting data is MAR or MNAR. - For suspected MNAR (e.g., left-censored data in proteomics), apply sensitivity analysis: compare the distribution of observed values for a feature against the distribution of values where that feature is missing in other samples.

- Perform Little's MCAR test (

- Visualization: Use

missingnomatrix plot orVIM::aggrplot to identify if missingness clusters in specific sample groups (e.g., treatment vs. control) or co-occurs across features. - Documentation: Tabulate results to guide imputation method choice.

Protocol 2: Benchmarking Imputation Methods for Single-Cell RNA-Seq Data

Objective: To empirically select the optimal imputation method for a given single-cell RNA-seq (scRNA-seq) dataset.

Materials:

- scRNA-seq count matrix (cells x genes) with high-quality pre-processing (e.g., after doublet removal).

- High-performance computing cluster recommended.

- Software: R/Python environments with imputation tools (e.g.,

SAVERX,scImpute,ALRA,MAGIC).

Procedure:

- Simulate Missing Data: Start with a high-coverage, high-quality subset of data. Introduce MVs under known mechanisms (MCAR, MAR, MNAR) at rates of 10%, 20%, and 30%.

- Apply Imputation Methods: Run 3-4 candidate algorithms (selected based on dataset size and missingness pattern) on both simulated and original datasets.

- Evaluation Metrics: Calculate on simulated data where ground truth is known.

- Root Mean Square Error (RMSE) between imputed and true values.

- Preservation of biological variance: Correlation of gene-gene distances before dropout and after imputation.

- Impact on downstream: Perform PCA on imputed data; calculate the Spearman correlation between the first 5 PCs from complete data and imputed data.

- Final Selection: Choose the method that minimizes distortion of global structures (PC correlation >0.9) while maintaining reasonable local accuracy (low RMSE).

Table 2: Research Reagent Solutions for Multi-Omics Imputation Studies

| Item / Tool Name | Type | Primary Function in Imputation Research |

|---|---|---|

| SAVERX | Software Package (R) | Uses a transfer learning approach to borrow information across datasets and cell types for accurate scRNA-seq imputation. |

| MissForest | Algorithm (R/Python) | Non-parametric imputation using random forests, robust to non-linear relationships and complex multi-omics data structures. |

| MICE (Multivariate Imputation by Chained Equations) | Software Package (R/Python) | Creates multiple plausible imputations (mice) for datasets with arbitrary missing patterns, enabling uncertainty estimation. |

| DeepImpute | Algorithm (Python) | Utilizes deep neural networks with dropout layers to learn patterns for accurate and scalable scRNA-seq imputation. |

| Simulated MV Datasets | Benchmarking Resource | Gold-standard datasets (e.g., from Genome in a Bottle consortium) with known truth, used for controlled evaluation of imputation performance. |

| k-Nearest Neighbors (kNN) | Basic Algorithm | Baseline method imputes missing values using the average from the k most similar samples (neighbors), often used for proteomics data. |

Visualizations

Title: Decision Workflow for Multi-Omics Data Imputation

Title: How MNAR Data Leads to False Discovery

The inherent challenges in multi-omics integration stem from the fundamental properties of each data layer. The table below summarizes the typical scale and sparsity of major omics modalities, which directly inform imputation method selection.

Table 1: Characteristic Scale and Sparsity of Primary Omics Modalities

| Omics Layer | Typical Feature Dimension (per sample) | Primary Source of Sparsity | Typical Missingness Rate (Technical) | Data Structure Complexity |

|---|---|---|---|---|

| Genomics (WGS) | 3-5 million SNPs/Indels | Rare variants, low-coverage sequencing | 1-5% (genotype calling uncertainty) | Linear sequence, phased haplotypes. |

| Transcriptomics (scRNA-seq) | 20,000-30,000 genes | Dropout events (gene not detected) | 30-90% (gene-cell matrix) | High-dimensional, zero-inflated, count data. |

| Proteomics (LC-MS/MS) | 5,000-10,000 proteins | Low-abundance peptides, detection limits | 20-60% (missing not at random) | Dynamic range >10^6, hierarchical (peptide→protein). |

| Metabolomics (MS/NMR) | 500-5,000 metabolites | Low abundance, spectral interference | 10-40% (compound-specific) | Diverse chemical structures, continuous intensities. |

| Epigenomics (ATAC-seq) | 100,000+ chromatin peaks | Cell-type specificity, sampling depth | 15-50% (peak-cell matrix) | Sparse binary/count, genomic coordinate-based. |

Experimental Protocols for Benchmarking Imputation Methods

A critical component of thesis research involves the systematic evaluation of imputation algorithms against controlled, biologically relevant benchmarks.

Protocol 2.1: Generating a Ground-Truth Dataset with Introduced Missingness Objective: To create a benchmark dataset for evaluating imputation accuracy in transcriptomics data. Materials:

- High-quality bulk RNA-seq dataset (e.g., from GTEx) with >100 samples, depth >30M reads/sample.

- Computing cluster with R/Python environment.

- Research Reagent Solutions: See Table 2.

Procedure:

- Data Preprocessing: Download raw count matrices. Apply stringent quality control: remove genes expressed in <10% of samples, normalize using TPM or DESeq2's median of ratios.

- Create "Complete" Set: Filter to the top 5,000 most variable genes. Log2-transform (TPM+1). This subset is

G_true. - Introduce Missingness:

- MCAR (Random): For each value in

G_true, set toNAwith probability p (e.g., p=0.2) using a random number generator. - MNAR (Dropout): Simulate scRNA-seq-like dropout. For each value x in

G_true, calculate dropout probability: P(dropout) = exp(-k * x^2). Set toNAbased on this probability. Parameter k controls dropout severity.

- MCAR (Random): For each value in

- Validation Split: Before imputation, mask an additional 5% of values in the corrupted matrix as a held-out validation set

V. The final benchmark matrix isG_missing. - Output: Save

G_true,G_missing, and the indices ofV.

Protocol 2.2: Evaluating Imputation Performance on Scrambled Multi-omics Data Objective: To assess an imputation method's ability to leverage inter-omics correlations. Materials:

- Paired multi-omics dataset (e.g., TCGA mRNA expression and DNA methylation).

- Imputation software (e.g., Bayesian-based, matrix factorization).

Procedure:

- Data Alignment: Match samples across omics layers. Preprocess each layer independently (normalization, filtering).

- Baseline Creation: Introduce 30% MNAR missingness into the mRNA expression matrix (

M_missing). Keep the paired methylation matrix (C_complete) intact. - Imputation Execution:

- Run a single-omics imputation (e.g., SVD) on

M_missing-> ResultM_single. - Run a multi-omics imputation method (e.g., MOFA+, or integrative NMF) using

M_missingandC_complete-> ResultM_multi.

- Run a single-omics imputation (e.g., SVD) on

- Performance Metric Calculation: On the held-out validation values, compute:

- Root Mean Square Error (RMSE).

- Pearson correlation between imputed and true values.

- Preservation of co-expression structure: Calculate the correlation distance between the true and imputed gene-gene correlation matrices.

- Statistical Test: Use a paired Wilcoxon signed-rank test to compare RMSE from

M_singlevs.M_multiacross all imputed values.

Table 2: Research Reagent Solutions for Multi-omics Imputation Benchmarking

| Item | Function / Relevance | Example Source / Tool |

|---|---|---|

| Reference Datasets | Provide gold-standard, high-quality data to simulate missingness. | GTEx, TCGA, Human Cell Atlas. |

| Imputation Software | Algorithms to test and compare. | SAVER (scRNA-seq), MissForest (kNN-based), MAGIC (diffusion), MOFA+ (integration). |

| Benchmarking Pipeline | Standardized framework for fair comparison. | OpenProblems (single-cell), mimpute R package. |

| High-Performance Computing (HPC) | Enables running resource-intensive matrix completion and deep learning models. | SLURM cluster, Google Cloud Platform. |

| Containerization | Ensures reproducibility of software environments. | Docker, Singularity images for each imputation method. |

Visualization of Methodologies and Relationships

Title: Benchmarking Workflow for Imputation Methods

Title: Core Challenges Map to Multi-omics Layers

Title: Thesis Context: From Challenges to Applications

Within multi-omics data imputation research, the selection of an imputation method must be guided by a clearly defined goal. The three primary, often competing, objectives are Accuracy (minimizing error between imputed and true values), Biological Plausibility (ensuring imputed values are consistent with known biological mechanisms), and Preserving Relationships (maintaining the multivariate structure and correlations between features). This protocol outlines how to design experiments to evaluate these goals in the context of genomics, transcriptomics, and proteomics data.

Application Notes: Goal Evaluation Criteria

Table 1: Quantitative Metrics for Evaluating Imputation Goals

| Goal | Primary Metrics | Typical Benchmark Data | Target Range (Ideal) |

|---|---|---|---|

| Accuracy | Root Mean Square Error (RMSE), Mean Absolute Error (MAE) | Datasets with known, artificially introduced missingness (e.g., MCAR, MAR) | RMSE/MAE approaching 0 relative to data scale. |

| Biological Plausibility | Pathway Activity Score Deviation, Enrichment P-value consistency | Known pathway databases (KEGG, Reactome), prior biological knowledge | Imputed data should not distort known pathway signals (p-value change < 0.05 log10). |

| Preserving Relationships | Correlation Distance (e.g., Pearson/Spearman), PCA Procrustes Rotation | Complete-case subsets, external orthogonal datasets (e.g., matched cohorts) | Correlation displacement < 0.1; Procrustes correlation > 0.95. |

| Composite Score | Weighted sum of normalized metrics (Z-scores) | Combined assessment using all above | Dependent on research priority weights. |

Table 2: Common Pitfalls and Trade-offs by Goal

| Prioritized Goal | Common Risk | Mitigation Strategy |

|---|---|---|

| Accuracy Alone | Overfitting to noise, generating biologically impossible values (e.g., negative protein abundance). | Constrain imputation output ranges (e.g., non-negative matrix factorization). |

| Biological Plausibility Alone | Introducing strong bias, reinforcing only known patterns and missing novel discoveries. | Use weakly informative priors in Bayesian methods; validate on orthogonal data. |

| Preserving Relationships Alone | Preserving technical artifacts or batch effects along with true biological signal. | Perform imputation after initial batch correction, or use model-based corrections concurrently. |

Experimental Protocol: A Three-Phase Evaluation Framework

Protocol 1: Controlled Accuracy Assessment with Spike-in Missingness

Objective: Quantify the raw imputation error under controlled missingness patterns. Materials:

- Input Data: A high-quality, complete multi-omics dataset (e.g., a subset of the TCGA or a cell line atlas with no missing values).

- Software: R (package

mice,missForest,softImpute) or Python (packagescikit-learn,fancyimpute,IterativeImputer). - Hardware: Standard workstation (16GB RAM minimum).

Procedure:

- Data Preparation: Log-transform and normalize the complete dataset

D_complete. Standardize features if using distance-based methods. - Missingness Induction: Introduce Missing Completely at Random (MCAR) or Missing at Random (MAR) patterns into

D_completeto createD_missing. A typical masking rate is 10-20%. - Imputation: Apply candidate imputation methods (e.g., k-NN, SVD, Matrix Factorization, Deep Learning) to

D_missingto generateD_imputed. - Calculation: Compute RMSE and MAE by comparing only the artificially masked entries in

D_imputedto their original values inD_complete. - Analysis: Tabulate results as in Table 1. Statistically compare methods using a paired t-test across multiple random missingness inductions.

Protocol 2: Assessing Biological Plausibility via Pathway Integrity

Objective: Ensure imputation does not distort established, high-confidence biological knowledge. Materials:

- Input Data:

D_complete,D_imputedfrom Protocol 1. - Database: MSigDB, KEGG, or Reactome pathway gene sets.

- Software: Gene set enrichment analysis (GSEA) software (e.g.,

clusterProfilerin R,GSEApyin Python).

Procedure:

- Differential Analysis: For both

D_completeandD_imputed, perform a consistent mock differential expression analysis (e.g., compare two predefined sample groups or against a random label to establish a null). - Pathway Enrichment: Run GSEA on the ranked gene lists from both differential analyses.

- Integrity Metric: For a set of gold-standard pathways (e.g., "Oxidative Phosphorylation," "Immune Response"), calculate the absolute change in normalized enrichment score (NES) or -log10(p-value) between the complete and imputed results.

- Analysis: A successful imputation should maintain the significance and direction of truly enriched pathways. Flag methods that cause large deviations (>2 fold change in NES) or generate spurious enrichment in implausible pathways.

Protocol 3: Evaluating Relationship Preservation with Procrustes Analysis

Objective: Quantify how well the global covariance structure of the data is maintained. Materials:

- Input Data:

D_complete,D_imputed. - Software: R (

veganpackage) or Python (scikit-bio).

Procedure:

- Dimensionality Reduction: Perform PCA on

D_completeandD_imputedseparately, retaining the topkprincipal components (PCs) that explain 80% of the variance. - Procrustes Transformation: Superimpose the PCA coordinates from

D_imputedonto the coordinates fromD_completeusing a Procrustes rotation (translation, rotation, scaling). - Calculation: Compute the Procrustes correlation (symmetric Procrustes statistic) and the residual sum of squares (RSS).

- Analysis: A high Procrustes correlation (>0.95) and low RSS indicate good preservation of the multivariate geometry. Visualize the Procrustes alignment to inspect specific sample-level distortions.

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Resources for Multi-omics Imputation Research

| Item / Resource | Function / Application | Example / Specification |

|---|---|---|

| Reference Complete Datasets | Gold-standard for benchmarking; used to spike-in missingness. | CPTAC proteogenomic cohorts, 1000 Genomes Project, GTEx v8 RNA-seq data. |

| Benchmarking Suites | Provide standardized pipelines and metrics for fair comparison. | OpenProblems for single-cell omics, missingpy for general machine learning. |

| Biological Knowledge Bases | Provide ground truth for assessing biological plausibility. | KEGG Pathway API, Reactome database, STRING protein-protein interaction network. |

| High-Performance Computing (HPC) Access | Enables testing of computationally intensive methods (e.g., deep learning, large matrix factorization). | Cloud platforms (AWS, GCP) or local cluster with GPU nodes. |

| Containerization Software | Ensures reproducibility of imputation experiments. | Docker or Singularity containers with versioned software stacks. |

Visualizations

Title: Three Pillars of Imputation Goal Definition

Title: Experimental Workflow for Multi-omics Imputation Evaluation

From Matrix Factorization to AI: A Guide to Modern Multi-omics Imputation Techniques

1. Introduction and Thesis Context Within a broader thesis on multi-omics data imputation methods, the integration and analysis of genomics, transcriptomics, proteomics, and metabolomics datasets are frequently hampered by missing values. Traditional statistical methods like k-Nearest Neighbors (k-NN), Singular Value Decomposition (SVD), and MissForest offer robust, assumption-flexible frameworks for imputing these gaps, thereby enabling downstream integrative analyses. This document provides detailed application notes and protocols for implementing these methods on multi-layer omics data.

2. Methodological Overview and Quantitative Comparison

Table 1: Comparison of Traditional Imputation Methods for Multi-Omics Data

| Method | Core Principle | Data Type Handling | Key Hyperparameters | Strengths for Multi-Omics | Primary Limitations |

|---|---|---|---|---|---|

| k-NN Imputation | Uses feature similarity to find k closest samples, imputes via mean/median of neighbors. | Continuous, scaled. | k (number of neighbors), distance metric. | Simple, intuitive, preserves local data structure. | Computationally heavy for large p, sensitive to k and noise, requires complete distance matrix. |

| SVD-Based (e.g., SVDimpute) | Low-rank matrix approximation. Captures global covariance structure. | Continuous, centered. | Rank (d) of approximation. | Captures global patterns, efficient for high-dimensional data. | Assumes data is missing at random, sensitive to initial guess, less effective for very sparse data. |

| MissForest | Iterative, model-based. Uses Random Forest to predict missing values for each variable. | Continuous & categorical mixed. | Number of trees, iteration stop criterion. | Non-parametric, handles mixed data types, robust to non-linearity. | Computationally intensive, risk of overfitting with small n, slower convergence. |

Table 2: Typical Performance Metrics (Hypothetical Benchmark on a 100-sample, 3-omics Dataset with 15% MAR Values)

| Method | NRMSE (Expression Data) | Overall F1 Score (Mutation Data) | Average Runtime (seconds) | Stability (SD over 10 runs) |

|---|---|---|---|---|

| k-NN (k=10) | 0.18 | 0.87 | 45 | Low (0.002) |

| SVD (rank=5) | 0.22 | 0.75 | 12 | Medium (0.015) |

| MissForest | 0.15 | 0.92 | 310 | High (0.008) |

NRMSE: Normalized Root Mean Square Error; MAR: Missing at Random; SD: Standard Deviation.

3. Experimental Protocols

Protocol 1: k-NN Imputation for Multi-Omics Data Preprocessing

Objective: Impute missing values in a concatenated or integrated multi-omics matrix.

Reagents & Input: Normalized, scaled matrices (e.g., RNA-seq TPM, protein abundance) merged by sample ID. Missing values encoded as NA.

Procedure:

- Data Concatenation: Merge m omics layers (samples x features) into a single matrix X of dimensions (n samples x p total features). Ensure feature-wise standardization (z-score) is applied post-concatenation.

- Distance Calculation: Compute a sample-wise distance matrix using a suitable metric (e.g., Euclidean, Pearson correlation distance).

- Neighbor Identification: For each sample i with missing values in feature j, identify the k nearest neighbors (samples) that have observed values for feature j.

- Imputation: Calculate the weighted or unweighted mean (for continuous) or mode (for categorical) of feature j from the k neighbors. Use this value for imputation.

- Iteration (Optional): Repeat steps 2-4 for a fixed number of iterations or until convergence (change in imputed values < threshold).

- De-concatenation: Split the imputed matrix X'_imputed back into distinct omics layers for downstream analysis.

Protocol 2: SVD-Based Imputation (SVDimpute) Objective: Leverage global correlation structure for imputation in a continuous omics matrix. Procedure:

- Initialization: Replace all missing values in matrix X with the global mean of their respective feature (column).

- Decomposition: Perform SVD on the current complete matrix: X = U Σ V^T.

- Rank Selection: Retain only the top d singular values/vectors, creating a low-rank approximation: X_d = U_d Σ_d V_d^T.

- Imputation: Use the values from X_d to replace only the previously missing entries in the original X.

- Iteration: Repeat steps 2-4, each time performing SVD on the latest imputed matrix, until convergence (e.g., difference between successive imputed matrices falls below 1e-4).

Protocol 3: MissForest Imputation for Mixed Multi-Omics Data Objective: Impute missing values in datasets containing both continuous and categorical omics features (e.g., mutations, clinical data). Procedure:

- Data Preparation: Assemble a mixed-type data frame. Specify the data type (continuous/categorical) for each variable.

- Initial Guess: Impute all missing values with initial guesses (mean for continuous, mode for categorical).

- Iterative Random Forest Fitting: a. Sort variables by amount of missing data (increasing order). b. For each variable y with missing data: i) Set y as the response. ii) Use all other variables as predictors to train a Random Forest model on observed data. iii) Predict missing values for y. c. Loop through all variables with missingness once. This constitutes one iteration.

- Stopping Criterion: Repeat Step 3 until either: a) The difference between newly imputed and previous values increases for the first time, or b) A predefined maximum number of iterations is reached.

- Output: Return the final, fully imputed data frame.

4. Visualization of Workflows

Title: k-NN imputation workflow for multi-omics data

Title: Iterative SVD imputation (SVDimpute) protocol

Title: MissForest iterative imputation logic

5. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Implementing Traditional Imputation Methods

| Tool/Reagent | Function/Description | Example in Protocol |

|---|---|---|

| Normalized Multi-Omics Matrices | Pre-processed, batch-corrected data matrices for each omics layer. The primary input. | RNA-seq count matrix (TPM), Methylation beta-value matrix. |

| Feature Scaling Algorithm | Standardizes features to mean=0, SD=1 (z-score) to ensure equal weight in distance calculations. | Essential pre-step for k-NN and SVD imputation. |

| Distance Metric Library | Functions to compute pairwise sample distances (Euclidean, Manhattan, Correlation). | Used in k-NN to find nearest neighbors. |

| Linear Algebra Library (SVD Solver) | Efficient computation of Singular Value Decomposition for large, sparse matrices. | Core component of SVDimpute (e.g., scipy.sparse.linalg.svds). |

| Random Forest Implementation | A library supporting regression and classification forests for mixed data types. | Core engine of MissForest (e.g., ranger in R, scikit-learn in Python). |

| Iteration Control Script | Custom code to manage the iterative process, check convergence, and log changes. | Used in all three methods, especially critical for SVDimpute and MissForest. |

| High-Performance Computing (HPC) Cluster | For computationally demanding tasks (MissForest on large datasets, k-NN on many samples). | Enables practical application of these methods to real multi-omics studies (n > 500). |

Within the broader thesis on Multi-omics data imputation methods, this document details two critical correlation-based approaches for handling missing values: Similarity-based (e.g., k-Nearest Neighbors) and Local Least Squares (LLS) imputation. These methods are foundational for addressing missingness in genomics, transcriptomics, proteomics, and metabolomics datasets, where leveraging inherent correlation structures between features (genes, proteins, metabolites) or samples is crucial for downstream integrative analysis.

Core Methodologies & Protocols

Protocol: Similarity-Based Imputation Using k-Nearest Neighbors (kNN)

Objective: Impute missing values in an omics data matrix by borrowing information from the most similar rows (genes) or columns (samples).

Materials & Input:

Data Matrix (D): Am x nmatrix withmfeatures (e.g., genes) andnsamples. Contains missing values (NA).Similarity Metric: Euclidean distance, Pearson correlation, or cosine similarity.k: Number of nearest neighbors to use.Imputation Function: Mean, weighted mean, or median of neighbors' values.

Experimental Procedure:

- Data Preparation: Normalize the data matrix (e.g., Z-score normalization per feature).

- Distance Calculation: For each feature

iwith a missing value in samplej:- Calculate the pairwise distance (or similarity) between feature

iand all other features using only the samples where both have observed values.

- Calculate the pairwise distance (or similarity) between feature

- Neighbor Selection: Identify the

kfeatures with the smallest distance (highest similarity) to featurei. - Value Imputation: Compute the imputed value for

D(i,j)using the values from thekneighbors for samplej. For weighted imputation, use similarity scores as weights.Imputed_Value = Σ (weight_neighbor * value_neighbor) / Σ weight_neighbor

- Iteration: Repeat steps 2-4 for all missing entries. The process may be iterated multiple times until convergence.

Protocol: Local Least Squares (LLS) Imputation

Objective: Impute a missing value by performing a least squares regression on the feature's k nearest neighbors within a localized subspace.

Materials & Input:

Data Matrix (D): Normalizedm x nmatrix.k: Number of nearest neighbors for the local subspace.λ: Regularization parameter (for regularized versions, e.g., LLSimpute).

Experimental Procedure:

- Target Vector Identification: For a feature

gwith a missing value in samples, denotex_gas the target row vector with the missing entry. - Neighbor Selection & Matrix Formation:

- Select

knearest neighbor features ofgbased on similarity in then-1complete samples (excluding samples). - Form a

k x (n-1)matrixAfrom these neighbors' values in the complete samples. - Form a

k x 1vectorbfrom these neighbors' values in the missing samples.

- Select

- Regression Coefficient Estimation: Solve the linear system

A * w ≈ bfor the coefficient vectorwusing least squares. To avoid overfitting, use regularized regression (e.g., ridge regression):w = (A^T A + λI)^{-1} A^T b

- Imputation:

- Form vector

a_gfrom the target featureg's values in then-1complete samples. - The imputed value for the missing entry is calculated as:

x_g(s) = a_g · w.

- Form vector

- Iteration: Apply iteratively across all missing values until the total change in the matrix falls below a set threshold.

Table 1: Performance Comparison of Imputation Methods on a Public Multi-omics Dataset (TCGA BRCA Subset)

| Method | Parameter (k) | NRMSE* | Runtime (sec) | Correlation Preservation (Avg. r) |

|---|---|---|---|---|

| kNN Impute | 10 | 0.154 | 12.7 | 0.891 |

| kNN Impute | 20 | 0.148 | 24.3 | 0.903 |

| LLS Impute | 10 | 0.132 | 18.5 | 0.921 |

| LLS Impute | 20 | 0.129 | 35.1 | 0.928 |

| Mean Impute | N/A | 0.201 | 0.5 | 0.832 |

| MissForest | N/A | 0.121 | 310.2 | 0.935 |

*Normalized Root Mean Square Error (NRMSE) evaluated on 10% artificially introduced missing values.

Table 2: Suitability for Omics Data Types

| Data Type | Recommended Method | Rationale |

|---|---|---|

| Transcriptomics (RNA-seq) | LLS or kNN | High feature correlation structure; LLS leverages local linearity. |

| Proteomics (LC-MS) | kNN (weighted) | Moderate correlation; weighted kNN handles noisy abundance data well. |

| Metabolomics (NMR/LC-MS) | kNN | Smaller feature sets; global similarity is often sufficient. |

| Methylation Arrays | LLS | Strong local correlation patterns across genomic loci. |

Visualizations

Diagram 1: Correlation-based imputation workflow.

Diagram 2: LLS imputation conceptual model.

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Implementation & Validation

| Item/Category | Function in Imputation Research | Example/Note |

|---|---|---|

| Programming Environment | Core platform for algorithm implementation and testing. | Python (scikit-learn, numpy, pandas) or R (impute, pcaMethods). |

| High-Performance Computing (HPC) Access | Enables iterative testing and large-scale multi-omics matrix imputation within feasible time. | Slurm cluster or cloud compute instances (AWS, GCP). |

| Benchmark Omics Datasets | Gold-standard complete datasets for introducing artificial missingness and validating imputation accuracy. | TCGA (cancer), GTEx (tissue), or simulated multi-omics datasets from Synapse. |

| Validation Metrics | Quantitative assessment of imputation quality against held-out or artificially masked data. | Normalized Root Mean Square Error (NRMSE), Pearson correlation of recovered values, Procrustes analysis. |

| Downstream Analysis Pipeline | To test the biological validity of imputation results in the context of the broader thesis. | Pre-established pipelines for differential expression, clustering, or pathway enrichment (e.g., DESeq2, WGCNA, GSEA). |

| Missingness Pattern Simulator | Tool to generate Missing Completely at Random (MCAR), Missing at Random (MAR), or structured missingness for robust method testing. | Custom scripts or R package Amelia. |

Within the broader thesis on multi-omics data imputation, the challenge of handling missing values is paramount. Datasets from genomics, transcriptomics, proteomics, and metabolomics are inherently sparse due to technical limitations, cost, and detection thresholds. Advanced matrix completion techniques, specifically Nuclear Norm Minimization (NNM) and Iterative Imputation Algorithms, provide a rigorous mathematical framework for recovering missing entries by leveraging the inherent low-rank structure of biological data. These methods assume that the complete data matrix has low rank, meaning that rows (e.g., samples) and columns (e.g., molecular features) are highly correlated, which is a valid assumption in omics studies due to underlying coordinated biological pathways and processes.

Theoretical Foundations

Nuclear Norm Minimization (NNM)

The nuclear norm (or trace norm) of a matrix (X), denoted (\|X\|_*), is the sum of its singular values. NNM aims to find the lowest-rank matrix that fits the observed entries, but as rank minimization is NP-hard, the convex surrogate—the nuclear norm—is minimized.

The standard formulation is: [ \min{X} \|X\|* \quad \text{subject to} \quad \mathcal{P}\Omega(X) = \mathcal{P}\Omega(M) ] where (M) is the incomplete data matrix, (\Omega) is the set of indices of observed entries, and (\mathcal{P}\Omega) is the projection operator onto (\Omega). In practice, a noisy version is solved using: [ \min{X} \|X\|* + \frac{\lambda}{2} \|\mathcal{P}\Omega(X) - \mathcal{P}\Omega(M)\|F^2 ]

Iterative Imputation Algorithms

These algorithms, such as Soft Impute and Iterative SVD, alternate between imputing missing values with current estimates and computing a low-rank approximation of the filled matrix. The process iterates until convergence.

Application Notes for Multi-Omics Data

Key Advantages:

- Data Integration: Effective for concatenated multi-omics matrices (samples × multi-omics features).

- No Requirement for Biological Priors: Purely data-driven, though biological knowledge can guide parameter tuning.

- Handling Non-Random Missingness: Certain algorithms are robust to Missing Not At Random (MNAR) patterns common in proteomics (missing low-abundance proteins).

Primary Challenges:

- Parameter Selection: The regularization parameter (\lambda) critically balances rank and fit.

- Computational Scale: Singular Value Decomposition (SVD) for large matrices (e.g., single-cell multi-omics) is intensive.

- Assumption of Low Rank: May not hold if datasets are highly heterogeneous.

Experimental Protocols for Benchmarking

Protocol 4.1: Simulated Missingness Experiment

Objective: Evaluate imputation accuracy under controlled conditions. Input: A complete, curated multi-omics matrix (e.g., from a reference cell line). Procedure:

- Matrix Normalization: Apply per-feature (column) standardization (z-score).

- Induce Missingness: Randomly mask entries (e.g., 10%, 20%, 30%) under Missing Completely at Random (MCAR) and MAR schemes.

- Imputation:

- NNM: Solve using accelerated proximal gradient descent (e.g.,

softImputeR package). - Iterative SVD: Use

IterativeSVDfromfancyimpute(Python) with rank (k) incrementally increased.

- NNM: Solve using accelerated proximal gradient descent (e.g.,

- Validation: Calculate Root Mean Square Error (RMSE) between imputed and original values for the masked entries.

- Biological Concordance: For a subset of known co-regulated gene-protein pairs, compute the correlation pre- and post-imputation.

Protocol 4.2: Real-World Downstream Analysis Validation

Objective: Assess impact on real biological analyses. Dataset: TCGA multi-omics data with inherent missingness. Procedure:

- Apply NNM and Iterative SVD imputation to the merged mRNA expression and DNA methylation matrix.

- Perform consensus clustering on the completed matrices.

- Compare resulting patient subtypes against known PAM50 breast cancer subtypes using Adjusted Rand Index (ARI).

- Perform differential expression analysis between imputation-derived clusters. Compare the number of significantly dysregulated pathways (via GSEA) against analysis on listwise-deleted data.

Performance Data & Benchmarking

Table 1: Comparative Performance on Simulated Multi-Omics Data (20% MCAR missingness)

| Method | Software Package | RMSE (Mean ± SD) | Runtime (seconds) | Spearman Correlation* |

|---|---|---|---|---|

| Nuclear Norm Minimization | softImpute (R) | 0.48 ± 0.03 | 125.6 | 0.92 |

| Iterative SVD (k=50) | fancyimpute (Py) | 0.51 ± 0.04 | 89.2 | 0.89 |

| k-NN Imputation | scikit-learn (Py) | 0.67 ± 0.05 | 15.4 | 0.75 |

| Mean Imputation | (Baseline) | 0.95 ± 0.01 | <1 | 0.61 |

*Correlation of feature-feature relationships in original vs. imputed data.

Table 2: Impact on TCGA BRCA Subtype Classification (ARI)

| Analysis Method | No Imputation (Listwise) | NNM Imputation | Iterative SVD Imputation |

|---|---|---|---|

| Consensus Clustering | 0.62 | 0.81 | 0.78 |

| Differential Pathways Found | 112 | 154 | 148 |

Visualization of Workflows and Relationships

Title: Advanced Matrix Completion Workflow for Multi-omics Data

Title: Iterative SVD Imputation Algorithm Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Matrix Completion in Multi-Omics

| Item (Software/Package) | Primary Function | Application Note |

|---|---|---|

| softImpute (R) | Solves NNM via convex optimization. | Core package for NNM. Use lambda.grid for parameter tuning via cross-validation. |

| fancyimpute (Python) | Implements IterativeSVD, Matrix Factorization. | Good for large-scale data. Requires initial rank k estimate via scree plot. |

| Spectra (C++/R) | Fast SVD for large sparse matrices. | Essential for scaling to single-cell multi-omics (millions of cells). |

| MissMDA (R) | PCA-based imputation with regularization. | Useful for comparison, handles mixed data types. |

| CVXR (R/Python) | Domain-specific language for convex optimization. | Allows customization of complex NNM constraints (e.g., non-negativity). |

| IntegrateMultiomicsData (Custom Script) | Pre-processing pipeline for matrix alignment. | Merges disparate omics layers into a single sample×feature matrix with consistent missingness patterns. |

Application Notes

Multi-omics Data Landscape and Imputation Challenges

Multi-omics integration presents a high-dimensional, sparse, and heterogeneous data challenge. Missing values arise from technological limitations, cost constraints, and sample quality. Deep learning methods offer nonlinear, high-capacity models to learn latent representations and impute missing data types across genomics, transcriptomics, proteomics, and metabolomics.

Quantitative Comparison of Deep Learning Imputation Methods

The following table summarizes the performance metrics of key deep learning architectures on benchmark multi-omics imputation tasks, based on recent literature.

Table 1: Performance Comparison of Deep Learning Models for Multi-omics Imputation

| Model Class | Typical Architecture | Avg. Imputation Accuracy (NRMSE↓) | Key Strength | Major Limitation | Best-suited Omics Data |

|---|---|---|---|---|---|

| Autoencoders (Denoising) | Encoder-Bottleneck-Decoder | 0.12 - 0.18 | Learns robust latent representations; handles non-linear relationships. | May impute towards average if corruption is high. | Bulk RNA-seq, Methylation arrays |

| Variational Autoencoders (VAE) | Encoder-Latent Distribution-Decoder | 0.10 - 0.16 | Generates probabilistic imputations; good for uncertainty estimation. | Can produce over-regularized, blurry imputations. | scRNA-seq, Proteomics |

| Generative Adversarial Networks (GANs) | Generator + Discriminator | 0.08 - 0.14 | Can generate highly realistic, sharp data points. | Training instability; mode collapse risk. | Metabolomics, Chip-seq peaks |

| Graph Neural Networks (GNNs) | Graph Convolutional Networks | 0.07 - 0.13 | Leverages biological network priors (e.g., PPI, metabolic pathways). | Dependent on quality and relevance of input graph. | Any omics with prior network knowledge |

| Multi-modal AE/GAN | Multiple encoders/decoders | 0.06 - 0.11 | Directly models cross-omics correlations for cross-type imputation. | Complex architecture; large sample size required. | Paired multi-omics (e.g., RNA + Protein) |

NRMSE: Normalized Root Mean Square Error (lower is better). Ranges are aggregated from recent studies on datasets like TCGA, GTEx, and CellBench.

Experimental Protocols

Protocol: Cross-omics Imputation using a Multi-modal Variational Autoencoder (mmVAE)

Objective: To impute missing proteomics data for samples where only transcriptomics data is available.

Materials & Reagent Solutions:

- Paired RNA-Seq and Proteomics Dataset: (e.g., CP-TAC cohort from TCGA). Provides ground truth for training.

- Pre-processing Pipeline: Tools for normalization (e.g.,

scanpyfor RNA,limmafor protein), log-transformation, and z-scoring. - mmVAE Software Framework:

scvi-tools(Python library) or customPyTorchimplementation. - High-Performance Computing (HPC) Environment: GPU (NVIDIA V100 or A100) with ≥16GB memory.

- Validation Dataset: A held-out subset of the paired data where protein values are artificially masked.

Procedure:

- Data Preparation: a. Download and load paired RNA-seq (log(TPM+1)) and mass-spectrometry proteomics (log-intensity) matrices. b. Align samples by common identifiers. Remove proteins with >50% missing values. For remaining missing protein values, apply a minimal initial imputation (e.g., sample-wise minimum). c. Split data into Training (70%), Validation (15%), and Test (15%) sets. Ensure no patient overlap. d. In the Test set, randomly mask 30% of the protein expression values to serve as the ground truth for imputation accuracy calculation.

Model Architecture & Training: a. Implement an mmVAE with two separate encoders (for RNA and Protein) mapping to a shared latent space

z, and two separate decoders. b. Use a fully connected neural network for each encoder/decoder (2 hidden layers, 128 nodes each, ReLU activation). c. The loss function is the sum of: (i) Reconstruction losses (Mean Squared Error) for both modalities, (ii) Kullback–Leibler divergence between the latent distribution and a standard normal prior. d. Train using the Adam optimizer (learning rate=1e-4, batch size=64) on the Training set. Monitor the Validation set loss for early stopping.Imputation & Validation: a. For a test sample with missing protein data, pass the available RNA data through the RNA encoder to obtain a latent vector

z. b. Passzthrough the protein decoder to generate the imputed protein profile. c. Compare the imputed protein values against the held-out true values using NRMSE and Pearson correlation coefficient.

Protocol: Single-cell RNA-seq Imputation using a Graph Convolutional Autoencoder

Objective: To recover missing gene expression values (dropouts) in single-cell RNA-seq data by leveraging gene-gene interaction networks.

Materials & Reagent Solutions:

- scRNA-seq Count Matrix: Processed using

CellRangeroralevin. Filtered for cells and genes. - Gene Interaction Network: A prior knowledge graph (e.g., STRING PPI network, Gene Co-expression network). Formatted as an adjacency matrix.

- GNN Framework:

PyTorch GeometricorDGLlibrary. - Imputation Metrics:

scikit-learnfor calculating mean absolute error and Spearman rank correlation on highly variable genes.

Procedure:

- Graph Construction & Data Pre-processing:

a. Select the top 5,000 highly variable genes from the scRNA-seq matrix.

b. Fetch the corresponding sub-network from the STRING database for these genes. Create a symmetric binary adjacency matrix

AwhereA_ij = 1if the interaction confidence score > 700. c. Normalize the scRNA-seq matrix using library size normalization and log1p transformation. Input features are per-gene expression vectors.

Model Training: a. Build a Graph Autoencoder (GAE): The encoder consists of two Graph Convolutional Network (GCN) layers. The decoder is an inner product decoder that reconstructs the gene expression matrix from the node embeddings. b. Corrupt the input training data by randomly setting 20% of non-zero values to zero, simulating dropout. c. Train the model to minimize the reconstruction error (MSE) between the original (uncorrupted) matrix and the reconstructed matrix. Use Adam optimizer (lr=0.01).

Imputation Execution: a. Pass the full, real (but sparse) scRNA-seq matrix through the trained GAE. b. The output layer provides the imputed, denoised expression matrix. c. Validate by comparing the imputed expression for a set of housekeeping genes against their expected low variance profile. Use downstream analysis (e.g., clustering, trajectory inference) to assess biological consistency.

Diagrams

Multi-modal VAE for Cross-omics Imputation

Graph Autoencoder Workflow for scRNA-seq

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Deep Learning Omics Imputation

| Item | Supplier/Example | Function in Protocol |

|---|---|---|

| Curated Multi-omics Datasets | TCGA, CPTAC, GTEx, CellBench, NeurIPS Multi-omics Benchmark | Provide standardized, paired omics data for model training, validation, and benchmarking. Essential for reproducibility. |

| Single-cell Analysis Suite | 10x Genomics Cell Ranger, Seurat, Scanpy | Pre-processing raw sequencing data into count matrices, performing QC, and basic normalization before imputation. |

| Biological Network Databases | STRING, KEGG, Reactome, HumanBase | Sources of prior knowledge graphs (protein-protein, metabolic, co-expression) for Graph Neural Network-based imputation methods. |

| Deep Learning Frameworks | PyTorch (PyTorch Geometric), TensorFlow, JAX | Core libraries for building and training custom autoencoder, GAN, and GNN architectures. |

| Specialized Omics DL Libraries | scvi-tools, DeepGraphGen, OmicsGAN | Offer pre-implemented, domain-optimized models that accelerate development and ensure best practices. |

| High-Performance Computing | NVIDIA GPUs (A100, H100), Google Colab Pro, AWS EC2 (P4 instances) | Provide the necessary computational power for training large models on high-dimensional omics data in a feasible time. |

| Imputation Metrics Package | scikit-learn, SciPy, custom scripts for NRMSE, PCC, Kendall's Tau | Quantitatively assess the accuracy and robustness of imputation results against held-out ground truth. |

| Visualization Tools | TensorBoard, wandb, Scanpy plotting, Gephi | Track model training in real-time, visualize latent spaces, and interpret the biological impact of imputation on clusters/ trajectories. |

Application Notes

Cross-omics imputation addresses the critical challenge of missing data in multi-omics studies by leveraging the statistical relationships and biological coherence between different molecular layers. The core premise is that data from one complete or partially complete omics layer (e.g., transcriptomics) can inform and predict missing values in another, more sparse omics layer (e.g., proteomics). This is distinct from within-omics imputation, which relies only on patterns within a single data type. The utility of these methods is paramount in scenarios where certain assays are costly, low-throughput, or prone to technical dropout, such as in single-cell proteomics or spatial metabolomics.

Table 1: Comparison of Selected Cross-omics Imputation Methods & Performance

| Method Name | Core Algorithm | Primary Source Omics | Target Omics (Imputed) | Reported Performance (NRMSE/R²/Pearson r) | Key Application Context |

|---|---|---|---|---|---|

| MOG (Multi-Omics Gaussian) | Gaussian Process Latent Variable Models | Transcriptomics | Proteomics | NRMSE: 0.15-0.22 on benchmark datasets | Bulk tissue cohorts (e.g., TCGA, CPTAC) |

| netNMF-sc | Joint Non-negative Matrix Factorization | scRNA-seq | scATAC-seq | Cell-type clustering accuracy >90% | Single-cell multi-omics with paired nuclei |

| MIDAS (Multi-omics Imputation via Deep AutoencoderS) | Deep Autoencoder with Adversarial Training | Metabolomics / Transcriptomics | Metabolomics | Pearson r: 0.62-0.78 on missing metabolites | Large-scale population cohorts (plasma/serum) |

| Protein Expression Prediction (PEP) | Elastic Net / XGBoost Regression | Transcriptomics (RNA-seq) | Proteomics (RPPA/LC-MS) | R²: 0.3-0.6 across cancer types | Translational oncology, drug target validation |

| GRN-based Imputation | Graph Neural Networks on Gene Regulatory Networks | Chromatin Accessibility (ATAC-seq) | Gene Expression | Improves correlation with held-out data by ~20% | Developmental biology, cellular differentiation |

Protocol 1: Cross-omics Imputation for Proteomics from RNA-seq Data Using PEP Framework

Objective: To impute protein abundance levels for a target set of proteins using matched RNA-seq data as the source.

Materials & Reagents:

- Input Data: Normalized RNA-seq read counts (FPKM or TPM) and matched protein abundance data (e.g., from LC-MS/MS or RPPA) for a training set of samples.

- Software: R (v4.2+) or Python (v3.9+).

- Key R Packages:

glmnet,caret,xgboost. - Key Python Libraries:

scikit-learn,xgboost,pandas,numpy.

Procedure:

- Data Preprocessing: Log2-transform both RNA-seq and proteomics data. For the proteomics data, handle missing values either by removing proteins with >50% missingness or using simple within-omics imputation (e.g., k-nearest neighbors) for proteins with limited missing data.

- Feature Selection: For each target protein to be imputed, identify the top n (e.g., 100) most correlated mRNA transcripts from the training data based on Pearson correlation. Optionally, incorporate prior knowledge from protein-protein interaction or pathway databases to refine features.

- Model Training: Split the training dataset into 70% training and 30% validation sets. For each protein, train a predictive model (e.g., Elastic Net regression or XGBoost) using the selected mRNA features as predictors and the measured protein abundance as the response variable. Perform 10-fold cross-validation on the training set to tune hyperparameters (e.g., alpha/lambda for Elastic Net, tree depth for XGBoost).

- Model Validation: Apply the trained model to the held-out validation set. Calculate performance metrics (R², Pearson correlation) between imputed and actual protein abundance. Retain models that meet a pre-defined threshold (e.g., R² > 0.2).

- Imputation Phase: For new samples with only RNA-seq data available, apply the validated, trained models to the preprocessed mRNA expression data to generate imputed protein abundance values.

- Downstream Analysis: Use the complete, imputed proteomics matrix for integrated analysis, such as clustering, classification, or pathway enrichment.

Protocol 2: Single-cell Multi-omics Imputation using netNMF-sc

Objective: To impute missing single-cell ATAC-seq peaks leveraging paired scRNA-seq data from a subset of cells.

Materials & Reagents:

- Input Data: scRNA-seq count matrix (cells x genes) and a sparse scATAC-seq binary matrix (cells x peaks) from a partially overlapping set of cells.

- Software: Python (v3.9+).

- Key Python Libraries:

scikit-learn,scanpy,episcanpy, and the author's implementation ofnetNMF-sc. - Computational Resources: High-performance computing node with ≥ 32 GB RAM recommended.

Procedure:

- Data Alignment & Preprocessing: Filter cells and features (genes/peaks) for quality. Normalize scRNA-seq data using SCTransform or total-count normalization followed by log1p transformation. Binarize the scATAC-seq matrix. Identify the subset of cells measured by both modalities.

- Joint Matrix Construction: Create a aligned multi-omics matrix for the paired cells by row-concatenating the processed RNA and ATAC feature matrices.

- Running netNMF-sc: Apply the netNMF-sc algorithm, which performs joint non-negative matrix factorization on the concatenated matrix. This learns a shared low-dimensional representation (latent factors) for each cell that captures co-variation across both omics layers.

- Imputation & Reconstruction: Use the learned cell latent factors and the ATAC-specific factor loadings to reconstruct a complete scATAC-seq matrix for all cells, including those originally missing ATAC data.

- Validation (if hold-out data exists): For paired cells, mask a portion of ATAC peaks, run imputation, and compare imputed values to the held-out true values using area under the ROC curve (AUC) for peak accessibility.

- Downstream Analysis: Use the imputed, denoised ATAC matrix for chromatin accessibility analysis, peak calling, and integration with gene expression for regulatory network inference.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Resource | Function in Cross-omics Imputation |

|---|---|

| CPTAC Assay Portal (Proteomic Data) | Provides standardized, high-quality tumor proteomics datasets (LC-MS/MS) with matched genomics/transcriptomics for model training and benchmarking. |

| 10x Genomics Multiome ATAC + Gene Exp. | Commercial kit generating paired scATAC-seq and scRNA-seq from the same single nucleus, providing the gold-standard ground truth data for developing and validating single-cell cross-omics methods. |

| Cell Signaling Technology (CST) RPPA | Reverse Phase Protein Array allows targeted, cost-effective protein abundance measurement for 100s of samples, useful for generating validation data for transcriptomics-to-proteomics imputation models. |

| Metabolon HD4 Metabolomics Platform | Broad-coverage metabolomics profiling service, often used in cohort studies. The structured, curated metabolite data serves as a key source or target for metabolomics-integrated imputation. |

| STRING Database / KEGG Pathways | Provide prior biological knowledge on protein-protein interactions and pathway memberships. Used to constrain or weight feature selection in model training to improve biological plausibility. |

| Google Colab / AWS Sagemaker | Cloud computing platforms with GPU support essential for running and developing deep learning-based imputation methods (e.g., MIDAS, GNN models) without local hardware constraints. |

Visualizations

Title: General Cross-omics Imputation Workflow

Title: Biological Basis for RNA-to-Protein Imputation

The integration of multi-omics (genomics, transcriptomics, proteomics, metabolomics) is pivotal for modern precision drug development. A significant bottleneck is missing data across omics layers due to technical variability. This application note, framed within broader research on multi-omics data imputation methods, demonstrates how robust imputation enables reliable biomarker discovery and patient stratification, using recent non-small cell lung cancer (NSCLC) and inflammatory bowel disease (IBD) case studies.

Case Study 1: NSCLC – Overcoming Proteomic Missingness for Predictive Biomarkers

Background: A 2023 study sought predictive biomarkers for immune checkpoint inhibitor (ICI) response in NSCLC using plasma proteomics. High missingness in low-abundance inflammatory proteins threatened analytical validity.

Experimental Protocol: Multi-omics Profiling with Imputation for NSCLC

- Sample Cohort: Pre-treatment plasma from 120 advanced NSCLC patients (discovery n=80, validation n=40) initiating anti-PD-1 therapy.

- Proteomic Profiling: Plasma analyzed using the Olink Target 96 Inflammation panel. Data generated as Normalized Protein eXpression (NPX) values.

- Genomic Correlates: Tumor tissue from same patients subjected to whole-exome sequencing (WES) for tumor mutation burden (TMB) and somatic variant calling.

- Data Imputation: Proteins with >20% missingness across cohort excluded. Remaining missing values imputed using the Stochastic Gradient Descent-based Matrix Factorization (SVD-based) method, selected for its efficacy with high-dimensional, non-normally distributed proteomic data.

- Analysis:

- Imputed proteomic data analyzed via partial least squares discriminant analysis (PLS-DA) to identify protein signatures separating responders (RECIST v1.1: CR/PR) from non-responders (SD/PD).

- Signature proteins correlated with genomic features (e.g., TMB) using Spearman's rank.

- A logistic regression classifier combining proteomic signature and TMB built on discovery set and tested on validation set.

Key Results (Summarized):

Table 1: Performance of Biomarker Signatures in NSCLC Validation Cohort (n=40)

| Biomarker Model | AUC | Sensitivity (%) | Specificity (%) | PPV (%) |

|---|---|---|---|---|

| Proteomic Signature (Post-Imputation) Alone | 0.78 | 70 | 75 | 73 |

| TMB Alone (≥10 mut/Mb) | 0.65 | 40 | 90 | 80 |

| Combined Model (Proteomic + TMB) | 0.87 | 80 | 85 | 83 |

Conclusion: Imputation recovered critical signal from proteins like CXCL9 and LAMP3, enabling a robust combined biomarker model that outperformed single-omics predictors.

Case Study 2: IBD – Multi-omics Integration for Patient Subtyping

Background: A 2024 initiative aimed to stratify Crohn's disease patients beyond clinical phenotypes by integrating gut microbiome metagenomics and host serum metabolomics, where sample mismatch and batch effects created missing data patterns.

Experimental Protocol: Integrated Omics Workflow for IBD Stratification

- Sample Collection: Stool and matched serum from 200 Crohn's disease patients at active flare. Clinical remission status assessed at 52 weeks.

- Multi-omics Profiling:

- Metagenomics: Stool DNA shotgun sequenced. Species-level abundance profiles generated using MetaPhlAn4.

- Metabolomics: Serum analyzed via untargeted LC-MS. Features aligned and annotated.

- Data Integration & Imputation: The Multivariate Imputation by Chained Equations (MICE) algorithm was applied to handle missing metabolomic features and sporadic missing microbial abundances. MICE preserved inter-omics relationships crucial for integration.

- Patient Stratification: Imputed datasets integrated using MOFA+ (Multi-Omics Factor Analysis). Factors derived were clustered (k-means) to identify patient subgroups.

- Validation: Subgroup clinical outcomes (remission rates) compared. Differential species and metabolites defining subgroups identified.

Key Results (Summarized):

Table 2: Characteristics and Outcomes of MOFA-Defined Crohn's Disease Subgroups

| Subgroup | Patients (n) | Dominant Omics Drivers | 52-Week Steroid-Free Remission Rate | Key Imputed Features Critical to Definition |

|---|---|---|---|---|

| Group 1: "Inflammatory" | 85 | High host inflammatory lipids | 25% | Arachidonic acid metabolites (prostaglandins) |

| Group 2: "Dysbiotic" | 65 | Depleted Faecalibacterium prausnitzii, Roseburia spp. | 40% | Microbial butyrate synthesis pathway intermediates |

| Group 3: "Balanced" | 50 | Balanced metabolome & microbiome | 68% | Secondary bile acids, microbial diversity index |

Conclusion: MICE-based imputation allowed for robust MOFA integration, revealing three biologically distinct subtypes with significant prognostic differences, guiding potential targeted trial recruitment.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Platforms for Multi-omics Biomarker Studies

| Item | Function & Application in Featured Studies |

|---|---|

| Olink Proseek Multiplex Assays | Proximity extension assay (PEA)-based technology for high-specificity, high-sensitivity multiplex protein quantification in plasma/serum (used in NSCLC study). |

| Nextera DNA Flex Library Prep Kit | For preparing high-quality sequencing libraries from low-input genomic DNA, including from stool microbiome samples (used in IBD study). |

| Pierce Top 12 Abundant Protein Depletion Spin Columns | Depletes high-abundance plasma proteins (e.g., albumin, IgG) to enhance detection of low-abundance biomarker candidates. |

| QIAamp Fast DNA Stool Mini Kit | Efficient, standardized isolation of microbial and host DNA from complex stool samples for metagenomic sequencing. |

| Seahorse XFp Analyzer & Kits | For functional metabolic phenotyping (e.g., OCR, ECAR) of patient-derived cells, validating biomarker-identified pathways. |

| Cytiva ÄKTA go Protein Purification System | Purification of recombinant proteins for assay standards or functional validation of biomarker candidates. |

Visualization: Workflows & Pathways

Diagram 1: Multi-omics Imputation & Integration Workflow

Diagram 2: NSCLC Biomarker Discovery Pathway

Navigating Pitfalls: Best Practices for Optimizing Imputation Performance in Your Research

Within multi-omics data imputation research, selecting an appropriate method is contingent upon a rigorous diagnostic analysis of the missing data pattern. This protocol outlines a standardized pre-imputation workflow to characterize the nature of missingness, a critical step for valid downstream analysis in pharmaceutical and basic research.

Table 1: Missing Data Mechanisms: Definitions and Implications for Imputation

| Mechanism | Acronym | Definition | Key Testable Characteristic | Recommended Imputation Approach |

|---|---|---|---|---|

| Missing Completely at Random | MCAR | The probability of missingness is unrelated to any data, observed or missing. | No systematic difference between complete and incomplete cases. | Any imputation method (e.g., mean, k-NN, SVD) may be unbiased. |

| Missing at Random | MAR | The probability of missingness depends only on observed data. | Missingness can be predicted from other complete variables. | Model-based methods (MICE, MissForest, matrix factorization). |