Missing in Multi-Omics: A Comprehensive Guide to Handling Missing Values in Genomic, Transcriptomic, and Proteomic Data



Abstract

Missing data is an omnipresent challenge in multi-omics studies, threatening the validity of integrative analysis and downstream biological discovery. This article provides a targeted guide for researchers, scientists, and drug development professionals. It first establishes a foundational understanding of missingness mechanisms (MCAR, MAR, MNAR) across omics layers and their biological and technical causes. It then details a modern toolkit of imputation methods, from traditional k-NN to advanced deep learning models, with practical application workflows. The guide addresses critical troubleshooting and optimization strategies, including parameter tuning and method selection based on data structure. Finally, it offers a robust framework for validating imputation performance using biological and statistical metrics and comparing leading tools. The goal is to empower researchers to make informed, defensible decisions in their multi-omics pipelines, leading to more robust and reproducible findings in translational research.

Understanding the Void: Types, Causes, and Diagnostics of Missingness in Multi-Omics Data

Technical Support Center: Troubleshooting Missing Data

Troubleshooting Guides

Guide 1: Diagnosing the Source of Missingness

- Issue: Inconsistent missing data patterns across omics layers (e.g., proteomics has more missing values than transcriptomics).

- Root Cause Analysis: This is often due to the technological limits of detection (e.g., low-abundance proteins) or sample processing failures.

- Step-by-Step Solution:

- Audit: Create a missingness heatmap per sample and per feature for each omics dataset.

- Correlate: Check if missingness in one layer (e.g., metabolomics) correlates with low signal in another related layer (e.g., transcriptomics of metabolic enzymes).

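- Classify: Use Little's MCAR test to check whether the data are consistent with Missing Completely At Random (MCAR). The test can reject MCAR but cannot separate Missing At Random (MAR) from Missing Not At Random (MNAR); use intensity-dependence plots and knowledge of the assay to make that distinction.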

- Action: Choose an imputation or analysis method appropriate for the classified missingness mechanism.

Guide 2: Handling Batch Effects Coupled with Missing Data

- Issue: Missing data is not random but concentrated in specific experimental batches.

- Root Cause Analysis: Batch-specific technical artifacts (different reagents, operators, instrument calibrations) cause systematic dropouts.

- Step-by-Step Solution:

- Visualize: Perform PCA on each dataset, coloring points by batch. Look for batch clustering coinciding with high missingness.

- Pre-process: Apply batch correction methods (e.g., ComBat, limma's removeBatchEffect) before imputation to minimize bias, checking that the chosen implementation accepts missing values.

- Impute Post-Correction: Use a model-based imputation method (e.g., missForest, SVD-based) that can incorporate batch as a covariate.

- Validate: Check that the distribution of imputed values is consistent across batches post-correction.

Frequently Asked Questions (FAQs)

Q1: I have missing values in my proteomics data. Should I impute them or just remove those proteins/peptides?

A: Removing the affected proteins/peptides is only advisable if missingness is minimal (<5%) and verified to be MCAR. For typical proteomics data, where missingness is largely MNAR (values below the detection limit), imputation is usually necessary. Use left-censored imputation methods such as MinDet, or model-based methods such as QRILC, which account for the non-random, left-shifted nature of the missing values.

Q2: How do I choose an imputation method for my integrated multi-omics dataset? A: The choice depends on the mechanism and scale of missingness. See the table below for a structured comparison.

Q3: Can I use machine learning integration tools (like MOFA+) with missing data? A: Yes. A key advantage of tools like MOFA+ is that they handle missing values natively: the probabilistic factor model is fit to the observed entries only, so missing values simply do not contribute to the likelihood. No prior imputation is strictly necessary, though some pre-imputation for heavily missing features can improve stability.

Data Presentation: Imputation Method Comparison

Table 1: Common Imputation Methods for Multi-Omics Data

| Method | Principle | Best For Missingness Type | Key Advantage | Key Limitation |
|---|---|---|---|---|
| k-Nearest Neighbors (kNN) | Uses values from the 'k' most similar samples. | MCAR, MAR | Simple, preserves local data structure. | Computationally heavy on large datasets, poor for MNAR. |
| missForest | Iterative imputation using random forests. | MAR, mild MNAR | Non-parametric, handles complex relations. | Very computationally intensive. |
| Singular Value Decomposition (SVD) | Low-rank matrix approximation. | MCAR, MAR | Captures global data structure. | Assumes linearity, poor for high MNAR. |
| MinDet / MinProb | Replaces missing values with a low quantile of each sample's observed values (MinDet) or with random draws from a Gaussian centred on that quantile (MinProb). | MNAR (e.g., proteomics) | Specific for detection-limit MNAR. | Simplistic, may underestimate variance. |
| Bayesian PMF | Probabilistic matrix factorization. | MCAR, MAR | Provides uncertainty estimates. | Complex implementation and tuning. |

Experimental Protocol: Benchmarking Imputation Performance

Title: Protocol for Systematic Evaluation of Imputation Methods in Multi-Omics Integration.

Objective: To empirically determine the optimal imputation strategy for a given multi-omics dataset.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Preparation: Start with a complete, high-quality multi-omics dataset (D_original). Ensure all matrices (genomics, transcriptomics, proteomics) are aligned by sample ID.

- Induce Missingness: Artificially introduce missing values (e.g., 10%, 20%, 30%) into D_original under different mechanisms (MCAR, MAR, MNAR) to create incomplete datasets (D_missing). For MNAR, simulate a detection limit threshold.

- Apply Imputation: Apply each candidate imputation method (from Table 1) to D_missing, generating imputed datasets (D_imputed_A, D_imputed_B, ...).

- Downstream Integration: Perform a standard multi-omics integration analysis (e.g., using DIABLO or an integrated clustering pipeline) on both D_original and each D_imputed.

- Performance Metrics:

- Imputation Accuracy: Calculate Root Mean Square Error (RMSE) between the imputed values and the true values from D_original (only for artificially removed values).

- Biological Preservation: Compare the results of the downstream integration (e.g., cluster concordance, correlation of latent components) between each D_imputed and D_original using metrics such as the Adjusted Rand Index (ARI) or a similar measure.

- Statistical Comparison: Rank methods based on RMSE and ARI for each missingness scenario to select the best-performing one for your real, unknown data.
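The benchmarking loop can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions: a simulated log2 matrix stands in for D_original, only two deliberately simple imputers are compared (a feature-mean fill and a MinDet-style low-quantile fill written here for illustration, not the imputeLCMD implementations), and RMSE is computed only over the artificially removed entries. All helper names are hypothetical.

```python
import numpy as np

def mask_mcar(X, frac, rng):
    """Hide a random fraction of entries regardless of their values (MCAR)."""
    mask = rng.random(X.shape) < frac
    X_missing = X.copy()
    X_missing[mask] = np.nan
    return X_missing, mask

def mask_mnar(X, frac):
    """Hide the lowest `frac` of all values, mimicking a global detection limit (MNAR)."""
    mask = X < np.quantile(X, frac)
    X_missing = X.copy()
    X_missing[mask] = np.nan
    return X_missing, mask

def impute_feature_mean(X_missing):
    """Fill each feature (column) with the mean of its observed values."""
    return np.where(np.isnan(X_missing), np.nanmean(X_missing, axis=0), X_missing)

def impute_low_quantile(X_missing, q=0.01):
    """MinDet-style fill (illustrative): each sample (row) gets the q-th quantile of its observed values."""
    low = np.nanquantile(X_missing, q, axis=1, keepdims=True)
    return np.where(np.isnan(X_missing), low, X_missing)

def rmse_on_mask(X_true, X_imputed, mask):
    """RMSE restricted to the artificially removed entries."""
    return float(np.sqrt(np.mean((X_true[mask] - X_imputed[mask]) ** 2)))

rng = np.random.default_rng(42)
D_original = rng.normal(loc=20.0, scale=2.0, size=(50, 200))  # samples x features, log2 scale

scenarios = {"MCAR": mask_mcar(D_original, 0.2, rng), "MNAR": mask_mnar(D_original, 0.2)}
methods = {"feature mean": impute_feature_mean, "low quantile": impute_low_quantile}
results = {}
for scenario, (D_missing, mask) in scenarios.items():
    for name, impute in methods.items():
        results[(scenario, name)] = rmse_on_mask(D_original, impute(D_missing), mask)
        print(f"{scenario:5s} {name:13s} RMSE = {results[(scenario, name)]:.2f}")
```

Under MCAR the mean fill should win, while under the simulated detection limit the low-quantile fill should win, which is why the protocol ranks methods separately for each missingness scenario.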

Visualizations

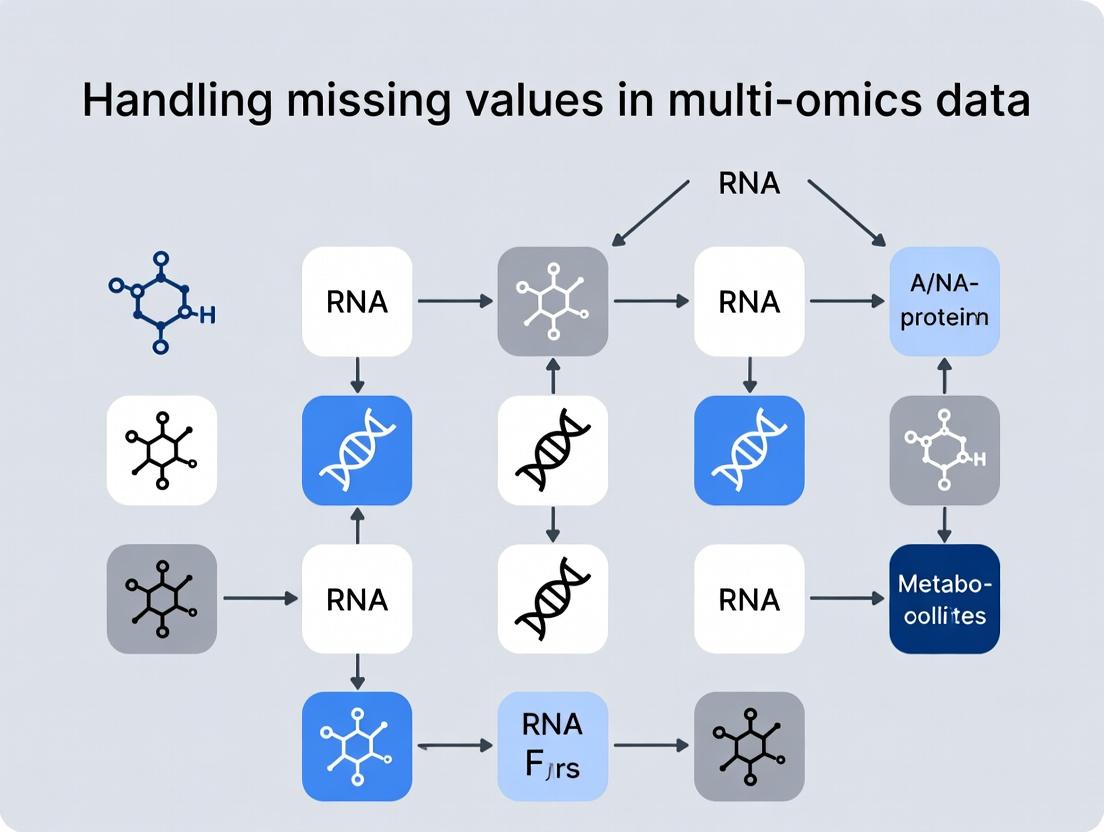

Diagram 1: Multi-Omics Integration Workflow with Missing Data Handling

Diagram 2: Missing Data Mechanisms (MCAR, MAR, MNAR)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Missing Data Experiments

| Item / Reagent | Function in Context |
|---|---|
| R Environment (v4.3+) with Bioconductor | Primary platform for statistical analysis and implementation of most imputation methods (e.g., impute, pcaMethods, missMDA packages). |
| Python (v3.9+) with scikit-learn & SciPy | Alternative platform for machine learning-based imputation (e.g., IterativeImputer, custom SVD) and deep learning approaches. |
| High-Quality Reference Multi-Omics Dataset (e.g., a fully observed cell line dataset from a public repository) | Serves as the "ground truth" for benchmarking imputation methods by artificially inducing missingness. |
| MOFA+ (R/Python) | A multi-omics integration tool with built-in handling of missing values, useful as a benchmark for downstream analysis preservation. |
| Batch Correction Software (e.g., ComBat in the sva R package) | Critical for pre-processing when missingness is confounded with batch effects; applied prior to imputation. |
| High-Performance Computing (HPC) Cluster Access | Many imputation methods (missForest, Bayesian PMF) are computationally intensive and require parallel processing for realistic datasets. |

Troubleshooting Guides & FAQs

FAQ 1: My LC-MS metabolomics data has many missing values. How do I determine if the mechanism is MCAR, MAR, or MNAR? Answer: The mechanism is often assay-specific. For LC-MS, missing values are frequently MNAR due to metabolite concentrations falling below the instrument's limit of detection (LOD). To diagnose:

- Perform a Missing Value Pattern Analysis: Create a table of missingness per sample group or experimental condition.

- Conduct a Statistical Test: Apply Little's MCAR test to the incomplete data matrix (in practice, to a subset of features, since the test needs more samples than features). A significant p-value suggests the data are not MCAR.

- Analyze by Abundance: Plot the frequency of missing values against signal intensity (log-scale). A strong inverse correlation is indicative of MNAR.
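The abundance check can be quantified with a rank correlation, which avoids assuming a linear relationship. A minimal sketch, assuming a samples x features matrix of log intensities with NaN for missing values; the function name and the simulated detection limit are illustrative and not part of any package.

```python
import numpy as np
from scipy.stats import spearmanr

def intensity_dependence(X):
    """Spearman correlation between per-feature missing rate and mean observed log-intensity.

    X: samples x features on a log scale, with np.nan marking missing values.
    Features with no observed values are dropped because their mean is undefined.
    """
    missing_rate = np.isnan(X).mean(axis=0)
    keep = missing_rate < 1.0
    mean_observed = np.nanmean(X[:, keep], axis=0)
    rho, p_value = spearmanr(mean_observed, missing_rate[keep])
    return rho, p_value

# Simulated example: features span a range of abundances and every value below
# a hypothetical detection limit of 19 (log2 units) is lost.
rng = np.random.default_rng(0)
true_means = np.linspace(16, 22, 100)
X = rng.normal(loc=true_means, scale=1.0, size=(40, 100))
X[X < 19] = np.nan
rho, p_value = intensity_dependence(X)
print(f"Spearman rho = {rho:.2f}, p = {p_value:.1e}")  # strongly negative under this MNAR simulation
```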

FAQ 2: I suspect MNAR in my proteomics dataset. What are my best imputation options? Answer: For left-censored MNAR (values missing because they fall below the detection limit), use methods designed for this mechanism. Avoid mean/median imputation.

- Recommended: Use a left-censored imputation method such as QRILC (Quantile Regression Imputation of Left-Censored data) or MinProb (replaces missing values with random draws from a Gaussian distribution centred on a low quantile of each sample's observed values).

- Workflow: First, separate MNAR from potentially MAR missingness using density and intensity plots, then apply a two-step imputation strategy.
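To make the left-censored idea concrete, here is a MinProb-style sketch in NumPy. It is modelled loosely on the behaviour described for impute.MinProb in the imputeLCMD R package (the defaults q = 0.01 and a tuning factor of 1 are carried over as assumptions), but it is not a port of that code, and impute_minprob_like is a hypothetical name. Rows are features and columns are samples.

```python
import numpy as np

def impute_minprob_like(X, q=0.01, tune_sigma=1.0, seed=None):
    """MinProb-style left-censored imputation (illustrative sketch).

    X: features x samples (log scale), np.nan = missing.
    Each sample's missing entries are drawn from a Gaussian centred on the
    q-th quantile of that sample's observed values; the spread is the median
    of the per-feature standard deviations, scaled by tune_sigma.
    """
    rng = np.random.default_rng(seed)
    X_imputed = X.copy()
    # Only features with at least two observations have a defined SD.
    observed_counts = np.sum(~np.isnan(X), axis=1)
    feature_sd = np.nanstd(X[observed_counts > 1], axis=1, ddof=1)
    sigma = np.median(feature_sd) * tune_sigma
    for j in range(X.shape[1]):
        missing = np.isnan(X[:, j])
        if missing.any():
            centre = np.nanquantile(X[:, j], q)
            X_imputed[missing, j] = rng.normal(centre, sigma, size=missing.sum())
    return X_imputed

rng = np.random.default_rng(1)
X = rng.normal(loc=20.0, scale=2.0, size=(200, 12))  # 200 proteins x 12 samples
X[X < 18.0] = np.nan  # simulated detection limit
X_imp = impute_minprob_like(X, seed=1)
imputed_values = X_imp[np.isnan(X)]
print(f"mean observed = {np.nanmean(X):.2f}, mean imputed = {imputed_values.mean():.2f}")
```

The imputed values land near the low end of each sample's distribution by construction, which is why this family of methods suits detection-limit missingness but would distort values that are actually MAR.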

FAQ 3: After RNA-seq normalization, I still have missing values for low-expression genes. Is this MAR or MNAR?

Answer: This is typically MNAR. The absence of reads for a gene in specific samples is not random: it depends on the true expression level, which is either biologically zero or below the technical detection limit. Imputation here is risky and may create false positives. Consider not imputing at all and instead using statistical models that handle zeros directly, such as limma-voom or negative binomial models, or use a count-specific method such as SAVER (designed for single-cell RNA-seq).

FAQ 4: How can I test if missingness in my multi-omics dataset is dependent on another assay's values (i.e., MAR)? Answer: You can perform a correlation analysis between missingness patterns.

- Create a binary matrix (0=present, 1=missing) for Assay A (e.g., metabolomics).

- Correlate this matrix with the quantitative values from a complete or more complete Assay B (e.g., transcriptomics) using a point-biserial correlation.

- Significant correlations suggest the missingness in Assay A may be MAR, dependent on the values from Assay B. This can inform integrative imputation methods.
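The indicator-versus-values correlation can be scripted with SciPy's pointbiserialr. A minimal sketch, assuming two samples x features arrays aligned by sample, with a simple Bonferroni correction across all pairs (an assumption; a false discovery rate procedure is a common alternative). The function name and simulated data are hypothetical.

```python
import numpy as np
from scipy.stats import pointbiserialr

def missingness_vs_assay(A, B, alpha=0.05):
    """Point-biserial correlation between missingness in assay A and values in assay B.

    A: samples x features of the incomplete assay (np.nan = missing).
    B: samples x features of a complete assay measured on the same samples.
    Returns (a_index, b_index, r, p) for pairs passing a Bonferroni-adjusted alpha.
    """
    M = np.isnan(A).astype(int)  # 1 = missing, 0 = present
    # Features that are always or never missing carry no information.
    varying = [i for i in range(M.shape[1]) if 0 < M[:, i].sum() < M.shape[0]]
    n_tests = max(1, len(varying) * B.shape[1])
    hits = []
    for i in varying:
        for j in range(B.shape[1]):
            r, p = pointbiserialr(M[:, i], B[:, j])
            if p < alpha / n_tests:
                hits.append((i, j, r, p))
    return hits

rng = np.random.default_rng(7)
enzyme = rng.normal(size=100)                         # transcript of a metabolic enzyme
B = np.column_stack([enzyme, rng.normal(size=100)])   # second transcript is unrelated
metabolite = enzyme + rng.normal(scale=0.5, size=100)
A = metabolite[:, None].copy()
A[enzyme < -0.3, 0] = np.nan                          # metabolite lost when the enzyme is low
hits = missingness_vs_assay(A, B)
print(hits)
```

A negative r here means the metabolite tends to be missing when the transcript is low.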

Experimental Protocols

Protocol 1: Diagnostic Workflow for Classifying Missingness Mechanisms in Omics Data

Objective: To systematically determine the likely missingness mechanism (MCAR, MAR, MNAR) in a single-omics dataset.

Materials: Processed data matrix (features x samples), sample metadata, statistical software (R/Python).

Procedure:

- Generate Missingness Summary: Calculate the percentage of missing values per sample and per feature. Flag samples or features with >20% missingness for potential removal.

- Visualize Patterns: Create a heatmap of the missingness matrix, ordered by experimental groups.

- Perform Little's MCAR Test: Apply the test to the incomplete data (or to a subset of features if there are more features than samples). A p-value > 0.05 means the test fails to reject the null hypothesis that the data are MCAR.

- Intensity-Dependence Plot: For each feature, calculate the average abundance (log2) across the samples where it is observed. Plot the missing rate per feature against this average abundance. A monotonically decreasing trend suggests MNAR.

- Group-Difference Test: For each feature with missing values, use a t-test (or ANOVA) to compare the abundance of a correlated, well-observed feature between samples where the target feature is observed and samples where it is missing. A significant difference suggests the data are not MCAR.
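The missingness summary step above is straightforward to script. A minimal sketch, assuming a features x samples NumPy array with NaN for missing values and the protocol's 20% flagging threshold; the function name is hypothetical. The boolean matrix np.isnan(X) computed here is also the input for the heatmap step.

```python
import numpy as np

def missingness_summary(X, threshold=0.20):
    """Per-feature and per-sample missing fractions for a features x samples matrix.

    Returns the two fraction vectors plus the indices of features and samples
    whose missing fraction exceeds `threshold` (flagged for possible removal).
    """
    missing = np.isnan(X)
    per_feature = missing.mean(axis=1)
    per_sample = missing.mean(axis=0)
    flagged_features = np.flatnonzero(per_feature > threshold)
    flagged_samples = np.flatnonzero(per_sample > threshold)
    return per_feature, per_sample, flagged_features, flagged_samples

nan = np.nan
X = np.array([
    [1.0, nan, 3.0, 4.0, 5.0],   # feature 0: 1 of 5 missing (20%, not flagged)
    [1.0, 2.0, 3.0, 4.0, 5.0],   # feature 1: complete
    [nan, nan, 3.0, 4.0, 5.0],   # feature 2: 2 of 5 missing (40%, flagged)
])
per_feature, per_sample, bad_features, bad_samples = missingness_summary(X)
print(bad_features, bad_samples)  # → [2] [0 1]
```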

Protocol 2: Two-Step Imputation for Mixed MAR/MNAR Proteomics Data

Objective: To accurately impute a dataset containing a mixture of MAR and MNAR missing values.

Materials: Normalized log2-transformed proteomics intensity matrix.

Procedure:

- Identify MNAR Components: Use the model.Selector and impute.MAR.MNAR functions in the imputeLCMD R package, or a similar tool, to label each feature's missing values as MAR or MNAR.

- Apply Mechanism-Specific Imputation:

- For values classified as MNAR, perform deterministic imputation using the MinDet method (replace with a low quantile, by default the 1st percentile, of the values observed in that sample).

- For values classified as MAR, perform probabilistic imputation using the mice package (Multivariate Imputation by Chained Equations) with predictive mean matching.

- Validate: Perform a post-imputation PCA and compare it to the pre-imputation PCA on the complete-case subset to check if the data structure has been preserved.

Data Presentation Tables

Table 1: Characteristics of Missingness Mechanisms in Omics Assays

| Mechanism | Acronym | Cause in Omics | Common Assays | Diagnostic Cue |
|---|---|---|---|---|
| Missing Completely At Random | MCAR | Technical failure (pipetting error, chip defect, random sample loss). | All, but rare. | Missingness is unrelated to observed or unobserved data. Little's test is non-significant. |
| Missing At Random | MAR | Missingness depends on observed data (e.g., a protein is missing in high-grade tumors because tumor grade, a recorded variable, influences extraction). | Any integrative multi-omics. | Missingness pattern is correlated with other measured variables in the dataset. |
| Missing Not At Random | MNAR | Missingness depends on the unobserved true value itself (e.g., metabolite abundance below LOD). | Metabolomics (LC-MS), Proteomics (LC-MS), low-count RNA-seq. | Strong inverse correlation between missing rate and measured signal intensity. |

Table 2: Recommended Imputation Methods by Mechanism and Data Type

| Mechanism | Data Type | Recommended Method | Software/Package | Key Consideration |
|---|---|---|---|---|
| MCAR | Any | k-Nearest Neighbors (kNN) | impute (R), sklearn.impute (Python) | Can be computationally heavy for large datasets. |
| MAR | Continuous (MS data) | MICE / missForest | mice, missForest (R) | MICE creates multiple imputed datasets that require pooling; missForest returns a single imputation. |
| MNAR | Left-censored (MS) | QRILC or MinProb | imputeLCMD (R) | Assumes data are missing below a "detection threshold." |
| MNAR | Count (RNA-seq) | No imputation, or SAVER | SAVER (R), DESeq2 | Direct model-based analysis (DESeq2) is often preferable to imputing counts; SAVER targets single-cell data. |

Visualizations

Title: Decision Flowchart for Missingness Mechanism & Imputation

Title: Two-Step MAR/MNAR Imputation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Context of Missing Data |
|---|---|
| Internal Standards (IS) | Stable isotope-labeled compounds spiked into samples prior to MS analysis. They correct for technical variation and signal loss, helping to flag and compensate for MNAR caused by ion suppression. |
| Proteinase K | Broad-spectrum protease used during nucleic acid extraction to digest proteins and nucleases. Incomplete digestion lowers yield and can contribute to MAR/MNAR; using a high-quality, active enzyme minimizes this. |
| SP3 Beads | Paramagnetic beads for single-pot, solid-phase-enhanced sample preparation in proteomics. They increase reproducibility and protein recovery, lowering missingness across samples. |
| ERCC RNA Spike-In Mix | Known, exogenous RNA controls added to RNA-seq experiments. They allow monitoring of technical sensitivity and can help diagnose whether missing low-expression genes reflect technical dropout or biological absence. |
| QC Pool Sample | A representative sample injected repeatedly throughout an LC-MS run sequence. Used to monitor instrument drift and detect batch effects that can cause systematic missingness (MAR). |

A core challenge in multi-omics integration is distinguishing between missing values arising from technical limitations (e.g., instrument sensitivity) and those representing true biological absence (e.g., gene silencing). This technical support center provides targeted guidance for diagnosing and resolving these issues during data generation.

FAQs & Troubleshooting Guides

Q1: In my LC-MS proteomics run, I have many missing values for low-abundance proteins. Is this a technical detection issue or could they be biologically absent? A: This is primarily a technical issue related to the Limit of Detection (LOD). Follow this diagnostic protocol:

- Check Instrument Performance: Ensure the column is not degraded, the mass spectrometer is calibrated, and the ion source is clean.

- Spike-in Controls: Use a standardized protein/peptide mix at known, low concentrations across all samples. If these controls are inconsistently detected, the issue is technical.

- Review Raw Data: Examine the base peak chromatogram and total ion current for inconsistencies. A drop in overall signal suggests technical problems.

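- Statistical Check: Plot each protein's missing rate against its mean observed intensity. A strong inverse relationship supports technical (detection-limit) absence and justifies a missing-not-at-random (MNAR) imputation method such as MinProb (imputeLCMD package in R). Note that MinProb imputes low values by design, so the imputed values themselves cannot confirm technical absence.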

Q2: My RNA-seq data shows zero counts for a gene in some conditions, but literature suggests it should be expressed. Is this biological silencing or a technical artifact? A: This requires investigation of both biological and technical factors.

- Verify Sample Integrity: Check RNA Integrity Number (RIN) > 8 for all samples. Degradation can cause 3' bias and gene dropout.

- Check Alignment Rates: Low alignment rates may indicate poor library prep or sample contamination.

- Examine Housekeeping Genes: If expression of stable control genes (e.g., GAPDH, ACTB) is highly variable, the issue is likely technical.

- Employ External Controls: Spike-in RNA (e.g., ERCC controls) can differentiate amplification biases from true biological changes.

- Confirm Biologically: Use an orthogonal method (e.g., qPCR) on the original sample to confirm true absence.

Q3: How can I systematically decide if a missing value in my integrated dataset is technical or biological? A: Implement a standardized workflow (see Diagram 1) that combines:

- Technical Replicates: Assess reproducibility.

- Internal/External Controls: Gauge platform sensitivity.

- Orthogonal Validation: Use a different technological principle to confirm.

Q4: What are the best practices for handling these two types of missing data in downstream analysis? A: They must be treated differently:

- Technically Missing (MNAR): Use imputation methods designed for left-censored data (e.g., detection-limit-based imputation such as MinDet).

- Biologically Missing (True Zero): These values are informative. Flag them as biologically absent and code them as zero, or encode absence with a separate indicator variable in statistical models.

Data Presentation

Table 1: Diagnostic Signatures for Technical vs. Biological Origins of Missing Data

| Feature | Technical Origin (e.g., Below LOD) | Biological Origin (e.g., Silenced Gene) |
|---|---|---|
| Pattern Across Samples | Random in low-concentration samples, correlates with poor QC metrics. | Consistent within biological groups/conditions (e.g., all control samples show expression, all treated are silent). |
| Response to Depth Increase | May appear with increased sequencing depth or MS injection amount. | Unchanged with increased technical effort. |
| Spike-in Control Data | Spike-in controls at similar low abundance are also missing. | Spike-in controls are detected normally. |
| Orthogonal Assay Result | Detected via a more sensitive or different technique (e.g., qPCR for RNA-seq dropouts). | Confirmed as absent by orthogonal technique. |
| Recommended Imputation | MNAR-specific methods (e.g., MinProb, QRILC). | No imputation; treat as meaningful zero or use binary presence/absence feature. |

Experimental Protocols

Protocol 1: Diagnosing LC-MS Detection Limit Issues

Objective: To determine if missing protein identifications are due to instrument sensitivity.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Prepare a Standard LOD Curve: Serially dilute a stable isotope-labeled standard peptide mix over a 4-order-of-magnitude range (1 fmol/µL to 10,000 fmol/µL).

- Inject in Triplicate: Analyze each dilution on the LC-MS system identical to your experimental setup.

- Data Analysis: Plot measured peak area vs. injected amount. The LOD is commonly defined as the lowest amount with a signal-to-noise ratio (S/N) ≥ 3 and consistent detection in all replicates; the stricter S/N ≥ 10 criterion corresponds to the limit of quantification (LOQ).

- Benchmarking: Compare the abundance estimates of your missing proteins (from other samples where they were detected) against this LOD curve. Values near or below the LOD indicate technical dropout.
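The LOD read-out can be scripted once S/N ratios are exported for each injection. A minimal sketch, assuming a levels x replicates table of S/N values with NaN for non-detections; the numbers below are invented purely to exercise the function, estimate_lod is a hypothetical name, and the S/N cutoff is a parameter (3 is the common LOD convention, 10 the usual quantification threshold).

```python
import numpy as np

def estimate_lod(amounts, sn, min_sn=3.0):
    """Lowest injected amount at which every replicate reaches the S/N cutoff.

    amounts: 1-D sequence of injected amounts (e.g., fmol/µL), one per dilution level.
    sn: levels x replicates signal-to-noise ratios; np.nan = not detected.
    Returns None if no level passes.
    """
    amounts = np.asarray(amounts, dtype=float)
    sn = np.nan_to_num(np.asarray(sn, dtype=float), nan=0.0)  # non-detection counts as failure
    passes = np.all(sn >= min_sn, axis=1)
    return float(amounts[passes].min()) if passes.any() else None

amounts = [1, 10, 100, 1_000, 10_000]
sn = [[1.2, np.nan, 0.8],      # triplicate injections at each level (made-up values)
      [2.5, 4.1, 3.6],
      [7.9, 9.4, 8.8],
      [62.0, 58.0, 71.0],
      [640.0, 590.0, 700.0]]
print(estimate_lod(amounts, sn))             # → 100.0
print(estimate_lod(amounts, sn, min_sn=10))  # → 1000.0
```

Proteins whose observed abundances (in the samples where they were detected) sit near the returned amount are the likeliest candidates for technical dropout elsewhere.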

Protocol 2: Validating Biologically Silenced Genes via RT-qPCR

Objective: Orthogonally confirm the absence of gene expression suggested by RNA-seq.

Materials: Original RNA samples, reverse transcription kit, qPCR master mix, primers for target and control genes.

Procedure:

- cDNA Synthesis: Reverse transcribe 1 µg of total RNA from each key sample using a high-efficiency kit. Include a no-reverse-transcriptase (-RT) control.

- qPCR Setup: Perform qPCR in triplicate for: the putatively silenced gene, a positive control gene known to be expressed, and a spike-in exogenous control (e.g., from Arabidopsis).

- Cycle Threshold (Ct) Analysis: For the target gene, a Ct value ≥ 35 in the experimental sample, together with a strong signal (Ct < 30) for the positive control and a normal spike-in Ct, supports biological silencing. No amplification (or a very high Ct) in the -RT control confirms the absence of genomic DNA contamination.
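The Ct rules can be written as a small function so they are applied identically to every sample. A sketch under stated assumptions: "no amplification" is recorded as infinity, the spike-in check is reduced to a pass/fail flag, and the -RT control is treated as clean when its Ct is at least the same 35-cycle cutoff; classify_target is a hypothetical helper.

```python
import math

NO_AMPLIFICATION = math.inf  # record "no Ct" as infinity

def classify_target(target_ct, positive_ct, spike_ct_ok, minus_rt_ct,
                    silent_ct=35.0, positive_max_ct=30.0):
    """Apply the protocol's Ct rules to one sample.

    Returns "silenced", "expressed", or "inconclusive" (a control failed).
    """
    controls_ok = (positive_ct < positive_max_ct      # positive control amplifies strongly
                   and spike_ct_ok                    # spike-in Ct within its normal range
                   and minus_rt_ct >= silent_ct)      # -RT shows no genomic DNA signal
    if not controls_ok:
        return "inconclusive"
    return "silenced" if target_ct >= silent_ct else "expressed"

print(classify_target(NO_AMPLIFICATION, 22.4, True, NO_AMPLIFICATION))  # → silenced
print(classify_target(27.1, 22.4, True, NO_AMPLIFICATION))              # → expressed
print(classify_target(36.0, 33.5, True, NO_AMPLIFICATION))              # → inconclusive
```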

Visualizations

Title: Decision Workflow for Missing Data Origin

Title: Multi-Omics Data Integration with Missing Value Annotation

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Origin Diagnosis

| Item | Function in Diagnosis |
|---|---|
| Stable Isotope-Labeled Standard (SIS) Peptides (Proteomics) | Absolute quantification and generation of Limit of Detection (LOD) curves to benchmark instrument sensitivity. |
| ERCC RNA Spike-In Mix (Transcriptomics) | Exogenous RNA controls at known concentrations to distinguish technical variability from biological change in RNA-seq. |
| Protein Standard Mix (e.g., BSA Digest) | Monitors LC-MS system performance and column retention time stability across runs. |
| High-Affinity Magnetic Beads (e.g., for SP3 cleanup) | Improves recovery of low-abundance proteins/peptides, reducing technical missingness. |
| Duplex-Specific Nuclease (DSN) | Normalizes cDNA libraries by reducing high-abundance transcripts, improving detection of low-expressed genes. |
| Digital PCR (dPCR) Assay | Provides absolute nucleic acid quantification without a standard curve, ideal for orthogonally validating low counts/silence. |

Troubleshooting Guides & FAQs

Q1: My heatmap shows a uniform pattern of missingness. Does this mean my data is Missing Completely at Random (MCAR)? A: Not necessarily. A uniform pattern in a heatmap (randomly scattered missing cells) is suggestive of MCAR but does not prove it. You must complement the visualization with a statistical test. Use Little's MCAR test or a permutation test. A non-significant p-value (>0.05) in Little's test supports the MCAR hypothesis, but domain knowledge about the experimental process is crucial for final determination.
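Because this section leans on Little's test, a compact implementation helps show what it computes: EM estimates of the overall mean and covariance, then a chi-square statistic comparing each missingness pattern's observed means with those estimates. A minimal sketch, assuming roughly multivariate normal data, more samples than variables, and at least one observed value per row; for real analyses a maintained implementation such as mcar_test() in the naniar R package is preferable. Note that the input is the incomplete matrix, not the complete cases.

```python
import numpy as np
from scipy.stats import chi2

def _em_mvn(X, max_iter=500, tol=1e-6):
    """EM estimates of the mean and covariance of a multivariate normal with missing values."""
    n, p = X.shape
    observed = ~np.isnan(X)
    mu = np.nanmean(X, axis=0)
    sigma = np.diag(np.nanvar(X, axis=0))
    for _ in range(max_iter):
        sum_x = np.zeros(p)
        sum_xx = np.zeros((p, p))
        for i in range(n):
            o, m = observed[i], ~observed[i]
            x = np.where(o, X[i], 0.0)
            cond_cov = np.zeros((p, p))
            if m.any():
                # Conditional expectation of the missing block given the observed block.
                coef = sigma[np.ix_(m, o)] @ np.linalg.inv(sigma[np.ix_(o, o)])
                x[m] = mu[m] + coef @ (X[i, o] - mu[o])
                cond_cov[np.ix_(m, m)] = sigma[np.ix_(m, m)] - coef @ sigma[np.ix_(o, m)]
            sum_x += x
            sum_xx += np.outer(x, x) + cond_cov
        new_mu = sum_x / n
        new_sigma = sum_xx / n - np.outer(new_mu, new_mu)
        done = max(np.abs(new_mu - mu).max(), np.abs(new_sigma - sigma).max()) < tol
        mu, sigma = new_mu, new_sigma
        if done:
            break
    return mu, sigma

def little_mcar_test(X):
    """Little's (1988) MCAR chi-square test. Returns (statistic, df, p_value)."""
    X = np.asarray(X, dtype=float)
    mu, sigma = _em_mvn(X)
    observed = ~np.isnan(X)
    patterns, inverse = np.unique(observed, axis=0, return_inverse=True)
    inverse = inverse.ravel()
    d2, df = 0.0, 0
    for k, pattern in enumerate(patterns.astype(bool)):
        rows = inverse == k
        diff = X[np.ix_(rows, pattern)].mean(axis=0) - mu[pattern]
        d2 += rows.sum() * diff @ np.linalg.solve(sigma[np.ix_(pattern, pattern)], diff)
        df += int(pattern.sum())
    df -= X.shape[1]
    return d2, df, chi2.sf(d2, df)

rng = np.random.default_rng(1)
cov = [[1.0, 0.5, 0.3], [0.5, 1.0, 0.4], [0.3, 0.4, 1.0]]
X = rng.multivariate_normal([0.0, 0.0, 0.0], cov, size=400)
X_mar = X.copy()
X_mar[X[:, 0] > 0.5, 2] = np.nan  # column 2 goes missing when column 0 is high (not MCAR)
stat, df, p = little_mcar_test(X_mar)
print(f"d2 = {stat:.1f}, df = {df}, p = {p:.2e}")
```

The example should reject MCAR decisively; as the answer above notes, a non-significant result on other data would only fail to reject MCAR, not prove it.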

Q2: When performing a statistical test for MAR (e.g., logistic regression test), I get a significant result. What are the immediate next steps? A: A significant result indicates the missingness is likely not MCAR and may be MAR or MNAR (Missing Not at Random). Your immediate steps are:

- Document the Pattern: Note which variables predict the missingness in your target variable.

- Adjust Your Imputation Model: Shift from simple mean imputation to a model that incorporates the predictors of missingness. Use Multiple Imputation by Chained Equations (MICE) or a maximum likelihood method, explicitly including the identified predictor variables in the imputation model.

- Conduct Sensitivity Analysis: Explore if results hold under different missingness mechanisms, potentially considering MNAR models like pattern-mixture models.

Q3: The missing data heatmap for my multi-omics dataset (e.g., proteomics vs. transcriptomics) shows clear block-wise patterns. What does this imply? A: Block-wise patterns often indicate a systematic, technology- or sample-specific issue. This is common in multi-omics integration. For example, all proteomic data for a specific batch of samples might be missing due to a failed LC-MS run. This pattern suggests the need to:

- Investigate batch logs and sample preparation records.

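- Account for batch explicitly: either use a batch-correction method that tolerates missing values before imputation, or include batch as a covariate in the imputation model.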

- Consider performing imputation separately per platform before integration, using information from other platforms as predictors.

Q4: How do I choose variables to include in a logistic regression test for MAR? A: Select variables that are:

- Fully observed or have very low missingness.

- Scientifically plausible as causes of the missingness (e.g., sample quality metrics, batch ID, total ion current in metabolomics, overall gene expression depth in RNA-Seq).

- Correlated with the variable that has missing values (if known from complete cases).

Create a binary indicator variable (1=missing, 0=observed) for your target variable and regress it on your chosen predictors. Significant predictors provide evidence against MCAR.

Key Experimental Protocols

Protocol 1: Generating a Missing Data Pattern Heatmap

- Data Preparation: Load your dataset (e.g., a matrix of samples x molecular features). Convert non-missing values to 0 and missing values to 1 (or use a dedicated library function).

- Sorting (Optional but Recommended): Sort rows (samples) and/or columns (features) by missingness percentage to reveal patterns. Use hierarchical clustering for unbiased pattern discovery.

- Visualization: Use a plotting library (e.g., seaborn.heatmap in Python, heatmap.2 from the gplots package in R). Set an appropriate color map (e.g., binary: gray for observed, dark red for missing).

- Interpretation: Annotate for known batch effects or sample groups. Look for random scatter (MCAR), vertical/horizontal stripes (systematic), or correlated block patterns.

Protocol 2: Conducting a Logistic Regression Test for MAR

Objective: Test whether the probability of missingness in a target variable Y depends on other observed variables.

- Create Missingness Indicator: For the variable Y with missing values, create a new binary variable M_Y, where M_Y = 1 if Y is missing and 0 if observed.

- Select Predictor Variables: Assemble a set of fully observed or nearly fully observed variables X1, X2, ..., Xp from your dataset.

- Fit Logistic Model: Fit logit(P(M_Y = 1)) = β0 + β1*X1 + ... + βp*Xp, using only cases where X1, ..., Xp are observed.

- Global Test: Perform a likelihood-ratio test comparing the full model to a null (intercept-only) model. A significant p-value (e.g., < 0.05) provides evidence against MCAR: the missingness is predictable from X, which is consistent with MAR (MNAR cannot be ruled out from the observed data alone).

- Report Results: Report the test statistic, p-value, and significant predictor variables.

Table 1: Common Missing Data Patterns in Multi-Omics & Suggested Actions

| Pattern in Heatmap | Likely Mechanism | Common Cause in Multi-Omics | Suggested Imputation Approach |
|---|---|---|---|
| Random, isolated cells | MCAR | Random technical noise, stochastic detection limits | Simple imputation (mean/median), k-NN, or deletion if minimal. |
| Vertical stripes (missing by feature) | MAR or MNAR | Failed probes, compounds below LOD in specific assays | Feature-wise deletion or imputation using correlated features from other platforms. |
| Horizontal stripes (missing by sample) | MAR | Poor sample quality, insufficient biomass, batch failure | Sample-wise deletion or robust multi-omics imputation (e.g., MICE with sample metadata). |
| Block patterns | MAR (Systematic) | Complete platform failure for a sample subset, different experimental panels | Platform-specific imputation first, then integration. Treat as a structured missing design. |

Table 2: Comparison of Statistical Tests for Missing Data Mechanisms

| Test Name | Tests For | Key Principle | Output Interpretation | Software Package Example |
|---|---|---|---|---|
| Little's MCAR Test | MCAR vs. (MAR+MNAR) | Compares means of different missingness pattern groups | p > 0.05: fail to reject MCAR. p ≤ 0.05: data not MCAR. | naniar (R, mcar_test()) |
| Logistic Regression Test | Predictability of missingness (MAR evidence) | Models missing indicator as a function of observed data | Significant likelihood-ratio (chi-square) test: missingness is predictable from observed data (MAR likely). | Base stats (R), SciPy/statsmodels (Python) |
| t-test / Wilcoxon Test | Local MAR check | Compares distribution of an observed variable X between groups where Y is missing vs. observed | Significant difference: missingness in Y related to X (not MCAR). | Base stats (R), SciPy (Python) |
| Diggle-Kenward Test | MNAR for longitudinal data | Model-based test for dropout mechanisms | Complex, requires specialized software. | lcmm (R) |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Missing Data Diagnostics |
|---|---|
| naniar R Package | Provides a cohesive framework for visualizing (gg_miss_* functions) and exploring missing data, including heatmaps and summaries. |
| missingno Python Package | Generates quick, informative visualizations of missing data patterns, including matrix plots, bar charts, and correlation heatmaps. |
| mice R Package / scikit-learn IterativeImputer | Enables Multiple Imputation by Chained Equations, a widely used standard method for handling MAR data after diagnosis. |
| finalfit R Package | Streamlines the process of using logistic regression to test and tabulate associations between missingness and observed variables. |
| High-Quality Sample Metadata | Critical, fully observed variables (e.g., Batch ID, RIN, BMI, Collection Date) used as predictors in MAR tests and imputation models. |
| Benchmark Omics Datasets (e.g., TCGA) | Well-characterized datasets into which known missingness patterns can be introduced to validate diagnostic and imputation pipelines. |

Visualizations

Diagram 1: Diagnostic Workflow for Missing Data Mechanism

Diagram 2: Logistic Regression Test for MAR Logic

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My multi-omics dataset has missing values. How do I quickly assess if the missingness is random or systematic? A: Systematic missingness often correlates with low-abundance features or specific sample groups. Perform these diagnostic steps:

- Create a Missingness Heatmap: Cluster samples and features based on missingness patterns.

- Correlation with Abundance: For intensity- or count-based data (e.g., MS proteomics, RNA-seq), plot the missing rate per feature against its mean log-intensity. A strong negative correlation suggests Missing Not At Random (MNAR) due to detection limits.

- Group Comparison Test: Use a statistical test (e.g., chi-square, or Fisher's exact test for small counts) to check whether missingness in a feature is independent of sample phenotype (e.g., disease vs. control). A significant p-value indicates that missingness depends on phenotype, i.e., systematic bias.
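For a single feature, the group comparison reduces to a contingency table of missing vs. observed counts per group. A minimal sketch with SciPy's chi2_contingency (which applies Yates' continuity correction to 2 x 2 tables by default); with small counts, scipy.stats.fisher_exact on the 2 x 2 table is the safer choice. The test is normally repeated for every feature, so the p-values need multiple-testing correction, and features with no missing values must be skipped because their table has an all-zero column. The function name is hypothetical.

```python
import numpy as np
from scipy.stats import chi2_contingency

def missingness_vs_group(values, groups):
    """Chi-square test of independence between missingness of one feature and sample group.

    values: 1-D array for one feature across samples (np.nan = missing).
    groups: 1-D array of group labels (e.g., "control", "disease").
    Returns the (groups x [missing, observed]) count table and the p-value.
    """
    values = np.asarray(values, dtype=float)
    groups = np.asarray(groups)
    table = np.array([[np.isnan(values[groups == g]).sum(),
                       (~np.isnan(values[groups == g])).sum()]
                      for g in np.unique(groups)])
    _, p_value, _, _ = chi2_contingency(table)
    return table, p_value

groups = np.array(["control"] * 50 + ["disease"] * 50)
values = np.ones(100)
values[:5] = np.nan       # 5 of 50 controls missing
values[50:80] = np.nan    # 30 of 50 disease samples missing
table, p = missingness_vs_group(values, groups)
print(table)              # rows: control, disease; columns: missing, observed
print(f"p = {p:.1e}")
```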

Q2: What is the concrete impact of choosing different imputation methods (e.g., k-NN vs. MinProb) on a network analysis? A: Different imputation algorithms introduce varying degrees of distortion in correlation structures, which directly affects network inference. See the comparative simulation results below:

Table 1: Impact of Imputation Method on Network Inference Metrics (Simulated Proteomic Data)

| Imputation Method | Principle | Mean Correlation Error | False Positive Edge Rate | Recommended Scenario |

|---|---|---|---|---|

| Complete Case (No Imp.) | Deletes features with any NAs | N/A (Data Loss >40%) | N/A | Not recommended for >5% missing |

| k-Nearest Neighbors (k-NN) | Borrows values from similar samples | 0.12 | 18% | Data Missing At Random (MAR) |

| MinProb (MNAR-focused) | Draws imputed values from a down-shifted, low-abundance distribution | 0.08 | 12% | Strongly suspected MNAR |

| Random Forest | Model-based, iterative | 0.09 | 15% | Complex MAR patterns |

| BPCA | Bayesian PCA model | 0.10 | 16% | Large datasets, MAR |

Experimental Protocol: Benchmarking Imputation Impact on Network Analysis

- Input: A complete multi-omics matrix (e.g., 100 samples x 500 features).

- Induce Missingness: Artificially introduce 20% missing values under two mechanisms: a) completely at random (MCAR), and b) preferentially among low-abundance values (MNAR).

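- Apply Imputation: Use each method (k-NN, MinProb, etc.) to generate five complete datasets per method (e.g., one per independent masking replicate). This allows the variability of the metrics to be estimated.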

- Reconstruct Networks: For each dataset, compute pairwise feature correlations (Pearson). Threshold to create adjacency matrices (|r| > 0.8).

- Evaluate: Compare each inferred network to the "ground truth" network from the original complete data. Then calculate the metrics reported in Table 1 (mean correlation error and false-positive edge rate).
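The sketch below is a compact version of this benchmark. It covers only the MCAR arm and compares two imputers. Several details are assumptions made for illustration:

- The data generator uses three latent factors.
- The compared methods are scikit-learn's KNNImputer and column-mean imputation.
- The false-positive edge rate is defined as the fraction of inferred edges that are absent from the ground-truth network.

```python
import numpy as np
from sklearn.impute import KNNImputer


def network_metrics(X_true, X_imp, thr=0.8):
    """Mean absolute correlation error and false-positive edge rate vs. ground truth."""
    c_true = np.corrcoef(X_true, rowvar=False)
    c_imp = np.corrcoef(X_imp, rowvar=False)
    iu = np.triu_indices_from(c_true, k=1)        # each feature pair once
    corr_err = np.mean(np.abs(c_true[iu] - c_imp[iu]))
    edges_true = np.abs(c_true[iu]) > thr
    edges_imp = np.abs(c_imp[iu]) > thr
    n_inferred = edges_imp.sum()
    # Assumed definition: share of inferred edges that are absent from the true network
    fp_rate = (edges_imp & ~edges_true).sum() / n_inferred if n_inferred else 0.0
    return corr_err, fp_rate


rng = np.random.default_rng(0)
# Hypothetical complete matrix: 100 samples x 50 features driven by 3 latent factors
latent = rng.normal(size=(100, 3))
X = latent @ rng.normal(size=(3, 50)) + 0.3 * rng.normal(size=(100, 50))

mask = rng.random(X.shape) < 0.2                  # 20% MCAR
X_miss = np.where(mask, np.nan, X)

imputed = {
    "kNN (k=5)": KNNImputer(n_neighbors=5).fit_transform(X_miss),
    "column mean": np.where(mask, np.nanmean(X_miss, axis=0), X_miss),
}
results = {name: network_metrics(X, Xi) for name, Xi in imputed.items()}
```

To add the MNAR arm, replace the MCAR mask with one that preferentially removes low values, then repeat the loop.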

Q3: I'm integrating transcriptomics and metabolomics. Should I impute datasets jointly or separately before integration? A: Joint imputation can preserve inter-omics relationships but risks propagating noise. Follow this workflow to decide:

Diagram Title: Decision Workflow for Joint vs. Separate Imputation

Q4: What are the key reagent solutions for a controlled spike-in experiment to quantify missing value impact in proteomics? A: This experiment systematically introduces known proteins at known concentrations to evaluate imputation accuracy.

Table 2: Research Reagent Toolkit for Spike-In Imputation Benchmarking

| Reagent / Material | Function in Experiment |

|---|---|

| UPS2 Proteomic Dynamic Range Standard (Sigma-Aldrich) | A calibrated mixture of 48 human proteins at known, differing abundances. Spiked into the sample to generate a "ground truth" gradient. |

| Heavy Labeled Peptide Standards (AQUA/PRISM) | Synthetic, isotopically labeled peptides for absolute quantification of specific spike-in proteins, validating measured vs. expected amounts. |

| Depletion Column (e.g., MARS-14) | Removes the most abundant proteins (14 in the case of MARS-14), increasing coverage of the low-abundance proteome where missing values are most prevalent. |

| LC-MS/MS Grade Solvents (Acetonitrile, Formic Acid) | Ensure optimal chromatography and ionization, minimizing technical missingness. |

| Statistical Software (R/Python) with mice, pcaMethods, and imp4p packages | Runs and benchmarks the candidate imputation algorithms on the generated spike-in data. |

Q5: Are there established thresholds for "acceptable" levels of missing data before integration becomes unreliable? A: There is no universal threshold, as impact depends on mechanism and analysis goal. Use this diagnostic diagram to guide your assessment:

Diagram Title: Acceptable Missing Data Decision Tree

The Imputation Toolkit: From Traditional Statistics to AI-Driven Methods for Multi-Omics

Troubleshooting Guides and FAQs

Q1: My proteomics dataset has a high proportion of missing values (>20%) that are clearly concentrated in low-abundance proteins. Which mechanism is this, and what is the primary class of methods I should avoid? A1: This pattern strongly suggests Missing Not At Random (MNAR), specifically a limit of detection (LOD) mechanism: values are missing because the protein concentration falls below the instrument's detection threshold. Avoid methods whose validity rests on Missing Completely At Random (MCAR) or Missing At Random (MAR), such as listwise deletion, mean imputation, or standard k-NN and MICE without an MNAR adjustment. Applied to left-censored data, these methods fill in or retain values that are systematically too high, which biases abundance estimates and fold changes in downstream analysis.

Q2: After imputing missing values in my metabolomics data, my differential abundance analysis yields hundreds of significant hits, far more than before imputation. Is this a sign of a problem? A2: Not necessarily, but it requires careful validation. Imputation increases the number of usable values per feature and can shrink within-group variance, both of which raise test statistics and statistical power. However, it can also introduce false positives if the imputation model is poorly chosen or overfitted. You must:

- Benchmark: Compare results using a hold-out dataset or via simulation if possible.

- Check Imputation Plausibility: Use the summary() function in R or describe() in pandas to ensure imputed values fall within a biologically plausible range (e.g., not negative for linear-scale abundance data).

- Apply Multiple Methods: Run your analysis pipeline with 2-3 different, mechanism-appropriate imputation methods (see Table 1). Findings that are consistent across methods are more robust.

Q3: I have integrated transcriptomics and methylation data, but the missingness patterns differ between platforms. How do I choose an imputation approach for such a multi-omics scenario? A3: For heterogeneous, linked multi-omics data, a two-step framework is recommended:

- Perform platform-specific imputation first. Use the optimal single-omics method for each data type (e.g., k-NN for transcriptomics, MAR-based methods for methylation arrays).

- Employ multi-omics aware integration. Use methods like Multi-Omics Factor Analysis (MOFA) or Integrative Missing Data Imputation (iMISS) that can model shared and unique factors across datasets, potentially refining imputations in the integration step itself. Do not naively apply a single imputation method to the concatenated dataset.

Data Presentation

Table 1: Method Selection Guide Based on Missingness Mechanism & Data Scale

| Mechanism (How to Diagnose) | Recommended Methods (Small N < 100) | Recommended Methods (Large N > 100) | Methods to Avoid |

|---|---|---|---|

| MCAR (Little's test p > 0.05, no pattern in missing data heatmap) | Mean/Median/Mode Imputation, Regression Imputation | Expectation-Maximization (EM), Multiple Imputation by Chained Equations (MICE) | Listwise Deletion if >5% missing |

| MAR (Missingness predictable from observed data, e.g., younger samples have more missing metabolites) | MICE with simple models, k-Nearest Neighbors (k-NN, k=5-10) | Random Forest Imputation (e.g., MissForest), Bayesian Principal Component Analysis (BPCA) | Simple mean imputation (introduces bias) |

| MNAR (Missingness depends on unobserved value, e.g., values below detection limit) | LOD-based: Replace with LOD/√2, Model-based: Survival curve model (left-censored) | Advanced: Quantile regression imputation of left-censored data (QRILC), Model-based: Gaussian mixture models | Imputation methods assuming MAR/MCAR (e.g., MICE without MNAR model) |
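Two simple left-censored strategies can be illustrated with a short sketch. It assumes a samples x features NumPy array with NaN for missing values.

- LOD/√2: the true LOD is rarely known, so the per-feature minimum observed value stands in for it (an assumption).
- MinProb-style: the second function is loosely modeled on the published description of MinProb (imputeLCMD's impute.MinProb). It draws from a Gaussian centered on a low quantile of each sample's observed log-intensities, using the median feature-wise SD as the spread. It is a hypothetical re-implementation, not that package's code.

```python
import numpy as np


def impute_lod_sqrt2(X):
    """Replace missing values in each feature with LOD / sqrt(2).

    X: samples x features, positive linear-scale intensities, NaN = missing.
    Assumption: the per-feature minimum observed value stands in for the LOD.
    """
    X = X.copy()
    lod = np.nanmin(X, axis=0)
    rows, cols = np.where(np.isnan(X))
    X[rows, cols] = lod[cols] / np.sqrt(2)
    return X


def impute_minprob_like(logX, q=0.01, tune_sigma=1.0, seed=0):
    """MinProb-style draws for log-scale data (hypothetical re-implementation).

    For each sample (row), missing values are drawn from N(centre, sd^2), where
    centre is the q-th quantile of that sample's observed values and sd is the
    median feature-wise SD multiplied by tune_sigma.
    """
    rng = np.random.default_rng(seed)
    X = logX.copy()
    sd = np.nanmedian(np.nanstd(X, axis=0, ddof=1)) * tune_sigma
    for i in range(X.shape[0]):
        miss = np.isnan(X[i])
        if miss.any():
            centre = np.nanquantile(X[i], q)
            X[i, miss] = rng.normal(centre, sd, size=miss.sum())
    return X


X = np.array([[100.0, np.nan, 40.0],
              [80.0, 20.0, np.nan],
              [120.0, 30.0, 50.0]])
X_lod = impute_lod_sqrt2(X)                       # X_lod[0, 1] == 20 / sqrt(2)
X_minprob = impute_minprob_like(np.log2(X))
```

LOD/√2 is applied on the linear scale. MinProb-style draws are applied on the log scale, where Gaussian noise is more plausible.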

Experimental Protocols

Protocol: Evaluating Imputation Performance via a Hold-Out Experiment

Objective: To empirically determine the best imputation method for your specific multi-omics dataset.

- Data Preparation: Start with a complete dataset (or a subset of features with no missing values). This is your ground truth.

- Induce Missingness: Artificially introduce missing values into the ground truth dataset under a specific mechanism (e.g., random for MCAR, dependent on observed values for MAR, threshold-based for MNAR) at a rate similar to your real data.

- Apply Imputation Methods: Impute the artificially missing values using 3-4 candidate algorithms (e.g., MissForest, k-NN, BPCA, QRILC).

- Calculate Error Metrics: Compare the imputed values to the held-out true values. Common metrics include:

- Normalized Root Mean Square Error (NRMSE): For continuous data.

- Proportion of Falsely Classified (PFC): For categorical data.

- Distance in Principal Component Space: Assesses preservation of global data structure.

- Select Optimal Method: The method with the lowest error metrics and best structure preservation is recommended for your real dataset.
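The error metrics in this protocol can be computed as in the sketch below.

- NRMSE follows the definition popularized by missForest, sqrt(mean((X_true - X_imp)^2) / var(X_true)), evaluated over the masked entries only.
- PFC is the share of masked categorical entries that were imputed with the wrong level.
- The simulated matrix and the two candidate imputers (scikit-learn's SimpleImputer and KNNImputer) are placeholders for your own data and methods.

```python
import numpy as np
from sklearn.impute import KNNImputer, SimpleImputer


def nrmse(X_true, X_imp, mask):
    """Normalized RMSE over masked entries: sqrt(mean((true - imp)^2) / var(true))."""
    t, p = X_true[mask], X_imp[mask]
    return np.sqrt(np.mean((t - p) ** 2) / np.var(t))


def pfc(true_labels, imp_labels, mask):
    """Proportion of falsely classified entries among masked categorical values."""
    return np.mean(true_labels[mask] != imp_labels[mask])


rng = np.random.default_rng(42)
# Hypothetical ground truth: 80 samples x 30 correlated continuous features
X_true = rng.normal(size=(80, 4)) @ rng.normal(size=(4, 30)) + 0.5 * rng.normal(size=(80, 30))
mask = rng.random(X_true.shape) < 0.15            # hold out 15% of entries (MCAR)
X_miss = np.where(mask, np.nan, X_true)

candidates = {"mean": SimpleImputer(strategy="mean"), "kNN (k=10)": KNNImputer(n_neighbors=10)}
scores = {name: nrmse(X_true, imp.fit_transform(X_miss), mask) for name, imp in candidates.items()}
best = min(scores, key=scores.get)                # method with the lowest NRMSE
```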

Mandatory Visualization

Diagram: Framework for Selecting a Missing Data Strategy

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Multi-Omics with Missing Data

| Item/Reagent | Function in Context of Missing Data Research |

|---|---|

| Complete Case Dataset (Subset) | A curated subset of your multi-omics data with no missing values. Serves as the essential "ground truth" for benchmarking imputation algorithm performance via hold-out experiments. |

mice R Package (or scikit-learn in Python) |

Provides robust, flexible implementations of Multiple Imputation by Chained Equations (MICE), a gold-standard framework for handling MAR data. Allows specification of different models per variable type. |

missForest R Package |

Offers a non-parametric Random Forest-based imputation method. Highly effective for mixed data types (continuous/categorical) and complex, non-linear relationships under MAR. |

imputeLCMD R Package / QRILc method |

A specialized package for left-censored data (MNAR). Contains the Quantile Regression Imputation of Left-Censored data (QRILC) algorithm, crucial for handling missing values due to limits of detection in proteomics/metabolomics. |

DataExplorer or naniar R Packages |

Provides automated visualization and diagnostic tools (e.g., missingness heatmaps, profile plots) to visually assess the pattern and mechanism of missing data before method selection. |

| MOFA2 (Multi-Omics Factor Analysis) | A Bayesian framework for multi-omics integration. While not solely an imputation tool, it inherently handles missing values by learning a shared latent space, making it a powerful option for the final integrated analysis step. |

Troubleshooting Guides & FAQs

Q1: After mean imputation on my proteomics dataset, downstream clustering results show unrealistic tightness and loss of biological variance. What went wrong? A: Mean imputation reduces variance and distorts covariance structures. This artificially inflates the similarity between samples, leading to biased cluster formation. It is not recommended for omics data where covariance is critical for analysis. Consider SVD-based or MICE methods instead.

Q2: When using SVD-based imputation (e.g., softImpute), my algorithm fails to converge and returns 'NA' values. How can I fix this?

A: This is often due to excessive missingness (>30%) or improper rank (k) selection.

- Troubleshooting Steps:

- Check Missingness Rate: Calculate the percentage of missing values per feature and overall. If >30%, consider feature filtering before imputation.

- Adjust Rank (k): Start with a very low rank (e.g., 2-5) and increase it incrementally. Use cross-validation on a small, complete subset to estimate the optimal k.

- Scale Data: Ensure the data are centered (and potentially scaled) before applying SVD.

- Increase Iterations: Increase the maxit parameter (e.g., from 100 to 1000).

- Add Regularization: Increase the lambda (λ) parameter to enforce stronger regularization.
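Here is a minimal NumPy sketch of the soft-impute iteration, which makes the roles of centering, λ, rank, and maxit concrete. Each iteration does three things:

- It replaces missing entries with the current low-rank estimate.
- It takes an SVD of the filled matrix.
- It soft-thresholds the singular values by λ.

This is a simplified illustration, not the softImpute package's ALS algorithm. The optional max_rank cap and the relative-change convergence test are assumptions.

```python
import numpy as np


def soft_impute(X, lam, max_rank=None, maxit=100, tol=1e-5):
    """Soft-thresholded SVD matrix completion (simplified soft-impute).

    X: 2-D array with NaN for missing entries. Columns are centered first.
    """
    observed = ~np.isnan(X)
    col_means = np.nanmean(X, axis=0)
    Xc = X - col_means                            # center each feature on its observed mean
    Z = np.where(observed, Xc, 0.0)               # missing entries start at the (centered) mean
    for _ in range(maxit):
        filled = np.where(observed, Xc, Z)        # observed values fixed, current estimate elsewhere
        U, s, Vt = np.linalg.svd(filled, full_matrices=False)
        s = np.maximum(s - lam, 0.0)              # soft-threshold singular values by lambda
        if max_rank is not None:
            s[max_rank:] = 0.0                    # optional hard cap on the rank
        Z_new = (U * s) @ Vt
        delta = np.sum((Z_new - Z) ** 2) / max(np.sum(Z ** 2), 1e-12)
        Z = Z_new
        if delta < tol:                           # stop when the estimate barely changes
            break
    return np.where(observed, X, Z + col_means)   # keep observed values, add means back


rng = np.random.default_rng(0)
truth = rng.normal(size=(60, 2)) @ rng.normal(size=(2, 40))   # hypothetical rank-2 matrix
mask = rng.random(truth.shape) < 0.2
X_miss = np.where(mask, np.nan, truth)
X_hat = soft_impute(X_miss, lam=1.0, max_rank=3, maxit=500)
```

A larger lam shrinks more singular values to zero, which stabilizes the fit when missingness is high. A larger maxit gives slow-converging problems more rounds.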

Q3: Running MICE for metabolomics data is computationally prohibitive. How can I optimize performance? A: MICE with high-dimensional data is resource-intensive.

- Optimization Protocol:

- Pre-filtering: Remove features with >40% missingness or low variance.

- Variable Selection: Use only a subset of features (e.g., the most correlated ones) to predict each target variable, rather than all features. In mice, this is controlled through the predictorMatrix argument, which can be built with quickpred().

- Reduce m: Decrease the number of multiple imputations (m) for exploration (e.g., from 5 to 3). Reserve m=5-10 for the final analysis.

- Use maxit Efficiently: Monitor chain convergence; often, maxit=5-10 is sufficient.

- Parallelize: Use the parallel or furrr packages in R to run imputation chains in parallel.
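In Python, several of these optimizations map onto scikit-learn's IterativeImputer, a MICE-inspired imputer:

- n_nearest_features restricts each target's predictors to a correlation-weighted random subset.
- max_iter bounds the number of rounds.
- sample_posterior=True combined with different random seeds yields m stochastic imputations.

The function name and default values below are illustrative assumptions.

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401  (exposes IterativeImputer)
from sklearn.impute import IterativeImputer


def mice_like_imputations(X, m=3, n_nearest_features=20, max_iter=10):
    """Return m stochastic imputations of X, each from a differently seeded chain."""
    imputations = []
    for seed in range(m):
        imputer = IterativeImputer(
            n_nearest_features=n_nearest_features,  # predictors per target: correlation-weighted subset
            max_iter=max_iter,                      # rounds over all features
            sample_posterior=True,                  # draw imputations instead of point predictions
            random_state=seed,                      # one seed per imputed dataset
        )
        imputations.append(imputer.fit_transform(X))
    return imputations


rng = np.random.default_rng(0)
X = rng.normal(size=(50, 40))                       # hypothetical metabolomics-like matrix
X[rng.random(X.shape) < 0.1] = np.nan
imputed_sets = mice_like_imputations(X, m=3, n_nearest_features=10, max_iter=5)

# Pool a simple estimate (the mean of feature 0) across imputations
pooled_mean = np.mean([Xi[:, 0].mean() for Xi in imputed_sets])
```

The loop over seeds is embarrassingly parallel, so it can be distributed (e.g., with joblib) in the same way the R chains are.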

Q4: How do I choose between median imputation and regularized iterative methods for my RNA-seq dataset with <5% missing values? A: For low-level missingness (<5%), the choice impacts subtle biological signals.

- Decision Guide:

- Median Imputation: Acceptable only for initial exploratory visualization. It artificially shrinks variance, which can produce spuriously small p-values in differential expression testing. Not recommended for any formal statistical testing.

- Regularized Iterative (MICE/SVD): Strongly preferred. These methods preserve relationships between genes. Use MICE with the pmm (Predictive Mean Matching) method for continuous, non-normal RNA-seq data (e.g., log-CPMs) to keep imputed values within the observed range.
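The reason PMM keeps imputed values within the observed range is easiest to see in a simplified single-variable sketch. Each missing value is replaced by an actually observed value, taken from a randomly chosen donor among the k samples whose predicted means are closest. The sketch has two simplifications, both assumptions:

- It fits one ordinary least-squares model instead of drawing regression parameters from their posterior, as full Bayesian PMM implementations do, so it understates imputation uncertainty.
- The function name and k=5 donors are hypothetical.

```python
import numpy as np


def pmm_impute_column(y, X, k=5, seed=0):
    """Simplified predictive mean matching for one continuous variable.

    y: 1-D array with NaN for missing values; X: fully observed predictors (samples x p).
    """
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float).copy()
    miss = np.isnan(y)
    A = np.column_stack([np.ones(len(y)), X])                # design matrix with intercept
    beta, *_ = np.linalg.lstsq(A[~miss], y[~miss], rcond=None)
    pred = A @ beta                                          # predicted mean for every sample
    donor_pred, donor_y = pred[~miss], y[~miss]
    for i in np.flatnonzero(miss):
        nearest = np.argsort(np.abs(donor_pred - pred[i]))[:k]  # k closest observed predictions
        y[i] = donor_y[rng.choice(nearest)]                  # borrow an actually observed value
    return y


rng = np.random.default_rng(3)
X = rng.normal(size=(100, 2))                                 # hypothetical complete covariates
y_true = 2 + X @ np.array([1.5, -0.5]) + rng.normal(scale=0.3, size=100)
y_obs = y_true.copy()
y_obs[:15] = np.nan
y_imp = pmm_impute_column(y_obs, X, k=5)
```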

Q5: After SVD imputation, my PCA plot shows a strong batch effect that wasn't visible before. Is this an artifact?

A: It may be a revealed rather than an induced artifact. SVD-based methods recover the dominant low-rank structure of the data, which includes both biological and technical variation. A batch effect previously obscured by missing values can therefore become visible. However, if missingness rates differ between batches, imputation can also exaggerate batch separation, so compare missingness per batch. In either case, apply batch correction methods (e.g., ComBat, limma's removeBatchEffect) after imputation.

Table 1: Comparison of Traditional & Linear Imputation Methods for Multi-Omics Data

| Method | Typical Use Case | Data Type Suitability | Pros | Cons | Impact on Covariance |

|---|---|---|---|---|---|

| Mean/Median | Quick exploration, <5% MCAR* | Any, but not recommended | Simple, fast | Severe bias, reduces variance, distorts distances | Heavily attenuates |

| SVD-Based | High-dimensional data (e.g., transcriptomics) | Continuous, approximately normal | Preserves global structure, handles high dimensions | Sensitive to rank selection, may blur local patterns | Well-preserved |

| MICE | Complex missing patterns (MAR), inter-related features | Mixed (continuous, categorical) | Flexible, models feature relationships, provides uncertainty | Computationally heavy, convergence issues in high-dimensions | Well-preserved |

*MCAR: Missing Completely At Random. MAR: Missing At Random.

Table 2: Recommended Experimental Parameters for MICE in Multi-Omics

| Parameter | Recommended Setting for Omics | Rationale |

|---|---|---|

| Number of Imputations (m) | 5-10 | Balances stability of pooled results with computation time. |

| Iterations per Chain (maxit) | 10-20 | Usually sufficient for convergence in omics-scale data. |

| Imputation Method (method) | pmm, norm.nob, lasso.norm | pmm (predictive mean matching) is robust for non-normal data. |

| Predictor Matrix | quickpred() (with a high minimum-correlation threshold, mincor) | Uses only highly correlated features as predictors to stabilize models. |

Experimental Protocols

Protocol 1: Evaluating Imputation Accuracy with a Hold-Out Validation Set

- Prepare a Complete Dataset: Start with a complete multi-omics matrix (e.g., from a public repository).

- Artificially Introduce Missingness: Randomly remove 10-20% of values under a Missing Completely At Random (MCAR) mechanism. Record the positions of these "true" values.

- Apply Imputation Methods: Run Mean, SVD (softImpute), and MICE on the dataset with artificial missingness.

- Calculate Error Metrics: For each method, compute the Root Mean Square Error (RMSE) or Normalized RMSE between the imputed values at the masked positions and the original (true) values.

- Compare: The method with the lowest error provides the best accuracy for that dataset under MCAR.

Protocol 2: Implementing SVD-Based Imputation using softImpute in R

Protocol 3: Standard MICE Workflow for Metabolomics Data

Visualizations

Title: Decision Workflow for Choosing an Imputation Method

Title: MICE Algorithm Iterative Cycle Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Imputation Experiments

| Item/Software | Function | Example/Note |

|---|---|---|

| R mice Package | Implements MICE for multivariate data. | Use method = "lasso.norm" for high-dimensional regularized regression. |

| R softImpute / bcv | Performs regularized SVD matrix completion. | softImpute handles large matrices with sparsity. |

| Python fancyimpute | Provides several imputation algorithms (KNN, SoftImpute, IterativeImputer). | scikit-learn's IterativeImputer is a MICE-inspired implementation. |

| missForest (R Package) | Non-linear method using Random Forests. | Useful benchmark against linear methods. |

| Simpute (R Package) | Fast SVD-based imputation for very large matrices. | Optimized for scalability. |

| Cross-Validation Script | Custom script to evaluate imputation accuracy (RMSE). | Critical for parameter tuning and method selection. |

| High-Performance Computing (HPC) Cluster Access | For running MICE on full multi-omics datasets. | Necessary for realistic experiments with >10,000 features. |

Technical Support Center: Troubleshooting & FAQs

This support center is designed within the context of handling missing values in multi-omics data (e.g., genomics, transcriptomics, proteomics) for research and drug development. Below are common issues and solutions when employing k-NN, Random Forest, and MissForest imputation techniques.

Frequently Asked Questions (FAQs)

Q1: My multi-omics dataset has over 30% missing values in some features. Can I use k-NN imputation directly? A: Direct application of k-NN imputation is not recommended for such high missingness. k-NN relies on distance metrics between samples, and excessive missingness corrupts these distances.

- Recommended Protocol: Perform a two-step imputation.

- Initial Coarse Imputation: Provisionally fill missing values with a simple, global method such as mean/median (for continuous) or mode (for categorical) imputation, including those in features with >20% missingness. This creates a complete dataset for distance calculation.

- Refined k-NN Imputation: Compute sample distances on the coarsely imputed dataset using a relevant distance metric (e.g., Gower distance for mixed data types common in multi-omics). Then re-impute the originally missing entries from the observed values of each sample's k nearest neighbors.

- Pre-processing Checklist:

- Always scale your data (e.g., Z-score normalization) before k-NN imputation if using Euclidean distance.

- Use domain knowledge to remove features where missingness likely indicates a biological non-detection (e.g., low-abundance protein) rather than a technical artifact.
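A minimal NumPy sketch of the two-step scheme follows. It makes two assumptions:

- All features are continuous, so z-scored Euclidean distance stands in for Gower distance.
- The dataset is small enough to hold an n x n distance matrix in memory.

For larger data, scikit-learn's KNNImputer uses a NaN-aware Euclidean distance and avoids the coarse fill altogether.

```python
import numpy as np


def two_step_knn(X, k=5):
    """Coarse median fill, then re-impute originally missing entries from k nearest samples.

    X: samples x features (continuous), NaN = missing. Features are z-scored for distances.
    """
    miss = np.isnan(X)
    coarse = np.where(miss, np.nanmedian(X, axis=0), X)          # step 1: provisional median fill
    mu, sd = coarse.mean(axis=0), coarse.std(axis=0)
    Z = (coarse - mu) / np.where(sd > 0, sd, 1.0)                 # scale before Euclidean distance
    D = np.sqrt(((Z[:, None, :] - Z[None, :, :]) ** 2).sum(axis=2))
    np.fill_diagonal(D, np.inf)                                   # a sample is not its own neighbour
    out = coarse.copy()
    for i, j in zip(*np.where(miss)):
        donors = np.flatnonzero(~miss[:, j])                      # samples that observed feature j
        if donors.size == 0:
            continue                                              # nobody observed j: keep coarse fill
        nearest = donors[np.argsort(D[i, donors])[:k]]
        out[i, j] = X[nearest, j].mean()                          # step 2: average of real observations
    return out


rng = np.random.default_rng(0)
X = rng.normal(size=(40, 6))                                       # hypothetical data
X[rng.random(X.shape) < 0.3] = np.nan
X_imp = two_step_knn(X, k=5)
```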

Q2: After using MissForest for imputation on my integrated genomics and metabolomics data, the model seems to have "over-imputed," reducing the variance of my features. How can I diagnose and prevent this? A: MissForest, as an iterative Random Forest-based method, can sometimes converge to a solution that underestimates variance, especially if the "out-of-bag" (OOB) error stopping criterion is too strict.

- Diagnostic Protocol: Compare the distribution (mean, variance, histogram) of key features before and after imputation. A significant shrinkage in variance is a red flag.

- Solution & Protocol Adjustment:

- Adjust Stopping Criterion: Increase the maxiter parameter (e.g., from the default of 10 to 15) and monitor the OOB error across iterations. It should plateau rather than be driven toward zero.

- Post-Imputation Noise Injection: Add a small amount of random noise to the imputed values, drawn from a normal distribution with mean zero and variance equal to the residual variance of the imputation model. This preserves the uncertainty of the imputation.

- Iterative Refinement: Consider using the imputed dataset as a starting point for a more complex downstream model that accounts for imputation uncertainty (e.g., multiple imputation frameworks where MissForest generates several imputed datasets).
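The variance check and the noise-injection step can be sketched as follows. Two stand-ins keep the example self-contained, and both are assumptions:

- Mean imputation replaces MissForest, because it shrinks variance in a predictable way.
- The observed per-feature SD serves as a hypothetical residual SD. In practice, estimate the residual SD from the imputation model, for example on artificially masked, known values.

```python
import numpy as np


def variance_ratio(X_imputed, X_original):
    """Per-feature variance after imputation divided by variance of the observed values."""
    return np.var(X_imputed, axis=0, ddof=1) / np.nanvar(X_original, axis=0, ddof=1)


def inject_noise(X_imputed, missing_mask, resid_sd, seed=0):
    """Add N(0, resid_sd^2) noise to originally missing entries only."""
    rng = np.random.default_rng(seed)
    noise = rng.standard_normal(X_imputed.shape) * resid_sd      # resid_sd: scalar or per-feature
    return np.where(missing_mask, X_imputed + noise, X_imputed)


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
missing = rng.random(X.shape) < 0.3
X_obs = np.where(missing, np.nan, X)
X_imp = np.where(missing, np.nanmean(X_obs, axis=0), X_obs)    # stand-in imputer that shrinks variance
before = variance_ratio(X_imp, X_obs)                           # values well below 1 signal shrinkage
resid_sd = np.nanstd(X_obs, axis=0)                             # hypothetical residual SD
after = variance_ratio(inject_noise(X_imp, missing, resid_sd), X_obs)
```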

Q3: When using Random Forest for classification after data imputation, the feature importance plot is dominated by features that had many missing values. Is this a bias? A: Yes, this is a known potential bias. Features with many missing values, imputed with a sophisticated method like MissForest, can artificially appear more important because the imputation model itself learned patterns from other features to predict them.

- Troubleshooting Protocol for Feature Importance Bias:

- Create a Missingness Indicator Matrix: Generate binary variables indicating whether a value was originally missing (1) or observed (0) for each feature.

- Augment Your Dataset: Concatenate these indicator variables to your fully imputed dataset as additional features.

- Re-train and Compare: Re-train your Random Forest classifier on the augmented dataset. Examine the new feature importance list.

- Interpretation: If a missingness indicator for a specific feature ranks highly, it signals that the pattern of missingness is informative for the outcome, a crucial biological/technical insight. The importance of the imputed feature itself in this new model is a more reliable estimate.
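The indicator-augmentation protocol can be sketched with scikit-learn, whose MissingIndicator builds the binary matrix. Two parts of the sketch are assumptions:

- The simulated data, in which feature f3 is missing more often in class 1.
- The median imputer, used as a stand-in for MissForest.

Impurity-based importances (feature_importances_) tend to favor continuous features over binary indicators, so permutation importance is a useful cross-check.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import MissingIndicator, SimpleImputer

rng = np.random.default_rng(0)
n = 300
X = rng.normal(size=(n, 4))
y = (X[:, 0] + rng.normal(scale=0.5, size=n) > 0).astype(int)

# Hypothetical informative missingness: feature 3 is missing more often in class 1
X_miss = X.copy()
X_miss[rng.random(n) < np.where(y == 1, 0.6, 0.1), 3] = np.nan

X_imp = SimpleImputer(strategy="median").fit_transform(X_miss)   # stand-in for any imputer
indicator = MissingIndicator(features="missing-only")
M = indicator.fit_transform(X_miss).astype(int)                  # 1 = originally missing
X_aug = np.hstack([X_imp, M])                                    # imputed values + indicators
names = [f"f{j}" for j in range(X.shape[1])] + [f"missing_f{j}" for j in indicator.features_]

rf = RandomForestClassifier(n_estimators=300, random_state=0).fit(X_aug, y)
importances = dict(zip(names, rf.feature_importances_))
```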

Q4: What is the optimal way to choose 'k' for k-NN imputation in a heterogeneous multi-omics dataset? A: There is no universal optimal 'k'. It must be tuned as a hyperparameter.

- Experimental Tuning Protocol:

- Artificially Induce Missingness: From a complete subset of your data, randomly mask 5-10% of known values (Missing Completely at Random - MCAR).

- Grid Search: Perform k-NN imputation on this artificially masked dataset across a range of 'k' values (e.g., 5, 10, 15, 20).

- Error Metric Calculation: For each 'k', calculate the imputation error (e.g., Root Mean Square Error (RMSE) for continuous, proportion of falsely classified for categorical) between the imputed and the true, known values.

- Select k: Choose the 'k' that minimizes the error metric. Use this 'k' for imputation on your original dataset.
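The tuning protocol can be sketched with scikit-learn's KNNImputer. The sketch uses a simulated complete matrix, which is an assumption; substitute a complete subset of your own data. Values are standardized first because KNNImputer uses a NaN-aware Euclidean distance.

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.preprocessing import StandardScaler


def tune_k(X_complete, ks=(5, 10, 15, 20), frac=0.1, seed=0):
    """Mask a fraction of known values (MCAR) and return the RMSE of k-NN imputation per k."""
    rng = np.random.default_rng(seed)
    Xs = StandardScaler().fit_transform(X_complete)    # comparable scales for Euclidean distance
    mask = rng.random(Xs.shape) < frac
    X_masked = np.where(mask, np.nan, Xs)
    rmse = {}
    for k in ks:
        X_imp = KNNImputer(n_neighbors=k).fit_transform(X_masked)
        rmse[k] = np.sqrt(np.mean((X_imp[mask] - Xs[mask]) ** 2))
    return rmse


rng = np.random.default_rng(7)
# Hypothetical complete subset: 120 samples x 25 features with shared structure
X_complete = rng.normal(size=(120, 3)) @ rng.normal(size=(3, 25)) + rng.normal(scale=0.5, size=(120, 25))
rmse_by_k = tune_k(X_complete)
best_k = min(rmse_by_k, key=rmse_by_k.get)
```

Repeating the masking with several seeds and averaging the RMSE per k makes the choice less sensitive to a single random mask.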

Comparative Performance Data

The following table summarizes general, qualitative findings reported in benchmark studies of imputation methods for multi-omics data.

Table 1: Benchmark Comparison of Imputation Methods for Multi-Omics Data

| Method | Typical Use Case | Relative Computational Cost | Handles Mixed Data Types? | Preserves Data Structure & Variance? | Key Consideration for Multi-Omics |

|---|---|---|---|---|---|

| k-NN Impute | Smaller datasets, MCAR/MAR* missingness. | Low to Moderate | Yes (with Gower/Podani distance) | Moderate (can smooth out extremes) | Distance metric choice is critical; suffers from "curse of dimensionality". |

| Random Forest (as a predictor for imputation) | Complex, non-linear relationships, any data type. | High | Yes (natively) | High | Can overfit on small sample sizes; excellent for capturing interactions. |

| MissForest (Iterative RF) | High-dimensional data, complex patterns, MAR* missingness (MNAR requires additional modeling). | Very High | Yes (natively) | High (but can shrink variance; see Q2) | Iterative process is computationally intensive but often top-performing. |

| Mean/Median/Mode | Baseline, initial step for high missingness. | Very Low | No (separate models needed) | Poor (severely reduces variance) | Not recommended for final analysis due to bias introduction. |

*MCAR: Missing Completely at Random, MAR: Missing at Random, MNAR: Missing Not at Random.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Multi-Omics Imputation

| Tool / Reagent | Function / Purpose | Example in Python/R |

|---|---|---|

| Normalization & Scaling Suite | Pre-processes features to comparable scales, essential for distance-based methods like k-NN. | sklearn.preprocessing.StandardScaler (Python), scale() (R) |

| Advanced Distance Metric | Calculates dissimilarity between samples with mixed continuous, categorical, and ordinal data (common in multi-omics). | gower.gower_matrix() (Python), daisy() in cluster package (R) |

| Iterative Model Engine | The core algorithm that iteratively imputes missing values using a predictive modeling approach. | sklearn.ensemble.RandomForestRegressor/Classifier (Python), missForest package (R) |

| Error Metric Calculator | Quantifies imputation accuracy during method tuning and validation. | sklearn.metrics.mean_squared_error (Python), Metrics::rmse() (R) |

| Missingness Pattern Visualizer | Diagnoses the mechanism of missing data (MCAR, MAR, MNAR) before selecting an imputation strategy. | missingno.matrix() (Python), naniar::geom_miss_point() (R) |

| High-Performance Computing (HPC) Cluster / Cloud Credits | Provides the necessary computational power for running iterative methods like MissForest on large multi-omics matrices. | AWS, Google Cloud, Azure, or local Slurm cluster access. |

Experimental Workflow & Pathway Diagrams

Title: Workflow for Multi-Omics Data Imputation with k-NN and MissForest

Title: Bias Loop in Imputation and Downstream Analysis

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions (FAQs)

Q1: When using an autoencoder for imputing missing multi-omics values, my model converges but the imputed values show unrealistically low variance. What is the cause and solution? A: This is a common symptom of posterior collapse or an over-regularized latent space. The model learns to ignore the latent variables, outputting the mean. Solutions include:

- Reduce the bottleneck size gradually and monitor reconstruction loss on a validation set with artificial missing masks.

- Adjust the weighting between the reconstruction loss and any regularization term (e.g., KL divergence in a VAE). Start with a very low regularization weight.

- Use a more complex decoder architecture or introduce dropout in the early encoder layers to prevent the encoder from taking an "easy shortcut."

Q2: My GAN (Generative Adversarial Network) for generating synthetic multi-omics profiles fails to converge; the generator loss goes to zero while the discriminator loss remains high. What's wrong? A: This indicates mode collapse and a failing discriminator. The generator finds a single, plausible output that fools the discriminator. Troubleshooting steps:

- Implement Wasserstein GAN with Gradient Penalty (WGAN-GP): This uses a critic (not a classifier) with a Lipschitz constraint, leading to more stable training.

- Apply spectral normalization to both generator and discriminator layers.

- Monitor generated samples throughout training. Use paired omics data visualizations (e.g., t-SNE) to check for diversity.

- Ensure your discriminator is not too weak. Temporarily increase its capacity or learning rate relative to the generator.

Q3: When applying netNMF-sc to single-cell multi-omics data with missing entries, the algorithm fails to complete or returns 'NaN' values. How do I resolve this? A: This is typically due to improper initialization or invalid input matrices containing all-zero rows/columns after preprocessing.

- Preprocessing Check: Ensure no feature (gene/peak) has zero counts across all cells. Filter these out. Log-transform and normalize data appropriately before input.

- Initialization: Use SVD-based initialization (init='svd') rather than random initialization for more stability. Alternatively, run multiple random initializations and select the one with the lowest objective function value.

- Parameter Tuning: The hyperparameter alpha (α), which controls network regularization strength, may be set too high. Start with α=0 and increase it incrementally. Refer to the parameter table below for guidance.

- Missing Value Mask: Confirm that your missing value mask (mask) is a binary matrix of the same shape as the input, where 1 indicates an observed value and 0 indicates a missing one.

Q4: How do I choose between an autoencoder, a GAN, and netNMF-sc for my specific multi-omics missing data problem? A: The choice depends on data scale, structure, and goal.

- Autoencoders (VAEs): Best for continuous, high-dimensional data (e.g., RNA-seq, proteomics). They provide a probabilistic framework and a direct, fast imputation pathway. Use when you need a compressed latent representation for downstream tasks.

- GANs: Ideal for generating realistic, synthetic multi-omics profiles to augment small datasets or create a fully imputed cohort. More complex to train but can capture complex joint distributions.

- netNMF-sc: Specifically designed for single-cell multi-omics data (e.g., CITE-seq, SHARE-seq). It excels when you have linked measurements (cells measured for multiple modalities) and unlinked features. It jointly factorizes matrices while leveraging a cell similarity network.

Comparison of Model Characteristics for Missing Value Imputation

| Aspect | Autoencoder (e.g., VAE) | GAN (e.g., GAIN) | netNMF-sc |

|---|---|---|---|

| Primary Strength | Efficient latent representation learning; probabilistic imputation. | Captures complex, high-dimensional data distributions. | Integrates network biology; designed for sparse, linked single-cell data. |

| Output | Deterministic or distributional imputations. | Synthetic data samples that can be used for imputation. | Factor matrices (cell clusters & feature modules) used to reconstruct data. |

| Training Stability | Generally stable with proper regularization. | Can be unstable; requires careful tuning (use WGAN-GP). | Stable with proper initialization and hyperparameter (α) selection. |

| Best For Data Type | Bulk or single-cell omics (continuous). | Bulk omics with complex co-variance structures. | Single-cell multi-omics with paired and unpaired features. |

| Key Hyperparameter | Bottleneck dimension, KL loss weight. | Learning rate ratio (D:G), gradient penalty coefficient (λ). | Rank (k), network regularization weight (α). |

Detailed Experimental Protocols

Protocol 1: Variational Autoencoder (VAE) for Multi-Omics Imputation

Objective: Impute missing values in a bulk multi-omics dataset (e.g., RNA-seq and DNA methylation).

- Data Preparation: Normalize each omics dataset separately (e.g., log2(CPM+1) for RNA, M-values for methylation). Concatenate features horizontally into a matrix X (samples x features). Introduce an artificial missing mask M for validation (e.g., randomly mask 10% of observed values).

- Model Architecture:

- Encoder: Two fully connected (FC) layers with ReLU activation, mapping the input to the mean (μ) and log-variance (log σ²) vectors of the latent space.

- Sampling: Use the reparameterization trick: z = μ + ε * exp(0.5 * log σ²), where ε ~ N(0,1).

- Decoder: Two FC layers mapping z to a reconstruction of the original input dimension, with ReLU on the hidden layer and a linear output layer (M-values and standardized data can be negative).

- Loss Function: Total Loss = Reconstruction Loss (Mean Squared Error on observed entries) + β * KL Divergence Loss (between q(z|X) and N(0,1)). Start with β=0.0001.

- Training: Train using Adam optimizer for 500 epochs. Use the validation mask to monitor imputation error (MSE) and prevent overfitting.

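- Imputation: For a sample with missing values, fill the missing entries with a placeholder (e.g., zero after standardization, or the feature mean). Pass the sample through the trained VAE and use the decoder's output at the missing positions as the imputed values.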
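The loss in this protocol can be written out explicitly. The NumPy sketch below computes two terms:

- The masked reconstruction term: mean squared error over observed entries only.
- The closed-form KL divergence between a diagonal Gaussian q(z|X) = N(μ, σ²) and N(0, I): KL = -0.5 Σ(1 + log σ² - μ² - σ²).

In a real model, these would be computed on framework tensors (PyTorch or TensorFlow) so that gradients can flow. The function names and averaging conventions are assumptions.

```python
import numpy as np


def reparameterize(mu, logvar, rng):
    """Reparameterization trick: z = mu + eps * exp(0.5 * logvar), eps ~ N(0, 1)."""
    return mu + rng.standard_normal(mu.shape) * np.exp(0.5 * logvar)


def masked_vae_loss(x, x_hat, obs_mask, mu, logvar, beta=1e-4):
    """Masked MSE on observed entries + beta * KL(N(mu, sigma^2) || N(0, I)).

    x, x_hat, obs_mask: (batch, features); obs_mask is True where x is observed,
    so NaN placeholders at missing positions never enter the loss.
    mu, logvar: (batch, latent) encoder outputs.
    """
    diff = np.where(obs_mask, x - x_hat, 0.0)
    recon = np.sum(diff ** 2) / max(int(np.sum(obs_mask)), 1)             # mean over observed entries
    kl_per_sample = -0.5 * np.sum(1 + logvar - mu ** 2 - np.exp(logvar), axis=1)
    kl = kl_per_sample.mean()                                             # averaged over the batch
    return recon + beta * kl, recon, kl


x = np.array([[1.0, np.nan], [0.5, 2.0]])
obs = ~np.isnan(x)
mu, logvar = np.zeros((2, 3)), np.zeros((2, 3))
z = reparameterize(mu, logvar, np.random.default_rng(0))                # latent draw, shape (2, 3)
total, recon, kl = masked_vae_loss(x, np.nan_to_num(x), obs, mu, logvar)
```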

Protocol 2: Running netNMF-sc on Single-Cell Multi-Omics Data

Objective: Impute and jointly analyze paired single-cell RNA-seq and ATAC-seq data.

- Input Construction:

- Matrix Y1: scRNA-seq matrix (cells x genes). Normalize (e.g., log1p(TP10K)).

- Matrix Y2: scATAC-seq peak matrix (cells x peaks). Binarize or use TF-IDF transformation.

- Network A: A cell similarity graph (e.g., k-nearest neighbor graph) computed from a preliminary dimensionality reduction (PCA on Y1 or combined data).

- Model Fitting: Use the netNMF-sc function (Python/R). Critical parameters:

- k (rank): Start with k=20; use cross-validation or an elbow plot of reconstruction error to choose.

- alpha (α): Network regularization parameter. Start with alpha=1, then tune.

- init: Use 'svd' for stability.

- Command (Conceptual): The fit returns W, the shared cell factor matrix, and H1 and H2, the modality-specific feature matrices.

- Imputation & Analysis: The reconstructed matrices W·H1ᵀ and W·H2ᵀ are the imputed/factored matrices. Use W for cell clustering and H1/H2 for identifying co-modulated genes and peaks.
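The factorization logic can be illustrated with a generic graph-regularized NMF that scores only observed entries. This is not the netNMF-sc package or its API. It is a hypothetical single-matrix sketch using multiplicative updates in the style of graph-regularized NMF (GNMF), minimizing ||M ∘ (Y - WHᵀ)||² + α·tr(WᵀLW). Here M is the observation mask and L = D - A is the Laplacian of a cell similarity graph A. For two modalities, the same W would be shared across Y1 and Y2, with separate H1 and H2.

```python
import numpy as np


def masked_graph_nmf(Y, mask, A, k=10, alpha=1.0, n_iter=300, seed=0, eps=1e-10):
    """Graph-regularized NMF fitted to observed entries only (hypothetical sketch).

    Minimizes ||mask * (Y - W H^T)||_F^2 + alpha * tr(W^T L W), L = D - A, using
    multiplicative updates. Y: cells x features (non-negative); mask: 1 = observed;
    A: symmetric, non-negative cell-cell similarity matrix.
    """
    rng = np.random.default_rng(seed)
    n, p = Y.shape
    W = rng.random((n, k)) + eps                   # cell factors
    H = rng.random((p, k)) + eps                   # feature factors
    Yo = np.where(mask > 0, Y, 0.0)                # missing entries contribute nothing
    D = np.diag(A.sum(axis=1))                     # degree matrix of the cell graph
    for _ in range(n_iter):
        R = mask * (W @ H.T)                       # current reconstruction on observed entries
        W *= (Yo @ H + alpha * (A @ W)) / (R @ H + alpha * (D @ W) + eps)
        R = mask * (W @ H.T)
        H *= (Yo.T @ W) / (R.T @ W + eps)
    return W, H


rng = np.random.default_rng(0)
W_true, H_true = rng.random((50, 3)), rng.random((30, 3))
Y = W_true @ H_true.T                              # hypothetical non-negative data
mask = (rng.random(Y.shape) > 0.2).astype(float)   # ~20% of entries treated as missing
sq_dist = ((W_true[:, None, :] - W_true[None, :, :]) ** 2).sum(axis=-1)
A = np.exp(-sq_dist)                               # hypothetical Gaussian-kernel cell graph
np.fill_diagonal(A, 0.0)

W, H = masked_graph_nmf(Y, mask, A, k=3, alpha=0.01, n_iter=500)
Y_imputed = np.where(mask > 0, Y, W @ H.T)         # fill only the missing entries
```

A larger alpha pulls the factor rows of similar cells closer together, which is the lever that the α tuning advice above refers to.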

Visualizations

VAE for Missing Data Imputation Workflow

netNMF-sc Matrix Factorization Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-Omics Imputation Experiments |
|---|---|
| Python Libraries (scikit-learn, TensorFlow/PyTorch, scanpy) | Provide foundational algorithms, deep learning frameworks, and single-cell data structures for implementing and testing autoencoders, GANs, and preprocessing. |
| netNMF-sc Software Package (R/Python) | The specific implementation of the netNMF-sc algorithm, required for network-regularized matrix factorization on single-cell multi-omics data. |
| Benchmark Datasets (e.g., PBMC CITE-seq from 10x Genomics) | Well-characterized public multi-omics datasets with minimal missingness, used as gold standards to artificially introduce missing values and validate imputation performance. |
| Imputation Metrics (RMSE, MAE, PCC) | Quantitative measures to compare imputed vs. originally observed (held-out) values. Critical for tuning model hyperparameters and benchmarking. |
| Graph Construction Tool (e.g., Scanpy's sc.pp.neighbors) | Used to build the cell similarity network (A) required as input for netNMF-sc, typically from PCA on gene expression data. |
| High-Performance Computing (HPC) or Cloud GPU | Essential for training deep learning models (VAEs, GANs) on large multi-omics datasets within a reasonable timeframe. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I am working with multi-omics proteomics data with >30% missing values (MNAR). Which imputation method is most appropriate, and why does mean imputation produce biologically unrealistic results?

A: For Missing Not At Random (MNAR) data common in proteomics (e.g., values missing because they fall below the detection limit), simple mean/median imputation is inappropriate: it fills the gaps with mid-range values even though the true values lie at the low end, which severely distorts the distribution and covariance structure and leads to false downstream conclusions. Recommended methods include:

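- Left-censored MNAR imputation: qrnn in R, or sklearn.impute.IterativeImputer with a tailored function.

- Minimum Value / Constant Imputation: A baseline method, often using a value derived from the minimum observed values per column.

- missForest (R) / IterativeImputer with RandomForest (Python): Can model complex, non-linear relationships.

- Protocol for IterativeImputer with RandomForest for MNAR:

- Environment: Python with scikit-learn>=1.3, numpy, pandas. IterativeImputer is experimental in scikit-learn, so run from sklearn.experimental import enable_iterative_imputer before from sklearn.impute import IterativeImputer; the estimator comes from from sklearn.ensemble import RandomForestRegressor.

- Setup: Create an imputer instance: imputer = IterativeImputer(estimator=RandomForestRegressor(n_estimators=100, random_state=42), max_iter=20, random_state=42, skip_complete=True).

- Pre-fit (optional): Consider fitting the imputer on a representative subset of your data or on a public dataset from the same platform.

- Transform: Apply to your data with imputed_data = imputer.fit_transform(your_dataframe). If you pre-fitted the imputer, use imputer.transform(your_dataframe) instead, because fit_transform refits it.

- Validation: Perform a statistical sanity check (e.g., compare distributions of observed vs. imputed values for a few features). IterativeImputer predicts each feature from the others, which is a MAR-style assumption; for left-censored features the imputed values should sit at the low end of the observed range, and if they do not, prefer a left-censored method.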

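The Minimum Value / Constant baseline and the distribution sanity check from the Validation step can be sketched in a few lines of NumPy. This sketch assumes a samples × features matrix of positive, non-log-transformed intensities with NaN for missing values, and uses half of each feature's minimum observed value as the fill value; that half-minimum rule is one common convention, not the only one. The function names are hypothetical.

```python
import numpy as np

def half_min_impute(X):
    """Fill NaNs in each feature (column) with half its minimum observed value."""
    X = np.array(X, dtype=float, copy=True)
    fill = 0.5 * np.nanmin(X, axis=0)  # assumes positive, non-log intensities
    rows, cols = np.where(np.isnan(X))
    X[rows, cols] = fill[cols]
    return X

def observed_vs_imputed_medians(X_orig, X_imp):
    """Per feature: (median of observed values, median of imputed values or NaN)."""
    miss = np.isnan(np.asarray(X_orig, dtype=float))
    summary = []
    for j in range(miss.shape[1]):
        obs = X_imp[~miss[:, j], j]
        imp = X_imp[miss[:, j], j]
        summary.append((np.median(obs), np.median(imp) if imp.size else np.nan))
    return np.array(summary)
```

For left-censored data, the imputed medians should sit below the observed medians; mean imputation would instead place them in the middle of the observed range.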

Q2: After imputing my metabolomics dataset, my PCA and clustering results are dominated by the imputation method artifact. How can I diagnose and mitigate this?

A: This indicates the imputation method is introducing strong, systematic bias.

- Diagnosis: Perform PCA on only the complete cases (no missing values). Then perform PCA on the imputed dataset. If the explained variance and component loadings are drastically different, imputation artifacts are likely.

- Mitigation Strategy:

- Use a Multi-Method Workflow: Implement 2-3 different imputation methods (e.g., KNN, MICE, Bayesian PCA).

- Apply Downstream Analysis Separately: Run your differential analysis or clustering on each imputed dataset.

- Perform Results Integration: Use consensus clustering or vote on stable features across results to identify robust signals.

- Experimental Protocol for Consensus Analysis:

- Impute dataset using Method A (e.g., KNN), Method B (e.g., MICE), Method C (e.g., SoftImpute).

- For each method, perform t-test/Wilcoxon rank test for case vs control.

- Record the list of significant features (p<0.05, ideally after multiple-testing correction such as Benjamini-Hochberg) from each method.

- Identify the intersection of significant features across all methods. These are your high-confidence results.
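The intersection step, and the looser "vote on stable features" variant from the mitigation strategy, reduce to counting how often each feature passes the threshold. A minimal sketch in plain Python, assuming the per-method p-values have already been computed (for example by a t-test or Wilcoxon test on each imputed dataset); the method names and the helper consensus_features are hypothetical.

```python
def consensus_features(pvals_by_method, alpha=0.05, min_votes=None):
    """Return features significant (p < alpha) in at least min_votes methods.

    pvals_by_method: dict mapping method name -> {feature: p-value}.
    min_votes defaults to the number of methods, i.e. the strict intersection.
    """
    if min_votes is None:
        min_votes = len(pvals_by_method)
    votes = {}
    for pvals in pvals_by_method.values():
        for feature, p in pvals.items():
            if p < alpha:
                votes[feature] = votes.get(feature, 0) + 1
    return sorted(f for f, v in votes.items() if v >= min_votes)
```

Setting min_votes to the number of methods reproduces the strict intersection in the protocol; a lower value implements majority voting.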

Q3: How do I handle imputation in a tidymodels workflow for a predictive model without data leakage?

A: The recipes package within tidymodels is designed for this. You must fit preprocessing steps (including imputation) on the training set only and apply that fitted recipe to the testing set.

- Code Protocol:

- Create Recipe on Training Data: impute_recipe <- recipe(target ~ ., data = train_data) %>% step_impute_knn(all_predictors(), neighbors = 5).

- Prep (Fit) the Recipe: fitted_recipe <- prep(impute_recipe, training = train_data). This step estimates everything the imputation needs from the training data only; for KNN, that is the pool of training rows used as neighbors.

- Bake (Transform) Both Sets: train_baked <- bake(fitted_recipe, new_data = train_data); test_baked <- bake(fitted_recipe, new_data = test_data). The test set is imputed using the patterns learned from the training set, preventing leakage.

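The same train-only principle applies outside tidymodels. As a minimal Python analogue, the sketch below stores the training rows at fit time and imputes any new matrix only from those rows, so test-set values never enter the neighbor pool. It is not how recipes implements step_impute_knn (which uses its own distance measure); the class name TrainOnlyKNNImputer and the mean-squared-difference distance over shared observed features are assumptions for illustration.

```python
import numpy as np

class TrainOnlyKNNImputer:
    """Hypothetical minimal KNN imputer whose neighbor pool is fixed at fit time."""

    def __init__(self, k=5):
        self.k = k

    def fit(self, X_train):
        # Store a copy of the training rows; nothing from later data is added
        self.pool_ = np.array(X_train, dtype=float, copy=True)
        return self

    def transform(self, X):
        X = np.array(X, dtype=float, copy=True)
        pool = self.pool_
        for i in range(X.shape[0]):
            missing = np.isnan(X[i])
            if not missing.any():
                continue
            observed = ~missing
            # Mean squared difference over features observed in both rows
            diff = (pool[:, observed] - X[i, observed]) ** 2
            n_shared = np.sum(~np.isnan(diff), axis=1)
            dist = np.where(n_shared > 0,
                            np.nansum(diff, axis=1) / np.maximum(n_shared, 1),
                            np.inf)
            for j in np.where(missing)[0]:
                candidates = np.where(~np.isnan(pool[:, j]))[0]
                if candidates.size == 0:
                    continue  # no training value to borrow; leave NaN
                order = np.argsort(dist[candidates], kind="stable")[: self.k]
                X[i, j] = pool[candidates[order], j].mean()
        return X
```

Calling fit on the training matrix and transform on both matrices mirrors the prep/bake split above.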

Q4: What are the best practices for benchmarking multiple imputation methods on my specific genomics dataset before final analysis?

A: Implement a simulation-based validation study.

- Core Protocol:

- Create a "Complete" Dataset: Start with a subset of your data that has no missing values (complete_subset).

- Artificially Introduce Missingness: Randomly remove values (e.g., 10%, 20%) under a defined mechanism (MCAR, MAR). A function like prodNA from R's missForest package covers the MCAR case (values removed completely at random); MAR requires making the removal probability depend on observed variables.

- Apply Candidate Imputation Methods: Impute the artificially degraded dataset using each method you wish to test (e.g., Mean, KNN, MICE, MissForest).

- Calculate Performance Metrics: Compare the imputed values against the known, original values (e.g., NRMSE, PCC).

- Repeat: Perform multiple iterations (e.g., 50) with different random masks for robustness.

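The simulation loop can be expressed compactly. A minimal NumPy sketch, assuming a complete numeric matrix (samples × features) and imputers written as functions that take a matrix with NaNs and return a filled matrix. Column mean and median imputation stand in for the real candidates (KNN, MICE, missForest), and NRMSE is taken here as the RMSE over the hidden entries divided by the standard deviation of their true values, one of several normalizations in use. Only the MCAR mechanism is simulated, and all function names are hypothetical.

```python
import numpy as np

def nrmse(true, imputed, mask):
    """RMSE on masked entries divided by the std of the true masked values."""
    err = imputed[mask] - true[mask]
    return np.sqrt(np.mean(err ** 2)) / np.std(true[mask])

def column_mean_impute(X):
    return np.where(np.isnan(X), np.nanmean(X, axis=0), X)

def column_median_impute(X):
    return np.where(np.isnan(X), np.nanmedian(X, axis=0), X)

def benchmark(complete, methods, rate=0.1, n_repeats=50, seed=0):
    """Mean and std of NRMSE per method over repeated MCAR masks."""
    rng = np.random.default_rng(seed)
    scores = {name: [] for name in methods}
    for _ in range(n_repeats):
        mask = rng.random(complete.shape) < rate  # MCAR: every entry equally likely
        degraded = np.where(mask, np.nan, complete)
        for name, impute in methods.items():
            scores[name].append(nrmse(complete, impute(degraded), mask))
    return {name: (np.mean(s), np.std(s)) for name, s in scores.items()}
```

Running benchmark at several rates (e.g., 0.1 and 0.2) gives the per-rate comparison the protocol calls for.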

Performance Benchmarking Table

Table 1: Common Imputation Methods & Their Benchmarking Results (Simulated MCAR on Gene Expression Data).

| Imputation Method | Package/Function | Average NRMSE | Average PCC | Speed (s) on 1000 × 500 matrix | Suitability for MNAR |
|---|---|---|---|---|---|
| Mean/Median | sklearn.impute.SimpleImputer, recipes::step_impute_mean() | 0.45 | 0.10 | <1 | Poor |
| K-Nearest Neighbors | sklearn.impute.KNNImputer, recipes::step_impute_knn() | 0.25 | 0.75 | ~15 | Fair |
| Iterative/MICE | sklearn.impute.IterativeImputer, mice (R) | 0.20 | 0.82 | ~120 | Good |
| Random Forest | missForest (R) | 0.18 | 0.88 | ~300 | Good |
| SoftImpute | softImpute (R), fancyimpute.SoftImpute | 0.22 | 0.80 | ~45 | Fair |
| Bayesian PCA | pcaMethods::bpca() (R) | 0.21 | 0.83 | ~60 | Good |

NRMSE: Normalized Root Mean Square Error (lower is better). PCC: Pearson Correlation Coefficient between imputed and true values (higher is better). Speed is illustrative; varies by hardware and implementation.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Packages for Multi-Omics Imputation.

| Item | Function | Primary Use Case |
|---|---|---|
| scikit-learn (Python) | Unified framework for SimpleImputer, KNNImputer, IterativeImputer. | General-purpose, integration into ML pipelines. |
| tidymodels + recipes (R) | Preprocessing engine for leak-proof imputation within modeling workflows. | Predictive modeling with tidy data principles. |
| missForest (R) | Non-parametric imputation using Random Forests. | Complex, non-linear data (e.g., metabolomics, proteomics). |
| mice (R) | Multiple Imputation by Chained Equations (MICE). | Creating multiple plausible datasets for statistical rigor. |
| pcaMethods (R/Bioconductor) | Implements BPCA, PPCA, SVDimpute. | Multi-omics integration, microarray data. |
| impute (R/Bioconductor) | KNN imputation optimized for bioinformatics data. | Genomic data matrices (e.g., gene expression). |
| fancyimpute (Python) | Includes Matrix Factorization, SoftImpute. | Exploratory analysis on medium-large datasets. |
| Impyute (Python) | Benchmarking suite and multiple algorithms. | Comparative evaluation of imputation methods. |

Workflow Diagrams

Title: Multi-Method Imputation & Consensus Analysis Workflow

Title: Leakage-Free Imputation in a Tidymodels Pipeline

Beyond Defaults: Practical Troubleshooting and Optimization for Reliable Imputation

This technical support center addresses key issues encountered when handling missing values in multi-omics data integration, a critical step for researchers, scientists, and drug development professionals.

Troubleshooting Guides & FAQs

Q1: After imputation, my downstream analysis (e.g., differential expression) shows an inflated number of significant hits. What went wrong?

A: This is a classic sign of Over-Imputation. Imputing too many missing values, especially with complex models, can create an artificially clean dataset that reduces noise unrealistically, leading to false positives. The imputation algorithm may have been applied to features with an excessively high missing rate.

- Diagnosis: Start from a complete dataset, introduce missing values artificially, and impute them. Compare the number of significant features (p<0.05) in the original complete data with the number after imputation. A dramatic increase post-imputation is a red flag.

- Solution: Apply a missing value threshold per feature (e.g., >20% missing) and filter out those features before imputation. Use less aggressive imputation methods (e.g., k-NN with a small k) and validate stability with multiple imputation.
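The per-feature missing-rate filter can be applied before any imputation. A minimal NumPy sketch, assuming a samples × features matrix with NaN for missing values; the default cutoff mirrors the 20% example above, and the function name is hypothetical.

```python
import numpy as np

def filter_by_missing_rate(X, feature_names, max_missing=0.2):
    """Drop features (columns) whose fraction of missing values exceeds max_missing."""
    X = np.asarray(X, dtype=float)
    rate = np.isnan(X).mean(axis=0)  # fraction of NaNs per feature
    keep = rate <= max_missing
    kept = [name for name, k in zip(feature_names, keep) if k]
    return X[:, keep], kept, rate
```

Returning the per-feature rates as well makes it easy to report how many features were removed and why.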

Q2: How can I check if my imputation method has distorted the natural variance structure of my data?

A: Distortion of Variance occurs when an imputation method over-smooths or under-represents the true biological variability.

- Diagnosis Protocol:

- For each sample/condition, artificially introduce missing values (e.g., 5-10%) into a complete dataset (a subset with no missing values).

- Impute the introduced missing values using your chosen method.

- Calculate the variance for each feature in the original complete data and the imputed data.

- Plot the variances against each other (see diagram below). Points deviating from the y=x line indicate variance distortion.

Table 1: Variance Comparison Pre- and Post-Imputation (Simulated Example)

| Feature ID | Original Variance (Log2) | Imputed Variance (Log2) | Variance Ratio (Imputed/Original) |
|---|---|---|---|
| Gene_A | 1.85 | 1.22 | 0.66 |
| Gene_B | 0.92 | 0.91 | 0.99 |
| Gene_C | 2.41 | 3.10 | 1.29 |
| Protein_X | 1.50 | 1.01 | 0.67 |
| Metabolite_Y | 3.20 | 3.25 | 1.02 |
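The diagnosis protocol and the variance ratio column of Table 1 can be computed directly. A minimal NumPy sketch, assuming a complete samples × features matrix and an imputer passed in as a function; column mean imputation is used only as a deliberately naive example, since it is known to shrink variance. Ratios below 1 correspond to points under the y=x line in the variance plot. Function names are hypothetical.

```python
import numpy as np

def column_mean_impute(X):
    """Deliberately naive imputer: fill each feature with its observed mean."""
    return np.where(np.isnan(X), np.nanmean(X, axis=0), X)

def variance_ratios(complete, impute, rate=0.1, seed=0):
    """Mask a complete matrix at random, impute it, and return per-feature
    variance ratios (imputed / original); < 1 means shrinkage, > 1 inflation."""
    rng = np.random.default_rng(seed)
    mask = rng.random(complete.shape) < rate
    imputed = impute(np.where(mask, np.nan, complete))
    return imputed.var(axis=0, ddof=1) / complete.var(axis=0, ddof=1)
```

Plotting imputed.var(axis=0) against complete.var(axis=0) reproduces the y=x comparison; the ratio is simply the vertical distance from that line on a log scale.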

Q3: My integrated multi-omics pathway analysis shows strong, novel cross-omics correlations post-imputation. Could these be artifacts?

A: Yes, they could be False Biological Signals introduced by the imputation algorithm itself, especially if the method borrows information across samples or features inappropriately.

- Diagnosis & Mitigation Workflow:

- Segment Data: Split data by biological condition or batch.

- Impute Independently: Perform imputation separately on each segment.

- Compare: Check if the strong cross-omics correlations persist within each biologically homogeneous segment. Correlations that only appear in the pooled, imputed data are likely artifacts.

- Use Informed Methods: Employ left-censored (MNAR-aware) imputation like QRILC for proteomics/metabolomics, rather than assuming the data are Missing at Random (MAR).
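The segment-and-compare check can be illustrated on synthetic data. The NumPy sketch below assumes two features measured across samples labelled by segment (condition or batch), imputes each segment separately (segment mean imputation is a placeholder for your actual method), and reports the Pearson correlation within each segment and in the pooled data. The function names and the toy data are hypothetical.

```python
import numpy as np

def mean_impute_1d(v):
    v = np.array(v, dtype=float, copy=True)
    v[np.isnan(v)] = np.nanmean(v)
    return v

def segment_vs_pooled_correlation(x, y, segments):
    """Impute x and y within each segment, then return Pearson r per segment and pooled."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    segments = np.asarray(segments)
    x_imp, y_imp = np.empty_like(x), np.empty_like(y)
    result = {}
    for s in np.unique(segments):
        idx = segments == s
        x_imp[idx] = mean_impute_1d(x[idx])
        y_imp[idx] = mean_impute_1d(y[idx])
        result[s] = np.corrcoef(x_imp[idx], y_imp[idx])[0, 1]
    result["pooled"] = np.corrcoef(x_imp, y_imp)[0, 1]
    return result
```

With two independent features whose means shift together between segments, the pooled correlation is strong while each within-segment correlation stays near zero, which is the pattern the workflow flags as an artifact.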

Diagnostic Workflow for Variance Distortion

Experimental Protocols for Validating Imputation

Protocol: Benchmarking Imputation Methods in Multi-Omics Data

Objective: To quantitatively evaluate the performance of different imputation methods and select the least biased one for a given dataset.

- Input: A multi-omics dataset (e.g., Transcriptomics, Proteomics).

- Create a Gold-Standard Subset: Identify a subset of features (genes/proteins) with no missing values across a set of samples.

- Introduce Artificial Missingness: Randomly remove values in the gold-standard subset at varying rates (5%, 10%, 20%) following different patterns (MCAR, MNAR-simulated).

- Apply Candidate Imputation Methods: Impute the artificially missing values using methods under test (e.g., Mean, k-NN, SVD, MissForest, BPCA).

- Calculate Performance Metrics:

- Normalized Root Mean Square Error (NRMSE): Measures accuracy for continuous data.

- Proportion of Falsely Altered Significant Features: Apply a statistical test pre- and post-imputation; count features that change significance status.

- Statistical Comparison: Rank methods based on NRMSE and stability across multiple simulation runs.
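Two parts of this protocol are easy to get wrong: simulating MNAR rather than MCAR, and counting features whose significance status changes. A minimal NumPy sketch follows; the logistic censoring model (lower values are more likely to be hidden) is one assumed way to mimic detection-limit dropout, and the function names are hypothetical. The p-values are assumed to come from the same statistical test run on the gold-standard data and on the imputed data.

```python
import numpy as np

def mnar_left_censored_mask(X, rate, rng, steepness=2.0):
    """Hide entries with a probability that rises as the standardized value falls.

    The expected overall missing fraction is approximately `rate`.
    """
    z = (X - X.mean(axis=0)) / X.std(axis=0)
    weight = 1.0 / (1.0 + np.exp(steepness * z))  # logistic: low z -> high weight
    p = weight * rate / weight.mean()
    return rng.random(X.shape) < np.clip(p, 0.0, 1.0)

def count_significance_flips(p_before, p_after, alpha=0.05):
    """Number of features whose significance status (p < alpha) changes."""
    before = np.asarray(p_before) < alpha
    after = np.asarray(p_after) < alpha
    return int(np.sum(before != after))
```

Raising steepness makes the censoring sharper, closer to a hard detection limit; setting it to 0 recovers MCAR masking.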

Benchmarking Workflow for Imputation Methods

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Managing Missing Values in Multi-Omics

| Item / Solution | Function / Purpose | Example / Note |
|---|---|---|
| NAguideR (R package) | A systematic pipeline for evaluating and selecting missing value imputation methods for proteomics and metabolomics data. | Provides performance metrics (NRMSE, etc.) and visualization. |

scikit-learn SimpleImputer (Python) |