Multi-omics Data Preprocessing: A Comprehensive Guide to Standards, Tools, and Best Practices for Researchers

Abstract

This article provides a detailed, actionable guide to multi-omics data preprocessing, tailored for researchers, scientists, and drug development professionals. We explore the fundamental principles of integrating genomics, transcriptomics, proteomics, and metabolomics data, outlining critical standards for quality control, normalization, and batch correction. The guide delves into methodological workflows using popular tools and pipelines, addresses common troubleshooting and optimization challenges, and compares validation strategies to ensure robust, reproducible results. The goal is to equip practitioners with the knowledge to establish rigorous preprocessing standards that form the foundation for reliable downstream integrative analysis and translational insights.

The Bedrock of Integration: Foundational Concepts and Exploratory Analysis in Multi-omics Preprocessing

This whitepaper, framed within a broader thesis on Multi-omics data preprocessing standards research, provides a technical guide to the core data layers that constitute the multi-omics landscape. The integration of genomics, transcriptomics, proteomics, and metabolomics is revolutionizing systems biology and precision medicine, yet each layer presents distinct technological and analytical challenges that must be addressed for effective data fusion and interpretation. This document details these data types, their experimental acquisition, inherent complexities, and their role in constructing a coherent biological narrative.

Genomics: The Blueprint

Genomics involves the comprehensive study of an organism's complete set of DNA, including all of its genes. It provides the static blueprint, detailing genetic variants, mutations, and structural variations.

Key Experimental Protocol: Whole Genome Sequencing (WGS)

- Sample Preparation: Genomic DNA is extracted from tissue or blood using kits with silica-based membrane columns.

- Library Preparation: DNA is fragmented (e.g., via acoustic shearing), end-repaired, A-tailed, and ligated to platform-specific sequencing adapters. Fragments are size-selected.

- Amplification: Adapter-ligated fragments are PCR-amplified to create the final sequencing library.

- Sequencing: Libraries are loaded onto a sequencing platform (e.g., Illumina NovaSeq). Clusters are generated, and fluorescently labeled nucleotides are incorporated in a massively parallel sequencing-by-synthesis reaction.

- Data Output: Base calling generates FASTQ files containing reads and quality scores.
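
To make the FASTQ output concrete, the following minimal Python sketch parses four-line FASTQ records and decodes their quality strings. It assumes the Phred+33 encoding and unwrapped (single-line) sequences; the function names and the example record are illustrative and not part of any specific pipeline.

```python
import io
from typing import Iterator, List, Tuple


def read_fastq(handle) -> Iterator[Tuple[str, str, str]]:
    """Yield (read_id, sequence, quality_string) from a 4-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return  # end of stream
        seq = handle.readline().rstrip("\n")
        handle.readline()  # '+' separator line, ignored
        qual = handle.readline().rstrip("\n")
        if not header.startswith("@") or len(seq) != len(qual):
            raise ValueError(f"Malformed FASTQ record: {header!r}")
        yield header[1:], seq, qual


def phred_scores(quality: str, offset: int = 33) -> List[int]:
    """Convert ASCII quality characters to Phred scores (assumes Phred+33)."""
    return [ord(ch) - offset for ch in quality]


def fraction_q30(quality: str) -> float:
    """Fraction of bases in one read with a Phred score of at least 30."""
    scores = phred_scores(quality)
    return sum(s >= 30 for s in scores) / len(scores)


# Hypothetical single-record example
example = io.StringIO("@read1\nACGT\n+\nII5#\n")
records = list(read_fastq(example))
```

A Phred score Q corresponds to an estimated base-call error probability of 10^(-Q/10), so Q30 means roughly one error in 1,000 bases.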

Unique Challenges: Managing immense data volume (~100 GB per human genome); distinguishing true variants from sequencing artifacts; interpreting the functional impact of non-coding variants; ensuring consistent variant calling across pipelines.

Transcriptomics: The Dynamic Expression

Transcriptomics studies the complete set of RNA transcripts (mRNA, non-coding RNA) produced by the genome under specific conditions, reflecting dynamic gene expression.

Key Experimental Protocol: Bulk RNA-Sequencing

- RNA Extraction: Total RNA is isolated using guanidinium thiocyanate-phenol-chloroform extraction (e.g., TRIzol) or spin-column methods, with DNase treatment.

- Library Preparation: mRNA is enriched using poly-A selection or ribosomal RNA is depleted. RNA is fragmented, reverse-transcribed into cDNA, end-repaired, A-tailed, adapter-ligated, and PCR-amplified.

- Sequencing & Analysis: High-throughput sequencing is performed (similar to WGS). Reads are aligned to a reference genome, and transcript abundance is quantified (e.g., in FPKM or TPM units).
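
The TPM unit mentioned above can be illustrated with a short calculation. This is a sketch using NumPy, assuming a genes-by-samples count matrix and known effective lengths; the numbers are invented for illustration.

```python
import numpy as np


def counts_to_tpm(counts, lengths_bp):
    """Convert a genes x samples count matrix to TPM.

    counts: raw read counts, shape (n_genes, n_samples)
    lengths_bp: effective gene or transcript lengths in base pairs, shape (n_genes,)
    """
    counts = np.asarray(counts, dtype=float)
    lengths_kb = np.asarray(lengths_bp, dtype=float) / 1_000.0
    # Step 1: length-normalize to reads per kilobase
    rpk = counts / lengths_kb[:, None]
    # Step 2: scale each sample so that its values sum to one million
    return rpk / rpk.sum(axis=0, keepdims=True) * 1e6


# Hypothetical counts for three genes in two samples
counts = np.array([[100, 200], [300, 600], [600, 200]])
lengths = np.array([1000, 3000, 2000])
tpm = counts_to_tpm(counts, lengths)
```

Because length normalization happens before depth scaling, TPM values sum to the same total in every sample, which is not generally true of FPKM.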

Unique Challenges: RNA instability and rapid degradation; capturing full-length transcripts; accurately quantifying low-abundance transcripts; distinguishing biological from technical noise in expression levels; complex alternative splicing analysis.

Proteomics: The Functional Effectors

Proteomics identifies and quantifies the complete set of proteins, their post-translational modifications (PTMs), interactions, and structures, representing the functional machinery of the cell.

Key Experimental Protocol: Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS)

- Protein Extraction & Digestion: Proteins are extracted from lysed cells/tissues in a denaturing buffer. They are reduced, alkylated, and digested into peptides using trypsin.

- LC Separation: Peptides are separated by reverse-phase liquid chromatography based on hydrophobicity.

- MS Analysis: Eluted peptides are ionized (e.g., by electrospray) and analyzed in a mass spectrometer. A full MS1 scan identifies peptide ions, which are selected for fragmentation (MS2) to generate sequence spectra.

- Database Search: MS2 spectra are matched against a protein sequence database using search engines (e.g., MaxQuant, FragPipe) for identification and label-free or isobaric tag-based (e.g., TMT) quantification.

Unique Challenges: Immense dynamic range (>10^7) in protein abundance; lack of amplification methods; complexity of PTMs; difficulty in detecting low-abundance proteins; data-dependent acquisition stochasticity.

Metabolomics: The Metabolic Phenotype

Metabolomics targets the comprehensive analysis of small-molecule metabolites (<1.5 kDa), providing a snapshot of the physiological state and downstream output of cellular processes.

Key Experimental Protocol: Untargeted Metabolomics by LC-MS

- Metabolite Extraction: A biphasic solvent system (e.g., methanol/chloroform/water) is used to quench metabolism and extract a broad range of polar and non-polar metabolites.

- LC-MS Analysis: Extracts are analyzed using complementary LC methods (reverse-phase for hydrophobic, hydrophilic interaction for polar metabolites) coupled to high-resolution mass spectrometry.

- Data Processing: Raw data are converted, aligned, and features (m/z-retention time pairs) are detected. Features are annotated by matching to spectral libraries (e.g., GNPS, HMDB) based on mass, fragmentation pattern, and retention time.

Unique Challenges: Extreme chemical diversity of metabolites; lack of a universal extraction method; absence of a complete reference library for compound identification; rapid metabolite turnover; susceptibility to batch effects.

Comparative Analysis of Multi-omics Data Types

Table 1: Core Characteristics and Challenges of Omics Data Types

| Data Type | Measured Molecule | Core Technology | Typical Sample Input | Key Output Metrics | Primary Preprocessing Challenge |

|---|---|---|---|---|---|

| Genomics | DNA | NGS (e.g., Illumina) | 100-500 ng gDNA | Variants (SNPs, Indels), Coverage | Alignment, variant calling, batch correction |

| Transcriptomics | RNA | RNA-Seq | 10-1000 ng total RNA | Read Counts, FPKM/TPM | Alignment, quantification, normalization |

| Proteomics | Proteins/Peptides | LC-MS/MS | 1-100 µg protein/peptides | Spectral Counts, Intensity | Feature detection, database search, imputation |

| Metabolomics | Metabolites | LC/GC-MS, NMR | 10-100 µL serum/plasma | Peak Intensity, m/z/RT | Peak alignment, annotation, normalization |

Table 2: Quantitative Data Scale and Complexity

| Data Type | Approx. # of Features per Human Sample | Data Volume per Sample (Raw) | Temporal Resolution | Major Noise Sources |

|---|---|---|---|---|

| Genomics | ~3 billion bases (5M variants) | 70-100 GB (FASTQ) | Static (Lifetime) | Sequencing errors, PCR duplicates |

| Transcriptomics | ~60,000 genes/transcripts | 5-20 GB (FASTQ) | Minutes-Hours | RNA degradation, amplification bias |

| Proteomics | 10,000-20,000 proteins | 2-10 GB (RAW) | Minutes-Days | Ion suppression, missing data |

| Metabolomics | 1,000-10,000 features | 0.5-5 GB (RAW) | Seconds-Minutes | Ion drift, matrix effects, batch variation |

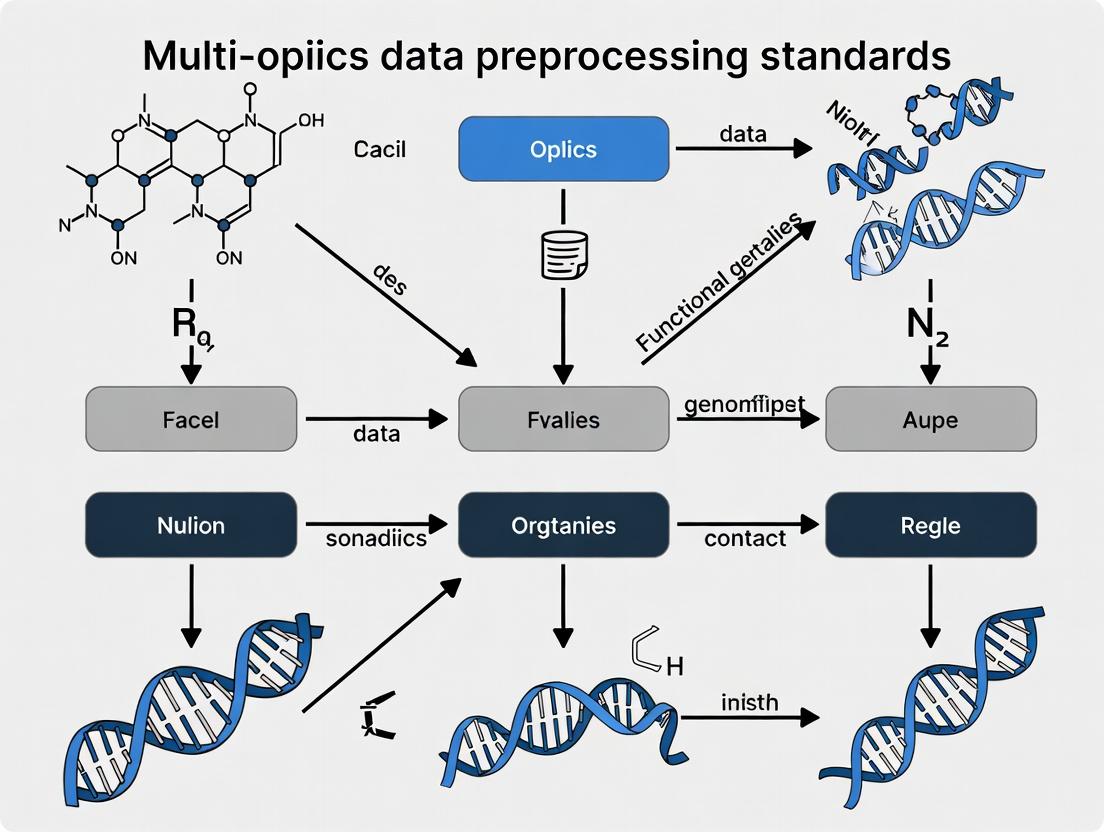

Visualizing the Multi-omics Workflow and Integration

Diagram: Multi-omics Data Generation and Integration Workflow

Diagram: Central Dogma to Omics Correlation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Materials for Multi-omics Experiments

| Reagent/Material | Supplier Examples | Function in Multi-omics Workflow |

|---|---|---|

| Poly-A Magnetic Beads | Thermo Fisher, NEB | Enrichment of eukaryotic mRNA from total RNA for RNA-Seq library prep. |

| Tn5 Transposase | Illumina, Diagenode | Enzyme for simultaneous fragmentation and adapter insertion (tagmentation) in NGS library prep (Nextera). |

| Trypsin, Sequencing Grade | Promega, Thermo Fisher | Protease for specific digestion of proteins into peptides for bottom-up proteomics. |

| TMTpro 16-plex Isobaric Labels | Thermo Fisher | Chemical tags for multiplexed quantitative comparison of up to 16 proteome samples in one MS run. |

| Matched MS/MS Spectral Libraries | NIST, SRMAtlas | Curated reference spectra for confident identification of peptides and metabolites. |

| Stable Isotope-Labeled Internal Standards | Cambridge Isotopes, Sigma | Spiked-in labeled metabolites/proteins for absolute quantification and correcting MS variation. |

| Silica-based DNA/RNA Extraction Kits | Qiagen, Zymo Research | Solid-phase purification of high-quality nucleic acids, essential for NGS. |

| Methanol (LC-MS Grade) | Fisher, Honeywell | High-purity solvent for metabolite extraction and mobile phase in LC-MS to minimize background. |

Within the broader thesis of multi-omics data preprocessing standards research, the establishment and strict adherence to standardized preprocessing protocols emerge as a foundational pillar. This in-depth technical guide examines the critical role of these standards in ensuring analytical validity and combating the reproducibility crisis pervasive in life sciences and drug development.

The Reproducibility Crisis: A Quantitative Perspective

A synthesis of recent studies quantifies the scope and financial impact of irreproducible research.

Table 1: Quantifying the Reproducibility Crisis in Biomedical Research

| Metric | Value | Source/Study Context |

|---|---|---|

| Irreproducible Preclinical Studies | > 50% | Systematic reviews in psychology, cancer biology |

| Estimated Annual Cost (USA) | ~$28 Billion | Freedman et al., PLoS Biology (2015) - Estimated waste from irreproducible preclinical research |

| Studies Replicating Landmark Papers | ~40% | Survey by Baker (2016) of replication attempts |

| Attribution to Data Analysis Issues | ~25% | Analysis of retraction notices and methodological reviews |

| Multi-omics Integration Failures Linked to Inconsistent Processing | ~30-40% | Meta-analysis of published integrative models (2020-2023) |

Foundational Preprocessing Standards by Omics Layer

Detailed methodologies for core preprocessing steps are essential for cross-platform reproducibility.

Experimental Protocol: RNA-Seq Read Processing & Quantification

This protocol outlines a standardized workflow for transcriptomic data.

- Raw Data QC & Adapter Trimming: Assess raw FASTQ files using FastQC (v0.12.0+). Trim adapter sequences and low-quality bases using Trimmomatic (PE settings, LEADING:20, TRAILING:20, SLIDINGWINDOW:4:20, MINLEN:36).

- Alignment: Align trimmed reads to the reference genome (e.g., GRCh38.p14) using a splice-aware aligner (STAR v2.7.10b) with recommended parameters for gene-level quantification (--quantMode GeneCounts).

- Quantification: Generate a gene count matrix directly from STAR output or using featureCounts (Subread v2.0.3). For transcript-level analysis, use Salmon (v1.10.0) in alignment-free mode with a decoy-aware transcriptome index.

- Normalization: Apply normalization appropriate for downstream analysis. For differential expression with count matrices, use methods like DESeq2's median-of-ratios or edgeR's TMM.

- Key Standard: Record the exact genome assembly, annotation version (e.g., GENCODE v44), and software versions with parameters.
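
One way to satisfy the recording requirement is to write a machine-readable provenance manifest alongside the count matrix. The sketch below is a hypothetical structure, not a community schema: the class and field names are assumptions, and the Trimmomatic version is deliberately left empty because the protocol above does not state one.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ToolRecord:
    version: str          # exact version string as reported by the tool
    parameters: str = ""  # non-default parameters used in the run


@dataclass
class RnaSeqProvenance:
    genome_assembly: str
    annotation: str
    tools: Dict[str, ToolRecord] = field(default_factory=dict)

    def unversioned_tools(self) -> List[str]:
        """Names of tools whose version was not recorded."""
        return sorted(name for name, rec in self.tools.items() if not rec.version)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2, sort_keys=True)


provenance = RnaSeqProvenance(
    genome_assembly="GRCh38.p14",
    annotation="GENCODE v44",
    tools={
        # Version intentionally blank: it is not given in the protocol text
        "Trimmomatic": ToolRecord("", "PE LEADING:20 TRAILING:20 SLIDINGWINDOW:4:20 MINLEN:36"),
        "STAR": ToolRecord("2.7.10b", "--quantMode GeneCounts"),
        "featureCounts": ToolRecord("Subread 2.0.3"),
        "Salmon": ToolRecord("1.10.0"),
    },
)
manifest = provenance.to_json()
```

A pipeline could refuse to publish results while `unversioned_tools()` is non-empty, which turns the standard into an enforceable check rather than a convention.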

Experimental Protocol: LC-MS/MS Proteomics Preprocessing

A standardized workflow for bottom-up proteomics data.

- Raw File Conversion: Convert vendor-specific raw files to an open format (e.g., .mzML) using MSConvert (ProteoWizard) with peak picking and vendor error detection enabled.

- Database Search: Search spectra against a concatenated target-decoy protein sequence database (e.g., UniProtKB Human reference + common contaminants) using search engines (e.g., MSFragger, MaxQuant). Standard Parameters: Trypsin/P digestion, up to 2 missed cleavages, fixed modification (Carbamidomethylation of C), variable modifications (Oxidation of M, Acetylation of protein N-term), precursor mass tolerance ±20 ppm, fragment mass tolerance ±0.05 Da.

- False Discovery Rate (FDR) Control: Apply a 1% FDR threshold at the PSM, peptide, and protein levels using the target-decoy approach.

- Label-Free Quantification (LFQ): Perform intensity extraction and normalization (e.g., in MaxQuant or with the proteus R package). Apply variance-stabilizing normalization and filter for proteins with valid values in ≥70% of samples per group.

- Data Deposition: Mandatory Standard: Deposit raw files, search parameters, and final output in a public repository like PRIDE, following MIAPE guidelines.
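
The target-decoy FDR step can be sketched in a few lines. This is a simplified illustration that estimates FDR as decoys divided by targets above each score threshold for a concatenated search; real search engines apply their own variants of this estimator, and the scores below are invented.

```python
from typing import List, Tuple


def target_decoy_qvalues(psms: List[Tuple[float, bool]]) -> List[Tuple[float, bool, float]]:
    """Assign q-values to PSMs given as (score, is_decoy); higher score is better.

    Returns (score, is_decoy, q_value) tuples sorted by descending score.
    """
    ranked = sorted(psms, key=lambda p: p[0], reverse=True)
    fdrs = []
    targets = decoys = 0
    for _, is_decoy in ranked:
        if is_decoy:
            decoys += 1
        else:
            targets += 1
        # Estimated FDR among targets accepted at this score threshold
        fdrs.append(decoys / max(targets, 1))
    # q-value: smallest FDR at this threshold or any more permissive one
    qvalues = []
    running_min = float("inf")
    for fdr in reversed(fdrs):
        running_min = min(running_min, fdr)
        qvalues.append(running_min)
    qvalues.reverse()
    return [(score, is_decoy, q) for (score, is_decoy), q in zip(ranked, qvalues)]


# Hypothetical PSM scores; True marks a decoy hit
psms = [(9.0, False), (8.0, False), (7.0, False), (6.0, True), (5.0, False), (4.0, True)]
scored = target_decoy_qvalues(psms)
accepted = [score for score, is_decoy, q in scored if not is_decoy and q <= 0.01]
```

In this toy example only the three top-scoring target PSMs pass the 1% threshold; the same logic would be repeated at the peptide and protein levels.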

Impact of Inconsistent Preprocessing on Downstream Analysis

Variations in preprocessing choices directly alter biological conclusions.

Table 2: Impact of Preprocessing Parameters on Differential Analysis Results

| Preprocessing Variable | Test Condition A | Test Condition B | Observed Effect on DE Results (Example) |

|---|---|---|---|

| RNA-Seq Normalization | TMM (edgeR) | Median-of-Ratios (DESeq2) | ~5-10% discrepancy in genes called significant at FDR<0.05 |

| Proteomics Imputation | MinProb (imputeLCMD) | K-Nearest Neighbors | Significant shift in PCA clustering, affecting outlier detection |

| Metabolomics Scaling | Pareto Scaling | Unit Variance Scaling | Alters network centrality measures in correlation networks |

| 16S rRNA Seq Clustering | 97% vs. 99% OTU Identity | ASV (DADA2) | Differential abundance of taxa changes at genus/family level |

| ChIP-Seq Peak Caller | MACS2 (broad) | HOMER (narrow) | ~30% non-overlap in identified regulatory regions |
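
To show why a scaling choice can change downstream results, the following sketch contrasts unit variance and Pareto scaling on a small, invented samples-by-features intensity matrix. It uses NumPy and the usual textbook definitions (division by the standard deviation versus its square root).

```python
import numpy as np


def unit_variance_scale(x):
    """Mean-center each feature and divide by its standard deviation."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)


def pareto_scale(x):
    """Mean-center each feature and divide by the square root of its standard deviation."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / np.sqrt(x.std(axis=0, ddof=1))


# Hypothetical intensities: rows are samples, columns are two metabolite features
intensities = np.array([[1.0, 100.0], [2.0, 300.0], [3.0, 500.0]])
uv = unit_variance_scale(intensities)
pareto = pareto_scale(intensities)
```

After unit variance scaling both features contribute equally, whereas after Pareto scaling the high-intensity feature keeps a larger spread. Distance- and correlation-based analyses therefore weight features differently under the two schemes.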

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Tools for Standardized Multi-omics Preprocessing

| Item / Solution | Function / Role in Standardization | Example Product / Resource |

|---|---|---|

| Reference Standards (Spike-Ins) | Controls for technical variation in RNA-Seq and Proteomics; enable cross-platform normalization. | ERCC RNA Spike-In Mix (Thermo Fisher), Proteome Dynamic Range Standard (Promega) |

| Universal Protein Standard | A defined protein mixture for inter-laboratory MS performance assessment and calibration. | UPS2 (Sigma-Aldrich) |

| Standardized Nucleic Acid Kits | Ensure consistent library preparation quality and yield, minimizing batch effects. | Illumina Stranded mRNA Prep, KAPA HyperPrep |

| Quality Control Software Suites | Automate QC metric generation and flag outliers against predefined benchmarks. | MultiQC, PTXQC |

| Workflow Management Platforms | Enforce predefined preprocessing pipelines, ensuring version control and provenance tracking. | Nextflow, Snakemake, Galaxy |

| Containerization Software | Package the entire analysis environment (OS, software, dependencies) so that pipelines run identically across machines. | Docker, Singularity |

| Public Data Repository | Mandatory deposition site enforcing metadata standards for verification and reuse. | GEO, PRIDE, Metabolomics Workbench |

A Path Forward: Implementing Community Standards

Adoption of community-endorsed standards is non-negotiable. This includes leveraging workflow languages (CWL, WDL) for pipeline sharing, adhering to MIAME, MIAPE, and similar reporting guidelines, and mandating the public availability of both raw data and processed data alongside the exact computational code used for preprocessing. Only through such rigorous standardization can the integrity of downstream multi-omics integration and translational drug development be secured.

In the context of advancing Multi-omics data preprocessing standards research, the transformation of raw, heterogeneous biological data into integration-ready datasets is a critical bottleneck. Inconsistencies in preprocessing propagate through analysis, compromising reproducibility and the integration of genomic, transcriptomic, proteomic, and metabolomic data. This technical guide outlines a standardized, high-level blueprint for a preprocessing pipeline, designed to ensure data fidelity, comparability, and readiness for downstream systems biology or drug discovery applications.

The Preprocessing Pipeline: A Stage-Wise Deconstruction

The pipeline is conceptualized as sequential, interdependent stages, each with defined inputs, processes, and quality-controlled outputs.

Stage 1: Raw Data Acquisition & Integrity Verification

Objective: To ensure the fidelity of the initially generated data files before any transformative processing.

Methodology: Upon data generation (e.g., from NGS sequencers, mass spectrometers), cryptographic checksums (MD5, SHA-256) are computed and compared against provider-supplied values. File format validation is performed using standard tools (e.g., FastQC for FASTQ, ThermoRawFileParser for .raw files). Metadata pertaining to sample ID, experimenter, date, and instrument settings is extracted and logged into a sample manifest.
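
The checksum comparison can be sketched with Python's standard library. The manifest layout (a mapping from file path to expected hex digest) and the demo file are assumptions made for illustration; in practice the expected values come from the sequencing or MS provider.

```python
import hashlib
import tempfile
from pathlib import Path


def file_checksum(path, algorithm="sha256", chunk_size=1 << 20):
    """Hex digest of a file, read in chunks so large raw files fit in memory."""
    digest = hashlib.new(algorithm)
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_manifest(manifest, algorithm="sha256"):
    """Compare each file against its expected digest; returns {path: matches}."""
    return {
        str(path): file_checksum(path, algorithm) == expected.lower()
        for path, expected in manifest.items()
    }


# Demo with a temporary file standing in for a delivered FASTQ
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "sample_R1.fastq"
    demo.write_bytes(b"@read1\nACGT\n+\nIIII\n")
    expected = hashlib.sha256(demo.read_bytes()).hexdigest()  # stand-in for the provider value
    results = verify_manifest({demo: expected})
    tampered = verify_manifest({demo: "0" * 64})
```

Passing `algorithm="md5"` covers providers that only supply MD5 sums; any mismatch should stop the pipeline before transformative processing begins.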

Key Research Reagent Solutions:

| Item | Function |

|---|---|

| Nucleic Acid Isolation Kits (e.g., Qiagen, Zymo) | High-purity DNA/RNA extraction, crucial for sequencing library prep. |

| Protein Lysis/Extraction Buffers (e.g., RIPA, 8M Urea) | Efficient and reproducible protein recovery from complex samples. |

| Internal Standard Spikes (e.g., SIRM, PSAQ peptides, labeled metabolites) | Added to samples before processing to support normalization and absolute quantification in MS-based proteomics/metabolomics. |

| Indexing/Barcoding Oligonucleotides | Enable multiplexed sequencing of multiple samples in a single run. |

Stage 2: Format Standardization & Metadata Annotation

Objective: To convert diverse raw data formats into a consistent, analysis-friendly structure and attach rich, standardized metadata.

Methodology: Data is converted to community-standard formats: FASTQ reads are aligned and stored as BAM/SAM using standardized aligners (e.g., STAR for RNA-seq, BWA for DNA-seq); raw mass spectra are converted to open formats such as mzML using ProteoWizard MSConvert. Metadata is structured using ontologies and controlled vocabularies (e.g., EDAM for operations, NCBI BioSample attributes for samples) and formatted as JSON-LD or TSV following the ISA (Investigation-Study-Assay) framework.

Stage 3: Core Signal Processing & Artefact Removal

Objective: To perform technology-specific cleaning, enhancing the biological signal by removing technical noise.

Experimental Protocols:

- NGS Data (RNA-seq example): Adapter trimming is performed with Trimmomatic or cutadapt. Quality-based read filtering follows. For gene expression quantification, alignment to a reference genome (e.g., GRCh38) is done using STAR (splice-aware). PCR duplicates are marked/removed. Gene-level counts are generated via featureCounts from Subread.

- LC-MS/MS Proteomics Data: Raw spectra are processed through a search engine (e.g., MaxQuant, FragPipe). The workflow includes: database search against a reference proteome, peptide-spectrum matching (PSM), false discovery rate (FDR) control at peptide and protein levels (typically ≤1% using target-decoy strategy), and label-free or label-based quantification intensity extraction.

- Metabolomics (NMR Data): Processing includes Fourier transformation, phase and baseline correction, chemical shift calibration (e.g., to TSP reference), and spectral binning (bucket integration) using tools like Bruker TopSpin or Chenomx NMR Suite.

Quantitative Benchmarks for Common Preprocessing Tools

Table 1: Comparison of NGS Read Processing Tools (Performance on Human RNA-seq Sample, 50M PE Reads)

| Tool | Adapter Trim Speed (min) | Memory Usage (GB) | Duplicate Marking Accuracy (%) | Citation |

|---|---|---|---|---|

| Trimmomatic | 25 | 4 | N/A | Bolger et al., 2014 |

| cutadapt | 18 | 2 | N/A | Martin, 2011 |

| Picard MarkDuplicates | 40 | 8 | >99 | Broad Institute |

| STAR Aligner | 45 | 32 | N/A | Dobin et al., 2013 |

Stage 4: Normalization & Batch Effect Correction

Objective: To render measurements comparable across samples by removing non-biological variation (e.g., sequencing depth, LC-MS run day).

Methodology: Technique-specific normalization is applied first: e.g., TPM (Transcripts Per Million) or DESeq2's median-of-ratios for RNA-seq; median centering or quantile normalization for proteomics. Subsequently, batch effect correction algorithms are applied if experimental design indicates batch confounding. Common methods include ComBat (empirical Bayes), limma's removeBatchEffect, or ARSyN for multi-omics. Performance is assessed via PCA plots pre- and post-correction.
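
The median-of-ratios idea can be illustrated with NumPy. This sketch follows the published description of the method (a geometric-mean pseudo-reference and a per-sample median ratio); it is not the DESeq2 implementation, and the counts are invented.

```python
import numpy as np


def median_of_ratios_size_factors(counts):
    """Per-sample size factors from a genes x samples raw count matrix."""
    counts = np.asarray(counts, dtype=float)
    # Keep genes with non-zero counts in every sample so the log geometric mean is finite
    expressed = (counts > 0).all(axis=1)
    log_counts = np.log(counts[expressed])
    # Pseudo-reference sample: per-gene geometric mean across samples (in log space)
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)
    # Size factor: median ratio of each sample to the pseudo-reference
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))


# Hypothetical counts: sample 2 was sequenced twice as deeply as sample 1
counts = np.array([[10, 20], [100, 200], [50, 100], [0, 5]])
size_factors = median_of_ratios_size_factors(counts)
normalized = counts / size_factors
```

Dividing by the size factors removes the twofold depth difference for the genes expressed in both samples. Batch correction methods such as ComBat would then be applied to the normalized, typically log-transformed, values.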

Diagram: Multi-omics Batch Effect Correction Workflow

Stage 5: Quality Assessment & Reporting

Objective: To generate a comprehensive, automated report quantifying data quality at each pipeline stage, ensuring fitness for integration.

Methodology: Quality Control (QC) metrics are aggregated: for NGS, including read count, alignment rate, duplication rate, GC content; for proteomics, including MS1/MS2 count, identification FDR, intensity distribution. Automated reporting frameworks like MultiQC are employed to visualize metrics across all samples. Data that fails predefined thresholds (e.g., <70% alignment rate) is flagged for exclusion or re-processing.
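
Threshold-based flagging can be sketched as a simple lookup over aggregated metrics. Only the 70% alignment-rate cutoff comes from the text above; the duplication-rate cutoff, the metric names, and the sample values are hypothetical.

```python
from typing import Dict, List

# Minimum acceptable values; the 0.70 alignment rate is taken from the text
MIN_THRESHOLDS = {"alignment_rate": 0.70}
# Maximum acceptable values; this cutoff is a placeholder, not a recommendation
MAX_THRESHOLDS = {"duplication_rate": 0.60}


def flag_samples(metrics: Dict[str, Dict[str, float]]) -> Dict[str, List[str]]:
    """Return {sample: [reasons]} for samples that violate any threshold."""
    flagged = {}
    for sample, values in metrics.items():
        reasons = []
        for name, minimum in MIN_THRESHOLDS.items():
            if name in values and values[name] < minimum:
                reasons.append(f"{name} {values[name]:.2f} below {minimum:.2f}")
        for name, maximum in MAX_THRESHOLDS.items():
            if name in values and values[name] > maximum:
                reasons.append(f"{name} {values[name]:.2f} above {maximum:.2f}")
        if reasons:
            flagged[sample] = reasons
    return flagged


# Hypothetical per-sample metrics, e.g. parsed from an aggregated QC report
metrics = {
    "S1": {"alignment_rate": 0.93, "duplication_rate": 0.20},
    "S2": {"alignment_rate": 0.65, "duplication_rate": 0.25},
    "S3": {"alignment_rate": 0.88, "duplication_rate": 0.72},
}
flagged = flag_samples(metrics)
```

Keeping thresholds in one declared structure makes them easy to version and report together with the data.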

The Unified Pipeline Blueprint

The integration of all stages into an automated, containerized workflow is the final step toward a standard.

Diagram: End-to-End Preprocessing Pipeline Architecture

This blueprint provides a high-level, standardized framework for preprocessing disparate omics data types. By adhering to such a structured pipeline—emphasizing integrity checks, format standardization, rigorous artefact removal, systematic normalization, and comprehensive QC—researchers can generate integration-ready datasets that are robust, comparable, and primed for discovering complex biological mechanisms. The adoption of this blueprint is a foundational step in fulfilling the broader thesis of establishing reliable, community-agreed Multi-omics data preprocessing standards, ultimately accelerating translational research and drug development.

Within the context of establishing robust multi-omics data preprocessing standards, Exploratory Data Analysis (EDA) serves as the critical first pillar. It is the process of investigating and characterizing omics datasets prior to formal modeling or integration, aiming to understand their inherent structure, quality, and potential biases. This guide provides a technical framework for EDA across genomics, transcriptomics, proteomics, and metabolomics, focusing on universal and modality-specific assessments to inform subsequent normalization, batch correction, and integration steps.

Core Data Quality Metrics Across Omics Layers

A systematic EDA begins with quantifying standard quality control (QC) metrics. The thresholds in Table 1 are generalized starting points and must be adjusted based on specific experimental protocols and technologies.

Table 1: Universal and Modality-Specific QC Metrics

| Omics Layer | Key QC Metric | Typical Threshold / Target | Common Tool/Kits |

|---|---|---|---|

| WGS/WES | Mean Coverage Depth | >30x (clinical), >15x (discovery) | Illumina DRAGEN Bio-IT, GATK |

| | % Bases ≥ Q30 | >80% | FastQC, MultiQC |

| | Alignment Rate | >95% | STAR, HISAT2, BWA |

| RNA-seq | Total Reads | >20M per sample (bulk) | NEBNext Ultra II, TruSeq |

| | % rRNA Reads | <5% (poly-A selection) | RiboCop (ribodepletion) |

| | Exonic Rate | >60% | RSeQC, Qualimap |

| Proteomics (LC-MS/MS) | MS2 Spectra ID Rate | >20% | MaxQuant, Proteome Discoverer |

| | Missing Values (per sample) | <20% of total proteins | TMT/SILAC kits (Thermo) |

| | Protein Sequence Coverage | >15% (typical) | Trypsin (Promega) |

| Metabolomics (LC-MS) | Peak Shape (Asymmetry Factor) | 0.8 - 1.5 | Waters ACQUITY, Shimadzu |

| | QC Sample CV | <30% for known analytes | Bio-Rad QC kits, NIST SRM |

| | Signal Drift (in batch) | RSD < 15% in ISTDs | MetaboAnalyst, XCMS |

Experimental Protocols for Key QC Assessments

Protocol 3.1: Sample-Level RNA-seq Quality Verification using Bioanalyzer

- Objective: Assess RNA Integrity Number (RIN) prior to library prep.

- Materials: Agilent Bioanalyzer 2100, RNA Nano Kit, RNA samples.

- Procedure: 1) Load 1 µL of RNA sample onto the Bioanalyzer chip. 2) Run the Eukaryote Total RNA Nano assay. 3) Analyze the electropherogram peaks (18S and 28S ribosomal RNA). 4) Record the RIN score (1 = degraded, 10 = intact). Samples with RIN < 7 are typically flagged.

Protocol 3.2: Batch Effect Detection via Principal Component Analysis (PCA)

- Objective: Visually identify technical batch effects vs. biological variation.

- Materials: Normalized abundance matrix (e.g., gene counts, protein intensities), metadata with batch and group labels.

- Procedure: 1) Perform log-transformation and standardization (z-scoring) on the matrix. 2) Compute PCA on the covariance matrix. 3) Plot PC1 vs. PC2, coloring points by batch ID and shaping points by biological group. 4) A strong clustering of samples by batch, especially overriding biological group separation, indicates a significant batch effect requiring correction.
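
The numerical part of this procedure can be sketched with NumPy, computing the PCA through a singular value decomposition of the standardized matrix. The simulated data, the log2(x + 1) transform, and the batch layout are assumptions for illustration; plotting is omitted.

```python
import numpy as np


def pca_scores(abundance, n_components=2):
    """PCA scores and explained-variance ratios for a samples x features matrix."""
    x = np.log2(np.asarray(abundance, dtype=float) + 1.0)  # log-transform
    x = x - x.mean(axis=0)                                  # center each feature
    sd = x.std(axis=0, ddof=1)
    x = x[:, sd > 0] / sd[sd > 0]                           # z-score; drop constant features
    # SVD of the standardized matrix is equivalent to eigendecomposition of its covariance
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = s ** 2 / np.sum(s ** 2)
    return scores, explained[:n_components]


# Simulated data: 8 samples x 50 features, with batch 2 (last four samples)
# shifted fourfold in half of the features
rng = np.random.default_rng(0)
abundance = 2.0 ** rng.normal(10.0, 0.2, size=(8, 50))
abundance[4:, :25] *= 4.0
batch = np.array([1, 1, 1, 1, 2, 2, 2, 2])

scores, explained = pca_scores(abundance)
pc1 = scores[:, 0]
```

In this simulation PC1 separates the two batches cleanly. With real data, the scores would be plotted with color mapped to batch and marker shape to biological group, as described in step 3.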

Protocol 3.3: Assessment of Proteomics Data Completeness

- Objective: Quantify missing-data patterns and distinguish values that are missing not at random (MNAR) from those missing at random.

- Materials: Protein intensity matrix from label-free or labeled MS.

- Procedure: 1) Create a binary matrix (1=detected, 0=missing). 2) Hierarchically cluster samples and features based on missingness pattern. 3) Calculate the percentage of missing values per sample and per protein. 4) Visualize using a heatmap. A systematic lack of detection in specific sample groups suggests MNAR, often due to biological absence, while sporadic missingness is more likely random technical failure.
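
Steps 1 and 3 of this procedure, plus a per-group detection rate that helps spot group-specific missingness, can be sketched as follows. The matrix orientation (proteins by samples), the use of NaN for missing values, and the example data are assumptions; clustering and the heatmap are omitted.

```python
import numpy as np


def missingness_summary(intensities, groups):
    """Summarize missing values in a proteins x samples matrix (NaN = missing)."""
    detected = ~np.isnan(np.asarray(intensities, dtype=float))
    groups = np.asarray(groups)
    per_sample = 100.0 * (~detected).mean(axis=0)   # % missing per sample
    per_protein = 100.0 * (~detected).mean(axis=1)  # % missing per protein
    # Fraction of samples in each group in which each protein was detected
    group_rate = {
        str(g): detected[:, groups == g].mean(axis=1) for g in np.unique(groups)
    }
    return detected.astype(int), per_sample, per_protein, group_rate


nan = float("nan")
# Hypothetical log intensities for three proteins across four samples
intensities = [
    [20.0, 21.0, 19.0, 22.0],  # always detected
    [18.0, 17.0, nan, nan],    # absent from every "case" sample
    [nan, 15.0, 16.0, 14.0],   # sporadically missing
]
groups = ["ctrl", "ctrl", "case", "case"]
binary, per_sample, per_protein, group_rate = missingness_summary(intensities, groups)
```

A protein detected in all samples of one group and none of another, like the second row here, is a candidate for MNAR handling, whereas the third row looks like sporadic loss.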

Visualizing EDA Workflows and Relationships

Diagram: EDA Decision Workflow for Multi-omics Data

Diagram: Omics-Specific Distribution Visualization Guide

The Scientist's Toolkit: Research Reagent & Solution Reference

Table 2: Essential Reagents & Kits for Multi-omics EDA Phase

| Item Name (Example) | Vendor/Provider | Primary Function in EDA Context |

|---|---|---|

| Agilent Bioanalyzer High Sensitivity DNA/RNA Kits | Agilent Technologies | Provides precise electrophoretic quantification and integrity number (RIN/DIN) for input nucleic acid samples, critical for downstream sequencing success. |

| Illumina DRAGEN Bio-IT Platform | Illumina, Inc. | Secondary analysis suite for rapid QC, alignment, and variant calling; generates key metrics (e.g., coverage, mapping rate) for genomic EDA. |

| Thermo Scientific TMTpro 16plex Kit | Thermo Fisher Scientific | Enables multiplexed proteomics; EDA involves checking labeling efficiency and reporter ion intensity distribution across channels. |

| Waters MassTrak AAA Solution | Waters Corporation | Standardized kit for amino acid analysis; used as a system suitability test to validate LC-MS metabolomics platform performance prior to sample runs. |

| Biocrates AbsoluteIDQ p400 HR Kit | Biocrates Life Sciences | Targeted metabolomics kit with validated internal standards; QC involves analyzing CVs of standards across the plate to assess technical variation. |

| MultiQC | Open Source (Python) | Aggregation software that compiles QC reports from multiple tools (FastQC, STAR, etc.) across many samples into a single interactive HTML report for holistic assessment. |

The promise of multi-omics integration in systems biology and precision medicine is contingent upon the robust preprocessing and harmonization of heterogeneous data streams. While algorithmic advances in data fusion are rapid, the foundational step of consistent, comprehensive, and machine-actionable metadata collection remains a pervasive bottleneck. This whitepaper, framed within a broader thesis on multi-omics data preprocessing standards, argues that critical metadata is the essential substrate for any meaningful integration, transforming disparate datasets into a coherent knowledge resource.

The Quantitative Metadata Gap: A Snapshot

Current public repositories suffer from inconsistent and incomplete metadata, severely limiting reproducibility and integrative analysis. The following table summarizes a recent audit of metadata completeness for high-throughput sequencing datasets in major repositories.

Table 1: Metadata Completeness Audit in Public Repositories (2023-2024)

| Metadata Field Category | ENA (%) | SRA (%) | GEO (%) | Ideal Requirement |

|---|---|---|---|---|

| Basic Descriptors (Sample Title, Source Organism) | 100 | 100 | 100 | Mandatory |

| Sample Characteristics (e.g., Phenotype, Disease Stage) | 85 | 72 | 88 | Mandatory |

| Experimental Protocol (Library Prep, Kit, Instrument) | 90 | 65 | 45 | Mandatory |

| Processing Parameters (Read Length, Adapter Trim Info) | 40 | 30 | 10 | Highly Recommended |

| Controlled Vocabulary Terms (e.g., Ontology IDs) | 35 | 20 | 60 | Mandatory for Integration |

| Data-Provenance Links (Link to Raw Mass Spec or NMR data) | 15* | N/A | 5* | Mandatory for Multi-omics |

*ENA & GEO figures represent linked Proteomics (PRIDE) or Metabolomics (MetaboLights) datasets.

Foundational Protocols for Critical Metadata Capture

Protocol 3.1: Minimum Information Framework for Multi-omics Samples (MIMOS)

- Objective: To define the minimal metadata required to unambiguously interpret multi-omics data from a biological sample.

- Procedure:

  - Sample Origin: Record donor/patient ID (de-identified), organism, anatomical site (using UBERON ontology), cell type (using Cell Ontology), and disease state (using MONDO or DOID).

  - Processing History: Log sample collection date, preservation method (e.g., snap-frozen, FFPE), storage duration and conditions, and number of freeze-thaw cycles.

  - Multi-omics Aliquot Tracking: For each analytical technique (genomics, transcriptomics, proteomics, metabolomics), record a unique aliquot ID derived from the parent sample ID, extraction date, protocol DOI, and technician ID.

  - Instrument & Run Data: Document instrument model, software version, data acquisition mode, and a unique run identifier.
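
A minimal record type with validation shows how such a framework could be made machine-checkable. The field names, the subset of fields, and the validation rules below are illustrative assumptions, not a published MIMOS schema.

```python
import re
from dataclasses import MISSING, dataclass, fields
from typing import List, Optional

ALLOWED_ASSAYS = {"genomics", "transcriptomics", "proteomics", "metabolomics"}
UBERON_CURIE = re.compile(r"^UBERON:\d{7}$")


@dataclass
class AliquotRecord:
    parent_sample_id: str
    aliquot_id: str            # expected to be derived from the parent sample ID
    assay: str
    organism: str
    anatomical_site: str       # UBERON term, e.g. UBERON:0000948 (heart)
    preservation_method: str
    freeze_thaw_cycles: int
    instrument_model: str
    run_id: str
    cell_type: Optional[str] = None      # Cell Ontology term, if known
    disease_state: Optional[str] = None  # MONDO or DOID term, if applicable


def validate(record: AliquotRecord) -> List[str]:
    """Return a list of human-readable problems; an empty list means the record passes."""
    problems = []
    for f in fields(record):
        value = getattr(record, f.name)
        if f.default is MISSING and value in ("", None):
            problems.append(f"missing required field: {f.name}")
    if record.assay not in ALLOWED_ASSAYS:
        problems.append(f"unknown assay: {record.assay}")
    if not record.aliquot_id.startswith(record.parent_sample_id):
        problems.append("aliquot_id is not derived from parent_sample_id")
    if not UBERON_CURIE.match(record.anatomical_site):
        problems.append("anatomical_site is not an UBERON identifier")
    if record.freeze_thaw_cycles < 0:
        problems.append("freeze_thaw_cycles must be non-negative")
    return problems


good = AliquotRecord("DONOR-001", "DONOR-001-PROT-01", "proteomics", "Homo sapiens",
                     "UBERON:0000948", "snap-frozen", 1, "hypothetical-instrument", "RUN-0001")
bad = AliquotRecord("DONOR-001", "X-99", "proteomics", "Homo sapiens",
                    "heart", "snap-frozen", 1, "hypothetical-instrument", "RUN-0002")
```

Rejecting free-text values such as "heart" at capture time is what keeps later ontology-based integration from depending on manual curation.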

Protocol 3.2: Retrospective Metadata Annotation via Text Mining and Curation

- Objective: To extract and structure metadata from legacy publications and poorly annotated datasets.

- Procedure:

  - Document Aggregation: Compile relevant published PDFs and supplementary data files.

  - Named Entity Recognition (NER): Apply a pre-trained NER model (e.g., SciBERT) to identify entities like genes, compounds, diseases, and species.

  - Ontology Mapping: Use ontology resolution services (e.g., OLS API) to map extracted entity strings to standardized identifiers (e.g., ChEBI for metabolites, UniProt for proteins).

  - Manual Curation & Validation: Deploy a dual-curator system using a tool like CEDAR Workbench to validate NER outputs, fill gaps, and ensure FAIR compliance.

Visualizing the Metadata-Integration Ecosystem

Diagram: Multi-omics Integration Metadata Pipeline

Diagram: Core Multi-omics Integration Pathway

Table 2: Key Research Reagent Solutions for Metadata-Managed Multi-omics

| Item / Resource | Category | Function in Metadata Context |

|---|---|---|

| Sample Multiplexing Kits (e.g., CellPlex, TMT, Multiplex PCR Barcodes) | Wet-lab Reagent | Enables pooling of multiple samples in one sequencing run or mass spec injection. The barcode sequence is critical metadata for demultiplexing and must be rigorously recorded. |

| Unique Molecular Identifiers (UMIs) | Molecular Biology | Short random nucleotide sequences added to each molecule pre-amplification. UMI sequences and their handling protocol are essential metadata for accurate quantification and removing PCR duplicates. |

| CEDAR Workbench | Software Tool | An open-source, web-based tool for creating, managing, and validating metadata templates using community-based standards (e.g., ISA, MIAME). Ensures machine-actionability. |

| BioSamples Database | Repository Service | A central portal at EMBL-EBI that assigns globally unique, persistent accessions to biological samples (SAMEA prefix at EMBL-EBI; the SAMN prefix is issued by NCBI BioSample). This ID is the core metadata for linking all derived omics data. |

| SPROUT-Launcher Kit | Integrated System | A commercial system (e.g., from SPT Labtech) that integrates nanolitre dispensing with laboratory information management system (LIMS) tracking. Automatically captures process metadata (volumes, dates, reagents). |

| Ontology Lookup Service (OLS) | Web Service | An API for querying and visualizing life science ontologies. Critical for curation to map free-text sample descriptions (e.g., "heart") to standardized terms (e.g., UBERON:0000948). |

From Theory to Practice: Step-by-Step Methodological Standards and Application with Modern Tools

Within the framework of Multi-omics data preprocessing standards research, establishing rigorous, layer-specific quality control (QC) thresholds is paramount. This technical guide details contemporary, omics-specific QC criteria and filtering methodologies for single nucleotide polymorphisms (SNPs), sequencing reads, proteins, and metabolites. Standardized preprocessing is the critical foundation ensuring the biological validity and integrative potential of downstream multi-omics analyses.

Genomic Variant (SNP/Indel) QC

Core QC Metrics and Thresholds

Post-variant calling, filtering is required to remove technical artifacts. Thresholds are applied at the sample and variant levels.

Table 1: Standard QC Thresholds for Genomic Variants

| QC Metric | Typical Threshold | Rationale |

|---|---|---|

| Sample-Level | ||

| Call Rate | > 98% | Excludes samples with excessive missing data. |

| Sex Consistency | Match reported sex | Detects sample mix-ups or contamination. |

| Heterozygosity Rate | Within ±3 SD of mean | Identifies inbreeding or contamination. |

| Variant-Level | ||

| Missingness Rate (--geno) | < 5% | Removes variants with poor genotyping across samples. |

| Hardy-Weinberg Equilibrium (HWE) p-value | > 1x10⁻⁶ (general pop.) | Flags genotyping errors or population stratification. |

| Minor Allele Frequency (MAF) | > 0.01 (or 0.05) | Filters rare variants with low statistical power. |

Experimental Protocol: Genotyping Array QC Workflow

- Data Import: Load raw intensity data (`.idat` files for Illumina) into genotype-calling software (e.g., `GenomeStudio`) and export the calls for QC in a tool such as `PLINK`.

- Sample QC: Calculate call rates, check reported sex using X chromosome heterozygosity, and estimate relatedness (IBD > 0.1875 indicates duplicates/close relatives). Remove outliers.

- Variant QC: Filter variants based on call rate, HWE p-value in controls, and MAF.

- Population Stratification: Perform Principal Component Analysis (PCA) using a set of linkage-disequilibrium-pruned, high-quality autosomal SNPs to identify and adjust for genetic ancestry outliers.

Diagram 1: Genotyping QC workflow from raw data to clean set.
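The variant-level thresholds in Table 1 can be sketched in plain Python. This is a minimal illustration under stated assumptions: genotypes are coded as 0/1/2 copies of one allele with `None` for missing calls, and HWE is tested with a 1-df chi-square approximation, whereas tools such as PLINK use an exact test. The function names are hypothetical.

```python
import math


def variant_stats(genotypes):
    """Return (missing_rate, maf, hwe_p) for one variant."""
    called = [g for g in genotypes if g is not None]
    n = len(called)
    if n == 0:
        return 1.0, 0.0, 0.0
    missing_rate = 1 - n / len(genotypes)
    n_aa, n_ab, n_bb = (called.count(k) for k in (0, 1, 2))
    p = (2 * n_bb + n_ab) / (2 * n)  # frequency of the counted allele
    maf = min(p, 1 - p)
    expected = (n * (1 - p) ** 2, 2 * n * p * (1 - p), n * p ** 2)
    observed = (n_aa, n_ab, n_bb)
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)
    # Survival function of a chi-square distribution with 1 degree of freedom.
    hwe_p = math.erfc(math.sqrt(chi2 / 2))
    return missing_rate, maf, hwe_p


def passes_qc(genotypes, max_missing=0.05, min_maf=0.01, min_hwe_p=1e-6):
    missing_rate, maf, hwe_p = variant_stats(genotypes)
    return missing_rate < max_missing and maf > min_maf and hwe_p > min_hwe_p


in_equilibrium = [0] * 25 + [1] * 50 + [2] * 25   # matches HWE at p = 0.5
all_heterozygous = [1] * 100                      # strong HWE violation
poorly_called = in_equilibrium[:90] + [None] * 10  # 10% missing calls
```

In this toy data only `in_equilibrium` passes; the other two variants fail on the HWE and missingness thresholds, respectively.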

Transcriptomic (Reads) QC

RNA-Seq QC Metrics

QC is performed on raw reads, alignment, and gene counts.

Table 2: Standard QC Thresholds for RNA-Seq Data

| Analysis Stage | Metric | Typical Threshold |
|---|---|---|
| Raw Reads (FastQC) | Per base sequence quality | Phred score > 28 |
| Raw Reads (FastQC) | Adapter content | < 5% |
| Raw Reads (FastQC) | % of reads with Ns | < 5% |
| Alignment | Overall alignment rate | > 70-80% |
| Alignment | rRNA alignment rate | < 5-10% |
| Post-Alignment | Strand-specificity (for lib prep) | RSeQC > 0.6 |
| Post-Alignment | Gene body coverage 3'/5' bias | RSeQC > 0.5 |
| Post-Alignment | Duplicate read rate | < 50% (sample-dependent) |

Experimental Protocol: RNA-Seq Preprocessing Pipeline

- Raw Read QC: Run `FastQC` on all FASTQ files. Trim adapters and low-quality bases using `Trimmomatic` or `cutadapt` (example `Trimmomatic` parameters: ILLUMINACLIP:adapter.fa:2:30:10, LEADING:3, TRAILING:3, SLIDINGWINDOW:4:20, MINLEN:36).

- Alignment: Align cleaned reads to a reference genome/transcriptome using a splice-aware aligner like `STAR` (parameters: --outSAMtype BAM SortedByCoordinate --outFilterMultimapNmax 20 --alignSJoverhangMin 8).

- Post-Alignment QC: Generate metrics with `QualiMap` or `RSeQC`. Use `Picard MarkDuplicates` to flag PCR duplicates.

- Quantification: Generate gene-level counts using `featureCounts` (parameters: -t exon -g gene_id -s [0,1,2 for strand specificity]) or `HTSeq-count`.

- Sample-level Filtering: Remove samples where total counts are >3 median absolute deviations from the median. Filter lowly expressed genes (e.g., require >1 count per million in at least n samples, where n is the size of the smallest group).

Diagram 2: RNA-seq preprocessing and QC workflow.
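The sample-level filtering step of the protocol above can be sketched as follows. This is an illustration only: the count matrix is a genes x samples list of lists, the function names are hypothetical, and the outlier rule is applied to raw library sizes as the protocol states (in practice it is often applied on a log scale).

```python
import statistics


def library_sizes(counts):
    return [sum(col) for col in zip(*counts)]


def cpm(counts):
    """Counts per million for a genes x samples matrix."""
    sizes = library_sizes(counts)
    return [[c / n * 1e6 for c, n in zip(row, sizes)] for row in counts]


def keep_genes(counts, min_cpm=1.0, min_samples=2):
    """Keep genes exceeding min_cpm in at least min_samples samples."""
    return [sum(v > min_cpm for v in row) >= min_samples for row in cpm(counts)]


def outlier_samples(counts, n_mads=3.0):
    """Flag samples whose total count is > n_mads MADs from the median."""
    sizes = library_sizes(counts)
    med = statistics.median(sizes)
    mad = statistics.median([abs(s - med) for s in sizes])
    return [abs(s - med) > n_mads * mad for s in sizes]


expression = [
    [900, 950, 1000, 980],  # highly expressed gene
    [100, 50, 0, 20],       # expressed in three of four samples
    [0, 0, 0, 0],           # never detected
]
gene_mask = keep_genes(expression, min_cpm=1.0, min_samples=2)

depth_check = [[100, 102, 98, 101, 99, 10]]  # last sample is far shallower
sample_flags = outlier_samples(depth_check)
```

Here `min_samples` stands in for the size of the smallest experimental group mentioned in the protocol.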

Proteomic QC

LC-MS/MS Based Proteomics QC

QC focuses on sample preparation reproducibility, instrument performance, and identification confidence.

Table 3: Standard QC Thresholds for LC-MS/MS Proteomics

| Category | Metric | Typical Threshold |
|---|---|---|
| Peptide/Protein ID | FDR (Peptide-Spectrum Match) | ≤ 1% |
| Peptide/Protein ID | Minimum unique peptides per protein | ≥ 2 |
| Quantitative (Label-Free) | CV of technical replicates | < 20% |
| Quantitative (Label-Free) | Missing values per sample | < 20% of proteins |
| Quantitative (Label-Free) | Missing values per protein (across all samples) | < 50% (for imputation) |
| Instrument Performance | Total MS1/MS2 spectra count | Stable across runs |
| Instrument Performance | Retention time drift | < 5% over batch |

Experimental Protocol: Label-Free Quantification (LFQ) Workflow

- Sample Prep & Digestion: Lyse cells/tissue, reduce (DTT), alkylate (IAA), and digest with trypsin (1:50 enzyme:protein, 37°C, overnight). Desalt using C18 StageTips.

- MS Data Acquisition: Analyze peptides via LC-MS/MS on a Q-Exactive or similar instrument. Use a 60-120 min gradient.

- Database Search & FDR: Process raw files (`*.raw`) with `MaxQuant` or `Proteome Discoverer`. Search against UniProt DB. Apply reverse-decoy strategy to control FDR at 1% at PSM and protein levels.

- QC Filtering: Filter protein table to remove contaminants, reverse hits, and proteins only identified by site. Require at least 2 unique peptides. Remove proteins present in <50% of samples per group (optional, for imputation).

- Normalization & Imputation: Normalize using median or quantile normalization. Impute missing values (for putative low-abundance proteins) using methods like k-nearest neighbors or minimum value imputation from a narrow distribution.
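The QC filtering and imputation steps above can be sketched on a toy protein table. The dictionary keys (`contaminant`, `reverse`, `only_by_site`, `unique_peptides`, `log2_intensity`) are hypothetical stand-ins for the corresponding search-engine output columns. Two simplifications apply: the presence filter ignores experimental groups, and imputation uses each sample's observed minimum rather than draws from a narrow distribution.

```python
def filter_proteins(rows, min_present=0.5, min_unique_peptides=2):
    """Drop flagged hits, weakly supported proteins, and sparse proteins."""
    kept = []
    for row in rows:
        if row["contaminant"] or row["reverse"] or row["only_by_site"]:
            continue
        if row["unique_peptides"] < min_unique_peptides:
            continue
        values = row["log2_intensity"]
        present = sum(v is not None for v in values) / len(values)
        if present >= min_present:
            kept.append(row)
    return kept


def impute_sample_minimum(rows):
    """Replace missing values with the minimum observed in the same sample."""
    columns = list(zip(*(row["log2_intensity"] for row in rows)))
    global_min = min(v for col in columns for v in col if v is not None)
    col_min = [min((v for v in col if v is not None), default=global_min)
               for col in columns]
    return [
        {**row, "log2_intensity": [m if v is None else v
                                   for v, m in zip(row["log2_intensity"], col_min)]}
        for row in rows
    ]


def _protein(pid, peptides, values, contaminant=False):
    return {"id": pid, "unique_peptides": peptides, "log2_intensity": values,
            "contaminant": contaminant, "reverse": False, "only_by_site": False}


table = [
    _protein("P1", 3, [20.0, 21.0, None, 19.5]),
    _protein("P2", 4, [25.0, 25.5, 24.0, 26.0], contaminant=True),
    _protein("P3", 1, [18.0, 18.5, 18.2, 18.1]),
    _protein("P4", 2, [None, None, None, 18.0]),
    _protein("P5", 5, [22.0, None, 17.0, 23.0]),
]
kept = filter_proteins(table)
imputed = impute_sample_minimum(kept)
```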

Metabolomic QC

LC/GC-MS Metabolomics QC

QC ensures analytical stability and correct feature identification.

Table 4: Standard QC Thresholds for Untargeted Metabolomics

| QC Sample Type | Metric | Typical Threshold |
|---|---|---|
| Pooled QC Samples | Feature intensity RSD (CV) in pooled QCs | < 20-30% |
| Pooled QC Samples | Retention time drift in pooled QCs | < 2-5% |
| Blanks | Signal in biological samples vs. blanks | > 5-10x fold change |
| Internal Standards | Recovery of IS (spiked pre-extraction) | 70-130% |
| Internal Standards | RSD of IS across all runs | < 15% |

Experimental Protocol: Untargeted LC-MS Metabolomics Workflow

- Sample Extraction: Use methanol/water/chloroform (2:1.5:1 ratio) for polar/non-polar metabolite extraction. Include a pooled QC sample (mix of all aliquots) and process blanks.

- Data Acquisition: Run samples in randomized order, injecting pooled QC samples every 6-10 injections. Use full-scan MS (m/z 50-1500) in both positive and negative ionization modes.

- Peak Picking & Alignment: Use `XCMS` or `MS-DIAL` for feature detection (example `XCMS` settings: centWave for peak picking; density-based grouping with mzwid=0.015, minfrac=0.5, bw=5). Align features across samples.

- QC-Based Filtering: Remove features with RSD > 30% in pooled QC samples. Remove features where signal in biological samples is not significantly greater than in blanks (e.g., fold change < 5).

- Identification: Match accurate mass (ppm < 5-10) and MS/MS fragmentation spectra (if available) to authentic standards in databases (e.g., HMDB, METLIN). Use tiered confidence levels (Level 1: confirmed standard, Level 2: library MS/MS match, Level 3: tentative candidate).
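The QC-based filtering step of this workflow can be sketched per feature. This is a minimal illustration: the function names are hypothetical, and the blank comparison uses a simple mean fold change rather than a significance test.

```python
import statistics


def rsd_percent(values):
    """Relative standard deviation (CV) in percent."""
    return statistics.stdev(values) / statistics.mean(values) * 100


def keep_feature(qc, samples, blanks, max_rsd=30.0, min_fold=5.0):
    """Keep a feature that is stable in pooled QCs and well above blank signal."""
    blank_mean = statistics.mean(blanks)
    if blank_mean == 0:
        fold = float("inf")
    else:
        fold = statistics.mean(samples) / blank_mean
    return rsd_percent(qc) <= max_rsd and fold >= min_fold


stable = keep_feature(qc=[100, 105, 95, 102], samples=[180, 220, 200], blanks=[10, 12])
unstable = keep_feature(qc=[100, 10, 200, 50], samples=[180, 220, 200], blanks=[10, 12])
background = keep_feature(qc=[100, 105, 95, 102], samples=[18, 22, 20], blanks=[9, 11])
```

Only the first feature is retained: the second is too variable across pooled QC injections and the third is only about twice the blank signal.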

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Reagents and Materials for Multi-omics QC

| Item | Function in QC | Example/Note |

|---|---|---|

| Genomics | ||

| HapMap/1000 Genomes DNA | Positive control for genotyping array performance and batch alignment. | Coriell Institute repositories. |

| Transcriptomics | ||

| ERCC RNA Spike-In Mix | Exogenous controls to assess technical variation, sensitivity, and dynamic range in RNA-seq. | Thermo Fisher Scientific 4456740. |

| RiboZero/RiboMinus Kits | Deplete ribosomal RNA to increase informative reads in total RNA-seq. | Illumina/Thermo Fisher. |

| Proteomics | ||

| Trypsin, Sequencing Grade | Specific and consistent protein digestion for reproducible peptide generation. | Promega V5111. |

| UPS2 Protein Standard Mix | Defined mix of 48 recombinant human proteins for benchmarking quantitative accuracy. | Sigma Aldrich UPS2. |

| Metabolomics | ||

| Stable Isotope Labeled Internal Standards | Correct for matrix effects and instrument variability during extraction/MS. | e.g., Cambridge Isotope Labs. |

| NIST SRM 1950 | Standard Reference Material of human plasma for inter-laboratory method validation. | National Institute of Standards and Technology. |

| Cross-Omics | ||

| Pooled QC Sample | Aliquot from all study samples, run repeatedly to monitor and correct for batch effects. | Prepared in-house. |

| Commercial HeLa or Yeast Cell Lysate | Well-characterized, reproducible positive control for proteomic/metabolomic pipelines. | e.g., Promega P/N V7951. |

Within the multi-omics data preprocessing standards research framework, normalization is a critical first step to ensure data from diverse high-throughput technologies are comparable, accurate, and biologically interpretable. This guide provides an in-depth comparison of strategies designed to mitigate technical variance from sources like sequencing depth and mass spectrometry (MS) signal intensity.

Technical variance arises from non-biological factors inherent to experimental protocols and instrumentation. Its sources differ by platform:

- Sequencing (RNA-seq, DNA-seq, ATAC-seq): Library preparation efficiency, sequencing depth, GC content bias, and batch effects.

- Mass Spectrometry (Proteomics, Metabolomics): Sample loading variance, ionization efficiency, detector sensitivity, and instrument drift.

- Microarrays: Hybridization efficiency, scanner settings, and spatial artifacts.

Failure to correct for this variance can obscure true biological signals, leading to false conclusions in downstream integrative analysis.

Normalization Strategies by Technology

Sequencing-Based Data Normalization

These methods primarily address variance in library size (total read count).

Detailed Protocol: DESeq2's Median-of-Ratios Method

- Preprocessing: Begin with a raw count matrix (genes x samples). Filter out genes with extremely low counts across all samples.

- Reference Calculation: For each gene, calculate its geometric mean across all samples.

- Ratio Calculation: For each sample and each gene, compute the ratio of the gene's count to the geometric mean.

- Scaling Factor: For each sample, the scaling factor (size factor, SF) is the median of all gene ratios for that sample, excluding genes with a geometric mean of zero (that is, genes with a zero count in any sample).

- Normalization: Divide the raw counts for each sample by its calculated SF to obtain normalized counts.

- Key Assumption: Most genes are not differentially expressed.
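The median-of-ratios steps above can be sketched in plain Python. This is an illustration of the arithmetic only, not the DESeq2 implementation; the function name is hypothetical and the input is a genes x samples list of raw counts.

```python
import math
import statistics


def size_factors(counts):
    """Median-of-ratios size factors for a genes x samples count matrix."""
    n_samples = len(counts[0])
    log_ratios = [[] for _ in range(n_samples)]
    for row in counts:
        if any(c <= 0 for c in row):
            continue  # geometric mean is zero, so the gene is skipped
        log_geo_mean = sum(math.log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    # Median of the log ratios, mapped back to the original scale.
    return [math.exp(statistics.median(r)) for r in log_ratios]


raw = [
    [10, 20],
    [100, 200],
    [30, 60],
    [0, 5],  # ignored: contains a zero count
]
factors = size_factors(raw)
normalized = [[c / f for c, f in zip(row, factors)] for row in raw]
```

In this toy case the second sample was sequenced at twice the depth, so its size factor is twice that of the first sample and the normalized counts of the first three genes agree.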

Detailed Protocol: TMM (Trimmed Mean of M-values) from edgeR

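- Preprocessing: Start with a raw count matrix. Choose a reference sample (in edgeR, the sample whose upper quartile of library-size-scaled counts is closest to the mean upper quartile across samples).

- M-Value Calculation: For each gene in sample A versus reference R, compute M = log2((countA / NA) / (countR / NR)) and A = (log2(countA / NA) + log2(countR / NR)) / 2, where NA and NR are the library sizes. Genes with a zero count in either sample are excluded.

- Trimming: By default, trim 30% of genes from each tail of the M-value distribution and 5% from each tail of the A-value distribution, removing genes with extreme log-ratios and extreme abundances.

- Weighting: Weight the remaining M-values by the inverse of their approximate asymptotic variance.

- Scaling Factor: The log2 scaling factor for sample A is the weighted mean of the trimmed M-values, so SFA = 2^(weighted mean). The effective library size is NA × SFA, and normalized expression is computed against it, e.g., CPM = countA × 10⁶ / (NA × SFA).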

- Key Assumption: The majority of genes are not differentially expressed between the sample and reference.
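The TMM calculation for one sample against a reference can be sketched as follows. This is a simplified illustration rather than edgeR's code: ties in the ranks are broken by position instead of being averaged, factors are not rescaled to multiply to one across samples, and the function names are hypothetical. The default trims (30% on M, 5% on A) follow edgeR's documented defaults.

```python
import math


def _ranks(values):
    """1-based ranks; ties are broken by original position."""
    order = sorted(range(len(values)), key=values.__getitem__)
    ranks = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        ranks[idx] = rank
    return ranks


def tmm_factor(obs, ref, logratio_trim=0.3, sum_trim=0.05):
    """TMM scaling factor of sample `obs` relative to reference `ref`."""
    n_obs, n_ref = sum(obs), sum(ref)
    m_vals, a_vals, weights = [], [], []
    for o, r in zip(obs, ref):
        if o <= 0 or r <= 0:
            continue  # log-ratios are undefined for zero counts
        p_obs, p_ref = o / n_obs, r / n_ref
        m_vals.append(math.log2(p_obs / p_ref))
        a_vals.append(0.5 * (math.log2(p_obs) + math.log2(p_ref)))
        # Inverse of the approximate asymptotic variance of the M-value.
        weights.append(1.0 / ((n_obs - o) / (n_obs * o) + (n_ref - r) / (n_ref * r)))
    n = len(m_vals)
    lo_m = math.floor(n * logratio_trim) + 1
    lo_a = math.floor(n * sum_trim) + 1
    hi_m, hi_a = n + 1 - lo_m, n + 1 - lo_a
    keep = [lo_m <= rm <= hi_m and lo_a <= ra <= hi_a
            for rm, ra in zip(_ranks(m_vals), _ranks(a_vals))]
    num = sum(m * w for m, w, k in zip(m_vals, weights, keep) if k)
    den = sum(w for w, k in zip(weights, keep) if k)
    return 2 ** (num / den)


reference = [100] * 100
deeper = [200] * 100                # same composition, twice the depth
skewed = [100] * 99 + [10000]       # one gene dominates the library
factor_deeper = tmm_factor(deeper, reference)
factor_skewed = tmm_factor(skewed, reference)
effective_size = sum(skewed) * factor_skewed
```

For `skewed`, the dominant gene is trimmed and the factor shrinks the effective library size back to that of the reference, which is the composition-bias correction TMM is designed to provide.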

Mass Spectrometry-Based Data Normalization

These methods correct for variance in total protein/peptide abundance, ionization efficiency, and sample loading.

Detailed Protocol: Median Absolute Deviation (MAD) Scaling

- Preprocessing: Start with a log2-transformed intensity matrix (features x samples). This stabilizes variance.

- Median Calculation: Calculate the median intensity for each sample.

- Global Median: Compute the global median across all sample medians.

- Shift Adjustment: Subtract each sample's median from the global median to obtain a shift value.

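- Normalization: Add the shift value to all feature intensities in the corresponding sample. This centers all sample medians at the same value. To also equalize spread (the MAD scaling part of the method), divide each sample's centered intensities by that sample's MAD and multiply by the mean MAD across samples.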

- Application: Common in label-free proteomics and metabolomics.
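The median-centering steps above can be sketched as follows. This is a minimal illustration assuming a complete features x samples matrix of log2 intensities (no missing values); it covers only the centering steps, and the function name is hypothetical.

```python
import statistics


def median_center(log_intensities):
    """Shift each sample (column) so all sample medians equal the global median."""
    sample_medians = [statistics.median(col) for col in zip(*log_intensities)]
    global_median = statistics.median(sample_medians)
    shifts = [global_median - m for m in sample_medians]
    return [[v + s for v, s in zip(row, shifts)] for row in log_intensities]


log2_matrix = [
    [10.0, 12.0, 11.0],
    [11.0, 13.0, 12.0],
    [12.0, 14.0, 13.0],
]
centered = median_center(log2_matrix)
```

Because the data are on a log scale, adding a per-sample shift corresponds to multiplying raw intensities by a per-sample factor.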

Detailed Protocol: Quantile Normalization

- Preprocessing: Start with an intensity matrix (features x samples).

- Sorting: For each sample (column), sort feature intensities in ascending order.

- Averaging: For each row (rank position), compute the mean intensity across all samples.

- Replacement: Replace each sorted intensity value with the corresponding row mean.

- Reordering: Map the sorted, normalized values back to their original feature order for each sample.

- Result: All samples share an identical intensity distribution.

- Key Assumption: The overall distribution of abundances is expected to be similar across samples.
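The quantile normalization steps above can be sketched in plain Python. This is an illustration assuming a complete features x samples matrix; tied values within a sample are ordered by position rather than assigned an averaged rank, and the function name is hypothetical.

```python
def quantile_normalize(matrix):
    """Give every sample (column) the same distribution of values."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    columns = [list(col) for col in zip(*matrix)]
    # For each sample, the row indices in ascending order of intensity.
    orders = [sorted(range(n_rows), key=col.__getitem__) for col in columns]
    # Mean intensity at each rank position across samples.
    rank_means = [
        sum(columns[j][orders[j][r]] for j in range(n_cols)) / n_cols
        for r in range(n_rows)
    ]
    normalized = [[0.0] * n_cols for _ in range(n_rows)]
    for j, order in enumerate(orders):
        for rank, i in enumerate(order):
            normalized[i][j] = rank_means[rank]  # map back to original feature order
    return normalized, rank_means


intensities = [
    [5, 4, 3],
    [2, 1, 4],
    [3, 4, 6],
    [4, 2, 8],
]
qn, reference_distribution = quantile_normalize(intensities)
```

After normalization, sorting any column of `qn` reproduces `reference_distribution`, which is what "identical intensity distribution" means in practice.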

Cross-Platform and Multi-Batch Normalization

ComBat (from sva package): Uses an empirical Bayes framework to adjust for batch effects while preserving biological variance.

- Model Fitting: Models the data as having both biological covariates of interest and known batch covariates.

- Parameter Estimation: Empirically estimates batch-specific location (mean) and scale (variance) parameters.

- Adjustment: Shrinks each feature's batch parameter estimates toward the batch-wide values pooled across features, then uses the shrunken estimates to adjust the data.

Quantitative Comparison of Normalization Methods

The performance of normalization strategies is typically evaluated using metrics like Median Absolute Deviation (MAD) of housekeeping genes, clustering accuracy, or reduction in technical replicate variance.

Table 1: Comparison of Sequencing Depth Normalization Methods

| Method | Core Principle | Strengths | Limitations | Best For |

|---|---|---|---|---|

| DESeq2 Median-of-Ratios | Gene-wise ratios relative to geometric mean, sample median. | Robust to few highly DE genes; part of integrated DE pipeline. | Assumes few DE genes; sensitive to composition bias. | RNA-seq DGE with in-pipeline analysis. |

| edgeR TMM | Weighted trimmed mean of log-expression ratios. | Robust to asymmetry in DE gene counts; efficient. | Performance degrades with extreme composition bias. | RNA-seq DGE, especially with expected up/down asymmetry. |

| Upper Quartile (UQ) | Scales counts by upper quartile of counts. | Simple, fast. | Biased by high-abundance genes; unstable with low counts. | Initial exploratory analysis. |

| Reads Per Million (RPM/CPM) | Simple total count scaling. | Extremely simple, interpretable. | Highly influenced by few dominant genes; poor for DGE. | Metagenomics, counting small RNA categories. |

Table 2: Comparison of Mass Spectrometry Signal Normalization Methods

| Method | Core Principle | Strengths | Limitations | Best For |

|---|---|---|---|---|

| Median/MAD Scaling | Centers medians and scales variances across runs. | Simple, robust to outliers. | Assumes most features are non-DA. | Label-free proteomics/metabolomics (global profiling). |

| Quantile | Forces identical intensity distribution across runs. | Powerful, makes runs technically identical. | Removes legitimate global intensity differences; aggressive. | Large cohort LC-MS runs where distribution is stable. |

| Total Ion Current (TIC) | Scales to sum of all intensities per run. | Intuitive, accounts for loading differences. | Overly sensitive to high-abundance features. | Targeted analyses or preliminary steps. |

| Cyclic Loess | Applies intensity-dependent smoothing between sample pairs. | Non-linear, accounts for intensity-dependent bias. | Computationally heavy (O(n²)); for smaller datasets. | 2-sample designs (e.g., label-free with internal standard). |

Visualization of Workflows and Logical Frameworks

Diagram: Sequencing Data Normalization Decision Path

Diagram: MS Data Normalization Decision Path

Diagram: Multi-Omics Preprocessing Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Tools for Normalization Experiments

| Item | Function in Normalization Context | Example Product/Kit |

|---|---|---|

| External Spike-in Controls (RNA) | Distinguishes technical from biological variance; enables absolute scaling. | ERCC RNA Spike-In Mix (Thermo Fisher), SIRV Spike-in Kit (Lexogen) |

| External Spike-in Controls (MS) | Quantifies absolute abundance, corrects for sample prep and ionization variance. | Pierce Quantitative Peptide/Protein Standards (Thermo Fisher), UPS2 Proteomic Dynamic Range Standard (Sigma-Aldrich) |

| Stable Isotope Labeled Internal Standards (MS) | Provides run-to-run signal correction for specific target analytes. | Various SIL/SIS peptides, metabolite isotope standards (e.g., Cambridge Isotopes) |

| UMI Adapters (Sequencing) | Corrects for PCR amplification bias during library prep, improving count accuracy. | TruSeq UMI Adapters (Illumina), SMARTer smRNA-seq with UMIs (Takara Bio) |

| Pooled Reference Samples | Serves as a common baseline across multiple batches/runs for relative normalization. | Custom-generated pool of study- or tissue-type specific biological material. |

| Benchmarking Datasets | Gold-standard datasets with known truths to validate normalization performance. | SEQC/MAQC-III consortium data, simulated in silico datasets from Polyester. |

| Bioinformatics Pipelines | Implement standardized, reproducible normalization workflows. | nf-core/rnaseq, MSstats, Proteome Discoverer, XCMS Online. |

In the context of multi-omics data preprocessing standards research, the systematic technical variation introduced by batch effects represents a formidable challenge to data integration and reproducibility. These non-biological artifacts, arising from differences in sample processing times, equipment, reagents, or personnel, can obscure true biological signals and lead to spurious conclusions. This whitepaper provides an in-depth technical guide on identifying, characterizing, and correcting batch effects using advanced statistical methodologies, with a focus on maintaining biological fidelity across genomics, transcriptomics, proteomics, and metabolomics datasets.

Identification and Diagnostics

Batch effect identification precedes correction. A multi-faceted diagnostic approach is required.

Table 1: Common Batch Effect Diagnostic Metrics & Tools

| Metric/Tool | Data Type | Principle | Interpretation |

|---|---|---|---|

| Principal Component Analysis (PCA) | All omics | Dimensionality reduction to visualize largest sources of variance. | Clustering of samples by batch along early PCs suggests strong batch effects. |

| Percent Variance Explained | All omics | Quantifies proportion of total variance attributable to batch. | >10% often warrants correction. Biology should explain more variance than batch. |

| Silhouette Width | All omics | Measures cohesion vs. separation of predefined groups (batch/class). | High batch silhouette width (>0.5) indicates strong batch clustering. |

| ANOVA-based F-statistic | Continuous | Tests if batch means differ significantly for each feature. | High F-statistics with low p-values indicate feature-level batch association. |

| Boxplots/Density Plots | Continuous | Visual distribution comparison per batch. | Non-overlapping medians/distributions suggest batch-specific shifts. |

Experimental Protocol 1: Systematic Batch Effect Diagnosis

- Data Preparation: Log-transform (if appropriate) and normalize data using a chosen method (e.g., quantile normalization for arrays, TPM for RNA-Seq).

- Unsupervised Visualization: Perform PCA on the full feature set. Generate 2D/3D plots of PC1 vs. PC2 (vs. PC3), coloring points by batch and by biological condition.

- Variance Decomposition: Fit a linear model for each feature: `feature ~ batch + condition`. Extract the sum of squares attributed to batch and condition. Calculate the average percent variance explained by each factor across all features.

- Quantitative Scoring: Calculate silhouette widths for batch labels. A width >0.5 indicates pronounced batch structure.

- Feature-Level Testing: Conduct ANOVA (for linear models) or Kruskal-Wallis tests (non-parametric) per feature with batch as the main effect. Apply False Discovery Rate (FDR) correction. A large number of significant features confirms a pervasive batch effect.
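The variance decomposition step can be approximated with a one-factor-at-a-time calculation. This sketch computes the between-group share of the total sum of squares separately for batch and for condition; that matches the two-factor model `feature ~ batch + condition` only when the design is balanced, which is an assumption here. The function names are hypothetical.

```python
from statistics import mean


def fraction_explained(values, labels):
    """Between-group sum of squares divided by total sum of squares."""
    grand = mean(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    groups = {}
    for v, label in zip(values, labels):
        groups.setdefault(label, []).append(v)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups.values())
    return ss_between / ss_total if ss_total > 0 else 0.0


def average_percent_explained(matrix, labels):
    """Average percent variance explained across features (rows)."""
    return 100 * mean([fraction_explained(row, labels) for row in matrix])


features = [
    [1.0, 1.0, 3.0, 3.0],  # varies only with batch
    [2.0, 4.0, 2.0, 4.0],  # varies only with condition
]
batch = ["b1", "b1", "b2", "b2"]
condition = ["ctl", "trt", "ctl", "trt"]
pct_batch = average_percent_explained(features, batch)
pct_condition = average_percent_explained(features, condition)
```

For unbalanced or partially confounded designs, a proper linear model fit is needed because the two factors then share variance.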

Correction Methods: A Technical Deep Dive

ComBat and its Extensions

ComBat (Combating Batch Effects) uses an empirical Bayes framework to stabilize variance estimates across batches, making it powerful for small-sample studies.

Experimental Protocol 2: Standard ComBat Implementation

- Input: An $m \times n$ matrix of normalized expression/abundance values for $m$ features (genes, proteins) across $n$ samples, with known batch and (optional) biological covariate vectors.

- Model Fitting: For each feature $i$ in batch $j$, model the data as: $Y_{ij} = \alpha_i + X\beta_i + \gamma_{ij} + \delta_{ij}\epsilon_{ij}$, where $\alpha_i$ is the overall mean, $X\beta_i$ models biological covariates, $\gamma_{ij}$ is the batch-specific additive effect, and $\delta_{ij}$ is the batch-specific multiplicative (scale) effect.

- Empirical Bayes Estimation: Pool information across features to estimate the prior distributions for $\gamma_{ij}$ and $\delta_{ij}$. This shrinkage improves estimates for small batches.

- Adjustment: Remove the additive batch effect and rescale the residual by the multiplicative batch effect, while retaining the overall mean and covariate terms: $Y_{ij}^{adj} = \frac{Y_{ij} - \hat{\alpha}_i - X\hat{\beta}_i - \hat{\gamma}_{ij}}{\hat{\delta}_{ij}} + \hat{\alpha}_i + X\hat{\beta}_i$.

- Output: An $m \times n$ matrix of batch-adjusted values. Note: ComBat-Seq is a variant for raw count data (RNA-Seq) that uses a negative binomial model.
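The location and scale adjustment at the heart of this protocol can be sketched for a single feature. This is deliberately not ComBat: there is no empirical Bayes shrinkage and no biological covariate term, so it only illustrates how additive and multiplicative batch effects are removed. For real analyses the `ComBat` function in the `sva` package should be used. The function name is hypothetical.

```python
from math import sqrt
from statistics import mean


def location_scale_adjust(values, batches):
    """Align per-batch mean and spread of one feature (no shrinkage, no covariates)."""
    grand_mean = mean(values)
    groups = {}
    for v, b in zip(values, batches):
        groups.setdefault(b, []).append(v)
    batch_mean = {b: mean(g) for b, g in groups.items()}
    batch_sd = {b: sqrt(mean([(v - batch_mean[b]) ** 2 for v in g]))
                for b, g in groups.items()}
    # Pooled within-batch standard deviation across all samples.
    pooled_sd = sqrt(mean([(v - batch_mean[b]) ** 2 for v, b in zip(values, batches)]))
    adjusted = []
    for v, b in zip(values, batches):
        gamma = batch_mean[b] - grand_mean             # additive batch effect
        if batch_sd[b] > 0 and pooled_sd > 0:
            delta = batch_sd[b] / pooled_sd            # multiplicative batch effect
        else:
            delta = 1.0
        adjusted.append((v - grand_mean - gamma) / delta + grand_mean)
    return adjusted


measurements = [1.0, 2.0, 3.0, 10.0, 20.0, 30.0]
batch_labels = ["A", "A", "A", "B", "B", "B"]
adjusted = location_scale_adjust(measurements, batch_labels)
```

After adjustment both batches share the same mean and spread. Note that with condition confounded with batch, this kind of adjustment would also remove biological signal, which is why the full model includes covariates.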

Diagram Title: ComBat Empirical Bayes Adjustment Workflow

Surrogate Variable Analysis (SVA)

SVA estimates latent variables (surrogate variables, SVs) that capture unmodeled variation, including batch effects, without requiring explicit batch annotation.

Experimental Protocol 3: SVA for Latent Batch Effect Capture

- Define Models: Specify a full model that includes all known biological covariates (e.g., `~ disease_state`) and a null model that includes only the intercept or nuisance variables.

- Residual Calculation: For each feature, fit the full model and extract residuals. With the modeled biological signal removed, these residuals contain the unmodeled variation, including latent batch effects.

- Singular Value Decomposition (SVD): Perform SVD on the residual matrix to identify orthogonal patterns of variation.

- SV Identification: Apply a statistical test (e.g., Buja-Eyuboglu permutation test) to identify which singular vectors are significantly associated with the residual variation but orthogonal to the biological covariates.

- SV Incorporation: Add the significant SVs as covariates to the downstream differential expression/abundance model (e.g., in DESeq2, limma).

Diagram Title: Surrogate Variable Analysis (SVA) Procedure
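The residual and SVD steps of this procedure can be sketched with NumPy. This is not the full SVA algorithm: it omits the permutation test for choosing the number of surrogate variables and the iterative reweighting used by the `sva` package, and it assumes residuals are taken from the full model so that known biological signal is removed first. The function name and the simulated data are hypothetical.

```python
import numpy as np


def candidate_surrogate_variables(Y, design, n_sv):
    """Top left singular vectors of the residuals after fitting `design`.

    Y      : features x samples matrix
    design : samples x covariates matrix (full model, including intercept)
    """
    beta, *_ = np.linalg.lstsq(design, Y.T, rcond=None)
    residuals = Y.T - design @ beta          # samples x features
    U, S, _ = np.linalg.svd(residuals, full_matrices=False)
    return U[:, :n_sv], S


rng = np.random.default_rng(0)
n_features, n_samples = 200, 20
condition = np.tile([0.0, 1.0], n_samples // 2)    # known biology
hidden_batch = np.repeat([0.0, 1.0], n_samples // 2)  # unrecorded batch

Y = (rng.normal(0, 0.5, (n_features, n_samples))
     + np.outer(rng.normal(0, 1, n_features), condition)
     + np.outer(rng.normal(0, 2, n_features), hidden_batch))

design = np.column_stack([np.ones(n_samples), condition])
svs, singular_values = candidate_surrogate_variables(Y, design, n_sv=1)
batch_correlation = abs(np.corrcoef(svs[:, 0], hidden_batch)[0, 1])
```

In this simulation the first candidate surrogate variable tracks the hidden batch closely while staying orthogonal to the modeled condition, which is the property that lets it be added as a covariate downstream.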

Other Advanced Methods

Table 2: Comparison of Advanced Batch Correction Methods

| Method | Category | Key Assumption | Strength | Weakness | Best For |

|---|---|---|---|---|---|

| Harmony | Integration | Cells of the same type cluster across batches. | Iterative clustering & correction. Scalable. | Requires clusterable data (e.g., single-cell). | Single-cell omics, large datasets. |

| MMD-ResNet | Deep Learning | Batch effects are non-linear but separable. | Captures complex, non-linear effects. | High computational cost, requires large n. | Imaging mass spec, highly non-linear artifacts. |

| AROMA | Signal Processing | Batch effects are intensity-dependent. | Automatically identifies technical probes (microarrays). | Primarily for Affymetrix microarray data. | Genotyping, methylation microarrays. |

| RUV (Remove Unwanted Variation) | Factor Analysis | Control features (e.g., housekeepers, spike-ins) are known. | Flexible (RUV-2, RUV-4, RUVg). | Performance depends on quality of control features. | Experiments with reliable negative controls. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Batch Effect-Managed Experiments

| Item | Function in Batch Management | Example/Note |

|---|---|---|

| Reference/QC Samples | A pooled sample aliquoted and run across all batches to monitor technical variation. | Commercial human reference RNA (e.g., Universal Human Reference RNA), pooled plasma. |

| Spike-In Controls | Exogenous, synthetic molecules added in known quantities to correct for technical noise. | ERCC RNA Spike-In Mix (RNA-Seq), S. pombe spike-in for ChIP-Seq. |

| Inter-Plate Calibrators | Identical samples placed on each processing plate (e.g., in MS, ELISA) to align measurements. | Calibration peptides, standardized serum pools. |

| Automated Nucleic Acid/Protein Extractors | Minimize operator-induced variation in sample preparation. | Qiagen QIAcube, Promega Maxwell. |

| Barcoded Multiplex Kits | Allow pooling of samples from different batches early in workflow to reduce batch confounds. | 10x Genomics kits, TMT/iTRAQ reagents for proteomics. |

| Version-Controlled Reagent Lots | Single, large lot of key reagents reserved for a study to avoid lot-to-lot variation. | Antibodies, enzymatic master mixes, sequencing kits. |

| Integrated Laboratory Information Management System (LIMS) | Tracks all sample metadata, reagent lots, and instrument parameters essential for modeling batch. | Benchling, Labguru, custom solutions. |

Validation and Post-Correction Assessment

Correction must be validated to ensure biological signal is preserved.

Experimental Protocol 4: Post-Correction Validation Pipeline

- Visual Inspection: Repeat PCA. Samples should cluster by biological condition, not batch.

- Metric Re-calculation: Recompute percent variance explained and silhouette width for batch. These should be minimized.

- Positive Control Validation: Confirm known, strong biological differences (e.g., treated vs. untreated control) remain significant post-correction using a statistical test.

- Negative Control Check: For features expected not to differ (e.g., housekeeping genes in a non-perturbing experiment), test for induced spurious differences. P-value distribution should be uniform.

- Downstream Analysis Consistency: Perform primary differential analysis on corrected and uncorrected data. Compare results; true biological findings should be enhanced, not lost.
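The silhouette metric used in the validation steps above can be sketched in plain Python. This is a minimal illustration assuming samples are represented by a few coordinates (for example, their leading principal component scores); the function name and toy coordinates are hypothetical.

```python
import math


def mean_silhouette(points, labels):
    """Average silhouette width of `points` grouped by `labels`."""
    scores = []
    for i, (p, own_label) in enumerate(zip(points, labels)):
        dist_by_label = {}
        for j, (q, label) in enumerate(zip(points, labels)):
            if i != j:
                dist_by_label.setdefault(label, []).append(math.dist(p, q))
        own = dist_by_label.pop(own_label, None)
        if not own or not dist_by_label:
            scores.append(0.0)  # singleton group or only one label present
            continue
        a = sum(own) / len(own)                                      # cohesion
        b = min(sum(d) / len(d) for d in dist_by_label.values())     # separation
        scores.append((b - a) / (max(a, b) or 1.0))
    return sum(scores) / len(scores)


pc_scores = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
batch = ["A", "A", "A", "B", "B", "B"]
condition = ["ctl", "trt", "ctl", "trt", "ctl", "trt"]
batch_width = mean_silhouette(pc_scores, batch)
condition_width = mean_silhouette(pc_scores, condition)
```

In this uncorrected toy example the samples separate by batch rather than by condition. After a successful correction, the batch silhouette width should drop toward zero while the condition width is preserved or increases.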

Effective batch effect management is non-negotiable for robust multi-omics science. The choice of method depends on the study design: ComBat for known batches, SVA for complex or unknown artifacts, and Harmony/RUV for specific data types. A rigorous, method-agnostic diagnostic and validation pipeline is critical. Within multi-omics preprocessing standards, batch correction must be documented with explicit parameters, software versions, and diagnostic plots to ensure full reproducibility and data integration across studies.

Within the critical research context of establishing robust Multi-omics data preprocessing standards, the selection and implementation of computational workflows are paramount. Inconsistent preprocessing leads to irreproducible results, directly hampering downstream integrative analysis and biomarker discovery. This guide provides an in-depth technical examination of prominent workflow platforms and essential R/Python packages, offering practical, standardized methodologies for preprocessing genomics, transcriptomics, proteomics, and metabolomics data.

Workflow Platforms: Architecture and Application

Nextflow

Nextflow enables scalable and reproducible computational workflows using a dataflow programming model. It excels in complex, large-scale multi-omics pipelines deployed across clusters and clouds.

Core Methodology for Multi-omics Preprocessing:

- Channel Creation: Define input data (e.g., FASTQ files, raw mass spectrometry files) as channels using `Channel.fromPath` or `Channel.fromSRA`.

- Process Definition: Write each preprocessing step (e.g., quality control, alignment, quantification) as an independent `process`. Each process can run in its own container (Docker/Singularity) for isolation.

- Workflow Composition: Chain processes together by defining the output of one process as the input to the next, enabling parallel execution where possible.

- Configuration: Separate pipeline logic (`main.nf`) from execution parameters (`nextflow.config`), specifying compute resources, container images, and reference file paths per omics layer.

Snakemake

Snakemake is a Python-based workflow engine that uses a rule-directed, top-down approach, ideal for defining explicit input-output dependencies.

Core Methodology for Multi-omics Preprocessing:

- Rule Specification: Each preprocessing step is a `rule`. A rule defines `input:` files, `output:` files, a `shell:` command or `script:` (Python/R), and optional `conda:` or `container:` directives for environment control.

- Wildcard Utilization: Use wildcards (`{sample}`) in input/output definitions to generalize rules across all samples.

- Target Rule: The first rule (`rule all`) typically aggregates all desired final outputs, driving the execution of the entire DAG.

- Execution: Snakemake resolves dependencies and executes rules in the correct order, maximizing parallelization based on available cores.

Galaxy

Galaxy provides a web-based, accessible interface for data analysis, emphasizing user-friendliness and provenance tracking without command-line requirements.

Core Methodology for Multi-omics Preprocessing:

- Tool Selection: Use the tool panel to select preprocessing tools (e.g., FastQC, Trimmomatic, MaxQuant) installed by a Galaxy administrator.

- Workflow Construction: Execute tools sequentially, using outputs as inputs for subsequent steps. The "Extract Workflow" function can automatically generate a reusable workflow from a history.

- Parameter Standardization: Within a saved workflow, set and lock critical preprocessing parameters (e.g., quality thresholds, adapter sequences) to enforce a standard operating procedure across users.

- Sharing and Reproducibility: Share complete histories and workflows via published URLs or the Galaxy workflow repository.

Comparative Analysis of Platform Capabilities

Table 1: Quantitative Comparison of Workflow Platforms for Multi-omics Preprocessing

| Feature | Nextflow | Snakemake | Galaxy |
|---|---|---|---|
| Primary Language | DSL (Groovy-based) | Python (DSL) | Web UI (Python server) |
| Execution Environment | Containers, Conda | Containers, Conda | Containers, Conda |
| Parallelization Model | Dataflow / Reactive | DAG-based | Built-in job queuing |
| Portability | High (Reproducible) | High (Reproducible) | High (via web) |
| Learning Curve | Steeper | Moderate | Gentle |
| Provenance Tracking | Explicit log & reports | Detailed reports | Automatic, comprehensive |
| Cloud Native Support | Excellent (K8s, AWS) | Good (K8s, Google LS) | Good (CloudMan, Pulsar) |
| Best Suited For | Large-scale, complex pipelines | Lab-focused, modular pipelines | Collaborative, multi-user teams |

Table 2: Common Multi-omics Preprocessing Steps Mapped to Platforms

| Omics Layer | Preprocessing Step | Nextflow Tool / Process | Snakemake Rule | Galaxy Tool |
|---|---|---|---|---|
| Genomics (WGS) | Adapter Trimming | nf-core/raredisease (fastp) | `trim_reads` (Cutadapt) | Trimmomatic |
| Transcriptomics (RNA-seq) | Read Alignment | RNASeq workflow (STAR) | `align_to_genome` (HISAT2) | STAR |
| Proteomics (LC-MS) | Peptide Identification | proteomicslfq (MS-GF+) | `run_search` (Comet) | MaxQuant |
| Metabolomics (NMR/LC-MS) | Peak Alignment & Annotation | LCMSmapping (XCMS) | `align_features` (OpenMS) | XCMS |

Essential R/Python Packages for Standardized Preprocessing

Beyond workflow managers, specific libraries are critical for implementing standardized preprocessing algorithms.

In R:

- `SummarizedExperiment`: The foundational S4 class for storing rectangular data (e.g., counts) with associated row/column metadata, forming the standard data structure for Bioconductor omics analysis.

- `limma` & `DESeq2`: For normalization, transformation, and batch correction of transcriptomics/proteomics data. `removeBatchEffect` (limma) and `varianceStabilizingTransformation` (DESeq2) are key functions.

- `sva` (`ComBat`): A widely used empirical Bayes method for batch effect adjustment, applicable to most continuous omics data types.

- `MetaboAnalystR`: Provides a standardized pipeline for metabolomics data processing, including peak filtering, normalization, and missing value imputation.

In Python:

- `Scanpy` (`pp` module): Provides `scanpy.pp.filter_cells`, `normalize_total`, `log1p`, and `highly_variable_genes` for standardized single-cell RNA-seq preprocessing.

- `PyMS` & `OpenMS`: Libraries for mass spectrometry data processing, enabling reproducible peak picking, alignment, and compound identification workflows.

- `scikit-learn` (`StandardScaler`, `SimpleImputer`): Essential for feature-wise scaling and systematic missing value imputation prior to integration.
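
As a minimal sketch of the scikit-learn step, the snippet below chains `SimpleImputer` and `StandardScaler` in a `Pipeline`. The toy matrix and the choice of median imputation are illustrative assumptions, not recommendations made by this guide.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Toy samples x features matrix (hypothetical values); NaN marks a missing measurement.
X = np.array([
    [1.0, 200.0, np.nan],
    [2.0, np.nan, 30.0],
    [3.0, 220.0, 50.0],
    [4.0, 240.0, 70.0],
])

# Impute each feature with its median, then scale it to zero mean and unit variance.
preprocess = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

X_scaled = preprocess.fit_transform(X)
print(X_scaled.round(2))
```

Wrapping both steps in one pipeline means the imputation and scaling parameters learned on one dataset can be reapplied unchanged to another with `preprocess.transform`.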

Integrated Multi-omics Preprocessing Protocol

The following experimental protocol outlines a standardized preprocessing workflow for transcriptomics and proteomics data integration, implementable across the featured platforms.

Title: Standardized Preprocessing for Transcriptomic-Proteomic Integration

Objective: To generate clean, batch-corrected, and normalized gene expression (RNA-seq) and protein abundance (LC-MS) matrices suitable for integrated multi-omics analysis.

Materials: See "The Scientist's Toolkit" below.

Methods:

- Raw Data Acquisition & QC:

  - RNA-seq: Obtain paired-end FASTQ files. Run FastQC for initial quality assessment. Using Trimmomatic, trim adapters (`ILLUMINACLIP:TruSeq3-PE.fa:2:30:10`) and low-quality bases (`LEADING:3 TRAILING:3 SLIDINGWINDOW:4:15 MINLEN:36`). Re-run FastQC to confirm improvement.

  - Proteomics: Obtain raw `.raw` files and the associated experimental design. Run MaxQuant with the following parameters: `Label-free quantification` (LFQ) enabled, match-between-runs enabled, `iBAQ` calculated. Use a species-specific FASTA for the database search.

- Quantification & Initial Matrix Generation:

  - RNA-seq: Align trimmed reads to the reference genome using STAR (`--outSAMtype BAM SortedByCoordinate --quantMode GeneCounts`). Generate a raw gene count matrix from the `ReadsPerGene.out.tab` files, taking the count column that matches the library strandedness.

  - Proteomics: Use the `proteinGroups.txt` output from MaxQuant. Filter to remove contaminants, reverse database hits, and proteins 'Only identified by site'. Extract the `LFQ intensity` columns as the raw abundance matrix.

- Platform-Specific Normalization & Filtering:

  - RNA-seq (in R/DESeq2): Create a `DESeqDataSet` object. Pre-filter low-count genes: `dds <- dds[rowSums(counts(dds)) >= 10, ]`. Perform a variance stabilizing transformation (`vst`) for downstream integration.

  - Proteomics (in Python): Load the LFQ matrix and treat zero intensities as missing. Retain proteins with >70% valid values across samples. Impute the remaining missing values using a KNN-based algorithm (e.g., `sklearn.impute.KNNImputer`). Log2-transform the data.

- Cross-Assay Batch Effect Correction:

  - Merge the processed RNA and protein matrices by common gene/protein identifiers, ensuring sample order matches.

  - Identify batch covariates (e.g., sequencing run, MS instrument, preparation date).

  - Apply a batch correction algorithm (e.g., `ComBat` from the `sva` package) to the combined matrix, passing a known biological condition (e.g., disease state) in the model matrix (`mod`) and the technical factor as `batch`.

Output: Produce two harmonized, batch-corrected matrices (transcriptomic and proteomic) ready for joint dimensionality reduction or network-based integration analysis.
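
The proteomics branch of this protocol (contaminant filtering, valid-value filtering, KNN imputation, log2 transformation) can be sketched in Python as follows. This is an illustration under stated assumptions rather than a validated pipeline: it assumes the standard MaxQuant column names `Protein IDs`, `Potential contaminant`, `Reverse`, and `Only identified by site`, assumes flagged rows are marked with `+`, and treats zero LFQ intensities as missing. The function name and its defaults are hypothetical.

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer


def preprocess_protein_groups(pg: pd.DataFrame, min_valid: float = 0.7, k: int = 5) -> pd.DataFrame:
    """Filter, impute, and log2-transform a MaxQuant proteinGroups table."""
    # Drop rows flagged with "+" in any of the three filter columns (assumed names).
    flag_cols = ["Potential contaminant", "Reverse", "Only identified by site"]
    flagged = pg[flag_cols].eq("+").any(axis=1)
    pg = pg.loc[~flagged]

    # Keep only the LFQ intensity columns, indexed by protein group.
    lfq = pg.set_index("Protein IDs").filter(like="LFQ intensity ").astype(float)

    # Assumption: an intensity of 0 means "not quantified", so treat it as missing.
    lfq = lfq.replace(0.0, np.nan)

    # Retain proteins with more than `min_valid` valid values across samples.
    lfq = lfq.loc[lfq.notna().mean(axis=1) > min_valid]

    # KNNImputer searches for neighbours among rows, so impute on samples x proteins.
    imputed = KNNImputer(n_neighbors=k).fit_transform(lfq.T.to_numpy())

    # Log2-transform and restore the proteins x samples layout.
    return pd.DataFrame(np.log2(imputed).T, index=lfq.index, columns=lfq.columns)
```

With a real file, the input would typically be loaded with `pd.read_csv("proteinGroups.txt", sep="\t")`. The sketch keeps the order given in the protocol (impute, then log2); log-transforming before imputation is a common alternative.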

Visualizing the Standardized Workflow

Figure: Standardized Multi-omics Data Preprocessing Workflow

Figure: Ecosystem for Reproducible Multi-omics Preprocessing

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multi-omics Preprocessing Workflows

| Item | Function in Preprocessing | Example / Specification |
|---|---|---|
| Reference Genome | Baseline for read alignment and quantification in genomics/transcriptomics. | Human: GRCh38.p14 (Genome Reference Consortium) |
| Annotation Database (GTF/GFF) | Provides gene model coordinates and metadata for assigning sequence reads to features. | Ensembl Homo_sapiens.GRCh38.110.gtf |
| Protein Sequence Database (FASTA) | Essential for mass spectrometry search engines to identify peptides and proteins. | UniProtKB/Swiss-Prot human reviewed database |
| Adapter Sequence File | Contains common oligo sequences used in NGS library prep for adapter trimming. | TruSeq3-PE.fa (for Illumina paired-end) |
| Contaminant Database | List of common protein contaminants (e.g., keratins, enzymes) to filter from proteomics results. | MaxQuant contaminants.fasta |
| Container Image | Snapshot of a complete software environment ensuring reproducible execution of tools. | Docker: biocontainers/fastqc:v0.12.1_cv1 |
| Conda Environment File (YAML) | Declarative list of software packages and versions to recreate an analysis environment. | environment.yml specifying Python 3.10, Snakemake 7.32, etc. |

Within the broader thesis on Multi-omics data preprocessing standards, a critical challenge is the unification of heterogeneous omics data layers (e.g., genomics, transcriptomics, proteomics, metabolomics) for integrated analysis. Each layer differs fundamentally in scale, distribution, noise characteristics, and biological context. This technical guide details the core principles and methodologies for transforming and scaling disparate omics datasets into a cohesive framework suitable for downstream multi-omics modeling.

The Challenge of Heterogeneity

Omics data types are generated from distinct technological platforms, resulting in incompatible value ranges, missingness patterns, and batch effects. The table below summarizes the quantitative characteristics of major omics layers.

Table 1: Characteristic Ranges and Properties of Major Omics Data Layers

| Omics Layer | Typical Measurement | Dynamic Range | Common Distribution | Primary Source of Technical Noise |
|---|---|---|---|---|
| Genomics (SNP Array) | Allele Intensity (Log R Ratio, B Allele Freq) | ~2-3 orders | Mixture (Gamma, Normal) | Hybridization efficiency, GC bias |
| Transcriptomics (RNA-seq) | Read Counts | >6 orders | Negative Binomial | Library prep, sequencing depth, amplification bias |
| Proteomics (LC-MS) | Spectral Counts / Intensity | ~4-5 orders | Log-normal, Heavy-tailed | Ion suppression, digestion efficiency |
| Metabolomics (NMR/LC-MS) | Spectral Peak Intensity | ~3-4 orders | Log-normal | Sample prep, instrument drift |
| Epigenomics (ChIP-seq) | Read Counts/Peak Scores | >4 orders | Zero-inflated, Negative Binomial | Antibody specificity, fragmentation bias |

Foundational Transformation Methodologies

Variance-Stabilizing Transformations

The goal is to render the variance independent of the mean, a common issue in count-based data.

Protocol: Variance-Stabilizing Transformation (VST) for RNA-seq Count Data

- Input: Raw count matrix \( C \) with genes \( g \) and samples \( s \).

- Model Fitting: Fit a generalized linear model of the form: \( \text{Var}(C_{gs}) = \mu_{gs} + \alpha \cdot \mu_{gs}^2 \), where \( \mu_{gs} \) is the expected count and \( \alpha \) is the dispersion parameter (estimated per gene via `DESeq2` or similar).

- Transformation: Apply the integral transformation: \( \text{VST}(C_{gs}) = \int^{C_{gs}} \frac{1}{\sqrt{\mu + \alpha \mu^2}} \, d\mu \). In practice, this is approximated analytically within tools like `DESeq2::vst()`.

- Output: A transformed matrix where variance is approximately homogeneous across the mean expression range, suitable for linear PCA.
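
For a single fixed dispersion \( \alpha \) and a lower integration limit of 0 (both simplifying assumptions), the integral above has the closed form \( \frac{2}{\sqrt{\alpha}} \operatorname{arcsinh}\left(\sqrt{\alpha \, C_{gs}}\right) \). The sketch below applies that closed form to simulated negative binomial counts. It is not what `DESeq2::vst()` computes internally, and the function name `nb_vst` and the value of `alpha` are hypothetical.

```python
import numpy as np


def nb_vst(counts: np.ndarray, alpha: float) -> np.ndarray:
    """Closed-form integral of 1/sqrt(mu + alpha*mu^2) from 0 to each count."""
    counts = np.asarray(counts, dtype=float)
    return (2.0 / np.sqrt(alpha)) * np.arcsinh(np.sqrt(alpha * counts))


rng = np.random.default_rng(0)
alpha = 0.1  # hypothetical dispersion shared by all genes

for mu in (10, 100, 1000):
    # NumPy parameterises the negative binomial by (n, p):
    # n = 1/alpha and p = n/(n + mu) give mean mu and variance mu + alpha*mu^2.
    n = 1.0 / alpha
    sim = rng.negative_binomial(n, n / (n + mu), size=20000)
    # Print the mean, the raw variance, and the variance after transformation.
    print(mu, round(float(sim.var()), 1), round(float(nb_vst(sim, alpha).var()), 2))
```

The raw variance grows steeply with the mean, whereas the variance of the transformed values should stay of similar magnitude across the three settings, which is the property the protocol aims for.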

Scaling and Normalization Techniques

Scaling adjusts data to a common range, while normalization corrects for systematic technical biases.

Table 2: Scaling and Normalization Methods by Omics Type

| Method | Formula / Algorithm | Primary Application | Effect |
|---|---|---|---|
| Quantile Normalization | Align empirical distribution functions across samples. | Microarray, Methylation arrays | Forces identical distributions across samples. |
| Centered Log Ratio (CLR) | \( \text{CLR}(x_i) = \ln\left[\frac{x_i}{g(\mathbf{x})}\right] \), \( g(\mathbf{x}) \) = geometric mean. | Metabolomics, Microbiome (relative abundance) | Handles compositional data, removes sum constraint. |
| Z-Score Standardization | \( z = \frac{x - \mu}{\sigma} \) (per feature across samples). | Post-normalization proteomics/transcriptomics | Centers to zero mean, unit variance. |
| Min-Max Scaling | \( x' = \frac{x - \min(x)}{\max(x) - \min(x)} \). | Genomic score integration (e.g., chromatin accessibility) | Bounds features to [0,1] range. |
| ComBat (Batch Correction) | Empirical Bayes framework to adjust for batch means and variances. | Any omics layer with known batch effects | Removes batch-associated variation while preserving biological signal. |
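
The first four rows of the table can be written as short NumPy functions. This is a minimal sketch assuming a samples x features matrix with strictly positive values and no ties; the function names and toy data are hypothetical, and production code would need to handle zeros, ties, and constant features.

```python
import numpy as np


def quantile_normalize(x: np.ndarray) -> np.ndarray:
    """Give every sample (row) the same empirical distribution."""
    x = np.asarray(x, dtype=float)
    ranks = np.argsort(np.argsort(x, axis=1), axis=1)  # rank of each value within its sample
    reference = np.sort(x, axis=1).mean(axis=0)        # mean of the sorted samples
    return reference[ranks]


def clr(x: np.ndarray) -> np.ndarray:
    """Centered log-ratio per sample: ln(x_i / geometric mean of x)."""
    log_x = np.log(np.asarray(x, dtype=float))
    return log_x - log_x.mean(axis=1, keepdims=True)


def zscore(x: np.ndarray) -> np.ndarray:
    """Zero mean and unit variance per feature (column) across samples."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)


def min_max(x: np.ndarray) -> np.ndarray:
    """Rescale each feature (column) to the [0, 1] range."""
    x = np.asarray(x, dtype=float)
    lo, hi = x.min(axis=0), x.max(axis=0)
    return (x - lo) / (hi - lo)


# Toy matrix: 3 samples x 3 features (hypothetical values).
X = np.array([[1.0, 2.0, 4.0],
              [2.0, 8.0, 4.5],
              [10.0, 5.0, 1.0]])

X_qn, X_clr, X_z, X_mm = quantile_normalize(X), clr(X), zscore(X), min_max(X)
```

Note the different axes: quantile normalization and CLR operate within each sample, while z-scoring and min-max scaling operate on each feature across samples.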

Protocol: ComBat-Based Batch Correction for Multi-Omic Integration

- Prerequisite: Perform platform/assay-specific normalization (e.g., VST for RNA-seq, CLR for metabolomics).

- Model Specification: Define a design matrix \( X \) for biological covariates of interest (e.g., disease status).

- Empirical Bayes Adjustment: For each transformed dataset \( D_b \) from batch \( b \), use the `sva::ComBat()` function to estimate batch-specific location (\( \alpha_b \)) and scale (\( \delta_b \)) parameters, adjusting them toward the grand mean via empirical Bayes shrinkage.

- Adjustment: Apply the formula \( D_{ij}^{\text{corrected}} = \frac{D_{ij} - \hat{\alpha}_b}{\hat{\delta}_b} \cdot \delta_{\text{pooled}} + \alpha_{\text{pooled}} \).

- Output: Batch-corrected matrices for each omics layer, enabling direct concatenation or relational analysis.
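
To make the adjustment formula concrete, the sketch below applies a plain location-scale correction per feature. It is deliberately simplified and is not ComBat itself: there is no empirical Bayes shrinkage, no design matrix for biological covariates, and the pooled mean and standard deviation are taken over all samples. The function name and simulated data are hypothetical.

```python
import numpy as np


def location_scale_adjust(data: np.ndarray, batch: np.ndarray) -> np.ndarray:
    """Align per-feature batch means and SDs to the pooled values.

    data: samples x features matrix; batch: one label per sample.
    """
    data = np.asarray(data, dtype=float)
    batch = np.asarray(batch)
    pooled_mean = data.mean(axis=0)
    pooled_sd = data.std(axis=0, ddof=1)

    corrected = np.empty_like(data)
    for b in np.unique(batch):
        rows = batch == b
        batch_mean = data[rows].mean(axis=0)        # location estimate for batch b
        batch_sd = data[rows].std(axis=0, ddof=1)   # scale estimate for batch b
        # Standardize within the batch, then map back to the pooled scale.
        corrected[rows] = (data[rows] - batch_mean) / batch_sd * pooled_sd + pooled_mean
    return corrected


# Simulated example: the second batch is shifted and inflated.
rng = np.random.default_rng(1)
data = rng.normal(size=(12, 4))
batch = np.array([0] * 6 + [1] * 6)
data[batch == 1] = data[batch == 1] * 2.0 + 5.0

corrected = location_scale_adjust(data, batch)
```

Because this version ignores biological covariates, it would also remove real group differences that are confounded with batch. That is one reason the protocol passes the condition of interest to `sva::ComBat()` through the design matrix.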

Missing Value Imputation Strategies

The missing-data mechanism (Missing Completely at Random, MCAR; Missing at Random, MAR; Missing Not at Random, MNAR) dictates the imputation approach.

Protocol: k-Nearest Neighbors (kNN) Imputation for Proteomics Data

- Input: A protein intensity matrix with missing values. kNN imputation assumes values are MCAR or MAR; intensities missing because they fall below the detection limit are MNAR and may be better handled with left-censored methods.

- Distance Calculation: Compute a distance matrix (Euclidean or correlation-based) using only features with complete data, or after initial simple imputation (e.g., with minimum value).

- Neighbor Selection: For each sample \( i \) with a missing value in protein \( p \), identify the \( k \) most similar samples (neighbors) that have a valid measurement for \( p \). Typical \( k \) = 5-15.

- Imputation: Replace the missing value with the distance-weighted average of the values from the \( k \) neighbors.

- Iteration: Repeat steps 2-4 until convergence or for a preset number of iterations. An R implementation of kNN imputation is available as `impute::impute.knn`.
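
The steps of this protocol can be sketched from scratch in NumPy. This is an illustrative single-pass version (no iteration), not a port of `impute::impute.knn`. It assumes a samples x proteins matrix in which every protein has at least one measured value, uses per-protein minimum filling for the initial distance calculation, and weights neighbors by inverse Euclidean distance. The function name is hypothetical.

```python
import numpy as np


def knn_impute(x: np.ndarray, k: int = 5) -> np.ndarray:
    """Impute NaNs in a samples x proteins matrix from the k nearest samples."""
    x = np.asarray(x, dtype=float)
    missing = np.isnan(x)

    # Step 2: pairwise sample distances after filling gaps with each protein's minimum.
    filled = np.where(missing, np.nanmin(x, axis=0), x)
    diff = filled[:, None, :] - filled[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))

    imputed = x.copy()
    for i, p in zip(*np.nonzero(missing)):
        # Step 3: candidate neighbours are the samples with a measured value for protein p.
        donors = np.flatnonzero(~missing[:, p])
        nearest = donors[np.argsort(dist[i, donors])[:k]]
        # Step 4: inverse-distance weighted average (small constant avoids division by zero).
        weights = 1.0 / (dist[i, nearest] + 1e-12)
        imputed[i, p] = np.average(x[nearest, p], weights=weights)
    return imputed


# Toy example (hypothetical values): sample 1 lacks a measurement for protein 1.
x = np.array([[1.0, 10.0],
              [1.0, np.nan],
              [9.0, 90.0],
              [1.0, 12.0]])
x_imputed = knn_impute(x, k=2)
```

The full pairwise difference array scales with the square of the number of samples, so this sketch only suits small matrices; for routine use, `sklearn.impute.KNNImputer` provides a maintained implementation.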

Workflow for Unified Multi-Omics Preprocessing

Multi-Omics Data Preprocessing Pipeline

Key Considerations for Integration Architecture

The choice of integration method (early: concatenation; intermediate: kernel/channel; late: model-based) depends on the preprocessed data structure.

Multi-Omics Integration Strategy Decision

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Multi-Omics Data Preprocessing

| Item / Tool | Function in Preprocessing | Example Product / Package |
|---|---|---|