Multi-Omics Integration: A Comprehensive Guide to Methods, Applications, and Best Practices for Biomedical Research

This article provides a comprehensive overview of multi-omics integration methods, tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive overview of multi-omics integration methods, tailored for researchers, scientists, and drug development professionals. We begin by establishing the foundational principles of genomics, transcriptomics, proteomics, and metabolomics, exploring the core rationale for their integration. We then delve into key methodological approaches, from early to late integration and AI-driven techniques, with concrete applications in disease subtyping and biomarker discovery. Practical guidance is offered for navigating common challenges like batch effects, missing data, and computational demands. The guide concludes with a critical evaluation of method validation, benchmarking strategies, and comparative analysis of popular tools, synthesizing key takeaways and future directions for clinical translation.

Multi-Omics 101: Unlocking the Why and What of Genomic, Transcriptomic, Proteomic, and Metabolomic Data Fusion

This whitepaper provides an in-depth technical guide to the core omics disciplines, framing their individual and integrated roles within the broader thesis of multi-omics integration methods research. Understanding each layer—from the static genome to the dynamic metabolome—is foundational for developing robust integration strategies that accelerate biomedical discovery and therapeutic development.

The Hierarchical Omics Cascade

Biological information flows from the genetic blueprint through functional and phenotypic layers. Each omics tier captures a distinct dimension of this complexity.

Table 1: The Core Omics Tiers: Scope, Measurement Technologies, and Output

| Omics Tier | Definition & Scope | Key Technologies | Primary Output |

|---|---|---|---|

| Genomics | Study of the complete DNA sequence, including genes, non-coding regions, and structural variants. | Next-Generation Sequencing (NGS), Whole-Genome Sequencing, SNP arrays. | DNA sequence, genetic variants, structural alterations. |

| Epigenomics | Study of heritable chemical modifications to DNA and histones that regulate gene expression without altering sequence. | Bisulfite Sequencing (WGBS), ChIP-Seq, ATAC-Seq. | DNA methylation patterns, histone marks, chromatin accessibility maps. |

| Transcriptomics | Study of the complete set of RNA transcripts produced by the genome under specific conditions. | RNA-Seq, single-cell RNA-Seq, microarrays. | Gene expression levels, splice variants, non-coding RNA profiles. |

| Proteomics | Study of the full complement of proteins, including their structures, modifications, and abundances. | Mass Spectrometry (LC-MS/MS), affinity proteomics (antibody arrays). | Protein identification, quantification, post-translational modifications (PTMs). |

| Metabolomics | Study of the complete set of small-molecule metabolites within a biological system. | Mass Spectrometry (GC-MS, LC-MS), Nuclear Magnetic Resonance (NMR). | Metabolite identification and concentration, metabolic pathway activity. |

Detailed Experimental Methodologies

Whole-Genome Sequencing (WGS) for Genomics

Objective: To determine the complete DNA sequence of an organism's genome.

Protocol Summary:

- DNA Extraction & QC: Isolate high-molecular-weight genomic DNA (e.g., using phenol-chloroform or kit-based methods). Assess purity (A260/A280 ~1.8) and integrity (e.g., via gel electrophoresis).

- Library Preparation: Fragment DNA via acoustic shearing or enzymatic digestion. End-repair, A-tail fragments, and ligate with platform-specific sequencing adapters. Size-select fragments (typically 300-500 bp).

- Cluster Amplification & Sequencing: Denature the library and load it onto a flow cell (Illumina) or attach it to beads (Ion Torrent). Generate clonal clusters by bridge amplification (Illumina) or emulsion PCR (Ion Torrent), then sequence by synthesis (Illumina) or by semiconductor sequencing (Ion Torrent).

- Data Analysis: Base calling, read alignment to a reference genome (e.g., using BWA-MEM), variant calling (e.g., using GATK), and annotation.

LC-MS/MS-Based Shotgun Proteomics

Objective: To identify and quantify the proteome of a complex biological sample.

Protocol Summary:

- Protein Extraction & Digestion: Lyse cells/tissue in strong denaturing buffer (e.g., 8M urea). Reduce disulfide bonds (DTT) and alkylate cysteines (iodoacetamide). Digest proteins into peptides using trypsin (overnight, 37°C).

- Peptide Cleanup & Fractionation: Desalt peptides using C18 solid-phase extraction tips or columns. For deep profiling, fractionate peptides via high-pH reverse-phase chromatography or SCX.

- LC-MS/MS Analysis: Separate peptides on a nano-flow C18 column with a gradient of acetonitrile. Eluting peptides are ionized (ESI) and analyzed on a high-resolution tandem mass spectrometer (e.g., Q-Exactive). Perform data-dependent acquisition (DDA): survey MS1 scan followed by MS2 fragmentation of top N ions.

- Data Processing: Search MS2 spectra against a protein sequence database using engines (e.g., Sequest, MaxQuant). Apply false discovery rate (FDR) thresholds. Quantify via label-free (peak area) or labeled (TMT, SILAC) methods.

Untargeted Metabolomics via LC-MS

Objective: To comprehensively profile small-molecule metabolites in a biological sample.

Protocol Summary:

- Metabolite Extraction: Quench metabolism rapidly (liquid nitrogen). Extract metabolites using a biphasic solvent system (e.g., cold methanol/water/chloroform) to recover polar and non-polar species.

- Chromatographic Separation: Inject the extract onto complementary LC columns. Common modes are reversed-phase (C18) for hydrophobic metabolites such as lipids, and hydrophilic interaction liquid chromatography (HILIC) for polar metabolites such as sugars and amino acids.

- Mass Spectrometry: Use high-resolution mass spectrometer (e.g., TOF, Orbitrap) in both positive and negative electrospray ionization modes. Acquire data in full-scan MS mode (m/z 50-1500) to detect all ions.

- Data Processing & Annotation: Perform peak picking, alignment, and deconvolution using software (e.g., XCMS, MS-DIAL). Annotate metabolites by matching m/z, retention time, and MS/MS fragmentation spectra against authentic standards or reference spectra in databases (e.g., HMDB, METLIN).
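
The m/z matching part of the annotation step can be illustrated with a minimal sketch. It assumes a mass tolerance expressed in parts per million (ppm); the 5 ppm default, the function names, and the reference library entries (`compound_A` and so on) are hypothetical placeholders, not values from HMDB or METLIN. Retention time and MS/MS matching are not covered here.

```python
def ppm_error(observed_mz: float, reference_mz: float) -> float:
    """Signed mass error of an observed m/z relative to a reference, in ppm."""
    return (observed_mz - reference_mz) / reference_mz * 1e6


def annotate(observed_mz: float, library: dict, tol_ppm: float = 5.0) -> list:
    """Return (name, ppm_error) candidates within the tolerance, best match first."""
    hits = []
    for name, reference_mz in library.items():
        error = ppm_error(observed_mz, reference_mz)
        if abs(error) <= tol_ppm:
            hits.append((name, error))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Hypothetical reference m/z values, for illustration only.
LIBRARY = {
    "compound_A": 200.0000,
    "compound_B": 200.0040,
    "compound_C": 350.1000,
}

candidates = annotate(200.0005, LIBRARY)
for name, error in candidates:
    print(f"{name}: {error:+.2f} ppm")
```

Here only `compound_A` falls inside the tolerance; `compound_B` differs by only a few thousandths of an m/z unit but lies well outside 5 ppm, which is why high-resolution instruments matter for this step.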

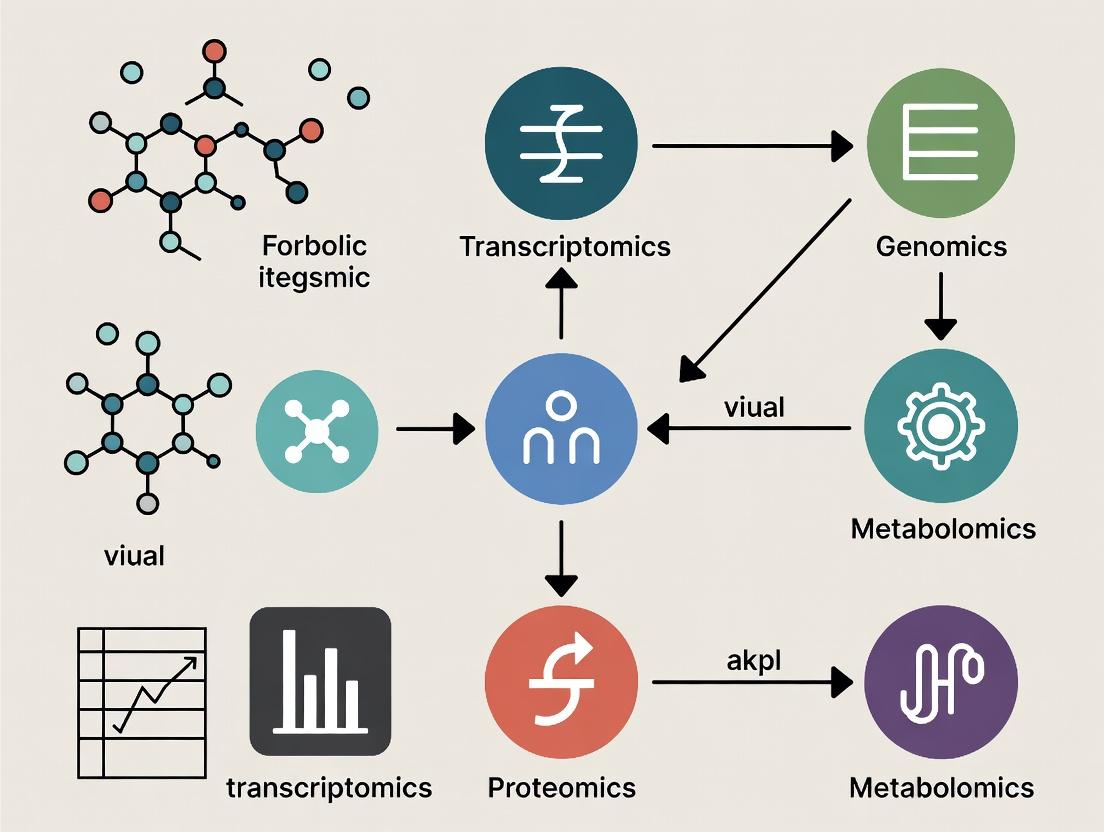

Visualizing Omics Relationships and Workflows

Diagram Title: The Omics Cascade from Genome to Phenotype

Diagram Title: Multi-Omic Data Generation and Integration Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Kits for Core Omics Experiments

| Item Name (Example) | Omics Field | Function & Application |

|---|---|---|

| KAPA HyperPrep Kit | Genomics/Transcriptomics | For construction of high-quality, Illumina-compatible sequencing libraries from DNA or RNA. |

| NEBNext Enzymatic Methyl-seq Kit | Epigenomics | Provides a workflow for enzymatic conversion of unmethylated cytosines for bisulfite-free DNA methylation sequencing. |

| Trypsin, Sequencing Grade | Proteomics | Protease that cleaves specifically at the C-terminal side of lysine and arginine residues, generating peptides for LC-MS/MS analysis. |

| TMTpro 16plex Isobaric Label Reagent Set | Proteomics | Enables multiplexed quantification of proteins from up to 16 samples simultaneously by MS/MS, increasing throughput. |

| BioGenesis LC-MS Acclaim Column (C18) | Metabolomics/Proteomics | High-performance UHPLC column for robust separation of complex mixtures of peptides or metabolites prior to MS. |

| Preeclampsia Metabolomics Standard | Metabolomics | A curated mix of deuterated internal standards for quantifying key metabolites in relevant biological pathways, ensuring accurate MS quantification. |

| Multi-omics QC Reference Material (e.g., HeLa) | Multi-omics | A standardized cell line extract used as a quality control material across genomic, proteomic, and metabolomic platforms to assess batch effects and technical variation. |

The Central Dogma of molecular biology describes the unidirectional flow of information from DNA to RNA to protein. This framework has historically structured biological research, leading to the development of siloed omics disciplines: genomics, transcriptomics, proteomics, and metabolomics. However, this linear, compartmentalized view is insufficient for understanding complex phenotypic outcomes. Within the broader thesis of multi-omics integration research, this guide argues that only through concurrent analysis and integration of these layers can we decipher the non-linear, regulatory networks that govern health and disease.

The Limitations of Siloed Omics

Single-omics studies provide a limited snapshot. Genomic variants may not predict transcript abundance due to epigenetic regulation; mRNA levels often correlate poorly with protein abundance due to post-transcriptional and translational control; and protein activity is further modulated by post-translational modifications and metabolite availability.

Table 1: Discordance Between Omics Layers in a Hypothetical Cancer Study

| Omics Layer | Measured Entity | Key Finding in Siloed Analysis | Limitation Revealed by Multi-Omics |

|---|---|---|---|

| Genomics | Somatic alterations (mutations, copy number) | Oncogene EGFR amplified. | Does not inform on functional protein output or activation state. |

| Transcriptomics | mRNA levels | EGFR transcript is elevated 5-fold. | Poor correlation (R~0.4-0.5) with actual protein abundance. |

| Proteomics & Phosphoproteomics | Protein & Phospho-protein | Total EGFR protein elevated 2-fold; p-EGFR (Y1068) elevated 10-fold. | Reveals hyper-activation not predictable from genomics/transcriptomics. |

| Metabolomics | Metabolites | Lactate, succinate levels highly elevated. | Indicates downstream Warburg effect and potential oncometabolite activity. |
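
The mRNA-protein discordance summarized in the table is usually quantified as a rank correlation across matched genes. The sketch below computes a Spearman correlation in plain Python; the expression values are invented for illustration and the function names are not taken from any particular package.

```python
from statistics import mean


def ranks(values):
    """Average ranks (1-based); tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    covariance = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return covariance / (sx * sy)


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Invented log-scale abundances for five matched genes.
mrna = [1.0, 2.0, 3.0, 4.0, 5.0]
protein = [2.0, 1.0, 4.0, 3.0, 5.0]
rho = spearman(mrna, protein)
print(f"Spearman rho = {rho:.2f}")
```

A rank-based measure is a common choice here because transcript and protein abundances are on different scales and are rarely linearly related.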

Foundational Multi-Omics Experimental Protocol

Protocol: Integrated Multi-Omics Sample Preparation from a Tissue Biopsy

Objective: To extract high-quality DNA, RNA, proteins, and metabolites from a single, limited tissue sample for coordinated multi-omics profiling.

- Tissue Lysis & Homogenization: Flash-frozen tissue (e.g., 20-30 mg) is cryo-pulverized, and the powder is suspended in a monophasic lysis buffer (e.g., phenol/guanidine thiocyanate), which simultaneously denatures nucleases and proteases.

- Phase Separation: Add chloroform and centrifuge. The mixture separates into an upper aqueous phase (RNA), an interphase (DNA), and a lower organic phase (proteins, lipids, and small non-polar molecules).

- RNA Recovery: The aqueous phase is recovered. RNA is precipitated with isopropanol, washed with ethanol, and eluted. Used for RNA-seq.

- DNA & Protein Recovery from Interphase and Organic Phase: The interphase and organic phase are treated with ethanol to precipitate DNA, which is pelleted. The remaining supernatant is mixed with isopropanol to precipitate proteins. The protein pellet is washed and solubilized in an SDS-based buffer for proteomics (e.g., LC-MS/MS). The DNA pellet is processed for whole-exome (WES) or whole-genome sequencing (WGS).

- Metabolite Extraction (Parallel): A separate aliquot of tissue powder is quenched with cold methanol/acetonitrile/water mixture, vortexed, and centrifuged. The supernatant is dried and reconstituted for LC-MS metabolomics.

Diagram Title: Multi-Omics Sample Prep Workflow

Key Signaling Pathway in Multi-Omics Context

A canonical pathway like PI3K-AKT-mTOR demonstrates the need for integration. A genomic variant in PIK3CA (encoding PI3K) may be identified, but its functional consequence requires measuring phosphorylated AKT (p-AKT) and p-S6K in phosphoproteomics, and downstream metabolic shifts like increased glycolytic intermediates in metabolomics.

Diagram Title: PI3K Pathway Multi-Omics Regulation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Multi-Omics Integration Studies

| Item | Function in Multi-Omics |

|---|---|

| Tri-Reagent (Monophasic Lysis Buffer) | Enables simultaneous isolation of RNA, DNA, and protein from a single sample, critical for matched multi-omics. |

| Stable Isotope Labeling by Amino Acids in Cell Culture (SILAC) | Mass spectrometry-based proteomics method using heavy amino acids to provide accurate, quantitative protein and phosphorylation data across conditions. |

| Single-Cell Multi-Omics Kits (e.g., CITE-seq/REAP-seq) | Allow simultaneous measurement of the transcriptome and surface protein abundance in single cells, linking gene expression to phenotypic markers. |

| Next-Generation Sequencing (NGS) Kits | For whole genome, exome, and transcriptome library preparation. Paired sequencing of DNA and RNA from the same sample is standard for integration. |

| Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) Columns | Core hardware for separating and identifying complex mixtures of peptides (proteomics) or metabolites (metabolomics). |

| Multi-Omics Data Integration Software (e.g., MOFA, mixOmics) | Statistical and machine learning frameworks designed specifically for the joint analysis of multiple omics datasets. |

Moving beyond the linear Central Dogma requires a paradigm shift towards multi-omics integration. Siloed analyses miss the emergent properties arising from interactions across molecular layers. By employing robust, matched sample protocols, leveraging complementary reagent solutions, and utilizing integrative computational frameworks, researchers can construct a more holistic, causal, and actionable understanding of biological systems, accelerating biomarker discovery and therapeutic development.

This section sets out the core objectives that drive the integration of disparate biological data layers. The transition from descriptive systems biology to predictive, mechanistic modeling represents a paradigm shift in biomedical research and therapeutic development. The section outlines the key goals, technical methodologies, and practical resources essential for this endeavor.

Core Goals of Multi-Omics Integration

The integration of genomics, transcriptomics, proteomics, metabolomics, and epigenomics data is pursued with several interconnected, high-level goals.

- Holistic System Characterization: To move beyond single-molecule or single-pathway analyses and construct a comprehensive, multi-scale view of biological systems, from cells to tissues.

- Mechanistic Insight Discovery: To infer and validate causal regulatory relationships and signaling cascades that link genetic variation to phenotypic outcomes.

- Biomarker Identification & Validation: To discover robust, composite biomarkers (e.g., multi-omic signatures) with superior diagnostic, prognostic, or predictive power compared to single-omics markers.

- Predictive Model Construction: To build in silico models capable of simulating system perturbations (e.g., drug treatment, gene knockout) and predicting phenotypic responses.

- Clinical Translation & Personalization: To stratify patient populations, identify novel therapeutic targets, and inform personalized treatment strategies based on integrated molecular profiles.

Quantitative Landscape of Multi-Omics Studies

The scale and complexity of integrated studies are reflected in the following quantitative summaries.

Table 1: Typical Data Scale in Multi-Omics Studies

| Omics Layer | Typical Features per Sample | Common Sequencing/Assay Depth | Primary Technology Platform |

|---|---|---|---|

| Genomics (WGS) | ~5M variants (SNVs/Indels) | 30-60x coverage | Illumina NovaSeq, PacBio HiFi |

| Transcriptomics (RNA-seq) | 20,000-60,000 transcripts | 20-50M reads per sample | Illumina NextSeq, scRNA-seq |

| Proteomics (Mass Spec) | 5,000-10,000 proteins | ~120min LC-MS/MS gradient | Thermo Orbitrap Exploris, TMT labeling |

| Metabolomics | 500-2,000 metabolites | MS1 & MS/MS acquisition | Agilent Q-TOF, Waters ACQUITY |

| Epigenomics (ATAC-seq) | 50,000-150,000 peaks | 50-100M reads per sample | Illumina NextSeq, Assay for Transposase-Accessible Chromatin |
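
The coverage figures in the table follow from simple arithmetic: mean coverage is the total number of sequenced bases divided by the genome size. The sketch below works this through for the 30x WGS case, assuming a human genome of roughly 3.1 Gb and 150 bp reads; both values are assumptions chosen for illustration.

```python
import math


def mean_coverage(n_reads: int, read_length_bp: int, genome_size_bp: int) -> float:
    """Mean depth of coverage = sequenced bases / genome size."""
    return n_reads * read_length_bp / genome_size_bp


def reads_for_coverage(target_coverage: float, read_length_bp: int,
                       genome_size_bp: int) -> int:
    """Number of reads needed to reach a target mean coverage."""
    return math.ceil(target_coverage * genome_size_bp / read_length_bp)


GENOME_BP = 3_100_000_000  # assumed approximate human genome size
READ_LENGTH_BP = 150       # assumed read length

n_reads = reads_for_coverage(30, READ_LENGTH_BP, GENOME_BP)
print(f"Reads for 30x: {n_reads:,} ({n_reads // 2:,} read pairs)")
print(f"Check: {mean_coverage(n_reads, READ_LENGTH_BP, GENOME_BP):.1f}x")
```

Under these assumptions, 30x coverage corresponds to about 620 million reads, or 310 million read pairs. Real yields must be higher to account for duplicates and unmapped reads.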

Table 2: Performance Metrics of Common Integration Methods

| Integration Method Class | Example Algorithm | Key Strength | Typical Computation Time* (for n=1000, p=5000) | Primary Goal Addressed |

|---|---|---|---|---|

| Latent Factor Model (Matrix Factorization) | MOFA+ | Handles missing data, extracts latent factors | 30-60 minutes | 1, 2 |

| Similarity-Based | Similarity Network Fusion (SNF) | Preserves data-specific structures, good for clustering | 15-30 minutes | 1, 3 |

| Manifold Alignment | MMD-MA | Aligns heterogeneous data in common low-dim space | 2-4 hours | 1, 4 |

| Deep Learning (DL) | Autoencoder-based | Captures non-linear relationships, powerful for prediction | 4-8 hours (GPU-dependent) | 2, 4, 5 |

| Bayesian Networks | Multi-omics Bayesian Network (MOBN) | Infers directed, causal relationships | 8-12 hours | 2, 4 |

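*Computation time is indicative and varies with hardware, data sparsity, and parameter tuning. Goal numbers refer to the Core Goals of Multi-Omics Integration in the order listed above (1 = Holistic System Characterization through 5 = Clinical Translation & Personalization).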

Detailed Experimental Protocol: A Multi-Omics Workflow for Drug Response Prediction

This protocol details a representative study integrating transcriptomics and proteomics to model cancer cell line response to a kinase inhibitor.

1. Experimental Design & Sample Preparation

- Cell Lines: Use a panel of 50 genetically diverse cancer cell lines (e.g., from NCI-60 or CCLE).

- Treatment: Treat each cell line with a targeted kinase inhibitor (e.g., Trametinib, a MEK inhibitor) at its IC50 concentration and a DMSO vehicle control for 24 hours.

- Replicates: Perform three biological replicates per condition.

2. Multi-Omics Data Generation

- Transcriptomics (RNA-seq):

- Lysis & Extraction: Lyse cells in TRIzol. Isolate total RNA using silica-membrane columns. Assess integrity (RIN > 8.5, Agilent Bioanalyzer).

- Library Prep: Deplete ribosomal RNA. Prepare stranded cDNA libraries using a kit (e.g., Illumina Stranded Total RNA Prep).

- Sequencing: Pool libraries and sequence on an Illumina NextSeq 2000 platform to a depth of 30 million 150bp paired-end reads per sample.

- Proteomics (LC-MS/MS):

- Lysis & Digestion: Lyse cell pellets in RIPA buffer with protease inhibitors. Reduce, alkylate, and digest proteins with trypsin (1:50 enzyme-to-protein ratio, 37°C, overnight).

- Peptide Labeling (Optional): Use TMTpro 16plex kits to multiplex samples, reducing batch effects.

- LC-MS/MS: Fractionate peptides via high-pH reversed-phase chromatography. Analyze fractions on a Thermo Orbitrap Exploris 480 coupled to a nanoLC system (120min gradient). Use data-dependent acquisition (DDA) with MS1 resolution 120,000 and MS2 45,000.

3. Data Processing & Bioinformatics

- RNA-seq Analysis:

- Alignment & Quantification: Align reads to the human reference genome (GRCh38) using STAR aligner. Quantify gene-level counts with featureCounts.

- Differential Expression: Using the DESeq2 R package, normalize counts (median-of-ratios) and identify differentially expressed genes (DEGs) between treatment and control (FDR-adjusted p-value < 0.05, |log2FC| > 1).

- Proteomics Analysis:

- Identification & Quantification: Process raw files with MaxQuant or FragPipe. Search against the UniProt human database. Use a 1% FDR cutoff at peptide and protein levels.

- Differential Abundance: Perform statistical testing (e.g., with the limma R package) on log2-transformed protein intensities to find differentially abundant proteins (DAPs) (FDR < 0.05).
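
To make the median-of-ratios normalization named in the RNA-seq step concrete, the following is a simplified Python sketch of the idea, not the DESeq2 implementation itself. For each gene it forms a geometric-mean reference across samples, then takes each sample's median ratio to that reference as its size factor. Genes with a zero count in any sample are skipped, and the tiny count matrix is invented.

```python
import math
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors.

    counts: list of genes, each a list of raw counts (one per sample).
    """
    n_samples = len(counts[0])
    # Use only genes with non-zero counts in every sample,
    # so that the log geometric mean is defined.
    log_rows = [[math.log(c) for c in row]
                for row in counts if all(c > 0 for c in row)]
    log_geo_means = [sum(row) / n_samples for row in log_rows]
    factors = []
    for j in range(n_samples):
        log_ratios = [row[j] - g for row, g in zip(log_rows, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors


# Invented counts: sample 2 was sequenced about twice as deeply as sample 1.
raw_counts = [
    [10, 20],
    [100, 200],
    [5, 10],
    [0, 7],  # skipped: contains a zero
]
factors = size_factors(raw_counts)
normalized = [[c / f for c, f in zip(row, factors)] for row in raw_counts]
print([round(f, 3) for f in factors])
```

Because the median is used, a handful of strongly regulated genes does not distort the size factors, which is the main motivation for this scheme over total-count scaling.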

4. Data Integration & Modeling

- Pre-integration: Match gene and protein identifiers. Impute missing protein values (if any) using k-nearest neighbors. Scale and center all features.

- Integration & Feature Reduction: Apply Multi-Omics Factor Analysis (MOFA+) to the DEG and DAP matrices, supplied as separate views over the same samples. This extracts a set of latent factors that capture variance shared across, or specific to, the two omics layers.

- Predictive Model Training: Use the latent factors from MOFA+ as input features (X). The output variable (Y) is the continuous drug response metric (e.g., -log10(IC50)) from public pharmacogenomics databases (e.g., GDSC). Train a regression model (e.g., Elastic Net, Random Forest, or XGBoost) using 70% of cell lines. Tune hyperparameters via 5-fold cross-validation.

- Validation: Evaluate the model on the held-out 30% test set. Calculate performance metrics: Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and R-squared.
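
The hold-out split and the three validation metrics can be sketched in plain Python as follows. The helper names, the random seed, and the example values are illustrative assumptions; in practice the predictions would come from the trained regression model.

```python
import math
import random


def split_indices(n, train_fraction=0.7, seed=0):
    """Shuffle indices 0..n-1 and split them into train and test sets."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    cut = int(round(n * train_fraction))
    return indices[:cut], indices[cut:]


def regression_metrics(y_true, y_pred):
    """Return RMSE, MAE, and R-squared for paired true/predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1 - ss_res / ss_tot,
    }


# 50 cell lines, as in the experimental design: 35 for training, 15 held out.
train_idx, test_idx = split_indices(50)

# Invented true and predicted -log10(IC50) values for four held-out lines.
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0])
print(metrics)
```

One design point worth noting: the split is made by cell line, so that replicates of the same line never appear on both sides of the split.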

Pathway and Workflow Visualizations

Diagram 1: MAPK Pathway & Multi-Omics Measurement

Diagram 2: Predictive Multi-Omics Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for a Multi-Omics Study

| Category | Item | Function & Brief Explanation |

|---|---|---|

| Sample Prep | TRIzol Reagent | A mono-phasic solution of phenol and guanidine isothiocyanate for simultaneous disruption of cells and denaturation of proteins, ideal for co-extracting RNA, DNA, and proteins. |

| Sample Prep | RIPA Lysis Buffer | A radioimmunoprecipitation assay buffer for efficient cell lysis and extraction of total cellular proteins, compatible with downstream proteomic digestion. |

| Sample Prep | Trypsin, Sequencing Grade | A protease that cleaves peptide chains at the carboxyl side of lysine and arginine residues, generating peptides suitable for LC-MS/MS analysis. |

| Transcriptomics | Illumina Stranded Total RNA Prep | A library preparation kit that includes ribosomal RNA depletion and strand-specific cDNA synthesis for high-quality RNA-seq libraries. |

| Proteomics | TMTpro 16plex Isobaric Label Reagent Set | Chemical tags for multiplexing up to 16 samples in a single LC-MS/MS run, enabling quantitative comparison and reducing instrumental run time. |

| Proteomics | C18 StageTips (or Columns) | Microcolumns packed with reversed-phase C18 material for desalting and concentrating peptide samples prior to LC-MS/MS injection. |

| Bioinformatics | R/Bioconductor Packages (DESeq2, limma, MOFA2) | Open-source software tools for statistical analysis of differential expression, differential abundance, and multi-omics factor integration. |

| Data Storage | High-Performance Computing (HPC) Cluster or Cloud (AWS/GCP) | Essential for storing large sequencing files (~TB scale) and performing computationally intensive integration and modeling tasks. |

Within the framework of multi-omics integration research, a fundamental prerequisite is a deep understanding of the distinct core data types generated by each major omics layer. Each layer provides a unique, high-dimensional snapshot of biological activity, from the static genomic blueprint to the dynamic metabolomic state. This technical overview delineates the nature of the primary data, the key technologies for their generation, their inherent analytical challenges, and the implications for their integration, serving as a foundation for methodological development in systems biology and precision medicine.

The Major Omics Layers: Data Types and Characteristics

Genomics

Genomics concerns the complete set of DNA within an organism, including all genes and non-coding sequences. It represents the foundational, largely static blueprint.

Core Data Type: DNA sequences (strings of A, T, C, G nucleotides). Primary outputs include reference-aligned reads (BAM files), variant calls (VCF files), and assembled genomes (FASTA).

Key Technologies: Next-Generation Sequencing (NGS), including Whole Genome Sequencing (WGS) and Targeted Panels; Third-Generation Sequencing (e.g., PacBio, Oxford Nanopore) for long reads.

Unique Challenges:

- Data Scale: A single human WGS run generates ~100-150 GB of raw data.

- Variant Interpretation: Distinguishing pathogenic mutations from benign polymorphisms is non-trivial.

- Structural Variants: Detection of large insertions, deletions, and rearrangements remains challenging with short-read technologies.

Transcriptomics

Transcriptomics profiles the complete set of RNA transcripts (the transcriptome) produced in a cell or population at a specific time point, reflecting active gene expression.

Core Data Type: RNA sequence reads (RNA-seq) or probe intensity values (microarrays). Key outputs are read counts or normalized expression values (e.g., TPM, FPKM) per gene/transcript.

Key Technologies: Bulk RNA-seq, Single-Cell RNA-seq (scRNA-seq), Spatial Transcriptomics.

Unique Challenges:

- Dynamic Range: Expression levels can span several orders of magnitude.

- Transcript Isoforms: Accurate quantification of alternative splicing events requires specialized library prep and analysis.

- Single-Cell Sparsity: scRNA-seq data is characterized by high technical noise and "dropout" events (zero counts for expressed genes).
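
The TPM values mentioned under Core Data Type are derived from raw counts in two steps: divide each count by the transcript length in kilobases, then rescale so the sample sums to one million. A minimal sketch with invented counts and lengths:

```python
def tpm(counts, lengths_bp):
    """Transcripts per million from raw counts and transcript lengths (bp)."""
    # Step 1: length-normalize to reads per kilobase.
    rates = [count / (length / 1000) for count, length in zip(counts, lengths_bp)]
    # Step 2: scale so that the values in the sample sum to one million.
    total = sum(rates)
    return [rate / total * 1e6 for rate in rates]


# Invented example: counts proportional to length, so all TPM values are equal.
counts = [100, 200, 300]
lengths_bp = [1000, 2000, 3000]
values = tpm(counts, lengths_bp)
print([round(v, 1) for v in values])
```

The three transcripts have different raw counts but identical TPM, because the longer transcripts simply generate proportionally more fragments.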

Proteomics

Proteomics identifies and quantifies the complete set of proteins (the proteome), which are the functional effectors of cellular processes.

Core Data Type: Mass-to-charge (m/z) ratios and intensity spectra from mass spectrometers. Outputs are peptide/protein identification and abundance values.

Key Technologies: Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS), Data-Independent Acquisition (DIA), Antibody-based arrays (e.g., Olink).

Unique Challenges:

- Dynamic Range & Detection: Protein abundances span many orders of magnitude (more than 10 in plasma), making low-abundance proteins difficult to detect.

- Post-Translational Modifications (PTMs): Comprehensive profiling of PTMs (e.g., phosphorylation) requires specific enrichment techniques.

- No Direct Amplification: Unlike DNA/RNA, proteins cannot be amplified, limiting sensitivity.

Metabolomics

Metabolomics measures the collection of small-molecule metabolites (e.g., sugars, lipids, amino acids) within a biological system, representing the downstream functional readout of cellular processes.

Core Data Type: Spectra from Nuclear Magnetic Resonance (NMR) or m/z spectra from Mass Spectrometry (MS). Outputs are metabolite identification and relative/absolute concentrations.

Key Technologies: LC-MS, Gas Chromatography-MS (GC-MS), NMR Spectroscopy.

Unique Challenges:

- Chemical Diversity: Metabolites are highly diverse in structure and chemical properties, requiring multiple analytical platforms.

- Rapid Turnover: Metabolites can change on a sub-second timescale, demanding careful sample quenching.

- Annotation & Identification: A large proportion of detected spectral features remain unknown or unannotated.

Epigenomics

Epigenomics studies heritable changes in gene function that do not involve changes in the DNA sequence itself, such as DNA methylation and histone modifications.

Core Data Type: Sequencing reads from enriched DNA fragments (ChIP-seq) or bisulfite-converted DNA (WGBS). Outputs include peak calls for protein binding sites or methylation ratios at cytosine bases.

Key Technologies: Chromatin Immunoprecipitation Sequencing (ChIP-seq), Assay for Transposase-Accessible Chromatin (ATAC-seq), Whole-Genome Bisulfite Sequencing (WGBS).

Unique Challenges:

- Cell-Type Specificity: Epigenetic marks are highly cell-type specific, complicating bulk tissue analysis.

- Bisulfite Conversion Artifacts: WGBS can suffer from DNA degradation and incomplete conversion.

- Data Integration: Relating histone marks or chromatin accessibility to gene expression is complex.

Table 1: Quantitative and qualitative comparison of core omics data types.

| Omics Layer | Core Molecule | Typical Data Volume per Sample | Temporal Dynamics | Primary Technological Platform | Key File Formats |

|---|---|---|---|---|---|

| Genomics | DNA | 100-150 GB (WGS) | Static (mostly) | NGS (Illumina), Long-Read Seq | FASTQ, BAM, VCF, FASTA |

| Transcriptomics | RNA | 5-50 GB (RNA-seq) | High (minutes-hours) | RNA-seq (Illumina) | FASTQ, BAM, TXT/CSV (count matrix) |

| Proteomics | Proteins | 1-10 GB (LC-MS/MS) | Moderate (hours) | LC-MS/MS | .raw (vendor), mzML, mzIdentML |

| Metabolomics | Metabolites | 0.5-5 GB (LC-MS) | Very High (seconds-minutes) | LC-MS, GC-MS, NMR | .raw (vendor), mzML, CDF |

| Epigenomics | DNA/Histones | 20-100 GB (ChIP-seq/WGBS) | Moderate to High | NGS (Illumina) | FASTQ, BAM, BED, bigWig |

Protocol 1: Bulk RNA-Sequencing (Standard Poly-A Selection)

Objective: To profile the polyadenylated transcriptome in a bulk tissue or cell population.

- RNA Extraction & QC: Isolate total RNA using a guanidinium thiocyanate-phenol-chloroform method (e.g., TRIzol). Assess integrity via RIN (RNA Integrity Number) on a Bioanalyzer.

- Poly-A Selection: Use oligo(dT) magnetic beads to enrich for messenger RNA (mRNA).

- Library Preparation: Fragment mRNA, synthesize cDNA, add sequencing adapters, and perform PCR amplification. Barcode samples for multiplexing.

- Sequencing: Pool libraries and sequence on an Illumina platform (e.g., NovaSeq) to generate 50-150 bp paired-end reads.

- Primary Analysis: Align reads to a reference genome (e.g., STAR aligner) and quantify gene-level counts (e.g., featureCounts).

Protocol 2: Data-Independent Acquisition (DIA) Proteomics

Objective: To achieve reproducible, comprehensive protein quantification.

- Sample Lysis & Digestion: Lyse cells/tissue in a denaturing buffer (e.g., 8M Urea). Reduce disulfide bonds (DTT), alkylate cysteines (IAA), and digest proteins with trypsin (1:50 enzyme-to-protein ratio, 37°C, overnight).

- Peptide Desalting: Desalt peptides using C18 solid-phase extraction tips or columns.

- LC-MS/MS Analysis:

- Chromatography: Separate peptides on a C18 reversed-phase nanoLC column with a 60-180 minute gradient.

- Mass Spectrometry (DIA Mode): Operate the mass spectrometer (e.g., Orbitrap) in cycles of one full MS1 scan followed by ~20-40 sequential MS2 scans across predefined, wide m/z isolation windows (e.g., 25 Da each) covering the entire mass range of interest.

- Data Analysis: Use spectral library-based (e.g., Spectronaut, DIA-NN) or library-free tools to deconvolute the multiplexed DIA MS2 spectra and quantify peptides/proteins.
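
The DIA isolation scheme described in the mass spectrometry step can be sketched as a simple tiling of the precursor range. The 400-1200 m/z range below is an assumed example, and the sketch uses fixed-width, non-overlapping windows only; real methods often use overlapping or variable-width windows.

```python
def dia_windows(mz_start: float, mz_end: float, width: float):
    """Tile [mz_start, mz_end] with contiguous isolation windows of a fixed width."""
    windows = []
    lower = mz_start
    while lower < mz_end:
        upper = min(lower + width, mz_end)
        windows.append((lower, upper))
        lower = upper
    return windows


# Assumed precursor range of 400-1200 m/z with 25 Da windows.
windows = dia_windows(400.0, 1200.0, 25.0)
print(len(windows), windows[0], windows[-1])
```

With these assumptions the cycle contains 32 MS2 scans, within the 20-40 range quoted in the protocol. Narrower windows improve selectivity but lengthen the cycle time.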

Protocol 3: Whole-Genome Bisulfite Sequencing (WGBS)

Objective: To generate single-base-pair resolution maps of DNA methylation (5-methylcytosine).

- DNA Extraction & Fragmentation: Isolate high-molecular-weight genomic DNA and fragment it via sonication (200-300 bp).

- Bisulfite Conversion: Treat DNA with sodium bisulfite, which deaminates unmethylated cytosines to uracil, while methylated cytosines remain unchanged.

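- Library Preparation: Repair ends, add adapters to bisulfite-converted DNA, and perform PCR amplification. Note: In this workflow adapters are added after conversion (post-bisulfite adapter tagging), which avoids losing adapter-ligated fragments to bisulfite-induced DNA degradation. If adapters are instead ligated before conversion, they must be methylated so that their sequence survives bisulfite treatment.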

- Sequencing & Analysis: Sequence on an Illumina platform. Align reads using bisulfite-aware aligners (e.g., Bismark, BWA-meth). Calculate methylation percentage as (C reads / (C + T reads)) at each cytosine position in the reference.
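
The methylation calculation in the last step can be written out directly. The sketch below applies C / (C + T) per cytosine and adds a minimum-coverage filter; the threshold of 5 reads and the site counts are assumptions for illustration, not part of the protocol above.

```python
def methylation_level(c_reads: int, t_reads: int, min_coverage: int = 5):
    """Fraction of reads supporting methylation at one cytosine.

    After bisulfite conversion, a C read indicates a methylated cytosine and a
    T read an unmethylated one. Returns None when coverage is too low to call.
    """
    coverage = c_reads + t_reads
    if coverage < min_coverage:
        return None
    return c_reads / coverage


# Invented (C reads, T reads) counts at three reference cytosines.
site_counts = {
    ("chr1", 1000): (18, 2),
    ("chr1", 1010): (0, 25),
    ("chr1", 1020): (2, 1),
}
levels = {site: methylation_level(c, t) for site, (c, t) in site_counts.items()}
for site, level in levels.items():
    print(site, "not called" if level is None else f"{level:.0%}")
```

The third site has only three reads, so it is left uncalled rather than reported as 67% methylated.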

Visualizing Omics Workflows and Relationships

Diagram 1: Central Dogma & Omics Layers Relationship

Diagram 2: Generalized Multi-Omics Experimental & Computational Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key reagents and materials for core omics experiments.

| Category / Item | Specific Example(s) | Primary Function in Omics Workflow |

|---|---|---|

| Nucleic Acid Isolation | TRIzol Reagent, Qiagen DNeasy/ RNeasy Kits, Magnetic Beads (SPRI) | Lyse cells and separate/purify DNA or RNA based on chemical or physical properties. |

| Protein Digestion | Trypsin (Sequencing Grade), Lys-C, RapiGest SF Surfactant | Enzymatically cleave proteins into peptides for LC-MS/MS analysis. |

| Bisulfite Conversion | EZ DNA Methylation Kit (Zymo), Sodium Bisulfite Solution | Chemically convert unmethylated cytosine to uracil for methylation sequencing. |

| Chromatin Immunoprecipitation | Protein A/G Magnetic Beads, ChIP-Validated Antibodies (e.g., H3K27ac), Formaldehyde | Cross-link and immuno-enrich specific protein-DNA complexes for sequencing. |

| Metabolite Extraction | Methanol, Acetonitrile, Methyl-tert-butyl ether (MTBE) | Precipitate proteins and extract a broad range of polar and non-polar metabolites. |

| Mass Spec Standards | iRT Kit (Biognosys), Stable Isotope Labeled Amino Acids (SILAC), Heavy Labeled Metabolites | Provide internal retention time and quantification standards for LC-MS calibration. |

| Sequencing Library Prep | Illumina TruSeq Kits, NEBNext Ultra II DNA Library Kit, SMARTer cDNA Synthesis Kit | Prepare fragmented, adapter-ligated DNA/RNA libraries compatible with NGS platforms. |

| Single-Cell Isolation | Chromium Controller & Chips (10x Genomics), FACS Sorter | Partition individual cells or nuclei into droplets or wells for barcoding. |

Within the broader topic of multi-omics integration methods, a robust foundation in bioinformatics and statistics is not merely beneficial; it is indispensable. Multi-omics integration aims to synthesize data from genomics, transcriptomics, proteomics, metabolomics, and other layers to construct a holistic model of biological systems, an endeavor that underpins modern drug discovery and systems biology. This guide details the core knowledge and practical methodologies required to begin this research.

Foundational Statistical Knowledge

A deep understanding of statistical concepts is critical for experimental design, data preprocessing, and inferential analysis in multi-omics studies.

Core Statistical Concepts

The following table summarizes the essential statistical areas and their application in multi-omics research.

Table 1: Core Statistical Prerequisites for Multi-Omics Research

| Statistical Domain | Key Concepts | Application in Multi-Omics |

|---|---|---|

| Probability & Distributions | Bayes' Theorem, Binomial, Poisson, Gaussian, Gamma, Beta distributions. | Modeling read counts (Negative Binomial for RNA-seq), prior knowledge integration in Bayesian models. |

| Hypothesis Testing & Correction | p-values, Type I/II error, False Discovery Rate (FDR), Bonferroni correction. | Differential expression/abundance analysis across thousands of features. |

| Multivariate Statistics | Principal Component Analysis (PCA), Multidimensional Scaling (MDS), Canonical Correlation Analysis (CCA). | Dimensionality reduction, visualization, and initial data integration. |

| Regression & Modeling | Linear/Generalized Linear Models (GLM), Logistic Regression, Regularization (LASSO, Ridge). | Modeling relationships between omics layers and phenotypic outcomes. |

| Machine Learning Fundamentals | Supervised (Random Forest, SVM) vs. Unsupervised (Clustering, k-means) learning; Cross-validation; Overfitting. | Predictive model building for patient stratification or clinical outcome prediction. |

Experimental Protocol: Differential Expression Analysis (RNA-seq)

A canonical application of statistical inference in omics.

Protocol: DESeq2 Workflow for Differential Gene Expression

- Data Input: Raw count matrix (genes x samples) and sample metadata (e.g., condition, batch).

- Normalization: DESeq2 performs median-of-ratios normalization to correct for library size and RNA composition bias.

- Model Fitting: A Negative Binomial GLM is fitted to the count data: Count ~ Condition + Batch.

- Dispersion Estimation: Gene-wise dispersion estimates are shrunk towards a trended mean to improve stability.

- Hypothesis Testing: The Wald test or Likelihood Ratio Test (LRT) is applied to coefficients of interest (e.g., Condition B vs. A). p-values are generated.

- Multiple Testing Correction: The Benjamini-Hochberg procedure is applied to control the False Discovery Rate (FDR). Genes with an adjusted p-value (padj) < 0.05 are typically considered significant.

- Output: A results table with log2 fold changes, standard errors, test statistics, p-values, and adjusted p-values.
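Two steps of this workflow are simple enough to sketch directly: median-of-ratios size factors and the Benjamini-Hochberg adjustment. The NumPy code below is an illustrative re-implementation under simplifying assumptions (genes with any zero count are excluded from the size-factor reference), not DESeq2's own code; the function names and toy inputs are hypothetical.

```python
import numpy as np


def size_factors(counts):
    """Median-of-ratios size factors for a genes x samples count matrix."""
    counts = np.asarray(counts, dtype=float)
    expressed = np.all(counts > 0, axis=1)            # keep genes with no zero counts
    log_counts = np.log(counts[expressed])
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)  # per-gene pseudo-reference
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)       # p_(i) * m / i
    # Enforce monotonicity, working down from the largest p-value
    adjusted = np.minimum.accumulate(scaled[::-1])[::-1]
    out = np.empty(m)
    out[order] = np.minimum(adjusted, 1.0)
    return out


# Toy counts: sample 2 was sequenced at twice the depth of sample 1
toy_counts = [[10, 20], [100, 200], [5, 10], [0, 3]]
sf = size_factors(toy_counts)

padj = benjamini_hochberg([0.01, 0.04, 0.03, 0.005])
significant = padj < 0.05
```

In the toy matrix the two size factors differ by a factor of two, so dividing each column by its size factor puts the samples on a common scale before modeling.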

Diagram 1: DESeq2 differential expression analysis workflow.

Foundational Bioinformatics Knowledge

Bioinformatics provides the computational frameworks and biological context to process and interpret omics data.

Core Bioinformatics Competencies

Table 2: Essential Bioinformatics Competencies

| Competency Area | Specific Skills & Knowledge | Relevance to Multi-Omics |

|---|---|---|

| Molecular Biology | Central Dogma, gene regulation, epigenetics, pathway biology (e.g., KEGG, Reactome). | Provides biological meaning to integrated data; essential for interpreting results. |

| Programming | Proficiency in R and/or Python; bash/shell scripting for pipeline management. | Data manipulation, statistical analysis, and custom tool development. |

| Data Structures & Formats | FASTQ, SAM/BAM, VCF, GTF/GFF, MTX, HDF5. FASTA/FASTQ parsing, sequence alignment principles. | Handling raw and processed data from diverse omics technologies. |

| Databases & Resources | NCBI, EBI, UCSC Genome Browser, UniProt, STRING, TCGA, GTEx, Human Protein Atlas. | Accessing reference genomes, annotations, and public datasets for validation. |

| Pipeline & Workflow Tools | Snakemake, Nextflow, WDL, Docker/Singularity. | Ensuring reproducibility and scalability of analyses. |

Experimental Protocol: Read Alignment and Quantification

A fundamental upstream bioinformatics step for sequencing-based omics (genomics, transcriptomics).

Protocol: RNA-seq Read Alignment and Gene Quantification using STAR

- Prerequisite: Generate a genome index.

STAR --runMode genomeGenerate --genomeDir /path/to/index --genomeFastaFiles genome.fa --sjdbGTFfile annotation.gtf --sjdbOverhang 100

- Alignment: Map reads to the reference genome.

STAR --genomeDir /path/to/index --readFilesIn sample_R1.fastq.gz sample_R2.fastq.gz --readFilesCommand zcat --runThreadN 8 --outSAMtype BAM SortedByCoordinate --outFileNamePrefix sample_

- Quantification: Generate read counts per gene. Use the --quantMode GeneCounts flag during alignment or a tool like featureCounts (from the Subread package) on the aligned BAM file. With Subread 2.0.2 or later, also pass --countReadPairs so that fragments rather than individual reads are counted for paired-end data.

featureCounts -T 8 -p -a annotation.gtf -o counts.txt sample_Aligned.sortedByCoord.out.bam

- Output: A counts matrix ready for statistical analysis (as in the DESeq2 workflow above).
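To hand the quantification result to a statistics package, the featureCounts table has to be reduced to gene identifiers and per-sample counts. The sketch below assumes the standard featureCounts layout (a leading comment line starting with `#`, then the columns Geneid, Chr, Start, End, Strand, Length, followed by one column per BAM file); the example content and the function name `read_featurecounts` are hypothetical.

```python
import csv
import io


def read_featurecounts(handle):
    """Parse featureCounts output into (sample_names, {gene_id: [counts]})."""
    data_lines = (line for line in handle if not line.startswith("#"))
    rows = csv.reader(data_lines, delimiter="\t")
    header = next(rows)
    samples = header[6:]  # first six columns are gene annotation, not counts
    counts = {row[0]: [int(x) for x in row[6:]] for row in rows if row}
    return samples, counts


# Hypothetical two-sample output, held in memory instead of counts.txt
example = io.StringIO(
    "# Program:featureCounts (comment line written by the tool)\n"
    "Geneid\tChr\tStart\tEnd\tStrand\tLength\tsampleA.bam\tsampleB.bam\n"
    "GENE1\tchr1\t100\t900\t+\t801\t120\t98\n"
    "GENE2\tchr2\t50\t450\t-\t401\t0\t7\n"
)

samples, counts = read_featurecounts(example)
for gene, values in counts.items():
    print(gene, dict(zip(samples, values)))
```

For a real run, the same function can be called on an open file handle, for example `with open("counts.txt") as fh: samples, counts = read_featurecounts(fh)`.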

Diagram 2: RNA-seq alignment and quantification workflow.

The Multi-Omics Integration Mindset

Integration itself requires specialized conceptual and methodological bridges between statistics and bioinformatics.

Integration Approaches & Statistical Frameworks

Table 3: Common Multi-Omics Integration Methods

| Integration Approach | Description | Key Statistical/Bioinformatics Methods |

|---|---|---|

| Concatenation (Early) | Datasets merged at the feature level before analysis. | PCA on scaled data; Multi-block PLS; Deep Autoencoders. |

| Network-Based | Relationships between omics features modeled as a graph. | Correlation networks (WGCNA), Bayesian networks, Knowledge-graphs. |

| Matrix Factorization | Joint decomposition of multiple data matrices into lower-dimensional factors. | Joint Non-negative Matrix Factorization (jNMF), Multi-Omics Factor Analysis (MOFA). |

| Similarity-Based (Late) | Analyses performed separately, then results integrated. | Kernel fusion, Similarity Network Fusion (SNF). |

Diagram 3: Conceptual approaches to multi-omics data integration.

The Scientist's Toolkit

Table 4: Research Reagent Solutions & Essential Tools

| Item / Tool | Category | Function in Multi-Omics Research |

|---|---|---|

| RStudio / Posit | Software Environment | Integrated development environment for R, essential for statistical analysis and visualization (ggplot2). |

| Jupyter Notebook / Lab | Software Environment | Interactive development for Python, enabling literate programming and sharing of analysis narratives. |

| Bioconductor | Software Repository | Vast collection of R packages for the analysis and comprehension of high-throughput genomic data (e.g., DESeq2, limma). |

| Conda / Bioconda | Package Manager | Manages isolated software environments and provides thousands of bioinformatics tools, ensuring reproducibility. |

| Docker / Singularity | Containerization | Packages an entire analysis pipeline (OS, code, data) into a portable, reproducible container. |

| STAR | Bioinformatics Tool | Splice-aware ultrafast aligner for RNA-seq reads, a standard for alignment. |

| MOFA+ | Bioinformatics Tool | Statistical framework for multi-omics integration via factor analysis to uncover latent biological processes. |

| STRING API | Database Resource | Programmatic access to protein-protein interaction networks, providing functional context for proteomics/genomics lists. |

| KEGG REST API | Database Resource | Allows retrieval of pathway maps and gene annotation data for enrichment analysis. |

| MultiQC | Quality Control Tool | Aggregates results from bioinformatics analyses across many samples into a single interactive report. |

From Theory to Bench: A Practical Guide to Multi-Omics Integration Techniques and Real-World Use Cases

This whitepaper presents an in-depth technical guide to multi-omics data integration frameworks. The convergence of genomics, transcriptomics, proteomics, and metabolomics promises transformative insights into complex biological systems and disease mechanisms. The choice of integration strategy (early, intermediate, or late) is a fundamental decision that shapes downstream analytical power, interpretability, and success in applications such as biomarker discovery and drug development.

Core Integration Frameworks: A Technical Synopsis

Integration strategies are primarily classified by the stage at which disparate omics datasets are combined.

Early Integration (Data-Level): Raw or pre-processed data from multiple omics platforms are concatenated into a single composite matrix before model construction. All features are then fed into one multivariate model, such as a deep neural network or a tree-based ensemble, which can detect complex, non-linear interactions across molecular layers from the outset.

Intermediate Integration (Feature-Level): This framework involves transforming individual omics datasets into lower-dimensional spaces or similarity matrices (kernels) before integration. Methods like Multiple Kernel Learning (MKL) or Statistically Inspired Modification of PLS (SIMPLS) model each omics layer separately and then fuse the transformed representations. It balances flexibility with the preservation of data-type-specific structures.

Late Integration (Decision-Level): Models are built independently on each omics dataset. Their outputs—such as predicted labels, risk scores, or selected features—are combined in a final meta-analysis (e.g., via ensemble voting or rank aggregation). This strategy is highly modular and leverages the strengths of domain-specific models but cannot model cross-omic interactions directly.

Quantitative Comparison of Integration Frameworks

The choice of framework entails critical trade-offs in performance, interpretability, and computational demand. The following table synthesizes quantitative findings from recent benchmark studies (2023-2024).

Table 1: Comparative Analysis of Multi-Omics Integration Strategies

| Characteristic | Early Integration | Intermediate Integration | Late Integration |

|---|---|---|---|

| Typical Model Architecture | Deep Autoencoders, Concatenated->DNN | Multiple Kernel Learning (MKL), iCluster, MOFA | Ensemble of single-omics models (Random Forest, SVM) |

| Ability to Model Cross-Omic Interactions | High (Directly models interactions) | Moderate (Through shared latent space) | None (Models are independent) |

| Interpretability Challenge | Very High (Black-box nature) | Moderate (Latent factors can be analyzed) | Low (Relies on interpretable base models) |

| Handling of High Dimensionality | Requires robust feature selection/regularization | Good (Kernel methods reduce dimension) | Excellent (Performed per dataset) |

| Tolerance to Noise & Batch Effects | Low (Sensitive to data quality) | Moderate-High (Can model batch as covariate) | High (Issues confined per dataset) |

| Typical Computational Cost | High (Large, complex models) | Moderate-High (Depends on kernel computations) | Low-Moderate (Parallelizable) |

| Reported Avg. AUC Increase* (vs. best single-omics) | 8-15% | 6-12% | 4-8% |

| Dominant Use Case | Holistic pattern discovery in large cohorts | Identifying co-varying factors across omics | Leveraging validated, domain-specific predictors |

*Range aggregated from benchmark studies on cancer subtyping and clinical outcome prediction (Pan-omics, 2023; Nature Methods, 2024).

Experimental Protocols for Key Integration Methods

Protocol 1: Early Integration Using a Deep Learning Autoencoder Framework

Objective: To integrate RNA-Seq (transcriptomics) and RPPA (proteomics) data for unsupervised patient stratification.

Materials: Normalized count matrix (RNA-Seq), normalized protein abundance matrix (RPPA), Python with PyTorch/TensorFlow, scikit-learn.

Method:

1. Pre-processing & Concatenation: Perform min-max scaling per feature within each omics dataset. Horizontally concatenate the scaled matrices, matched by sample ID, to create a unified matrix X_multi of dimensions [N_samples x (N_RNA_features + N_protein_features)].

2. Model Architecture: Construct a symmetric denoising autoencoder.

* Encoder: Input Layer -> Dense(512, ReLU) -> Dropout(0.3) -> Dense(128, ReLU) -> Dense(32, ReLU) -> Latent Space (8 units).

* Decoder: Latent Space -> Dense(32, ReLU) -> Dense(128, ReLU) -> Dense(512, ReLU) -> Output Layer (Linear activation).

3. Training: Use Mean Squared Error (MSE) reconstruction loss. Train for 200 epochs with a batch size of 32, Adam optimizer (lr=1e-4), with 15% Gaussian noise added to inputs for denoising.

4. Clustering: Extract the 8-dimensional latent vectors for all samples. Apply k-means clustering (k determined by silhouette score) to stratify patients.

5. Validation: Perform survival analysis (Kaplan-Meier log-rank test) and differential expression analysis between clusters to assess biological relevance.
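Step 1 of this protocol, per-feature min-max scaling followed by concatenation on shared sample IDs, can be sketched with NumPy as below. The sample IDs, values, and function names are hypothetical, and the autoencoder itself is not shown; the sketch only builds the input matrix X_multi.

```python
import numpy as np


def min_max_scale(X):
    """Scale each column to [0, 1]; constant columns are mapped to 0."""
    X = np.asarray(X, dtype=float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)  # avoid division by zero
    return (X - lo) / span


def concat_by_sample(ids_a, X_a, ids_b, X_b):
    """Keep samples present in both datasets and concatenate their features."""
    shared = sorted(set(ids_a) & set(ids_b))
    row_a = {s: i for i, s in enumerate(ids_a)}
    row_b = {s: i for i, s in enumerate(ids_b)}
    A = np.asarray(X_a, dtype=float)[[row_a[s] for s in shared]]
    B = np.asarray(X_b, dtype=float)[[row_b[s] for s in shared]]
    return shared, np.hstack([min_max_scale(A), min_max_scale(B)])


# Hypothetical toy data: 2 RNA features and 1 protein feature
ids_rna = ["P1", "P2", "P3", "P4"]
rna = [[5, 200], [7, 180], [9, 220], [6, 210]]
ids_rppa = ["P3", "P1", "P5", "P2"]
rppa = [[0.2], [0.8], [0.5], [0.4]]

shared_ids, X_multi = concat_by_sample(ids_rna, rna, ids_rppa, rppa)
print(shared_ids, X_multi.shape)
```

Matching rows by ID rather than by position guards against silently pairing the wrong samples when the two assays list patients in different orders.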

Protocol 2: Intermediate Integration using Multiple Kernel Learning (MKL)

Objective: To integrate methylation, transcriptomics, and clinical data for supervised classification of disease status.

Materials: Methylation beta-values matrix, gene expression matrix, clinical covariates table, R package mixKernel or Python library scikit-learn (e.g., an SVM trained on a precomputed kernel).

Method:

1. Kernel Construction: For each omics dataset and the clinical data table, compute a similarity matrix (kernel).

* For continuous data (expression): Use a linear kernel K_lin = X * X^T or an RBF kernel.

* For methylation data: Use a Gaussian kernel on top-5k most variable CpG sites.

* For categorical clinical data: Use a Jaccard similarity kernel.

2. Kernel Combination: Combine kernels K1, K2, K3 using a weighted sum: K_combined = μ1*K1 + μ2*K2 + μ3*K3, where weights μ are optimized during model training (e.g., via centered-kernel alignment or gradient descent).

3. Model Training: Train a kernel-based classifier (e.g., Support Vector Machine) or a Cox regression model (for survival) on the combined kernel K_combined.

4. Interpretation: Analyze the optimized kernel weights (μ) to infer the relative contribution of each data type. Project samples using the combined kernel for visualization.
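The kernel construction and weighted combination can be illustrated with NumPy. In this sketch the weights μ are set proportional to each kernel's centered alignment with the labels, which is a simple heuristic standing in for the full MKL optimization; the simulated data, the default RBF bandwidth, and all function names are assumptions made for illustration.

```python
import numpy as np


def linear_kernel(X):
    return X @ X.T


def rbf_kernel(X, gamma=None):
    sq = np.sum(X ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2 * X @ X.T, 0.0)
    gamma = 1.0 / X.shape[1] if gamma is None else gamma  # assumed default bandwidth
    return np.exp(-gamma * d2)


def jaccard_kernel(B):
    """Jaccard similarity between rows of a binary indicator matrix."""
    B = np.asarray(B, dtype=float)
    inter = B @ B.T
    sizes = B.sum(axis=1)
    union = sizes[:, None] + sizes[None, :] - inter
    safe_union = np.where(union > 0, union, 1.0)
    return np.where(union > 0, inter / safe_union, 1.0)


def center(K):
    n = K.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n
    return H @ K @ H


def alignment_weights(kernels, y):
    """Weights proportional to centered kernel-target alignment (y in {-1, +1})."""
    Ky = center(np.outer(y, y))
    scores = []
    for K in kernels:
        Kc = center(K)
        denom = np.linalg.norm(Kc) * np.linalg.norm(Ky)
        scores.append(max(np.sum(Kc * Ky) / denom, 0.0) if denom > 0 else 0.0)
    scores = np.array(scores)
    if scores.sum() == 0:
        return np.full(len(kernels), 1.0 / len(kernels))  # fall back to uniform
    return scores / scores.sum()


# Simulated data: only the expression layer carries class signal
rng = np.random.default_rng(0)
y = np.repeat([-1.0, 1.0], 10)
expression = rng.normal(size=(20, 50)) + 0.5 * y[:, None]
methylation = rng.uniform(size=(20, 30))
clinical = rng.integers(0, 2, size=(20, 4))

kernels = [linear_kernel(expression), rbf_kernel(methylation), jaccard_kernel(clinical)]
mu = alignment_weights(kernels, y)
K_combined = sum(w * K for w, K in zip(mu, kernels))
print(np.round(mu, 3))
```

Because each base kernel is positive semi-definite and the weights are non-negative, K_combined is itself a valid kernel and can be passed to any classifier that accepts a precomputed kernel matrix.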

Visualization of Workflows and Relationships

Title: Conceptual Workflow of Three Core Multi-Omics Integration Strategies

Title: Intermediate Integration via Multiple Kernel Learning (MKL) Pipeline

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Materials and Tools for Multi-Omics Integration Research

| Item / Solution | Provider / Example | Function in Integration Research |

|---|---|---|

| Total Multi-Omics Profiling Kits | Qiagen (QIAseq Multimodal Panels), 10x Genomics (Chromium Single Cell Multiome) | Generate matched genomic, transcriptomic, and epigenomic data from a single sample input, ensuring sample identity alignment for integration. |

| Cross-Linking Mass Spectrometry (XL-MS) Reagents | DSSO, BS3 crosslinkers (Thermo Fisher) | Capture protein-protein interactions (PPI), providing structural proteomics data to integrate with transcriptional networks. |

| Nucleic Acid & Protein Co-isolation Kits | AllPrep (Qiagen), TRIzol (Thermo Fisher) | Isolate DNA, RNA, and protein simultaneously from a single tissue or cell sample, minimizing biological variation between omes. |

| Multi-Omic Data Analysis Software | R/Bioconductor: (mixOmics, MOFA2, iClusterPlus). Python: (muon, scikit-learn, PyTorch). | Provide specialized, validated algorithms and pipelines for implementing early, intermediate, and late integration frameworks. |

| Cloud Computing Platforms with Omics Workflows | Terra (Broad/Verily), Seven Bridges, Google Cloud Life Sciences | Offer scalable computational environments, pre-configured workflow DSLs (WDL/CWL), and secure data hubs for large-scale multi-omics integration. |

| Knowledge Graph Databases | STRING, Reactome, Hetionet | Provide prior biological network information (e.g., PPI, pathways) to constrain and interpret integrated models, enhancing biological plausibility. |

The strategic selection of an integration framework—early, intermediate, or late—is paramount and must be guided by the specific biological question, data characteristics, and desired outcome. Early integration seeks a holistic view at the cost of interpretability, intermediate integration balances joint learning with structural preservation, and late integration prioritizes robustness and modularity. As multi-omics becomes central to systems biology and precision medicine, mastering these foundational strategies, along with their associated experimental and computational protocols, is essential for researchers and drug development professionals aiming to decode complex disease etiologies and identify novel therapeutic targets.

The analysis of high-dimensional multi-omics data—spanning genomics, transcriptomics, proteomics, and metabolomics—presents a fundamental challenge in modern systems biology. The primary goal is to extract biologically meaningful signals by integrating these diverse data modalities to uncover novel disease mechanisms, biomarkers, and therapeutic targets. Statistical and matrix-based dimensionality reduction techniques, including Principal Component Analysis (PCA), Canonical Correlation Analysis (CCA), and Non-negative Matrix Factorization (NMF), form a critical cornerstone for this integration. This technical guide details their core principles, applications, and experimental protocols within multi-omics research, providing a framework for their effective implementation in translational and drug development contexts.

Core Methodologies: Principles and Applications

Principal Component Analysis (PCA)

Principle: PCA is an unsupervised linear transformation method that identifies orthogonal axes (principal components) of maximum variance in a high-dimensional dataset. It reduces dimensionality by projecting data onto a lower-dimensional subspace defined by the top k eigenvectors of the covariance matrix.

Multi-Omics Application: Commonly used for initial data exploration, detection of batch effects, visualization, and noise reduction within a single omics layer. For integration, it can be applied to concatenated multi-omics datasets, and it is generalized to multiple data views by factor models such as Multi-Omics Factor Analysis (MOFA+).
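As a concrete illustration of the principle, PCA can be computed from the singular value decomposition of the centered data matrix, which yields the same axes as the eigenvectors of the covariance matrix. The NumPy sketch below uses simulated data with one dominant direction of variation; the matrix sizes and the function name `pca` are arbitrary choices for the example.

```python
import numpy as np


def pca(X, k):
    """Return (scores, components, explained_variance_ratio) for the top k PCs."""
    Xc = X - X.mean(axis=0)                      # center each feature
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:k]                          # rows are orthonormal loading vectors
    scores = Xc @ components.T                   # sample coordinates in PC space
    ratio = S[:k] ** 2 / np.sum(S ** 2)          # fraction of total variance per PC
    return scores, components, ratio


# Simulated samples x features matrix with one strong latent factor
rng = np.random.default_rng(0)
latent = rng.normal(size=(30, 1))
X = latent @ rng.normal(size=(1, 100)) * 3 + rng.normal(size=(30, 100))

scores, components, ratio = pca(X, k=3)
print(np.round(ratio, 3))
```

The first ratio should dominate here because a single latent factor was simulated; in real omics data, inspecting which sample attributes track the leading components is a common first check for batch structure.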

Canonical Correlation Analysis (CCA)

Principle: CCA finds linear combinations of variables from two datasets that are maximally correlated with each other. It identifies pairs of canonical variates (latent vectors) by solving a generalized eigenvalue problem derived from the cross-covariance matrix.

Multi-Omics Application: Directly models relationships between two omics data types (e.g., mRNA and miRNA expression). Sparse CCA (sCCA) extensions incorporate L1 regularization to select relevant features, crucial for identifying key drivers of correlation from thousands of molecular entities.

Non-negative Matrix Factorization (NMF)

Principle: NMF factorizes a non-negative data matrix V (n x m) into two lower-rank, non-negative matrices W (n x k) and H (k x m), such that V ≈ WH. It is a parts-based decomposition that often yields more interpretable latent factors.

Multi-Omics Application: Ideal for decomposing count-based data (e.g., gene expression, microbiome reads) into metagenes or molecular patterns (H) and their sample-specific weights (W). Integrative NMF jointly factorizes multiple omics matrices measured on the same samples, sharing the sample-level factor matrix across layers so that the resulting factors define coherent multi-omic molecular subtypes.

Quantitative Comparison of Methods

Table 1: Comparative Analysis of PCA, CCA, and NMF in Multi-Omics Integration

| Feature | Principal Component Analysis (PCA) | Canonical Correlation Analysis (CCA) | Non-negative Matrix Factorization (NMF) |

|---|---|---|---|

| Core Objective | Maximize variance within a single dataset. | Maximize correlation between two datasets. | Approximate data with additive, parts-based components. |

| Data Assumptions | Linear relationships, centered data. | Linear relationships, paired samples. | Non-negative input data. |

| Dimensionality Output | Orthogonal principal components (PCs). | Pairs of correlated canonical variates. | Non-orthogonal basis and coefficient matrices. |

| Interpretability | Global structure; components can have mixed signs. | Relationships between two views; can be abstract. | Often higher; yields additive, sparse representations. |

| Key Multi-Omics Use | Exploratory analysis, visualization, batch correction. | Identifying correlated features across two omics layers. | Discovering molecular patterns and patient clusters/subtypes. |

| Sparsity & Regularization | Requires extensions (e.g., sparse PCA). | Often used with L1 regularization (sCCA). | Inherently promotes sparsity; can be explicitly regularized. |

| Handling >2 Datasets | Via concatenation or generalized PCA. | Requires extensions (Multi-view CCA). | Naturally extensible (Joint NMF, iNMF). |

| Computational Scale | Efficient for large n, moderate p. | Challenging for very high p; requires regularization. | Iterative optimization; scalable with efficient solvers. |

Table 2: Recent Benchmark Performance Metrics on TCGA Data (Simulated Summary)

| Method & Tool | Avg. Cluster Purity (Subtyping) | Avg. Feature Selection AUC | Runtime on 500x10k Matrix | Key Citation (Example) |

|---|---|---|---|---|

| PCA (scikit-learn) | 0.72 | 0.65 | <10 sec | Ringnér, 2008 |

| Sparse CCA (PMA R package) | 0.81 | 0.89 | ~2 min | Witten et al., 2009 |

| Integrative NMF (jNMF) | 0.85 | 0.78 | ~5 min | Yang & Michailidis, 2016 |

Experimental Protocols for Multi-Omics Integration

Protocol: Integrative Subtyping using Joint NMF

Objective: To identify patient subtypes by jointly decomposing mRNA expression and DNA methylation data.

Data Preprocessing:

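- mRNA (RNA-Seq): Obtain raw read counts. Apply variance-stabilizing transformation (e.g., DESeq2) or log2(CPM+1). Because NMF requires non-negative input, rescale each feature to a non-negative range (e.g., min-max scaling to [0, 1]) rather than centering it to mean 0.

- DNA Methylation (Array): Obtain beta values. Perform probe filtering (remove cross-reactive probes, SNPs). Beta values lie in [0, 1] and can be used directly; M-values can be negative and must be shifted or split into positive and negative parts before factorization.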

- Sample Matching: Ensure paired samples across assays. Handle missing data via complete-case analysis or imputation.

Matrix Factorization:

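- Form matrices X1 (mRNA, n_samples x p_genes) and X2 (methylation, n_samples x q_probes), with rows in the same sample order.

- Apply Joint NMF optimization with a shared sample-factor matrix W (n_samples x k) and layer-specific pattern matrices H1 (k x p_genes) and H2 (k x q_probes): Minimize ||X1 - W H1||² + ||X2 - W H2||² + λ(||W||² + ||H1||² + ||H2||²), subject to W, H1, H2 ≥ 0.

- Tool: Use the NMF package in R or nimfa in Python. With equal layer weights, this shared-W factorization is equivalent to standard NMF on the column-wise concatenation [X1, X2]. Set factorization rank k via consensus clustering or cophenetic coefficient stability.

Interpretation & Validation:

- Use the pattern matrices H1 and H2 (together k x (p+q)) to define multi-omic patterns.

- Cluster patients using the shared W coefficients via k-means.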

- Validate subtypes via survival analysis (log-rank test) and differential pathway enrichment (GSEA) against known subtypes.
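A joint factorization of this kind can be sketched with multiplicative updates in NumPy. The sketch makes its own conventions explicit: both matrices are samples x features, the sample-level factor matrix (called W here) is shared between layers, and each layer has its own pattern matrix (H1, H2). The simulated data, the penalty `lam`, and the argmax-based subtype assignment (used in place of k-means for brevity) are illustrative assumptions.

```python
import numpy as np


def joint_nmf(X1, X2, k, lam=0.01, n_iter=300, seed=0, eps=1e-10):
    """Joint NMF with a shared sample matrix W: X1 ~ W @ H1 and X2 ~ W @ H2."""
    rng = np.random.default_rng(seed)
    W = rng.random((X1.shape[0], k))
    H1 = rng.random((k, X1.shape[1]))
    H2 = rng.random((k, X2.shape[1]))
    history = []
    for _ in range(n_iter):
        # Multiplicative updates keep every entry non-negative
        W *= (X1 @ H1.T + X2 @ H2.T) / (W @ (H1 @ H1.T + H2 @ H2.T) + lam * W + eps)
        H1 *= (W.T @ X1) / (W.T @ W @ H1 + lam * H1 + eps)
        H2 *= (W.T @ X2) / (W.T @ W @ H2 + lam * H2 + eps)
        loss = (np.linalg.norm(X1 - W @ H1) ** 2 + np.linalg.norm(X2 - W @ H2) ** 2
                + lam * (np.sum(W ** 2) + np.sum(H1 ** 2) + np.sum(H2 ** 2)))
        history.append(loss)
    return W, H1, H2, history


# Simulated non-negative data with two sample groups
rng = np.random.default_rng(42)
W_true = 0.1 * rng.random((30, 2))
W_true[:15, 0] += 1.0
W_true[15:, 1] += 1.0
X1 = W_true @ rng.random((2, 40)) + 0.05 * rng.random((30, 40))
X2 = W_true @ rng.random((2, 25)) + 0.05 * rng.random((30, 25))

W, H1, H2, history = joint_nmf(X1, X2, k=2)
subtype = W.argmax(axis=1)  # simple assignment; k-means on W is an alternative
print(f"objective: {history[0]:.2f} -> {history[-1]:.2f}")
```

Tracking the objective across iterations gives a quick convergence check, and repeating the run with several seeds is a cheap way to judge how stable the resulting factors are.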

Protocol: Identifying Cross-Omic Drivers with Sparse CCA

Objective: To find a small set of correlated genes and proteins from paired transcriptomics and proteomics data.

Data Preparation:

- Generate normalized, paired matrices Z1 (gene expression) and Z2 (protein abundance) of dimensions (n x p) and (n x q).

- Center and scale each column.

Sparse CCA Implementation:

- Solve using the penalized matrix decomposition (PMD) formulation: argmax_(u,v) u'Z1'Z2v subject to ||u||₂² ≤ 1, ||v||₂² ≤ 1, ||u||₁ ≤ c1, ||v||₁ ≤ c2.

- Tool: Use PMA::CCA in R. Determine sparsity parameters c1 and c2 via permutation testing (e.g., PMA::CCA.permute) or cross-validation to maximize correlation.

Downstream Analysis:

- Extract the first pair of sparse canonical vectors u1, v1 (listing selected genes/proteins).

- Compute canonical scores for each sample: score1 = Z1 * u1, score2 = Z2 * v1.

- Correlate scores with clinical phenotypes. Validate selected features using independent cohorts or functional databases.
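The penalized problem above is usually solved by alternating soft-thresholded updates of u and v. The NumPy sketch below follows that scheme for a single pair of canonical vectors; it is an illustrative re-implementation rather than the PMA package's code, and the simulated data, the choice c = 0.3·sqrt(number of features), and the function names are assumptions. It expects c1, c2 ≥ 1 and continuous data without exact ties.

```python
import numpy as np


def soft_threshold(a, delta):
    return np.sign(a) * np.maximum(np.abs(a) - delta, 0.0)


def l1_constrained_unit(a, c, n_iter=50):
    """Unit-L2 vector proportional to soft-thresholded `a` with L1 norm <= c."""
    def normalise(x):
        norm = np.linalg.norm(x)
        return x / norm if norm > 0 else x

    u = normalise(a)
    if np.abs(u).sum() <= c:
        return u                          # constraint already satisfied
    lo, hi = 0.0, np.abs(a).max()
    for _ in range(n_iter):               # binary search on the threshold
        mid = (lo + hi) / 2
        if np.abs(normalise(soft_threshold(a, mid))).sum() > c:
            lo = mid
        else:
            hi = mid
    return normalise(soft_threshold(a, hi))


def sparse_cca(Z1, Z2, c1, c2, n_iter=100, tol=1e-8):
    """First pair of sparse canonical vectors via alternating updates."""
    C = Z1.T @ Z2                                        # p x q cross-product matrix
    v = np.linalg.svd(C, full_matrices=False)[2][0]      # initialise from the SVD
    for _ in range(n_iter):
        u = l1_constrained_unit(C @ v, c1)
        v_new = l1_constrained_unit(C.T @ u, c2)
        converged = np.linalg.norm(v_new - v) < tol
        v = v_new
        if converged:
            break
    return u, v


# Simulated paired data: the first three features of each layer share a latent signal
rng = np.random.default_rng(0)
z = rng.normal(size=(60, 1))
Z1 = rng.normal(size=(60, 40))
Z2 = rng.normal(size=(60, 30))
Z1[:, :3] += 2 * z
Z2[:, :3] += 2 * z
Z1 = (Z1 - Z1.mean(axis=0)) / Z1.std(axis=0)
Z2 = (Z2 - Z2.mean(axis=0)) / Z2.std(axis=0)

c1, c2 = 0.3 * np.sqrt(40), 0.3 * np.sqrt(30)
u, v = sparse_cca(Z1, Z2, c1, c2)
score1, score2 = Z1 @ u, Z2 @ v
print("selected genes:", np.flatnonzero(u), "selected proteins:", np.flatnonzero(v))
```

Smaller values of c1 and c2 give sparser vectors, so the selected feature lists should always be reported together with the penalty values used.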

Visualization of Workflows and Relationships

Title: PCA Dimensionality Reduction Workflow

Title: CCA Maximizes Correlation Between Datasets

Title: Integrative NMF for Multi-Omics Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for Matrix-Based Multi-Omics Analysis

| Item / Resource | Function in Analysis | Example / Source |

|---|---|---|

| Normalization Packages | Preprocess raw omics data to remove technical artifacts. | DESeq2 (RNA-Seq), limma (microarrays), minfi (methylation). |

| PCA Implementation | Perform efficient singular value decomposition (SVD). | sklearn.decomposition.PCA (Python), prcomp (R base). |

| Sparse CCA Solver | Apply regularization for feature selection in CCA. | PMA (R), scca (Python), mixOmics (R). |

| NMF Solver | Factorize non-negative matrices with various algorithms. | NMF (R), nimfa (Python), MATLAB Toolbox for NMF. |

| Multi-Omics Integration Suite | Unified framework for applying PCA/CCA/NMF. | MOFA+ (Python/R), omicade4 (R), IntNMF (R). |

| Consensus Clustering Tool | Determine stable clusters and optimal factorization rank. | ConsensusClusterPlus (R), sklearn.cluster.KMeans. |

| Pathway Analysis Database | Annotate derived molecular patterns with biological function. | MSigDB, KEGG, Reactome, Gene Ontology (GO). |

| High-Performance Computing (HPC) | Enable factorization of large-scale matrices (n, p > 10k). | Cloud platforms (AWS, GCP), SLURM cluster managers. |

The field of multi-omics integration aims to synthesize data from genomic, transcriptomic, proteomic, metabolomic, and epigenomic layers to construct a comprehensive model of biological systems. This is critical for elucidating disease mechanisms and identifying therapeutic targets. Traditional statistical methods often struggle with the high dimensionality, noise, and complex non-linear interactions inherent in such data. Machine Learning (ML) and Artificial Intelligence (AI), particularly neural networks and ensemble models, provide a powerful framework to address these challenges, enabling the discovery of novel biomarkers and biological pathways.

Core Methodologies: Neural Networks and Ensembles

Neural Networks for Multi-Omics

Neural networks, especially deep learning architectures, excel at learning hierarchical representations from complex data.

- Autoencoders (AEs): Used for non-linear dimensionality reduction and feature learning. A bottleneck layer forces the network to learn a compressed, informative representation of the input omics data.

- Multi-Modal Deep Neural Networks: Architectures with dedicated input branches for each omics type, whose learned features are fused in deeper layers for integrated prediction (e.g., clinical outcome).

- Graph Neural Networks (GNNs): Applied when omics data can be structured as a graph (e.g., protein-protein interaction networks with node features from genomics). GNNs propagate information across the graph to capture topological relationships.

Ensemble Models for Robust Integration

Ensemble methods combine predictions from multiple base models to improve accuracy, robustness, and generalizability.

- Stacking: Uses predictions from diverse base models (e.g., SVM, Random Forest, a neural network) trained on different omics views as input to a final "meta-learner" model.

- Supervised Ensemble Integration: Methods like mixOmics (DIABLO framework) identify correlated features across multiple omics datasets that discriminate between sample classes.

Experimental Protocols & Data Presentation

Protocol: A Standard Stacked Ensemble for Patient Stratification

Objective: Integrate mRNA expression, DNA methylation, and microRNA data to classify disease subtypes.

- Data Preprocessing: Each omics dataset is independently normalized, batch-corrected, and subjected to variance-based filtering.

- Base Learner Training:

- Train a Random Forest on the top 1000 most variable mRNA features.

- Train an L1-penalized (Lasso) logistic regression on the top 5000 most variable methylation probes.

- Train a 1D Convolutional Neural Network on the full microRNA count data, using 1D convolutions to learn local patterns.

- Perform 5-fold cross-validation on the training set for each model; collect out-of-fold predictions for each sample.

- Meta-Learning: Concatenate the three sets of out-of-fold predictions (probabilities) into a new feature matrix. Train a logistic regression model (the meta-learner) on this matrix using the true labels.

- Validation: Apply base models to the held-out test set, generate predictions, and feed them into the trained meta-learner for final classification. Evaluate using AUC-ROC and balanced accuracy.
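The mechanics of stacking, in particular the use of out-of-fold predictions to train the meta-learner, can be sketched with NumPy alone. To keep the example short and dependency-free, every base learner here is a small logistic regression fitted by gradient descent, standing in for the Random Forest, Lasso, and CNN named in the protocol; the simulated three-view data and all function names are hypothetical.

```python
import numpy as np


def fit_logistic(X, y, lr=0.5, n_iter=1000, l2=1e-2):
    """L2-regularised logistic regression fitted by batch gradient descent."""
    X1 = np.hstack([np.ones((X.shape[0], 1)), X])
    w = np.zeros(X1.shape[1])
    for _ in range(n_iter):
        p = 1 / (1 + np.exp(-X1 @ w))
        w -= lr * (X1.T @ (p - y) / len(y) + l2 * w)
    return w


def predict_proba(w, X):
    X1 = np.hstack([np.ones((X.shape[0], 1)), X])
    return 1 / (1 + np.exp(-X1 @ w))


def out_of_fold(X, y, n_folds=5, seed=0):
    """Predict each training sample with a model that never saw it."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    oof = np.zeros(len(y))
    for held_out in folds:
        train = np.setdiff1d(np.arange(len(y)), held_out)
        oof[held_out] = predict_proba(fit_logistic(X[train], y[train]), X[held_out])
    return oof


rng = np.random.default_rng(1)


def make_view(y, n_features=10, n_informative=3, shift=0.8):
    """Simulate one omics view in which a few features separate the classes."""
    X = rng.normal(size=(len(y), n_features))
    X[:, :n_informative] += shift * (2 * y[:, None] - 1)
    return X


y_train = rng.integers(0, 2, size=120).astype(float)
y_test = rng.integers(0, 2, size=60).astype(float)
train_views = [make_view(y_train) for _ in range(3)]  # e.g. mRNA, methylation, miRNA
test_views = [make_view(y_test) for _ in range(3)]

# Meta-learner is trained on out-of-fold base predictions only
oof = np.column_stack([out_of_fold(X, y_train) for X in train_views])
meta = fit_logistic(oof, y_train)

# Base models are refitted on all training data before scoring the test set
base_models = [fit_logistic(X, y_train) for X in train_views]
test_meta = np.column_stack([predict_proba(w, X) for w, X in zip(base_models, test_views)])
final_prob = predict_proba(meta, test_meta)
accuracy = np.mean((final_prob > 0.5).astype(float) == y_test)
print(f"held-out accuracy: {accuracy:.2f}")
```

Training the meta-learner on in-sample base predictions instead would leak label information and inflate the apparent benefit of stacking, which is why the out-of-fold step matters.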

Protocol: Multi-Modal Autoencoder for Feature Extraction

Objective: Learn a shared, low-dimensional latent representation from paired RNA-Seq and proteomics data.

- Architecture: Construct a dual-input autoencoder. The encoder has two separate branches (fully connected networks) for each data type, which are concatenated and fed into a final encoder layer.

- Training: The model is trained to simultaneously reconstruct both original inputs from the central latent vector. Loss is a weighted sum of Mean Squared Error (MSE) for both reconstructions.

- Downstream Application: Use the trained encoder to generate the latent vectors for all samples. These integrated features are then used as input to a simpler downstream model (e.g., an SVM for classification or a Cox regression for survival analysis).

Table 1: Performance Comparison of ML Models on TCGA Pan-Cancer Multi-Omics Classification

| Model Type | Specific Architecture | Avg. AUC-ROC (5 cancers) | Avg. F1-Score | Key Advantage |

|---|---|---|---|---|

| Traditional ML | Random Forest | 0.78 | 0.72 | Interpretability, feature importance |

| Neural Network | Multi-Modal DNN | 0.85 | 0.79 | Captures complex non-linear interactions |

| Ensemble Model | Stacked Generalization | 0.88 | 0.82 | Highest robustness and accuracy |

| Reference Method | PCA + SVM | 0.71 | 0.65 | Linear baseline |

Table 2: Common Software/Packages for Multi-Omics ML

| Tool/Package | Primary Use | Key Algorithm/Model |

|---|---|---|

| PyTorch / TensorFlow | Building custom neural network architectures | Deep Neural Networks, Autoencoders, GNNs |

| Scikit-learn | Implementing base learners and meta-learners | SVM, RF, Logistic Regression, Stacking |

| mixOmics (R) | Supervised multi-omics integration | DIABLO (sPLS-DA), Sparse PCA |

| MOGONET | End-to-end multi-omics integration & classification | Graph Convolutional Networks |

| DeepProteomics | Proteomics-specific deep learning | CNNs for spectrum prediction |

Visualizations

Title: Stacked Ensemble Model Workflow for Multi-Omics

Title: Multi-Modal Autoencoder for Omics Integration

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Multi-Omics ML-Driven Research

| Item / Reagent | Function in the Context of ML & Multi-Omics |
|---|---|
| High-Throughput Sequencing Kits (e.g., RNA-Seq, WES) | Generate the primary genomic/transcriptomic digital data that forms the input feature matrices for ML models. |
| Mass Spectrometry-Grade Solvents & Columns | Essential for reproducible proteomic and metabolomic LC-MS/MS runs, the data from which are used for integration. |
| Multiplex Immunoassay Panels (e.g., Olink, SomaScan) | Provide high-throughput, validated protein quantification data, a key omics layer for clinical ML models. |
| Single-Cell Multi-Omic Kits (e.g., CITE-seq, joint scRNA-seq + scATAC-seq multiome) | Enable the generation of paired multi-omics data at single-cell resolution, the complex analysis of which demands advanced AI. |
| Cloud Computing Credits (AWS, GCP, Azure) | Provide the scalable compute resources (GPUs/TPUs) necessary for training large neural networks on high-dimensional omics data. |
| Containerization Software (Docker, Singularity) | Ensure reproducibility of the ML/AI analysis pipeline by encapsulating the exact software environment, including library versions. |

Network-based integration is a core computational methodology within the multi-omics toolkit, enabling the synthesis of disparate data types—genomics, transcriptomics, proteomics, metabolomics—into unified biological interaction graphs. This approach moves beyond simple correlation, modeling the complex, often non-linear, relationships between molecular entities as nodes and edges. By constructing these graphs, researchers can contextualize omics-derived lists, identify key regulatory hubs, and uncover emergent system properties that are not apparent from single-layer analyses. This guide details the technical pipeline for building and interpreting these networks, providing a critical framework for hypothesis generation in systems biology and drug discovery.

Foundational Concepts & Data Types

Biological interaction graphs are mathematical representations (G = (V, E)) where vertices (V) represent biological entities (genes, proteins, metabolites), and edges (E) represent interactions or associations. The nature of edges defines the network type.

| Network Type | Node Examples | Edge Representation | Primary Data Sources |
|---|---|---|---|
| Protein-Protein Interaction (PPI) | Proteins | Physical binding or functional association | Yeast two-hybrid, AP-MS, curated databases (BioGRID, STRING) |
| Gene Regulatory (GRN) | Transcription Factors, Target Genes | Transcriptional regulation | ChIP-seq, motif analysis, inference from expression (GENIE3) |
| Gene Co-expression | Genes | Similar expression profiles across conditions | RNA-seq, microarrays (Pearson/Spearman correlation) |
| Metabolic | Metabolites, Enzymes | Biochemical reactions | Genome-scale metabolic models (Recon), KEGG pathways |
| Integrated Multi-Omics | Multi-entity types | Heterogeneous relationships (e.g., eQTLs, protein-metabolite) | Multi-assay experimental data, prior knowledge fusion |

Core Construction Methodologies

Data Acquisition and Preprocessing

- Primary Experimental Data: Generate or acquire omics matrices (e.g., gene expression counts, protein abundance).

- Reference Knowledge Bases: Integrate prior knowledge from public repositories. Current (2024-2025) canonical sources include:

  - STRING: Known and predicted protein-protein interactions (physical and functional).

  - BioGRID: Curated physical and genetic interactions.

  - TRRUST/KEGG: Transcriptional regulatory and pathway relationships.

  - HumanBase/DepMap: Tissue-specific and context-aware networks.

- Normalization: Apply appropriate normalization (e.g., TPM for RNA-seq, variance-stabilizing transformation) to make datasets comparable.
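As a concrete illustration of the normalization step, the sketch below converts raw RNA-seq counts to TPM and applies a log transform. The count matrix and gene lengths are made-up toy values, and `counts_to_tpm` is a hypothetical helper name, not part of any package.

```python
import numpy as np

def counts_to_tpm(counts, gene_lengths_bp):
    """Convert a samples x genes count matrix to transcripts per million.

    gene_lengths_bp holds one (effective) gene length in base pairs per column.
    """
    rate = counts / (gene_lengths_bp / 1_000.0)            # reads per kilobase
    return rate / rate.sum(axis=1, keepdims=True) * 1e6    # scale each sample to 1e6

# Toy data: 2 samples x 3 genes (values chosen only for illustration).
counts = np.array([[100.0, 200.0, 700.0],
                   [50.0, 50.0, 900.0]])
lengths = np.array([1000.0, 2000.0, 7000.0])

tpm = counts_to_tpm(counts, lengths)
log_tpm = np.log2(tpm + 1.0)  # log transform to dampen the dynamic range
```

In the first sample, every gene has the same per-kilobase read rate, so each receives one third of the million. TPM makes samples comparable in composition; count-based variance-stabilizing transforms (as in DESeq2) are a separate option better suited to differential analysis.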

Network Inference Protocols

Protocol A: Correlation-Based Co-expression Network (Weighted Gene Co-expression Network Analysis - WGCNA)

- Input: Normalized gene expression matrix (n samples x m genes).

- Similarity Matrix: Calculate pairwise correlations (e.g., Pearson) between all genes, resulting in an m x m matrix.

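- Adjacency Matrix: Transform similarity to adjacency with a soft-thresholding power function, a_ij = |cor_ij|^β for an unsigned network or a_ij = ((1 + cor_ij)/2)^β for a signed one. β is typically 6-12 and is chosen so that strong correlations are emphasized and the network approximately satisfies scale-free topology.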

- Topological Overlap Matrix (TOM): Compute TOM from adjacency to measure network interconnectedness, reducing noise and spurious connections.

- Module Detection: Perform hierarchical clustering on TOM-based dissimilarity (1-TOM). Dynamically cut branches to identify modules (clusters) of highly co-expressed genes.

- Module Trait Association: Correlate module eigengenes (first principal component of module expression) with phenotypic traits to identify relevant modules.
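The core matrix computations of Protocol A can be sketched with NumPy alone. This is a simplified illustration rather than the WGCNA package itself: the function names are hypothetical, β is fixed at 6 instead of being selected by a scale-free fit, and the hierarchical clustering and dynamic tree cut steps are omitted.

```python
import numpy as np

def soft_threshold_adjacency(expr, beta=6, signed=False):
    """expr: samples x genes. Returns a genes x genes adjacency matrix."""
    cor = np.corrcoef(expr, rowvar=False)
    adj = ((1 + cor) / 2) ** beta if signed else np.abs(cor) ** beta
    np.fill_diagonal(adj, 0.0)
    return adj

def topological_overlap(adj):
    """TOM_ij = (sum_u a_iu * a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    k = adj.sum(axis=1)                      # node connectivity
    shared = adj @ adj                       # shared-neighbour strength
    tom = (shared + adj) / (np.minimum.outer(k, k) + 1.0 - adj)
    np.fill_diagonal(tom, 1.0)
    return tom

def module_eigengene(expr, gene_idx):
    """First principal component of the standardized module expression."""
    sub = expr[:, gene_idx]
    sub = (sub - sub.mean(axis=0)) / sub.std(axis=0)
    u, s, _ = np.linalg.svd(sub, full_matrices=False)
    return u[:, 0] * s[0]

# Synthetic expression: two modules of five genes, each driven by one factor.
rng = np.random.default_rng(0)
factors = rng.normal(size=(40, 2))
expr = np.hstack([factors[:, [0]] + 0.3 * rng.normal(size=(40, 5)),
                  factors[:, [1]] + 0.3 * rng.normal(size=(40, 5))])

adj = soft_threshold_adjacency(expr, beta=6)
tom = topological_overlap(adj)
dissimilarity = 1.0 - tom                    # input for hierarchical clustering
eigengene = module_eigengene(expr, np.arange(5))
```

The `dissimilarity` matrix is what would be handed to a hierarchical clustering routine, and `eigengene` is the per-sample summary that gets correlated with phenotypic traits. The sign of an eigengene is arbitrary, so only the magnitude of its correlation with a trait is meaningful without further alignment.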

Protocol B: Bayesian Network Inference for Regulatory Relationships

- Input: Expression data for potential regulators (TFs) and targets, alongside prior knowledge (e.g., ChIP-seq binding motifs).

- Structure Learning: Use algorithms (e.g., constraint-based PC, score-based greedy search) to learn a directed acyclic graph (DAG) representing probabilistic dependencies.

- Parameter Learning: Estimate conditional probability distributions for each node given its parents (e.g., using maximum likelihood).

- Integration of Priors: Incorporate known TF-target links as prior probabilities to guide and improve inference accuracy.

- Validation: Use bootstrap resampling to assess edge confidence and compare predictions to held-out validation data or perturbation experiments.
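The quantities used in Protocol B can be written compactly. The notation below is an illustrative assumption rather than a fixed convention: D is the expression data, Pa_G(X_i) the parents of node X_i in graph G, π_ji a prior probability for the edge j → i (for example, derived from ChIP-seq or motif evidence), and G^(b) the graph learned from the b-th of B bootstrap resamples. The independent per-edge prior is one simple choice among several.

```latex
% Factorization of the joint distribution implied by a DAG G
P(X_1, \dots, X_m \mid G) = \prod_{i=1}^{m} P\bigl(X_i \mid \mathrm{Pa}_G(X_i)\bigr)

% Score-based structure learning with a structure prior
\mathrm{score}(G \mid D) = \log P(D \mid G) + \log P(G),
\qquad
\log P(G) = \sum_{(j \to i) \in G} \log \pi_{ji}
          + \sum_{(j \to i) \notin G} \log\bigl(1 - \pi_{ji}\bigr)

% Bootstrap confidence of an edge
\mathrm{conf}(j \to i) = \frac{1}{B} \sum_{b=1}^{B}
    \mathbf{1}\bigl[(j \to i) \in G^{(b)}\bigr]
```

Edges with high prior probability raise the score of graphs that contain them, and only edges recovered in a large fraction of bootstrap graphs are typically retained for validation.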

Multi-Layer Network Integration

Protocol C: Heterogeneous Network Construction via Matrix Factorization

- Define Layers: Construct individual adjacency matrices for each omics layer (e.g., PPI, co-expression, metabolite-protein interactions).

- Create Inter-Layer Links: Define bipartite edges connecting nodes across layers (e.g., gene-protein identity, enzyme-metabolite reactions).

- Construct Supra-Adjacency Matrix: Assemble a block-structured matrix where diagonal blocks are intra-layer networks and off-diagonals are inter-layer connections.

- Joint Factorization: Apply non-negative matrix tri-factorization (NMTF) or similar to decompose the supra-adjacency matrix into low-dimensional, shared feature matrices for nodes and layers.

- Analysis: Use the latent features for downstream tasks like node classification (e.g., disease gene prediction) or cluster detection across omics types.
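The supra-adjacency construction in Protocol C can be sketched as follows. The two toy layers and the enzyme-metabolite links are invented for illustration, `supra_adjacency` is a hypothetical helper, and the final step uses a truncated eigendecomposition as a simple stand-in for NMTF, not NMTF itself.

```python
import numpy as np

def supra_adjacency(layers, inter_links):
    """Assemble a block matrix from intra-layer and inter-layer matrices.

    layers: list of square adjacency matrices, one per layer.
    inter_links: dict {(a, b): matrix of shape (n_a, n_b)} with a < b.
    """
    n_layers = len(layers)
    blocks = [[None] * n_layers for _ in range(n_layers)]
    for a in range(n_layers):
        for b in range(n_layers):
            key = (min(a, b), max(a, b))
            if a == b:
                blocks[a][b] = layers[a]              # diagonal: intra-layer network
            elif key in inter_links:
                link = inter_links[key]
                blocks[a][b] = link if a < b else link.T
            else:
                blocks[a][b] = np.zeros((layers[a].shape[0], layers[b].shape[0]))
    return np.block(blocks)

# Layer 0: three proteins (PPI); layer 1: two metabolites.
ppi = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
metabolic = np.array([[0, 1],
                      [1, 0]], dtype=float)
# Bipartite links: protein 0 acts on metabolite 0, protein 2 on metabolite 1.
enzyme_metabolite = np.array([[1, 0],
                              [0, 0],
                              [0, 1]], dtype=float)

supra = supra_adjacency([ppi, metabolic], {(0, 1): enzyme_metabolite})

# Stand-in for joint factorization: 2-dimensional spectral embedding of all nodes.
eigvals, eigvecs = np.linalg.eigh(supra)
embedding = eigvecs[:, -2:] * np.sqrt(np.abs(eigvals[-2:]))
```

Each row of `embedding` is a latent feature vector for one node, regardless of which layer it came from, which is the form needed for downstream node classification or cross-omics clustering.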

Analytical Workflow & Interpretation

Network Construction and Analysis Workflow

Topological Analysis

Key metrics identify structurally and potentially functionally important nodes.

| Metric | Calculation | Biological Interpretation |
|---|---|---|
| Degree (k) | Number of edges incident to a node. | Local connectivity; high-degree nodes are "hubs". |
| Betweenness Centrality | Fraction of shortest paths passing through a node. | Control over information flow; bridge between modules. |
| Closeness Centrality | Reciprocal of the sum of shortest path distances to all other nodes. | Efficiency of information propagation. |
| Eigenvector Centrality | Measure of influence based on connections to high-scoring nodes. | Importance within the network's core structure. |
| Clustering Coefficient | Proportion of a node's neighbors that are connected to each other. | Tendency to form local, dense clusters (protein complexes). |
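Several of these metrics are simple to compute directly on a small unweighted, undirected graph. The sketch below uses only the standard library; the five-node toy graph is invented. Closeness is reported in its normalized form, (n - 1) divided by the sum of distances, and betweenness as an unnormalized count of shortest paths through each node (Brandes' algorithm). Dedicated libraries such as NetworkX or igraph are the usual choice for real networks.

```python
from collections import deque

def bfs_distances(graph, source):
    """Shortest path lengths from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nb in graph[node]:
            if nb not in dist:
                dist[nb] = dist[node] + 1
                queue.append(nb)
    return dist

def closeness(graph, node):
    dist = bfs_distances(graph, node)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0

def clustering_coefficient(graph, node):
    nbs = list(graph[node])
    k = len(nbs)
    if k < 2:
        return 0.0
    links = sum(1 for i in range(k) for j in range(i + 1, k)
                if nbs[j] in graph[nbs[i]])
    return 2.0 * links / (k * (k - 1))

def betweenness(graph):
    """Brandes' algorithm for unweighted, undirected graphs (unnormalized)."""
    bc = dict.fromkeys(graph, 0.0)
    for s in graph:
        stack, preds = [], {v: [] for v in graph}
        sigma = dict.fromkeys(graph, 0.0)   # number of shortest paths from s
        sigma[s] = 1.0
        dist = {s: 0}
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(graph, 0.0)
        while stack:                        # accumulate dependencies back to s
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2.0 for v, b in bc.items()}  # each pair was counted twice

# Toy network: a triangle (A, B, C) attached to a chain C - D - E.
graph = {"A": {"B", "C"}, "B": {"A", "C"}, "C": {"A", "B", "D"},
         "D": {"C", "E"}, "E": {"D"}}

degree = {node: len(nbs) for node, nbs in graph.items()}
bc = betweenness(graph)
```

In this toy graph, node C has the highest degree and betweenness because it bridges the triangle and the chain, which is the pattern expected of a hub connecting two modules.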

Functional Module Detection & Enrichment

- Community Detection: Apply algorithms (e.g., Louvain, Leiden, Infomap) to partition the network into densely connected subgraphs (modules).

- Module Eigengene: Calculate the first principal component of the module's expression profile as its representative signal.

- Enrichment Analysis: For each module's gene set, perform over-representation analysis (ORA) with a hypergeometric (one-sided Fisher's exact) test, or rank-based gene set enrichment analysis (GSEA), against databases (GO, KEGG, Reactome). Correct p-values for multiple testing (e.g., Benjamini-Hochberg FDR).

- Annotation: Assign biological functions (e.g., "Mitochondrial Electron Transport") to modules based on top enriched terms.
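The over-representation test and the multiple-testing correction from the steps above can be sketched with the standard library. The gene counts in the example (a 50-gene module, a 200-gene term, a 20,000-gene background, 8 overlapping genes) are invented for illustration, and the function names are hypothetical.

```python
from math import comb

def hypergeom_pvalue(k, n_module, n_term, n_background):
    """P(overlap >= k) when n_module genes are drawn from n_background genes,
    of which n_term are annotated to the term (one-sided ORA test)."""
    upper = min(n_module, n_term)
    total = comb(n_background, n_module)
    tail = sum(comb(n_term, i) * comb(n_background - n_term, n_module - i)
               for i in range(k, upper + 1))
    return tail / total

def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):            # walk from largest to smallest p-value
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# One module tested against one term (illustrative numbers).
p_term = hypergeom_pvalue(k=8, n_module=50, n_term=200, n_background=20000)

# Adjusting a small set of term-level p-values.
adjusted = benjamini_hochberg([0.01, 0.04, 0.03, 0.5])
```

The background should be the set of genes that could have entered the module (for example, all genes in the network), not the whole genome by default, since the choice of background directly changes the p-value.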

Example Network with Functional Modules and Hub

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool Category | Specific Examples | Function in Network Validation |
|---|---|---|
| CRISPR-Cas9 Systems | sgRNA libraries, Cas9-expressing cell lines. | Enable knockout/knockdown of predicted hub genes to validate their functional importance via phenotypic assays. |
| Proximity-Dependent Labeling Reagents | BioID2/TurboID enzymes, biotin. | Experimentally map novel protein-protein interactions in living cells to confirm edges in a predicted PPI subnetwork. |
| Antibodies for Protein Detection | Phospho-specific antibodies, validated ChIP-grade antibodies. | Validate regulatory edges (e.g., phosphorylated protein levels post-perturbation) or TF binding at predicted target promoters. |
| Luciferase Reporter Assay Kits | Dual-luciferase vectors, substrate kits. | Test predicted transcriptional regulatory edges by cloning putative promoter regions upstream of luciferase and co-expressing the TF. |
| Small Molecule Inhibitors/Agonists | Kinase inhibitors (e.g., SBI-0206965 for ULK1), receptor agonists. | Pharmacologically perturb predicted network hubs to observe cascading effects on downstream nodes and network state. |
| Multiplexed Immunoassay Kits | Luminex xMAP, Olink, MSD. | Quantify dozens of proteins/phosphoproteins from limited sample to measure network-level changes after perturbation. |

Applications in Drug Development

Network-based integration directly informs target discovery and drug mechanism. It identifies:

- Disease Modules: Subnetworks dysregulated in pathology, offering polypharmacology targets.

- Essential Hubs: High-centrality nodes whose perturbation maximally disrupts disease networks.

- Mechanism of Action: Drug mechanisms inferred by comparing drug-induced network signatures with signatures that reverse the disease state.

- Side Effect Prediction: Potential adverse effects flagged by measuring the network proximity of a drug's target subnetwork to subnetworks associated with adverse outcomes.
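The proximity idea behind target and side-effect analysis can be illustrated with a "closest distance" measure: for each drug target, take the shortest path length to the nearest node of a module, then average over targets. The sketch below uses only the standard library; the toy interactome, node names, and modules are invented, and the function assumes every target can reach at least one module node.

```python
from collections import deque

def shortest_distances(graph, source):
    """Breadth-first shortest path lengths from source."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nb in graph[node]:
            if nb not in dist:
                dist[nb] = dist[node] + 1
                queue.append(nb)
    return dist

def closest_proximity(graph, drug_targets, module_nodes):
    """Mean over drug targets of the distance to the nearest module node."""
    total = 0
    for target in drug_targets:
        dist = shortest_distances(graph, target)
        total += min(dist[m] for m in module_nodes if m in dist)
    return total / len(drug_targets)

# Toy interactome: T1 - A - B - D1 - D2 - T2 - S1
graph = {"T1": {"A"}, "A": {"T1", "B"}, "B": {"A", "D1"},
         "D1": {"B", "D2"}, "D2": {"D1", "T2"},
         "T2": {"D2", "S1"}, "S1": {"T2"}}

drug_targets = {"T1", "T2"}
disease_module = {"D1", "D2"}       # hypothetical disease subnetwork
side_effect_module = {"S1"}         # hypothetical adverse-outcome subnetwork

d_disease = closest_proximity(graph, drug_targets, disease_module)
d_side_effect = closest_proximity(graph, drug_targets, side_effect_module)
```

Smaller values mean the targets sit closer to the module. In practice the raw distance is compared against a null distribution built from randomly chosen, degree-matched node sets, because hubs are close to almost everything.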

Network-Based Drug Target Identification

This whitepaper serves as a core chapter in a broader thesis on multi-omics integration methods. It demonstrates how integrating genomic, transcriptomic, proteomic, and metabolomic data helps solve real-world biomedical challenges. The following case studies show how multi-omics moves beyond single-layer analysis to provide a systems-level understanding of disease mechanisms, patient stratification, and therapeutic intervention.

Case Study 1: Integrative Subtyping in Breast Cancer

Breast cancer is a heterogeneous disease. Multi-omics integration has been pivotal in moving beyond traditional histopathological classifications to define molecular subtypes with prognostic and therapeutic implications.

Experimental Protocol: Multi-Omics Subtyping Workflow

- Cohort & Sample Collection: Collect matched tumor and adjacent normal tissue from 500 patients (TCGA-BRCA cohort). Preserve samples for DNA, RNA, protein, and metabolite extraction.

- Data Generation:

  - Genomics (DNA-seq): Identify somatic mutations (SNVs, indels), copy number variations (CNVs), and structural variants.

  - Transcriptomics (RNA-seq): Quantify gene expression (mRNA, lncRNA) and perform fusion gene detection.

  - Proteomics & Phosphoproteomics (LC-MS/MS): Quantify protein abundance and phosphorylation status.

  - Metabolomics (LC-MS/GC-MS): Profile polar and non-polar metabolites.

- Data Preprocessing & Normalization: Use platform-specific tools (e.g., GATK for DNA-seq variant calling, DESeq2 for RNA-seq count normalization, MaxQuant for proteomics quantification) for quality control, alignment, quantification, and normalization.

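- Integrative Clustering: Apply similarity network fusion (SNF) or iCluster+ to the normalized, sample-matched omics matrices (one matrix per data type) to identify patient clusters. SNF fuses per-omics patient similarity networks, whereas iCluster+ fits a joint latent variable model; neither relies on simple concatenation of the matrices.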

- Subtype Characterization: Perform differential analysis (omic-by-omic) between clusters to define driver mutations, activated pathways, and key biomarkers. Validate subtypes against clinical outcomes (survival, drug response).
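The integrative clustering step can be illustrated with a simplified similarity network fusion sketch. This is a reduced version written for clarity, not the reference SNF implementation: the kernel form, the neighbourhood size `k`, the scaling constant `mu`, the number of iterations, and the synthetic two-subtype data are all assumptions, and at least two omics views are required.

```python
import numpy as np

def affinity(x, k=5, mu=0.5):
    """Scaled exponential kernel on Euclidean distances between samples (rows)."""
    d = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2))
    knn_mean = np.sort(d, axis=1)[:, 1:k + 1].mean(axis=1)   # local scale per sample
    eps = (knn_mean[:, None] + knn_mean[None, :] + d) / 3.0 + 1e-12
    return np.exp(-d ** 2 / (mu * eps))

def row_normalize(w):
    return w / w.sum(axis=1, keepdims=True)

def knn_kernel(w, k=5):
    """Keep each row's k strongest neighbours, then row-normalize."""
    sparse = np.zeros_like(w)
    idx = np.argsort(-w, axis=1)[:, :k]
    rows = np.arange(w.shape[0])[:, None]
    sparse[rows, idx] = w[rows, idx]
    return row_normalize(sparse)

def fuse(views, k=5, iters=10):
    """Cross-diffuse per-view patient similarity networks into one matrix."""
    weights = [affinity(x, k) for x in views]
    full = [row_normalize(w) for w in weights]     # global similarity per view
    local = [knn_kernel(w, k) for w in weights]    # local neighbourhood per view
    for _ in range(iters):
        updated = []
        for v in range(len(views)):
            others = np.mean([full[u] for u in range(len(views)) if u != v], axis=0)
            p = local[v] @ others @ local[v].T     # diffuse other views through view v
            updated.append(row_normalize((p + p.T) / 2.0))
        full = updated
    fused = np.mean(full, axis=0)
    return (fused + fused.T) / 2.0

# Synthetic cohort: 20 patients, two subtypes, two omics views.
rng = np.random.default_rng(0)
labels = np.repeat([0, 1], 10)
rna = rng.normal(size=(20, 5)) + 3.0 * labels[:, None]
protein = rng.normal(size=(20, 5)) + 3.0 * labels[:, None]

fused = fuse([rna, protein])
within = (fused[:10, :10].sum() - np.trace(fused[:10, :10])) / 90.0
between = fused[:10, 10:].mean()
```

The fused patient-by-patient matrix would then be passed to a clustering method such as spectral clustering, and the resulting clusters characterized omic-by-omic as described in the final protocol step.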

Table 1: Characteristics of Multi-Omics Breast Cancer Subtypes

| Subtype Designation | Core Genomic Alteration | Pathway Activation (Proteo/Phospho) | Metabolic Hallmark | Clinical Association |
|---|---|---|---|---|
| Immune-Inflamed | High tumor mutational burden (TMB) | PD-L1 expression, JAK/STAT signaling | Increased glycolytic flux | Response to immunotherapy |
| Metabolic | PIK3CA mutations (40%) | PI3K/AKT/mTOR, high acetyl-CoA carboxylase | Dysregulated lipid synthesis | Poor prognosis, resistant to standard chemo |
| Luminal Receptor-Driven | ESR1 amplifications, GATA3 mutations | High ER/PR protein, ERBB2 signaling | Variable | Good response to endocrine therapy |
| Basal-Like/Mesenchymal | TP53 mutations (80%), RB1 loss | Epithelial-mesenchymal transition (EMT) pathways | Increased glutaminolysis | Aggressive, high-grade tumors |

Visualizing the Integrative Workflow