Multi-Omics Integration Demystified: A Practical Guide to Choosing the Right Method for Your Research

Abstract

This comprehensive guide empowers researchers, scientists, and drug development professionals to navigate the complex landscape of multi-omics data integration. It begins by establishing foundational knowledge of omics data types and core integration goals. It then dives into a detailed taxonomy of modern integration methods—from early to late fusion and machine learning approaches—providing clear criteria for method selection based on biological questions and data structures. The guide addresses common pitfalls, preprocessing challenges, and parameter optimization strategies. Finally, it outlines robust frameworks for validating integrated results and benchmarking method performance, culminating in actionable steps to translate multi-omics insights into impactful biomedical and clinical discoveries.

What is Multi-Omics Integration? Understanding Your Data and Defining Your Goal

Systems biology aims to construct comprehensive, predictive models of biological systems. While single-omics studies (genomics, transcriptomics, proteomics, metabolomics) provide valuable snapshots, they are inherently limited. Each layer captures only a fraction of the complex, multi-scale interactions governing phenotype. True systems-level understanding requires multi-omics integration, which synthesizes data from multiple molecular levels to reveal causal mechanisms, functional context, and emergent properties not discernible from any single layer.

The Core Challenge: Choosing an Integration Method

This guide sits within a broader thesis on how to choose a multi-omics integration method, so it establishes the fundamental "why" before addressing the "how". The choice of integration strategy depends on the biological question, the data characteristics, and the desired output. Integration methods are broadly categorized by the stage at which the datasets are combined:

Table 1: Core Multi-Omics Integration Methodologies

| Method Category | Description | Key Strengths | Typical Use Case | Example Tools/Algorithms |

|---|---|---|---|---|

| Concatenation (Early Integration) | Raw or transformed datasets are merged into a single matrix prior to analysis. | Simple; allows for global pattern discovery. | Exploratory analysis when sample count is high relative to features. | PCA, PLS, Deep Learning (Autoencoders) |

| Transformation (Intermediate Integration) | Omics datasets are transformed into a common space (e.g., kernels, graphs, latent factors) and then combined. | Handles heterogeneous data types; preserves data structure. | Network-based analysis; similarity-based discovery; joint factor analysis. | Similarity Network Fusion (SNF), Kernel Fusion, Multi-block PLS, MOFA+ |

| Model-Based (Late Integration) | Analyses are performed separately, and results are integrated at the statistical or decision level. | Flexible; leverages best practices for each omics type. | Causal inference; biomarker validation across layers. | Bayesian Networks, ensemble (stacked) per-omics models |
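As a minimal sketch of the concatenation (early integration) row in the table above, the snippet below scales each omics block feature by feature and then joins the blocks into one samples x features matrix. The function names and toy values are illustrative assumptions, not part of any named tool; per-block z-scoring is one common choice for stopping a layer with large raw values from dominating the merged matrix.

```python
from statistics import mean, pstdev


def zscore_columns(block):
    """Scale each feature (column) of a samples x features block to mean 0, SD 1."""
    scaled_columns = []
    for col in zip(*block):
        mu, sd = mean(col), pstdev(col)
        # Constant features carry no information; map them to 0 rather than divide by 0.
        scaled_columns.append([(v - mu) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*scaled_columns)]


def early_integration(blocks):
    """Concatenate scaled blocks feature-wise; rows must be the same samples in the same order."""
    n_samples = len(blocks[0])
    if any(len(b) != n_samples for b in blocks):
        raise ValueError("all blocks must contain the same samples")
    scaled = [zscore_columns(b) for b in blocks]
    return [sum((block[i] for block in scaled), []) for i in range(n_samples)]


# Toy data (hypothetical): 3 samples, 2 transcript features and 1 protein feature.
rna = [[10.0, 200.0], [20.0, 180.0], [30.0, 220.0]]
protein = [[1.0e6], [2.0e6], [3.0e6]]
combined = early_integration([rna, protein])
print(len(combined), "samples x", len(combined[0]), "features")
```

The merged matrix can then be passed to any single-matrix method such as PCA. With many more features than samples, feature pre-selection before concatenation is usually advisable.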

Experimental Protocols for Generating Integrable Data

Robust integration necessitates rigorously generated, complementary datasets. Below are streamlined protocols for paired omics analyses.

Protocol 1: Paired Total RNA-Seq and Global Proteomics from Tissue

Objective: Generate transcriptomic and proteomic profiles from the same biological specimen.

- Tissue Homogenization: Flash-freeze tissue in liquid N₂. Pulverize using a cryomill. Split powder into two aliquots (~30 mg each) in pre-chilled tubes.

- RNA Extraction (Aliquot A): Add TRIzol, homogenize. Phase separate with chloroform. Precipitate RNA with isopropanol, wash with 75% ethanol. Perform DNase I treatment. Assess integrity (RIN > 7).

- Protein Extraction (Aliquot B): Suspend in SDT lysis buffer (4% SDS, 100mM Tris-HCl pH 7.6, 0.1M DTT). Heat at 95°C for 5 min. Sonicate. Clarify by centrifugation at 16,000 x g for 10 min.

- Library Prep & Sequencing (RNA): Use poly-A selection for mRNA. Prepare library with strand-specific kit (e.g., Illumina TruSeq). Sequence on a platform like NovaSeq to a depth of 30-50 million paired-end reads per sample.

- Proteomic Preparation & LC-MS/MS (Protein): Digest proteins using filter-aided sample preparation (FASP) with trypsin. Desalt peptides via C18 StageTips. Analyze by liquid chromatography coupled to tandem mass spectrometry (LC-MS/MS) using a 2-hour gradient on a Q Exactive HF or timsTOF Pro. Use data-dependent acquisition (DDA) or data-independent acquisition (DIA).

Protocol 2: Metabolomics and Phosphoproteomics from Cell Culture

Objective: Capture metabolic state and signaling activity from the same cell population.

- Cell Quenching & Harvesting: Aspirate medium rapidly. Quench metabolism with cold (-20°C) 80% methanol/water. Scrape cells on dry ice. Transfer suspension to a cold tube.

- Metabolite Extraction: Centrifuge at 16,000 x g, 4°C for 10 min. Transfer supernatant (metabolite fraction) to a new tube. Dry in a vacuum concentrator. Store at -80°C for LC-MS.

- Protein Pellet Processing: Wash the remaining protein pellet with cold acetone. Dry. Resuspend in urea-based lysis buffer (8M urea, 50mM Tris pH 8). Sonicate.

- Phosphopeptide Enrichment: Digest lysates with trypsin/Lys-C. Desalt peptides. Enrich phosphorylated peptides using TiO₂ or Fe-IMAC magnetic beads per manufacturer's protocol.

- LC-MS/MS Analysis:

  - Metabolites: Reconstitute in water. Analyze by hydrophilic interaction liquid chromatography (HILIC) coupled to high-resolution MS (e.g., Orbitrap) in negative and positive ion modes.

  - Phosphopeptides: Analyze by LC-MS/MS using a C18 column with a basic pH reverse-phase gradient to improve separation, followed by MS on an instrument like an Orbitrap Eclipse.

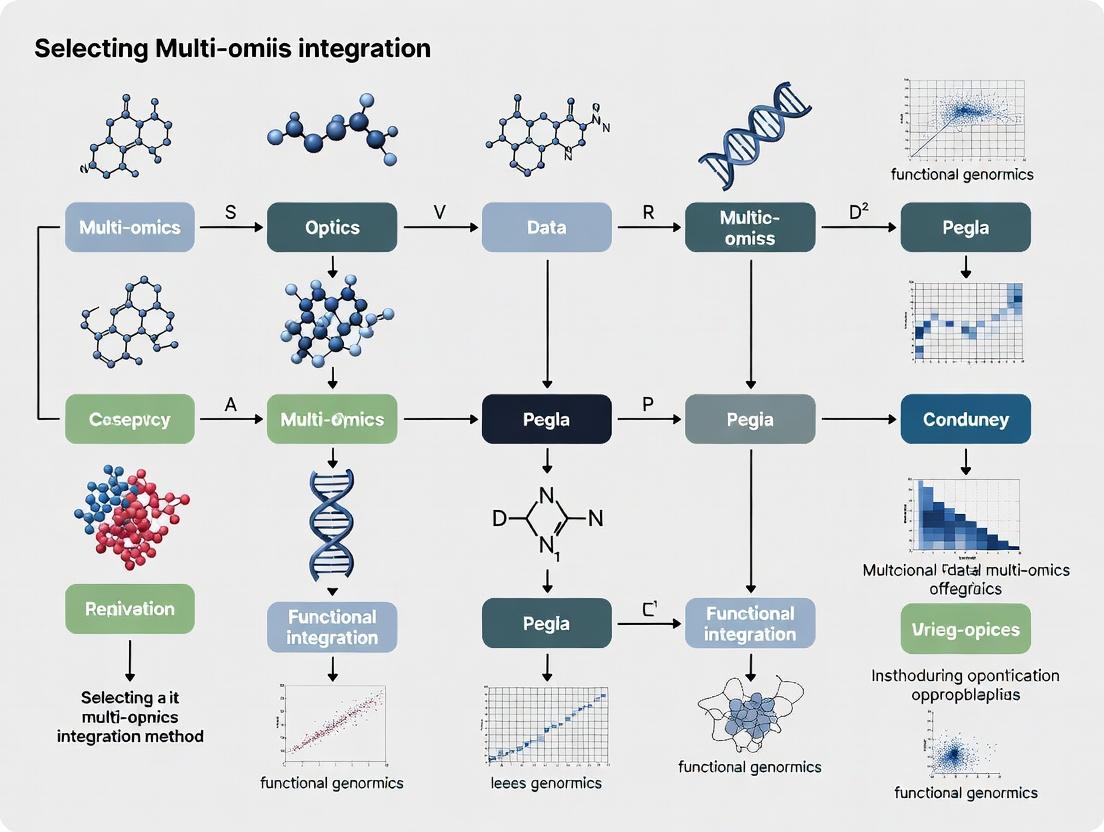

Visualization of Integration Concepts

Diagram Title: Multi-Omics Data Integration Workflow

Diagram Title: Choosing an Integration Method: Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Multi-Omics Experiments

| Item | Function in Multi-Omics Workflow | Key Consideration |

|---|---|---|

| TRIzol/Chloroform | Simultaneous extraction of RNA, DNA, and protein from a single sample (triple-omics). | Maintains co-registration of molecules from the same source; critical for paired analysis. |

| Poly(A) Magnetic Beads | Isolation of mRNA from total RNA for RNA-Seq library prep. | Ensures focus on protein-coding transcripts for direct comparison with proteomics. |

| Trypsin/Lys-C Mix | High-efficiency, specific proteolytic digestion of protein extracts for bottom-up proteomics. | Reproducible digestion is vital for accurate peptide quantification and cross-omics correlation. |

| TiO₂ or Fe-IMAC Beads | Selective enrichment of phosphorylated peptides from complex digests. | Enables targeted phosphoproteomics to integrate signaling data with transcriptomic/metabolic states. |

| C18 StageTips | Desalting and cleanup of peptide samples prior to LC-MS/MS. | Essential for reproducible MS injection and instrument longevity. |

| Isotope-Labeled Internal Standards (Metabolomics) | Spike-in controls for absolute quantification of metabolites by LC-MS. | Corrects for matrix effects; enables integration of metabolomic data across samples and batches. |

| Cell Lysis Buffer (Urea/SDS-based) | Effective denaturation and solubilization of proteins from complex samples (tissue, cells). | Complete lysis is fundamental for representative proteomic and phosphoproteomic analysis. |

| Unique Molecular Index (UMI) Adapters | Library preparation for RNA-Seq to correct for PCR amplification bias and improve quantification accuracy. | Provides more precise transcript counts, improving correlation with proteomic data. |

| Data-Independent Acquisition (DIA) Kit | Optimized spectral library generation and acquisition methods for comprehensive, reproducible proteomics. | Maximizes proteome coverage and quantitative consistency, key for robust integration. |

This technical guide provides an in-depth examination of the four major omics layers central to modern systems biology. Framed within the broader research thesis on selecting multi-omics integration methods, this primer equips researchers and drug development professionals with a foundational understanding of each layer's biological scope, measurement technologies, and data characteristics. Effective integration hinges on a precise grasp of what each layer measures and its inherent technical and biological noise.

Genomics

Genomics is the study of an organism's complete set of DNA, including all genes and their nucleotide sequences. It provides the static blueprint, encompassing both coding and non-coding regions, and includes the study of genetic variation (e.g., SNPs, CNVs, structural variants).

Core Technology: Next-Generation Sequencing (NGS).

- Whole Genome Sequencing (WGS): Interrogates the entire genome.

- Whole Exome Sequencing (WES): Targets protein-coding regions (~1-2% of the genome).

Experimental Protocol: WGS using Illumina Platform

- Library Preparation: Genomic DNA is fragmented, end-repaired, A-tailed, and ligated with platform-specific adapters.

- Quantification & Normalization: Libraries are quantified via qPCR and normalized for equal pooling.

- Cluster Amplification: On the flow cell, fragments are bridge-amplified to generate clonal clusters.

- Sequencing by Synthesis: Fluorescently labeled, reversibly terminated nucleotides are added. After each incorporation, fluorescence is imaged to determine the base.

- Data Analysis: Base calling, alignment to a reference genome (e.g., GRCh38), and variant identification using pipelines like GATK.

Key Research Reagent Solutions

| Reagent/Material | Function |

|---|---|

| Nextera DNA Flex Library Prep Kit | Prepares sequencing-ready libraries from genomic DNA via tagmentation. |

| Illumina NovaSeq 6000 S-Prime Reagent Kit | Contains flow cell and chemistry for high-throughput sequencing runs. |

| KAPA HyperPrep Kit | For PCR-based library construction with minimal bias. |

| IDT for Illumina DNA/RNA UD Indexes | Unique dual indexes for high-plex, multiplexed sequencing with reduced index hopping. |

| Bioanalyzer DNA High Sensitivity Chip | Microfluidic electrophoresis for precise library quality control and sizing. |

Transcriptomics

Transcriptomics profiles the complete set of RNA transcripts (the transcriptome) produced by the genome under specific conditions or at a specific time. It captures dynamic gene expression levels, alternative splicing, and non-coding RNA expression.

Core Technologies: RNA-Seq and Microarrays.

- Bulk RNA-Seq: Measures average gene expression across a cell population.

- Single-Cell RNA-Seq (scRNA-seq): Resolves expression at the individual cell level, revealing heterogeneity.

Experimental Protocol: Bulk RNA-Seq

- RNA Extraction & QC: Isolate total RNA (e.g., with TRIzol). Assess integrity via RIN (RNA Integrity Number) on a Bioanalyzer.

- Library Preparation: Deplete ribosomal RNA or enrich mRNA via poly-A selection. RNA is fragmented, reverse-transcribed to cDNA, and adapters are ligated.

- Sequencing & Alignment: Sequencing performed on platforms like Illumina. Reads are aligned to a reference genome/transcriptome using STAR or HISAT2.

- Quantification & Analysis: Expression is quantified as counts per gene (e.g., using featureCounts). Differential expression analysis is performed with tools like DESeq2 or edgeR.
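Raw counts per gene are what DESeq2 and edgeR expect for differential expression, but they are not directly comparable across genes of different length or samples of different depth. For cross-omics comparison a length- and depth-normalized unit such as TPM is often used. A minimal sketch of both CPM and TPM, assuming hypothetical counts and gene lengths for a single sample:

```python
def cpm(counts):
    """Counts per million: corrects for sequencing depth only."""
    total = sum(counts)
    return [c / total * 1e6 for c in counts]


def tpm(counts, lengths_bp):
    """Transcripts per million: corrects for gene length first, then for depth."""
    # Reads per kilobase of gene length.
    rpk = [c / (length / 1000.0) for c, length in zip(counts, lengths_bp)]
    scale = sum(rpk) / 1e6
    return [r / scale for r in rpk]


# Hypothetical sample: three genes whose counts scale exactly with their length.
counts = [100, 200, 300]
lengths_bp = [1000, 2000, 3000]

sample_cpm = cpm(counts)
sample_tpm = tpm(counts, lengths_bp)
print([round(v) for v in sample_cpm])
print([round(v) for v in sample_tpm])
```

In this toy case CPM ranks the longest gene highest, while TPM assigns all three genes the same value because the count differences are explained entirely by length.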

Diagram Title: Bulk RNA-Seq Core Workflow

Proteomics

Proteomics is the large-scale study of the entire complement of proteins (proteome), including their abundances, post-translational modifications (PTMs), structures, and interactions. It directly reflects the functional effectors in the cell.

Core Technology: Mass Spectrometry (MS).

- Bottom-Up Proteomics: Proteins are digested into peptides, analyzed by LC-MS/MS, and identified via database searching.

- Data-Independent Acquisition (DIA): Provides comprehensive, reproducible quantification (e.g., SWATH-MS).

Experimental Protocol: Bottom-Up LC-MS/MS Proteomics

- Sample Preparation: Cells/tissues are lysed, proteins extracted, and reduced/alkylated. Proteins are digested with trypsin into peptides.

- Liquid Chromatography (LC): Peptides are separated by reversed-phase HPLC (C18 column) with an organic solvent gradient.

- Mass Spectrometry Analysis: Eluting peptides are ionized (ESI) and analyzed in a tandem mass spectrometer (e.g., Q-Exactive). The instrument cycles between a full MS1 scan and subsequent MS2 scans of the most abundant precursor ions (Top-N DDA).

- Database Search & Quantification: MS2 spectra are matched to theoretical spectra from a protein sequence database using search engines (MaxQuant, Proteome Discoverer). Label-free or isobaric tag (TMT/iTRAQ) quantification is performed.

Key Research Reagent Solutions

| Reagent/Material | Function |

|---|---|

| Trypsin, Sequencing Grade | Specific protease for digesting proteins into peptides for MS analysis. |

| TMTpro 16plex Isobaric Label Reagent Set | Tags peptides from 16 samples for multiplexed relative quantification. |

| Pierce BCA Protein Assay Kit | Colorimetric assay for accurate protein concentration determination. |

| C18 StageTips | Micro-columns for desalting and concentrating peptide samples prior to LC-MS. |

| EVOSEP One LC System | Provides standardized, robust LC gradients for high-throughput proteomics. |

Metabolomics

Metabolomics identifies and quantifies the complete set of small-molecule metabolites (<1.5 kDa) in a biological system. It represents the most downstream functional readout of cellular processes and is highly sensitive to environmental and physiological changes.

Core Technologies: Mass Spectrometry (MS) and Nuclear Magnetic Resonance (NMR) Spectroscopy.

- Liquid Chromatography-MS (LC-MS): Most common, offering broad coverage and sensitivity.

- Gas Chromatography-MS (GC-MS): Excellent for volatile compounds or those made volatile by derivatization.

Experimental Protocol: Untargeted LC-MS Metabolomics

- Metabolite Extraction: Use a biphasic solvent system (e.g., methanol/chloroform/water) to quench metabolism and extract a wide range of metabolites.

- Chromatographic Separation: Employ HILIC (hydrophilic) or reversed-phase (hydrophobic) LC to separate metabolites prior to MS injection.

- Mass Spectrometry: Data is acquired in full-scan mode over a defined m/z range (e.g., 50-1500) on a high-resolution instrument (e.g., Q-TOF). Both positive and negative ionization modes are typically run.

- Data Processing & Identification: Peak picking, alignment, and deconvolution using software (XCMS, MS-DIAL). Metabolites are identified by matching m/z, retention time, and MS/MS spectra to authentic standards in libraries (e.g., NIST, HMDB).
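The m/z matching part of the identification step can be sketched as a parts-per-million (ppm) tolerance search. The two-entry library, the 5 ppm tolerance, and the observed values below are illustrative assumptions. The sketch covers only the m/z criterion; retention time and MS/MS matching, which the protocol also calls for, are not modeled.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def match_features(observed_mzs, library, tol_ppm=5.0):
    """Return (observed m/z, library name, ppm error) for every hit within tolerance."""
    hits = []
    for mz in observed_mzs:
        for name, ref_mz in library.items():
            err = ppm_error(mz, ref_mz)
            if abs(err) <= tol_ppm:
                hits.append((mz, name, round(err, 2)))
    return hits


# Illustrative library: approximate monoisotopic m/z of two glucose adducts.
library = {
    "glucose [M+H]+": 181.07066,
    "glucose [M-H]-": 179.05611,
}
observed = [181.0710, 250.0000]  # hypothetical measured features

hits = match_features(observed, library)
for mz, name, err in hits:
    print(f"{mz:.4f} -> {name} ({err:+.2f} ppm)")
```

An m/z hit alone is only a putative annotation, since isomers share the same mass. That is why the protocol pairs it with retention time and MS/MS evidence.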

Diagram Title: Untargeted Metabolomics Workflow

Quantitative Comparison of Omics Layers

Table 1: Core Characteristics of Major Omics Layers

| Omics Layer | Analytical Target | Core Technology | Temporal Dynamics | Approx. # of Molecules in Human | Primary Data Output |

|---|---|---|---|---|---|

| Genomics | DNA Sequence & Variation | NGS (WGS, WES) | Static (Lifetime) | ~3.2B base pairs; ~20k genes | Sequence reads, Variant calls (VCF) |

| Transcriptomics | RNA Expression Levels | RNA-Seq, Microarrays | Fast (mins-hrs) | ~50k transcripts | Read counts per gene/transcript |

| Proteomics | Protein Abundance & PTMs | Mass Spectrometry | Medium (hrs-days) | ~20k proteins; >1M proteoforms | MS1/MS2 spectra, Peptide intensities |

| Metabolomics | Small-Molecule Metabolites | MS, NMR Spectroscopy | Very Fast (secs-mins) | ~10k metabolites (estimated) | m/z, Retention time, Intensity |

Table 2: Key Considerations for Multi-Omics Integration

| Consideration | Genomics | Transcriptomics | Proteomics | Metabolomics |

|---|---|---|---|---|

| Biological Noise | Low | High | Medium | Very High |

| Technical Noise | Low (Modern NGS) | Low (Modern RNA-Seq) | High (Sample prep, MS) | High (Ion suppression, etc.) |

| Coverage/Completeness | Near Complete | High | Moderate (Dynamic Range) | Low (Diversity of Chemistry) |

| Cost per Sample | $500-$1k (WES) | $300-$800 (Bulk RNA-Seq) | $200-$600 (LFQ) | $200-$500 (Untargeted) |

| Data Integration Challenge | Causal/Deterministic | Regulatory State | Functional Effector | Functional/Phenotypic Output |

Choosing a multi-omics integration method requires reconciling the fundamental differences summarized above. Early integration (concatenating datasets) must account for differing scales, noise profiles, and missingness. Knowledge-driven integration (linking layers through prior knowledge networks) is powerful but depends on the completeness of the biological knowledge connecting layers (e.g., gene-protein-reaction links). Intermediate integration (reducing each layer to a shared lower-dimensional representation first) is often preferred. The choice hinges on whether the biological question is causal (favoring models that leverage genomics as a prior) or predictive/phenotypic (where metabolomics may be the target). Understanding each layer's technical genesis, as detailed in this primer, is the critical first step in that selection process.

Thesis Context: This guide is part of a broader thesis on how to choose a multi-omics integration method. The choice of method is fundamentally dictated by the primary goal of the integrative analysis.

Defining the Core Integration Goals

Multi-omics data integration is not a monolithic task. The analytical approach must be aligned with one of three primary, and often mutually exclusive, goals:

- Discovery: The goal is to identify novel, biologically meaningful relationships across different omics layers (e.g., genome, transcriptome, proteome) without a pre-specified outcome. It is hypothesis-generating.

- Prediction: The goal is to construct a model that uses multi-omics data as input features to accurately predict a specific, predefined clinical or phenotypic outcome (e.g., survival, drug response, disease onset).

- Subtyping: The goal is to stratify a heterogeneous population (e.g., cancer patients) into distinct, homogeneous subgroups based on integrated molecular patterns from multiple omics sources.

The following table summarizes the core characteristics, suitable methods, and validation strategies for each goal.

Table 1: Core Characteristics of Multi-Omics Integration Goals

| Goal | Primary Question | Typical Methods | Key Output | Validation Approach |

|---|---|---|---|---|

| Discovery | What are the inter-relationships between different molecular layers? | Correlation networks, Matrix factorization (e.g., MOFA), Canonical Correlation Analysis (CCA) | Latent factors, Correlation networks, Novel cross-omics associations | Biological replication, Functional assays, Enrichment analysis |

| Prediction | Can we accurately forecast a clinical outcome from molecular data? | Penalized regression (LASSO), Random Forests, Deep Neural Networks, Multi-kernel learning | Predictive model with performance metrics (AUC, C-index, accuracy) | Hold-out test sets, Cross-validation, Independent cohort validation |

| Subtyping | Can we identify distinct molecular subgroups within a population? | Clustering (e.g., iCluster, SNF), Consensus clustering, Bayesian non-parametric models | Patient cluster assignments, Subtype-specific signatures | Survival analysis, Clinical annotation, Stability assessment |

Experimental Protocols for Goal-Specific Validation

Protocol 2.1: Validation of Discovery Insights via Functional Assays

Aim: To experimentally validate a discovered cross-omics association (e.g., a specific miRNA-protein pair).

- Target Identification: From the discovery analysis (e.g., MOFA factor), select the top-associated miRNA and its inversely correlated target protein.

- Cell Line Model: Select a relevant cell line expressing both molecules.

- Perturbation: Transfect cells with miRNA mimic (overexpression) or inhibitor (knockdown) using lipofection.

- Measurement:

  - qPCR: 48h post-transfection, extract RNA, reverse transcribe, and perform qPCR to confirm miRNA level changes.

  - Western Blot: 72h post-transfection, lyse cells, run protein lysate on SDS-PAGE, transfer to membrane, and probe with antibody against the target protein. Use β-actin as a loading control.

- Analysis: Quantify band intensity. Successful validation is indicated by a decrease in target protein upon miRNA mimic transfection, and vice-versa.
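The analysis step can be sketched as a loading-control normalization followed by a fold change. The densitometry values are hypothetical, and the helper names are illustrative rather than taken from any imaging software:

```python
from statistics import mean


def normalize_to_loading_control(target, loading_control):
    """Divide each lane's target band intensity by its β-actin intensity."""
    return [t / c for t, c in zip(target, loading_control)]


def fold_change(treated, control):
    """Ratio of mean normalized intensity, treated over control."""
    return mean(treated) / mean(control)


# Hypothetical band intensities from three biological replicates per condition.
control_target = [1000.0, 1100.0, 900.0]
control_actin = [2000.0, 2200.0, 1800.0]
mimic_target = [500.0, 450.0, 550.0]
mimic_actin = [2000.0, 1800.0, 2200.0]

control_norm = normalize_to_loading_control(control_target, control_actin)
mimic_norm = normalize_to_loading_control(mimic_target, mimic_actin)
fc = fold_change(mimic_norm, control_norm)
print(f"target protein fold change (mimic vs control): {fc:.2f}")
```

A fold change below 1 after mimic transfection is consistent with the predicted repression. A statistical test across replicates would still be needed before calling the association validated.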

Protocol 2.2: Building and Validating a Predictive Model

Aim: To predict tumor drug response (sensitive/resistant) from RNA-seq and methylation data.

- Data Preprocessing: Normalize RNA-seq counts (e.g., to TPM) and methylation array data (beta values). Perform feature pre-selection (e.g., variance filter).

- Train-Test Split: Randomly split cohort data into training (70%) and hold-out test (30%) sets, preserving the outcome proportion.

- Model Training (Multi-Kernel Learning):

- Construct separate similarity matrices (kernels) for RNA and methylation data in the training set.

- Combine kernels linearly: K_combined = μ * K_RNA + (1-μ) * K_Methyl.

- Use a kernel-based classifier (e.g., Support Vector Machine) with K_combined to predict response in the training set, tuning parameters (e.g., μ, regularization) via 5-fold cross-validation.

- Model Evaluation: Apply the final trained model to the unseen hold-out test set. Calculate Area Under the ROC Curve (AUC), sensitivity, and specificity.
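The kernel combination and the AUC metric from the protocol above can be sketched in plain Python. The linear kernel, the toy feature values, and the example scores are assumptions chosen for illustration; the protocol does not prescribe a kernel type, and real kernels are usually normalized before mixing so that neither layer dominates through scale alone.

```python
def linear_kernel(samples):
    """Sample-by-sample similarity matrix with K[i][j] = dot(x_i, x_j)."""
    return [[sum(a * b for a, b in zip(xi, xj)) for xj in samples] for xi in samples]


def combine_kernels(k_rna, k_methyl, mu):
    """K_combined = mu * K_RNA + (1 - mu) * K_Methyl, with 0 <= mu <= 1."""
    if not 0.0 <= mu <= 1.0:
        raise ValueError("mu must lie in [0, 1]")
    return [
        [mu * a + (1 - mu) * b for a, b in zip(row_rna, row_methyl)]
        for row_rna, row_methyl in zip(k_rna, k_methyl)
    ]


def auc(scores, labels):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy training data (hypothetical): 2 samples, 2 RNA features, 1 methylation feature.
rna = [[1, 0], [0, 1]]
methyl = [[2], [3]]
k_combined = combine_kernels(linear_kernel(rna), linear_kernel(methyl), mu=0.5)

# Hypothetical classifier scores on a 4-sample test set (1 = sensitive, 0 = resistant).
test_auc = auc([0.9, 0.8, 0.3, 0.2], [1, 0, 1, 0])
print(k_combined, test_auc)
```

A precomputed matrix like `k_combined` is the kind of input accepted by kernel classifiers that support user-supplied kernels, for example scikit-learn's `SVC(kernel="precomputed")`. In that setting μ would be tuned inside the cross-validation loop alongside the regularization parameter.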

Protocol 2.3: Molecular Subtyping and Clinical Characterization

Aim: To identify subtypes in breast cancer using copy number variation (CNV) and gene expression data.

- Integrative Clustering: Apply iClusterBayes (a latent variable model) jointly to the sample-matched CNV and expression matrices from the cohort.

- Determine K: Fit models for a range of cluster numbers (K=2-6). Choose the optimal K based on the Bayesian Information Criterion (BIC) and the proportion of variance explained.

- Subtype Annotation: Assign each patient to a cluster based on the maximum posterior probability.

- Clinical Validation: Perform Kaplan-Meier survival analysis (log-rank test) across the identified subtypes. Test for associations with clinical variables (e.g., stage, grade) using Chi-squared tests.
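The model-selection and assignment steps above reduce to two small operations, sketched here with hypothetical numbers. The BIC values and posterior probabilities are invented for illustration and do not reflect the output format of iClusterBayes. The sketch assumes the common convention that a lower BIC indicates a better trade-off between fit and complexity.

```python
def select_k_by_bic(bic_by_k):
    """Return the cluster number with the lowest BIC (assumed: lower is better)."""
    return min(bic_by_k, key=bic_by_k.get)


def assign_clusters(posteriors):
    """Map each patient to the 1-based cluster with the highest posterior probability."""
    return {
        patient: max(range(len(probs)), key=probs.__getitem__) + 1
        for patient, probs in posteriors.items()
    }


# Hypothetical BIC values from models fitted with K = 2..6.
bic_by_k = {2: 1520.4, 3: 1498.7, 4: 1503.2, 5: 1511.9, 6: 1525.0}
best_k = select_k_by_bic(bic_by_k)

# Hypothetical posterior membership probabilities for the chosen K = 3.
posteriors = {"P01": [0.1, 0.7, 0.2], "P02": [0.8, 0.1, 0.1]}
assignments = assign_clusters(posteriors)
print(best_k, assignments)
```

Because BIC curves are often flat near the minimum, the protocol's second criterion (proportion of variance explained) is a useful tie-breaker between neighbouring values of K.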

Visualizing the Decision Workflow and Biological Integration

Decision Flow for Multi-Omics Goal Selection

Cross-Omics Biological Relationships & Goal Links

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Multi-Omics Functional Validation

| Reagent / Material | Function in Validation | Example Vendor/Catalog |

|---|---|---|

| miRNA Mimic / Inhibitor | To gain- or loss-of-function of a specific miRNA discovered in integrative analysis. | Thermo Fisher Scientific (mirVana), Dharmacon |

| Lipofectamine RNAiMAX | Lipid-based transfection reagent for efficient delivery of miRNAs/siRNAs into mammalian cells. | Thermo Fisher Scientific (13778075) |

| TRIzol Reagent | For simultaneous isolation of high-quality RNA, DNA, and protein from a single sample. | Thermo Fisher Scientific (15596026) |

| High-Capacity cDNA Reverse Transcription Kit | Converts RNA to cDNA for downstream qPCR analysis of gene expression changes. | Applied Biosystems (4368814) |

| TaqMan Gene Expression Assays | Fluorogenic probes for specific, sensitive quantification of mRNA or miRNA via qPCR. | Applied Biosystems |

| Primary Antibody (Target Protein) | Binds specifically to the protein of interest for detection via Western blot. | Cell Signaling Technology, Abcam |

| HRP-conjugated Secondary Antibody | Binds to primary antibody and enables chemiluminescent detection. | Cell Signaling Technology (7074) |

| Clarity Western ECL Substrate | Chemiluminescent substrate for sensitive detection of HRP on Western blots. | Bio-Rad (1705060) |

| CellTiter-Glo Luminescent Cell Viability Assay | Measures ATP levels to determine cell viability/proliferation in drug response assays. | Promega (G7570) |

The selection of an appropriate multi-omics integration method is a pivotal decision that dictates the success of a systems biology study. This process is fundamentally guided by the biological question, which serves as the primary filter through which all subsequent technical choices are made. This guide outlines a structured approach to defining that question within the context of multi-omics integration research.

The Hierarchical Framework for Question Definition

A well-defined biological question must specify the scale, entities, condition, and expected output of the investigation. The following table categorizes common types of biological questions and their direct implications for the choice of integration strategy.

Table 1: Biological Question Typology and Methodological Implications

| Question Type | Core Biological Goal | Example Question | Implied Data Relationship | Suggested Integration Approach |

|---|---|---|---|---|

| Vertical | Trace causality across molecular layers | "How do germline SNPs alter protein pathways to drive tumor metastasis?" | Causal, directional (Genome → Transcriptome → Proteome → Phenotype) | Sequential or Model-based (e.g., SNPNET, PRS → eQTL → causal inference) |

| Horizontal | Understand coordinated changes within/across conditions | "What multi-omic modules are co-regulated in response to drug X?" | Associative, complementary | Simultaneous Matrix Factorization (e.g., MOFA), Correlation-based Networks |

| Structural | Define system components & interactions | "What is the comprehensive molecular interaction network in cell state Y?" | Interactive, network-based | Network Integration (e.g., LIANA for ligand-receptor), Bayesian Networks |

| Predictive | Forecast clinical or phenotypic outcomes | "Can we predict patient survival better with combined omics than with single-omics?" | Supervised, outcome-driven | Supervised Early/Intermediate Fusion (e.g., DIABLO, MOGONET) |

From Question to Experimental Design: A Protocol Blueprint

Defining the question dictates the experimental design. Below is a generalized protocol for a multi-omics study designed to answer a vertical question about transcriptional regulators of a disease phenotype.

Protocol: A Sequential Multi-Omics Workflow for Causal Mechanism Identification

Sample Preparation & Fractionation:

- Isolate primary cells or tissue of interest from matched case/control cohorts (minimum n=12 per group for discovery).

- Aliquot the same homogenized sample for parallel DNA, RNA, and protein extraction using dedicated, compatible kits (e.g., AllPrep DNA/RNA/Protein Kit).

- For chromatin assays, cross-link cells immediately after isolation.

Parallel Multi-Omic Profiling:

- Genomics (WES/WGS): Perform library prep using a platform like Illumina Nextera Flex. Sequence to a minimum mean coverage of 100x (WES) or 30x (WGS). Call variants using GATK best practices.

- Transcriptomics (RNA-seq): Generate stranded mRNA-seq libraries. Sequence to a depth of 30-50 million paired-end reads per sample. Quantify expression using Salmon or STAR/featureCounts.

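- Proteomics (LC-MS/MS): Digest proteins with trypsin. For label-free quantification, analyze on a timsTOF Pro 2 with DIA-PASEF and identify and quantify proteins using DIA-NN or Spectronaut. Alternatively, label with TMTpro 16-plex, fractionate by high-pH reverse-phase HPLC, and quantify reporter ions from data-dependent acquisition (e.g., with MaxQuant or Proteome Discoverer).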
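The coverage targets in the list above can be translated into read counts with the usual approximation coverage = reads x read length / target size. The sketch below assumes a 3.1 Gb human genome and 150 bp reads. Both are assumptions, and the estimate ignores duplicates, unmapped reads, and uneven capture efficiency.

```python
import math


def mean_coverage(n_reads, read_length_bp, target_size_bp):
    """Expected mean depth for a given number of reads."""
    return n_reads * read_length_bp / target_size_bp


def reads_needed(target_coverage, read_length_bp, target_size_bp):
    """Number of reads required to reach a target mean depth."""
    return math.ceil(target_coverage * target_size_bp / read_length_bp)


GENOME_SIZE_BP = 3_100_000_000  # assumed approximate human genome size
READ_LENGTH_BP = 150            # assumed read length

reads_30x = reads_needed(30, READ_LENGTH_BP, GENOME_SIZE_BP)
print(f"{reads_30x:,} reads ({reads_30x // 2:,} read pairs) for 30x WGS")
```

In practice a safety margin is added on top of this figure to compensate for reads lost to duplication and quality filtering.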

Data Preprocessing & Quality Control:

- Apply cohort-level normalization: RUV-seq for RNA, median normalization for proteomics.

- Perform stringent QC: PCA plots to detect batch effects, sample mix-ups confirmed via genotype concordance checks.
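The median normalization named above for proteomics can be sketched as follows. This is one simple variant, assuming a features x samples matrix of log2 intensities with `None` marking missing values; each sample is shifted so that its median matches the median of all sample medians. RUV-seq, the RNA-side method, is not covered here.

```python
from statistics import median


def median_normalize(log2_matrix):
    """Shift every sample (column) so that all sample medians coincide."""
    n_samples = len(log2_matrix[0])
    sample_medians = [
        median(row[j] for row in log2_matrix if row[j] is not None)
        for j in range(n_samples)
    ]
    global_median = median(sample_medians)
    return [
        [None if v is None else v - sample_medians[j] + global_median
         for j, v in enumerate(row)]
        for row in log2_matrix
    ]


# Hypothetical log2 intensities: 3 proteins x 3 samples, one missing value.
log2_intensities = [
    [20.0, 21.0, None],
    [22.0, 23.0, 24.0],
    [24.0, 25.0, 26.0],
]
normalized = median_normalize(log2_intensities)
for row in normalized:
    print(row)
```

The shift is additive because the data are on a log scale, which corresponds to a multiplicative correction of raw intensities. Missing values are left untouched so that imputation remains a separate, explicit decision.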

Sequential Integration Analysis:

- Step 1: Map significant GWAS variants to candidate genes (using positional, eQTL, and chromatin interaction mapping).

- Step 2: Perform differential expression (DE) analysis (DESeq2 for RNA; limma for proteomics). Filter DE results for genes mapped from Step 1.

- Step 3: Construct a directed network using tools like CausalPath or DoRothEA, overlaying variant, expression, and protein phosphorylation data to infer signaling pathways altered from genotype to functional phenotype.
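Step 2 above, restricting differential expression results to the genes mapped in Step 1, is a simple filter. A minimal sketch, with placeholder gene names, effect sizes, and an assumed adjusted p-value cutoff of 0.05:

```python
def filter_de_by_candidates(de_results, candidate_genes, padj_cutoff=0.05):
    """Keep significant DE genes that were also mapped from variants in Step 1."""
    return {
        gene: stats
        for gene, stats in de_results.items()
        if gene in candidate_genes and stats["padj"] < padj_cutoff
    }


# Hypothetical Step 1 output: genes mapped from significant variants.
candidate_genes = {"GENE_A", "GENE_B", "GENE_C"}

# Hypothetical Step 2 output: per-gene log2 fold change and adjusted p-value.
de_results = {
    "GENE_A": {"log2fc": 1.8, "padj": 0.001},
    "GENE_B": {"log2fc": 0.2, "padj": 0.640},
    "GENE_D": {"log2fc": -2.1, "padj": 0.004},
}

prioritized = filter_de_by_candidates(de_results, candidate_genes)
print(sorted(prioritized))
```

Only genes supported by both the genetic mapping and the expression evidence are carried into the network construction of Step 3.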

Visualizing the Decision Pathway

The logical flow from biological question to integration method is a critical pathway. The diagram below maps this decision process.

Flowchart: From Biological Question to Integration Method

The experimental workflow for a typical vertical integration study can be visualized as follows.

Workflow: Vertical Multi-Omics Integration for Causal Inference

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Kits for a Robust Multi-Omics Workflow

| Item | Function | Example Product/Kit |

|---|---|---|

| Integrated Nucleic Acid/Protein Isolation Kit | Enables simultaneous, co-purification of DNA, RNA, and protein from a single sample aliquot, minimizing technical variation and sample requirement. | Qiagen AllPrep DNA/RNA/Protein Kit |

| Stranded mRNA Library Prep Kit | Prepares sequencing libraries that preserve the strand of origin of transcripts, crucial for accurate gene quantification and fusion detection. | Illumina Stranded mRNA Prep |

| Isobaric Mass Tag Reagents | Allows multiplexed analysis of up to 18 samples in a single LC-MS/MS run, dramatically increasing throughput and quantitative precision in proteomics. | Thermo Fisher TMTpro 18-plex |

| Chromatin Shearing Enzymatic Mix | Provides consistent, controlled fragmentation of cross-linked chromatin for assays like ChIP-seq or ATAC-seq, replacing variable sonication. | Illumina Tagmentase Enzyme |

| Single-Cell Multi-Omic Partitioning System | Enables partitioning of single nuclei for joint profiling of chromatin accessibility (ATAC) and gene expression from the same cell. | 10x Genomics Chromium Single Cell Multiome ATAC + Gene Expression |

| Multiplexed Immunoassay Panel | Validates key protein-level discoveries from proteomics on many samples using a low-volume, high-sensitivity platform. | Olink Target 96 or 384 Panels |

Selecting an appropriate multi-omics integration method is a critical first step in systems biology and precision medicine research. The choice is fundamentally constrained by the nature of the input data. This guide provides a technical framework for assessing three core attributes of your omics datasets—scale, dimensionality, and data type (bulk vs. single-cell)—within the context of informing method selection for integrative analysis.

Data Scale and Throughput

Data scale refers to the number of biological samples, replicates, and features measured. It directly impacts the statistical power and computational requirements of integration.

Table 1: Characteristic Scales of Modern Omics Assays

| Omics Layer | Typical Sample Range (Bulk) | Typical Feature Range | Approx. Data per Sample (Bulk) |

|---|---|---|---|

| Genomics (WGS) | 100s - 1,000,000s | 3-6 billion base pairs | 80-200 GB (FASTQ) |

| Transcriptomics (Bulk RNA-seq) | 10s - 10,000s | 20,000-60,000 genes | 0.5-5 GB (FASTQ) |

| Proteomics (LC-MS/MS) | 10s - 1,000s | 3,000-10,000 proteins | 0.1-1 GB (raw spectra) |

| Metabolomics (LC-MS) | 10s - 1,000s | 500-10,000 metabolites | 0.1-2 GB (raw data) |

| Epigenomics (ATAC-seq) | 10s - 1,000s | ~100,000 peaks | 1-10 GB (FASTQ) |

| Single-Cell RNA-seq | 1,000 - 1,000,000 cells | 20,000-60,000 genes | 10-500 GB (matrix) |

Protocol 1.1: Estimating Data Requirements for Integration

- Calculate Sample Intersection: Identify the samples common to all omics layers. The final integrated cohort size (N) is the size of this intersection.

- Define Feature Space: For each modality, list the number of measured molecular features (e.g., genes, proteins). The total feature space (P) is the sum across modalities, crucial for high-dimensionality methods.

- Compute Data Volume: Estimate total storage as Σᵢ (Nᵢ × DataPerSampleᵢ), summed over the omics layers i, then add 30% overhead for intermediate files.

- Assess Scale Category: N >> P (Low-dimension), N ≈ P (High-dimension), N << P (Very High-dimension). This categorization guides method choice (e.g., matrix factorization vs. network-based).
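The four steps of Protocol 1.1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the `layers` inventory, the sample IDs, the per-sample sizes, and the factor-of-ten `ratio` used to separate the three scale categories are all hypothetical placeholders, not values prescribed by the protocol.

```python
# Hypothetical inventory: sample IDs, feature count, and GB per sample for each layer.
layers = {
    "rna_seq": {"samples": {"S1", "S2", "S3", "S4"}, "features": 20000, "gb_per_sample": 2.0},
    "proteomics": {"samples": {"S2", "S3", "S4", "S5"}, "features": 8000, "gb_per_sample": 0.5},
    "metabolomics": {"samples": {"S1", "S2", "S3", "S4", "S5"}, "features": 1000, "gb_per_sample": 1.0},
}


def summarize(layers, overhead=0.30, ratio=10.0):
    """Return cohort size N, feature space P, storage estimate, and scale category."""
    # Step 1: the integrated cohort is the intersection of sample IDs.
    shared = set.intersection(*(layer["samples"] for layer in layers.values()))
    n = len(shared)
    # Step 2: the total feature space is the sum across modalities.
    p = sum(layer["features"] for layer in layers.values())
    # Step 3: storage = sum_i (N_i * data_per_sample_i), plus overhead.
    raw_gb = sum(len(layer["samples"]) * layer["gb_per_sample"] for layer in layers.values())
    # Step 4: scale category; the factor `ratio` is an assumed cut-off for ">>" and "<<".
    if n >= ratio * p:
        category = "N >> P (low-dimension)"
    elif p >= ratio * n:
        category = "N << P (very high-dimension)"
    else:
        category = "N ~ P (high-dimension)"
    return {
        "N": n,
        "P": p,
        "storage_gb": raw_gb * (1 + overhead),
        "category": category,
        "shared": sorted(shared),
    }


summary = summarize(layers)
print(summary)
```

In this toy inventory only three samples are shared across all layers, so the cohort falls into the N << P category even though each individual layer has more samples.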

Data Dimensionality and Sparsity

Dimensionality refers to the number of variables (features) per sample. High-dimensional omics data is often sparse, with many zero or missing values.

Table 2: Dimensionality and Sparsity Profiles by Data Type

| Data Type | Dimensionality | Sparsity Source | Typical Missingness |

|---|---|---|---|

| Bulk RNA-seq | High (~20k features) | Low expression genes | <5% (post-QC) |

| Single-Cell RNA-seq | Very High (~20k x ~10k cells) | Biological dropout & technical zeros | 80-95% (count matrix) |

| Mass Spectrometry Proteomics | Moderate-High (~10k features) | Low-abundance proteins | 20-60% (data-dependent acquisition) |

| Targeted Metabolomics | Low-Moderate (~500 features) | Compounds below LOD | 5-20% |

Protocol 2.1: Quantifying Data Sparsity and Imputation Evaluation

- Load Data Matrix: Input a feature (rows) x sample (columns) count or intensity matrix.

- Calculate Sparsity: Sparsity (%) = (Number of zero or NA values) / (Total entries) * 100.

- Apply Imputation (Comparative): For scRNA-seq, apply multiple imputation methods (e.g., MAGIC, SAVER, scImpute) on a standardized subset.

- Validate: Use a hold-out dataset where 10% of non-zero values are masked. Compute Root Mean Square Error (RMSE) between imputed and true held-out values. The method with the lowest RMSE for your data type should be considered for pre-processing prior to integration.
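The sparsity calculation and the masking-based validation in Protocol 2.1 can be sketched with NumPy. This is an illustration under assumptions: the count matrix is simulated, and `feature_mean_impute` is a deliberately simple stand-in imputer, not MAGIC, SAVER, or scImpute. In practice each candidate method would be plugged in at that step and the RMSE values compared.

```python
import numpy as np

rng = np.random.default_rng(0)


def sparsity_percent(mat):
    """Percentage of entries that are zero or NaN."""
    missing = np.isnan(mat) | (mat == 0)
    return 100.0 * missing.sum() / mat.size


def mask_nonzero(mat, frac=0.10):
    """Zero out a random fraction of the non-zero entries; return the copy and held-out indices."""
    rows, cols = np.nonzero(np.nan_to_num(mat))
    pick = rng.choice(rows.size, size=max(1, int(frac * rows.size)), replace=False)
    masked = mat.copy()
    masked[rows[pick], cols[pick]] = 0
    return masked, (rows[pick], cols[pick])


def feature_mean_impute(mat):
    """Stand-in imputer: fill zeros/NaNs with the mean of the observed values of each feature (row)."""
    out = mat.astype(float).copy()
    for row in out:  # each row is a view into `out`
        gap = (row == 0) | np.isnan(row)
        if (~gap).any():
            row[gap] = row[~gap].mean()
    return out


# Toy features x samples count matrix (simulated, for illustration only).
counts = rng.poisson(0.5, size=(200, 50)).astype(float)
masked, held = mask_nonzero(counts, frac=0.10)
imputed = feature_mean_impute(masked)

# RMSE between imputed and true values at the held-out positions only.
rmse = float(np.sqrt(np.mean((imputed[held] - counts[held]) ** 2)))
print(f"sparsity: {sparsity_percent(counts):.1f}%  masked RMSE: {rmse:.3f}")
```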

Bulk vs. Single-Cell Data Types

The choice between bulk and single-cell profiling defines the fundamental unit of observation and the biological questions addressable through integration.

Table 3: Comparative Analysis: Bulk vs. Single-Cell Omics for Integration

| Attribute | Bulk Omics | Single-Cell Omics |

|---|---|---|

| Measurement Unit | Population average | Individual cell |

| Key Insight | Mean state, aggregated signals | Cellular heterogeneity, rare cell types, trajectories |

| Noise Structure | Technical replication noise | High technical noise (dropouts), biological stochasticity |

| Temporal Resolution | Snapshot of population | Can infer pseudo-temporal ordering |

| Cost per Sample | Lower | Significantly higher |

| Suitable Integration Methods | Early fusion (PCA, CCA), Similarity Network Fusion | Late fusion, Anchor-based (Seurat, Harmony), Deep learning (scVI) |

Protocol 3.1: Experimental Design for Paired Multi-Omic Profiling

- Sample Preparation: Split a single, homogenized tissue sample or cell culture aliquot into multiple technical replicates.

- Parallel Assaying: Process one replicate for each desired omics modality (e.g., RNA-seq, ATAC-seq, Proteomics) in parallel to minimize batch effects.

- Spike-in Controls: Use exogenous spike-in standards (e.g., ERCC RNA spikes, stable isotope-labeled peptide/protein standards) for technical normalization across platforms.

- Common Reference: Include a shared reference sample (e.g., commercially available universal cell line) across all experimental batches and platforms for cross-batch alignment.

- Metadata Annotation: Document precise sample handling, lysis conditions, and library prep kits for each modality to inform covariate adjustment during integration.

Pathway to Method Selection: A Decision Framework

The assessment of scale, dimensionality, and data type directly informs the algorithmic approach for integration.

Diagram 1: Decision Framework for Multi-Omics Integration Method Selection.

The Scientist's Toolkit: Key Reagents & Platforms

Table 4: Essential Research Reagent Solutions for Multi-Omic Profiling

| Reagent / Kit / Platform | Primary Function | Key Consideration for Integration |

|---|---|---|

| 10x Genomics Chromium | Partitioning cells for single-cell RNA/ATAC/multiome libraries. | Enables paired single-cell multi-omics from the same cell, reducing alignment ambiguity. |

| BD Rhapsody | Capturing single cells with bead-based mRNA/AbOligo tags. | Allows targeted mRNA and protein (AbSeq) measurement from same cell, linking transcriptome and proteome. |

| C1 Single-Cell System (Fluidigm) | Microfluidic capture for single-cell full-length RNA-seq. | Provides superior transcript coverage, reducing sparsity for more robust per-cell integration. |

| TMT / iTRAQ Reagents | Isobaric chemical tags for multiplexed MS-based proteomics. | Enables precise, multiplexed quantitation across many samples, crucial for matched bulk multi-omics cohorts. |

| ERCC RNA Spike-In Mix | Exogenous RNA controls of known concentration. | Allows technical noise modeling and cross-platform normalization between RNA-seq batches. |

| Cell Hashing Antibodies | Antibody-oligonucleotide conjugates for sample multiplexing. | Enables pooling of samples pre-scRNA-seq, reducing batch effects—the primary confounder in integration. |

| Nuclei Isolation Kits (e.g., from MilliporeSigma) | Isolation of intact nuclei from complex tissues. | Enables joint profiling of transcriptome (snRNA-seq) and epigenome (snATAC-seq) from the same biological source. |

| DMSO or Cryopreservation Media | Long-term viability storage of single-cell suspensions. | Allows identical aliquots of cells to be run on different omics platforms over time, enabling true bulk multi-omics. |

The Methodologist's Toolbox: A Taxonomy of Modern Multi-Omics Integration Strategies

When choosing a multi-omics integration method, the stage at which disparate data types are integrated (early versus late fusion) is a fundamental architectural decision. This section dissects both paradigms to help researchers and drug development professionals select an appropriate integration strategy for their multi-omics investigations.

Core Paradigms: Definitions and Conceptual Frameworks

Early Fusion (Data-Level Integration): Raw or pre-processed data from multiple omics layers (e.g., genomics, transcriptomics, proteomics) are concatenated into a single, multi-dimensional feature matrix before being input into a downstream model.

Late Fusion (Decision-Level Integration): Each omics data type is modeled independently. The resulting predictions, embeddings, or statistical outputs are then integrated at the final decision stage.

The choice between these approaches hinges on data heterogeneity, sample size, computational resources, and the specific biological question.

Quantitative Comparison of Performance and Characteristics

Recent benchmarking studies (2023-2024) indicate the following comparative profiles:

Table 1: Comparative Analysis of Early vs. Late Fusion

| Aspect | Early Fusion | Late Fusion |

|---|---|---|

| Typical Accuracy | Higher in data-rich, homogeneous scenarios (e.g., ~85% AUC in cancer subtyping with matched samples) | More robust with missing data or high heterogeneity (e.g., ~82% AUC in similar tasks) |

| Data Requirements | Requires complete, matched samples across all omics. Sensitive to missing data. | Can handle unmatched samples and missing modalities. |

| Model Complexity | Single, often complex model (e.g., deep neural network). Risk of overfitting. | Multiple simpler models, reducing per-model complexity. |

| Interpretability | Challenging; interactions are learned implicitly within a black box. | Higher; modality-specific models are easier to interpret, fusion is explicit. |

| Computational Load | High during training (large feature space). Inference is straightforward. | Distributed; training can be parallelized. Fusion step is lightweight. |

| Key Strength | Captures cross-modal correlations and interactions at the finest granularity. | Flexibility and robustness to real-world data challenges. |

Table 2: Suitability Guide Based on Research Context

| Research Context | Recommended Paradigm | Rationale |

|---|---|---|

| Discovery of novel cross-omics biomarkers | Early Fusion | Enables the model to detect complex, non-linear feature interactions across modalities. |

| Integrating legacy datasets with missing modalities | Late Fusion | Independent models can be trained on available data; only shared samples needed for final fusion. |

| Real-time clinical prediction with evolving data types | Late Fusion | New omics models can be added without retraining the entire system. |

| Small sample size (n < 100) | Late Fusion (or intermediate) | Reduces risk of overfitting compared to a high-dimensional early fusion model. |

Experimental Protocols for Benchmarking Integration Stages

Protocol 1: Benchmarking Framework for Multi-Omic Integration

- Objective: Empirically compare early and late fusion performance on a specific task (e.g., patient survival prediction).

- Input Data: Matched multi-omics data (e.g., RNA-Seq, DNA methylation, RPPA) from a source like TCGA.

- Preprocessing: Per-modality normalization and feature selection (e.g., the top 1,000 most variable genes, the most variable CpG sites).

- Early Fusion Pipeline:

  - Concatenate selected features from all modalities into a unified matrix (samples x total_features).

  - Apply dimensionality reduction (e.g., PCA, UMAP) or use a regularization method (e.g., Lasso, Elastic Net).

  - Train a single supervised model (e.g., Cox model, Random Forest) on the reduced/regularized features.

  - Perform cross-validation and evaluate using C-index or AUC.

- Late Fusion Pipeline:

  - Train a separate predictive model for each omics modality.

  - Extract prediction scores (e.g., risk scores) or latent representations (e.g., first principal component) from each model.

  - Concatenate these decision-level features into a meta-feature vector.

  - Train a "meta-learner" (e.g., a simple linear model) on these combined outputs to make the final prediction.

  - Evaluate using the same metric as above.

- Analysis: Compare performance, robustness to noise, and interpretability of outputs.
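The two pipelines in Protocol 1 can be compared on toy data with a short NumPy sketch. Several assumptions apply: the two modalities and the binary outcome are simulated, a closed-form ridge classifier stands in for the Cox or Random Forest models named above, and accuracy replaces C-index or AUC to keep the example short. All function names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200
y = rng.integers(0, 2, size=n)   # toy binary outcome
signal = (2 * y - 1)[:, None]    # +1 / -1 per sample

# Two hypothetical modalities: five informative features each, the rest pure noise.
X_rna = np.hstack([0.8 * signal + rng.normal(size=(n, 5)), rng.normal(size=(n, 45))])
X_meth = np.hstack([0.5 * signal + rng.normal(size=(n, 5)), rng.normal(size=(n, 25))])


def ridge_fit(X, t, lam):
    """Closed-form ridge regression on +1/-1 targets (intercept column included)."""
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])
    return np.linalg.solve(Xb.T @ Xb + lam * np.eye(Xb.shape[1]), Xb.T @ t)


def ridge_score(X, w):
    return np.hstack([X, np.ones((X.shape[0], 1))]) @ w


def cv_scores(X, y, folds, lam=10.0):
    """Out-of-fold decision scores: no sample is scored by a model trained on it."""
    t = 2.0 * y - 1.0
    out = np.zeros(len(y))
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        out[test] = ridge_score(X[test], ridge_fit(X[train], t[train], lam))
    return out


def accuracy(scores, y):
    return float(np.mean((scores > 0).astype(int) == y))


folds = np.array_split(rng.permutation(n), 5)

# Early fusion: concatenate features, then train a single model.
early = cv_scores(np.hstack([X_rna, X_meth]), y, folds)

# Late fusion: one model per modality, then a meta-learner on their out-of-fold scores.
meta_features = np.column_stack([cv_scores(X_rna, y, folds), cv_scores(X_meth, y, folds)])
late = cv_scores(meta_features, y, folds, lam=1.0)

print(f"early fusion accuracy: {accuracy(early, y):.2f}")
print(f"late fusion accuracy:  {accuracy(late, y):.2f}")
```

Because the meta-learner is evaluated on the same folds that produced its inputs, the late-fusion estimate here is slightly optimistic; a nested cross-validation would be the stricter design for a real benchmark.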

Visualizing Integration Workflows and Logical Relationships

Diagram 1: Early vs. Late Fusion Workflow Comparison

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagents and Computational Tools for Multi-Omics Integration

| Item / Solution | Function / Purpose | Example (Non-exhaustive) |

|---|---|---|

| Multi-Omic Reference Datasets | Provide matched, clinically annotated data for method development and benchmarking. | TCGA (The Cancer Genome Atlas), CPTAC (Clinical Proteomic Tumor Analysis Consortium) |

| Batch Effect Correction Tools | Correct for non-biological technical variation between omics assay batches, critical for early fusion. | ComBat (in sva R package), Harmony, limma's removeBatchEffect |

| Imputation Libraries | Handle missing data values, often a prerequisite for early fusion. | scikit-learn IterativeImputer, MissForest (R), deep learning imputers (e.g., scVI for single-cell) |

| Multi-View Learning Packages | Provide implemented algorithms for both early and late fusion strategies. | mvlearn (Python), MOFA2 (R, for factor analysis), SnapATAC2 (for multi-omic single-cell) |

| Meta-Learner Algorithms | Simple models used to combine predictions in late fusion pipelines. | Logistic Regression, Linear Discriminant Analysis, Ensemble methods (Voting Classifier) |

| Containerization Software | Ensure computational reproducibility of complex, multi-step integration pipelines. | Docker, Singularity/Apptainer |

| High-Performance Computing (HPC) / Cloud Credits | Provide necessary computational resources for training large early fusion models or many late fusion models. | AWS, Google Cloud, Azure, institutional HPC clusters |

The decision between early and late fusion is not a quest for a universally superior method, but a strategic alignment of the integration stage with the research problem's constraints and goals. Early fusion is powerful for discovering intricate, cross-modal signals in complete datasets, while late fusion offers pragmatic robustness for heterogeneous, real-world data. A systematic evaluation using the provided frameworks and tools, grounded in the specific thesis of multi-omics method selection, is paramount for developing predictive, interpretable, and biologically insightful integrated models.

This whitepaper, framed within the context of a broader thesis on selecting multi-omics integration methods, provides an in-depth technical guide to three foundational matrix factorization and dimensionality reduction techniques: Principal Component Analysis (PCA), Canonical Correlation Analysis (CCA), and Multi-Omics Factor Analysis v2 (MOFA+). For researchers, scientists, and drug development professionals, understanding the mathematical underpinnings, applications, and practical protocols of these methods is critical for informed method selection in integrative multi-omics studies.

Technical Foundations

Principal Component Analysis (PCA)

PCA is an unsupervised linear dimensionality reduction technique. Given a centered data matrix X (n samples × p features), PCA seeks orthogonal directions of maximum variance via an eigen-decomposition of the covariance matrix C = (1/(n-1))XᵀX. The principal components (PCs) are derived by solving Cv = λv, where v are the eigenvectors (loadings) and λ the eigenvalues (explained variances). The low-dimensional representation is Z = XV, where V contains the top k eigenvectors.

Core Use Case: Unsupervised exploration of a single high-dimensional omics data set.
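The eigen-decomposition described above can be written out directly in NumPy. This is a minimal sketch for intuition; the function name `pca_eig` and the simulated matrix are illustrative, and production analyses would normally use an SVD-based routine such as `prcomp()` or `sklearn.decomposition.PCA`.

```python
import numpy as np


def pca_eig(X, k):
    """PCA via eigen-decomposition of the covariance matrix C = X^T X / (n - 1)."""
    Xc = X - X.mean(axis=0)                  # center each feature
    C = Xc.T @ Xc / (Xc.shape[0] - 1)        # p x p covariance matrix
    eigvals, eigvecs = np.linalg.eigh(C)     # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:k]    # indices of the top-k eigenvalues
    V = eigvecs[:, order]                    # loadings (p x k)
    Z = Xc @ V                               # scores (n x k)
    return Z, V, eigvals[order]


rng = np.random.default_rng(0)
# Toy data: 100 samples x 6 features with unequal feature variances.
X = rng.normal(size=(100, 6)) * np.array([5.0, 3.0, 1.0, 1.0, 1.0, 1.0])
Z, V, lam = pca_eig(X, k=3)
print("explained variance ratio:", np.round(lam / np.trace(np.cov(X, rowvar=False)), 3))
```

The variance of each score column in Z equals the corresponding eigenvalue, which is why the eigenvalues can be read as explained variances.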

Canonical Correlation Analysis (CCA)

CCA is an unsupervised method for finding linear relationships between two sets of variables measured on the same samples. Given two centered matrices X₁ (n × p₁) and X₂ (n × p₂), CCA finds projection vectors w₁ and w₂ that maximize the correlation corr(X₁w₁, X₂w₂). This is solved via a generalized eigenvalue problem derived from the within-set and cross-set covariance matrices. Sparse CCA (sCCA) variants incorporate L1 penalties (e.g., the penalized matrix decomposition implemented in the PMA R package) to handle high-dimensional data (p >> n) by promoting sparsity in the loadings.

Core Use Case: Identifying shared patterns of variation between two matched omics data sets.
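For intuition, classical (non-sparse) CCA can be sketched in NumPy by whitening each view and taking an SVD of the whitened cross-covariance, which is one standard way of solving the eigenvalue problem described above. This sketch assumes n > p in both views; the small ridge term `reg`, the function names, and the simulated data are illustrative assumptions, and it does not implement the L1-penalized variants.

```python
import numpy as np


def inv_sqrt(C):
    """Inverse square root of a symmetric positive-definite matrix."""
    vals, vecs = np.linalg.eigh(C)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T


def cca(X1, X2, reg=1e-6):
    """Return projection matrices W1, W2 and canonical correlations (descending)."""
    X1 = X1 - X1.mean(axis=0)
    X2 = X2 - X2.mean(axis=0)
    n = X1.shape[0]
    C11 = X1.T @ X1 / (n - 1) + reg * np.eye(X1.shape[1])   # within-set covariance, view 1
    C22 = X2.T @ X2 / (n - 1) + reg * np.eye(X2.shape[1])   # within-set covariance, view 2
    C12 = X1.T @ X2 / (n - 1)                               # cross-set covariance
    A, B = inv_sqrt(C11), inv_sqrt(C22)
    U, s, Vt = np.linalg.svd(A @ C12 @ B, full_matrices=False)
    return A @ U, B @ Vt.T, s


rng = np.random.default_rng(0)
z = rng.normal(size=300)  # shared latent signal (simulated)
X1 = z[:, None] * np.array([1.0, 1.0, 0.0, 0.0]) + 0.5 * rng.normal(size=(300, 4))
X2 = z[:, None] * np.array([1.0, 0.0, 1.0]) + 0.5 * rng.normal(size=(300, 3))

W1, W2, rho = cca(X1, X2)
u = (X1 - X1.mean(axis=0)) @ W1[:, 0]   # first canonical variate, view 1
v = (X2 - X2.mean(axis=0)) @ W2[:, 0]   # first canonical variate, view 2
print("first canonical correlation:", round(float(rho[0]), 3))
```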

Multi-Omics Factor Analysis v2 (MOFA+)

MOFA+ is a Bayesian group factor analysis framework that generalizes PCA and CCA. It models multiple (m) omics data matrices {X¹, ..., Xᵐ} as linear functions of a shared low-dimensional latent space Z (n × k). The model is: Xᵐ = Z(Wᵐ)ᵀ + Εᵐ, where Wᵐ are view-specific loadings and Εᵐ is Gaussian noise. It uses variational inference for scalable parameter estimation. Key advantages include handling of missing values, different data types (continuous, binary, counts), and quantification of variance explained per factor per view.

Core Use Case: Unsupervised integration of multiple (≥2) omics data sets with complex experimental designs.
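The generative model Xᵐ = Z(Wᵐ)ᵀ + Εᵐ and the idea of variance explained per factor per view can be illustrated with simulated data. This sketch does not run MOFA+ inference: it uses the true Z and Wᵐ that generated the data, and the view names, dimensions, and noise level are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
n, k = 100, 3
dims = {"mRNA": 50, "methylation": 40}   # hypothetical view names and feature counts

Z = rng.normal(size=(n, k))
Z -= Z.mean(axis=0)                       # centered latent factors (n x k)
W = {m: rng.normal(size=(d, k)) for m, d in dims.items()}
W["methylation"][:, 2] = 0.0              # the third factor is inactive in this view

# Each view is a linear function of the shared factors plus Gaussian noise.
X = {m: Z @ W[m].T + 0.5 * rng.normal(size=(n, d)) for m, d in dims.items()}


def r2_per_factor(Xm, Z, Wm):
    """Fraction of a view's total variance reconstructed by each factor alone."""
    Xc = Xm - Xm.mean(axis=0)
    total = np.sum(Xc ** 2)
    return np.array([
        1.0 - np.sum((Xc - np.outer(Z[:, j], Wm[:, j])) ** 2) / total
        for j in range(Z.shape[1])
    ])


r2 = {m: r2_per_factor(X[m], Z, W[m]) for m in dims}
for m, values in r2.items():
    print(m, np.round(values, 3))
```

A factor with zero weights in a view explains none of that view's variance, which is how a variance decomposition of this kind separates shared factors from view-specific ones.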

Comparative Analysis

The following table summarizes the quantitative and functional characteristics of the three methods, critical for selection in a multi-omics integration pipeline.

Table 1: Core Method Comparison for Multi-Omics Integration

| Feature | PCA | (Sparse) CCA | MOFA+ |

|---|---|---|---|

| Statistical Goal | Maximize variance in single view | Maximize correlation between two views | Capture shared & specific variance across multiple views |

| # of Data Views | 1 | 2 (classic), ≥2 (extensions) | ≥2 (native) |

| Supervision | Unsupervised | Unsupervised (requires paired samples across views) | Unsupervised |

| Sparsity | No (dense loadings) | Yes (enforced via penalty) | Yes (via ARD priors) |

| Handles p >> n | No (requires pre-filtering) | Yes (via sparsity) | Yes |

| Data Types | Continuous, normalized | Continuous | Continuous, binary, count |

| Missing Data | Not natively | Not natively | Yes (model-based imputation) |

| Variance Decomposition | Per PC in single view | Correlation per factor | Per factor per view |

| Key Output | Loadings (V), Scores (Z) | Canonical vectors (w₁, w₂), Correlations | Latent factors (Z), Weights (Wᵐ), Variance explained |

Experimental Protocols for Method Evaluation

Protocol: Benchmarking Integration Performance

This protocol evaluates the ability of PCA, sCCA, and MOFA+ to recover biologically meaningful signals.

- Data Simulation: Generate simulated multi-omics data with known ground truth factors using, for example, the make_example_data() function in the MOFA2 R package or custom scripts. Introduce noise and missing values at controlled levels.

- Method Application:

  - PCA: Apply to each omics layer separately. Use prcomp() in R or sklearn.decomposition.PCA in Python.

  - sCCA: Apply to each pair of omics layers using the PMA or mixOmics R package. Tune sparsity parameters via cross-validation.

  - MOFA+: Train model using the MOFA2 R/Python package. Specify data likelihoods (Gaussian, Poisson, Bernoulli) appropriately. Determine optimal number of factors via automatic relevance determination (ARD) and ELBO convergence.

- Performance Metrics: Calculate recovery of ground truth latent factors (using correlation metrics), clustering accuracy of samples in latent space (adjusted Rand index), and computational runtime.
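Two of the metrics named in this protocol can be sketched in NumPy. Both functions are illustrative implementations under assumptions: factor recovery is scored as the best absolute Pearson correlation between each true factor and any inferred factor (factors are only identifiable up to sign and order), and the adjusted Rand index follows the standard contingency-table formula without handling the degenerate single-cluster case.

```python
import numpy as np


def factor_recovery(Z_true, Z_hat):
    """For each true factor, the highest |Pearson r| with any inferred factor."""
    k = Z_true.shape[1]
    corr = np.corrcoef(Z_true.T, Z_hat.T)[:k, k:]   # true-vs-inferred block
    return np.abs(corr).max(axis=1)


def adjusted_rand_index(a, b):
    """Adjusted Rand index between two label vectors."""
    _, ai = np.unique(np.asarray(a), return_inverse=True)
    _, bi = np.unique(np.asarray(b), return_inverse=True)
    table = np.zeros((ai.max() + 1, bi.max() + 1), dtype=np.int64)
    np.add.at(table, (ai, bi), 1)                   # contingency table

    def comb2(x):
        return x * (x - 1) / 2.0                    # "x choose 2", elementwise

    sum_ij = comb2(table).sum()
    sum_a = comb2(table.sum(axis=1)).sum()
    sum_b = comb2(table.sum(axis=0)).sum()
    expected = sum_a * sum_b / comb2(len(ai))
    max_index = 0.5 * (sum_a + sum_b)
    return float((sum_ij - expected) / (max_index - expected))


rng = np.random.default_rng(0)
Z_true = rng.normal(size=(100, 3))
# Simulated "inferred" factors: permuted, sign-flipped, and noisy copies of the truth.
Z_hat = -Z_true[:, [2, 0, 1]] + 0.1 * rng.normal(size=(100, 3))

recovery = factor_recovery(Z_true, Z_hat)
print("factor recovery:", np.round(recovery, 3))
print("ARI, identical partitions:", adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0]))
```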

Protocol: Analysis of a Public Multi-Omics Cancer Dataset (e.g., TCGA)

A practical workflow for real-world data integration.

- Data Acquisition & Preprocessing:

  - Download matched mRNA expression, DNA methylation, and miRNA data from the Genomic Data Commons for a specific cancer cohort (e.g., TCGA-BRCA).

  - Preprocess each layer: log2-transform mRNA, M-value transform methylation, and normalize miRNA counts. Perform feature selection (e.g., top 5000 most variable features per layer).

  - Format data into matrices with matched samples (n) as rows.

- Dimensionality Reduction & Integration:

  - Apply PCA individually to each preprocessed matrix.

  - Apply sCCA pairwise (e.g., mRNA vs. methylation, mRNA vs. miRNA).

  - Apply MOFA+ to all three matrices simultaneously.

- Downstream Analysis: Associate latent factors from each method with clinical annotations (e.g., survival, tumor stage) using Cox regression or ANOVA. Perform pathway enrichment on high-loading features for interpretable factors.

Visual Guides

PCA Algorithm Flow

Multi-Omics Integration Strategy Map

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Multi-Omics Factorization

| Item (Software/Package) | Primary Function | Key Application Note |

|---|---|---|

| R stats package (prcomp) | Implements core PCA algorithm. | Fast SVD-based PCA. Essential for baseline single-view analysis. |

| R mixOmics package | Provides regularized CCA (rCCA), sparse PLS, and DIABLO for supervised integration of multiple views. | Useful for pairwise or outcome-guided integration with feature selection. |

| R/Python MOFA2 package | Implements the MOFA+ model. | Primary tool for flexible, unsupervised integration of multiple data types. |

| Bioconductor MultiAssayExperiment | Data structure for coordinated multi-omics data. | Container for matched samples across assays, ensuring data integrity. |

| R ggplot2 / Python seaborn | High-quality visualization of latent spaces, loadings, variance. | Creates publication-ready figures for factor interpretation. |

| High-Performance Computing (HPC) Cluster | Parallel processing for large-scale data and model training. | Required for genome-scale sCCA or MOFA+ on large cohorts (n>1000). |

| R PMA (Penalized Multivariate Analysis) | Implements the penalized matrix decomposition for sparse CCA/PCA. | Useful for specific penalty formulations in two-view integration. |

| Simulation Utilities (e.g., make_example_data() in MOFA2) | Generate synthetic multi-omics data with known structure. | Validates method performance and powers benchmark studies. |

Within the comprehensive thesis on How to choose a multi-omics integration method, a critical decision point arises when dealing with high-dimensional data from single or multiple sources where the underlying biological structure is assumed to be modular and governed by networks. Similarity-based network approaches provide a powerful framework for this context. Two seminal methodologies are Weighted Gene Co-expression Network Analysis (WGCNA) for single-omics studies and Similarity Network Fusion (SNF) for multi-omics integration. This guide details their core principles, protocols, and applications in biomedical research.

Core Methodologies and Comparative Framework

Weighted Gene Co-expression Network Analysis (WGCNA)

WGCNA constructs a signed or unsigned network from a single-omics data matrix (e.g., gene expression). Its power lies in using a soft-thresholding power (β) to emphasize strong correlations and downweight weak ones, adhering to scale-free topology principles. Key steps include:

- Similarity Calculation: Compute a matrix of pairwise correlations (e.g., Pearson) between all features (genes).

- Adjacency Matrix Formation: Transform the similarity matrix using a power function: \( a_{ij} = |\mathrm{cor}(x_i, x_j)|^{\beta} \).

- Topological Overlap Matrix (TOM): Calculate TOM to measure network interconnectedness, reducing noise and spurious connections.

- Module Detection: Use hierarchical clustering on the TOM-based dissimilarity to identify modules (clusters) of highly interconnected genes.

- Module-Trait Association: Relate module eigengenes (first principal component of a module) to external sample traits to identify biologically relevant modules.
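The adjacency, topological overlap, and eigengene steps can be sketched in NumPy. This is an illustration of the unsigned formulation, not the WGCNA package itself: the function names and simulated expression data are assumptions, and the hierarchical clustering step for module detection is omitted.

```python
import numpy as np


def adjacency_unsigned(expr, beta=6):
    """expr: samples x genes. a_ij = |cor(x_i, x_j)|^beta, with a zero diagonal."""
    cor = np.corrcoef(expr, rowvar=False)
    adj = np.abs(cor) ** beta
    np.fill_diagonal(adj, 0.0)
    return adj


def tom_similarity(adj):
    """TOM_ij = (sum_u a_iu a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    shared = adj @ adj          # shared-neighbour term; the zero diagonal excludes u = i, j
    k = adj.sum(axis=1)         # connectivity of each gene
    tom = (shared + adj) / (np.minimum.outer(k, k) + 1.0 - adj)
    np.fill_diagonal(tom, 1.0)
    return tom


def module_eigengene(expr_module):
    """First principal component of the standardized module expression (one value per sample)."""
    Xs = (expr_module - expr_module.mean(axis=0)) / expr_module.std(axis=0, ddof=1)
    U, _, _ = np.linalg.svd(Xs, full_matrices=False)
    eig = U[:, 0]
    # Orient the eigengene to correlate positively with mean module expression.
    return eig if np.corrcoef(eig, Xs.mean(axis=1))[0, 1] >= 0 else -eig


rng = np.random.default_rng(0)
z_a, z_b = rng.normal(size=(2, 60))   # two simulated module drivers across 60 samples
expr = np.hstack([
    z_a[:, None] + 0.3 * rng.normal(size=(60, 5)),   # genes 0-4: module A
    z_b[:, None] + 0.3 * rng.normal(size=(60, 5)),   # genes 5-9: module B
])

adj = adjacency_unsigned(expr, beta=6)
tom = tom_similarity(adj)
eigengene_a = module_eigengene(expr[:, :5])
print("TOM within module:", round(float(tom[0, 1]), 3), " across modules:", round(float(tom[0, 5]), 3))
```

The dissimilarity 1 - TOM would then be passed to hierarchical clustering to cut modules, and each eigengene correlated with sample traits.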

Similarity Network Fusion (SNF)

SNF integrates multiple data types (e.g., mRNA, miRNA, methylation) from the same set of samples. It creates separate sample similarity networks for each data type and then iteratively fuses them into a single, robust network that captures shared biological information.

- Patient Similarity Networks: For each omics data type, construct a sample-to-sample similarity matrix (typically using Euclidean distance and a scaled exponential kernel).

- Normalized Similarity Matrices: Create two matrices per view: a full normalized similarity matrix (P) that carries the information being propagated, and a sparse K-nearest neighbors matrix (S) that captures local relationships and drives the diffusion.

- Iterative Fusion: Networks are updated iteratively by diffusing information from each data type through the others: \( P^{(v)} = S^{(v)} \times \left(\frac{\sum_{k\neq v} P^{(k)}}{m-1}\right) \times (S^{(v)})^T \), where v is the data type and m is the total number of types.

- Clustering: Apply spectral clustering to the final fused network to identify patient subtypes.
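The kernel construction and fusion update can be sketched compactly in NumPy. This sketch follows the published algorithm in outline only and is not the SNFtool or snfpy implementation. In the code, P denotes the full normalized kernel and S the sparse K-nearest-neighbour kernel; the function names, the toy data, and the small K are illustrative assumptions.

```python
import numpy as np


def affinity(X, K=5, mu=0.5):
    """Scaled exponential kernel on pairwise Euclidean distances (X: samples x features)."""
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=2))
    knn_mean = np.sort(D, axis=1)[:, 1:K + 1].mean(axis=1)   # skip the self-distance
    eps = (knn_mean[:, None] + knn_mean[None, :] + D) / 3.0  # local scaling factor
    return np.exp(-D ** 2 / (mu * eps))


def full_kernel(W):
    """P: off-diagonal entries normalized to sum to 1/2 per row, with 1/2 on the diagonal."""
    off = W - np.diag(np.diag(W))
    P = off / (2.0 * off.sum(axis=1, keepdims=True))
    np.fill_diagonal(P, 0.5)
    return P


def local_kernel(W, K=5):
    """S: keep each sample's K strongest neighbours, then row-normalize."""
    off = W - np.diag(np.diag(W))
    idx = np.argsort(-off, axis=1)[:, :K]
    rows = np.arange(W.shape[0])[:, None]
    S = np.zeros_like(W)
    S[rows, idx] = off[rows, idx]
    return S / S.sum(axis=1, keepdims=True)


def snf(views, K=5, mu=0.5, t=20):
    """Fuse the sample-similarity networks of several views into one matrix."""
    Ws = [affinity(X, K, mu) for X in views]
    P = [full_kernel(W) for W in Ws]
    S = [local_kernel(W, K) for W in Ws]
    m = len(views)
    for _ in range(t):
        # P^(v) <- S^(v) x mean of the other views' P x S^(v)^T, then renormalize.
        P = [
            full_kernel(S[v] @ (sum(P[k] for k in range(m) if k != v) / (m - 1)) @ S[v].T)
            for v in range(m)
        ]
    fused = sum(P) / m
    return (fused + fused.T) / 2.0


rng = np.random.default_rng(0)
labels = np.repeat([0, 1], 15)                        # two simulated patient groups
shift = (2 * labels - 1)[:, None]
view1 = 2.0 * shift + rng.normal(size=(30, 10))       # e.g., an expression-like view
view2 = 1.5 * shift + rng.normal(size=(30, 8))        # e.g., a methylation-like view

fused = snf([view1, view2], K=5, mu=0.5, t=20)
same = labels[:, None] == labels[None, :]
off_diag = ~np.eye(30, dtype=bool)
within = float(fused[same & off_diag].mean())
between = float(fused[~same].mean())
print(f"mean fused similarity within groups: {within:.4f}, between groups: {between:.4f}")
```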

Table 1: Core Algorithmic Comparison: WGCNA vs. SNF

| Feature | WGCNA | Similarity Network Fusion (SNF) |

|---|---|---|

| Primary Design | Single-omics feature network (gene-gene) | Multi-omics sample network (patient-patient) |

| Core Similarity Metric | Pearson/Spearman correlation (feature-feature) | Euclidean distance → exponential kernel (sample-sample) |

| Key Matrix | Topological Overlap Matrix (TOM) | Fused patient similarity network |

| Network Type | Weighted, undirected | Weighted, undirected |

| Main Output | Modules of correlated features (genes) | Integrated patient subgroups/clusters |

| Typical Application | Gene module discovery, hub gene identification, trait association | Patient stratification, integrative subtyping, survival analysis |

Experimental Protocols

Protocol for a Standard WGCNA Analysis (RNA-seq)

Input: Normalized gene expression matrix (genes x samples).

- Data Preparation: Filter lowly expressed genes. Check for outlier samples via hierarchical clustering.

- Soft-Threshold Selection: Choose the lowest power (β) at which the scale-free topology fit index (R²) plateaus above a chosen threshold (e.g., 0.85). Selected powers typically fall between 3 and 20.

- Network Construction & Module Detection:

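```r
library(WGCNA)

# datExpr: samples in rows, genes in columns; softPower: β from the previous step
adj <- adjacency(datExpr, power = softPower, type = "signed")
TOM <- TOMsimilarity(adj)
dissTOM <- 1 - TOM

# Cluster genes on TOM-based dissimilarity, then cut the tree into modules
geneTree <- hclust(as.dist(dissTOM), method = "average")
dynamicMods <- cutreeDynamic(dendro = geneTree, distM = dissTOM,
                             deepSplit = 2, pamRespectsDendro = FALSE,
                             minClusterSize = 30)
```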

- Module-Trait Correlation: Calculate module eigengenes and correlate with clinical traits. Visualize as a heatmap.

- Downstream Analysis: Extract genes in significant modules for pathway enrichment (e.g., GO, KEGG). Identify intramodular hub genes (high module membership).

Protocol for SNF on Multi-omics Data (mRNA + Methylation)

Input: Normalized matrices for mRNA expression and DNA methylation (samples x features) from the same cohort.

- Data Standardization: Z-score normalize features within each data type.

- Similarity Network Construction (per data type):

  - Calculate pairwise Euclidean distance between samples: \( D_{ij} = \sqrt{\sum_{k}(x_{ik} - x_{jk})^2} \)

  - Convert to similarity using a scaled exponential kernel: \( W_{ij} = \exp\left(-\frac{D_{ij}^2}{\mu \epsilon_{ij}}\right) \), where μ is a hyperparameter and ε_ij is a local scaling factor derived from the distances of samples i and j to their nearest neighbors.

  - For each view v, construct the full normalized matrix P^(v) and the sparse KNN matrix S^(v). Typical K = 20-30.

- Network Fusion:

  - Initialize P^(1) and P^(2) from the normalized similarity matrices.

  - Iterate until convergence (t ≈ 20): update each P^(v) by fusing with the others using the diffusion equation.

- Clustering on Fused Network:

  - Apply spectral clustering on the final fused matrix to obtain patient cluster labels.

- Validation: Assess clusters via survival analysis (Kaplan-Meier log-rank test) and differential expression/ methylation between clusters.
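The clustering step can be illustrated for the simplest case of two subtypes, where spectral clustering reduces to thresholding the second eigenvector of the normalized graph Laplacian. This is a sketch under assumptions: the similarity matrix is a simulated stand-in for a fused network, and the function name is illustrative. For more than two clusters, the usual approach is k-means on the leading eigenvectors, as provided by the spectral clustering routines in SNFtool or scikit-learn.

```python
import numpy as np


def spectral_bipartition(W):
    """Split samples into two groups using the Fiedler vector of the normalized Laplacian."""
    d = W.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(d)
    # Symmetric normalized Laplacian: L = I - D^{-1/2} W D^{-1/2}
    L = np.eye(len(W)) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    _, vecs = np.linalg.eigh(L)           # eigenvalues in ascending order
    fiedler = vecs[:, 1] * d_inv_sqrt     # second-smallest eigenvector, degree-rescaled
    return (fiedler > 0).astype(int)


rng = np.random.default_rng(0)
truth = np.repeat([0, 1], 10)             # simulated ground-truth subtypes
# Toy stand-in for a fused network: strong within-group, weak between-group similarity.
W = np.where(truth[:, None] == truth[None, :], 0.9, 0.1)
noise = rng.uniform(0.0, 0.05, size=W.shape)
W = W + (noise + noise.T) / 2.0           # keep the matrix symmetric

cluster_labels = spectral_bipartition(W)
print(cluster_labels)
```

The resulting labels would then feed the validation step, for example a log-rank test on survival curves stratified by cluster.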

Table 2: Typical Hyperparameter Values in SNF

| Parameter | Common Range/Value | Description |

|---|---|---|

| K (Number of Neighbors) | 20 - 30 | Controls sparsity of local affinity matrices. Higher K increases connectivity. |

| μ (Hyperparameter in Kernel) | 0.3 - 0.8 | Normalizes distance scales. Often set empirically. |

| Iteration Number (t) | 10 - 25 | Usually converges within 20 iterations. |

| alpha (SNFtool naming) | Typically 0.5 | Name used for the kernel hyperparameter μ in the SNFtool examples; it is the same quantity as the μ row above. |

Visualization of Workflows and Relationships

WGCNA Gene Module Discovery Workflow

SNF Multi-Omics Integration Workflow

Network Method Selection in Multi-Omics Thesis

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Computational Tools & Packages

| Tool/Package | Primary Function | Application Context |

|---|---|---|

| R WGCNA | Implements the entire WGCNA pipeline. | Constructing signed/unsigned co-expression networks, module detection, and trait association in R. |

| R SNFtool / Python snfpy | Provides functions for Similarity Network Fusion. | Performing SNF integration and spectral clustering in R or Python environments. |

| dynamicTreeCut (R) | Dynamic branch cutting for hierarchical clustering. | Identifying clusters (modules) in dendrograms produced by WGCNA. |

| impute (R) | Imputation of missing data (e.g., KNN impute). | Preprocessing omics data before WGCNA/SNF to handle missing values. |

| cluster / sklearn | Spectral clustering and other algorithms. | Clustering the fused matrix from SNF or performing alternative analyses. |

| igraph / networkx | General network analysis and visualization. | Advanced network manipulation, visualization, and calculation of graph properties post-WGCNA. |

| survival (R) | Survival analysis. | Validating patient subtypes from SNF using Kaplan-Meier and Cox models. |

Selecting an appropriate multi-omics integration method is a critical challenge in systems biology and precision medicine. The choice hinges on the biological question, data characteristics (scale, noise, heterogeneity), and desired output (molecular classification, biomarker discovery, causal inference). This guide provides an in-depth technical examination of two pivotal algorithmic families—ensemble methods like Random Forests and neural architectures like Autoencoders—within this thesis context. Their application ranges from early-stage feature selection and data reduction to constructing integrated, low-dimensional representations of complex genomic, transcriptomic, proteomic, and metabolomic data.

Core Algorithmic Foundations

Random Forests: Ensemble-Based Feature Selection & Classification

Random Forests (RF) are an ensemble learning method that operates by constructing a multitude of decision trees during training. For multi-omics, RF is primarily used for feature selection (identifying key biomarkers across omics layers) and classification (e.g., disease subtyping).

Key Experimental Protocol for Multi-Omics Feature Selection:

- Data Preparation: Scale and normalize each omics dataset (e.g., RNA-seq counts, protein abundance) individually. Concatenate features into a single matrix (samples x features) with a target phenotypic variable.

- Model Training: Train a Random Forest regressor/classifier. Use a high number of trees (n_estimators=1000+) and appropriate depth control to prevent overfitting.

- Feature Importance Calculation: Compute Gini importance or permutation importance for each feature.

- Multi-Omics Ranking: Aggregate importances by omics layer and within each layer to identify top contributors.

- Validation: Use out-of-bag error or a held-out test set to assess model performance. Validate selected features via stability analysis across bootstrap samples.
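The feature-selection protocol above can be sketched with scikit-learn. The data are simulated (one informative feature per layer), the layer names are hypothetical, and the number of trees is reduced from the 1000+ suggested above to keep the example fast; none of these choices come from a specific study.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 300
y = rng.integers(0, 2, size=n)          # toy phenotype

# Two hypothetical, already-scaled omics layers with one informative feature each.
rna = rng.normal(size=(n, 30))
rna[:, 0] += 2.0 * y
prot = rng.normal(size=(n, 20))
prot[:, 0] += 1.5 * y

# Concatenate into a samples x features matrix and remember each feature's layer.
X = np.hstack([rna, prot])
layer = np.array(["rna"] * 30 + ["protein"] * 20)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0, stratify=y)

rf = RandomForestClassifier(n_estimators=300, oob_score=True, random_state=0)
rf.fit(X_tr, y_tr)

# Gini importance (from training) and permutation importance (on held-out data).
gini = rf.feature_importances_
perm = permutation_importance(rf, X_te, y_te, n_repeats=10, random_state=0)

# Aggregate importance by omics layer, then rank individual features.
by_layer = {name: float(gini[layer == name].sum()) for name in ("rna", "protein")}
top = np.argsort(gini)[::-1][:5]

print("OOB accuracy:", round(rf.oob_score_, 3))
print("importance by layer:", by_layer)
print("top features (column index):", top.tolist())
```

Repeating the fit on bootstrap resamples and counting how often each feature reaches the top ranks is one simple way to carry out the stability analysis mentioned in the validation step.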

Quantitative Performance Summary (Recent Benchmarks):

Table 1: Performance of Random Forests in Multi-Omics Classification Tasks (2020-2023)

| Study Focus | Data Types | # Features | Key Metric (RF) | Comparative Advantage |

|---|---|---|---|---|

| Cancer Subtyping | RNA-seq, DNA Methylation | ~50,000 | AUC: 0.89-0.94 | Robustness to noise & outliers |

| Disease Prognosis | Proteomics, Metabolomics | ~1,200 | Accuracy: 82.5% | Non-linear pattern capture |

| Biomarker Discovery | Genomics, Transcriptomics | ~100,000 | Feature Stability: High | Intrinsic feature importance ranking |

Autoencoders: Deep Learning for Dimensionality Reduction & Integration

Autoencoders (AEs) are neural networks designed for unsupervised learning of efficient codings. In multi-omics, variational autoencoders (VAEs) and multi-modal AEs are used to learn a joint, low-dimensional latent representation that integrates all omics layers.

Key Experimental Protocol for Multi-Modal VAE Integration:

- Architecture Design:

  - Input: Separate encoder networks for each omics type (handling different input dimensions).

  - Bottleneck: A joint latent layer (e.g., 32-128 dimensions) where integration occurs. For a VAE, this layer parameterizes a probability distribution.

  - Output: Separate decoder networks reconstructing each original omics input.

- Training: Minimize a combined loss function: Reconstruction Loss (MSE) + β * KL Divergence (for VAE, enforcing a structured latent space).

- Integration & Downstream Analysis: Extract latent vectors for each sample. Use these for clustering, visualization, or as features in a downstream predictor.

- Interpretation: Employ attribution methods to trace latent features back to input variables.
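The architecture and loss described above can be traced through a single forward pass in NumPy. This is a sketch for intuition only: the weights are random and untrained, the layer sizes and modality names are assumptions, and a real model would be built and optimized in PyTorch or TensorFlow.

```python
import numpy as np

rng = np.random.default_rng(0)


def dense(n_in, n_out):
    """Random, untrained weights and zero biases for one linear layer."""
    return rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out)


def forward(x, layer, relu=False):
    W, b = layer
    h = x @ W + b
    return np.maximum(h, 0.0) if relu else h


dims = {"rna": 200, "protein": 50}      # hypothetical modalities and input sizes
hidden, latent, beta = 32, 8, 1.0

enc = {m: dense(d, hidden) for m, d in dims.items()}   # one encoder per omics type
to_mu = dense(hidden * len(dims), latent)              # joint bottleneck: mean
to_logvar = dense(hidden * len(dims), latent)          # joint bottleneck: log-variance
dec = {m: dense(latent, d) for m, d in dims.items()}   # one decoder per omics type


def vae_loss(batch):
    """Return total loss, reconstruction term, KL term, and latent means for one batch."""
    h = np.hstack([forward(batch[m], enc[m], relu=True) for m in dims])
    mu, logvar = forward(h, to_mu), forward(h, to_logvar)
    # Reparameterization: z = mu + sigma * noise
    z = mu + np.exp(0.5 * logvar) * rng.normal(size=mu.shape)
    # Reconstruction loss: mean squared error, summed over modalities.
    recon = sum(float(np.mean((forward(z, dec[m]) - batch[m]) ** 2)) for m in dims)
    # KL divergence between N(mu, sigma^2) and N(0, I), averaged over samples.
    kl = float(np.mean(-0.5 * np.sum(1.0 + logvar - mu ** 2 - np.exp(logvar), axis=1)))
    return recon + beta * kl, recon, kl, mu


batch = {m: rng.normal(size=(16, d)) for m, d in dims.items()}   # 16 simulated samples
total, recon, kl, mu = vae_loss(batch)
print(f"total={total:.3f}  reconstruction={recon:.3f}  KL={kl:.3f}")
```

After training, the rows of `mu` would serve as the integrated latent vectors used for clustering, visualization, or downstream prediction.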

Quantitative Performance Summary (Recent Benchmarks):

Table 2: Performance of Autoencoder Architectures in Multi-Omics Integration (2021-2024)

| Architecture | Data Types | Latent Dim | Key Metric | Primary Use Case |

|---|---|---|---|---|

| Stacked Denoising AE | Transcriptomics, Proteomics | 50 | Reconstruction R²: 0.78 | Noise reduction, imputation |

| Multi-modal VAE | miRNA, mRNA, Clinical | 32 | Clustering Concordance: 0.85 | Integrative patient stratification |

| Graph-Convolutional AE | Single-cell Multi-omics | 64 | Bio-conservation Score: 0.91 | Integrating scRNA-seq & scATAC-seq |

Comparative Decision Framework for Method Selection

Table 3: Choosing Between Random Forests and Autoencoders for Multi-Omics Integration

| Criterion | Random Forests | Autoencoders |

|---|---|---|

| Primary Goal | Feature selection, classification, handling missing data | Dimensionality reduction, data integration, generative modeling |

| Data Scale | Handles high-dimensionality well, but extreme p>>n can be challenging | Excels with very high-dimensional data, requires larger n for training |

| Interpretability | High: Direct feature importance scores | Lower: Latent space requires post-hoc interpretation |

| Non-linearity | Models complex interactions implicitly | Models highly complex, hierarchical non-linear relationships |

| Data Types | Best for tabular, concatenated data | Can model complex multi-modal inputs natively |

| Thesis Context | Choose when the goal is biomarker identification or predictive modeling with a clear outcome. | Choose when the goal is exploratory integration, uncovering novel patient subgroups, or data compression. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Reagent Solutions for Computational Multi-Omics Experiments

| Item | Function & Relevance |

|---|---|

| scikit-learn | Primary library for implementing Random Forests; provides robust tools for preprocessing, model evaluation, and feature importance calculation. |

| PyTorch / TensorFlow | Deep learning frameworks essential for building and training custom autoencoder architectures, including VAEs. |

| MOFA+ (R/Python) | A dedicated Bayesian framework for multi-omics factor analysis, a strong alternative/complement to AE-based integration. |

| Scanpy (Python) | Ecosystem for single-cell multi-omics analysis, includes wrappers for integration methods. |

| Conda/Docker | Environment and containerization tools critical for replicating complex computational pipelines and ensuring reproducibility. |

| High-Performance Computing (HPC) Cluster or Cloud GPU | Necessary computational resources for training deep learning models on large multi-omics datasets. |

Visualized Workflows & Architectures

Multi-omics Analysis with Random Forests

Multi-modal Autoencoder for Omics Integration

Choosing a Multi-Omics ML Method

For the broader question of how to choose a multi-omics integration method, one axiom holds: there is no universally superior method. The optimal choice is determined by a deliberate alignment between the researcher's biological or clinical goal, the inherent structure of the multi-omics data, and the method's mathematical assumptions. This guide provides a structured decision framework to navigate this complex landscape.

Core Decision Matrix: Goal-Driven Methodology Selection

The primary goal dictates the methodological approach. The following table categorizes common objectives and matches them to families of integration methods.

Table 1: Strategic Alignment of Goal and Integration Approach

| Primary Research Goal | Description | Suitable Method Families | Key Output |

|---|---|---|---|

| Discovery-Driven | Unsupervised exploration to identify novel patterns, clusters, or molecular subtypes without prior labels. | Early Integration (Concatenation), Matrix Factorization (NMF, JIVE), Similarity-Based (SNF), Deep Learning (Autoencoders). | New disease subtypes, composite biomarkers, latent molecular factors. |

| Prediction-Driven | Supervised learning to predict a clinical outcome (e.g., survival, response) using multi-omics features as input. | Intermediate/Late Integration, Regularized Regression (LASSO, Elastic Net), Kernel Methods, Stacked Models, Deep Neural Networks. | A predictive model with validated accuracy for the target endpoint. |

| Network & Interaction-Driven | Understand interactions, regulatory relationships, and pathways across omics layers. | Bayesian Networks, Multi-Layer Networks, Pathway-Centric Integration, Causal Inference Models. | A directed or undirected graph detailing cross-omic interactions and key hub nodes. |

| Dimension Reduction & Visualization | Reduce high-dimensional data to 2D/3D for interpretation and exploratory plotting. | PCA, t-SNE, UMAP (on pre-integrated matrices), Multi-Omics Factor Analysis (MOFA). | Low-dimensional embeddings where each point represents a sample. |

Data Structure Considerations & Method Constraints

The feasibility of the methods in Table 1 is governed by data properties. Quantitative constraints are summarized below.

Table 2: Data Structure Requirements and Method Compatibility

| Data Characteristic | Question | Method Implications |

|---|---|---|

| Sample Size (n) | n << features (p)? | Avoid methods prone to overfitting (e.g., simple concatenation+regression). Use strong regularization (LASSO) or Bayesian approaches. |

| Dimensionality | High p across all omics? | Prioritize dimension reduction before integration (e.g., MOFA, DIABLO) or use deep learning autoencoders. |

| Data Type & Scale | Mixed data types (continuous, count, binary)? | Choose methods designed for multi-view data (e.g., Generalized Canonical Correlation Analysis, mixOmics). |

| Missing Data | Missing blocks (e.g., some omics missing for some samples)? | Require methods robust to missingness: MOFA, Multi-Omics Patient-Specific Pathway Analysis. |

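| Temporal/Paired Design | Longitudinal or matched samples? | Need time-aware integration, e.g., Multi-Omics Dynamic Bayesian Networks or longitudinal multivariate methods (e.g., timeOmics). Note that MINT (mixOmics) targets integration of independent studies rather than time courses. |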

Experimental Protocols for Benchmarking Integration Methods

To empirically evaluate chosen methods, a standardized benchmarking protocol is essential.

Protocol 1: Benchmarking for Subtype Discovery

- Objective: Assess the biological relevance and stability of clusters identified by an unsupervised integration method.

- Procedure:

  - Integration & Clustering: Apply method (e.g., SNF, iCluster) to training dataset. Perform clustering (e.g., k-means, spectral) on the integrated matrix.

  - Internal Validation: Calculate internal metrics (Silhouette Width, Davies-Bouldin Index) on the training set.

  - Biological Validation: Perform differential expression/abundance analysis between clusters. Conduct enrichment analysis (GO, KEGG) on differential features. Evaluate association with known clinical labels (e.g., log-rank test for survival differences).

  - Stability Assessment: Use bootstrapping or subsampling to measure cluster robustness (e.g., Jaccard similarity of cluster assignments).
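The stability step can be illustrated with a short sketch. It assumes cluster assignments are available as dictionaries mapping sample IDs to labels: one from the full data and one from a subsample that has been re-clustered. For each reference cluster it reports the best Jaccard match among the resampled clusters, so arbitrary label permutations do not matter. The function names and toy sample IDs are hypothetical.

```python
def jaccard(a, b):
    """Jaccard similarity between two collections of sample IDs."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def cluster_stability(reference, resampled):
    """Best Jaccard match for each reference cluster in a resampled clustering.

    reference / resampled: dicts mapping sample ID -> cluster label.
    Only samples present in both assignments are compared.
    """
    shared = set(reference) & set(resampled)

    def members(assignment):
        groups = {}
        for sample in shared:
            groups.setdefault(assignment[sample], set()).add(sample)
        return groups

    ref_groups, res_groups = members(reference), members(resampled)
    return {
        label: max(jaccard(group, other) for other in res_groups.values())
        for label, group in ref_groups.items()
    }


# Full-data clustering versus one subsample (s6 dropped, labels renamed).
reference = {"s1": "A", "s2": "A", "s3": "A", "s4": "B", "s5": "B", "s6": "B"}
resampled = {"s1": 1, "s2": 1, "s3": 2, "s4": 2, "s5": 2}
scores = cluster_stability(reference, resampled)
print(scores)
```

In practice the comparison would be repeated over many bootstrap or subsampling rounds and the per-cluster scores averaged. Clusters whose mean score stays low are candidates for merging or for being treated as unstable.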

Protocol 2: Benchmarking for Outcome Prediction

- Objective: Compare the predictive performance of supervised integration models.

- Procedure:

  - Data Splitting: Split data into Training (70%), Validation (15%), and Hold-out Test (15%) sets, preserving the outcome distribution in each split.

  - Model Training: Train candidate models (e.g., late integration with random forest vs. DIABLO vs. neural network) on the Training set.

  - Hyperparameter Tuning: Use k-fold cross-validation within the Training set to optimize hyperparameters, then confirm the selected configuration on the Validation set.

  - Final Evaluation: Apply the tuned model to the unseen Hold-out Test Set. Report metrics: AUC-ROC (classification), Concordance Index (survival), or RMSE (regression).

  - Feature Importance: Extract and compare top predictive features from each model for biological interpretability.
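The data-splitting step can be sketched as follows for a categorical outcome. This is a minimal standard-library illustration of a stratified 70/15/15 split. The function name, the seed, and the toy label vector are assumptions, and library utilities such as scikit-learn's `train_test_split` with its `stratify` argument are the more usual choice in real pipelines.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split sample indices into train/validation/test, stratified by label.

    Each class is shuffled and divided separately so that the outcome
    distribution is approximately preserved in all three sets.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the hold-out set
    return train, val, test


# Toy cohort: 60 non-responders (0) and 40 responders (1).
labels = [0] * 60 + [1] * 40
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

The hold-out indices should be set aside before any feature selection or normalization parameters are estimated, otherwise information from the test set can leak into the tuned model.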

Visualizing the Decision Framework and Workflows

Title: Decision Tree for Multi-Omics Method Selection

Title: Core Multi-Omics Integration Workflow

Table 3: Key Research Reagent Solutions for Multi-Omics Integration Studies

| Tool/Resource | Type | Primary Function |

|---|---|---|

| mixOmics (R) | Software Package | Provides a comprehensive, well-documented toolkit for multivariate multi-omics integration (e.g., DIABLO, sGCCA). Essential for supervised/unsupervised analysis. |

| MOFA2 (R/Python) | Software Package | Implements Multi-Omics Factor Analysis for unsupervised discovery of latent factors from multi-view data. Handles missing data effectively. |

| ConsensusClusterPlus (R) | Software Package | Provides a robust framework for assessing cluster stability, critical for validating discovered subtypes from any integration method. |

| OmicsEV (R/Python) | Software Tool | A quality validation pipeline for multi-omics data, evaluating batch effects and technical noise before integration. |

| MultiAssayExperiment (R) | Data Container | A standardized Bioconductor data structure for coordinating multiple omics experiments on overlapping sample sets. Ensures data integrity. |

| Simulated Multi-Omics Datasets | Benchmark Data | Synthetic data with known ground truth (e.g., pre-defined subtypes, causal features) for method calibration and benchmarking. |

| The Cancer Genome Atlas (TCGA) | Public Data Resource | A canonical source of real, large-scale, paired multi-omics data with clinical annotations for method testing and hypothesis generation. |

Navigating Pitfalls and Fine-Tuning Your Integration Pipeline

When choosing a multi-omics integration method, the fidelity of the integration results depends fundamentally on rigorous preprocessing of the individual omics datasets. Integration methods, whether early (concatenation-based), intermediate (transformation-based), or late (model-based), require homogeneous, high-quality input data. This section details three universal preprocessing hurdles (batch effects, normalization, and missing data) and provides technical protocols to ensure robust downstream integration.

Batch Effects: Identification and Correction

Batch effects are systematic technical variations introduced during different experimental runs, sequencing dates, equipment, or reagent lots. They can confound biological signals and lead to false conclusions in integrated analysis.

Quantitative Impact Assessment

The following table summarizes common metrics for batch effect detection:

Table 1: Metrics for Batch Effect Detection

| Metric | Formula / Description | Threshold for Significant Batch Effect | Common Tool |

|---|---|---|---|

| Principal Variance Component Analysis (PVCA) | Proportion of variance explained by batch = variance attributed to batch factor / total variance | > 10% contribution | pvca R package |

| Silhouette Width | s(i) = (b(i) - a(i)) / max(a(i), b(i)), computed with batch as the grouping label; a(i) = mean distance to samples in the same batch, b(i) = mean distance to samples in the nearest other batch | Average s(i) close to 1 (strong batch structure) | cluster R package |

| Distance-based Discriminant Ratio | DDR = (mean inter-batch distance) / (mean intra-batch distance) | DDR >> 1 | Custom calculation |
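Two of the metrics in Table 1 are simple enough to compute directly. The sketch below evaluates the batch silhouette width and the inter/intra-batch distance ratio, assuming each sample is represented by low-dimensional coordinates (for example, its first few principal components). The function names and the four toy points are illustrative assumptions, not a reference implementation of any package.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two coordinate vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def batch_silhouette(points, batches):
    """Average silhouette width, using batch labels as the grouping."""
    scores = []
    for i, p in enumerate(points):
        dists = {}
        for j, q in enumerate(points):
            if i != j:
                dists.setdefault(batches[j], []).append(euclidean(p, q))
        own = dists.pop(batches[i], [])
        if not own or not dists:
            continue  # singleton batch or a single batch: s(i) is undefined
        a = sum(own) / len(own)                            # mean intra-batch distance
        b = min(sum(d) / len(d) for d in dists.values())   # nearest other batch
        if max(a, b) > 0:
            scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores) if scores else 0.0


def distance_ratio(points, batches):
    """DDR = mean inter-batch distance / mean intra-batch distance."""
    inter, intra = [], []
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            d = euclidean(points[i], points[j])
            (intra if batches[i] == batches[j] else inter).append(d)
    return (sum(inter) / len(inter)) / (sum(intra) / len(intra))


# Toy embedding: two batches separated along the first axis.
points = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
batches = ["A", "A", "B", "B"]
sil = batch_silhouette(points, batches)
ddr = distance_ratio(points, batches)
print(round(sil, 3), round(ddr, 3))
```

Here both metrics flag a strong batch structure: the silhouette is close to 1 and the ratio is far above 1. After a successful correction, the same calculation on the corrected embedding should give a silhouette near 0 and a ratio near 1.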

Experimental Protocol: Using Control Samples for Batch Monitoring

- Objective: To empirically quantify batch effects using spike-in controls or pooled reference samples.

- Materials: Commercially available ERCC (External RNA Controls Consortium) spike-in mixes for RNA-seq, or pooled sample aliquots stored for long-term use.

- Procedure:

  - Spike-in Addition: Add a consistent amount of ERCC RNA spike-in mix (e.g., 1 µl of Mix 1 per 10 µg total RNA) to each sample prior to library preparation across all batches.

  - Processing: Process samples through sequencing.

  - Analysis: Map reads to the combined genome and spike-in reference. Calculate log2 counts for spike-in controls.

  - Assessment: Perform PCA solely on the spike-in control expression matrix. A strong separation by batch in the PCA plot indicates a pronounced technical batch effect requiring correction.

Batch Correction Methodologies

- ComBat (sva package): Uses an empirical Bayes framework to adjust for known batches. Suitable for multi-omics when applied per platform.

- Harmony: Iteratively soft-clusters samples in a shared low-dimensional embedding (typically PCA) and applies cluster-specific linear corrections that remove batch-specific offsets. Effective for single-cell and bulk integration.

- Remove Unwanted Variation (RUV): Utilizes control genes/samples or replicates to estimate and subtract unwanted factors.
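To make the idea of adjusting for known batches concrete, the sketch below applies the simplest possible correction to a single feature: subtract each batch's mean and restore the overall mean. This is deliberately not ComBat, which additionally models batch-specific scale and shrinks the per-batch estimates with empirical Bayes. The function name and toy values are assumptions for illustration only.

```python
from collections import defaultdict


def center_by_batch(values, batches):
    """Location-only batch adjustment for one feature.

    Subtracts the batch mean from every sample and adds back the overall
    mean, so that all batches share the same average after correction.
    """
    overall = sum(values) / len(values)
    totals, counts = defaultdict(float), defaultdict(int)
    for value, batch in zip(values, batches):
        totals[batch] += value
        counts[batch] += 1
    return [
        value - totals[batch] / counts[batch] + overall
        for value, batch in zip(values, batches)
    ]


# One feature measured in two batches with a constant technical offset of 10.
values = [1.0, 2.0, 3.0, 11.0, 12.0, 13.0]
batches = ["A", "A", "A", "B", "B", "B"]
corrected = center_by_batch(values, batches)
print(corrected)
```

This kind of centering is only safe when the biological groups are balanced across batches. If a phenotype is confounded with batch, subtracting batch means removes the biological signal together with the technical one.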

Diagram Title: Batch Effect Correction Workflow for Multi-Omic Data

Normalization: Enabling Cross-Platform Comparability

Normalization adjusts data for technical artifacts (e.g., sequencing depth, library size, protein total ion current) to make measurements comparable across samples and, crucially, across different omics layers prior to integration.

Normalization Techniques by Data Type

Table 2: Common Normalization Methods Across Omics Layers

| Omics Layer | Common Method | Algorithm / Rationale | Key Consideration for Integration |

|---|---|---|---|

| Transcriptomics | TMM (edgeR) / DESeq2 | Scales library sizes based on a trimmed mean of log expression ratios (TMM) or median ratio (DESeq2). | Ensures gene expression distributions are comparable across samples. |

| Proteomics | Median Centering / vsn | Centers abundance values per sample to the global median or uses variance-stabilizing normalization. | Corrects for varying total ion current between MS runs. |

| Metabolomics | Probabilistic Quotient | Normalizes each sample spectrum to a reference (e.g., median sample) using the most probable dilution factor. | Accounts for differences in urine concentration or biomass. |

| Epigenomics | Reads Per Million (RPM) | Scales ChIP-seq or ATAC-seq read counts by total mapped reads per sample. | Allows comparison of peak intensities across samples. |
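As a worked example of the transcriptomics row, the following sketch computes median-of-ratios size factors in the style used by DESeq2: each sample's counts are compared with the per-gene geometric mean across samples, and the median ratio becomes that sample's scaling factor. It is a plain-Python illustration with hypothetical names and toy counts, not a substitute for the package itself.

```python
import math
from statistics import median


def median_of_ratios_size_factors(counts):
    """Median-of-ratios size factors.

    counts: list of samples, each a list of raw counts over the same genes.
    Genes with a zero count in any sample are excluded from the reference.
    """
    n_genes = len(counts[0])
    log_geo_means = []
    for g in range(n_genes):
        column = [sample[g] for sample in counts]
        if all(c > 0 for c in column):
            log_geo_means.append(sum(math.log(c) for c in column) / len(column))
        else:
            log_geo_means.append(None)  # gene skipped: geometric mean would be zero

    factors = []
    for sample in counts:
        log_ratios = [
            math.log(sample[g]) - lgm
            for g, lgm in enumerate(log_geo_means)
            if lgm is not None
        ]
        factors.append(math.exp(median(log_ratios)))
    return factors


# Sample 2 was sequenced twice as deeply as sample 1; the last gene has a zero.
counts = [[10, 20, 30, 0], [20, 40, 60, 5]]
factors = median_of_ratios_size_factors(counts)
normalized = [[c / f for c in sample] for sample, f in zip(counts, factors)]
print([round(f, 4) for f in factors])
```

Dividing each sample by its size factor puts the two toy samples on the same scale for the shared genes. Using the median ratio rather than total counts keeps a handful of very highly expressed genes from dominating the scaling.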

Experimental Protocol: Cross-Modality Normalization for Paired Samples